Ijraset Journal For Research in Applied Science and Engineering Technology

A Novel Approach for Car Accident Detection and Analysis Using AIML Techniques

Authors: Preethi S Gowda, Dr. Nirmala Shivanand

DOI Link: https://doi.org/10.22214/ijraset.2023.55522

Abstract

In the context of escalating road-safety concerns, this study introduces an automated framework for detecting and analyzing traffic accidents from surveillance footage. The approach combines the Motion Interaction Field (MIF) model for crash detection with the YOLO v5 algorithm for vehicle localization and classification. The primary objective is an autonomous system that identifies and evaluates traffic events captured by security cameras. The MIF model, tailored for detecting damaged vehicles, extracts information from the interactions among moving objects in images and videos. Damaged vehicles are localized with YOLO v5, and a perspective transformation converts the camera view into a top-down (bird's-eye) representation. Vehicle motion is then estimated with the unbiased finite impulse response (UFIR) technique, which is notable for not requiring knowledge of the noise statistics. The work further analyzes car crashes in depth, estimating impact speed and point of collision from the top-down view, and covers accident clip analysis, car direction determination, and error identification. The results indicate that the proposed framework can substantially improve accident detection and analysis, contributing to road safety and more effective traffic management.
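The abstract names two geometric steps: mapping image coordinates to a top-down ground plane, and estimating vehicle motion from the mapped track. The Python sketch below illustrates both under stated assumptions and is not the authors' code. The four image-to-ground point correspondences are hypothetical calibration values, and the homography is estimated with the standard direct linear transform (DLT). For velocity, it assumes a constant-velocity (ramp) model over a short horizon; for such polynomial models, the batch UFIR estimate reduces to an ordinary least-squares fit over the horizon, which is why no noise statistics are needed. The horizon length and frame rate are illustrative, not values from this work.

```python
import numpy as np


def homography_from_points(src, dst):
    """Estimate H (3x3, up to scale) with dst ~ H @ src via the DLT on 4+ point pairs."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1.0, 0.0, 0.0, 0.0, u * x, u * y, u])
        rows.append([0.0, 0.0, 0.0, -x, -y, -1.0, v * x, v * y, v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    return vt[-1].reshape(3, 3)


def to_ground_plane(H, pts):
    """Map (N, 2) image points to ground-plane coordinates (e.g. metres)."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]          # divide out the projective scale


def ufir_velocity(track, fps, horizon=5):
    """Velocity at the latest sample from a least-squares ramp fit over the last `horizon` samples.

    Assumption: constant-velocity model. For this model the batch UFIR estimate is
    this least-squares fit; it uses only the recent window and no noise statistics.
    """
    window = np.asarray(track, dtype=float)[-horizon:]
    t = np.arange(len(window)) / fps
    design = np.column_stack([np.ones_like(t), t])  # model: p(t) = p0 + v * t
    coeffs, *_ = np.linalg.lstsq(design, window, rcond=None)
    return coeffs[1]                                # (vx, vy) in ground units per second
```

In use, a tracked vehicle's pixel positions would be passed through `to_ground_plane` and then `ufir_velocity`, and `np.linalg.norm` of the result gives its speed, for example just before impact.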

I. INTRODUCTION

Advances in Artificial Intelligence and Machine Learning (AIML) have significantly transformed accident detection and analysis, improving the efficiency and accuracy of incident management in traffic systems. AIML models use computer vision to analyze images or video feeds in real time and recognize patterns indicative of accidents, so that different types of accidents can be identified and categorized automatically. Deep learning algorithms let these models learn from large datasets, enabling accurate detection and classification of incidents such as collisions, vehicle rollovers, and pedestrian accidents.

AIML algorithms also play a crucial role in accident analysis by investigating underlying causes and contributing factors. By combining historical accident data, weather conditions, road infrastructure details, and other contextual information, these algorithms identify correlations and patterns that point to likely accident causes. The resulting insights help transportation authorities and policymakers make informed decisions to strengthen road safety measures, optimize traffic regulations, and plan infrastructure improvements.

In summary, AIML techniques for accident detection and analysis provide real-time incident identification, reduce response times, and enable data-driven decision-making in traffic management. Automating these processes can improve road safety, emergency response, and traffic flow in urban environments. When AIML-based systems are integrated into traffic management centers, they support accurate incident assessment, prompt deployment of emergency services, and efficient resource allocation. As the demand for efficient traffic incident management continues to rise, advances in automated detection and assessment systems hold considerable promise for improving the safety and efficiency of urban transportation networks.

II. RELATED WORKS

In the field of automated traffic accident detection and analysis, several studies have advanced the capabilities of surveillance-based systems. Tiago Tamagusko et al. [1] employed hierarchical learning with pre-trained models to detect road accidents through binary image classification. Their approach showed the potential of transfer learning, particularly when little data is available, and their use of the ImageNet dataset for model refinement highlighted the value of large, comprehensive data resources. Sergio Robles-Serrano et al. [2] introduced a deep learning technique that achieved 98% accuracy in video-based traffic accident detection. By extracting both visual and temporal features, their approach robustly discerned accident-related patterns. Although their study focused primarily on vehicular collisions, it laid the groundwork for future work on challenges such as low illumination, low resolution, and occlusion.

Guanxiong Liu et al. [3] proposed an edge-computing-based model for congestion and speed detection. Their two-tier architecture, which adapts to varying network conditions and unpredictable weather, highlighted the potential of edge intelligence in traffic management, and its advantage over standalone edge and cloud schemes underscored the value of dynamic decision-making in video analysis. Bulbula Kumeda et al. [4] applied Deep Vision Neural Networks (DVNN) built on CNN techniques to vehicle accident and traffic classification. Their multi-level convolutional architecture achieved high accuracy, demonstrating the potential of CNN-based models for image classification; however, the study also revealed the need to integrate object position and orientation information, which offers an avenue for future enhancement. Earnest Paul Ijjina et al. [5] proposed a Mask R-CNN-based solution for accident detection that relies on anomalies in acceleration, trajectory, and angle. By handling obstructions within a camera's field of view, their approach provides a reliable means of delivering rapid and accurate information to the relevant authorities, and the study illustrates the importance of efficient accident detection systems in real-world scenarios.

Hu et al. [6] introduced an online framework for 3D vehicle detection and tracking from monocular videos. Their unique approach encompassed candidate box detection, 3D box estimation, data association, and tracking techniques. By overcoming challenges related to occlusion and reappearance, their work demonstrated a novel methodology for simultaneous 3D vehicle detection and tracking, contributing to the advancement of traffic surveillance systems. Collectively, these studies highlight the ongoing evolution in traffic accident detection and analysis methodologies. They underscore the integration of diverse techniques such as deep learning, edge computing, and multi-dimensional tracking to create more accurate and adaptable automated solutions. While remarkable progress has been made, challenges such as environmental variability and accurate object representation continue to inspire further research in this vital field.

III. METHODOLOGY

A. Artificial Intelligence and Machine Learning

Artificial Intelligence and Machine Learning (AIML) techniques are crucial in automating accident detection and analysis. These algorithms utilize real-time data from traffic sensors and surveillance cameras to identify accident patterns. By leveraging computer vision techniques, they analyze images and videos to detect sudden changes in vehicle movement and collisions. AIML's capability to learn from large datasets using deep learning algorithms enables accurate detection and classification of various accident types, including collisions and pedestrian incidents. Consequently, AIML significantly contributes to enhancing road safety, optimizing traffic flow, and improving emergency response in urban environments.

B. Computer Vision

Computer vision, a branch of artificial intelligence, plays a vital role in automating accident detection and analysis. By analyzing visual data from surveillance cameras or sensors, computer vision algorithms extract meaningful patterns and features to identify accidents. Real-time or recorded visual data is analyzed to recognize accident indicators like sudden changes in vehicle trajectories or collisions.

Moreover, computer vision enables detailed accident analysis by extracting relevant information from images or video, including vehicle positions, speeds, and contextual factors. Integrating computer vision enables real-time incident identification and faster emergency response, and it provides insights for road safety improvements and traffic management decisions. Ultimately, computer vision underpins automated accident detection and analysis, contributing to safer roads and better-optimized traffic systems.

C. YOLO v5

YOLOv5 (You Only Look Once version 5) is a single-stage object detection algorithm that is frequently applied to accident detection and analysis. It identifies vehicles, pedestrians, and road obstacles in real time with high accuracy, and its combination of precision and fast processing lets it detect accident-related elements in images or video feeds efficiently.

YOLOv5 uses a deep convolutional neural network to extract features and predict bounding boxes and class labels for each frame. When trained on accident images, it can recognize the visual appearance of collisions and damaged vehicles within a frame; as a per-frame detector, however, it does not by itself model motion over time, which is why this framework pairs it with the MIF model for detecting sudden changes in movement. Used this way, YOLOv5 speeds up incident identification, supports prompt response, and aids in analyzing contributing factors, making it a valuable tool for automated accident detection and analysis.
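YOLOv5 post-processes its raw predictions by discarding low-confidence boxes and applying non-maximum suppression (NMS), so that each crashed vehicle ends up with a single box. The sketch below reproduces that step in plain NumPy for illustration; it is not the YOLOv5 code. It assumes detections are already decoded into rows of (x1, y1, x2, y2, confidence, class_id) in pixels; the class IDs (for example, 0 for a damaged vehicle) are hypothetical, and the thresholds are illustrative.

```python
import numpy as np


def box_iou(box, boxes):
    """Intersection-over-union of one (x1, y1, x2, y2) box against an (N, 4+) array of boxes."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / np.maximum(area + areas - inter, 1e-9)


def filter_detections(dets, conf_thres=0.25, iou_thres=0.45):
    """Confidence thresholding followed by per-class greedy non-maximum suppression.

    dets: rows of (x1, y1, x2, y2, confidence, class_id) in pixel coordinates.
    """
    dets = np.asarray(dets, dtype=float).reshape(-1, 6)
    dets = dets[dets[:, 4] >= conf_thres]                  # drop low-confidence boxes
    keep = []
    for cls in np.unique(dets[:, 5]):
        cand = dets[dets[:, 5] == cls]
        cand = cand[np.argsort(-cand[:, 4])]               # highest confidence first
        while len(cand):
            best, rest = cand[0], cand[1:]
            keep.append(best)
            cand = rest[box_iou(best, rest) < iou_thres]   # suppress heavy overlaps
    return np.array(keep).reshape(-1, 6)
```

Suppression is done per class, so a damaged-vehicle box and a differently labelled box covering the same region can both survive.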

D. Motion Interaction Field

The Motion Interaction Field (MIF) is an approach used in accident detection and analysis. It analyzes the interactions among moving objects within images or videos to identify potential accidents. By observing the contacts and motions of objects such as vehicles or pedestrians, MIF algorithms can detect sudden changes or abnormal behaviours that may indicate an accident. This approach enables the identification of damaged vehicles and helps determine the location and severity of accidents. Incorporating MIF into accident detection and analysis systems enhances their ability to detect accidents autonomously and provides valuable insights for incident management and road-safety decision-making.
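The two cues this paragraph names, contact between moving objects and sudden changes in their motion, can be illustrated with a simple heuristic. The Python sketch below is a deliberately simplified stand-in and not the published MIF formulation: it assumes each object has already been tracked and its positions mapped to metres at a fixed frame rate, and it flags a pair of objects when they are closer than a contact distance while both show an acceleration spike. The distance and acceleration thresholds are hypothetical values chosen for illustration.

```python
import numpy as np


def acceleration_spikes(track, fps, accel_thres):
    """Flag frames where an object's acceleration magnitude exceeds accel_thres (m/s^2).

    track: (T, 2) positions in metres, T >= 3. Frame k is flagged using frames
    k-2, k-1 and k, so the first two frames are never flagged.
    """
    pos = np.asarray(track, dtype=float)
    vel = np.diff(pos, axis=0) * fps             # (T-1, 2) velocities in m/s
    acc = np.diff(vel, axis=0) * fps             # (T-2, 2) accelerations in m/s^2
    spikes = np.linalg.norm(acc, axis=1) > accel_thres
    return np.concatenate([[False, False], spikes])


def interaction_events(tracks, fps, contact_dist=2.0, accel_thres=30.0):
    """Return (id_a, id_b, frame) for pairs that are in contact while both change motion abruptly.

    tracks: dict mapping object id -> (T, 2) positions sampled at the same T frames.
    """
    ids = sorted(tracks)
    spikes = {i: acceleration_spikes(tracks[i], fps, accel_thres) for i in ids}
    events = []
    for n, a in enumerate(ids):
        for b in ids[n + 1:]:
            gap = np.linalg.norm(np.asarray(tracks[a], float) - np.asarray(tracks[b], float), axis=1)
            hits = (gap < contact_dist) & spikes[a] & spikes[b]
            events.extend((a, b, int(k)) for k in np.flatnonzero(hits))
    return events
```

Requiring both objects to spike at the same frame keeps a single hard-braking vehicle from being reported as a collision.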

E. Convolutional Neural Network (CNN)

CNNs are deep feedforward neural networks widely used in computer vision to recognize features in images and classify them. A CNN applies repeated convolutional operations to the input image, and these layers build up the visual features the network uses for recognition, loosely akin to human perception. During training, the network adjusts its internal weights on labeled data to minimize the difference between its predictions and the labels. Repeating this process improves object recognition: the network captures intricate patterns and visual traits and learns to generalize to unseen images. This learning loosely parallels the development of human visual recognition and depends on diverse training data for robust performance.
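The convolution and weight-update steps described above can be made concrete with a small NumPy sketch. It computes a "valid" 2-D cross-correlation (what CNN libraries call convolution), applies a ReLU activation, and runs gradient-descent updates of one 3x3 kernel against a squared-error loss. It is a toy single-filter example for illustration, not the network used in this work; the learning rate and the target kernel are arbitrary choices.

```python
import numpy as np


def conv2d(image, kernel, bias=0.0):
    """'Valid' 2-D cross-correlation: slide the kernel over the image without padding."""
    h, w = image.shape
    kh, kw = kernel.shape
    out = np.empty((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel) + bias
    return out


def relu(x):
    """Element-wise ReLU activation, typically applied after each convolution."""
    return np.maximum(x, 0.0)


def train_step(image, kernel, target, lr=0.05):
    """One gradient-descent step on the mean squared error of a linear conv layer.

    The ReLU is left out here so the gradient stays a single cross-correlation:
    dLoss/dKernel[m, n] = sum_ij dLoss/dOut[i, j] * image[i + m, j + n].
    """
    pred = conv2d(image, kernel)
    loss = np.mean((pred - target) ** 2)
    d_out = 2.0 * (pred - target) / pred.size
    grad = conv2d(image, d_out)
    return kernel - lr * grad, loss


rng = np.random.default_rng(0)
image = rng.random((8, 8))
true_kernel = np.array([[1.0, 0.0, -1.0]] * 3)   # a vertical-edge filter to be learned
target = conv2d(image, true_kernel)
kernel = np.zeros((3, 3))
losses = []
for _ in range(300):
    kernel, loss = train_step(image, kernel, target)
    losses.append(loss)
feature_map = relu(conv2d(image, kernel))
```

The recorded `losses` shrink as the kernel approaches the edge filter, which is the "iterative refinement" of weights in miniature.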

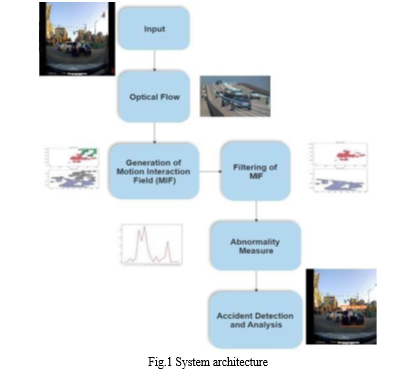

Fig. 1 shows the architecture of the accident detection and analysis system. Traffic surveillance images or videos are passed as input to an optical flow stage that describes the image motion. From this motion, the Motion Interaction Field (MIF) is generated for detecting and localizing traffic accidents. The MIF is then filtered to detect crashes in the surveillance images or videos. An abnormality is registered when a non-symmetrical region of the MIF has an intensity above a threshold, which indicates that a traffic accident has occurred in the video. Using the measured abnormality, the accident is detected and located by mapping its coordinates back to the video frame.

1. Input Video

The proposed system takes input videos or images as its primary data source; these contain the footage to be analyzed for accident detection and analysis.

2. Optical Flow

Optical flow is a technique for tracking the motion of objects within an image or video sequence. In this step, the system applies optical flow algorithms to estimate a motion vector for each pixel across consecutive frames of the input, which helps characterize the movement patterns of objects over time.

3. Generation of MIF

Using the optical flow output, the system generates an MIF, which represents the magnitude or intensity of motion at each pixel location in the images or video. It provides a visual representation of the motion patterns, highlighting areas with significant movement.

4. Filtering of MIF

The generated MIF may contain noise or unwanted artifacts. To improve the accuracy of the subsequent steps, the system applies filtering techniques to remove noise and enhance the relevant motion patterns.

5. Abnormality Measure

In this step, the system analyzes the filtered MIF to determine the abnormality or deviation from normal motion patterns. This involves comparing the current MIF with a reference or baseline MIF, representing the expected or typical motion patterns without any accidents or abnormal events.

6. Accident Detection and Analysis

Using the abnormality measure obtained in the above step, the system detects and analyzes accidents or abnormal events in the images or video.

Overall, this system architecture combines optical flow analysis, MIF generation and filtering, abnormality measurement, and accident detection to identify and analyze abnormal events in the input video.
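The steps above can be sketched in a few lines of NumPy. This is a simplified illustration under several assumptions, not the authors' implementation: the dense optical flow for a frame is assumed to be computed beforehand (for example with OpenCV's `cv2.calcOpticalFlowFarneback`) as an (H, W, 2) array; the MIF is approximated, following the description in step 3, by the per-pixel motion magnitude; filtering uses a simple box (mean) filter; the baseline is a hypothetical motion map averaged over accident-free frames; and the threshold is illustrative. The non-symmetry test mentioned for Fig. 1 is not modelled, and because the maps stay at full resolution, the detected box is already in video-frame coordinates.

```python
import numpy as np


def motion_map(flow):
    """Per-pixel motion magnitude from a dense (H, W, 2) optical flow field (step 3 stand-in)."""
    return np.hypot(flow[..., 0], flow[..., 1])


def box_filter(img, k=5):
    """Mean filter over a k x k window (k odd) with edge padding (step 4 stand-in)."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)


def detect_anomaly(flow, baseline, threshold, k=5):
    """Steps 3-6 for one frame: returns (accident_found, (x_min, y_min, x_max, y_max) or None).

    baseline: filtered motion map of the same shape, averaged over accident-free frames.
    """
    field = box_filter(motion_map(flow), k)
    abnormality = np.abs(field - baseline)        # deviation from typical motion (step 5)
    mask = abnormality > threshold
    if not mask.any():
        return False, None
    ys, xs = np.nonzero(mask)                     # pixel coordinates in the frame
    return True, (int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max()))
```

The box filter suppresses isolated noisy flow vectors, so only a spatially coherent burst of unusual motion survives the threshold.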

IV. RESULTS AND DISCUSSION

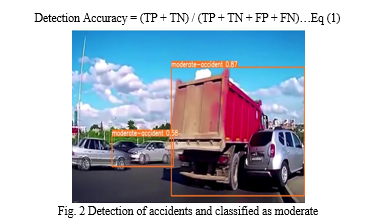

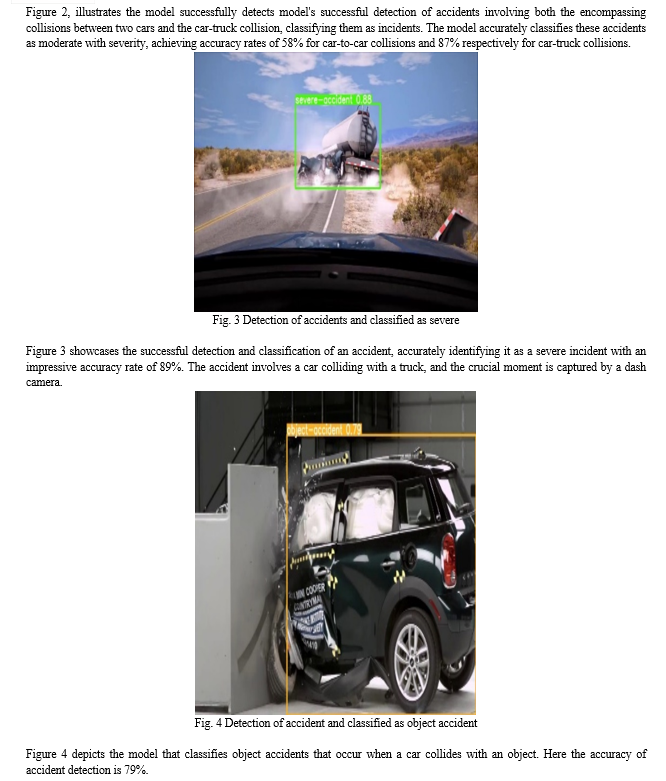

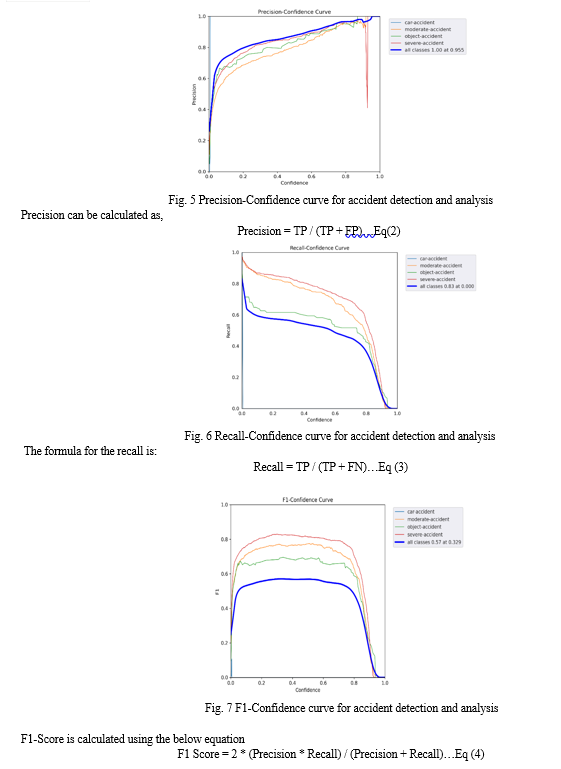

The dataset consists of 543 images, of which 434 are used for training and 108 for validation. The images above show input images processed by the accident detection model, which recognizes and classifies accidents within each image. Using bounding boxes and labels, the model identifies the specific regions where accidents occur, giving a clear visual representation. This capability supports accident analysis and enables a more efficient, targeted response. The model's ability to detect accidents accurately across diverse scenarios points to its robustness and its potential for real-world applications, and combining visual localization with classification yields insights into accident patterns that inform accident management and prevention strategies.

Detection accuracy, also known as classification accuracy, is a common metric that measures the percentage of correctly classified instances out of the total number of instances in the dataset. It is given by

$$\text{Detection Accuracy} = \frac{\text{Number of correctly classified instances}}{\text{Total number of instances}} \times 100\%$$
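The detection-accuracy metric defined above is a simple ratio of matching predictions to total instances. A minimal Python sketch follows; the class labels and the four example predictions are made up for illustration and are not results from this work.

```python
def detection_accuracy(predicted, actual):
    """Percentage of instances whose predicted label equals the ground-truth label."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if len(actual) == 0:
        raise ValueError("at least one instance is required")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return 100.0 * correct / len(actual)


# Made-up labels for four validation images (not results from this work).
pred = ["accident", "normal", "accident", "normal"]
truth = ["accident", "normal", "normal", "normal"]
print(detection_accuracy(pred, truth))  # 75.0
```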

Conclusion

This work introduced an automatic traffic accident detection and analysis framework based on surveillance footage. Combining the MIF model for crash detection with YOLO v5 for identifying crashed vehicles proved effective for accurately detecting and classifying accidents. The use of hierarchical clustering makes the framework practical for use by traffic police. The model can also evaluate accident severity and gather data that facilitate efficient claim verification. Future work could explore alternative deep-learning models to improve identification accuracy when vehicles are occluded, and could integrate image enhancement algorithms to improve accident detection under varied weather conditions and poor video quality.

References

[1] Tiago Tamagusko, Matheus Gomes Correia, Minh Anh Huynh, and Adelino Ferreira, “Deep Learning applied to Road Accident Detection with Transfer Learning and Synthetic Images,” in Proceedings of the Scientific Committee of the International Scientific Conference, Jun. 2022.

[2] Sergio Robles-Serrano, German Sanchez-Torres, and John Branch-Bedoya, “Automatic Detection of Traffic Accidents from Video Using Deep Learning Techniques,” MDPI, vol. 10, no. 4, pp. 537–563, 2021.

[3] Guanxiong Liu, Hang Shi, Abbas Kiani, Abdallah Khreishah, Jo Young Lee, Nirwan Ansari, Chengjun Liu, and Mustafa Yousef, “Smart Traffic Monitoring System using Computer Vision and Edge Computing,” in Proceedings of the Fifth International Conference on Internet of Things, 2021, pp. 2312–2320.

[4] Bulbula Kumeda, Zhang Fengli, Ariyo Oluwasanmi, and Forster Owusu, “Vehicle Accident and Traffic Classification Using Deep Convolutional Neural Networks,” in Proceedings of the IEEE International Conference on Intelligent Transportation Systems, 2019, pp. 453–467.

[5] Earnest Paul Ijjina et al., “Computer Vision-based Accident Detection in Traffic Surveillance,” in Proceedings of the IEEE International Conference, Jul. 2019, pp. 3456–3467.

[6] H.-N. Hu et al., “Joint monocular 3D vehicle detection and tracking,” in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Oct. 2019, pp. 5389–5398.

[7] A. Kuramoto, M. A. Aldibaja, R. Yanase, J. Kameyama, K. Yoneda, and N. Suganuma, “Mono-camera based 3D object tracking strategy for autonomous vehicles,” in Proceedings of the IEEE Intelligent Vehicles Symposium (IV), Jun. 2018, pp. 459–464.

[8] E. Odat, J. S. Shamma, and C. Claudel, “Vehicle classification and speed estimation using combined passive infrared/ultrasonic sensors,” IEEE Transactions on Intelligent Transportation Systems, vol. 19, no. 5, pp. 1593–1606, May 2018.

[9] A. V. Bharadwaj, S. Paul, L. R. Kumar, and A. Somanathan, “An improved real-time approach for video-based angular motion detection and measurement,” in Proceedings of the IEEE International Conference on Computational Intelligence and Computing Research (ICCIC), Dec. 2018, pp. 1–6.

[10] G. Lee, R. Mallipeddi, and M. Lee, “Trajectory-Based Vehicle Tracking at Frame Rates,” 2017.

Copyright

Copyright © 2023 Preethi S Gowda, Dr. Nirmala Shivanand. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET55522

Publish Date : 2023-08-26

ISSN : 2321-9653

Publisher Name : IJRASET
