Ijraset Journal For Research in Applied Science and Engineering Technology

AlexNet and DenseNet-121-based Hybrid CNN Architecture for Skin Cancer Prediction from Dermoscopic Images

Authors: Preeti Gupta, Sachin Mesram

DOI Link: https://doi.org/10.22214/ijraset.2022.45325

Abstract

Skin cancer, one of the most common cancer types, has increased in prevalence over the past several decades. Proper patient care depends on correctly identifying skin lesions and distinguishing benign lesions from malignant ones. Although several computational methods exist for categorising skin lesions, convolutional neural networks (CNNs) have been shown to perform better than conventional techniques. In this study, we propose a hybrid CNN model for classifying skin cancers from dermoscopic images. The model combines a pre-trained AlexNet CNN model with an optimised pre-trained DenseNet-121 CNN model. We evaluate the proposed hybrid CNN model on dermoscopic images from ISIC 2016–17, where it achieves an accuracy of 0.9065.

I. INTRODUCTION

A. Background

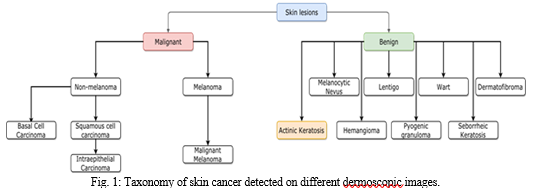

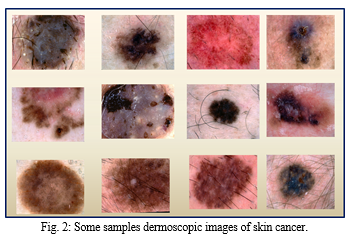

The skin is the largest organ in the human body. Skin cancers develop when skin cells degenerate and proliferate uncontrollably, with the potential to spread to many parts of the body. Skin cancer is the most common type of cancer: one in five people in industrialised nations may develop it over their lifetime. Malignant melanoma, the deadliest form of skin cancer, kills 10,000 people annually, and its incidence has risen over the past 30 years. If discovered early enough, it can be treated with a straightforward excision, but a delayed diagnosis is associated with a higher risk of death (an estimated 50 percent mortality rate). When melanoma is contained, its five-year relative survival rate is 98%, but once it has advanced, the rate is only about 14%. It is therefore essential to identify melanoma early and precisely. Dermoscopy imaging is a non-invasive technique used to examine pigmented skin lesions as a first step in detecting suspicious lesions and diagnosing melanoma. We offer a fully automated computational method for classifying skin lesions [1-3]. The proposed method takes advantage of deep features optimised from several notable abstraction layers and CNN architectures. The taxonomy of skin cancer is presented in Fig. 1, and sample images of different skin malignancies are shown in Fig. 2.

B. Motivation

Cancer is a severe hazard to human life and is often fatal. Many different forms of cancer may develop in the human body, and skin cancer is one of the fastest spreading and can be fatal. Numerous factors can cause it, including smoking, drinking alcohol, allergies, infections, and viruses, as well as physical activity, environmental changes, and exposure to ultraviolet (UV) radiation. The UV rays in sunlight can damage the DNA in skin cells. Unusual swellings on the body may also result in skin cancer. A simple excision can cure skin cancer if it is found in time, but a later diagnosis is associated with a greater risk of death, with a projected mortality rate of 50%. Early detection can therefore save lives and lower the number of deaths from skin cancer, which makes a non-invasive, effective skin lesion detection technology highly necessary.

C. Contributions

In this study, we present a hybrid CNN framework for classifying skin lesions using two pre-trained CNN architectures, namely AlexNet and DenseNet-121. The following are the main contributions of this work:

- A thorough examination of the performance of the AlexNet CNN model with the RMSProp optimizer for the classification of skin lesions into multiple classes.

- A thorough examination of the performance of the DenseNet-121 CNN model with the Adam optimizer for the classification of skin lesions into multiple classes.

- A proposed hybrid CNN architecture for automated non-invasive skin lesion grade identification utilising the AlexNet and DenseNet-121 CNN models.

- Training and testing of the proposed hybrid CNN model on the ISIC 2016–2017 dermoscopic image dataset.

The remainder of the article is organized as follows. Section II offers a thorough literature review. Sections III and IV briefly describe AlexNet and DenseNet-121. Section V discusses the proposed method in detail. Section VI presents the experimental analysis, followed by the conclusion and future work.

II. LITERATURE REVIEW

Skin lesion detection systems of many different forms have been proposed by several researchers. These largely draw inspiration from several CNN approaches like AlexNet, ResNet, and GoogLeNet [1-3]. Only a few automated systems for classifying skin lesions are now available, and the majority of these PC-based systems need extra peripherals and/or specific settings in order to collect data.

Ganster et al. [4] created a comprehensive automated melanoma recognition system. Automated image segmentation was accomplished by combining the outputs of three algorithms, and 96% of the images were segmented successfully. A 24-NN classifier achieved a melanoma detection rate of 73% when categorising into three groups, and 87% sensitivity and 92% specificity for the "not benign" class in a two-group setting.

Ercal et al. [5] devised a method in which dermatologists established the lesion borders and a commercial neural network classifier was applied to computed features describing the irregularity and asymmetry of lesion shapes, together with colour features. This method classified the data correctly eighty percent of the time. Neither of these techniques was developed with the general public or mobile devices in mind.

The software tool MoleSense [6] from Opticom Data Research (Canada) gathers images of skin lesions, analyses them according to the ABCD rule, and returns feature values without classifying them. Existing deep learning studies are, however, constrained by their use of specific pre-trained network architectures or specific layers for deep feature extraction, and each relies on just one pre-trained network: [7] used a single pre-trained Inception-v3 [9] network, [8] used a single pre-trained VGG16, and [10] used a single pre-trained AlexNet.

In this work, we classified images of skin lesions captured using a camera into three categories: nevus, seborrheic keratosis, and malignant, using a pre-trained and enhanced version of the AlexNet CNN and DenseNet-121 CNN architectures. We chose to employ these two CNN designs because they both have a track record of delivering effective results in a variety of applications, including image processing, object recognition, medical image processing, image fusion, and remote sensing [11–18].

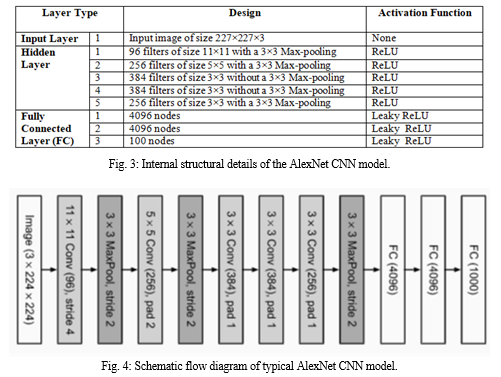

III. ALEXNET CNN MODEL

AlexNet is a convolutional neural network (CNN) architecture created by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, who served as Krizhevsky's Ph.D. adviser. AlexNet won the ImageNet Large Scale Visual Recognition Challenge 2012 by a large margin. The network challenged the prevailing paradigm in computer vision by demonstrating for the first time that learned features can surpass manually designed features. AlexNet has eight learnable layers: five convolutional layers, some of which are followed by max pooling, and then three fully connected layers. Rectified linear unit (ReLU) activation is used in every layer except the output layer. The authors found that training was nearly six times faster with ReLU as the activation function. They also used dropout layers to keep the model from overfitting. The model is trained on the ImageNet dataset. The full ImageNet collection contains about 14 million images, and the subset used for the challenge covers 1,000 classes. Fig. 3 shows the internal details of the AlexNet CNN model, and Fig. 4 shows the basic flow diagram of the standard AlexNet CNN model.

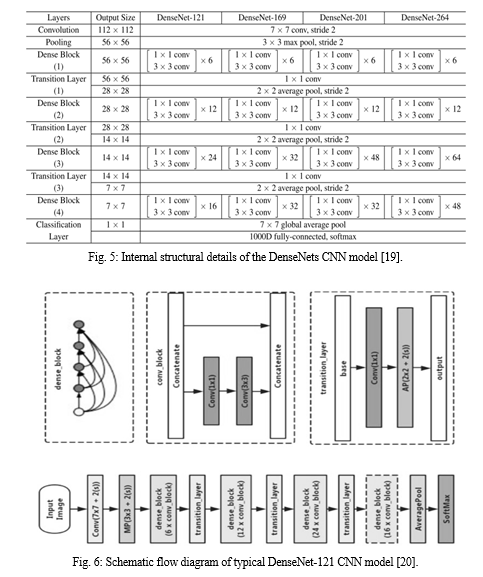

IV. DENSENET-121 CNN MODEL

In a conventional feed-forward convolutional neural network (CNN), each convolutional layer other than the first (which receives the input) takes the output of the preceding layer and generates an output feature map, which is then passed on to the following convolutional layer. A network with L layers therefore has L direct connections, one between each layer and the next. As the CNN gets deeper, however, the "vanishing gradient" problem develops: as the path from the input to the output layers lengthens, some information may "vanish" or get lost, which decreases the network's capacity to learn effectively. DenseNets alleviate this issue by altering the typical CNN design and streamlining the connections between layers. In a DenseNet design, each layer is connected directly to every subsequent layer, hence the name Densely Connected Convolutional Network, and a network with L layers has L(L+1)/2 direct connections. Fig. 5 shows the internal details of the DenseNet-121 CNN model, and Fig. 6 shows the basic flow diagram of the standard DenseNet-121 CNN model.
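The connection counts quoted above can be checked with a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and the block input is treated as node 0 so that every (source, target) pair can be enumerated explicitly.

```python
from itertools import combinations
from typing import List, Tuple


def sequential_connections(num_layers: int) -> int:
    """Plain feed-forward chain: one incoming connection per layer."""
    return num_layers


def dense_connections(num_layers: int) -> int:
    """Dense connectivity: closed form L(L+1)/2 from the text."""
    return num_layers * (num_layers + 1) // 2


def enumerate_dense_connections(num_layers: int) -> List[Tuple[int, int]]:
    """List every (source, target) pair; node 0 stands for the block input."""
    nodes = range(num_layers + 1)  # the input plus L layers
    return list(combinations(nodes, 2))


for num_layers in (4, 6, 12):
    pairs = enumerate_dense_connections(num_layers)
    # The explicit enumeration must agree with the closed form.
    assert len(pairs) == dense_connections(num_layers)
    print(num_layers, sequential_connections(num_layers), dense_connections(num_layers))
```

The gap between the two counts grows quadratically with depth, which is why dense connectivity gives later layers many short paths back to earlier feature maps.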

V. PROPOSED HYBRID MODEL FOR SKIN CANCER DETECTION

Deep learning has provided many essential tools for the development of computer-aided diagnostics for skin lesion detection. One requirement of deep learning techniques is a large amount of training data, and the major issue in the medical domain is the dearth of high-quality, available data. Many hospitals and clinics hold significant amounts of patient data, but they cannot make it available to the public for a variety of reasons, most notably privacy concerns. Despite this issue, several authors have successfully overcome these limitations. Initiatives such as the ISIC Archive also make it feasible to obtain data and thus to use the diagnostic capabilities of CNNs in the medical domain. Two main research trends can be seen in the area of skin lesions. The first uses transfer learning with CNN architectures to partially get around the data availability problem. The second uses unsupervised learning approaches to exploit larger unlabelled datasets before applying supervised classification. Because a larger dataset was not available, the technique presented in this article follows the former strategy, transfer learning.

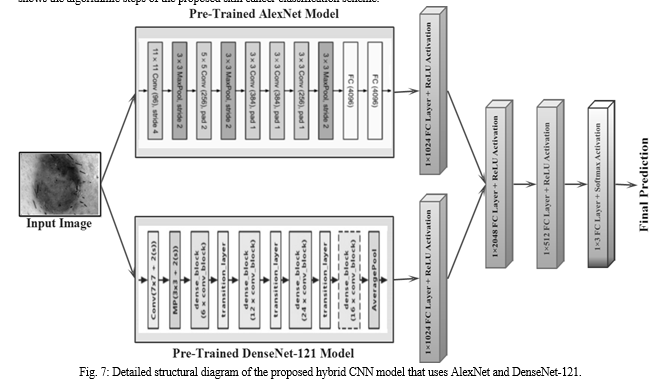

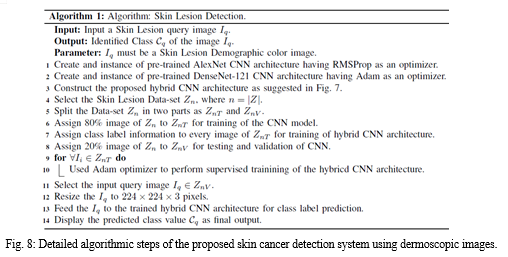

To detect probable skin lesions from a dermoscopic image, we propose a hybrid CNN model that integrates features from a pre-trained AlexNet with the RMSProp optimizer and a pre-trained DenseNet-121 with the Adam optimizer. A dermoscopic image is first given as input to both CNN models. From the AlexNet architecture, we take the output of the final 1 x 4096 Fully Connected (FC) layer and feed it into a new 1 x 1024 FC layer with the ReLU activation function. In parallel, we take the output of the DenseNet-121 architecture's final average pooling layer and feed it into another new 1 x 1024 FC layer with the ReLU activation function. We then introduce a new 1 x 2048 FC layer by fusing the features produced by the two 1 x 1024 FC layers. To down-sample the features and obtain more salient visual information for classification, we add a further 1 x 512 FC layer. The final classification is performed by a new 1 x 3 FC layer with the Softmax activation function. The proposed hybrid CNN model was retrained on 80% of the dataset's images, and its performance was validated on the remaining 20%. Fig. 7 shows the detailed structural diagram of the proposed hybrid CNN model, and Fig. 8 shows the algorithmic steps of the proposed skin cancer classification scheme.
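The fusion head described above can be sketched in NumPy to make the tensor shapes concrete. This is a minimal illustration under stated assumptions, not the authors' implementation: the weights are random stand-ins for trained parameters, the inputs are random vectors in place of real backbone features, "fusing" is assumed to mean concatenation, the DenseNet-121 pooled feature is assumed to have 1024 channels as in the standard architecture, and the ReLU on the 1 x 512 layer is an assumption because the text does not name its activation.

```python
import numpy as np

rng = np.random.default_rng(0)


def make_fc(in_dim, out_dim):
    """Random FC parameters (placeholder for trained weights)."""
    weights = rng.normal(0.0, np.sqrt(2.0 / in_dim), size=(in_dim, out_dim))
    bias = np.zeros(out_dim)
    return weights, bias


def relu(x):
    return np.maximum(x, 0.0)


def softmax(x):
    shifted = x - x.max(axis=-1, keepdims=True)  # for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


# Layer sizes follow the text; names are hypothetical.
alexnet_fc = make_fc(4096, 1024)    # on AlexNet's final 1 x 4096 FC output
densenet_fc = make_fc(1024, 1024)   # on DenseNet-121's pooled features
reduce_fc = make_fc(2048, 512)      # down-sampling layer after fusion
output_fc = make_fc(512, 3)         # three lesion classes


def fusion_head(alexnet_feat, densenet_feat):
    a = relu(alexnet_feat @ alexnet_fc[0] + alexnet_fc[1])
    d = relu(densenet_feat @ densenet_fc[0] + densenet_fc[1])
    fused = np.concatenate([a, d], axis=-1)          # shape (batch, 2048)
    h = relu(fused @ reduce_fc[0] + reduce_fc[1])    # ReLU assumed here
    return softmax(h @ output_fc[0] + output_fc[1])  # shape (batch, 3)


batch = 2
alexnet_feat = rng.normal(size=(batch, 4096))
densenet_feat = rng.normal(size=(batch, 1024))
probs = fusion_head(alexnet_feat, densenet_feat)
print(probs.shape)
```

Each row of `probs` is a probability distribution over the three classes. In a real pipeline the two input arrays would come from the pre-trained backbones rather than a random generator.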

VI. EXPERIMENTAL RESULT AND DISCUSSION

A. Dataset Description

The dataset used in this study consists of skin mole images of nevus, seborrheic keratosis, and malignant skin lesions from the ISIC 2016–17 challenges. These images are freely available from the official ISIC website. The dataset contains 4946 images in total: 1372 in the Nevus category, 1880 in the Melanoma category, and 1694 in the Seborrheic Keratosis category. Of these, 80% were used for training and the remaining 20% were used for testing.
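As a rough check on the numbers above, the following sketch computes the sizes of an 80:20 split from the reported class counts. The split is assumed here to be stratified by class, which the article does not state, and the function name is hypothetical.

```python
# Class counts as reported in the dataset description.
class_counts = {
    "nevus": 1372,
    "melanoma": 1880,
    "seborrheic_keratosis": 1694,
}
TRAIN_FRACTION = 0.8


def stratified_split_sizes(counts, train_fraction):
    """Return {label: (n_train, n_test)}, assuming a per-class split."""
    sizes = {}
    for label, n_images in counts.items():
        n_train = round(n_images * train_fraction)
        sizes[label] = (n_train, n_images - n_train)
    return sizes


sizes = stratified_split_sizes(class_counts, TRAIN_FRACTION)
total = sum(class_counts.values())
n_train = sum(train for train, _ in sizes.values())
n_test = sum(test for _, test in sizes.values())

for label, (train, test) in sizes.items():
    print(f"{label}: train={train}, test={test}")
print(f"total={total}, train={n_train}, test={n_test}")
```

Under this assumption the split comes to 3957 training images and 989 test images out of 4946. A non-stratified random split would give similar totals but different per-class counts.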

B. Results and Outcomes

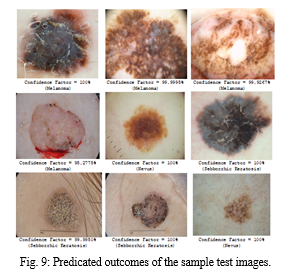

We partitioned the dataset 80:20 into a training set and a test set. During training, the model typically reached an accuracy above 90%. We found that the model overfits the data when it is trained for more than 100 iterations and underfits when it is trained for only five iterations. We therefore restricted training to between 10 and 90 iterations, the range that gave the best results. We also manually inspected a number of test images to check whether each skin lesion image was correctly identified as malignant or benign. The results for the sample test images are shown in Fig. 9.

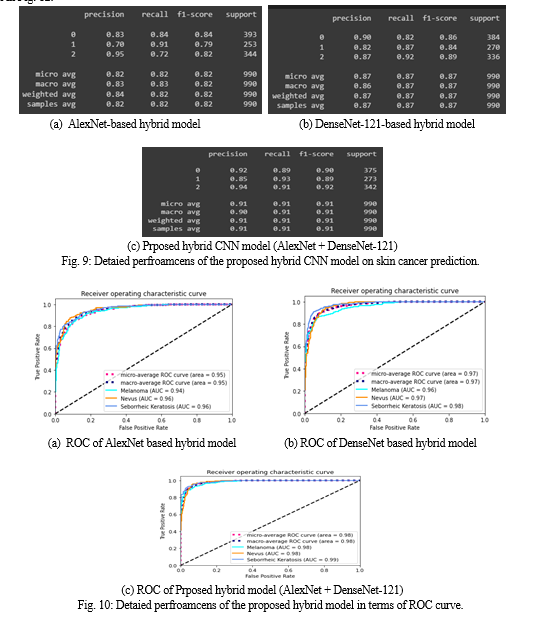

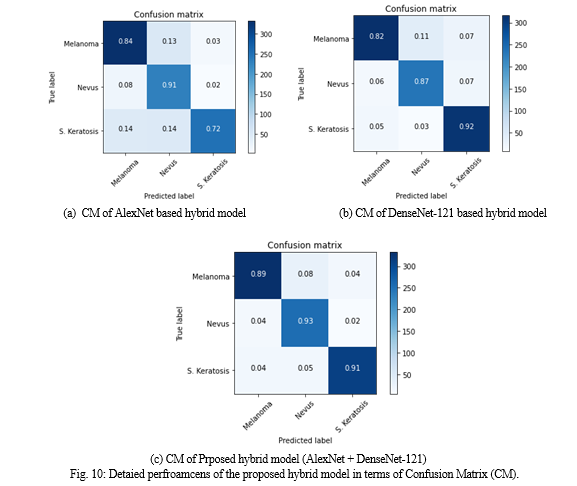

Adam served as the optimizer, the learning rate was set to 1, and the batch size was 32. The proposed hybrid model was trained five times, each for fewer than 100 epochs, to increase the credibility of the comparative study. Because this work uses transfer learning, the final classification layer is kept the same for both the DenseNet-121 and AlexNet models. Once trained, the model is used for testing and validation. Fig. 10(a) shows in detail the performance of the model when only the AlexNet network is used as the backbone; here, we achieved a precision of 0.8192. Fig. 10(b) shows the performance of the model when only the DenseNet-121 network is used as the backbone; here, we recorded a precision of 0.8626. Fig. 10(c) shows the performance of the hybrid model, which uses both the AlexNet and DenseNet-121 networks as backbones; here, we recorded a precision of 0.9065. The ROC curves of the proposed hybrid model are shown in Fig. 11, and its confusion matrices are shown in Fig. 12.

As the results above show, the proposed hybrid model provided the best average test accuracy of the three models. Although AlexNet and DenseNet-121 were used individually in the majority of similar research, the proposed hybrid CNN model beat both of them on this dataset. This shows that, even within the same field of work, no single model is guaranteed to perform best. According to the recorded results, the hybrid model is the better option for the task of classifying skin lesions.
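For readers who want to see how precision, recall, and F-measure relate to a three-class confusion matrix of the kind shown in Fig. 12, the following sketch computes them per class and macro-averaged. The matrix is a made-up toy example, not the article's results, and the function names are hypothetical. Rows are taken to be true classes and columns predicted classes.

```python
def per_class_metrics(confusion):
    """Return a list of (precision, recall, f1), one tuple per class."""
    n_classes = len(confusion)
    metrics = []
    for k in range(n_classes):
        true_pos = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n_classes))  # column sum
        actual_k = sum(confusion[k])                                  # row sum
        precision = true_pos / predicted_k if predicted_k else 0.0
        recall = true_pos / actual_k if actual_k else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics.append((precision, recall, f1))
    return metrics


def macro_average(metrics):
    """Unweighted mean of each metric across classes."""
    n_classes = len(metrics)
    return tuple(sum(m[j] for m in metrics) / n_classes for j in range(3))


def accuracy(confusion):
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total


# Toy matrix for illustration only (NOT data from the article).
toy_confusion = [
    [8, 1, 1],
    [2, 7, 1],
    [0, 2, 8],
]

macro_precision, macro_recall, macro_f1 = macro_average(per_class_metrics(toy_confusion))
print(macro_precision, macro_recall, macro_f1, accuracy(toy_confusion))
```

Accuracy and macro-averaged precision are different quantities and only coincide in special cases, so it matters which one a reported figure refers to.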

Conclusion

This article addressed the importance of automated classification systems that aid skin lesion diagnosis. We discussed several strategies used to improve prediction accuracy, which may be useful in healthcare. Numerous issues and challenges remain, however, especially when studying clinical images, which typically exhibit wide variation due to factors such as the camera used and other ambient influences. In this work, we therefore proposed a hybrid CNN model that combines the strengths of two distinct CNN models, AlexNet and DenseNet-121, to classify three types of skin lesions with significantly improved results. The work also provides a systematic set of general steps for model selection that may be applied to other applications, and it shows how the best-performing models can be used to construct a hybrid CNN model. Finally, through a number of experiments, we demonstrated the effectiveness and robustness of the proposed scheme in terms of average precision, recall, and F-measure.

References

[1] Jayapriya K, Jacob IJ. Hybrid fully convolutional networks-based skin lesion segmentation and melanoma detection using deep feature. International Journal of Imaging Systems and Technology. 2020 Jun; 30(2):348-57.

[2] Murphree DH, Ngufor C. Transfer learning for melanoma detection: Participation in ISIC 2017 skin lesion classification challenge. arXiv preprint arXiv:1703.05235. 2017 Mar 15.

[3] Qassim H, Verma A, Feinzimer D. Compressed residual-VGG16 CNN model for big data places image recognition. In 2018 IEEE 8th annual computing and communication workshop and conference (CCWC) 2018 Jan 8; 169-175.

[4] Ganster H, Pinz P, Rohrer R, Wildling E, Binder M, Kittler H. Automated melanoma recognition. IEEE transactions on medical imaging. 2001 Mar; 20(3):233-9.

[5] Salah B, Alshraideh M, Beidas R, Hayajneh F. Skin cancer recognition by using a neuro-fuzzy system. Cancer informatics. 2011 Jan;10:CIN-S5950.

[6] Ramlakhan K, Shang Y. A mobile automated skin lesion classification system. In 2011 IEEE 23rd International Conference on Tools with Artificial Intelligence 2011 Nov 7; 138-141.

[7] Kawahara J, BenTaieb A, Hamarneh G. Deep features to classify skin lesions. In 2016 IEEE 13th international symposium on biomedical imaging (ISBI) 2016 Apr 13; 1397-1400.

[8] Lopez AR, Giro-i-Nieto X, Burdick J, Marques O. Skin lesion classification from dermoscopic images using deep learning techniques. In 2017 13th IASTED international conference on biomedical engineering (BioMed) 2017 Feb 20; 49-54.

[9] Mirunalini P, Chandrabose A, Gokul V, Jaisakthi SM. Deep learning for skin lesion classification. arXiv preprint arXiv:1703.04364. 2017 Mar 13.

[10] Szegedy C, Vanhoucke V, Ioffe S, Shlens J, Wojna Z. Rethinking the inception architecture for computer vision. In Proceedings of the IEEE conference on computer vision and pattern recognition 2016; 2818-2826.

[11] Pradhan J, Kumar S, Pal AK, Banka H. Texture and color visual features based CBIR using 2D DT-CWT and histograms. In International Conference on Mathematics and Computing 2018 Jan 9 (pp. 84-96). Springer, Singapore.

[12] Majhi M, Pal AK, Pradhan J, Islam SK, Khan MK. Computational intelligence based secure three-party CBIR scheme for medical data for cloud-assisted healthcare applications. Multimedia Tools and Applications. 2021 Feb 16:1-33.

[13] Keles A, Keles MB, Keles A. COV19-CNNet and COV19-ResNet: diagnostic inference Engines for early detection of COVID-19. Cognitive Computation. 2021 Jan 6:1-1.

[14] Kumar S, Pradhan J, Pal AK. A CBIR technique based on the combination of shape and color features. In Advanced Computational and Communication Paradigms 2018; 737-744.

[15] Soni P, Pradhan J, Pal AK, Islam SH. Cybersecurity Attack-resilience Authentication Mechanism for Intelligent Healthcare System. IEEE Transactions on Industrial Informatics. 2022 Jun 1.

[16] Pradhan J, Bhaya C, Pal AK, Dhuriya A. Content-Based Image Retrieval using DNA Transcription and Translation. IEEE Transactions on NanoBioscience. 2022 Apr 29.

[17] Raj A, Pradhan J, Pal AK, Banka H. Multi-scale image fusion scheme based on non-sub sampled contourlet transform and four neighborhood Shannon entropy scheme. In 2018 4th International Conference on Recent Advances in Information Technology (RAIT) 2018 Mar 15; 1-6.

[18] Pradhan J, Pal AK, Banka H. Medical Image Retrieval System Using Deep Learning Techniques. In Deep Learning for Biomedical Data Analysis 2021;101-128.

[19] Huang G, Liu Z, Van Der Maaten L, Weinberger KQ. Densely connected convolutional networks. In Proceedings of the IEEE conference on computer vision and pattern recognition 2017; 4700-4708.

[20] Ji Q, Huang J, He W, Sun Y. Optimized deep convolutional neural networks for identification of macular diseases from optical coherence tomography images. Algorithms. 2019 Feb 26;12(3):51.

Copyright

Copyright © 2022 Preeti Gupta, Sachin Mesram. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET45325

Publish Date : 2022-07-04

ISSN : 2321-9653

Publisher Name : IJRASET
