Ijraset Journal For Research in Applied Science and Engineering Technology

Animal Specie Detection Using Deep Convolution Neural Network

Authors: Shubham Bhandari, Shruti Gautam, Shubham Sharma, Neelam Srivastav

DOI Link: https://doi.org/10.22214/ijraset.2022.42388

Abstract

India is rich in wildlife. Species such as the Bengal tiger, rhinoceros, and leopard are found across its northern and southern regions, and many of them are close to extinction, with only a few individuals remaining. Continuous monitoring and research are therefore important for tracking their populations. Monitoring animals in the forest one by one is tedious and time-consuming, so cameras are now installed in animal habitats to acquire videos and pictures, which saves time and money. The images captured by these cameras are often hard to interpret because they are blurred, contain no animal, or make the animal difficult to detect, which leads to doubt and error. To address this obstacle, we propose a database-driven approach: images of different animal species, analysed in the spatiotemporal domain, are stored as a dataset, and pictures captured in the forest are compared with this dataset to identify whether a picture contains an animal or not. The animal detection model is built on self-learned features from a Deep Convolutional Neural Network (DCNN). Classification uses machine learning algorithms: support vector machine, k-nearest neighbour, and ensemble tree. The proposed system classifies pictures with up to 91% accuracy.

Introduction

I. INTRODUCTION

A large amount of data on wildlife is now available for many countries, describing animal behaviour, features, and habitat. Camera-trap techniques are widely used for wildlife monitoring and image analysis because they are easy to use and low in cost. With so much data available, it becomes easier to analyse and compare the behaviour and growth of species in different parts of the world, and to study the impact of human intervention on wildlife and biodiversity across seasons, areas, and species. To observe and record wild animals, sensor-based cameras are mounted on trees to form a camera-trap network. A camera trap is triggered each time motion is detected and records a short video of the animal together with details of its surroundings. Camera-trap networks are vital for gathering information on wildlife without disturbing it. They are also affordable, simple to deploy over large areas, and cheap to maintain, so they are widely used for wildlife monitoring. Camera-trap images readily provide visual information about animals, which helps in understanding the behaviour and biometric features of species along with important information about their habitat and surroundings. Recently, a huge collection of camera-trap images has been acquired, which exceeds the capacity of manual annotation and image analysis. There is a pressing need for tools that process these large collections automatically, for example for animal identification, segmentation, extraction, and tracking. This work proposes a technique to recognize wild animals using a CNN applied to camera-trap images.

Before the DCNN technique, deep neural networks such as Region-based Convolutional Neural Networks (RCNN) were used for object recognition. Object recognition consists of two parts: first, a region proposal technique finds the regions of the image that may contain the objects of interest; second, a classifier decides whether each region contains such an object. For animal detection, object localization faces problems of accuracy and speed because the image sequences obtained from camera traps are highly dynamic and highly cluttered. The available region proposal approaches generate a huge number of candidate regions. It is therefore essential to examine the characteristics of camera-trap image sequences in the spatiotemporal domain in order to design an efficient region proposal approach that produces few candidate regions. We therefore analyse camera-trap image sequences with the Iterative Embedded Graph Cut (IEGC) method to produce a small set of candidate animal regions. For better classification of candidate animal regions, DCNN features are used with various classifiers, such as the Support Vector Machine (SVM) and its variants, and ensemble classifiers.

In this research we consider both animal motion and spatial context to generate candidate animal regions using IEGC. In addition, we observe that DCNN image features work well and improve classification performance with various classifiers. We have designed a robust and reliable animal detection model based on camera-trap image sequences containing highly dynamic and cluttered images. Previous studies relevant to wildlife detection cover foreground-background segmentation, object detection, image classification, and verification.

Existing work on background subtraction was carried out under the assumption of a static background. Many models for background segmentation use a base frame that consists of only background. The challenge of a dynamic background has been handled by adjusting the local segmentation sensitivity using feedback loops at the pixel level of the frames in a video.

We observe that reliable and efficient object segmentation and detection require dropping the assumption of a fixed background. For segmentation in dynamic scenes, there are several aspects to consider, e.g., spatial context, graph cut at region level, scene analysis at pixel level, and foreground-background identification at sequence level. X. Ren et al. [1] used camera-trap images obtained from highly dynamic scenes with a reliable and efficient object cut method.

Recent work in object identification and detection demonstrates the effectiveness of DCNNs [14]. To avoid an exhaustive analysis of the whole image, only the regions of interest containing the animals and their surroundings are examined, which results in fast DCNN-based object detection. Several studies have addressed object detection using an object proposal approach. C. Szegedy et al. used a Deep Neural Network (DNN) to predict objects through bounding-box object regions using regression models. Sermanet et al. [18] modelled a fully connected neural network for predicting box coordinates and localizing objects. D. Erhan et al. used a DNN for region proposal and multi-class prediction.

Image verification is relevant because it is the step in which a candidate object proposal is verified, i.e., whether an animal is present or not. The process of image verification has two key stages: feature representation and distance or similarity measures. Features can be handcrafted, for example HOG or Haar-like descriptors. In this work, we use features extracted by a DCNN from camera-trap data.

II. ALGORITHM USED

Reliable and robust wildlife detection from the highly dynamic and cluttered image sequences of a camera-trap network is a difficult task. To achieve high performance, images should be analysed at pixel or small-region level. However, because of low contrast and cluttered images, it is hard to tell from local information alone whether a particular region or pixel represents animal or background. We therefore also need to analyse global image features. For instance, a region of an animal's body may be mistaken for a background region. In such a case, local information processing will not be sufficient, and global processing (to extract global image features) is required to detect the animal. For instance, to recognize whether an animal is present or not, one should also detect body parts such as the head and legs rather than the body alone.

A. Animal-Background Verification Model

In this work, we have designed a model that verifies animal and background patches from camera-trap images. The challenges for the model are the large variations in background, such as dynamic background texture, changes in the position of irrelevant objects (such as leaves and branches), and illumination differences due to weather, season, and shadows. The features must therefore be invariant to all of these changes. In addition, our model has to work with candidate animal patches of variable sizes and aspect ratios, since they are obtained through ensemble graph cuts.

To address these challenges, we present the following design for the animal-background verification model. Our design has three stages: (1) preprocessing, (2) adapted DCNN features, and (3) classification through learning algorithms.

B. Animal Detection Model

Existing literature has shown that DCNN features are very effective descriptors for object recognition, classification, retrieval, and similar tasks. A DCNN has several convolutional layers and at least one fully connected layer. For translation-invariant features, a DCNN has pooling layers. In our work, we follow the architecture described by Krizhevsky et al., using the VGG-F pretrained model. The pretrained model was learned on the large auxiliary ILSVRC 2012 dataset. It expects an input image size of 224 × 224; hence, we resize the images to 224 × 224 without regard to their original size and aspect ratio.
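As an illustration of the resizing step, the following minimal Python sketch rescales a patch to a fixed 224 × 224 grid while ignoring its aspect ratio. It is not the implementation used in this work: the function name `resize_nearest`, the nearest-neighbour sampling, and the synthetic 300 × 400 patch are assumptions chosen only to keep the example self-contained.

```python
def resize_nearest(image, out_h=224, out_w=224):
    """Resize a 2-D image (a list of rows) with nearest-neighbour sampling.

    The aspect ratio is deliberately ignored, so the output is always
    out_h x out_w regardless of the input shape.
    """
    in_h, in_w = len(image), len(image[0])
    resized = []
    for y in range(out_h):
        # Map the output row back to the closest source row.
        src_row = image[min(in_h - 1, y * in_h // out_h)]
        resized.append(
            [src_row[min(in_w - 1, x * in_w // out_w)] for x in range(out_w)]
        )
    return resized


# Hypothetical 300 x 400 grayscale patch filled with a simple gradient.
patch = [[(x + y) % 256 for x in range(400)] for y in range(300)]
fixed = resize_nearest(patch)
print(len(fixed), len(fixed[0]))
```

Because every patch passes through the same mapping, all inputs to the network are distorted in the same way.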

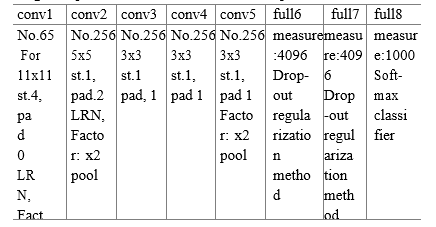

Resizing introduces image distortions, which can be ignored because all the images undergo the same distortions, so the effect of resizing is negligible. The DCNN gives a feature vector of 1000 dimensions. We use DCNN features because they are self-learned features that improve the performance of the system, and they capture information that represents the pixels of an image, such as edges and shapes. The VGG-F model is specified in Table 1.

As can be seen from Table 1, there are five convolutional layers, conv1–5, and three fully connected layers, full6–8. The parameters of each layer are given in Table 1: for a convolutional layer, the number of filters with their size, the stride value, the spatial padding, and the down-sampling factor of max-pooling. Stride specifies the step with which the filter moves across the spatial dimensions of the input, while padding specifies the size of the border added around the input to a convolutional layer. The pooling layer that follows a convolutional layer reduces the size of the representations. Pooling also helps to reduce overfitting. Similarly, for the fully connected layers, the dimensionality of each layer is given along with the method used for regularization, and in the last layer a softmax classifier is used that evaluates the deviation of the output from the target.
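The effect of stride and padding on the size of a layer's output can be sketched with the standard output-size formula, floor((n + 2p − k) / s) + 1, for input size n, filter size k, stride s, and padding p. The numbers below (an 11 × 11 filter with stride 4 and no padding, followed by 2 × 2 pooling with stride 2) are illustrative assumptions and are not read from Table 1.

```python
def output_size(input_size, kernel_size, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer along one axis."""
    return (input_size + 2 * padding - kernel_size) // stride + 1


# Illustrative values only: a 224-pixel input, 11 x 11 filter, stride 4, no padding.
conv_out = output_size(224, kernel_size=11, stride=4, padding=0)

# A 2 x 2 max-pooling window with stride 2 roughly halves the representation.
pool_out = output_size(conv_out, kernel_size=2, stride=2)

print(conv_out, pool_out)
```

A larger stride or a pooling layer shrinks the representation, while padding offsets the shrinkage caused by the filter size.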

III. EXPERIMENTAL RESULTS

A. Datasets

We used a standard camera-trap dataset for experimentation and for evaluating system performance. Camera-trap images allow the system to be evaluated for wildlife detection even in highly dynamic and highly cluttered natural scenes. The dataset contains 20 species of animals with around 100 image sequences for each species. The images are available in both daytime and night-time formats, which yields a wildlife monitoring system for both day and night. Camera-trap networks provide complex, highly cluttered natural videos as well as high-resolution images. The image resolution varies from 1920 × 1080 to 2048 × 1536. The number of images in each sequence varies from 10 to 300, which is quite small. The number of images in a sequence depends on the duration of the animal's activity. A total of 1110 patches are extracted from the dataset using the given bounding boxes and their locations.
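Patch extraction from a bounding box can be sketched as a simple crop. The box format `(x, y, width, height)`, the clipping to the image bounds, and the toy 6 × 8 image are assumptions made for illustration; the dataset's actual annotation format is not specified here.

```python
def crop_patch(image, box):
    """Crop a patch from a 2-D image (a list of rows).

    box is assumed to be (x, y, width, height) in pixels; the box is
    clipped so that it never reaches outside the image.
    """
    x, y, w, h = box
    img_h, img_w = len(image), len(image[0])
    x0, y0 = max(0, x), max(0, y)
    x1, y1 = min(img_w, x + w), min(img_h, y + h)
    return [row[x0:x1] for row in image[y0:y1]]


# Toy 6 x 8 image whose pixel value encodes its position (row * 10 + column).
image = [[r * 10 + c for c in range(8)] for r in range(6)]
patch = crop_patch(image, (2, 1, 3, 2))
print(patch)
```

Patches produced this way have variable sizes and aspect ratios, which is why they are resized before feature extraction.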

IV. RESULT AND ANALYSIS

We used the following evaluation measures to study the results of the system:

(1) TPR: True Positive Rate

(2) PPV: Positive Predictive Value

(3) FDR: False Discovery Rate

(4) F1-measure

(5) AUC: Area Under Curve

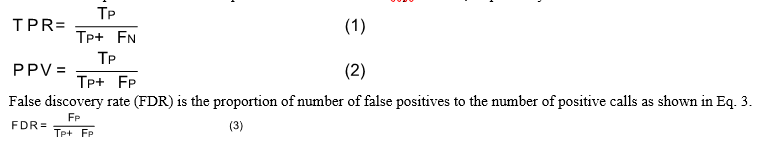

TPR is the ratio of the number of true positives to the total number of positives in the sample, and PPV (positive predictive value) is the ratio of the number of true positives to the number of positive calls, as shown in Eqs. 1 and 3, respectively.

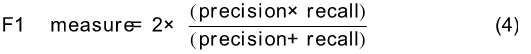

F1-measure is the trade-off between recall and precision, as shown in Eq. 4. The area under curve (AUC) is a measure of the overall performance of the classifier; a higher AUC indicates better classifier performance.
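The measures described above can be computed directly from confusion-matrix counts. The sketch below uses the standard definitions, with recall taken as TPR and precision as PPV, FDR as the fraction of positive calls that are wrong, and F1 as the harmonic mean of precision and recall. The counts are hypothetical and are not results from this work; the function assumes non-zero denominators.

```python
def detection_metrics(tp, fp, fn):
    """Compute TPR, PPV, FDR and F1 from true/false positive and false negative counts."""
    tpr = tp / (tp + fn)                # recall: true positives over all actual positives
    ppv = tp / (tp + fp)                # precision: true positives over all positive calls
    fdr = fp / (tp + fp)                # false discovery rate, equal to 1 - PPV
    f1 = 2 * ppv * tpr / (ppv + tpr)    # harmonic mean of precision and recall
    return {"TPR": tpr, "PPV": ppv, "FDR": fdr, "F1": f1}


# Hypothetical counts chosen only to illustrate the arithmetic.
metrics = detection_metrics(tp=90, fp=10, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```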

We achieved an average accuracy of 90.7% with SVMs, KNNs, and ensemble classifiers. The experiments were performed using variants of SVM: linear SVM, quadratic SVM, cubic SVM, and medium Gaussian SVM. The highest accuracies achieved using nonlinear SVMs, i.e., quadratic and cubic, are 91.1% and 91.2%, respectively. Weighted KNN and the ensemble boosted tree also perform as well as SVM, with 91.4% and 91.2% accuracy, respectively. We observed that the proposed system obtained good results on highly cluttered images. The overall accuracy of the system lies in the range of 89–91.4%, and the highest accuracy, 91.4%, is achieved with the weighted KNN classifier. The results show that DCNN features give good results with various machine learning algorithms.
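To illustrate how a weighted KNN classifier differs from a plain majority vote, the following sketch weights each neighbour by the inverse of its squared distance to the query. The weighting scheme, the function name `weighted_knn_predict`, and the toy two-dimensional feature vectors are assumptions; the exact weighting used in the experiments is not stated, and real DCNN features would have 1000 dimensions.

```python
import math
from collections import defaultdict


def weighted_knn_predict(train_x, train_y, query, k=3):
    """Predict a label by distance-weighted voting among the k nearest samples."""
    # Sort the training samples by Euclidean distance to the query.
    neighbours = sorted(
        (math.dist(x, query), label) for x, label in zip(train_x, train_y)
    )
    votes = defaultdict(float)
    for distance, label in neighbours[:k]:
        # Assumed weighting: inverse squared distance (small constant avoids division by zero).
        votes[label] += 1.0 / (distance * distance + 1e-12)
    return max(votes, key=votes.get)


# Toy feature vectors standing in for DCNN features of candidate patches.
features = [(0.0, 0.0), (2.0, 0.0), (2.1, 0.0)]
labels = ["animal", "background", "background"]
prediction = weighted_knn_predict(features, labels, (0.1, 0.0), k=3)
print(prediction)
```

In this toy case an unweighted vote over three neighbours would favour the two distant background samples, whereas the distance weighting lets the single very close animal sample decide.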

Conclusion

In this paper, we have proposed a reliable and robust method for animal detection in highly cluttered images using a DCNN. The cluttered images are obtained from camera-trap networks. The camera-trap image sequences also provide the candidate animal region proposals, generated by multilevel graph cut. We have introduced a verification step in which the proposed region is classified as animal or background, thus determining whether the proposed region really is an animal or not. We applied DCNN features to machine learning algorithms to achieve better performance. The experimental results show that the proposed system is an efficient and robust wild animal detection system for both daytime and night-time.

References

[1] R. Kays et al., "eMammal: Citizen science camera trapping as a solution for broad-scale, long-term monitoring of wildlife populations," in Proc. North Am. Conservation Biol., 2014, pp. 80–86.

[2] V. Mahadevan and N. Vasconcelos, "Background subtraction in highly dynamic scenes," in Proc. IEEE Conf. Comput. Vis. Pattern Recog., Jun. 2008, pp. 1–6.

[3] R. Girshick, J. Donahue, T. Darrell, and J. Malik, "Rich feature hierarchies for accurate object detection and semantic segmentation," in Proc. IEEE Conf. Comput. Vis. Pattern Recog., Jun. 2014, pp. 580–587.

[4] R. Girshick, "Fast R-CNN," in Proc. Int. Conf. Comput. Vis., 2015, pp. 1440–1448.

[5] S. Ren, K. He, R. Girshick, and J. Sun, "Faster R-CNN: Towards real-time object detection with region proposal networks," in Adv. Neural Inf. Process. Syst., 2015, pp. 91–99.

[6] J. R. Uijlings, K. E. van de Sande, T. Gevers, and A. W. Smeulders, "Selective search for object recognition," Int. J. Comput. Vis., vol. 104, no. 2, pp. 154–171, 2013.

[7] M. M. Cheng, Z. Zhang, W. Y. Lin, and P. Torr, "BING: Binarized normed gradients for objectness estimation at 300fps," in Proc. IEEE Conf. Comput. Vis. Pattern Recog., Jun. 2014, pp. 3286–3293.

[8] P.-L. St-Charles, G.-A. Bilodeau, and R. Bergevin, "Flexible background subtraction with self-balanced local sensitivity," in Proc. IEEE Conf. Comput. Vis. Pattern Recog. Workshops, Jun. 2014, pp. 414–419.

[9] M. Oquab, L. Bottou, I. Laptev, and J. Sivic, "Learning and transferring mid-level image representations using convolutional neural networks," in Proc. IEEE Conf. Comput. Vis. Pattern Recog., Jun. 2014, pp. 1717–1724.

[10] A. S. Razavian, H. Azizpour, J. Sullivan, and S. Carlsson, "CNN features off-the-shelf: An astounding baseline for recognition," in Proc. IEEE Conf. Comput. Vis. Pattern Recog. Workshops, Jun. 2014, pp. 512–519.

Copyright

Copyright © 2022 Shubham Bhandari, Shruti Gautam, Shubham Sharma, Neelam Srivastav. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET42388

Publish Date : 2022-05-08

ISSN : 2321-9653

Publisher Name : IJRASET
