Ijraset Journal For Research in Applied Science and Engineering Technology


Assistive Devices for Visually, Audibly and Verbally Impaired People

Authors: A. Bhagyashree, Akheelesh B Palled, Ranjith Kumar, Akshay Hegde, Saravana Kumar

DOI Link: https://doi.org/10.22214/ijraset.2022.45267


Abstract

Some people do not have the ability to speak, or lose it in an accident, and find it difficult to express their thoughts or convey their message to other people. In this project we propose a Sign Language Glove that will assist people suffering from any kind of speech defect to communicate through gestures: using single-handed sign language, the user makes gestures for the letters of the alphabet. The glove will record the gestures made by the user and translate them into both visual and audio form. An Arduino controller controls all the processes, while flex sensors and accelerometer sensors track the movement of the fingers and of the entire palm. An LCD will display the user's gesture, and a speaker that translates the gesture into an audio signal is planned, if feasible. The project can be further developed to recognize more complex gestures for words such as food and water.


I. INTRODUCTION

Talking Hands is a pair of gloves that recognizes the signs of Indian Sign Language made by the hands and converts them into speech, helping speech-impaired people communicate with others. It is implemented through Arduino programming and a smartphone application. According to recent statistics, about 7.5% of Indians are speech challenged, and Indian Sign Language is the only mode of communication they use. Sign languages are difficult for non-signers to understand and highly complex to learn.

The day-to-day functioning and independence of people with disabilities can be improved by using products based on assistive technology.

A reliable sign language recognition system can allow a speech-challenged person to communicate with non-signing people without the need for an interpreter. It can produce speech or text output, making mute people more self-dependent. Unfortunately, no system with these facilities exists so far: research to date has been restricted to small-scale systems capable of recognizing only a small subset of a full sign language, and these systems have not been effective enough to make their users independent.

Technology has always been of great help to people with disabilities, allowing them to live normal and healthy lives like others. We have come up with a novel idea of a glove named Handtalk that converts hand movements into text and allows deaf people to express themselves better.

The Handtalk glove is worn on the hand by the deaf or mute person, and, depending on the movement made, the device converts it into voice so that the other person can understand it easily.

The Handtalk glove senses movements through flex sensor pads, which detect different patterns of motion and the way each finger curls. The device senses the change in resistance produced by each movement of the hand. Currently the device can convert only a few words, but depending on its success, more words may be added to this expressive system later.
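The resistance-sensing step can be illustrated with a short C sketch. The paper does not give the sensor circuit, so this assumes each flex sensor forms a voltage divider with a fixed resistor and is read by a 10-bit ADC such as the one on an Arduino Uno. The resistor and calibration values (`R_FIXED`, `R_FLAT`, `R_BENT`), the linear resistance-to-angle mapping and the helper names are all hypothetical and would have to be measured and chosen for real sensors.

```c
/* Hypothetical circuit and calibration values; measure them for real sensors. */
#define ADC_MAX  1023.0    /* full-scale reading of a 10-bit ADC */
#define R_FIXED  47000.0   /* fixed divider resistor, ohms */
#define R_FLAT   25000.0   /* flex resistance with the finger straight, ohms */
#define R_BENT   100000.0  /* flex resistance with the finger bent 90 degrees, ohms */

/* Flex sensor between Vcc and the ADC pin, R_FIXED between the ADC pin and
 * ground, so adc / ADC_MAX = R_FIXED / (R_flex + R_FIXED). Solving for R_flex: */
double flex_resistance(int adc)
{
    if (adc <= 0)
        return -1.0; /* sensor open or disconnected */
    return R_FIXED * (ADC_MAX / adc - 1.0);
}

/* Linear interpolation from resistance to bend angle, clamped to 0..90 degrees. */
double bend_angle_deg(double r_flex)
{
    double angle = (r_flex - R_FLAT) * 90.0 / (R_BENT - R_FLAT);
    if (angle < 0.0)
        angle = 0.0;
    if (angle > 90.0)
        angle = 90.0;
    return angle;
}
```

For example, a reading of 341 corresponds to 94 kΩ, which this calibration maps to a bend of about 83 degrees. A linear mapping is only an approximation; a per-finger table recorded during calibration would likely be more accurate.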

Gestures are converted to voice using an APR9600 voice storage and retrieval chip. Prerecorded voice messages are stored in the APR9600 memory, and when a corresponding gesture is recognized, the APR9600 plays the appropriate message through the speaker.
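How a recognized gesture could select one of the prerecorded messages is sketched below. This assumes the APR9600 is wired so that each message has its own active-low trigger input (M1 to M8) driven by Arduino output pins; the gesture names, the recording order in `gesture_to_message` and the helper `trigger_pattern` are hypothetical. The function only computes the 8-bit pin pattern, so it runs on any C compiler; on the Arduino each bit would then be written to its pin with `digitalWrite`.

```c
#include <stdint.h>

/* Hypothetical gesture IDs produced by the recognizer. */
enum gesture { G_HELLO, G_WATER, G_FOOD, G_HELP, G_COUNT };

/* Message slot (0..7 for M1..M8) holding each gesture's recording.
 * Assumed recording order: "water", "food", "help", "hello". */
static const uint8_t gesture_to_message[G_COUNT] = { 3, 0, 1, 2 };

/* Hypothetical helper: returns the 8-bit pattern for trigger lines M1..M8
 * (bit 0 = M1). The lines are assumed active-low: 0xFF means "play nothing",
 * and clearing bit k starts the message stored in slot k. */
uint8_t trigger_pattern(int gesture)
{
    if (gesture < 0 || gesture >= G_COUNT)
        return 0xFF; /* unknown gesture: keep every line idle */
    return (uint8_t)(0xFFu & ~(1u << gesture_to_message[gesture]));
}
```

With this table, `trigger_pattern(G_WATER)` clears bit 0 (pattern `0xFE`), starting the first recorded message, while an unknown gesture leaves all lines high. Keeping the mapping in a table lets messages be re-recorded in any order without changing the recognizer.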

Sign language is the language used by deaf and mute people. It is a communication skill that uses gestures instead of sound to convey meaning, simultaneously combining hand shapes, orientations and movements of the hands, arms or body, together with facial expressions, to express a speaker's thoughts fluidly. Signs are used to communicate words and sentences to an audience. A gesture in a sign language is a particular movement of the hands with a specific shape made out of them. A sign language usually provides signs for whole words, and it also provides signs for letters so that words without a corresponding sign can be spelled out.
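Because the glove recognizes single-handed alphabet gestures, spelled words have to be assembled one letter at a time before they are shown on the LCD. The sketch below is one possible approach, not the authors' code: it assumes one recognized letter per sensor frame (or `'\0'` when none is recognized), a hypothetical hold time of `HOLD_FRAMES` frames before a letter is accepted, and a 16-column character LCD whose line scrolls left when full. The type and function names are hypothetical.

```c
#include <string.h>

#define LCD_COLS    16 /* assumed 16x2 character LCD, one line used */
#define HOLD_FRAMES 5  /* hypothetical: frames a letter must be held */

typedef struct {
    char line[LCD_COLS + 1]; /* text for the LCD line, NUL-terminated */
    char candidate;          /* letter seen in the most recent frames */
    int count;               /* consecutive frames showing that letter */
} speller_t;

void speller_init(speller_t *s)
{
    memset(s, 0, sizeof *s);
}

/* Feed one recognized letter per frame ('\0' means no letter recognized).
 * A letter is appended once, when it has been held for HOLD_FRAMES frames. */
void speller_feed(speller_t *s, char letter)
{
    if (letter != s->candidate) {
        s->candidate = letter;
        s->count = 0;
    }
    if (letter == '\0')
        return;
    if (s->count < HOLD_FRAMES && ++s->count == HOLD_FRAMES) {
        size_t len = strlen(s->line);
        if (len == LCD_COLS) { /* line full: scroll left by one character */
            memmove(s->line, s->line + 1, LCD_COLS - 1);
            len = LCD_COLS - 1;
        }
        s->line[len] = letter;
        s->line[len + 1] = '\0';
    }
}
```

A repeated letter, as in "LL", is entered by briefly relaxing the hand (one frame with no letter) between the two gestures; holding a letter longer does not repeat it.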

II. LITERATURE SURVEY

A. Anbarasi Rajamohan, Hemavathy R

“Deaf-Mute Communication Interpreter”, International Journal of Scientific Engineering and Technology, 1 May 2013, Volume 2 Issue 5, pp.336-341.

The project provides communication by means of a glove-based deaf-mute communication interpreter system. The glove is internally equipped with five flex sensors, tactile sensors and an accelerometer. For each specific gesture, the flex sensors produce a proportional change in resistance, and the accelerometer measures the orientation of the hand.
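To illustrate how an accelerometer can supply hand orientation as a gesture feature, the sketch below classifies a static reading by the axis along which gravity dominates. The mounting (z axis out of the back of the hand, x toward the fingertips, y toward the thumb), the orientation names and the function `hand_orientation` are assumptions; when the hand is still, the sensor reads about +1 g on whichever axis points upward.

```c
/* Coarse hand orientations; names assume the sensor is mounted on the back
 * of the hand with z out of the back, x toward the fingertips, y toward the thumb. */
typedef enum {
    PALM_DOWN, PALM_UP, FINGERS_UP, FINGERS_DOWN, THUMB_UP, THUMB_DOWN
} orientation_t;

static double abs_d(double v) { return v < 0.0 ? -v : v; }

/* Hypothetical classifier: takes a static reading (any unit, e.g. g) and
 * returns the orientation for the axis along which gravity dominates. */
orientation_t hand_orientation(double ax, double ay, double az)
{
    double mx = abs_d(ax), my = abs_d(ay), mz = abs_d(az);
    if (mz >= mx && mz >= my)
        return az > 0.0 ? PALM_DOWN : PALM_UP;
    if (my >= mx)
        return ay > 0.0 ? THUMB_UP : THUMB_DOWN;
    return ax > 0.0 ? FINGERS_UP : FINGERS_DOWN;
}
```

Only the sign and relative size of each axis matter here, so no trigonometry is needed. This only holds while the hand is nearly still; during fast movement the reading also contains the hand's own acceleration.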

B. T. Yamunarani, G. Kanimozhi

“Hand gesture recognition system for disabled people using Arduino”, International Journal of Advance Research and Innovative Ideas in Education, Vol-4 Issue-2 2018.

The main aim of this work is to develop a cost-effective system that can give a voice to voiceless people with the help of sign language. The captured images are processed in MATLAB on a PC and converted into speech through a speaker and into text on an LCD interfaced with an Arduino.

C. Moataz Soliman, Tobi Abiodun, Tarek Hamouda, Jiehan Zhou, Chung-Horng Lung

“Smart Home: Integrating Internet of Things with Web Services and Cloud Computing”, 2013 IEEE 5th International Conference on Cloud Computing Technology and Science.

The main focus of this approach is the smart use of an Arduino to create an intelligent prototype by embedding it with sensors and actuators. A case study measures parameters such as light and humidity, controls devices such as air conditioners, ovens and washing machines, and controls the security apparatus of the house using RFID technology.

D. Nikolaos Bourbakis, Anna Esposito, D. Kabraki

“Multi-modal Interfaces for Interaction and Communication between Hearing and Visually Impaired Individuals: Problems & Issues”, 19th IEEE International Conference on Tools with Artificial Intelligence.

The authors propose a prototype, Tyflos-Koufos, to reduce the communication gap between deaf and blind people. The paper discusses in particular the need to convert various modalities into a common medium that is understood and shared by visually and hearing impaired people.

III. MATERIALS

Many smart gloves have been proposed in recent years, mostly using wireless technology and offering many distinct features, but they were not reliable, lightweight, cheap, easy-to-use, plug-and-play prototypes. This is because of the components used for fabrication, which are commonly available in the market, such as flex sensors, microcontrollers and wireless transmitters, powered by a battery that is heavy compared to the other components. As a result, such assemblies are bulky and difficult to use.

- Design of Smart e-Tongue for the Physically Challenged People.

- MyVox—Device for the communication between people: blind, deaf, deaf-blind and unimpaired.

- PiCam: IoT based Wireless Alert System for Deaf and Hard of Hearing.

- Design of a communication aid for physically challenged.

- BLIND READER: An Intelligent Assistant for Blind

IV. OBJECTIVE

- To develop a means of communication between blind, deaf and mute people.

- To develop wearable technology by affixing flex sensors to a glove that records and analyses various hand gestures of visually, audibly and verbally impaired individuals.

- To develop efficient techniques for speech-to-text and text-to-speech conversion.

- To gain knowledge of efficient braille communication.

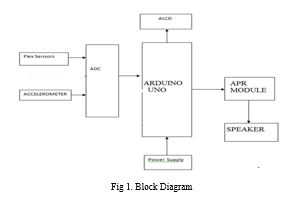

V. BLOCK DIAGRAM

The figure shows the block diagram of the project, which comprises flex sensors, a relay, an Arduino, a speaker, an APR module, an accelerometer, an LCD screen and a power supply. Together they provide both textual and speech output efficiently and enable easier communication between differently abled individuals.

VI. METHODOLOGY

Data is collected from the inputs provided in the prototype, such as the flex sensors and the keypad. The important messages and signs to be conveyed are fed to the Arduino, which is programmed in the high-level language C, and are delivered through the outputs provided in the system, namely the LCD screen and the speaker. This helps blind, deaf and mute people convey these messages not only to people without disabilities but also to other differently abled people. The APR9600 device offers true single-chip voice recording, non-volatile storage and playback capability for 40 to 60 seconds. The device supports both random and sequential access to multiple messages. Its sample rate is user-selectable, allowing designers to trade voice quality against storage time.
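The paper does not specify how the Arduino matches sensor data to stored signs. One simple possibility, shown here as an assumption rather than the authors' method, is nearest-template matching: each sign is stored as five calibrated flex readings, a new reading is assigned to the template with the smallest squared Euclidean distance, and it is rejected if even that distance exceeds a threshold. The template values, the threshold and the names `SIGNS` and `classify` are hypothetical.

```c
#include <limits.h>

#define NUM_FLEX 5 /* one flex sensor per finger, thumb first */

typedef struct {
    const char *text;   /* text shown on the LCD for this sign */
    int flex[NUM_FLEX]; /* calibrated ADC readings for the sign */
} sign_template_t;

/* Hypothetical templates; real values would be recorded from the user. */
static const sign_template_t SIGNS[] = {
    { "HELLO", { 700, 700, 700, 700, 700 } }, /* open hand */
    { "FOOD",  { 350, 350, 350, 350, 350 } }, /* closed fist */
    { "WATER", { 350, 700, 700, 700, 350 } },
};
#define NUM_SIGNS ((int)(sizeof SIGNS / sizeof SIGNS[0]))

/* Illustrative nearest-template matcher: index of the closest template, or
 * -1 if none lies within max_dist2 (squared distance over raw ADC readings). */
int classify(const int reading[NUM_FLEX], const sign_template_t *signs,
             int n, long max_dist2)
{
    int best = -1;
    long best_d = LONG_MAX;
    for (int i = 0; i < n; i++) {
        long d = 0;
        for (int k = 0; k < NUM_FLEX; k++) {
            long diff = (long)reading[k] - signs[i].flex[k];
            d += diff * diff;
        }
        if (d < best_d) {
            best_d = d;
            best = i;
        }
    }
    return best_d <= max_dist2 ? best : -1;
}
```

On a match, `SIGNS[i].text` would be sent to the LCD and the corresponding voice message triggered. Squared distances are kept in `long` so that the largest possible sum (5 × 1023², about 5.2 million) also fits the 32-bit `long` of 8-bit Arduino boards. Accelerometer features could be appended to each template in the same way.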

More sensors can be employed to recognize full sign language. A handy, portable hardware device with a built-in translation system, speakers and a group of body sensors, along with a pair of data gloves, could be manufactured so that a deaf and mute person can communicate with any other person anywhere.

VII. WORKING

- The APR9600 single-chip voice recording and playback device from Aplus Integrated Circuits uses a proprietary analog storage technique implemented in a non-volatile flash memory process, in which each cell can store up to 256 voltage levels.

- Features of the APR9600 include a microphone amplifier, automatic gain control (AGC) circuits, an internal anti-aliasing filter, an integrated output amplifier and message management.

- The voice signal from the microphone is fed into the chip through a differential amplifier.

- The signal is then written into the memory array through a combination of sample-and-hold circuits and analog read/write circuits.

- The incoming voice signal is sampled, and each instantaneous voltage sample is stored in a non-volatile flash memory cell as one of 256 levels, which is equivalent to 8-bit resolution.

- During playback, the stored samples are retrieved from memory and smoothed to form a continuous signal at the speaker.
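The record and playback path described above can be modelled digitally to show what storing 256 levels per cell means. This sketch models the behaviour, not the chip's internal analog circuits: the hypothetical function `quantize` maps a normalized sample to one of 256 levels, and the hypothetical function `smooth` reconstructs the signal with a three-point moving average, used here only as a simple stand-in for the chip's output smoothing.

```c
#include <stddef.h>
#include <stdint.h>

/* Record (behavioural model): map a normalized sample in [-1.0, 1.0]
 * to one of 256 levels (0..255). */
uint8_t quantize(double x)
{
    if (x < -1.0)
        x = -1.0; /* clip, as an overdriven input would */
    if (x > 1.0)
        x = 1.0;
    return (uint8_t)((x + 1.0) * 127.5 + 0.5); /* round to the nearest level */
}

/* Playback (behavioural model): convert levels back to [-1.0, 1.0] and apply
 * a three-point moving average; at the ends only available neighbours are used. */
void smooth(const uint8_t *levels, double *out, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        size_t lo = i > 0 ? i - 1 : 0;
        size_t hi = i + 1 < n ? i + 1 : i;
        double sum = 0.0;
        for (size_t j = lo; j <= hi; j++)
            sum += levels[j] / 127.5 - 1.0;
        out[i] = sum / (double)(hi - lo + 1);
    }
}
```

With 256 levels spanning the range from -1 to 1, the step between adjacent levels is 2/255, so the quantization error of any sample is at most half a step, about 0.004 of full scale.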

VIII. OUTCOMES

- Gesture Recognition using MEMS sensors

- Accelerometer based palm movement recognition

- Flex sensor based finger movement recognition

- Voice output using APR module

IX. FUTURE ENHANCEMENT

The completion of this prototype suggests that sensor gloves can be used for partial sign language recognition.

- To reduce the size of the unit, surface-mount devices (SMD) can be used.

- Higher-quality sensors can be used, and the range can be increased.


Conclusion

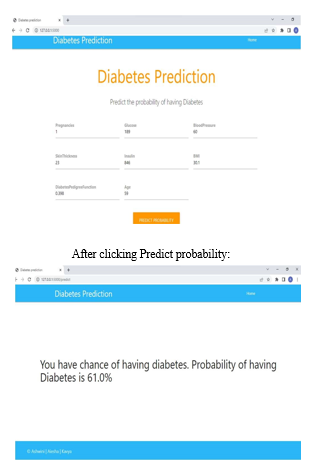

This paper introduced Smart Hand Gloves for disabled people. They aim to provide a more reliable, efficient, easy-to-use and lightweight solution than those proposed in other papers, and to bring more meaning to the lives of disabled people. We faced various challenges during this project and tried to minimize them; one remaining problem is making the glove wireless. We observed and analysed research papers and products available in the market, which are bulky, difficult to handle and delicate in structure. Since this was a prototype, our focus was to build a model that can solve or minimize the communication problem for disabled people.


Copyright

Copyright © 2022 A. Bhagyashree, Akheelesh B Palled, Ranjith Kumar, Akshay Hegde, Saravana Kumar. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


Paper Id : IJRASET45267

Publish Date : 2022-07-03

ISSN : 2321-9653

Publisher Name : IJRASET
