Ijraset Journal For Research in Applied Science and Engineering Technology

Audio to Sign Language Converter

Authors: Rishin Tiwari, Saloni Birthare, Mr. Mayank Lovanshi

DOI Link: https://doi.org/10.22214/ijraset.2022.47271

Abstract

People with hearing and speech disabilities have difficulty communicating with others. It is hard for them to express themselves because most people are not familiar with sign language, and learning a sign language can be cumbersome. The aim of this paper is to design a system that helps people with hearing or speech disabilities by converting voice into Indian Sign Language (ISL), using speech recognition and image processing. Sign languages developed as a means of easy communication, primarily for deaf and hard-of-hearing people. In this work we propose a real-time system that captures voice input through PyAudio, recognizes it with SPHINX and the Google speech recognition API, and converts it into text. The corresponding sign language output is then displayed on the screen as a series of images or an animated video, using various Python libraries.

I. INTRODUCTION

Over 5% of the world's population, or 466 million people, has disabling hearing loss. Sign language is used as a means of communication primarily by deaf and hard-of-hearing people, but it can also be used by people who can hear, such as those who are unable to speak or those with deaf family members. Somewhere between 138 and 300 different sign languages are used throughout the world today. This system produces its output in Indian Sign Language and therefore focuses mainly on disabled citizens of India.

Indian Sign Language is highly systematic and has its own grammar, but a lack of awareness means that many deaf people do not even know of institutions where they can learn it and equip themselves for public communication. According to India's National Association of the Deaf, an estimated 18 million people, roughly 1 percent of the Indian population, are deaf, yet the country has only about 700 schools that teach sign language. This research provides a ubiquitous system for the welfare of disabled people, mainly in India, by facilitating communication with people who have hearing or speech disabilities, and it saves others the time and money needed to learn sign language.

This work addresses the problem faced by people with hearing and speech disabilities using speech recognition and image processing techniques, implemented in the Python programming language. Speech recognition is the technology by which a computer identifies and interprets a voice signal and converts it into the corresponding text or command [1].

The rest of the paper is organized as follows. Section II surveys related research. Section III explains the methods and techniques used to devise the system. Section IV describes the dataset used and the workflow of the system. Section V presents the results. Section VI contains the acknowledgement, and Section VII concludes the paper and discusses the future scope of this research.

II. LITERATURE SURVEY

[1] Youhao Yu presented the main techniques used for speech recognition and the processes involved in it, and explained the importance and applications of speech recognition in various domains. This work helped us decide which speech recognition technique to use for converting the speech input of our system into text.

[8] Purva C. Badhe and Vaishali Kulkarni presented an algorithm for translating Indian Sign Language into English. It uses gesture recognition and converts each gesture into the corresponding text. Their paper describes data acquisition, data preparation, model training and other processes that were useful in developing our system.

[3] Madhuri Sharma, Ranjna Pal and Ashok Kumar Sahoo proposed a system that aids communication between signing and non-signing people by automatically recognizing sign language using KNN classification and neural networks. Their system performs the reverse of our task and provides background on the fundamentals of Indian Sign Language.

[11] Taner Arsan and Oğuz Ülgen designed a system in Java that converts sign language to voice and vice versa, using a Microsoft Kinect sensor for Xbox 360 to capture motion for the sign-to-voice direction. For the voice-to-sign direction, speech is recognized with Google voice recognition and the CMU Sphinx library for Java and then converted to sign language. Their work helped us understand sign language and the use of Google speech recognition, which our system also uses.

[7] M. Mahesh, Arvind Jayaprakash and M. Geetha proposed an Android application that converts sign language to natural language, enabling people with speech and hearing impairments to communicate with others easily. Their system offers useful ideas on image processing and on converting one input format into another. A mobile version of our system, performing the converse of their application, could be built as future work; our project is currently a desktop application.

III. METHOD AND TECHNIQUES

In this section we explore the methods and concepts used to design the system. The first task is to capture the audio signal from the user, which is done using speech recognition. The recognized audio is checked, converted to a string using various Python libraries, and matched against the designed dataset. The resulting image or GIF is then displayed on the screen in Indian Sign Language.

A. Speech Recognition

Speech recognition, a subfield of computational linguistics, is the ability of machines to recognize spoken language and translate it into text. Google Speech-to-Text enables developers to convert audio to text by applying powerful neural network models through an easy-to-use API. The API recognizes more than 120 languages and variants to support a global user base, and it can be used for voice command-and-control, transcription of call-centre audio, and more. It can process real-time streaming or pre-recorded audio using Google's machine learning technology [13]. PyAudio provides Python bindings for recording audio input from a microphone; with PyAudio we can play and record audio on a variety of platforms.
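The paper does not publish its code. A minimal sketch of the recognition step follows, assuming the third-party SpeechRecognition package (imported as `speech_recognition`), whose `Microphone` class uses PyAudio and which wraps both Google's recognizer and CMU Sphinx (the latter through the `pocketsphinx` package). Note that its `recognize_google` method calls Google's free Web Speech API rather than the Cloud Speech-to-Text service cited above, which the package exposes separately as `recognize_google_cloud`. The function names are hypothetical, and trying the online recognizer first with offline Sphinx as a fallback is one plausible way to combine the two engines, not necessarily the authors' approach.

```python
from typing import Callable, Iterable, Optional, Tuple, Type

# A backend takes captured audio and returns a transcript (or raises).
Backend = Callable[[object], str]


def transcribe_with_fallback(
    audio: object,
    backends: Iterable[Tuple[str, Backend]],
    errors: Tuple[Type[BaseException], ...] = (Exception,),
) -> Tuple[Optional[str], str]:
    """Try each named backend in order and return (name, text) for the first
    one that produces a non-empty transcript, or (None, "") if all fail."""
    for name, recognize in backends:
        try:
            text = recognize(audio)
        except errors:
            continue  # e.g. no network for the online API, or unintelligible audio
        if text:
            return name, text
    return None, ""


def listen_and_transcribe() -> Tuple[Optional[str], str]:
    """Capture one utterance from the default microphone and transcribe it.

    Assumes the third-party SpeechRecognition package; sr.Microphone needs
    PyAudio, and recognize_sphinx needs the pocketsphinx package.
    """
    import speech_recognition as sr  # imported lazily so the helper above has no deps

    recognizer = sr.Recognizer()
    with sr.Microphone() as source:
        recognizer.adjust_for_ambient_noise(source, duration=1)
        audio = recognizer.listen(source)
    return transcribe_with_fallback(
        audio,
        [("google", recognizer.recognize_google), ("sphinx", recognizer.recognize_sphinx)],
        errors=(sr.UnknownValueError, sr.RequestError),
    )
```

Keeping the fallback logic separate from the microphone code means it can be exercised with stand-in recognizers, without audio hardware or network access.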

SPHINX, an accurate large-vocabulary, speaker-independent, continuous speech recognition system, is used to recognize the audio. SPHINX attained a word accuracy of 96% with a grammar (perplexity 60) and 82% without a grammar (perplexity 997) [14].
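Word accuracy is conventionally defined as (N - S - D - I) / N, where N is the number of words in a reference transcript and S, D and I are the substitutions, deletions and insertions in the best alignment of the recognized words against it; many insertions can even make it negative. Perplexity can be read as the average number of words the grammar or language model allows at each point, which is why accuracy falls as perplexity rises from 60 to 997. The sketch below is not from the paper; it computes word accuracy from a word-level edit distance and could be used to measure the recognition accuracy of a system like this one. The function name `word_accuracy` is hypothetical.

```python
def word_accuracy(reference: str, hypothesis: str) -> float:
    """Return 1 - (S + D + I) / N for whitespace-separated words.

    S + D + I is the minimum word-level edit distance between the reference
    transcript (N words) and the recognizer's hypothesis.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # Before row i is computed, prev[j] is the edit distance between
    # ref[:i-1] and hyp[:j]; row 0 is just j insertions.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # ref[:i] vs empty hypothesis: i deletions
        for j in range(1, len(hyp) + 1):
            substitution = prev[j - 1] + (ref[i - 1] != hyp[j - 1])
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return 1 - prev[-1] / len(ref)
```

For example, recognizing "good morning to you" as "good morning you" is one deletion out of four words, giving an accuracy of 0.75.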

B. Image Extraction and Processing

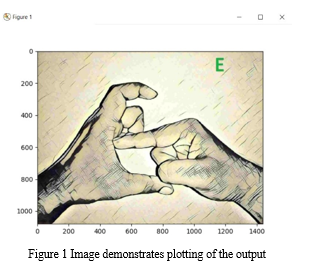

PIL (Python Imaging Library) is used in this system to open, manipulate and filter images. It is one of the core libraries for image manipulation in Python, is freely available to download, and supports a wide range of image formats [15]. NumPy arrays are used to manipulate image pixels and perform various operations on images. Matplotlib, a plotting library built on NumPy, plots the image or GIF as output on the user interface. Figure 1 shows an example of an image plotted with Matplotlib.
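The image-display step can be sketched as follows, assuming Pillow (the maintained fork of PIL), NumPy and Matplotlib are installed. `show_sign_images` and `scaled_size` are hypothetical helpers: each image is converted to RGB and resized to a common height so that the NumPy arrays can be joined side by side, and the resulting strip is shown with `plt.imshow`. For an animated GIF, `Image.open` exposes only the first frame by default, so playing a whole GIF would need frame iteration (for example with `PIL.ImageSequence`) or an animation.

```python
from typing import Sequence, Tuple


def scaled_size(size: Tuple[int, int], target_height: int) -> Tuple[int, int]:
    """(width, height) that keeps the aspect ratio of `size` at a fixed height."""
    width, height = size
    return max(1, round(width * target_height / height)), target_height


def show_sign_images(paths: Sequence[str], target_height: int = 200) -> None:
    """Open each sign image with PIL, scale it to a common height, join the
    NumPy pixel arrays side by side and plot the strip with Matplotlib."""
    # Third-party libraries, imported here so scaled_size() has no dependencies.
    import matplotlib.pyplot as plt
    import numpy as np
    from PIL import Image

    arrays = []
    for path in paths:
        with Image.open(path) as img:
            rgb = img.convert("RGB")  # drop alpha/palette modes so arrays stack
        arrays.append(np.asarray(rgb.resize(scaled_size(rgb.size, target_height))))

    strip = np.hstack(arrays)  # equal heights, so concatenate along the width axis
    plt.imshow(strip)
    plt.axis("off")
    plt.show()
```

Resizing to a shared height is what makes `np.hstack` valid, since arrays joined along the width axis must agree in height and channel count.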

C. Interface Design

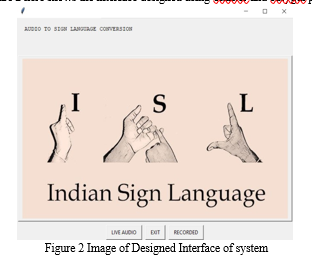

The graphical user interface (GUI) of the system should be simple and easy for people to understand. To design such a GUI we used the Python easygui module, which provides an easy-to-use interface for simple GUI interaction with the user. Tkinter, the standard Python GUI library, is also used to design buttons, frames, labels and other important widgets. Figure 2 shows the interface designed using the Tkinter and easygui Python modules.
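A minimal Tkinter window in the spirit of Figure 2 might look like the sketch below. The window title, the button labels and the `on_listen` callback are hypothetical choices, not taken from the paper. When only a choice between a few buttons is needed, easygui's `buttonbox()` can replace the whole window with a single dialog call.

```python
from typing import Callable


def build_window(on_listen: Callable[[], str]):
    """Create and return a minimal Tkinter root window with a status label
    and two buttons.

    `on_listen` is a hypothetical callback that records speech and returns
    the recognized text; the label is updated with whatever it returns.
    """
    import tkinter as tk  # standard library, but needs a display to run

    root = tk.Tk()
    root.title("Audio to Sign Language Converter")

    frame = tk.Frame(root, padx=20, pady=20)
    frame.pack()

    status = tk.StringVar(value="Press 'Live Voice' and speak")
    tk.Label(frame, textvariable=status, font=("Arial", 14)).pack(pady=10)

    def handle_listen() -> None:
        status.set(f"Recognized: {on_listen()}")

    tk.Button(frame, text="Live Voice", width=15, command=handle_listen).pack(pady=5)
    tk.Button(frame, text="All Done", width=15, command=root.destroy).pack(pady=5)
    return root


# Usage (requires a desktop session):
# build_window(lambda: "hello").mainloop()
```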

IV. DATASET AND METHODOLOGY

A. Dataset

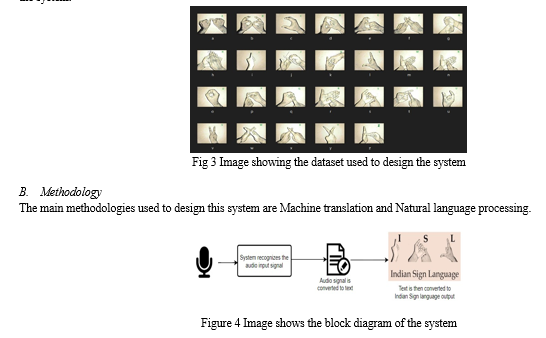

For any project that requires machine translation and natural language processing, the dataset is key to the proper functioning of the system. Our project uses an Indian Sign Language dataset containing images for all the letters of the English alphabet, and a GIF dataset for some commonly used words and sentences. Figure 3 shows the character dataset used to design the system.
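One simple way to load such a dataset is to index it by file name, so that a letter or phrase can be looked up directly. The sketch below assumes a hypothetical folder layout in which each file is named after the entry it shows (for example `letters/a.jpg` or `gifs/good morning.gif`); the paper does not describe its actual layout, and `index_dataset` is a hypothetical name.

```python
from pathlib import Path
from typing import Dict

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif"}


def index_dataset(root: Path) -> Dict[str, Path]:
    """Map each entry name (a letter, word or phrase, lower-cased) to its file.

    Assumes a hypothetical layout where the file name is the entry, e.g.
    letters/a.jpg ... letters/z.jpg and gifs/good morning.gif.
    """
    index: Dict[str, Path] = {}
    for path in sorted(root.rglob("*")):  # sorted so duplicate names resolve deterministically
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            index[path.stem.lower()] = path
    return index
```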

The methodology is shown in the block diagram in Figure 4. First, audio input is taken from the user through a microphone or another input device, and the audio is converted into a text string through the machine translation process. The string is then processed using the Python string module and matched with the corresponding image in the ISL dataset. If the text matches the dataset, the sign language output for the audio signal is displayed on the screen using image extraction and plotting techniques.
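The matching step can be sketched with Python's `string` module as below. This is a minimal sketch under an assumption the paper does not state explicitly: if the whole normalized utterance has a GIF, that GIF is shown; otherwise the text is fingerspelled with the letter images. `normalise` and `plan_signs` are hypothetical names.

```python
import string
from typing import List, Set, Tuple

# Translation table that deletes ASCII punctuation such as "?" or "!".
_STRIP_PUNCTUATION = str.maketrans("", "", string.punctuation)


def normalise(text: str) -> str:
    """Lower-case, drop punctuation and collapse runs of whitespace."""
    return " ".join(text.lower().translate(_STRIP_PUNCTUATION).split())


def plan_signs(text: str, gif_entries: Set[str]) -> List[Tuple[str, str]]:
    """Return [("gif", phrase)] if the whole utterance is in the GIF set,
    otherwise one ("letter", ch) per alphabetic character to fingerspell."""
    phrase = normalise(text)
    if phrase in gif_entries:
        return [("gif", phrase)]
    return [("letter", ch) for ch in phrase if ch in string.ascii_lowercase]
```

Characters outside `string.ascii_lowercase`, such as digits and spaces, produce no letter image in this sketch.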

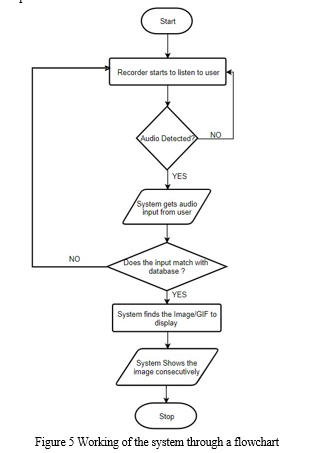

Figure 5 shows the workflow of the processes involved in the system, from the audio input to the final ISL output image or GIF. Our design is the reverse of the popular approach of recognizing gestures and converting sign language to text or audio [3][4].
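The workflow in Figure 5 can be expressed as a loop over recognized utterances. The sketch below is hypothetical: `run_converter`, the injected `plan` and `render` callables, and the stop phrase "goodbye" are illustrative choices, not details given in the paper. Injecting the stages keeps the loop independent of any particular recognizer or display library.

```python
from typing import Callable, Iterable, List, Tuple

# A plan is a list of (kind, entry) pairs, e.g. ("gif", "good morning").
Plan = List[Tuple[str, str]]


def run_converter(
    utterances: Iterable[str],
    plan: Callable[[str], Plan],
    render: Callable[[Plan], None],
    stop_phrase: str = "goodbye",
) -> int:
    """Drive the audio -> text -> ISL loop until the stop phrase is heard.

    `utterances` yields recognized text, `plan` maps text to dataset entries
    and `render` displays them. Returns how many utterances were rendered.
    """
    rendered = 0
    for text in utterances:
        if text.strip().lower() == stop_phrase:
            break
        steps = plan(text)
        if steps:  # nothing matched the dataset: show nothing for this utterance
            render(steps)
            rendered += 1
    return rendered
```

In a live system, `utterances` could be a generator that repeatedly records and transcribes speech, `plan` the dataset-matching function and `render` the image-display function.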

- Machine Translation: Machine translation is a part of computational linguistics; it is the process of converting text or speech from one language to another using software [6]. This system uses dictionary-based machine translation, in which translation is based on dictionary entries. Systems that offer dictionary-based machine translation use bilingual dictionaries, and words are translated word by word, as a dictionary does.

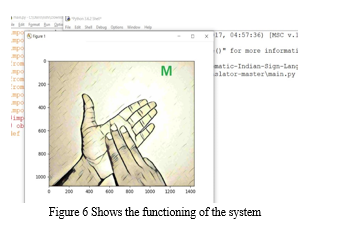

- Natural Language Processing (NLP): NLP is a subfield of computational linguistics, artificial intelligence and computer science concerned with programming computer systems to process large amounts of natural language data. It deals mainly with the manipulation of natural language, such as text and speech, by computer software. The origin, phonology, morphology, syntax and concepts of sign languages are discussed in more detail by Taner Arsan and Oğuz Ülgen in their work on a sign language converter [11]. Figure 6 shows our system working as expected and displaying its output in sign language on the screen.
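The dictionary-based machine translation described above can be sketched as a greedy longest-match lookup: at each position, the longest phrase found in the bilingual dictionary is translated and the scan moves past it. The dictionary contents, gloss labels such as `THANK-YOU`, the `#` marker for words without an entry, and the name `dictionary_translate` are all hypothetical; the paper does not specify how its dictionary is structured.

```python
from typing import Dict, List


def dictionary_translate(
    words: List[str], dictionary: Dict[str, str], max_len: int = 3
) -> List[str]:
    """Word-by-word dictionary translation with greedy longest match.

    At each position the longest run of up to `max_len` words that appears in
    `dictionary` is replaced by its sign label; a word with no entry is kept
    as "#WORD", a hypothetical marker meaning "fingerspell this word".
    """
    out: List[str] = []
    i = 0
    while i < len(words):
        for n in range(min(max_len, len(words) - i), 0, -1):
            key = " ".join(words[i:i + n])
            if key in dictionary:
                out.append(dictionary[key])
                i += n
                break
        else:  # no entry of any length starts at position i
            out.append("#" + words[i].upper())
            i += 1
    return out
```

For example, with entries for "thank you" and "much", the words "thank you very much" become `["THANK-YOU", "#VERY", "MUCH"]`.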

V. RESULT

As a result of this work, we designed an Audio to Sign Language converter that works effectively and can benefit many people with hearing and speech disabilities by making communication with them much easier. This desktop application can be very useful for people with these disabilities and make their lives easier. Indian Sign Language will be most useful once it reaches all areas of the country. The system can be used in schools, airports, universities, colleges and, in short, almost anywhere.

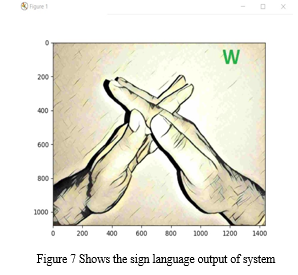

Figure 7 shows the system working correctly, with the desired output in Indian Sign Language.

VI. ACKNOWLEDGEMENT

We would like to express our gratitude to Medi-Caps University and to Mr. Mayank Lovanshi, Department of Information Technology, Medi-Caps University, Indore, for their support and insight. Any errors in the system are our own and should not reflect on the reputation of these esteemed persons.

VII. CONCLUSION AND FUTURE SCOPE

This paper achieves its goal of designing a system that recognizes speech, converts it into text and displays the corresponding Indian Sign Language. The system has been developed as a desktop application that can be used anywhere in the country to make communication with people who have hearing or speech disabilities easier. It runs in real time with a low error rate when recognizing speech captured through a microphone, and it has been tested for bugs and errors and functions as expected.

The future scope of this research includes developing the converter as a mobile application. The converse of this system, converting sign language to text or speech, has already been developed as mobile applications [12] [7]. If this system were developed as a mobile application or deployed on the web, it would be more ubiquitous and more people could use it. The system could also be extended to the sign languages of other countries, or integrated with the opposite task of converting sign language to text or speech through image processing, providing a means of two-way communication for people with speech or hearing disabilities. In this way it could reach a wider audience across the globe and contribute to the welfare of people.

References

[1] Youhao Yu, “Research on Speech Recognition Technology and Its Application”, IEEE, 2012.

[2] Ashok Kumar Sahoo, Gouri Sankar Mishra and Pervez Ahmed, “A proposed framework for Indian Sign Language Recognition”, International Journal of Computer Applications, October 2012.

[3] Madhuri Sharma, Ranjna Pal and Ashok Kumar Sahoo, “Indian sign language recognition using neural network and KNN classifiers”, ARPN Journal of Engineering and Applied Sciences 8, 2014.

[4] Mohammed Elmahgiubi, Mohamed Ennajar, Nabil Drawil and Mohamed Samir Elbuni, “Sign language translator and gesture recognition”, IEEE, December 2015.

[5] Yogeshwar I. Rokade and Prashant M. Jadav, “Indian Sign Language Recognition System”, International Journal of Engineering and Technology (IJET), July 2017.

[6] Anand Ballabh and Umesh Chandra Jaiswal, “A study of Machine translation methods and their challenges”, 2015.

[7] M. Mahesh, Arvind Jayaprakash and M. Geetha, “Sign language translator for mobile platforms”, IEEE, September 2017.

[8] Purva C. Badhe and Vaishali Kulkarni, “Indian sign language translator using gesture recognition algorithm”, IEEE, November 2015.

[9] http://www.islrtc.nic.in/video-hiearchy-select

[10] http://indiansignlanguage.org/dictionary/#isl-dict-cat

[11] Taner Arsan and Oğuz Ülgen, “Sign language converter”, International Journal of Computer Science & Engineering Survey (IJCSES), August 2015.

[12] Cheok Ming Jin, Zaid Omar and Mohamed Hisham Jaward, “A mobile application of American sign language translation via image processing algorithms”, IEEE, May 2016.

[13] https://cloud.google.com/speech-to-text/

[14] K.-F. Lee, H.-W. Hon, M.-Y. Hwang, S. Mahajan and R. Reddy, “The SPHINX speech recognition system”, IEEE, August 2002.

[15] Harshada, Snehal, Sanjay, Pranaya, Suchita, Shweta and Darshana, “Python Based Image Processing”, Avishkar, January 2015.

Copyright

Copyright © 2022 Rishin Tiwari, Saloni Birthare, Mr. Mayank Lovanshi. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET47271

Publish Date : 2022-11-02

ISSN : 2321-9653

Publisher Name : IJRASET
