Ijraset Journal For Research in Applied Science and Engineering Technology

Chest X-Ray Report Analysis

Authors: Dhanushwi Arava, Seyi Swathhy Yaganti, Naga Sravani Vemu

DOI Link: https://doi.org/10.22214/ijraset.2022.43971

Abstract

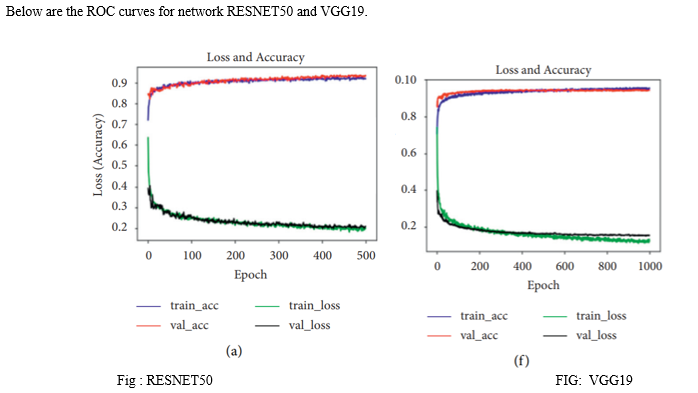

Detection methods for chest X-ray findings typically rely on plain image classification, which gives low recognition accuracy. We therefore use image-model feature fusion. The method takes each image and applies operations such as rotation and translation; it also reduces the number of training parameters, which reduces overfitting. Overfitting matters because an overfitted model gives inaccurate results and performs poorly on new data. Once the model is fine-tuned and trained, we evaluate it and draw a ROC curve, a simple diagnostic tool: the closer the apex of the curve is to the upper left corner, the greater the accuracy. Our experiments indicate that the proposed classification model gives more accurate results than the classic model for chest X-ray report analysis. After preprocessing the images, we train the model using VGG19, ResNet50, DenseNet and Inception and check which among the methods would yield us

Introduction

I. INTRODUCTION

The first COVID-19 case was registered in December 2019 in Wuhan, China. From that month onward, the number of cases rose exponentially. Multiple strategies are necessary to handle the current outbreak; these include computational modelling, statistical tools, and quantitative analyses to control the spread, as well as the rapid development of new treatments. During the pandemic, a CT scan could help identify which stage of COVID-19 a patient was in.

A drawback was that a CT scan would not always give a clear report, so the doctor could not always give correct findings, because a CT scan captures the bones, vertebrae, and soft tissues all at once. Therefore, X-rays have been used to identify the stage of the disease. Compared to a CT scan, an X-ray gives minute findings, such as identifying dense tissues.

As the pandemic developed, machine learning and deep learning technologies advanced as well. At first, a deep learning Inception network was applied to CT scans for image classification, and transfer learning with Inception v3 was applied to X-ray images. Transfer learning focuses on storing the knowledge gained while solving one problem and applying it to a different but related problem.

II. LITERATURE STUDY

The coronavirus disease pandemic, which originated in China, has quickly spread to various countries. About 56,000+ positive cases have been reported worldwide. India, with the second largest population in the world, will have difficulty in controlling the transmission of severe acute respiratory syndrome coronavirus among its population [1].

Many technologies have emerged as the pandemic has grown. We have used different deep learning technologies for the diagnosis of COVID-19 cases. Some of the methods that have helped in diagnosis are the molecular test, serological test, chest CT scan, swab test, and chest X-ray [2]. Among these, we observe that the chest X-ray provides accurate results. A CT scan also provides results, but it involves ionizing radiation, which can harm biological tissue: CT scans deliver a radiation dose 50 to 1000 times higher than conventional X-rays. A recent study estimates that every 1.0 Sv of radiation exposure increases the risk of developing a fatal cancer by 5%. Thus, a radiation dose of 100 mSv carries a 0.5% risk of cancer. Also, a CT scan is not recommended as a COVID-19 test for paediatric patients, whose organs are still developing. Exposure should be limited as stated in the ALARA principle (as low as reasonably achievable) [3].

In short, a CT scan is recommended when the whole body needs to be assessed at once. The dataset was collected from two websites and has two classes, COVID-19 and normal, with patients ranging from a 5-year-old paediatric patient to a 98-year-old lady. There are approximately 3616 COVID-19 images and approximately 10000 normal chest X-ray images [11].

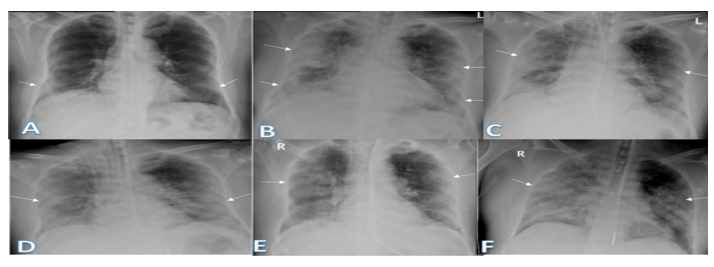

The images above show chest X-rays of persons affected by COVID-19.

III. PREPROCESSING

The process starts with collecting the images from the website and pre-processing them to reduce overfitting. Overfitting leads to inaccurate results, so the model would not perform as expected. We can use transfer learning, a strategy that stores the knowledge gained while solving one problem and applies it to a different but related problem. In deep learning, transfer learning involves first training a CNN for a specific task on large-scale datasets, so that it learns to extract the significant features of an image.
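The abstract names rotation and translation as the operations applied to each image. The paper does not show how they are implemented, so the following is only a minimal sketch, assuming a small grayscale image stored as a list of rows; the function names `rotate90`, `translate_x` and `augment` are hypothetical.

```python
def rotate90(img):
    """Rotate a 2-D image (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def translate_x(img, dx, fill=0):
    """Shift every row by dx pixels (positive = right), padding with `fill`."""
    width = len(img[0])
    shift = min(abs(dx), width)
    out = []
    for row in img:
        if dx >= 0:
            out.append([fill] * shift + list(row[:width - shift]))
        else:
            out.append(list(row[shift:]) + [fill] * shift)
    return out


def augment(img):
    """Return the original image plus two augmented variants."""
    return [img, rotate90(img), translate_x(img, 1)]


sample = [[1, 2], [3, 4]]
for variant in augment(sample):
    print(variant)
```

Each augmented variant keeps the label of the original image, so the training set grows without new annotation, which is one common way to counter overfitting.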

A CNN is a convolutional neural network, which is used to process pixel data efficiently [4]. There are two commonly used strategies to exploit the capabilities of a pre-trained CNN.

A. Feature Extraction

The first strategy is called feature extraction and the second is a more sophisticated procedure. In the first strategy, the pre-trained model retains both its initial architecture and its learned weights. The pre-trained model hence serves as a feature extractor, and the extracted features are fed into a new network that performs the classification.

B. Sophisticated Procedure

In the second strategy, the sophisticated procedure, modifications are applied to the pre-trained model; these may include architecture adjustment and parameter tuning. In this way, the knowledge mined from the previous task is retained, while the newly trained parameters are inserted into the network.

After pre-processing, the data is divided into two sets: a train set and a test set. Here, 60% of the dataset is used as the train set and the remaining 40% as the test set. Three models are then trained on the train set: VGG, DenseNet, and ResNet50. Each trained model is evaluated on the test set to calculate the accuracy, precision, recall, and F1-score.
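The 60/40 split can be sketched as follows. This is an illustration only: the paper does not say whether the split was shuffled or stratified, so the shuffling, the fixed seed and the function name `split_dataset` are assumptions.

```python
import random


def split_dataset(items, train_fraction=0.6, seed=0):
    """Shuffle the items reproducibly and split them into train and test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # assumed: random, non-stratified split
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Stand-in for a list of image file names.
image_ids = [f"img_{i:04d}.png" for i in range(100)]
train_set, test_set = split_dataset(image_ids)
print(len(train_set), len(test_set))
```

With imbalanced classes, as in this dataset, splitting each class separately in the same proportion would keep the class ratio identical in both sets.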

IV. MODEL TRAINING

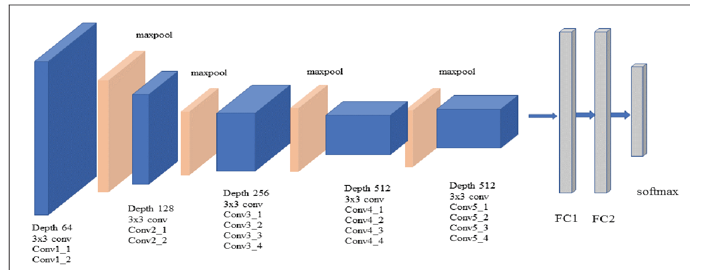

A. VGG19

The VGG network has the following characteristics. VGG was proposed by Simonyan and Zisserman in 2014 at the University of Oxford, UK. VGG stands for Visual Geometry Group, and VGG19 is the 19-layer variant of the network. It is used for face detection and image classification and performs well on both. The input to VGG is an RGB image of 224 x 224 pixels. The average RGB value over all images is calculated and subtracted from each image before it is passed to the VGG19 convolutional network. VGG19 has 19 weight layers, consisting of 16 convolutional layers and 3 fully connected layers, which helps it give better results and analysis [7]. The fully connected layers contribute to better feature extraction. To reduce the training time, the experiment fine-tunes only the parameters of the fifth convolution block of the VGG19 model, which still allows accurate classification and thereby improves the accuracy of the experiment.
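To give a feel for what fine-tuning only the fifth convolution block means, the sketch below counts the convolutional parameters of VGG19 per block. It assumes the standard VGG19 layout (3 x 3 kernels with biases, blocks of 2, 2, 4, 4 and 4 layers with 64 to 512 channels); the helper names are hypothetical and this is not the authors' training code.

```python
# (number of 3x3 conv layers, output channels) for the five VGG19 conv blocks
VGG19_BLOCKS = [(2, 64), (2, 128), (4, 256), (4, 512), (4, 512)]


def conv_params(c_in, c_out, kernel=3):
    """Weights plus biases of one convolutional layer."""
    return (kernel * kernel * c_in + 1) * c_out


def block_param_counts(blocks=VGG19_BLOCKS, in_channels=3):
    """Return the number of parameters in each convolution block."""
    counts = []
    c_in = in_channels
    for n_layers, c_out in blocks:
        total = 0
        for _ in range(n_layers):
            total += conv_params(c_in, c_out)
            c_in = c_out
        counts.append(total)
    return counts


counts = block_param_counts()
trainable = counts[-1]       # block 5 is fine-tuned
frozen = sum(counts[:-1])    # blocks 1 to 4 keep their pre-trained weights
print(f"frozen: {frozen}, trainable: {trainable}, "
      f"trainable share: {trainable / sum(counts):.1%}")
```

Under these assumptions, a little under half of the convolutional parameters are updated during training, while the early blocks, which capture generic edges and textures, are reused unchanged.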

The entire dataset is divided into three parts: training, test, and unused data [8].

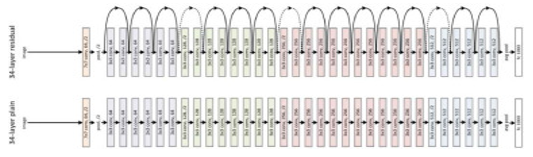

B. RESNET-50

ResNet-50 works as follows. ResNet-50 is a convolutional neural network that is 50 layers deep. A version pre-trained on more than a million images can classify images into 1000 categories. Through a skip connection, it adds the output of an earlier layer to the output of a later layer, which helps to mitigate the vanishing gradient problem. A ResNet-50 architecture consists of sequences of convolutional blocks followed by average pooling. Softmax is used at the end for classification.
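The skip connection can be illustrated with a toy example on plain Python lists. This is a sketch of the idea only, not of a real ResNet block: `toy_transform` is a hypothetical stand-in for the convolution, normalization and activation layers inside a block.

```python
def residual_block(x, transform):
    """Return transform(x) + x, the defining operation of a skip connection."""
    fx = transform(x)
    if len(fx) != len(x):
        raise ValueError("transform must preserve the shape for the addition")
    return [xi + fi for xi, fi in zip(x, fx)]


def toy_transform(x):
    """Hypothetical stand-in for the stacked layers: scale, then ReLU."""
    return [max(0.0, 0.5 * v) for v in x]


print(residual_block([2.0, -4.0], toy_transform))
```

If the stacked layers learn to output zeros, the block simply passes its input through, which is why adding more such blocks should not make the network worse than a shallower one.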

While the number of stacked layers can enrich the features of the model, a deeper network can show the issue of degradation. In other words, as the number of layers of the neural network increases, the accuracy levels may get saturated and slowly degrade after a point. As a result, the performance of the model deteriorates both on the training and testing data.

This degradation is not a result of overfitting. Instead, it may result from the initialization of the network, optimization function, or, more importantly, the problem of vanishing or exploding gradients.
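A scalar toy model gives some intuition for why skip connections help with vanishing gradients. It assumes every layer has the same small local derivative, which is a deliberate simplification; the function name `chain_gradient` is hypothetical.

```python
def chain_gradient(local_grads, residual=False):
    """Multiply per-layer derivatives as backpropagation does.

    For a plain layer y = f(x) the factor is f'(x).
    For a residual layer y = x + f(x) the factor is 1 + f'(x).
    """
    grad = 1.0
    for d in local_grads:
        grad *= (1.0 + d) if residual else d
    return grad


local = [0.01] * 50  # assumed: 50 layers, each with a small local derivative
print("plain:   ", chain_gradient(local))
print("residual:", chain_gradient(local, residual=True))
```

In the plain chain the product of 50 small factors is vanishingly small, whereas in the residual chain the identity path keeps every factor at or above 1 in this toy setting.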

All experiments were run on a laptop PC with an 8th-generation Core i7 processor, 16 GB of RAM, a 6 GB GPU and a 256 GB SSD, running Windows.

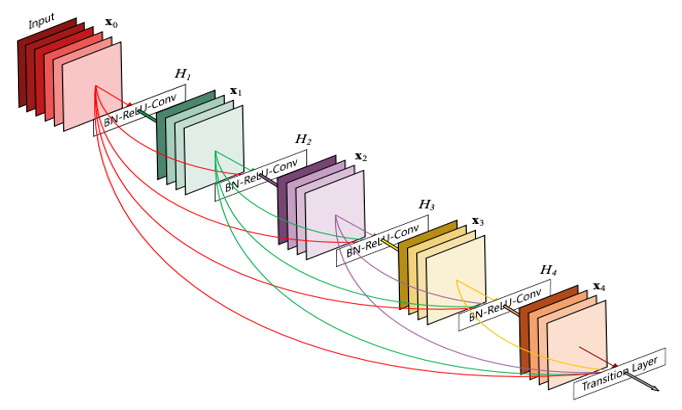

C. DenseNet

The Dense Convolutional Network (DenseNet) connects each layer to every other layer in a feed-forward fashion. Whereas traditional convolutional networks with L layers have L connections, one between each layer and its subsequent layer, DenseNet has L(L+1)/2 direct connections [9].
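The L(L+1)/2 count can be checked by enumerating the connections. In this sketch, index 0 stands for the block input and layer l receives the feature maps of all earlier indices 0 to l-1; the function name is hypothetical.

```python
def dense_connections(num_layers):
    """List every (source, destination) pair in a densely connected block."""
    return [(src, dst)
            for dst in range(1, num_layers + 1)
            for src in range(dst)]


L = 5
connections = dense_connections(L)
print(len(connections), L * (L + 1) // 2)  # dense count vs closed form
print(L)                                   # a plain chain has only L connections
```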

A disadvantage of DenseNet is that the excessive connections not only decrease the network's computational efficiency and parameter efficiency, but can also make the network prone to overfitting. DenseNet yields better features and is more efficient, but ResNet uses fewer parameters. DenseNet also requires a lot of GPU memory because of its concatenation operators. It may therefore not be cost-efficient for many industries that consider this approach.

V. EVALUATION

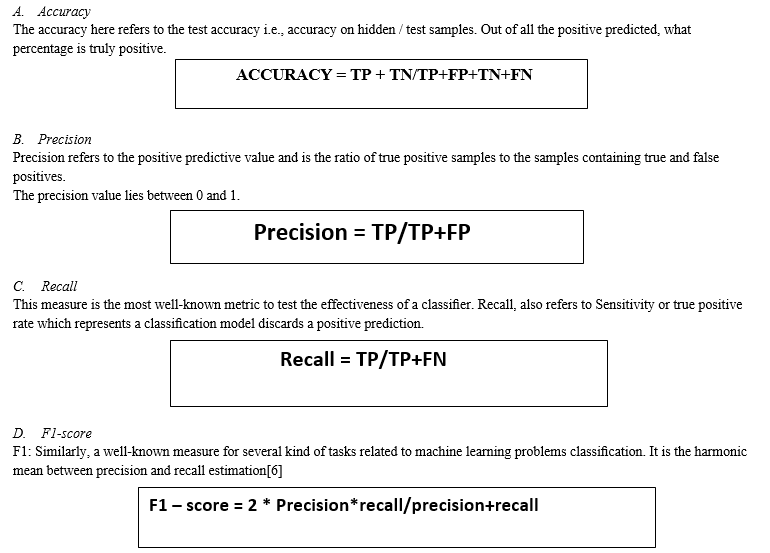

To evaluate our proposed model and comparable baselines, we use several metrics: test accuracy, precision, recall, F1 measure, and the area under the ROC curve (AUC). A detailed overview of the evaluation metrics is as follows:

The model evaluation uses four quantities, which together form the confusion matrix.

- TP: a correctly predicted COVID-19 case.

The model correctly predicts the positive class (prediction and actual are both positive). In the above example, 10 people who have COVID-19 are predicted positive by the model.

- FP: a case misclassified as COVID-19.

The model wrongly predicts the positive class (predicted positive, actual negative). In the above example, 22 people are predicted as having COVID-19 although they do not have it. FP is also called a Type I error.

- TN: a normal person correctly classified as healthy.

The model correctly predicts the negative class (prediction and actual are both negative). In the above example, 60 people who do not have COVID-19 are predicted negative by the model.

- FN: a COVID-19 case incorrectly identified as healthy.

The model wrongly predicts the negative class (predicted negative, actual positive). In the above example, 8 people who have COVID-19 are predicted as negative. FN is also called a Type II error.

| Predicted / Actual | Positive | Negative |
| --- | --- | --- |
| Positive | TP | FP |
| Negative | FN | TN |
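The four counts determine the metrics used in the evaluation. The sketch below applies the standard definitions of accuracy, precision, recall and F1-score to the example counts quoted above (TP = 10, FP = 22, TN = 60, FN = 8); the function name is hypothetical, and these counts are the illustrative example, not the paper's final results.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


metrics = classification_metrics(tp=10, fp=22, tn=60, fn=8)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

For these counts the accuracy is 0.70 while precision is only about 0.31 and recall about 0.56, which shows why accuracy alone can be misleading when the negative class dominates.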

VI. RESULTS

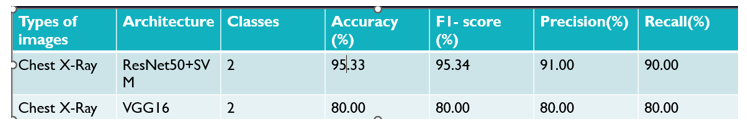

For the models we selected, the accuracy, F1-score, precision, and recall are given below.

The main metrics are test accuracy and test-time augmentation scores, as they measure how correctly the model classifies the cases into each label: positive for COVID-19 and negative for non-COVID-19. We employ seven CNN-based models: VGG19 [23], ResNet34 [24], ResNet50 [24], DenseNet201 [25], EfficientNet-B0 [6], fine-tuned EfficientNet-B0, and COVIDPEN. For the X-ray dataset, we found ResNet50 to be effective after our proposed model. However, for this dataset,

- According to the analysis of the evaluation criteria on the test set, the accuracy, precision, recall, and F1-score of the ResNet50 + SVM model are higher than those of the comparative algorithms, showing that this model can more effectively distinguish COVID-19 patients from healthy people and is a major deep architecture.

- We can also observe that this model performs better than the other research methods on all indicators.

- The higher the accuracy, the more confident we can be that the model is ready to identify whether a person has COVID-19 based on the chest findings.

A. Problem Statement And Its Benefits To The Society

The main problem is that there is no reliable model or analysis for identifying a disease that is difficult to identify by eye. The disease is clearly visible most of the time, but in complex cases visual inspection does not give good results. For instance, in the X-ray of a person affected by COVID-19, we can observe white areas in the lungs and around the heart, from which we can say that the person is affected by COVID-19. The following figures help us understand the concept better.

In the future, this project can be extended to classify X-ray findings for the identification of pneumonia or other chest problems, as well as their severity. Once fine-tuned, the model helps with classification and saves time in report generation. A chest X-ray is used to evaluate the lungs, heart and chest wall and may be used to help diagnose shortness of breath, persistent cough, fever, chest pain or injury.

B. Theoretical Analysis

CT and X-rays are effective tools for diagnosing and evaluating COVID-19. We used four CNN models and two cascaded network models to divide X-ray samples into two categories: COVID-19 and healthy people. We applied these model architectures for feature extraction and classified the categories through an FC layer. Experimental results showed that, under the same conditions, the cascade network model ResNet50 + SVM is best for classifying COVID-19 and healthy people. It could significantly improve classification performance, with an accuracy of 95.33%, F1-score of 95.34%, precision of 91.00%, recall of 90.00%, and AUC of 98.7%. We discussed and compared our research with recent work. The results showed that ResNet50 + SVM is better than the other models at classifying COVID-19 and healthy people, can classify them accurately, and can assist doctors in the rapid detection of COVID-19. We concluded from these two aspects that ResNet50 + SVM distinguishes well between COVID-19 patients and healthy people and could help reduce the workload of doctors in detecting COVID-19 cases.
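The AUC reported above is the area under the ROC curve. As an illustration of how such a value is obtained, the sketch below builds the ROC points by sweeping the decision threshold over the predicted scores and integrates them with the trapezoid rule. The labels and scores are made-up toy values, not the paper's data, and the function names are hypothetical.

```python
def roc_points(labels, scores):
    """Return (false positive rate, true positive rate) points of the ROC curve."""
    pos = sum(1 for y in labels if y == 1)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("both classes must be present")
    ranked = sorted(zip(scores, labels), key=lambda p: -p[0])
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume all samples sharing this score so ties form a single point.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under a piecewise-linear curve by the trapezoid rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


toy_labels = [1, 1, 0, 1, 0, 0]               # 1 = COVID-19, 0 = healthy
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]   # made-up model outputs
print(round(auc(roc_points(toy_labels, toy_scores)), 4))
```

An AUC of 1.0 means every positive case is scored above every negative case, and 0.5 corresponds to random ranking.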

The proposed method has major limitations. The experiment only applies to X-ray images, and not CT images, because X-ray images are RGB and CT images are grayscale. This experiment can only be used to classify COVID-19 patients and healthy people, and it cannot classify COVID-19 and general pneumonia. We will next focus on the classification of COVID-19, bacterial pneumonia, and viral pneumonia.

References

[1] S. Udhaya Kumar, D. Thirumal Kumar, B. Prabhu Christopher and C. George Priya Doss, A rise and impact of covid 19 in India.

[2] Nastaran Taleghani, Fariborz Taghipour, Diagnosis on covid 19 and treatment, 2020.

[3] Paula R. Patel, Orlando De Jesus, “CT SCAN and the working”, 2020.

[4] A. M. Alqudah, S. Qazan, H. Alquran, I. A. Qasmieh, and A. Alqudah, “COVID-2019 detection using x-ray images and artificial intelligence hybrid systems,” Biomedical Signal and Image Analysis and Project, 2020.

[5] Ioannis D. Apostolopoulos and Tzani A. Mpesiana, Covid-19: automatic detection from X-ray images utilizing transfer learning with convolutional neural networks, 2020.

[6] A. Victor Ikechukwu, S. Murali, R. Deepu, R. C. Shivamurthy, ResNet-50 vs VGG-19 vs training from scratch: A comparative analysis of the segmentation and classification of Pneumonia from chest X-ray images, 2021.

[7] Jeanie Zhou, Xinyu Yang, Lin Zhang, Siyu Shao, and Gangying Bian, Multisignal VGG19 Network with Transposed Convolution for Rotating Machinery Fault Diagnosis Based on Deep Transfer Learning.

[8] Dongsheng Ji, Zhujun Zhang, Yanzhong Zhao, and Qianchuan Zhao.

[9] Gao Huang, Zhuang Liu, Laurens van der Maaten, Kilian Q. Weinberger, Densely Connected Convolutional Networks.

[10] Yasin and Gouda, Egyptian Journal of Radiology and Nuclear Medicine.

[11] Database: https://www.kaggle.com/datasets/tawsifurrahman/covid19-radiography-database

Copyright

Copyright © 2022 Dhanushwi Arava, Seyi Swathhy Yaganti, Naga Sravani Vemu. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET43971

Publish Date : 2022-06-08

ISSN : 2321-9653

Publisher Name : IJRASET
