Ijraset Journal For Research in Applied Science and Engineering Technology

A Review of Deep Learning Methods Applied to Ocular Diseases Recognition and Detection

Authors: Prof. Vijayalakshmi, Mounesh, L Vinay, L Monisha, Nithin MS

DOI Link: https://doi.org/10.22214/ijraset.2022.41104

Abstract

Artificial intelligence is having an important effect on different areas of medicine, and ophthalmology is no exception. In particular, deep learning methods have been applied successfully to the detection of clinical signs and the classification of ocular diseases. This represents great potential to increase the number of people correctly diagnosed. In ophthalmology, deep learning methods have primarily been applied to eye fundus images and optical coherence tomography. On the one hand, these methods have achieved outstanding performance in the detection of ocular diseases such as diabetic retinopathy, glaucoma, diabetic macular degeneration, and age-related macular degeneration. On the other hand, several worldwide challenges have shared large eye imaging datasets with segmentations of parts of the eye, clinical signs, and ocular diagnoses performed by experts. In addition, these methods are breaking the stigma of black-box models by delivering interpretable clinical information. This review provides an overview of the state-of-the-art deep learning methods used in ophthalmic images, databases, and potential challenges for ocular diagnosis.

I. INTRODUCTION

Iris cataract tumours are the most dangerous tumours in the eye, commonly known as ‘eye tumours’. Most cataracts develop when aging or injury changes the tissue that makes up the eye’s lens. Proteins and fibres in the lens begin to break down, causing vision to become hazy or cloudy. Some inherited genetic disorders that cause other health problems can increase the risk of cataracts. The term ocular is used with tumour to indicate that the tumour is associated with the eye. It can be intraocular, meaning inside the eye, or extraocular, meaning that it affects the outer part of the eye. The most commonly detected conditions are cataract, diabetic retinopathy, and redness level. Other tumours related to the eye include lacrimal gland tumours and lid tumours. The exact cause of this disorder is not known, but certain risk factors have been noticed. The disorder is seen more often in people who have a light eye colour. Age, certain inherited skin disorders, exposure to ultraviolet (UV) light, and certain genetic mutations are also considered causes of eye tumours. An eye tumour can spread and affect vision.

Routine checkups are the best method for diagnosing the tumour. An ophthalmoscope is commonly used for diagnosis. Ultrasound, fluorescein angiography, OCT, and semiconductor detectors are other common diagnostic methods. Needle biopsy is rarely used for diagnosis. CT to age, health state, suspected disease, symptoms and past examination records. Cytogenetics and gene expression profiling are used to collect more information about prognosis. Iris tumours are the most common eye tumours and are classified into different types. For earlier detection of these tumours, a generalised procedure is needed to diagnose the abnormality.

The two most commonly used therapeutic procedures are surgery and radiation therapy. In radiation therapy, the damage produced in the tumour cells causes them to die and the tumour to shrink slowly. The most common radiation therapies are endocurietherapy, brachytherapy, or sealed source radiotherapy [5]. Once the tumour has shrunk, the region can be completely cured with local therapy. Local therapy consists of laser photocoagulation, cryotherapy, thermotherapy, plaque radiotherapy, etc. Laser photocoagulation with a xenon or argon arc is the primary therapy. It coagulates the entire blood supply to the tumour and can control 70% of the abnormality. Cryotherapy is commonly used to treat small tumours. The procedures for chemotherapy start with cryotherapy as a preparation step. Thermotherapy is the method of applying heat to the affected area using ultrasound, microwaves, or infrared radiation.

Ophthalmology is the branch of medical science that investigates disorders of the eye. Biomedical imaging software is an efficient tool for ophthalmologists in diagnosing eye diseases. These imaging techniques also help them in surgical treatment. Most image-based diagnostic machines work on the principle of machine learning. Fundus and OCT image records can reveal the symptoms of diabetes. Many cameras are now available for capturing the iris region of the eye. These images are used for both biomedical and biometric applications. Eye tumour examinations can be carried out with these pictures. More research is needed in the field of ocular disease detection.

Early ocular disease detection is an economical and effective way to prevent blindness caused by diabetes, glaucoma, cataract, age-related macular degeneration (AMD), and many other diseases. According to the World Health Organization (WHO), at least 2.2 billion people around the world currently have vision impairments, of whom at least 1 billion have a vision impairment that could have been prevented [1]. Rapid and automatic detection of diseases is critical and urgent for reducing the ophthalmologist’s workload and preventing vision damage in patients. Given high-quality medical eye fundus images, computer vision and deep learning can detect ocular diseases automatically. In this article, I show different experiments and approaches towards building an advanced classification model using convolutional neural networks written with the TensorFlow library.

II. DATASET

Ocular Disease Intelligent Recognition (ODIR) is a structured ophthalmic database of 5,000 patients with age, color fundus photographs from left and right eyes, and doctors’ diagnostic keywords. This dataset is meant to represent the “real-life” set of patient information collected by Shanggong Medical Technology Co., Ltd. from different hospitals and medical centers in China. In these institutions, fundus images are captured by various cameras on the market, such as Canon, Zeiss, and Kowa, resulting in varied image resolutions. Annotations were labeled by trained human readers with quality control management [2]. They classify patients into eight labels: normal (N), diabetes (D), glaucoma (G), cataract (C), AMD (A), hypertension (H), myopia (M), and other diseases/abnormalities (O).
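Because a record can carry more than one of these eight labels, a classifier typically needs a multi-hot target vector rather than a single class index. The following is a minimal sketch of such an encoding; the label order and the helper names `encode_labels` and `decode_labels` are illustrative assumptions, not part of the ODIR distribution.

```python
# Illustrative label order (an assumption); it follows the eight ODIR
# categories in the order they are listed in the text.
ODIR_LABELS = ["N", "D", "G", "C", "A", "H", "M", "O"]


def encode_labels(codes):
    """Return a multi-hot vector (list of 0/1) for a collection of label codes."""
    unknown = set(codes) - set(ODIR_LABELS)
    if unknown:
        raise ValueError(f"unknown label codes: {sorted(unknown)}")
    return [1 if label in codes else 0 for label in ODIR_LABELS]


def decode_labels(vector):
    """Return the label codes whose position is set in a multi-hot vector."""
    return [label for label, flag in zip(ODIR_LABELS, vector) if flag]


# A hypothetical record diagnosed with both diabetes (D) and cataract (C).
target = encode_labels({"D", "C"})
print(target)                 # [0, 1, 0, 1, 0, 0, 0, 0]
print(decode_labels(target))  # ['D', 'C']
```

With targets in this form, the output layer would usually have one sigmoid unit per label instead of a single softmax over classes.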

After preliminary data exploration I found the following main challenges of the ODIR dataset:

- Highly unbalanced data. Most images are classified as normal (1140 examples), while specific diseases such as hypertension have only 100 occurrences in the dataset.

- The dataset contains multi-label diseases because each eye can have not only one single disease but also a combination of many.

- Images labeled as “other diseases/abnormalities” (O) are associated with more than 10 different diseases, which increases the variability further.

- Very large and varied image resolutions. Most images have sizes of around 2976x2976 or 2592x1728 pixels.

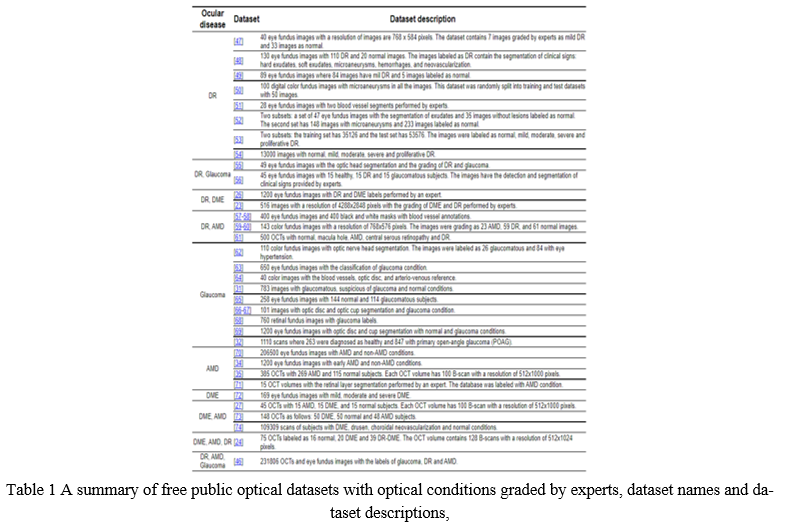

All these issues take a significant toll on accuracy and other metrics. In recent times, the detection of clinical signs and the grading of ocular diseases have been considered challenging engineering tasks. In addition, researchers worldwide have published their methods along with a set of eye fundus image (EFI) and optical coherence tomography (OCT) databases covering different ocular conditions, populations, acquisition biases and image resolutions. The available ocular datasets for each ocular disease, the type of ocular image and the study population are presented in Table 1.
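One common way to soften the class imbalance listed among the dataset challenges is to weight each class inversely to its frequency during training. The sketch below is an illustration under stated assumptions: it uses only the two counts given in the text (1140 normal, 100 hypertension), and the function name `inverse_frequency_weights` is hypothetical. It does not describe the weighting used in the experiments.

```python
def inverse_frequency_weights(counts):
    """Weight each class by total / (n_classes * class_count).

    Rare classes receive weights above 1 and frequent classes below 1,
    so that each class contributes equally to the weighted loss overall.
    """
    total = sum(counts.values())
    n_classes = len(counts)
    return {label: total / (n_classes * n) for label, n in counts.items()}


# Only the two counts quoted in the text; the remaining six classes are
# omitted because their counts are not given.
counts = {"N": 1140, "H": 100}
weights = inverse_frequency_weights(counts)
for label, weight in weights.items():
    print(label, round(weight, 3))
```

A dictionary of this shape can be mapped to class indices and passed to a training loop as per-class loss weights.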

III. PERFORMANCE METHODS

Deep learning approaches have shown astonishing results in problem domains such as recognition systems, natural language processing, medical sciences, and many other fields. Google, Facebook, Twitter, Instagram, and other big companies use deep learning to provide better applications and services to their customers. Deep Convolutional Neural Networks (DCNNs) are actively applied in object recognition, speech recognition, natural language processing, theoretical science, medical science, etc.

In the medical field, researchers apply deep learning to different medical problems such as diabetic retinopathy, detection of cancer cells in the human body, spine imaging and many others. Although unsupervised learning is applicable in medical science where sufficient labeled datasets for a particular type of disease are not available, the state-of-the-art methods for ocular images are based on supervised learning techniques.

A. Performance Metrics in Deep Learning Models

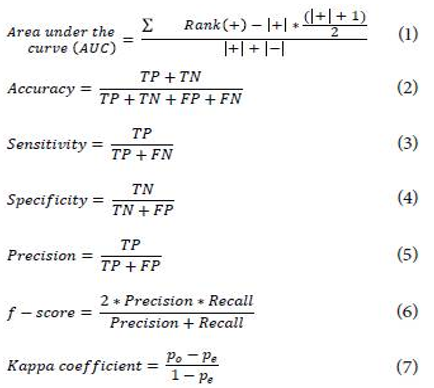

The performance of deep learning methods in classification tasks is compared by calculating statistical metrics. These metrics assess the agreement and disagreement between the expert and the proposed method when grading an ocular disease [35, 62, 74]. The performance metrics used in state-of-the-art works are presented in Equations (1) to (7) as follows:

where,

TP = True Positive (the ground-truth and predicted are non-control class)

TN = True Negative (the ground-truth and predicted are control class)

FP = False Positive (predicted as a non-control class but the ground-truth is control class)

FN = False Negative (predicted as control class but the ground-truth is non-control class)

po = Probability of agreement or correct classification among raters.

pe = Probability of chance agreement among raters.
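The quantities defined above can be combined into the usual classification metrics. Since the original equations are not reproduced in the text, the sketch below uses the standard textbook definitions for the binary case (accuracy, sensitivity, specificity, precision, F1-score and Cohen's Kappa). Treating these as the intended set is an assumption, and the counts in the example are made up for illustration.

```python
def binary_metrics(tp, tn, fp, fn):
    """Compute common classification metrics from confusion-matrix counts.

    Assumes every denominator is non-zero, i.e. both classes occur in the
    ground truth and the classifier predicts each class at least once.
    """
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    sensitivity = tp / (tp + fn)   # also called recall
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    # Cohen's Kappa: observed agreement p_o corrected by chance agreement p_e.
    p_o = accuracy
    p_e = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / total ** 2
    kappa = (p_o - p_e) / (1 - p_e)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
        "kappa": kappa,
    }


# Made-up counts for 100 images: 50 diseased and 50 control.
metrics = binary_metrics(tp=40, tn=45, fp=5, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

For these counts the accuracy is 0.85 while Kappa is 0.70, which illustrates how Kappa discounts the agreement that would be expected by chance.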

IV. DEEP LEARNING METHODS FOR DIAGNOSIS SUPPORT

A. DL Methods Using Eye Fundus Images

The state-of-the-art DL methods to classify ocular diseases using EFIs focus on conventional (vanilla) CNNs and multi-stage CNNs. The most common vanilla CNNs used with EFIs are the Inception-V1 and Inception-V3 models pre-trained on the ImageNet database (http://www.image-net.org/). Inception-V1 is a CNN that applies convolutions of different sizes to the same input and stacks the results into a single output. Another difference from a standard CNN is the inclusion of convolutional layers with a kernel size of 1x1 in the middle of the architecture and global average pooling at its end [79]. Inception-V3, on the other hand, is an improved version that uses batch normalization and label smoothing strategies to prevent overfitting [91].
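Label smoothing, mentioned above as one of the regularization strategies, replaces a hard one-hot target with a slightly softened distribution. The sketch below uses the common formulation in which each target becomes (1 - epsilon) * y + epsilon / K for K classes. The value epsilon = 0.1 and the function name `smooth_labels` are illustrative assumptions.

```python
def smooth_labels(one_hot, epsilon=0.1):
    """Soften a one-hot target: (1 - epsilon) * y + epsilon / K."""
    n_classes = len(one_hot)
    return [(1 - epsilon) * y + epsilon / n_classes for y in one_hot]


# A four-class example whose true class is at index 1.
smoothed = smooth_labels([0, 1, 0, 0], epsilon=0.1)
print([round(p, 3) for p in smoothed])  # [0.025, 0.925, 0.025, 0.025]
```

The smoothed vector still sums to 1, but the network is no longer pushed towards fully confident predictions, which is the mechanism by which label smoothing limits overfitting.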

[94] used the U-Net model proposed by [92] to segment the retinal vessels from EFIs. Then, two new datasets were created, with and without the vessels, to be used as inputs to Inception-V1. This method obtained an AUC of 0.9772 in the detection of diabetic retinopathy (DR) in the DRIU dataset. [96] and [98] proposed a patch-based model composed of pre-trained Inception-V3 to detect DR in the EyePACS dataset. [98] used a private dataset with segmentations of clinical signs to classify an EFI as normal or referable DR with a sensitivity of 93.4 % and a specificity of 93.9 %. The ensemble of four Inception-V3 CNNs by [96] reached an accuracy of 88.72 %, a precision of 95.77 % and a recall of 94.84 %.

The multi-stage CNN first detects clinical signs and then grades the ocular disease sequentially. [95] located different types of lesions and integrated an imbalanced weighting map to focus the model's attention on local signs when classifying DR, obtaining an AUC of 0.9590. [97] used a similar approach, generating heat maps from the detected lesions as an attention model to grade DR at the image level with an AUC of 0.954. [99] used a four-layer CNN as a patch-based model to segment exudates; the generated exudate mask was used to diagnose DME, with a reported accuracy of 82.5 % and a Kappa coefficient of 0.6. Then, [104] proposed a three-stage DL model (optic disc and cup segmentation, morphometric feature estimation and glaucoma grading) with an accuracy of 89.4 %, a sensitivity of 89.5 % and a specificity of 88.9 %. Finally, [101] proposed a model to segment the optic disc and cup and calculate a normalized cup-to-disc ratio to discriminate between healthy and glaucomatous optic nerves in EFIs. Table 2 presents a brief summary of DL methods in eye fundus images used to support an ocular diagnosis.
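To make the last stage of such a pipeline concrete, the sketch below computes a plain vertical cup-to-disc ratio from two binary segmentation masks. It is an illustration only: the exact normalization used in [101] is not described here, so the vertical-extent definition, the toy 8x8 masks and the function names are assumptions.

```python
def vertical_extent(mask):
    """Number of rows spanned by the non-zero pixels of a binary mask."""
    rows = [i for i, row in enumerate(mask) if any(row)]
    return 0 if not rows else rows[-1] - rows[0] + 1


def vertical_cup_to_disc_ratio(cup_mask, disc_mask):
    """Ratio of the cup height to the disc height, both measured in rows."""
    disc_height = vertical_extent(disc_mask)
    if disc_height == 0:
        raise ValueError("disc mask is empty")
    return vertical_extent(cup_mask) / disc_height


def make_mask(size, first_row, last_row):
    """Toy mask (hypothetical helper): rows first_row..last_row are set to 1."""
    return [[1 if first_row <= r <= last_row else 0 for _ in range(size)]
            for r in range(size)]


disc = make_mask(8, 1, 6)  # disc spans 6 rows
cup = make_mask(8, 3, 5)   # cup spans 3 rows
cdr = vertical_cup_to_disc_ratio(cup, disc)
print(cdr)  # 0.5
```

In a real pipeline the masks would come from the segmentation network, and the resulting ratio would be passed to the grading stage or compared against a clinically chosen threshold.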

V. DISCUSSION

This review reports the state-of-the-art deep learning works applied to EFIs and OCT for ocular diagnosis, as presented in Tables 2 and 3. The main DL methods for the detection of ocular diseases using EFIs focus on fine-tuning pre-trained CNNs such as Inception-V1 [94] and Inception-V3 [96]. In addition, pre-trained CNNs applied to OCT obtained outstanding performance, as reported with pre-trained ResNet [35, 105], VGG-16 [111] and Inception-V3 [74]. Thus, the feature extraction stage performed by CNNs trained on the non-medical ImageNet dataset is enough to discriminate between healthy and unhealthy patterns in ocular images. On the other hand, the best CNN models using OCT volumes as input are customized models with two or three stages. In particular, these DL models used two or more of the ocular medical datasets reported in Table 1 to perform the feature extraction of local signs, followed by a classification stage for grading the ocular diseases, as reported for EFIs in [95, 97, 99-103] and for OCTs in [106-109].

The number of free, publicly available datasets contributes to the design of new DL methodologies to classify ocular conditions, as reported in Table 1. However, the use of private datasets limits the comparison of the performance metrics reached by DL methods [74, 98, 110-111]. Attempts to replicate the studies reported by [98] and [110] have drawn criticism for the lack of information about the method and the hyperparameters used [113]. The use of public repositories such as GitHub (https://github.com/) to share datasets and code is still needed. Nowadays, the growing interest of big technology companies and medical centers in creating open challenges has increased the number of ocular datasets, such as the DR detection challenge by Kaggle [53, 84], the blindness detection challenge by the Asia Pacific Tele-Ophthalmology Society (APTOS) [54] and the iChallenge for AMD detection by Baidu [34]. These new datasets contain diverse information related to acquisition devices, image resolution, and worldwide population. Moreover, DL techniques are leveraging the new data to design new robust approaches with outstanding performance, as reported in Tables 2 and 3.

The lack of validation of DCNN models with real-world scans or fundus images is still a problem. We found a few methods validated with ocular images from medical centers [96, 108-111]. However, the number of free, public real-world ocular image collections is limited to five sets of images [31, 46, 53-54, 74]. The clinical acceptance of the proposed DCNN models depends critically on their validation in clinical and nonclinical datasets.

VI. ACKNOWLEDGEMENT

I would like to express my sincere gratitude to several individuals and to HKBK College of Engineering for supporting me throughout my graduate study. First, I wish to express my sincere gratitude to my professor, Prof. Vijayalakshmi, for her enthusiasm, patience, insightful comments, helpful information, practical advice and ideas, which have helped me tremendously at all times in my research. Her immense knowledge and profound professional experience in quality control have enabled me to complete this research successfully. Without her support and guidance, this project would not have been possible. I could not have imagined having a better guide in my study.

I also wish to express my sincere thanks to Visvesvaraya Technological University for accepting me into the graduate program. In addition, I am deeply indebted to all the staff of my college.

Conclusion

Deep learning methods are new techniques that detect and classify different abnormalities in eye images, with great potential for effective ocular disease diagnosis. These methods take advantage of a large number of available datasets with different annotations of clinical signs and ocular conditions to perform the automatic feature extraction that supports medical decision making.

References

[1] W. Stitt, N. Lois, R. J. Medina, P. Adamson, and T. M. Curtis, "Advances in Our Understanding of Diabetic Retinopathy," Clinical Science, vol. 125, no. 1, pp. 1-17, 2013. doi:10.1042/CS20120588

[2] N. Gurudath, M. Celenk, and H. B. Riley, "Machine Learning Identification of Diabetic Retinopathy from Fundus Images," in 2014 IEEE Signal Processing in Medicine and Biology Symposium (SPMB), 2014, pp. 1-7. doi:10.1109/SPMB.2014.7002949

[3] R. Priyadarshini, N. Dash, and R. Mishra, "A Novel Approach to Predict Diabetes Mellitus Using Modified Extreme Learning Machine," in 2014 International Conference on Electronics and Communication Systems (ICECS), 2014, pp. 1-5. doi:10.1109/ECS.2014.6892740

[4] G. Quellec et al., "Automated Assessment of Diabetic Retinopathy Severity Using Content-Based Image Retrieval in Multimodal Fundus Photographs," Investigative Ophthalmology & Visual Science, vol. 52, no. 11, pp. 8342-8348, 2011. doi:10.1167/iovs.11-7418

[5] R. A. Welikala et al., "Automated Detection of Proliferative Diabetic Retinopathy Using a Modified Line Operator and Dual Classification," Computer Methods and Programs in Biomedicine, vol. 114, no. 3, pp. 247-261, 2014. doi:10.1016/j.cmpb.2014.02.010

[6] S. Roychowdhury, D. D. Koozekanani, and K. K. Parhi, "DREAM: Diabetic Retinopathy Analysis Using Machine Learning," IEEE Journal of Biomedical and Health Informatics, vol. 18, no. 5, pp. 1717-1728, 2013. doi:10.1109/JBHI.2013.2294635

[7] D. Usher, M. Dumskyj, M. Himaga, T. H. Williamson, S. Nussey, and J. Boyce, "Automated Detection of Diabetic Retinopathy in Digital Retinal Images: A Tool for Diabetic Retinopathy Screening," Diabetic Medicine, vol. 21, no. 1, pp. 84-90, 2004. doi:10.1046/j.1464-5491.2003.01085.x

[8] S. Philip et al., "The Efficacy of Automated 'Disease/No Disease' Grading for Diabetic Retinopathy in a Systematic Screening Programme," British Journal of Ophthalmology, vol. 91, no. 11, pp. 1512-1517, 2007. doi:10.1136/bjo.2007.119453

[9] S. C. Cheng and Y. M. Huang, "A Novel Approach to Diagnose Diabetes Based on the Fractal Characteristics of Retinal Images," IEEE Transactions on Information Technology in Biomedicine, vol. 7, no. 3, pp. 163-170, 2003. doi:10.1109/TITB.2003.813792

[10] M. García, C. I. Sánchez, M. I. López, D. Abásolo, and R. Hornero, "Neural Network Based Detection of Hard Exudates in Retinal Images," Computer Methods and Programs in Biomedicine, vol. 93, no. 1, pp. 9-19, 2009. doi:10.1016/j.cmpb.2008.07.006

[11] W. Lu, Y. Tong, Y. Yu, Y. Xing, C. Chen, and Y. Shen, "Applications of Artificial Intelligence in Ophthalmology: General Overview," Journal of Ophthalmology, 2018. doi:10.1155/2018/5278196

[12] D. C. S. Vandarkuzhali and T. Ravichandran, "Elm Based Detection of Abnormality in Retinal Image of Eye Due to Diabetic Retinopathy," Journal of Theoretical and Applied Information Technology, vol. 6, pp. 423-428, 2005.

[13] B. Antal and A. Hajdu, "An Ensemble-Based System for Automatic Screening of Diabetic Retinopathy," Knowledge-Based Systems, vol. 60, pp. 20-27, 2014. doi:10.1016/j.knosys.2013.12.023

[14] T. K. Yoo and E. C. Park, "Diabetic Retinopathy Risk Prediction for Fundus Examination Using Sparse Learning: A Cross-Sectional Study," BMC Medical Informatics and Decision Making, vol. 13, no. 1, pp. 106, 2013. doi:10.1186/1472-6947-13-106

[15] N. H. Cho et al., "IDF Diabetes Atlas: Global Estimates of Diabetes Prevalence for 2017 and Projections for 2045," Diabetes Research and Clinical Practice, vol. 138, pp. 271-281, 2018. doi:10.1016/j.diabres.2018.02.023

Copyright

Copyright © 2022 Prof. Vijayalakshmi, Mounesh, L Vinay, L Monisha, Nithin MS. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET41104

Publish Date : 2022-03-30

ISSN : 2321-9653

Publisher Name : IJRASET
