Ijraset Journal For Research in Applied Science and Engineering Technology

Deep Learning Techniques to Classify the Aerial Images with Gabor Filter

Authors: Prakruthi D P, P. Sumathi

DOI Link: https://doi.org/10.22214/ijraset.2022.42572

Abstract

Aerial images are a valuable data source for earth observation and can help us measure and observe detailed structures on the Earth’s surface. The volume of aerial imagery is growing rapidly, which has given particular urgency to the question of how to make full use of it for intelligent earth observation. Hence, it is extremely important to understand large and complex aerial images. Aerial image classification, which aims at labeling aerial images with a set of semantic categories based on their contents, has broad applications in a range of fields. Propelled by the powerful feature learning capabilities of deep neural networks, aerial image scene classification driven by deep learning has drawn remarkable attention and achieved significant breakthroughs. However, a consolidated account of recent achievements in deep learning for scene classification of aerial images is still lacking.

I. INTRODUCTION

Aerial images, a valuable data source for earth observation, can help us measure and observe detailed structures on the Earth’s surface. The volume of aerial imagery is growing rapidly, which has given particular urgency to the question of how to make full use of it for intelligent earth observation. Hence, it is extremely important to understand large and complex aerial images.

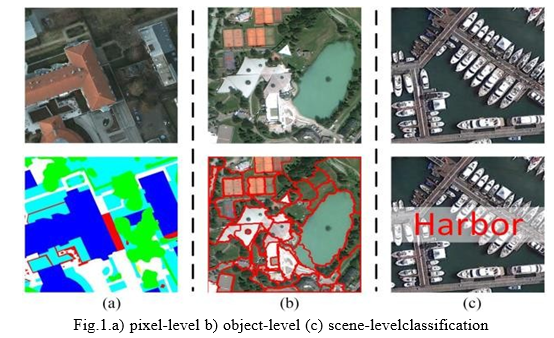

Aerial image scene classification is the task of correctly labeling given aerial images with predefined semantic categories, as shown in Fig. 1. Over the last few decades, extensive research on aerial image scene classification has been undertaken, driven by its real-world applications such as urban planning, natural hazard detection, environmental monitoring, vegetation mapping, and geospatial object detection.

The dataset used in this system is the RSSCN7 dataset; its details are given below.

RSSCN7 Data Set: The RSSCN7 data set is collected from Google Earth. It contains 2800 aerial scene images of 400 × 400 pixels from 7 classes, with 400 images in each category. Fig. 1 shows examples randomly selected from each class.

II. RELATED WORK

This section reviews the techniques proposed by various researchers.

A. Exploring the Use of Google Earth Imagery and Object-Based Methods in Land Use/Cover Mapping.

Google Earth (GE) releases free images in high spatial resolution that may provide some potential for regional land use/cover mapping, especially for those regions with high heterogeneous landscapes. In order to test such practicability, the GE imagery was selected for a case study in Wuhan City to perform an object-based land use/cover classification. The classification accuracy was assessed by using 570 validation points generated by a random sampling scheme and compared with a parallel classification of QuickBird (QB) imagery based on an object-based classification method. The results showed that GE has an overall classification accuracy of 78.07%, which is slightly lower than that of QB. No significant difference was found between these two classification results by the adoption of Z-test, which strongly proved the potentials of GE in land use/cover mapping. Moreover, GE has different discriminating capacity for specific land use/cover types. It possesses some advantages for mapping those types with good spatial characteristics in terms of geometric, shape and context. The object-based method is recommended for imagery classification when using GE imagery for mapping land use/cover. However, GE has some limitations for those types classified by using only spectral characteristics largely due to its poor spectral characteristics.

B. Human Settlements: A Global Challenge for EO Data Processing and Interpretation.

The availability of fine spatial resolution earth observation (EO) data has always been considered a plus for human settlement monitoring from space. It calls, however, for new and efficient data processing tools, capable of managing huge amounts of data and providing information for urban area monitoring, management, and protection. Issues related to data processing at the global level imply multiple-scale processing and a focused data interpretation approach, starting from human settlement delineation using spatial information and detailing down to material identification and object characterization using multispectral and/or hyperspectral data sets. This paper attempts to provide a consistent framework for information processing in urban aerial imagery, stressing the need for a global approach able to exploit the detailed information available from EO data sets.

C. Very High Resolution Multiangle Urban Classification Analysis.

The high-performance camera control systems carried aboard the DigitalGlobe WorldView satellites, WorldView-1 and WorldView-2, are capable of rapid retargeting and high off-nadir imagery collection. This provides the capability to collect dozens of multiangle very high spatial resolution images over a large target area during a single overflight. In addition, WorldView-2 collects eight bands of multispectral data. This paper discusses the improvements in urban classification accuracy available through utilization of the spatial and spectral information from a WorldView-2 multiangle image sequence collected over Atlanta, GA, in December 2009. Specifically, the implications of adding height data and multiangle multispectral reflectance, both derived from the multiangle sequence, to the textural, morphological, and spectral information of a single WorldView-2 image are investigated. The results show an improvement in classification accuracy of 27% and 14% for the spatial and spectral experiments, respectively. Additionally, the multiangle data set allows the differentiation of classes not typically well identified by a single image, such as skyscrapers and bridges as well as flat and pitched roofs.

III. SYSTEM REQUIREMENT SPECIFICATION

The system requirement specification (SRS) is gathered by extracting the information needed to implement the system. It sets out in detail the conditions the system must satisfy. The SRS gives a complete picture of what this project is going to achieve without placing any constraints on how that goal is achieved. It does not expose the internal design to outside parties; the implementation plan stays hidden.

A. Hardware Requirements

- System Processor : i7

- Hard Disk : 50GB.

- RAM : 8 GB to 12 GB

- Any desktop / Laptop system with above configuration or higher level.

B. Software Requirements

- Operating system : Windows1 (64-bit OS)

- Programming Language : Python 3

- Framework : Anaconda

- Libraries : Keras, TensorFlow, OpenCV

- IDE : Jupyter Notebook.

IV. EXISTING SYSTEM

With the improvement in the spatial resolution of aerial images, aerial image classification has gradually formed three parallel branches at different levels: pixel-level, object-level, and scene-level classification, as shown in Fig. 1. Here, we use the term “aerial image classification” as the main concept of this system, which is built on a deep learning technique, the Convolutional Neural Network (CNN). Specifically, existing systems mainly focused on classifying aerial images at the pixel or sub-pixel level by labeling each pixel with a semantic class, because the spatial resolution of the images was very low: the size of a pixel was similar to the size of the objects of interest. To date, pixel-level aerial image classification (sometimes also called semantic segmentation, as shown in Fig. 1 (a)) is still an active research topic in multispectral and hyperspectral aerial image analysis.

V. PROPOSED SYSTEM

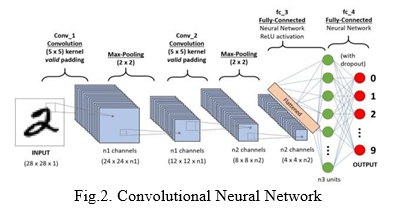

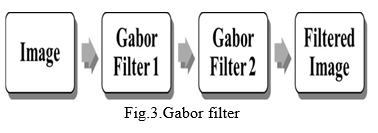

The proposed system builds two models. The first is an aerial image scene classifier that uses a Convolutional Neural Network trained on the original dataset images. In the second, the dataset images first undergo Level 1 and Level 2 Gabor filtering, which yields clearer edges in the aerial images, and the Gabor-filtered images are then used to build the CNN model. We expect the CNN model based on Gabor-filtered images to achieve higher accuracy than the first model.

A. Convolutional neural network (CNN) / ConvNet

A Convolutional Neural Network (ConvNet / CNN) is a Deep Learning algorithm which can take in an input image, assign importance (learnable weights and biases) to various aspects/objects in the image and be able to differentiate one from the other. The pre-processing required in a ConvNet is much lower as compared to other classification algorithms. While in primitive methods filters are hand-engineered, with enough training, ConvNets have the ability to learn these filters/characteristics.

The architecture of a ConvNet is analogous to that of the connectivity pattern of Neurons in the Human Brain and was inspired by the organization of the Visual Cortex. Individual neurons respond to stimuli only in a restricted region of the visual field known as the Receptive Field. A collection of such fields overlap to cover the entire visual area.

CNNs have wide applications in image and video recognition, recommender systems, and natural language processing. The example considered in this paper relates to computer vision, but the basic concept remains the same and can be applied to any other use case. CNNs, like other neural networks, are made up of neurons with learnable weights and biases. Each neuron receives several inputs, takes a weighted sum over them, passes it through an activation function, and responds with an output. The whole network has a loss function, and the techniques developed for ordinary neural networks still apply to CNNs.
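The neuron computation described above (weighted sum, then activation) can be sketched in a few lines of plain Python. This is a minimal illustration, not the model used in this work: the toy image, the hand-picked weights, and the helper names `relu` and `conv2d_valid` are assumptions made for the example, and in a real CNN the weights are learned during training rather than chosen by hand.

```python
def relu(x):
    """Rectified linear unit, a common CNN activation function."""
    return x if x > 0.0 else 0.0


def conv2d_valid(image, kernel, bias=0.0):
    """Slide `kernel` over `image` (no padding, stride 1) and apply ReLU.

    Each output value plays the role of one neuron: a weighted sum of the
    pixels in its receptive field plus a bias, passed through the activation.
    (As in most CNN libraries, the kernel is not flipped.)
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            weighted_sum = bias
            for i in range(kh):
                for j in range(kw):
                    weighted_sum += image[r + i][c + j] * kernel[i][j]
            row.append(relu(weighted_sum))
        output.append(row)
    return output


# Toy 4x4 image (illustrative): dark left half, bright right half.
image = [
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
]
# Hand-picked 2x2 weights that respond to a dark-to-bright vertical edge.
kernel = [
    [-1.0, 1.0],
    [-1.0, 1.0],
]
feature_map = conv2d_valid(image, kernel)
print(feature_map)
```

The feature map is non-zero only in the column where the receptive field straddles the dark-to-bright boundary, which is the sense in which a convolutional filter "detects" a feature.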

B. Gabor Filter

The real and imaginary parts of the Gabor filter kernel are applied to the image and the response is returned as a pair of arrays. Gabor filter is a linear filter with a Gaussian kernel which is modulated by a sinusoidal plane wave. Frequency and orientation representations of the Gabor filter are similar to those of the human visual system. Gabor filter banks are commonly used in computer vision and image processing. They are especially suitable for edge detection and texture classification.
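For reference, the "Gaussian kernel modulated by a sinusoidal plane wave" can be written in the commonly used complex form below. The symbols are the conventional ones and are not defined elsewhere in this paper: wavelength $\lambda$, orientation $\theta$, phase offset $\psi$, Gaussian width $\sigma$, and spatial aspect ratio $\gamma$.

```latex
g(x, y;\, \lambda, \theta, \psi, \sigma, \gamma)
  = \exp\!\left(-\frac{x'^2 + \gamma^2 y'^2}{2\sigma^2}\right)
    \exp\!\left(i\left(2\pi \frac{x'}{\lambda} + \psi\right)\right),
\qquad
x' = x\cos\theta + y\sin\theta, \quad
y' = -x\sin\theta + y\cos\theta

\operatorname{Re}(g) = \exp\!\left(-\frac{x'^2 + \gamma^2 y'^2}{2\sigma^2}\right)
    \cos\!\left(2\pi \frac{x'}{\lambda} + \psi\right),
\qquad
\operatorname{Im}(g) = \exp\!\left(-\frac{x'^2 + \gamma^2 y'^2}{2\sigma^2}\right)
    \sin\!\left(2\pi \frac{x'}{\lambda} + \psi\right)
```

The real and imaginary parts are the two kernels whose responses are returned as a pair of arrays.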

Gabor filters are used in texture analysis, edge detection, and feature extraction. When a Gabor filter is applied to an image, it gives the highest response at edges and at points where the texture changes. The accompanying images show a test image and its transformation after the filter is applied.

Features are extracted directly from gray-scale character images by Gabor filters which are specially designed from statistical information of character structures. An adaptive sigmoid function is applied to the outputs of Gabor filters to achieve better performance on low-quality images.
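The claim that a Gabor filter responds most strongly at edges can be checked on a synthetic image. The sketch below is an illustration under stated assumptions, not the filtering code used in this work: the kernel size, wavelength, and sigma are arbitrary choices, the helper names are hypothetical, and only the imaginary (odd-symmetric) part is applied because it sums to approximately zero and therefore ignores uniform regions.

```python
import math


def gabor_kernel(size, wavelength, theta, sigma, gamma=1.0, psi=0.0):
    """Return the (real, imaginary) Gabor kernels as size x size nested lists.

    `size` is assumed to be odd so that the kernel has a centre pixel.
    """
    half = size // 2
    real, imag = [], []
    for y in range(-half, half + 1):
        real_row, imag_row = [], []
        for x in range(-half, half + 1):
            # Rotate coordinates by the filter orientation.
            x_r = x * math.cos(theta) + y * math.sin(theta)
            y_r = -x * math.sin(theta) + y * math.cos(theta)
            # Gaussian envelope modulated by a sinusoidal plane wave.
            envelope = math.exp(-(x_r ** 2 + (gamma * y_r) ** 2) / (2.0 * sigma ** 2))
            phase = 2.0 * math.pi * x_r / wavelength + psi
            real_row.append(envelope * math.cos(phase))
            imag_row.append(envelope * math.sin(phase))
        real.append(real_row)
        imag.append(imag_row)
    return real, imag


def filter_response(image, kernel):
    """Correlate `image` with a square `kernel` over the valid region only."""
    k = len(kernel)
    rows = len(image) - k + 1
    cols = len(image[0]) - k + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(k) for j in range(k))
            for c in range(cols)
        ]
        for r in range(rows)
    ]


# Synthetic 16x16 test image: a vertical step edge between columns 7 and 8.
image = [[0.0] * 8 + [1.0] * 8 for _ in range(16)]

_, imag_kernel = gabor_kernel(size=5, wavelength=4.0, theta=0.0, sigma=2.0)
imag_response = filter_response(image, imag_kernel)

# Response strength along the first output row.
edge_strength = [abs(value) for value in imag_response[0]]
peak_column = max(range(len(edge_strength)), key=edge_strength.__getitem__)
print(peak_column, edge_strength[peak_column])
```

The response is essentially zero where the 5 × 5 window lies entirely in the dark or the bright region and peaks where the window straddles the step, consistent with the edge-detection behaviour described above.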

VI. DATASETS AND EXPERIMENTAL RESULTS

We tested the described image representations and classifier on two datasets, both consisting of aerial images. The first is our RSSCN7 dataset. The second contains images used previously for aerial image classification, and we include it here for comparison purposes. For evaluation of the classifiers we used 400 × 400 pixel multispectral (RGB) aerial images. The imagery contains a variety of structures, both man-made, such as buildings, factories, and warehouses, and natural, such as fields, trees, and rivers. We partitioned the imagery into 400 × 400 pixel tiles and used a total of 2800 images in our experiments. We manually classified all images into 7 classes: fields, forest, grass, industry, parking, resident, and river_lake. Examples of images from each class are shown in Fig. 1. It should be noted that the distribution of images in these categories is highly uneven, as can be observed from the bar graph in Fig. 2. In our experiments we used half of the images for training and the other half for testing.
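The half-and-half partition can be sketched as follows. This is only an illustration: it assumes the 400-images-per-class figure from the RSSCN7 description, it splits each class separately (stratification is an assumption, since the text only says that half of the images were used for training), and the file names are hypothetical placeholders rather than the real RSSCN7 file names.

```python
import random

CLASSES = ["fields", "forest", "grass", "industry", "parking", "resident", "river_lake"]
IMAGES_PER_CLASS = 400  # assumption: taken from the RSSCN7 description


def stratified_half_split(classes, per_class, seed=0):
    """Split each class 50/50 into training and test lists of (path, label)."""
    rng = random.Random(seed)
    train, test = [], []
    for label in classes:
        # Hypothetical file naming; the real dataset layout may differ.
        items = [(f"{label}/{i:03d}.jpg", label) for i in range(per_class)]
        rng.shuffle(items)
        half = per_class // 2
        train.extend(items[:half])
        test.extend(items[half:])
    return train, test


train_set, test_set = stratified_half_split(CLASSES, IMAGES_PER_CLASS)
print(len(train_set), len(test_set))
```

Fixing the seed makes the partition reproducible; changing it gives a different random partition of the same size.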

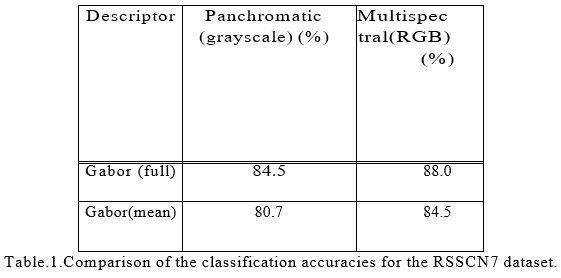

We compute Gabor filter responses at 8 scales and 8 orientations for all images in the dataset. We also tried other combinations of numbers of scales and orientations and chose the one with the best performance. Gabor filters, as originally proposed, are computed for grayscale images. Since the images are multispectral, we compute the Gabor descriptor for all 3 spectral bands of an image and concatenate the obtained vectors, which yields a 3 × 8 × 8 × 2 = 384-dimensional descriptor. For comparison purposes we also compute Gabor descriptors for grayscale (panchromatic) versions of the images, which are 8 × 8 × 2 = 128-dimensional.
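The descriptor sizes quoted above follow from simple counting, sketched below. The factor of 2 is assumed here to mean two statistics per filter response (mean and standard deviation); the text does not state this explicitly, and the function names are illustrative.

```python
import statistics


def response_stats(response):
    """Summarise one filter response map by the mean and standard deviation
    of its absolute values (assumed choice of the two statistics)."""
    values = [abs(v) for row in response for v in row]
    return [statistics.mean(values), statistics.pstdev(values)]


def descriptor_length(bands, scales=8, orientations=8, stats_per_filter=2):
    """Length of the concatenated descriptor for an image with `bands` channels."""
    return bands * scales * orientations * stats_per_filter


rgb_length = descriptor_length(bands=3)   # 3 x 8 x 8 x 2
gray_length = descriptor_length(bands=1)  # 8 x 8 x 2
print(rgb_length, gray_length)
```

Under this reading, a descriptor built from the means alone would be half as long, since it keeps one statistic per filter instead of two.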

For testing our classifiers we used 10-fold cross-validation, each time with a different random partition of the dataset, and averaged the results. Average classification accuracies for all categories are given in Table 1. In the table, Gabor (full) denotes the Gabor descriptor as given in (5), while Gabor (mean) denotes the descriptor obtained using only the means of the filter-bank responses.
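A k-fold evaluation loop of the kind described above might look like the following sketch. It shows standard k-fold partitioning with a seeded shuffle; `evaluate` is a hypothetical callable standing in for "train the classifier on the training indices and return its accuracy on the test indices", and the dummy scorer in the usage lines is a placeholder, not a result from this work.

```python
import random


def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_indices, test_indices) for each of k random folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test_idx = folds[i]
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train_idx, test_idx


def cross_validate(n_samples, evaluate, k=10, seed=0):
    """Average the score returned by `evaluate(train_idx, test_idx)` over k folds."""
    scores = [evaluate(train, test) for train, test in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores)


# Usage with a dummy scorer that just reports the test fraction of each fold.
splits = list(k_fold_indices(2800))
mean_score = cross_validate(2800, lambda train, test: len(test) / 2800)
print(len(splits), mean_score)
```

Passing a different `seed` produces a different random partition, which is one way to repeat the experiment and average the results.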

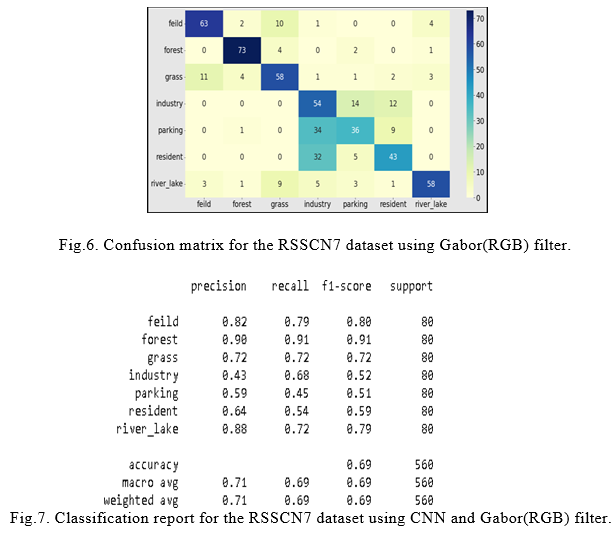

The confusion matrix for the Gabor filter is given in figure 6. We note that confusions mainly arise between categories that can be difficult to distinguish even for humans. The most notable examples are fields, forest, grass, industry, parking, resident and river_lake.
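For readers unfamiliar with confusion matrices, the sketch below shows how one is tallied from true and predicted labels. The labels are made up for illustration and are not results from this work; the function names are likewise illustrative.

```python
def confusion_matrix(true_labels, predicted_labels, classes):
    """Rows are true classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for truth, predicted in zip(true_labels, predicted_labels):
        matrix[index[truth]][index[predicted]] += 1
    return matrix


def per_class_accuracy(matrix):
    """Fraction of each true class that was predicted correctly."""
    return [row[i] / sum(row) if sum(row) else 0.0 for i, row in enumerate(matrix)]


# Made-up labels, for illustration only.
classes = ["fields", "grass", "forest"]
y_true = ["fields", "fields", "grass", "grass", "forest", "forest"]
y_pred = ["fields", "grass", "grass", "fields", "forest", "forest"]

cm = confusion_matrix(y_true, y_pred, classes)
accuracy = per_class_accuracy(cm)
print(cm, accuracy)
```

Off-diagonal entries show which pairs of classes are mistaken for each other; in this toy example the two visually similar classes are confused while the third is not.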

Conclusion

Aerial image classification has improved substantially over several decades of development. The number of papers on the topic is considerable, especially those on deep learning-based methods. Classification accuracy on aerial images is increased by using a CNN together with Gabor filtering, compared with the conventional methods used in the surveyed papers.

References

[1] Q. Hu, W. Wu, T. Xia, Q. Yu, P. Yang, Z. Li, and Q. Song, “Exploring the use of Google Earth imagery and object-based methods in land use/cover mapping,” Remote Sensing, vol. 5, no. 11, pp. 6026–6042, 2013.

[2] L. Gómez-Chova, D. Tuia, G. Moser, and G. Camps-Valls, “Multimodal classification of remote sensing images: A review and future directions,” Proceedings of the IEEE, vol. 103, no. 9, pp. 1560–1584, 2015.

[3] P. Gamba, “Human settlements: A global challenge for EO data processing and interpretation,” Proceedings of the IEEE, vol. 101, no. 3, pp. 570–581, 2012.

[4] D. Li, M. Wang, Z. Dong, X. Shen, and L. Shi, “Earth observation brain (EOB): An intelligent earth observation system,” Geo-spatial Information Science, vol. 20, no. 2, pp. 134–140, 2017.

[5] N. Longbotham, C. Chaapel, L. Bleiler, C. Padwick, W. J. Emery, and F. Pacifici, “Very high resolution multiangle urban classification analysis,” IEEE Transactions on Geoscience and Remote Sensing, vol. 50, no. 4, pp. 1155–1170, 2011.

[6] A. Tayyebi, B. C. Pijanowski, and A. H. Tayyebi, “An urban growth boundary model using neural networks, GIS and radial parameterization: An application to Tehran, Iran,” Landscape and Urban Planning, vol. 100, no. 1-2, pp. 35–44, 2011.

[7] T. R. Martha, N. Kerle, C. J. van Westen, V. Jetten, and K. V. Kumar, “Segment optimization and data-driven thresholding for knowledge-based landslide detection by object-based image analysis,” IEEE Transactions on Geoscience and Remote Sensing, vol. 49, no. 12, pp. 4928–4943, 2011.

[8] G. Cheng, L. Guo, T. Zhao, J. Han, H. Li, and J. Fang, “Automatic landslide detection from remote-sensing imagery using a scene classification method based on BoVW and pLSA,” International Journal of Remote Sensing, vol. 34, no. 1-2, pp. 45–59, 2013.

[9] Z. Y. Lv, W. Shi, X. Zhang, and J. A. Benediktsson, “Landslide inventory mapping from bitemporal high-resolution remote sensing images using change detection and multiscale segmentation,” IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, vol. 11, no. 5, pp. 1520–1532, 2018.

[10] X. Huang, D. Wen, J. Li, and R. Qin, “Multi-level monitoring of subtle urban changes for the megacities of China using high-resolution multi-view satellite imagery,” Remote Sensing of Environment, vol. 196, pp. 56–75, 2017.

Copyright

Copyright © 2022 Prakruthi D P, P. Sumathi. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET42572

Publish Date : 2022-05-12

ISSN : 2321-9653

Publisher Name : IJRASET
