Ijraset Journal For Research in Applied Science and Engineering Technology

Investigation of Different Deep Neural Networks for the Diagnostics of Brain Tumor, Skin Cancer and Breast Cancer

Authors: Riya Nimje, Shreya Paliwal, Jahanvi Saraf, Prof. Sachin Chavan

DOI Link: https://doi.org/10.22214/ijraset.2022.40932

Abstract

Early disease detection is essential in healthcare, especially for diseases that put a person's life at risk. According to the WHO, death rates can be reduced if diseases are predicted ahead of time. The goal of this paper is to investigate how Breast Cancer, Skin Cancer, and Brain Tumor can be detected at an early stage with the help of Deep Learning techniques. Authors of earlier papers have used different techniques and algorithms, such as Convolutional Neural Networks (CNN), Visual Geometry Group networks (VGG16), and Residual Networks (ResNet). We investigate these three algorithms and compare them to find the most effective one for each disease.

I. INTRODUCTION

In healthcare, detecting lethal diseases at the earliest possible stage is critical to preventing deaths. Our aim is to use several Deep Learning models to detect such diseases as early as possible. This paper covers Breast Cancer, Skin Cancer, and Brain Tumor. All three contribute to rising death rates, so our main aim is to help reduce those rates through early prediction.

A. Skin Cancer

Skin cancer is a condition in which the cells of the skin develop abnormally. It appears most often on skin that is heavily exposed to the sun, but it can also occur in areas that are not. The main causes are ultraviolet (UV) radiation from the sun and from tanning beds. If skin cancer is diagnosed early enough, it can be treated with minimal scarring and a high probability of being entirely eliminated. A dermatologist may even spot the skin change at a pre-malignant stage, before it progresses to full-blown skin cancer. A change in the skin is one of the most common signs of skin cancer. It could be a sore that does not heal, a new growth, or a mole that has changed. Not all skin malignancies have the same appearance.

B. Brain Tumor

One of the most challenging tasks in medical image processing is detecting brain tumors. The task is difficult because the images are diverse: brain tumors come in a range of shapes and textures. Brain tumors are made up of many different types of cells, and these cells can give information about the tumor's origin, severity, and rarity. Tumors can occur in various places, and the location of a tumor can provide details about the cells that are causing it, which can aid further diagnosis.

C. Breast Cancer

Breast cancer is a condition in which the cells of the breast grow out of control. It manifests itself in a variety of ways, and the type of breast cancer depends on which cells in the breast become malignant. Breast cancer can start in any part of the breast. The three main components of a breast are lobules, ducts, and connective tissue. The lobules are the milk-producing glands. The ducts are the tubes that carry milk. Connective tissue, which is made up of fibrous and fatty tissue, holds everything together. Breast cancer typically begins in the ducts or lobules. It can travel to other parts of the body through blood vessels and lymph vessels. When breast cancer spreads to other parts of the body, it is said to have metastasized.

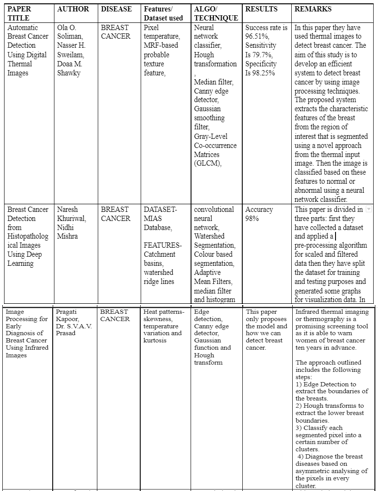

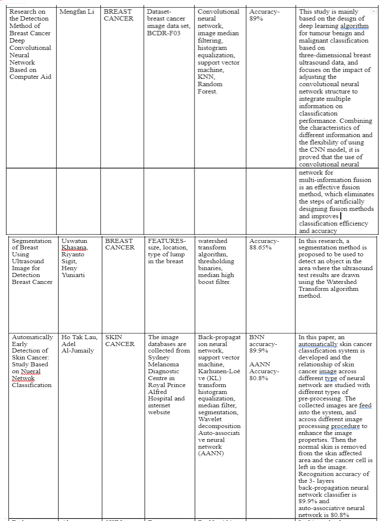

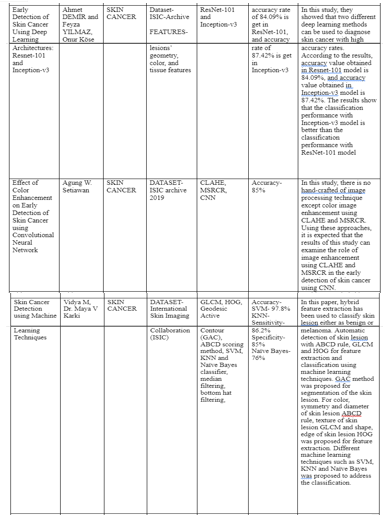

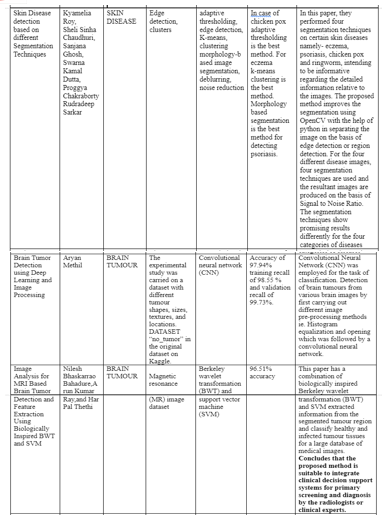

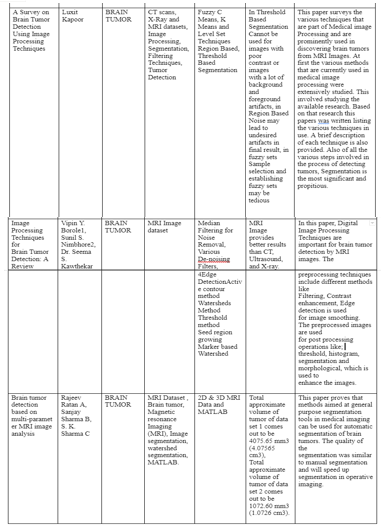

II. LITERATURE SURVEY

III. DATASETS

Different authors have used the following datasets for each disease:

- Breast Cancer: MIAS Database, breast cancer image data set, BCDR-F03

- Skin Cancer: Images collected from the Sydney Melanoma Diagnostic Centre at Royal Prince Alfred Hospital and from an internet website, ISIC-Archive 2019.

- Brain Tumor: No_tumor, Magnetic resonance imaging (MRI)

IV. FEATURE DETECTION

To detect each disease, we extract the appropriate features as follows:

A. Skin Cancer

- A large brownish spot with darker speckles on its surface.

- A mole that changes in texture, color, or size, or that bleeds.

- A small lesion with an irregular border and portions that appear blue-black, pink, red, white, or blue.

- A lesion that is painful, irritated, or blistering.

- Dark lesions on the palms, soles, fingertips, or toes, or on the mucous membranes lining the mouth, nose, vagina, or anus.

B. Brain Tumor

Features are the properties of the objects of interest. If correctly chosen, they represent the most significant information that the image has to offer for a complete characterization of a lesion. Feature extraction approaches analyze objects and images to obtain the most prominent traits that are typical of each class of object. Classifiers take features as inputs and assign them to the class that they represent. Feature extraction reduces the original data by measuring specific traits, or features, that separate one input pattern from another. The extracted features are converted into feature vectors, which supply the characteristics of the input to the classifier.

The following features are extracted using the proposed approach:

- Shape features: Circularity, Irregularity, Area, Perimeter, and Shape Index.

- Intensity features: Mean, Variance, Standard Deviation, Median Intensity, Skewness, and Kurtosis.

- Texture features: Contrast, Correlation, Entropy, Energy, Homogeneity, Cluster Shade, and Sum of Square Variance.
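As an illustration of the intensity and shape features listed above, the following minimal sketch computes them for a flat list of pixel values. The function names are hypothetical, and the formulas are assumptions, since the paper does not define them: population moments are used for skewness and kurtosis, and circularity is taken as 4πA/P², a common definition.

```python
import math
import statistics


def intensity_features(pixels):
    """Return first-order intensity statistics for a flat list of pixel values."""
    n = len(pixels)
    mean = statistics.fmean(pixels)
    variance = statistics.pvariance(pixels, mu=mean)
    centered = [p - mean for p in pixels]
    # Third and fourth central moments (population form, an assumption).
    m3 = sum(c ** 3 for c in centered) / n
    m4 = sum(c ** 4 for c in centered) / n
    return {
        "mean": mean,
        "variance": variance,
        "std": math.sqrt(variance),
        "median": statistics.median(pixels),
        # Standardized moments; defined as 0.0 for a constant region.
        "skewness": m3 / variance ** 1.5 if variance else 0.0,
        "kurtosis": m4 / variance ** 2 if variance else 0.0,
    }


def circularity(area, perimeter):
    """Assumed definition 4*pi*A / P^2: 1.0 for a circle, lower for irregular shapes."""
    return 4.0 * math.pi * area / perimeter ** 2


features = intensity_features([1, 2, 3, 4, 5])
```

In a real pipeline the pixel list would come from the segmented tumor region, and the area and perimeter from its contour. Texture features such as contrast and entropy need a co-occurrence matrix and are not shown here.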

C. Breast Cancer

Cysts are tiny sacs filled with fluid. The majority are simple cysts with a thin wall that are not malignant. If a cyst cannot be classified as a simple cyst, a doctor may perform additional testing to ensure that it is not malignant.

Calcifications are calcium deposits. Macrocalcifications are larger calcium deposits that normally arise as a result of aging. Microcalcifications are smaller deposits. Depending on their appearance, a doctor may examine microcalcifications for indicators of malignancy.

Fibroadenomas are benign breast tumors. They are round and can feel like a marble. Fibroadenomas are more common in people in their 20s and 30s, but they can occur at any age.

On a mammogram, scar tissue generally appears white. Any scarring on the breasts should be disclosed to a doctor ahead of time.

A tumor, cyst, or fibroadenoma, whether cancerous or not, is referred to as a mass.

V. MODEL SELECTION

To detect the different diseases, we investigated three deep learning models:

A. Convolutional Neural Networks

A Convolutional Neural Network (CNN) is a deep learning model used for feature detection and image classification. The model takes an image as input, extracts features from it, and finally classifies the image.

B. ResNets

A Residual Neural Network (ResNet) is a type of Artificial Neural Network. ResNets use shortcuts, or skip connections, to jump over some layers of the network.

C. VGG Neural Networks

VGG (Visual Geometry Group) is a deep Convolutional Neural Network (CNN) with multiple layers. There are two common VGG variants, VGG 16 and VGG 19, named for the number of weight layers they contain: VGG 16 has 16 layers and VGG 19 has 19 layers.

VI. DESIGN AND ARCHITECTURE

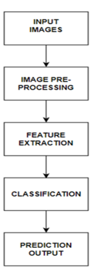

A. CNN (Convolutional Neural Network)

This is the deep learning model architecture for the detection of Skin Cancer, Brain Tumor, and Breast Cancer. The model starts by taking an input image. The image is then pre-processed using techniques such as grayscale conversion, image scaling, and segmentation. Once pre-processing is complete, the model extracts features, and the image is ready for training and classification. The model is then trained with a deep learning algorithm such as CNN. After predicting whether the image shows signs of disease or not, the model returns the result to the user.
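The pre-processing stage described above can be sketched in plain Python as follows. This is only an illustration under stated assumptions: the function names are hypothetical, the grayscale weights are the common ITU-R BT.601 luminance coefficients, scaling is done by nearest-neighbour sampling, and segmentation is omitted. The paper does not specify which methods were actually used.

```python
def to_grayscale(rgb_image):
    """Convert rows of (R, G, B) tuples to rows of luminance values."""
    # BT.601 luminance weights (an assumption, not taken from the paper).
    return [
        [0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
        for row in rgb_image
    ]


def resize_nearest(image, new_height, new_width):
    """Resize a 2-D image by nearest-neighbour sampling."""
    height, width = len(image), len(image[0])
    return [
        [image[i * height // new_height][j * width // new_width]
         for j in range(new_width)]
        for i in range(new_height)
    ]


def rescale(image, max_value=255.0):
    """Map pixel values from [0, max_value] to [0, 1]."""
    return [[v / max_value for v in row] for row in image]


def preprocess(rgb_image, height, width):
    """Grayscale conversion, then resizing, then intensity rescaling."""
    return rescale(resize_nearest(to_grayscale(rgb_image), height, width))


# A 2 x 2 checkerboard of white and black pixels, upsampled to 4 x 4.
tiny = [[(255, 255, 255), (0, 0, 0)], [(0, 0, 0), (255, 255, 255)]]
model_input = preprocess(tiny, 4, 4)
```

In practice an image library would handle these steps, but the order of operations would be the same.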

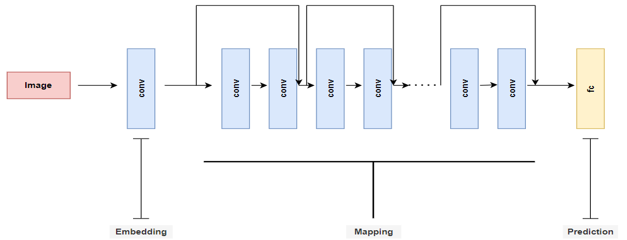

B. ResNet (Residual Network)

This is a schematic view of the ResNet architecture. The processing of an input image is decomposed into three blocks: embedding, mapping, and prediction. Here, 'conv' refers to convolutional operations followed by non-linear activations, and 'fc' refers to fully connected layers.

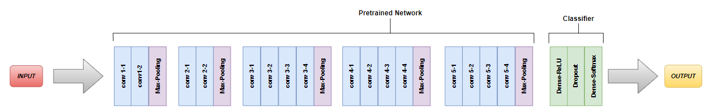

C. VGG-16 (Visual Geometry Group)

During training, the convnets are fed a fixed-size 224 × 224 RGB image. The only pre-processing is subtracting the mean RGB value, computed on the training set, from each pixel. The image is passed through a stack of convolutional (conv.) layers that use filters with a very small receptive field: 3 × 3, which is the smallest size that captures the notions of left/right, up/down, and center. A stack of three 3 × 3 layers has the same effective receptive field as one 7 × 7 layer, but with more non-linearities and fewer parameters. One of the configurations also uses 1 × 1 convolution filters, which can be thought of as a linear transformation of the input channels (followed by a non-linearity). For 3 × 3 convolutional layers, the convolution stride and the spatial padding of the layer input are both set to 1 pixel, so the spatial resolution is preserved after convolution. Spatial pooling is carried out by five max-pooling layers, which follow some of the convolutional layers. Max-pooling is performed over a 2 × 2 pixel window with stride 2. The stack of convolutional layers is followed by three Fully-Connected (FC) layers.
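The arithmetic behind these design choices can be checked with a short sketch. The helper names below are hypothetical; the formulas are the standard ones for convolution output size, the receptive field of stacked stride-1 convolutions, and weight counts when the input and output channel counts are equal (biases ignored).

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def stacked_receptive_field(num_layers, kernel=3):
    """Effective receptive field of stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel - 1)


def conv_weights(kernel, channels, num_layers=1):
    """Weights (no biases) of k x k convolutions with equal in/out channels."""
    return num_layers * kernel * kernel * channels * channels


# Trace the feature-map side length through the five pooling stages.
size = 224
sizes = [size]
for _ in range(5):
    size = conv_output_size(size, kernel=3, stride=1, padding=1)  # unchanged
    size = conv_output_size(size, kernel=2, stride=2)             # halved
    sizes.append(size)

print(sizes)                              # [224, 112, 56, 28, 14, 7]
print(stacked_receptive_field(3))         # 7
print(conv_weights(3, 64, num_layers=3))  # 110592, i.e. 27 * C^2 for C = 64
print(conv_weights(7, 64))                # 200704, i.e. 49 * C^2 for C = 64
```

Three stacked 3 × 3 layers therefore cover the same 7 × 7 region with roughly 45% fewer weights, while adding two extra non-linearities.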

VII. IMPLEMENTATION

This section describes the implementation process of the Convolutional Neural Network, ResNet, and VGG Neural Networks.

A. Implementation Process Of Convolutional Neural Networks

A Convolutional Neural Network takes an image as input, passes it through the network, and produces the desired output.

Inside the network, the image passes through the following layers and activation functions to produce the final output:

- Convolution Operation: This is the first step of the CNN model. It involves three elements: the input image, the feature detector, and the feature map. The operation begins by placing a window, the feature detector, over the input image, starting from the top. The window slides over the image to detect features, and the results are recorded in the feature map. This step also includes rectification (ReLU), which increases the non-linearity of the feature map.

- Max Pooling: The purpose of the Max Pooling layer is to let the CNN recognize features even when the image is rotated, squashed, or otherwise distorted. This property is known as spatial invariance. Several types of pooling are available: Mean Pooling, Max Pooling, and Sum Pooling.

- Flattening: After the above steps, we have a pooled feature map. Before it can be fed into the neural network, it has to be flattened. As the name suggests, this step flattens the pooled feature map into a single column, which is then fed to the neural network.

- Full Connection: Three different layers are involved in full connection:

a. Input Layer - consists of the flattened data from the above step.

b. Fully Connected Layer - connects the CNN to the ANN.

c. Output Layer - gives the labeled output.
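The first three steps above (convolution with rectification, max pooling, and flattening) can be sketched in plain Python. This is a toy illustration, not the implementation used in the paper: the function names, the 5 × 5 image, and the 2 × 2 edge-detecting kernel are all made up for the example.

```python
def convolve2d(image, kernel):
    """Slide the feature detector over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Rectification: replace negative responses with zero."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [
        [
            max(feature_map[i + a][j + b]
                for a in range(size) for b in range(size))
            for j in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for i in range(0, len(feature_map) - size + 1, size)
    ]


def flatten(feature_map):
    """Turn the pooled feature map into one column of values."""
    return [v for row in feature_map for v in row]


# Toy image with a dark-to-bright vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
kernel = [[-1, 1], [-1, 1]]  # hypothetical vertical-edge detector

feature_map = relu(convolve2d(image, kernel))  # 4 x 4 feature map
pooled = max_pool(feature_map)                 # 2 x 2 pooled feature map
column = flatten(pooled)
print(column)  # [2, 0, 2, 0]
```

The flattened column is what the input layer of the fully connected part receives. Deep learning libraries perform the same operations over many learned kernels at once.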

B. Implementation Process Of ResNet(Residual Network)

ResNets work on the premise of building deeper networks than other simple networks, while determining an optimal number of layers, in order to overcome the vanishing gradient problem, in which gradient information cannot be propagated back to the layers near the input of the model.

When we increase the number of layers, we run into an issue called the vanishing/exploding gradient, which is a typical difficulty in deep learning: the gradient either shrinks towards 0 or becomes too large. As a result, both the training and the test error rate grow as the number of layers grows.
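A simplified way to see this effect is to assume that every layer scales the back-propagated gradient by the same factor. The sketch below makes that assumption explicit; real networks have a different factor per layer, so this only illustrates the trend. The factor 0.25 is the maximum derivative of the sigmoid function, and 1.5 is an arbitrary example value.

```python
def gradient_scale(per_layer_factor, num_layers):
    """Gradient magnitude after passing back through num_layers layers,
    assuming each layer multiplies it by the same per_layer_factor."""
    return per_layer_factor ** num_layers


# Factors below 1 shrink the gradient exponentially with depth (vanishing),
# and factors above 1 grow it exponentially (exploding).
for depth in (5, 10, 20, 50):
    vanishing = gradient_scale(0.25, depth)
    exploding = gradient_scale(1.5, depth)
    print(f"depth={depth:2d}  vanishing={vanishing:.3e}  exploding={exploding:.3e}")
```

With a factor of 0.25, the gradient is already below one millionth of its original size after ten layers, which is why the layers near the input stop learning.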

The residual network's main purpose is to create a deeper neural network. Based on this, we may form two conclusions:

- As we add a large number of layers, we must ensure that the accuracy and error rate do not deteriorate. Identity mapping can help with this.

- The network should keep learning the residuals so that the predicted output matches the actual output.

The two main types of ResNet blocks are identity blocks and convolutional blocks.

- Identity Block: Used when the input activation and output activation have the same dimension.

- Convolutional Block: Used when the dimensions of the input and output do not match. It differs from the identity block in that the shortcut path includes a CONV2D layer.

The skip connections in ResNet mitigate the vanishing gradient problem by giving the gradient an alternative shortcut path to flow through. These connections also help the model learn identity functions, ensuring that a higher layer performs at least as well as the lower layer, if not better.
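The two block types can be sketched as follows. To keep the example self-contained, small dense (matrix) layers stand in for the convolutional layers, which is an assumption made purely for illustration, and the function names are hypothetical. The point is the structure: the block output is F(x) plus the shortcut, and the shortcut is either x itself or a projection of x when the dimensions differ.

```python
def relu(vector):
    return [max(0.0, v) for v in vector]


def linear(weights, vector):
    """Multiply a weight matrix (a list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in weights]


def identity_block(x, w1, w2):
    """y = relu(F(x) + x); F must keep the dimension of x."""
    fx = linear(w2, relu(linear(w1, x)))
    return relu([f + s for f, s in zip(fx, x)])


def projection_block(x, w1, w2, w_shortcut):
    """y = relu(F(x) + W_s x); the shortcut is projected so that its
    dimension matches the output of F (the role CONV2D plays in ResNet)."""
    fx = linear(w2, relu(linear(w1, x)))
    shortcut = linear(w_shortcut, x)
    return relu([f + s for f, s in zip(fx, shortcut)])


x = [1.0, 2.0]

# With all-zero weights F(x) is 0, so the identity block passes x through.
zeros_2x2 = [[0.0, 0.0], [0.0, 0.0]]
print(identity_block(x, zeros_2x2, zeros_2x2))  # [1.0, 2.0]

# Projection from 2 to 3 dimensions on the shortcut path.
zeros_3x2 = [[0.0, 0.0]] * 3
zeros_3x3 = [[0.0, 0.0, 0.0]] * 3
w_s = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(projection_block(x, zeros_3x2, zeros_3x3, w_s))  # [1.0, 2.0, 3.0]
```

The zero-weight case shows why a deeper residual network need not do worse than a shallower one: a block that learns nothing still forwards its input unchanged.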

C. Implementation Process Of VGG(Visual Geometry Group)

Below are the steps for the VGG implementation:

- Input: VGG takes an RGB input image of size 224 × 224 pixels.

- Convolutional Layers: In VGG, the convolutional filters are kept as small as possible. The main filter size is 3 × 3, which can still capture the relevant features. Some configurations also use 1 × 1 filters, which act as a linear transformation of the input followed by the ReLU function. VGG uses a fixed stride of 1 pixel, which preserves the spatial resolution.

- Hidden Layers: All VGG networks use the ReLU function in their hidden layers.

- Fully Connected Layers: VGG models have a total of 3 fully connected layers. The first and second have 4096 channels each, and the third has 1000 channels, one for each class.

During training, the convnets are fed a fixed-size 224 × 224 RGB image. The only pre-processing is subtracting the mean RGB value of the training set from each pixel. The image is processed by a stack of convolutional (conv.) layers whose filters have a very small receptive field: 3 × 3, the smallest size that captures the concepts of left/right, up/down, and center. A stack of three such layers has the same effective receptive field as one 7 × 7 layer, with fewer parameters and more non-linearities. In one of the configurations, 1 × 1 convolution filters are also used, which may be thought of as a linear transformation of the input channels (followed by a non-linearity).

For 3 × 3 convolutional layers, the convolution stride and the spatial padding of the layer input are both set to 1 pixel, which guarantees that the spatial resolution is preserved after convolution. Spatial pooling is carried out by five max-pooling layers that follow some of the convolutional layers. Max-pooling is performed over a 2 × 2 pixel window with stride 2. The stack of convolutional layers is followed by three Fully-Connected (FC) layers.
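To make the layer structure concrete, the sketch below counts the weight layers and trainable parameters of a VGG 16 network. The layer list is the published VGG-16 configuration (13 convolutional layers in five groups, each group followed by max-pooling), which is assumed here rather than taken from this paper, and the function name is hypothetical.

```python
# Output channels of each 3 x 3 conv layer; "M" marks a 2 x 2 max-pooling layer.
VGG16_CONFIG = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
                512, 512, 512, "M", 512, 512, 512, "M"]


def count_vgg_parameters(config, in_channels=3, input_size=224,
                         fc_sizes=(4096, 4096, 1000)):
    """Count weights and biases of the conv and fully connected layers."""
    params = 0
    channels, size = in_channels, input_size
    for layer in config:
        if layer == "M":
            size //= 2  # max-pooling halves the spatial resolution
        else:
            params += 3 * 3 * channels * layer + layer  # weights + biases
            channels = layer
    features = channels * size * size  # flattened input to the first FC layer
    for width in fc_sizes:
        params += features * width + width
        features = width
    return params


weight_layers = sum(1 for layer in VGG16_CONFIG if layer != "M") + 3
print(weight_layers)                       # 16
print(count_vgg_parameters(VGG16_CONFIG))  # 138357544
```

Most of the roughly 138 million parameters sit in the first fully connected layer. For a medical task with only a few classes, the last entry of `fc_sizes` would be replaced by the number of classes.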

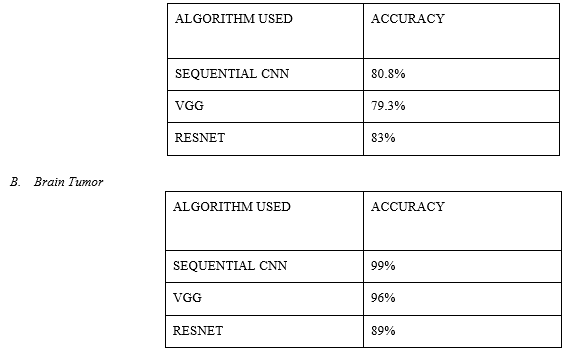

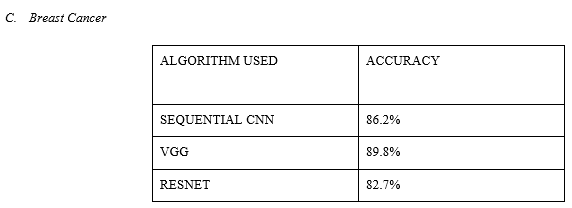

VIII. RESULT ANALYSIS

A. Skin Cancer

Conclusion

To lower death rates in healthcare, it is critical to diagnose lethal diseases at a nascent stage so that patients receive appropriate care. If we can detect such diseases early, we can treat them without having to worry about their severity or complexity. For this reason, we selected three diseases, Skin Cancer, Brain Tumor, and Breast Cancer, and investigated them with different models, namely CNN (Convolutional Neural Network), VGG 16 (Visual Geometry Group), and ResNet (Residual Network), comparing the models to determine which gives the best accuracy. After comparing the models, the best accuracy we obtained was 83% for Skin Cancer with the ResNet model, 99% for Brain Tumor with the Sequential CNN model, and 89.8% for Breast Cancer with the VGG model.

Copyright

Copyright © 2022 Riya Nimje, Shreya Paliwal, Jahanvi Saraf, Prof. Sachin Chavan. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET40932

Publish Date : 2022-03-22

ISSN : 2321-9653

Publisher Name : IJRASET
