Ijraset Journal For Research in Applied Science and Engineering Technology

Detection and Classification of Diabetic Retinopathy Using Deep Learning

Authors: A. K. Nivedha, Rahini. T, Sabrina Banu. M, Sowmiya. V, Sreelakshmi. T

DOI Link: https://doi.org/10.22214/ijraset.2023.51626

Abstract

Diabetic retinopathy (DR) is a leading cause of blindness in diabetic patients. Early detection and classification of DR can facilitate timely treatment and reduce the risk of vision loss. In recent years, deep learning has shown promise in automating the diagnosis of DR. However, the use of high-performance models such as ResNet and DenseNet often requires powerful computing resources and large amounts of data. In this study, we propose a deep learning approach for the detection and classification of DR using MobileNetV2, a lighter and more computationally efficient model. Our method was trained and tested on a large publicly available dataset, achieving an accuracy of 85.28% for DR classification. We also conducted experiments to investigate the importance of different hyperparameters and data augmentation techniques. The results demonstrate the effectiveness of our method in detecting and classifying DR with high accuracy while using fewer computational resources. The proposed method has the potential to be applied in clinical settings to assist ophthalmologists in the early detection and diagnosis of DR.

I. INTRODUCTION

Healthcare has become one of society's top concerns, and early detection and treatment of disease are crucial. Diabetes is a disease in which a lack of insulin raises the level of glucose in the blood. It affects organs such as the retina, heart, and nerves. Diabetic retinopathy (DR) is a complication of diabetes that causes the blood vessels of the retina to swell and to leak fluid and blood. If the disease progresses, the patient can lose sight. Regular retinal screening is therefore necessary so that diabetic patients can be treated at an early stage.

The disease progresses through four stages: mild nonproliferative, moderate nonproliferative, severe nonproliferative, and proliferative. In the first stage, balloon-like swelling appears in small areas of the blood vessels in the retina. In the second stage, moderate nonproliferative retinopathy, some of the blood vessels in the retina become blocked. In the third stage, severe nonproliferative retinopathy, more blood vessels become blocked, so that areas of the retina no longer receive adequate blood flow. Without proper blood flow, the retina cannot grow new blood vessels to replace the damaged ones. The fourth and final stage, proliferative retinopathy, is the advanced stage of the disease. New blood vessels begin to grow in the retina, but they are fragile and abnormal; they can leak blood, which leads to vision loss and possibly blindness.

Diagnosis by visual inspection is prone to error and demands considerable experience and effort. Automated diagnostic methods can save cost and time and can be more effective than manual diagnosis. Recent research on automated DR screening uses deep learning to detect and classify DR. In this study, a pretrained MobileNetV2 model is proposed for classifying fundus images into the four disease stages and the healthy case.

II. EXISTING SYSTEM

The existing model stacks VGG16, spatial pyramid pooling (SPP), and network in network (NiN). VGG16 takes an RGB image of size 224 × 224 as input. The image is passed through a series of convolutional (conv) layers with 3 × 3 receptive fields, followed by a block of three fully connected layers. The conv layers can process inputs of varying sizes: each slides a stack of kernels over its input and produces an output feature map that preserves the spatial arrangement of the receptive-field responses. The fully connected layers, however, require a fixed-length vector, which restricts VGG16 to a fixed input size in both the training and testing phases. A CNN also assumes that the latent space is linearly separable, whereas high-dimensional latent spaces generally contain highly non-linear manifolds. To learn the non-linear behaviour of the data, the NiN is added on top of the SPP layer.
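
To illustrate how a pooling stage such as SPP can turn feature maps of different sizes into a fixed-length vector, the following minimal sketch max-pools a single-channel feature map into a fixed number of bins. It is not the existing system's implementation: the pyramid levels (1, 2, 4), the toy feature maps, and the helper name `spp_max_pool` are assumptions made for illustration.

```python
def spp_max_pool(feature_map, levels=(1, 2, 4)):
    """Max-pool a 2-D feature map into n x n bins for each pyramid level.

    The output length is sum(n * n for n in levels), whatever the input size.
    The levels (1, 2, 4) are an illustrative assumption, not from the paper.
    """
    height, width = len(feature_map), len(feature_map[0])
    pooled = []
    for n in levels:
        for i in range(n):
            # Bin edges: floor for the start, ceil for the end,
            # so every bin covers at least one row.
            r0, r1 = (i * height) // n, -(-((i + 1) * height) // n)
            for j in range(n):
                c0, c1 = (j * width) // n, -(-((j + 1) * width) // n)
                pooled.append(max(feature_map[r][c]
                                  for r in range(r0, r1)
                                  for c in range(c0, c1)))
    return pooled


# Two toy feature maps of different sizes give vectors of the same length.
small = [[r * 5 + c for c in range(5)] for r in range(5)]   # 5 x 5
large = [[r * 9 + c for c in range(9)] for r in range(7)]   # 7 x 9
vec_small = spp_max_pool(small)
vec_large = spp_max_pool(large)
```

Both vectors have 1 + 4 + 16 = 21 entries, which is the property a fully connected layer needs from its input.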

The NiN is a collection of micro-networks called mlpconv layers, each formed by stacking multiple conv layers in the manner of a multilayer perceptron. The stacked multilayer perceptrons encode non-linear features and lead to a high level of abstraction. The parametric ReLU (PReLU) function computes the activation of each “mlpconv” layer. In the last layer, softmax is used as the activation for classification, with categorical cross-entropy as the loss function. Training uses the stochastic gradient descent (SGD) optimizer. In place of this existing system, we adopt a more recent neural network architecture, MobileNetV2, which is lighter and more computationally efficient.
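
The building blocks named above (PReLU activation, softmax output, and an SGD update) can be sketched in a few lines of plain Python. This is only an illustration of the standard definitions; the slope `alpha=0.25`, the learning rate, and all input values are made-up assumptions rather than settings from the existing system.

```python
import math


def prelu(x, alpha=0.25):
    """Parametric ReLU: x for x > 0, alpha * x otherwise.

    alpha is learned during training; 0.25 is only an illustrative value.
    """
    return x if x > 0 else alpha * x


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sgd_step(weights, grads, lr=0.01):
    """One plain SGD update: w <- w - lr * grad."""
    return [w - lr * g for w, g in zip(weights, grads)]


activations = [prelu(v) for v in (-2.0, 0.0, 3.0)]
probs = softmax([1.0, 2.0, 3.0, 0.5, 0.1])  # five classes, scores made up
new_weights = sgd_step([0.5, -0.3], [0.2, -0.1], lr=0.1)
```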

III. OBJECTIVES

The objectives of detecting and classifying the stages of diabetic retinopathy using deep learning with MobileNetV2 are:

- Fast Processing: The primary objective is to provide faster processing while maintaining high accuracy in detection and classification of diabetic retinopathy.

- High Accuracy: The MobileNetV2-based model should achieve high accuracy in detecting and classifying diabetic retinopathy to support efficient diagnosis and treatment.

- Efficient use of Resources: The model should use minimal compute resources, making it suitable for deployment on mobile devices and in resource-constrained settings.

- Scalability: The deep learning model should be scalable to accommodate large datasets and new data as it becomes available.

- Generalizability: The model should be capable of detecting diabetic retinopathy across different patient populations and datasets.

- Interoperability: The model should be interoperable with other healthcare systems to facilitate sharing of patient data and integrated healthcare delivery.

- User-Friendly Interface: The model should have a user-friendly interface that makes it easy for healthcare professionals to use and interpret the results.

- Cost-Effective: The use of deep learning in detecting and classifying diabetic retinopathy should be cost-effective for patients, healthcare providers, and healthcare systems. The use of MobileNetV2 should further reduce the cost by requiring fewer resources.

IV. METHODOLOGY

A. Dataset

The dataset used in this study is an open-source dataset hosted on Kaggle, from the APTOS 2019 Blindness Detection competition. It provides a large set of retinal images taken using fundus photography under a variety of imaging conditions. Clinicians rated each image for the severity of diabetic retinopathy on a scale of 0 to 4: 0 - No_DR, 1 - Mild, 2 - Moderate, 3 - Severe, 4 - Proliferative DR. Like any dataset collected in a real-world environment, it contains noise. Images may contain artifacts or be out of focus, poorly lit, or oversized. Because the images were collected from many different clinics using a variety of imaging equipment over a long period, they vary in many other ways as well. The data used here comprise 5000 images across all stages, of which 80% are assigned to training and 20% to testing. All images are resized to 224 × 224 pixels to fit the model. Table 1 presents the number of images for training, validating, and testing.
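
The 80/20 split described above could be carried out along the following lines. This is a minimal sketch, not the authors' code: splitting per class (stratification), the fixed seed, the helper name `stratified_split`, and the toy labels are all assumptions, since the text states only the ratio.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices), split separately per class.

    Splitting per class keeps every DR grade represented in both sets.
    Stratification is an assumption; the paper gives only the 80/20 ratio.
    """
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


# Toy labels on the 0-4 grading scale (made-up counts: 10 images per grade).
toy_labels = [grade for grade in range(5) for _ in range(10)]
train_idx, test_idx = stratified_split(toy_labels)
```

With the toy labels, 40 indices go to training and 10 to testing, two from each grade.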

B. Preprocessing

Only 193 severe cases and 370 mild cases are available, far fewer than for the other classes. A model trained on these data as they stand would detect the No_DR, moderate, and proliferative cases much better than the severe and mild ones, so overall accuracy could be high while performance remains unbalanced across the classes. To overcome this problem, data augmentation techniques such as shifting, rotating, and zooming are applied so that accuracy stays balanced across the stages of the disease, all of which are equally important. Augmentation also helps the model generalize and avoid overfitting.
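
As a rough illustration of the three augmentations named above, the sketch below applies a shift, a rotation, and a zoom to a tiny image stored as nested lists. It is deliberately simplified and is not the pipeline used in the study: the rotation is restricted to 90 degrees, the zoom to an integer factor, and the function names and the 4 × 4 test image are hypothetical.

```python
def shift_right(image, pixels, fill=0):
    """Shift every row to the right, padding the left edge with `fill`."""
    return [[fill] * pixels + row[:len(row) - pixels] for row in image]


def rotate_90(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def zoom_center(image, factor=2):
    """Zoom in: crop the centre, then upscale with nearest-neighbour sampling."""
    height, width = len(image), len(image[0])
    crop_h, crop_w = height // factor, width // factor
    top, left = (height - crop_h) // 2, (width - crop_w) // 2
    return [[image[top + r // factor][left + c // factor]
             for c in range(crop_w * factor)]
            for r in range(crop_h * factor)]


# A 4 x 4 toy "image" whose pixel values are 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
shifted = shift_right(image, 1)
rotated = rotate_90(image)
zoomed = zoom_center(image)
```

Each call returns a new image of the same size, so one original from a rare class can contribute several distinct training samples.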

C. Convolutional Neural Network

Deep learning (DL) is a branch of machine learning that uses hierarchical layers of non-linear processing for unsupervised feature learning as well as for pattern classification. DL is now one of the most effective methods for computer-aided medical diagnosis. The convolutional neural network (CNN) is well suited to computer vision tasks and has made impressive progress in many areas, especially medical diagnostics.

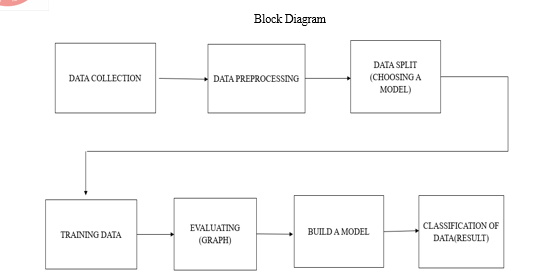

V. BLOCK DIAGRAM DESCRIPTION

A. Data Collection

The data are collected from the Kaggle dataset. The images have different resolutions, and therefore different features, so they cannot be used directly as input. Each class should also contain the same number of images. For this purpose we apply augmentation: each image is cropped, flipped, rotated, and so on to produce additional, distinct images. Augmentation thus increases the number of images, and the resulting collection forms the dataset.

B. Pre-processing

The resulting dataset then undergoes pre-processing, which filters the data through noise removal, cropping, and similar steps (to bring the images to a common resolution). This procedure yields a clean dataset for processing.

C. Choose a Model

Training on the data yields a model. Here we use MobileNetV2. Compared with VGG-16 (VGG stands for the Visual Geometry Group at Oxford), this model achieves good accuracy with very low training loss. MobileNetV2 is a convolutional neural network that is 53 layers deep. A pretrained version of the network, trained on more than a million images from the ImageNet database, can be loaded. MobileNet uses depthwise separable convolution as its basic unit, which consists of two layers: a depthwise convolution and a pointwise convolution.
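
The saving that depthwise separable convolution brings can be seen by counting weights. The sketch below compares a standard convolution with a depthwise plus pointwise pair; the layer sizes (3 × 3 kernel, 32 input channels, 64 output channels) are illustrative assumptions, not values from this study, and biases are ignored.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in a standard k x k convolution (biases ignored)."""
    return kernel * kernel * c_in * c_out


def separable_conv_params(kernel, c_in, c_out):
    """Weights in a depthwise (k x k per channel) plus pointwise (1 x 1) pair."""
    depthwise = kernel * kernel * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


# Illustrative layer sizes (assumed, not taken from the paper).
standard = standard_conv_params(3, 32, 64)
separable = separable_conv_params(3, 32, 64)
reduction = standard / separable
```

For these sizes the standard layer has 18432 weights and the separable pair 2336, roughly an eightfold reduction.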

D. Evaluation

The software learns from the training data, and the trained model is then evaluated. Test data are given as input, which yields the accuracy and the loss. We take an accuracy above 80% as the target. Accuracy is reported as a decimal (for example, 0.8 means an accuracy of 80%). This stage also produces plots of the accuracy and loss of the model.
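
The two evaluation quantities can be computed as in the following minimal sketch. The labels and probabilities are made up for illustration, and the helper names are hypothetical; the loss shown is categorical cross-entropy, which is an assumption here because this section does not name the loss used with MobileNetV2.

```python
import math


def accuracy(true_labels, predicted_labels):
    """Fraction of correct predictions, as a decimal between 0 and 1."""
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def categorical_cross_entropy(true_labels, probabilities, eps=1e-12):
    """Mean negative log-probability assigned to the true class."""
    losses = [-math.log(max(probs[t], eps))
              for t, probs in zip(true_labels, probabilities)]
    return sum(losses) / len(losses)


# Made-up example: five test images, four of them classified correctly.
y_true = [0, 2, 2, 4, 1]
y_pred = [0, 2, 2, 4, 3]
acc = accuracy(y_true, y_pred)

# Made-up two-class probabilities for two images whose true classes are 0 and 1.
loss = categorical_cross_entropy([0, 1], [[0.8, 0.2], [0.5, 0.5]])
```

Here `acc` is 0.8, which corresponds to the 80% reading described in the text.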

E. Build a Model

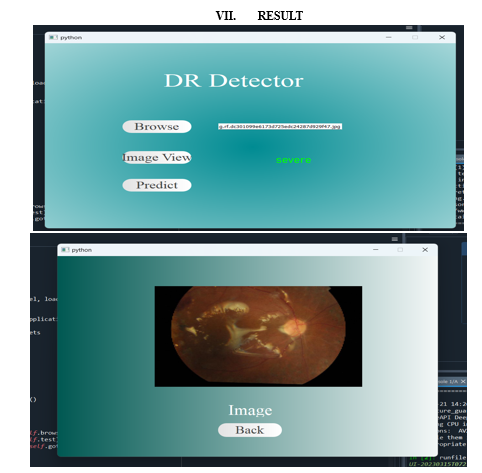

This step yields an accurate model that can be applied to new data. New images are given to the model for testing; because it has already been trained, it can now predict their class. The output is the stage of diabetic retinopathy, using the labels Mild, Moderate, No_DR (normal), Proliferate, and Severe. The label with the maximum score is displayed as the stage. For example, if the graph shows a maximum of 0.8 for Moderate, then Moderate is displayed and the other labels are rejected.
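
Picking the displayed stage amounts to taking the label with the highest score. A minimal sketch follows, assuming the model returns one score per label in the order listed in the text; that ordering, the helper name `predict_stage`, and the scores are assumptions.

```python
# Label order as listed in the text (assumed to match the model's output order).
LABELS = ["Mild", "Moderate", "No_DR", "Proliferate", "Severe"]


def predict_stage(probabilities, labels=LABELS):
    """Return the label with the highest score, together with that score."""
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return labels[best], probabilities[best]


# Made-up model output in which "Moderate" scores 0.8.
stage, score = predict_stage([0.05, 0.8, 0.1, 0.03, 0.02])
```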

VI. SOFTWARE REQUIREMENT

A. Anaconda Navigator

Anaconda Navigator is a desktop graphical user interface (GUI) included in Anaconda® Distribution that allows you to launch applications and manage conda packages, environments, and channels without using command line interface (CLI) commands. Navigator can search for packages on Anaconda.org or in a local Anaconda Repository. It is available for Windows, macOS, and Linux.

B. Why do we use Anaconda Navigator?

In order to run, many scientific packages depend on specific versions of other packages. Data scientists often use multiple versions of many packages and use multiple environments to separate these different versions. The CLI program conda is both a package manager and an environment manager. This helps data scientists ensure that each version of each package has all the dependencies it requires and works correctly. Navigator is a graphical interface that enables you to work with packages and environments without needing to type conda commands in a terminal window. You can use it to find the packages you want, install them in an environment, run the packages, and update them, all inside Navigator.

C. Python

Python is a powerful programming language that is easy to read and write and, with Raspberry Pi, lets you connect your project to the real world. Python syntax is very clean, with an emphasis on readability, and uses standard English keywords. To begin, open Anaconda Navigator from the desktop.

VIII. FUTURE WORK

A handheld fundus camera can be developed, with cloud connectivity. The images can be uploaded to the cloud where the algorithm would process the image and test it on the model to give the classification. Thus the time for preliminary diagnosis can be reduced.

Conclusion

The incidence of diabetes is increasing worldwide, and complications of diabetic retinopathy are also increasing. The stages of diabetic retinopathy are defined by the type of damage that appears on the retina. The disorder threatens the vision of diabetic patients if it is detected only in the late stages. Therefore, the detection and treatment of diabetic retinopathy in the early stages is crucial to reduce the risk of blindness. As the incidence of diabetic retinopathy rises, manual diagnosis is no longer sufficiently effective. Automating the diagnosis with a computer-aided screening system saves effort, time, and cost. Most researchers have used CNNs to detect and classify diabetic retinopathy images because of their effectiveness. In this study, the MobileNetV2 model is proposed for classifying the stages of diabetic retinopathy, with 93.89% accuracy and high reliability. The cross-validation was repeated three times to ensure that the model is not affected by different datasets. A heat map is also given to help experts evaluate the effects of the different stages of the disease. The results show that the proposed model and techniques can be used to detect and classify diabetic retinopathy using deep learning. In the future, multiple datasets should be combined to balance the classes and improve accuracy and precision.

References

[1] Taylor R, Batey D. Handbook of retinal screening in diabetes: diagnosis and management. Second ed. John Wiley & Sons, Ltd Wiley-Blackwell; 2012.

[2] American Academy of Ophthalmology. What is diabetic retinopathy? [Online]. Available: https://www.aao.org/eye-health/diseases/what-is-diabetic-retinopathy

[3] Griswold Home Care, Delivered with heart [Online]. Available: https://www.griswoldhomecare.com/blog/2015/january/the-4-stages-of-diabeticretinopathy-what-you-ca/

[4] Liu, Y.P.; Li, Z.; Xu, C.; Li, J.; Liang, R. Referable diabetic retinopathy identification from eye fundus images with weighted path for convolutional neural network. Artif. Intell. Med. 2019, 99, 101694.

[5] Das, S.; Kharbanda, K.; Suchetha, M.; Raman, R.; Dhas, E. Deep learning architecture based on segmented fundus image features for classification of diabetic retinopathy. Biomed. Signal Process. Control 2021, 68, 102600.

[6] Kassani, S.H.; Kassani, P.H.; Khazaeinezhad, R.; Wesolowski, M.J.; Schneider, K.A.; Deters, R. Diabetic retinopathy classification using a modified xception architecture. In Proceedings of the 2019 IEEE International Symposium on Signal Processing and Information Technology (ISSPIT), Ajman, United Arab Emirates, 10–12 December 2019; pp. 1–6.

[7] Mobeen-Ur-Rehman; Khan, S.H.; Abbas, Z.; Danish Rizvi, S.M. Classification of Diabetic Retinopathy Images Based on Customised CNN Architecture. In Proceedings of the 2019 Amity International Conference on Artificial Intelligence, AICAI 2019, Dubai, United Arab Emirates, 4–6 February 2019; pp. 244–248.

[8] Decenciere, E.; Zhang, X.; Cazuguel, G.; Lay, B.; Cochener, B.; Trone, C.; Gain, P.; Ordonez, R.; Massin, P.; Erginay, A.; et al. Feedback on a publicly distributed image database: The messidor database. Image Anal. Stereol. 2014, 33, 231–234.

[9] Zhang, W.; Zhong, J.; Yang, S.; Gao, Z.; Hu, J.; Chen, Y.; Yi, Z. Automated identification and grading system of diabetic retinopathy using deep neural networks. Knowl. Based Syst. 2019, 175, 12–25.

[10] Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the Inception Architecture for Computer Vision. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 2818–2826.

Copyright

Copyright © 2023 A. K. Nivedha, Rahini. T, Sabrina Banu. M, Sowmiya. V, Sreelakshmi. T. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET51626

Publish Date : 2023-05-05

ISSN : 2321-9653

Publisher Name : IJRASET
