Ijraset Journal For Research in Applied Science and Engineering Technology

Driver Drowsiness Detection System using Raspberry Pi

Authors: Suraj Jadhav, Sanket Jagdale, Niraj Jangale, Shital Raut

DOI Link: https://doi.org/10.22214/ijraset.2022.48137

Abstract

This paper presents a real-time driver drowsiness detection system for driving safety. Based on computer vision techniques, the driver's face is located in color video captured in a car. Face detection is then used to locate the regions of the driver's eyes, which serve as templates for eye tracking in subsequent frames. Finally, the tracked eye images are used for drowsiness detection in order to generate warning alarms. The proposed approach has three phases: face detection, eye detection, and drowsiness detection. The role of image processing is to recognize the driver's face and then extract the image of the driver's eyes for drowsiness detection. The Haar face detection algorithm takes captured image frames as input and produces the detected face as output. Next, CHT is employed to track the eyes within the detected face. If the eyes remain closed for a predefined period of time, the driver's eyes are considered closed and an alarm is triggered to alert the driver.

I. INTRODUCTION

Drowsy driving is one of the key causes of fatal road accidents. A recent study shows that one out of five road accidents is caused by drowsy driving, roughly 21 percent of road accidents, and this percentage is increasing each year according to the Global Status Report on Road Safety 2015, which is based on data from 180 countries. This highlights the fact that, across the world, the total number of road traffic deaths due to driver drowsiness is very high. Driver fatigue, drink-driving, and carelessness are emerging as major reasons behind such road accidents. Many lives and families are affected by this across various countries. All this has led to the development of Intelligent Driver Assistance Systems. Real-time drowsy driving detection is one of the best possible measures that can be implemented to make drivers aware of drowsy driving conditions. Such a driver behavioral state detection system can help catch the driver's drowsy condition early and can possibly avoid mishaps. Heavy traffic, a growing automotive population, adverse driving conditions, tight commute time requirements, and workloads are a few major reasons behind such fatigue.

II. LITERATURE SURVEY

Several studies have approached driver drowsiness detection in a variety of ways.

In [1], the authors propose a system that uses machine learning techniques to predict driver states and emotions, providing information that improves road safety. The paper focuses on developing a contactless system that can detect drowsiness and issue a timely warning. The system monitors the driver's eyes with a camera, so that algorithms can detect early signs of driver fatigue and help avoid accidents. When signs of fatigue are detected, the seatbelt sounds and vibrates to alert the driver. Warnings are disabled manually, not automatically. The paper uses an algorithm that is faster than PERCLOS. The system detects driver fatigue by processing the eye area. After an image is captured, the first stage of processing is face detection.

Tian and Qin [2] build a system that checks the driver's eye state. Their system uses the Cb and Cr components of the YCbCr color space. It finds faces with a vertical projection function and eyes with a horizontal projection function. Once the eye locations are identified, the system uses a complexity function to compute the eye state.

Hong et al. [4] define a system that detects eye state in real time to identify driver drowsiness. Face region detection is based on an optimized version of the method of Jones and Viola [5]. The eye area is obtained by horizontal projection. Finally, a new complexity function with dynamic thresholds is used to identify the eye state.

The authors of [10] propose a system called DriCare, which uses video images to detect signs of driver fatigue, e.g. yawning, blinking, and the duration of eye closure, without requiring any device worn on the body. To address the shortcomings of previous algorithms, they introduce a new face tracking algorithm that improves tracking accuracy. Furthermore, they develop a new face region detection method based on 68 key points. These face regions are then used to assess the driver's condition. By combining eye and mouth features, DriCare can issue fatigue warnings to the driver. Experimental results showed that DriCare achieved an accuracy of about 92%.

III. METHODOLOGY

A. Hardware

- Raspberry Pi

- Webcam

- Speaker

- Power supply cable

B. Software

- PuTTY

- balenaEtcher

- Advanced IP Scanner

- VNC Viewer

The aim of this project is to ensure proper social distancing among people. This can be achieved by limiting the number of individuals in shops, public places, and similar venues, and by enforcing a minimum distance between people. The system uses infrared sensors to detect vacancy or occupancy, which helps count the total number of individuals in a room, and an ultrasonic sensor to measure distance. If either limit is exceeded, a buzzer sounds an alert at the location. The Arduino IDE provides readings to a Firebase database, and Firebase is also used to store user details received from the website. Because the website is connected to Firebase, it shows the user details of a place, such as the total number of individuals present and how well the social distancing norm is being followed. This helps users decide from home which places are safe to visit.

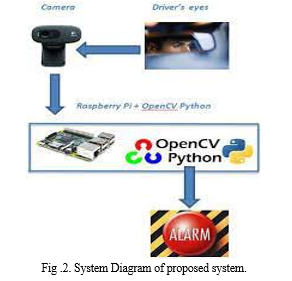

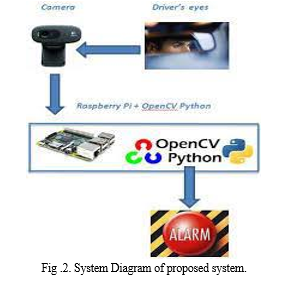

IV. SYSTEM ARCHITECTURE

In the proposed system shown in Fig. 1, the main focus is on fatigue detection and on speeding up data processing. The system counts the number of consecutive frames in which the eyes are closed. When this number exceeds a threshold, an alert indicating that drowsiness has been detected is shown on the display. The system should be able to detect drowsiness regardless of the driver's skin tone or complexion, whether the driver is wearing glasses, or the darkness inside the vehicle. All of these goals were achieved by choosing a closed-eye detection approach with an appropriate classifier in OpenCV.
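
The frame threshold can be related to the "predefined period of time" mentioned in the abstract. The following is a minimal sketch, assuming a roughly constant camera frame rate; the function name and the example values (10 frames per second, a 1.5-second limit) are illustrative assumptions, not figures reported in this work.

```python
import math


def closed_frame_threshold(closed_seconds: float, fps: float) -> int:
    """Number of consecutive closed-eye frames that spans `closed_seconds`."""
    if closed_seconds <= 0 or fps <= 0:
        raise ValueError("closed_seconds and fps must be positive")
    # Round up so the alert never fires before the full duration has elapsed.
    return math.ceil(closed_seconds * fps)


# Hypothetical values: a webcam delivering 10 frames per second and a
# 1.5-second closure limit.
threshold = closed_frame_threshold(1.5, 10)
```

On a Raspberry Pi the achieved frame rate depends on resolution and processing load, so it is probably safer to measure it on the device than to rely on the camera's nominal rate.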

In this algorithm, the driver's image is first captured by the camera for processing. OpenCV first performs face detection on the driver's image, then eye detection. The eye detector responds only when the eyes are open. The algorithm then counts the number of open eyes in each frame and evaluates a criterion for detecting drowsiness. If the criterion is met, the driver is flagged as drowsy. Indicators and buzzers linked to the system then intervene to correct the driver's behavior. This system requires a face classifier and an eye classifier. The Haar cascade classifier files bundled with OpenCV contain various classifiers for face and eye detection. Faces are searched for and detected in a single frame using the built-in OpenCV XML file "haarcascade_frontalface_".
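
The face-then-eye detection step might be sketched as follows. This is an illustration under stated assumptions, not the authors' code: the frontal-face file name `haarcascade_frontalface_default.xml` is an assumption (the name is not given in full above), the eye cascade is the eyeglasses-tolerant one shipped with OpenCV, the `scaleFactor` and `minNeighbors` values are common defaults rather than tuned settings, and the helper names are hypothetical. For simplicity only the first detected face is examined.

```python
def load_detectors():
    """Load OpenCV's bundled Haar cascades for faces and open eyes."""
    # Imported here so count_open_eyes works with any detector objects,
    # even on a machine where OpenCV is not installed.
    import cv2

    # Assumed file name: the frontal-face cascade is not named in full above.
    face = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml")
    eyes = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_eye_tree_eyeglasses.xml")
    return face, eyes


def count_open_eyes(gray, face_detector, eye_detector):
    """Return the number of open eyes (0, 1 or 2) found in a grayscale frame."""
    faces = face_detector.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
    if len(faces) == 0:
        return 0  # no face, so no eyes can be reported
    x, y, w, h = faces[0]
    # Search for eyes only inside the face rectangle.
    face_region = gray[y:y + h, x:x + w]
    eyes = eye_detector.detectMultiScale(
        face_region, scaleFactor=1.1, minNeighbors=5)
    # The eye cascade responds to open eyes; cap spurious extras at two.
    return min(len(eyes), 2)
```

With OpenCV installed, a frame read from `cv2.VideoCapture(0)` and converted with `cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)` can be passed as `gray`, together with the two objects returned by `load_detectors()`.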

The haarcascade_eye_tree_eyeglasses.xml classifier is used to detect open eyes within the detected face. The system does not recognize closed eyes. Face detection and open-eye detection are performed on every frame of the driver's face captured by the camera. A variable, Eyetotal, stores the number of open eyes (0, 1, or 2) detected in each frame. A second variable, the drowsiness count, stores the number of consecutive frames in which open eyes were not detected. If Eyetotal falls below its threshold, the drowsiness count is incremented. For an ordinary blink, the drowsiness count rises only to 1; the criterion for drowsiness is met once the drowsiness count reaches its threshold.
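
The counting rule can be sketched in a few lines of Python. The two threshold values are not stated above, so the defaults used here (fewer than 2 open eyes counts as closed, alert after 4 consecutive such frames) are assumptions chosen only for illustration, as are the class and field names.

```python
from dataclasses import dataclass


@dataclass
class DrowsinessCounter:
    """Tracks consecutive frames in which too few open eyes were detected."""
    min_open_eyes: int = 2   # assumed: fewer than 2 open eyes counts as closed
    alert_frames: int = 4    # assumed: alert after 4 consecutive closed frames
    count: int = 0

    def update(self, eye_total: int) -> bool:
        """Feed one frame's open-eye count; return True if the driver is drowsy."""
        if eye_total < self.min_open_eyes:
            self.count += 1  # eyes not seen open in this frame
        else:
            self.count = 0   # eyes open again, so the run is broken
        return self.count >= self.alert_frames


counter = DrowsinessCounter()
# A one-frame blink followed by a sustained closure.
eye_totals = [2, 2, 0, 2, 0, 0, 0, 0, 2]
alerts = [counter.update(n) for n in eye_totals]
```

Resetting the count whenever the eyes are seen open is what keeps a normal blink from accumulating toward the alert; only an unbroken run of closed-eye frames reaches the threshold.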

As can be seen, each eye is represented by 6 landmarks.

V. LIMITATIONS

Dependence on ambient light: poor lighting conditions can cause errors when detecting the face.

Distance of the camera from the driver's face: the distance between the driver and the camera must be within a certain range so that the webcam is able to detect movements of the face.

Use of spectacles: this is a common problem for camera-based systems, as spectacles sometimes cause errors or an imperfect scan of the face.

Multiple faces: the driver is often not alone in the vehicle, and the camera can sometimes pick up the faces and movements of other passengers, which can cause a false warning.
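
One common heuristic for the multiple-face case, which was not evaluated in this work, is to keep only the largest detected face on the assumption that the driver sits closest to the camera. A minimal sketch, with a hypothetical helper name and made-up bounding boxes:

```python
from typing import Optional, Sequence, Tuple

# (x, y, width, height), the layout a Haar face detector typically returns.
Box = Tuple[int, int, int, int]


def pick_driver_face(faces: Sequence[Box]) -> Optional[Box]:
    """Return the largest bounding box, or None when no face was detected."""
    if len(faces) == 0:
        return None
    return max(faces, key=lambda box: box[2] * box[3])


# Hypothetical detections: a passenger in the back seat and the driver up close.
faces = [(300, 40, 60, 60), (80, 50, 180, 180)]
driver = pick_driver_face(faces)
```

This assumption can fail, for example when a front-seat passenger leans toward the camera, so restricting detection to a fixed region of the frame may be a useful complement.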

VI. FUTURE SCOPE

Moving forward, there are several things we can do to further improve our results and fine-tune the models. First, we want to incorporate the distance between facial landmarks to account for any movement by the subject in the video. Second, we would like to update the parameters of our more complex models in order to achieve better results. Third, we would like to collect our own training data from a larger sample of participants while including new, distinct signals of drowsiness.

VII. ACKNOWLEDGMENT

The authors are very grateful to Prof. Shital Raut for taking a keen interest in this project and guiding us throughout. The authors are also grateful for the insightful comments provided by anonymous peer reviewers at books and texts. The generosity and expertise of all have greatly improved this study and saved us from many errors; those that inevitably remain are entirely our responsibility.

VIII. RESULTS

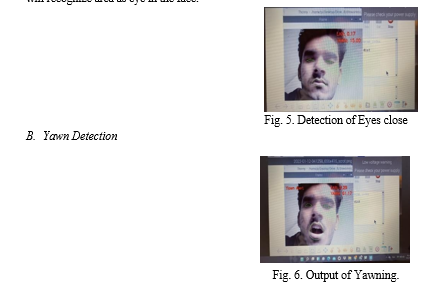

A. Eye Detection

The aim is to identify the eyes in the driver's face using a shape predictor, as shown in Fig. 5. The eye detector was loaded with Haar detectors, which detect eyes by describing contrast between image regions. By measuring the distance between the eye area and the ear area, the detector recognizes a region of the face as an eye.

Conclusion

This document details how we designed and implemented a driver assistance system using multiple compatible and cost-effective hardware components and software algorithms. The main purpose of this work is to ensure the safety of drivers and passengers traveling in vehicles. There is a lot of research in this area, but our work emphasizes cost, reliability, and ease of implementation. The notification system used is compact and suitable for everyday use. All of this was possible because of the underlying foundation of IoT. The components used in this prototype are inexpensive and can be used in difficult conditions. The use of advanced technology adds something new to our project that many researchers have missed in the past.

References

[1] V. B. Navya Kiran, Raksha R, Anisoor Rahman, Varsha K N, and Dr. Nagamani N P, "Driver Drowsiness Detection," 2020 IEEE International RF and Microwave Conference (RFM), NCAIT – 2020 (Volume 8 – Issue 15), International Journal of Engineering Research & Technology, 21-09-2020.

[2] Z. Tian and H. Qin, "Real-time Driver's Eye State Detection," in Proceedings of the IEEE International Conference on Vehicular Electronics and Safety, October 2005.

[3] P. Somaldo, F. A. Ferdiansyah, G. Jati and W. Jatmiko, "Developing Smart COVID-19 Social Distancing Surveillance Drone using YOLO Implemented in Robot Operating System simulation environment," 2020 IEEE 8th R10 Humanitarian Technology Conference (R10-HTC), 2020, pp. 1-6, doi: 10.1109/R10-HTC49770.2020.9357040.

[4] Z. Tian and H. Qin, "Real-time Driver's Eye State Detection," in Proceedings of the IEEE International Conference on Vehicular Electronics and Safety, October 2005.

[5] T. Hong, H. Qin and Q. Sun, "An Improved Real Time Eye State Identification System in Driver Drowsiness Detection," in Proceedings of the IEEE International Conference on Control and Automation, Guangzhou, China, 2007.

[6] A. I. Kyritsis and M. Deriaz, "A Queue Management Approach for Social Distancing and Contact Tracing," 2020 Third International Conference on Artificial Intelligence for Industries (AI4I), 2020, pp. 66-68, doi: 10.1109/AI4I49448.2020.00022.

[7] S. Rajarajeshwari, S. Archana, S. R. Boda, R. B. Sreelakshmi, K. Venkateswaran and N. Sridhar, "A Smart Image Processing System for Hall Management including Social Distancing - 'SoDisCop'," 2020 Third International Conference on Smart Systems and Inventive Technology (ICSSIT), 2020, pp. 1213-1219, doi: 10.1109/ICSSIT48917.2020.9214262.

[8] T. N. Anand Reddy, N. Deepa Ch and V. Padmaja, "Social Distance Alert System to Control Virus Spread using UWB RTLS in Corporate Environments," 2020 IEEE International Conference on Advent Trends in Multidisciplinary Research and Innovation (ICATMRI), 2020, pp. 1-6, doi: 10.1109/ICATMRI51801.2020.9398453.

[9] J. F. Moore, A. Carvalho, G. A. Davis, Y. Abulhassan and F. M. Megahed, "Seat Assignments with Physical Distancing in Single-Destination Public Transit Settings," in IEEE Access, vol. 9, pp. 42985-42993, 2021, doi: 10.1109/ACCESS.2021.3065298.

[10] W. Deng and R. Wu, "Real-Time Driver-Drowsiness Detection System Using Facial Features," Volume 4, 2016, IEEE Transactions and Journals.

[11] Y. Kobayashi, Y. Taniguchi, Y. Ochi and N. Iguchi, "A System for Monitoring Social Distancing Using Microcomputer Modules on University Campuses," 2020 IEEE International Conference on Consumer Electronics - Asia (ICCE-Asia), 2020, pp. 1-4, doi: 10.1109/ICCE-Asia49877.2020.9277423.

[12] C. C. Liu, S. G. Hosking, and M. G. Lenné, "Predicting driver drowsiness using vehicle measures: Recent insights and future challenges," Journal of Safety Research, vol. 40, no. 4, pp. 239-245, 2009.

[13] P. M. Forsman, B. J. Vila, R. A. Short, C. G. Mott, and H. P. Van Dongen, "Efficient driver drowsiness detection at moderate levels of drowsiness," Accident Analysis & Prevention, vol. 50, pp. 341-350, 2013.

[14] S. Otmani, T. Pebayle, J. Roge, and A. Muzet, "Effect of driving duration and partial sleep deprivation on subsequent alertness and performance of car drivers," Physiology & Behavior, vol. 84, no. 5, pp. 715-724, 2005.

[15] P. Thiffault and J. Bergeron, "Monotony of road environment and driver fatigue: a simulator study," Accident Analysis & Prevention, vol. 35, no. 3, pp. 381-391, 2003.

[16] E. Vural, M. Cetin, A. Ercil, G. Littlewort, M. Bartlett, and J. Movellan, "Drowsy driver detection through facial movement analysis," in International Workshop on Human-Computer Interaction, 2007, pp. 6-18: Springer.

[17] T. P. Nguyen, M. T. Chew and S. Demidenko, "Eye tracking system to detect driver drowsiness," 2015 6th International Conference on Automation, Robotics and Applications (ICARA), Queenstown, 2015, pp. 472-477.

Copyright

Copyright © 2022 Suraj Jadhav, Sanket Jagdale, Niraj Jangale, Shital Raut. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET48137

Publish Date : 2022-12-14

ISSN : 2321-9653

Publisher Name : IJRASET
