Ijraset Journal For Research in Applied Science and Engineering Technology

Essence of Machine Learning in Google Self Driving Cars

Authors: Ananya Mishra, Ms. Sonia Ahlawat

DOI Link: https://doi.org/10.22214/ijraset.2023.55695

Abstract

Human inventiveness continually produces new technology that makes everyday life simpler, and algorithms are what turn many of these ideas into reality. Even attempts that fail at first can eventually succeed. The autonomous car, in which the passenger simply sits back while the technology does the driving, was long one such failed attempt. Although the concept is still new, anticipation for these vehicles is building around the world. This paper discusses the essence of machine learning and its potential role in shaping the future. Autonomous vehicles are developing rapidly, and Google is quickly becoming a major player in the field. The paper also discusses the implications of these developments for both the automotive and technology industries.

I. INTRODUCTION

Machine learning is an application of artificial intelligence (AI) in which a system learns from data and experience and improves over time without being explicitly programmed for each task. The success of autonomous vehicles depends on AI: machine learning lets these vehicles collect data from cameras and sensors and analyze it. The vehicle continuously captures image data, processes it to distinguish the objects in its path, and generates predictions accordingly. The essential tasks for a self-driving car are object detection, object identification or recognition, object classification, object localisation, and movement prediction. The most crucial component of Google's self-driving cars is their LiDAR (Light Detection and Ranging) technology, which works alongside cameras and other sensors to make driverless operation possible. The car is controlled by Google's own software, referred to as Google Chauffeur.

The project was led by Google engineer Sebastian Thrun, a co-inventor of Google Street View and former director of the Stanford Artificial Intelligence Laboratory. Thrun's Stanford team developed the robotic vehicle Stanley, which won the 2005 DARPA Grand Challenge and its US$2 million prize from the US Department of Defense. After Google lobbied for regulations permitting robotic cars, Nevada passed a law on June 29, 2011, allowing autonomous vehicles to operate in the state. The law took effect on March 1, 2012, and in May 2012 the Nevada Department of Motor Vehicles issued the first license for an autonomous vehicle to a Toyota Prius modified with Google's experimental driverless technology. On March 28, 2012, Google posted a YouTube video showing California resident Steve Mahan, who has lost 95% of his vision, being driven around in Google's self-driving Toyota Prius. In August 2012, the team announced that it had completed about 500,000 km (more than 300,000 miles) of accident-free autonomous driving, typically had about 12 cars on the road at once, and had begun testing with a single driver rather than a pair. In May 2014, Google announced a new prototype of its driverless vehicle that had neither a steering wheel nor pedals. In December of the same year, it unveiled a fully functional prototype that it intended to test on San Francisco Bay Area roads starting in 2015.

II. LEARNINGS

Supervised learning works with labeled data: the algorithm is trained on pairs of inputs and labels and uses that training to label new inputs. If, for example, a batch of car images is labeled "heavy vehicles" and a set of bicycle images is labeled "light vehicles", a supervised algorithm should learn to recognise these classes in unlabeled images, even when many other objects are present. Unsupervised learning, on the other hand, works with unlabeled data: large amounts of data are loaded into the machine, which uses algorithms to determine how features of the data relate to one another and to find patterns it can recognise. For instance, without being given any labels, the algorithm can determine that images of cars and images of bikes contain considerably different information, which allows the two sets of images to be separated into distinct groups.
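The difference between the two settings can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the two-number feature vectors (vehicle length in metres, wheel count) are invented, and a nearest-centroid classifier and a two-cluster k-means stand in for whatever models a real system would use on image data.

```python
from math import dist

# Hypothetical, hand-made features per image: (length in metres, wheel count).
# The labels follow the example in the text.
labeled = [
    ((4.5, 4), "heavy vehicles"),
    ((4.2, 4), "heavy vehicles"),
    ((1.8, 2), "light vehicles"),
    ((1.7, 2), "light vehicles"),
]


def mean(points):
    """Component-wise average of a list of equal-length tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def fit_centroids(examples):
    """Supervised: labels are given, so average the features of each label."""
    groups = {}
    for features, label in examples:
        groups.setdefault(label, []).append(features)
    return {label: mean(pts) for label, pts in groups.items()}


def predict(centroids, features):
    """Assign the label whose centroid is closest to the new sample."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


def two_means(points, iterations=10):
    """Unsupervised: no labels; split the points into two similar groups."""
    centers = [points[0], points[-1]]  # naive initialisation
    clusters = [[], []]
    for _ in range(iterations):
        clusters = [[], []]
        for p in points:
            nearest = min((0, 1), key=lambda k: dist(centers[k], p))
            clusters[nearest].append(p)
        centers = [mean(c) if c else centers[k] for k, c in enumerate(clusters)]
    return clusters


centroids = fit_centroids(labeled)
print(predict(centroids, (4.0, 4)))            # uses the learned labels
print(two_means([f for f, _ in labeled]))      # groups without any labels
```

The supervised model can name the class of a new sample because it saw the names during training; the unsupervised model only reports that two groups exist and leaves naming them to a person.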

III. ALGORITHMS

Google's self-driving car uses "AdaBoost" (Adaptive Boosting), one of the key machine learning algorithms. It is a decision matrix algorithm, that is, one that combines several learning models for classification or regression so that they cooperate and compensate for one another's errors. It evaluates how well each component classifier or regressor predicts the correct output and weights it accordingly, giving more attention in each round to the examples that earlier models got wrong. In this way AdaBoost combines a number of weak classifiers into a single strong classifier.
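As a rough illustration of how weak classifiers are combined, the following Python sketch implements the standard discrete AdaBoost procedure with one-dimensional decision stumps as the weak learners. It is a sketch under stated assumptions, not the code used in any vehicle: the function names, the stump learner, and the toy data are all invented for this example.

```python
import math


def stump_predict(x, threshold, polarity):
    """Weak classifier: predicts `polarity` at or above the threshold."""
    return polarity if x >= threshold else -polarity


def train_stump(xs, ys, weights):
    """Return (weighted error, threshold, polarity) of the best stump."""
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            error = sum(
                w for w, x, y in zip(weights, xs, ys)
                if stump_predict(x, threshold, polarity) != y
            )
            if best is None or error < best[0]:
                best = (error, threshold, polarity)
    return best


def adaboost(xs, ys, rounds=3):
    """Train an ensemble of stumps; labels in ys must be +1 or -1."""
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []  # list of (alpha, threshold, polarity)
    for _ in range(rounds):
        error, threshold, polarity = train_stump(xs, ys, weights)
        error = min(max(error, 1e-10), 1 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1 - error) / error)  # vote of this stump
        ensemble.append((alpha, threshold, polarity))
        # Raise the weight of misclassified samples, lower the rest.
        weights = [
            w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
            for w, x, y in zip(weights, xs, ys)
        ]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def predict(ensemble, x):
    """Strong classifier: sign of the alpha-weighted vote of all stumps."""
    score = sum(a * stump_predict(x, t, p) for a, t, p in ensemble)
    return 1 if score >= 0 else -1


# Toy data that no single threshold can separate.
xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]
model = adaboost(xs, ys, rounds=3)
print([predict(model, x) for x in xs])
```

On this toy data the best single stump still misclassifies a third of the samples, while three boosted stumps together classify every training sample correctly.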

During object detection, a number of classifiers are combined into a filter chain (a cascade). Each filter in the chain is a separate AdaBoost classifier built from a small number of weak classifiers. If any filter rejects a region of the image, that region is immediately labeled as a non-vehicle. When a filter recognises a region as a vehicle, the region moves on to the next filter in the chain, and only a region that passes every filter is categorized as a vehicle. This enables more precise object identification and decision-making in autonomous vehicles. The three main applications of this approach are detecting faces, pedestrians, and moving vehicles. The motive for using AdaBoost is to select the most useful features from the set of available features.
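The early-rejection logic of such a filter chain can be sketched in a few lines of Python. The stages below are simple threshold tests on invented feature names (`edge_density`, `symmetry`, `wheel_score`); in a real detector each stage would be a trained AdaBoost classifier, so these stand-ins are assumptions made purely for illustration.

```python
def make_threshold_stage(feature, minimum):
    """Hypothetical stand-in for one trained AdaBoost stage."""
    def stage(region):
        return region.get(feature, 0.0) >= minimum
    return stage


def classify_region(region, stages):
    """Label a region 'vehicle' only if every stage in the chain accepts it."""
    for stage in stages:
        if not stage(region):
            return "non-vehicle"  # rejected early; later stages never run
    return "vehicle"


# Invented feature names and thresholds, cheapest test first.
chain = [
    make_threshold_stage("edge_density", 0.3),
    make_threshold_stage("symmetry", 0.5),
    make_threshold_stage("wheel_score", 0.6),
]

car_like = {"edge_density": 0.8, "symmetry": 0.7, "wheel_score": 0.9}
sky_like = {"edge_density": 0.1, "symmetry": 0.9, "wheel_score": 0.0}

print(classify_region(car_like, chain))
print(classify_region(sky_like, chain))
```

Because most image regions contain no vehicle, rejecting them at the first stage that fails keeps the average cost per region low.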

Like AdaBoost, “TextonBoost” combines weak learners into a strong learner. It improves image recognition by using “texton” labeling, where “textons” are clusters of visual data that share similar characteristics and respond similarly to filters. Google uses the TextonBoost algorithm in its self-driving car effort to combine information from three sources: appearance, shape, and context. Using new features based on textons, TextonBoost can both recognise and segment the objects in an image. Shared boosting is used to build an efficient classifier that scales to many different classes. The classifier is incorporated into a conditional random field to produce accurate image segmentation, and random feature selection together with piecewise training allows the model to be trained efficiently on very large datasets. This combination is valuable because the results from any one of these sources alone might be inaccurate. By combining many classifiers, TextonBoost achieves more precise object recognition: it takes a global view of the image and models its elements in relation to one another.
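The texton-labeling step alone can be sketched as follows. This is not TextonBoost itself (there is no boosting and no conditional random field here), and it rests on simplifying assumptions: the "filter bank" is just pixel intensity plus horizontal and vertical differences, and the texton centres are written by hand rather than learned by clustering filter responses from training images.

```python
from math import dist


def filter_responses(image):
    """Per-pixel response vector: (intensity, horizontal diff, vertical diff)."""
    height, width = len(image), len(image[0])
    responses = []
    for r in range(height):
        row = []
        for c in range(width):
            # Differences to the right and lower neighbours, clamped at borders.
            dx = image[r][min(c + 1, width - 1)] - image[r][c]
            dy = image[min(r + 1, height - 1)][c] - image[r][c]
            row.append((image[r][c], dx, dy))
        responses.append(row)
    return responses


def texton_map(image, textons):
    """Label each pixel with the index of its nearest texton centre."""
    return [
        [min(range(len(textons)), key=lambda k: dist(textons[k], resp))
         for resp in row]
        for row in filter_responses(image)
    ]


# Tiny grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
# Hand-written texton centres (assumed): dark flat, bright flat, vertical edge.
textons = [(0, 0, 0), (9, 0, 0), (0, 9, 0)]

print(texton_map(image, textons))
```

Pixels whose responses resemble each other receive the same texton index, and it is this map of indices, rather than the raw pixels, that texton-based features are computed from.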

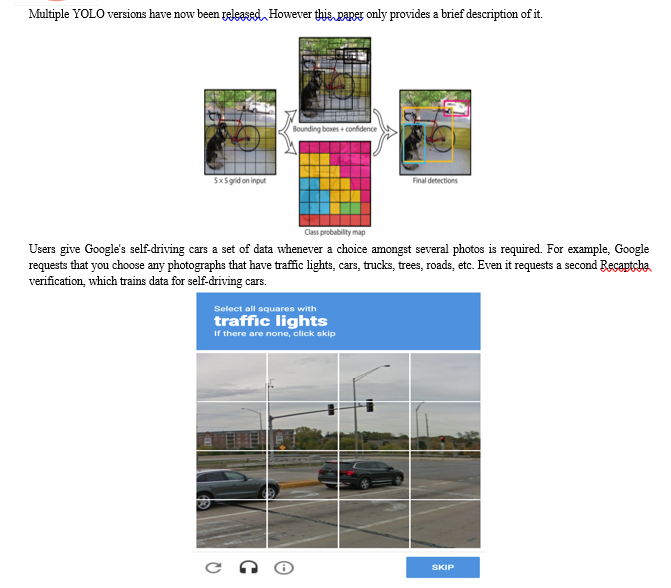

Then comes the “YOLO” (You Only Look Once) algorithm, which is used for object detection in autonomous vehicles. It provides fast processing so that the vehicle can respond to real-world situations in time. YOLO takes an image as input and divides it into a grid of cells. It applies image classification and localization to each cell and predicts bounding boxes for objects together with their class probabilities. At test time, Non-Maximum Suppression (NMS) prunes duplicate predictions that point to the same object: boxes with low class probability are removed, and among boxes of the same class that overlap significantly, only the highest-scoring one is kept. With this system, the Google self-driving car can classify objects such as people, cars, and trees. Because each class of object has a characteristic set of features, YOLO labels objects accordingly. The approach makes predictions for each cell while taking the context of the entire image into account, and it requires only a single network evaluation per image.
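The pruning step can be made concrete with a small Python sketch of greedy, per-class Non-Maximum Suppression. The box format `(x1, y1, x2, y2)`, the two thresholds, and the sample detections are assumptions chosen for illustration; the network that produces the boxes is not shown.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, score_threshold=0.5, iou_threshold=0.5):
    """Filter a list of (box, score, label) detections.

    Drops low-scoring boxes, then keeps a box only if it does not heavily
    overlap an already kept, higher-scoring box of the same class.
    """
    candidates = sorted(
        (d for d in detections if d[1] >= score_threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    kept = []
    for det in candidates:
        box, _, label = det
        if all(k[2] != label or iou(box, k[0]) < iou_threshold for k in kept):
            kept.append(det)
    return kept


# Invented detections: two overlapping "car" boxes, one person, one weak tree.
detections = [
    ((10, 10, 50, 50), 0.9, "car"),
    ((12, 12, 52, 52), 0.8, "car"),
    ((100, 100, 140, 140), 0.7, "person"),
    ((200, 200, 220, 220), 0.2, "tree"),
]

for box, score, label in non_max_suppression(detections):
    print(label, score, box)
```

In this example the second car box is suppressed because it overlaps the higher-scoring car box, and the tree box is dropped for its low score, leaving one car and one person.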

IV. CHANGING FUTURE

Self-driving cars will transform the auto industry and the world's highways in ways few could have predicted, making vehicles smarter and more convenient for users. These cars can detect environmental elements such as obstacles, traffic signals, traffic volume, the weather, and the state of the road, and they are trained to drive on their own using this information. One of the most credible benefits is increased traffic safety. The U.S. Department of Transportation attributes about 94% of serious crashes to human error, so automation can lower the frequency of accidents without relying on changes in driver behavior, for example by keeping a safe and constant distance between vehicles and reducing stop-and-go traffic. Fully automated systems can give people with disabilities personal freedom and support them in living the life they want. These vehicles can also be shared, which lowers the cost of personal transportation and increases mobility. They might reduce fuel use and carbon emissions, and hence greenhouse gases. Demand for these vehicles may increase as a result of automation and car sharing. Electric cars become more economically attractive when they are used more frequently throughout the day, because the up-front cost of the batteries is shared across more trips.

Conclusion

With the help of machine learning, these vehicles can make millions of blind and disabled people more mobile, improve community connectivity, and increase road safety. Because their behavior is governed by machine learning algorithms, it can change and evolve, even dynamically, without human intervention or correction. These algorithms can quickly process huge amounts of data and identify trends and patterns that a human reviewing the same data might miss. Machine learning systems can be scaled up and applied in new situations with little engineering input, because they derive their own rules and adapt to new environments by applying previously learned knowledge. The algorithms employed in autonomous vehicles must perform the same kind of data analysis over and over, so a system that can do this quickly and effectively is advantageous.

Copyright

Copyright © 2023 Ananya Mishra, Ms. Sonia Ahlawat. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET55695

Publish Date : 2023-09-11

ISSN : 2321-9653

Publisher Name : IJRASET
