Ijraset Journal For Research in Applied Science and Engineering Technology

Face to BMI Prediction Using Machine Learning Algorithms

Authors: Srinivas Mekala, Sai Krishi Samala, Sai Satwik Sriramula, K. Naga Sailaja

DOI Link: https://doi.org/10.22214/ijraset.2023.53140

Abstract

Body mass index (BMI) is a gauge of how healthy a person is in relation to their weight. Numerous aspects of life, including physical health, mental health, and popularity, have been linked to BMI. Calculating BMI normally requires accurate height and weight, which in turn require labor-intensive measurement. BMI is related to cholesterol and body fat and is a key indicator of diseases that can develop as a result of higher body fat levels. Governments and businesses can employ large-scale automated BMI estimation to analyze many facets of society and make informed decisions; BMI data is used in areas ranging from the health industry to social media applications. Prior works have used either geometric facial features alone, disregarding other information, or a data-driven deep learning strategy in which the amount of data becomes a limiting factor. Human faces contain a number of cues that can be studied, so a face image is used here to predict BMI. In this study, a deep-network-based BMI prediction tool is designed using Convolutional Neural Networks (CNN) and regression, and its performance is evaluated.

I. INTRODUCTION

A person's BMI (Body Mass Index) is an important indicator of their health. It determines whether the person is underweight, normal, overweight, or obese. BMI, a measure of obesity based on a person's height and weight, was created by Lambert Adolphe Jacques Quetelet. Although it cannot discriminate between muscle and fat, both of which contribute to weight, BMI is the most useful index for evaluating obesity [9]. People's health is one of the most overlooked issues today. Technology, for all its advantages, has downsides: it has made people less active, resulting in sedentary lifestyles and higher BMIs, both of which are harmful to health.

A person's face can tell a lot about them. Recent research has revealed a significant link between a person's BMI and their facial features [2]. People with thin faces are likely to have lower BMIs, and vice versa. Obese persons typically have larger middle and lower facial features. Without a measuring tape and a scale, it is difficult for a person to calculate their BMI. Deep learning has made tremendous strides recently, enabling models to extract useful information from photos. These techniques allow BMI to be estimated from human faces. We therefore propose a method to estimate BMI from faces in this study.

A. Scope of BMI using facial features

BMI, a frequently used indicator of body weight, is obtained by dividing a person's weight by the square of their height. However, calculating BMI requires direct access to a person's weight and height, which may not be possible in all circumstances. A person may be unable to stand up straight, or may not have access to tools for measuring height and weight. As a result, the use of facial characteristics as an alternative way of estimating BMI has attracted research interest. The goal of using facial characteristics is to provide a non-intrusive and practical way of estimating BMI in circumstances where direct measurements of weight and height are not possible or practical. Machine learning algorithms can extract and evaluate facial features from photographs, such as the size and shape of the face, the cheekbones and jawline, the space between the eyebrows, and the length of the earlobes, that have been shown to be linked with BMI.
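As a point of reference for the quantity the model is trying to estimate, the conventional calculation can be sketched as follows. The function name is illustrative, and the units (kilograms and metres) are the usual metric convention rather than something stated above.

```python
def compute_bmi(weight_kg: float, height_m: float) -> float:
    """Return body mass index: weight in kilograms divided by height in metres squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m ** 2


# A person weighing 70 kg who is 1.75 m tall
print(round(compute_bmi(70.0, 1.75), 1))  # prints 22.9
```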

BMI estimation from faces is still a developing field, and more research is required to fully understand its potential and limitations. Privacy concerns may also make it difficult to obtain enough training data for the model.

II. RELATED WORK

With the drastic growth in obesity worldwide, a need has arisen for a self-examination model for health and BMI. This can be achieved by using AI to study facial features and approximate a person's BMI. One study compared five deep learning models: VGG, ResNet50, DenseNet, MobileNet, and LightCNN [1].

Multi-task cascaded convolutional neural networks [2] were used to build a model that predicts BMI, and SMTP was used to send the image and the health details by email to a health officer. That work trained the model on patient data such as age, gender, weight, and height along with the facial images.

A ResNet architecture model called ResNet50 is used as a predictor to estimate the age and gender of the person in a facial image. ResNet50 is based on deep residual networks. A residual network with a depth of 152 layers [4] was applied to the ImageNet dataset to fine-tune the model.

Images from publicly available sources such as social media have been used to train a BMI model. That approach has two stages: building a deep feature extraction model and training a regression model [3].

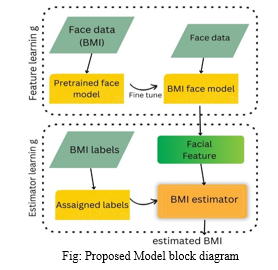

III. PROPOSED MODEL

The proposed system is a CNN-based prediction model that examines the facial features in a given image to estimate a person's BMI and determine whether they are underweight, healthy, overweight, or obese. The ImageNet dataset, a large publicly available dataset for training image recognition algorithms, is used to train and test the model.

A pre-trained Haar cascade classifier is used to detect the face in the given image so that the face can be cropped and resized to the required input size, i.e. (224, 224, 3).

IV. SYSTEM ANALYSIS

The model is built in the Python programming language using libraries such as TensorFlow, Keras, OpenCV, NumPy, Pandas, and Tkinter.

A. TensorFlow and Keras

Keras is used for prototyping and experimenting with neural networks. It runs on top of TensorFlow; Keras has supported TensorFlow, Theano, and CNTK as backends. It makes adding, replacing, and removing layers easy.

B. CNN

Convolutional neural networks (CNNs) are a class of artificial neural networks focused on visual tasks such as detecting objects, people, or animals. If at least one layer of a neural network uses the mathematical operation called convolution, the network can be called a CNN.
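To make the convolution operation concrete, the sketch below slides a small kernel over a toy image and sums the elementwise products at each position. The function name and the example arrays are illustrative. As in most deep learning libraries, the kernel is not flipped, so strictly speaking this is cross-correlation.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the elementwise products."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


# Toy image: dark left half, bright right half
image = np.array([[0, 0, 1, 1],
                  [0, 0, 1, 1],
                  [0, 0, 1, 1],
                  [0, 0, 1, 1]], dtype=float)
# Kernel that responds where brightness increases from left to right
vertical_edge = np.array([[-1.0, 1.0],
                          [-1.0, 1.0]])
print(conv2d_valid(image, vertical_edge))  # every row is [0. 2. 0.]
```

The output is non-zero only in the middle column, where the kernel straddles the edge. In a CNN the kernel values are learned during training rather than fixed by hand.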

C. ResNet50

Residual neural networks (ResNets) are a CNN architecture introduced in 2015 [4]. They mitigate the vanishing-gradient problem, which made it possible to train much deeper neural networks. ResNet50 is a 50-layer variant of this architecture that ends in a softmax activation function. The architecture set a benchmark in the ImageNet Large Scale Visual Recognition Challenge [8].
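The core idea in [4] is the shortcut connection: a block outputs F(x) + x rather than F(x) alone, so the input can pass through unchanged when the learned part contributes nothing. The sketch below shows this with plain matrix multiplications standing in for the convolutional layers of a real ResNet; the function name and shapes are illustrative assumptions.

```python
import numpy as np


def residual_block(x, w1, w2):
    """Compute ReLU(F(x) + x), where F is two linear maps with a ReLU in between."""
    hidden = np.maximum(0.0, x @ w1)       # first layer of F, followed by ReLU
    return np.maximum(0.0, hidden @ w2 + x)  # add the shortcut, then ReLU


x = np.array([1.0, 2.0, 3.0])
zero_weights = np.zeros((3, 3))
# With F contributing nothing, the block passes a non-negative input through unchanged
print(residual_block(x, zero_weights, zero_weights))  # prints [1. 2. 3.]
```

Because the identity path is always available, stacking more blocks does not force the signal, or its gradient, through every learned transformation.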

D. Haar Cascade Classifier

A Haar cascade classifier is a machine learning approach for detecting objects in images or videos. Haar-like features are computed to detect specific patterns such as edges, corners, and rectangular regions. The classifier is trained on a set of positive and negative samples of the target object, so that it learns to tell them apart by adjusting the feature weights. The drawback of this classifier is that it requires substantial computational resources and memory.
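A Haar-like feature is the difference between the pixel sums of adjacent rectangles. A common way to evaluate such sums quickly is an integral image, which is assumed here as the implementation technique; the function names and the toy patch are illustrative.

```python
import numpy as np


def integral_image(gray):
    """Cumulative sum over rows and columns, padded with a leading row and column of zeros."""
    ii = np.cumsum(np.cumsum(gray.astype(np.int64), axis=0), axis=1)
    return np.pad(ii, ((1, 0), (1, 0)))


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle, using four lookups in the integral image."""
    bottom, right = top + height, left + width
    return ii[bottom, right] - ii[top, right] - ii[bottom, left] + ii[top, left]


def two_rect_edge_feature(ii, top, left, height, width):
    """Left half minus right half of a window: large magnitude at vertical edges."""
    half = width // 2
    left_sum = rect_sum(ii, top, left, height, half)
    right_sum = rect_sum(ii, top, left + half, height, half)
    return left_sum - right_sum


# Toy 4x4 patch: bright left half (10), dark right half (0)
patch = np.array([[10, 10, 0, 0]] * 4)
ii = integral_image(patch)
print(two_rect_edge_feature(ii, 0, 0, 4, 4))  # prints 80
```

A cascade evaluates many such features at many window positions and scales, rejecting most windows after only a few cheap checks.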

E. Activation Function

An activation function defines the output of a neuron, or node, for a given set of inputs. It is modeled on the behavior of a biological neuron. In a neural network, it is applied to the weighted sum of the inputs plus a bias to decide how strongly the node fires.

1. Softmax

This activation function is typically used in the output layer of a network to perform multi-class classification. It converts the outputs of a neural network into a probability distribution.

2. ReLU

The Rectified Linear Unit lets a network model nonlinear interactions between input and output, which is a general requirement for CNN-based deep learning models. It has become the default activation function for deep learning applications across a wide range of architectures.
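The two activation functions, and the weighted sum they are applied to, can be sketched in a few lines of NumPy. The helper names and the example weights are illustrative assumptions.

```python
import numpy as np


def relu(z):
    """Keep positive values, replace negative values with zero."""
    return np.maximum(0.0, z)


def softmax(z):
    """Turn a vector of scores into probabilities that sum to 1."""
    shifted = z - np.max(z)  # subtracting the maximum avoids overflow in exp
    exp = np.exp(shifted)
    return exp / np.sum(exp)


def dense(x, weights, bias, activation):
    """Weighted sum of the inputs plus a bias, passed through an activation function."""
    return activation(x @ weights + bias)


x = np.array([1.0, 2.0])
weights = np.array([[0.5, -1.0, 0.0],
                    [0.25, 0.5, 1.0]])
bias = np.array([0.0, -1.0, 0.0])

# Pre-activation values are [1, -1, 2]
print(dense(x, weights, bias, relu))     # prints [1. 0. 2.]
print(dense(x, weights, bias, softmax))  # three probabilities, largest for the last unit
```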

V. IMPLEMENTATION

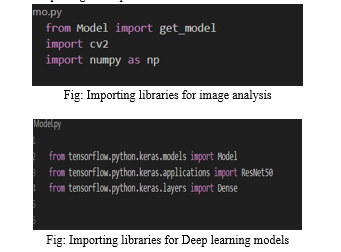

A. Importing the required packages

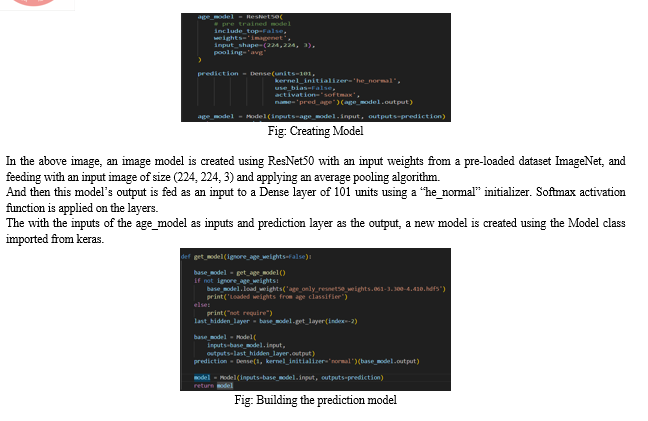

The Model class is imported from TensorFlow Keras to create a new model from the existing models available in Keras. ResNet50, a pre-built residual network model, is imported and applied to the images to obtain the age model. Dense layers are imported to add layers to the model.

NumPy and cv2 (OpenCV) are imported to extract the facial features from the uploaded image. The face is detected, cropped, and resized to the required size, i.e. (224 x 224 x 3), and the extracted features are fed to the prediction model as input.

The age_model is then used as a base model. Using the age_only_resnet50 classifier, a new model is created whose input is the input of the base model and whose output is the last hidden layer of the base model. The prediction is then taken from a newly added Dense layer with 1 unit.

With this prediction as the output and the input of the base model as the input, a model is created that can be used as the BMI prediction model.
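The model construction described above can be sketched with the Keras functional API. This is an approximation under stated assumptions: a plain ResNet50 backbone stands in for the pre-trained age model, whose weights are not part of this text; `build_bmi_model`, the layer name, the optimizer, and the loss are illustrative choices.

```python
from tensorflow.keras.applications import ResNet50
from tensorflow.keras.layers import Dense
from tensorflow.keras.models import Model


def build_bmi_model(weights=None):
    """ResNet50 feature extractor followed by a single-unit regression head."""
    # Stand-in for the pre-trained age model (assumption); pass weights="imagenet"
    # to start from ImageNet weights instead of random initialisation
    base_model = ResNet50(include_top=False, weights=weights,
                          input_shape=(224, 224, 3), pooling="avg")
    features = base_model.output            # last hidden layer: pooled feature vector
    bmi = Dense(1, name="bmi")(features)     # new Dense layer with 1 unit
    return Model(inputs=base_model.input, outputs=bmi)


model = build_bmi_model()
# Optimizer and loss are assumptions; the text does not specify them
model.compile(optimizer="adam", loss="mean_absolute_error")
print(model.output_shape)  # prints (None, 1)
```

The single linear output unit makes this a regression model: it emits one real number per face image, which is then read as the estimated BMI.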

VI. RESULTS

BMI is considered a measure of body fat based on a person's height and weight. It can serve as a screening tool. BMI may also be used to diagnose a variety of health issues.

Based on BMI, a person is classified as follows:

- Underweight if BMI < 18.5

- Healthy weight if 18.5 ≤ BMI ≤ 24.9

- Overweight if 25 ≤ BMI ≤ 29.9

- Obese if BMI ≥ 30
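The classification above can be expressed as a small function. The name `classify_bmi` is illustrative, and the cut-offs are treated as contiguous (below 18.5, below 25, below 30), an assumption made so that values such as 24.95 still fall into a category.

```python
def classify_bmi(bmi: float) -> str:
    """Map a BMI value to one of the four weight categories."""
    if bmi < 18.5:
        return "Underweight"
    if bmi < 25:
        return "Healthy weight"
    if bmi < 30:
        return "Overweight"
    return "Obese"


for value in (17.0, 22.9, 27.5, 31.2):
    print(value, classify_bmi(value))
```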

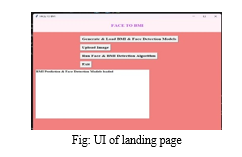

The above interface works like this:

a. First to click “Generate and Load BMI & Face detection Model to load the CNN model.

b. Then to give picture as input to the model click on “Upload image” and then selected a picture from the saved location on the desktop. (Make sure only one person is present in the picture in order to get better results).

c. Then in order to predict the BMI click on “Run Face and BMI detection Algorithm” in order to get the result.

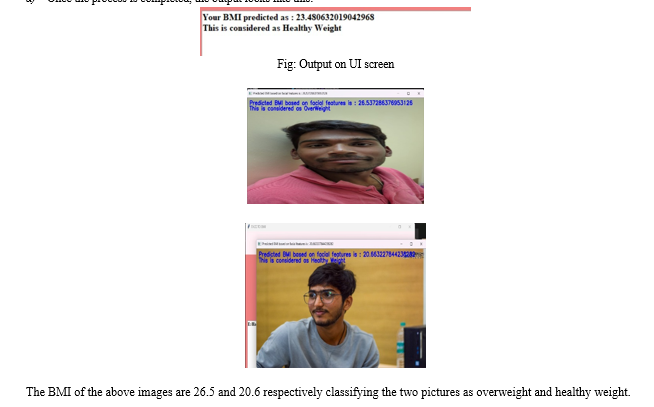

d. Once the process is completed, the output looks like this:

VII. FUTURE SCOPE

It might be difficult to determine someone's BMI using traditional techniques since not everyone is aware of their weight and height. Face to BMI prediction can be connected with Android applications and health apps to provide a fast BMI result. Additionally, it may be utilised by health applications to provide different people a diet plan based on their BMI. In some cases, a mobile app is more practical than a desktop one. Additionally, a person's BMI may be utilised to compile a health report that can be used for a variety of medical purposes.

Conclusion

We conclude that there is a significant correlation between BMI and facial features. Additionally, we found that those with higher BMIs are more likely to experience health issues. The suggested technique does not need a full-body image. The method can be broken down into three steps: building a model that uses the facial characteristics from the image, training the model on the dataset, and classifying the individual into categories based on the estimated values. Although we found no gender bias in the BMI prediction, the model can still be improved for better outcomes. The project's biggest drawbacks are the lack of large datasets, the limited ability to work with large datasets, and the inability to predict the BMI of multiple people in a single image. A large dataset containing a diverse range of photos and faces would help improve the model's accuracy. Estimating a person's BMI from the 2D information in a photograph proved difficult.

References

[1] Hera Siddiqui, Ajita Rattani, Dakshina Ranjan Kisku and Tanner Dean; “AI-based BMI Inference from Facial Images: An Application to Weight Monitoring”; 2020.

[2] Dhanamjayulu C, Nizhal U N, Praveen Kumar Reddy Maddikunta, Thippa Reddy Gadekallu, Celestine Iwendi, Chuliang Wei, Qin Xin; “Identification of malnutrition and prediction of BMI from facial images using real-time image processing and machine learning”; 2021.

[3] Enes Kocabey, Mustafa Camurcu, Ferda Ofli, Yusuf Aytar, Javier Marin, Antonio Torralba, Ingmar Weber; “Face-to-BMI: Using Computer Vision to Infer Body Mass Index on Social Media”; 2017.

[4] Kaiming He, Xiangyu Zhang, Shaoqing Ren and Jian Sun; “Deep Residual Learning for Image Recognition”; 2015.

[5] Lingyun Wen, Guodong Guo; “A computational approach to body mass index prediction from face images”; 2013.

[6] Nadeem Yousaf, Sarfaraz Hussein and Waqas Sultani; “Estimation of BMI from Facial Images using Semantic Segmentation based Region-Aware Pooling”; 2021.

[7] Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li and Li Fei-Fei; “ImageNet: A Large-Scale Hierarchical Image Database”; 2009.

[8] Olga Russakovsky, Jia Deng, Hao Su, Jonathan Krause, Sanjeev Satheesh, Sean Ma, Zhiheng Huang, Andrej Karpathy, Aditya Khosla, Michael Bernstein, Alexander C. Berg, Li Fei-Fei; “ImageNet Large Scale Visual Recognition Challenge”; 2015.

[9] Chong Yen Fook, Lim Chee Chin, Vikneswaran Vijean, Lim Whey Teen, Hasimah Ali, Aimi Salihah Abdul Naseer; “Investigation on Body Mass Index Prediction from Face images”; 2020.

Copyright

Copyright © 2023 Srinivas Mekala, Sai Krishi Samala, Sai Satwik Sriramula, K. Naga Sailaja. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET53140

Publish Date : 2023-05-27

ISSN : 2321-9653

Publisher Name : IJRASET
