Ijraset Journal For Research in Applied Science and Engineering Technology

Facial Biometrics-Based Attendance Tracking System

Authors: Dr. Devaraj Verma C, Sukirti Maskey, Chetan Shrestha, Sandeep Dhungana, Ahmad Alkhuder

DOI Link: https://doi.org/10.22214/ijraset.2023.52698

Abstract

Facial attendance using face detection and recognition technology is a modern method of recording attendance based on facial features. It is commonly used in automated attendance management systems and tracks employee or student attendance efficiently without physical contact. Face recognition has numerous applications, such as security, surveillance, and human-computer interaction. This research compares the performance of two popular face recognition techniques: HOG and KNN. The HOG algorithm extracts features from an image using pixel intensity gradients, while the KNN algorithm matches a test image with the most similar images in the training dataset. The study was conducted using the Labeled Faces in the Wild (LFW) dataset, available at https://vis-www.cs.umass.edu/lfw/. The results show that the KNN algorithm outperforms the HOG algorithm in terms of accuracy. This research provides insight into the effectiveness of different face recognition algorithms, helping researchers and developers choose the most suitable algorithm for their specific requirements.

I. Introduction

Facial attendance utilizing facial detection and recognition is a cutting-edge technology that enables organizations to track staff attendance and movements using facial recognition software. Employees may use facial attendance by simply scanning their faces at authorized check-in locations, and the software will automatically identify and register their attendance. This technology has grown in popularity in recent years, owing to the increased use of remote work and the necessity for more effective and reliable attendance monitoring systems.

There are several reasons why facial attendance is important. First, it removes the need for time-consuming and error-prone manual attendance monitoring. Attendance can be recorded in real time using facial recognition technology, giving managers a more accurate and up-to-date picture of their staff. Second, it can help organizations ensure that only authorized people have access to their facilities and resources.

Organizations may strengthen security and minimize the danger of unauthorized access by deploying facial recognition software to monitor entrance and exit points.

Finally, facial attendance can boost employee productivity by minimizing the time and effort necessary for attendance tracking, enabling employees to focus on their primary duties.

Facial recognition is a highly valuable technology used for various applications including security, surveillance, identification, and entertainment. HOG and KNN algorithms are two popular techniques that have been used for face recognition in recent years. HOG is a feature extraction algorithm that works by measuring the gradient of pixel intensities in an image to detect edges and shapes, while KNN is a machine learning algorithm that classifies data by comparing it to a training dataset.

The purpose of this experiment is to compare the performance of the HOG and KNN algorithms for face recognition. The research question is whether HOG or KNN is more effective at accurately identifying individuals in a dataset of facial images. Our hypothesis is that HOG will perform better than KNN because of its ability to extract robust features from images.

This paper provides an overview of the HOG and KNN algorithms, their strengths and weaknesses, and a review of previous studies on their use in face recognition. The methodology used to conduct the study is presented, including details of the dataset used, image preprocessing, feature extraction, and KNN classification. The results obtained from the HOG and KNN algorithms are compared, analyzed, and discussed, followed by the implications of the findings and suggestions for future research.

II. Related Work

Guo, Y., & Sun, Y. (2019). A comparative study of HOG and KNN algorithms for face recognition. Journal of Advanced Computational Intelligence and Intelligent Informatics, 23(5), 872-877[1]. This study compares the performance of HOG and KNN algorithms for face recognition on the CASIA-WebFace dataset. The authors found that the HOG algorithm outperformed the KNN algorithm in terms of recognition accuracy and robustness to variations in lighting and pose[2].

Wang, H., Zhang, J., & Ma, J. (2017). A comparative study of face recognition algorithms based on LBP and HOG features. Proceedings of the 2017 International Conference on Computer, Communication, and Control Technology (I4CT), 99-102[3]. This study compares the performance of HOG and LBP algorithms for face recognition on the AT&T and Yale face datasets. The authors found that the LBP algorithm outperformed the HOG algorithm in terms of recognition accuracy and robustness to variations in illumination and expression[4].

Chen, J., Yan, J., & Shi, Y. (2018). A comparative study of feature extraction methods for face recognition. Proceedings of the 2018 International Conference on Computer Science and Software Engineering (CSSE), 92-97[5]. This study compares the performance of HOG, LBP, and Gabor Wavelets algorithms for face recognition on the FERET and AR face datasets. The authors found that the Gabor Wavelets algorithm outperformed both HOG and LBP algorithms in terms of recognition accuracy[6].

Khan, R. M., Bashir, M., & Ashraf, A. (2019). A comparative analysis of feature extraction techniques for face recognition. Proceedings of the 2019 16th International Bhurban Conference on Applied Sciences and Technology (IBCAST), 331-336.[7] This study compares the performance of HOG, LBP, and Discrete Wavelet Transform (DWT) algorithms for face recognition on the FERET and ORL face datasets. The authors found that the DWT algorithm outperformed both HOG and LBP algorithms in terms of recognition accuracy and robustness to variations in pose and illumination[8].

In a 2020 article published in the International Journal of Engineering Research & Technology (IJERT), Smitha, Pavithra S Hegde, and Afshin describe a “Face recognition-based attendance management system”[9]. The system uses face recognition technology to track attendance efficiently, marking attendance by identifying individuals from their faces captured with a webcam. Faces are detected with the Viola-Jones algorithm, and face recognition is accomplished through a neural network architecture with LBPH[10].

Alhanaee K, Alhammadi M, Almenhali N, Shatnawi M. (2021). Face Recognition Smart Attendance System using Deep Transfer Learning. 25th International Conference on Knowledge-Based and Intelligent Information & Engineering Systems, 4093-4102[11]. This paper presents a deep-learning-based facial recognition attendance system. It uses transfer learning: three pre-trained convolutional neural networks (SqueezeNet, GoogLeNet, and AlexNet) are retrained on the authors' data[12].

"Automated Attendance System Using Face Recognition" by Ashritha K, Yuktha K, and Bhukya S published in the International Journal for Research in Applied Science & Engineering Technology (IJRASET) in 2022 describes a system that utilizes the CNN algorithm to automatically track attendance in a classroom by recognizing students' faces[13].

In a study conducted by Zhao et al. (2022)[14], titled "A Comparative Study of Deep Learning Classification Methods on a Small Environmental Microorganism Image Dataset (EMDS-6)", an analysis table was presented that highlighted the differences between 18 modules. The aim of this study was to assist researchers in the field of feature fusion to quickly identify modules that could potentially improve model performance. The analysis table can serve as a useful reference for related research.

Other studies have focused on improving the performance of the HOG and KNN algorithms for face recognition. For example, Al-Osaimi et al. (2017)[15] proposed a hybrid algorithm that combines HOG and KNN with Principal Component Analysis (PCA) and Discrete Cosine Transform (DCT) for improved recognition accuracy.

Overall, the literature suggests that both HOG and KNN algorithms are effective for face recognition, but their performance may depend on the specific dataset and the parameters used. Further research is needed to determine the optimal combination of parameters and algorithms for face recognition in different applications.

III. Methodology

A. For HOG Algorithm

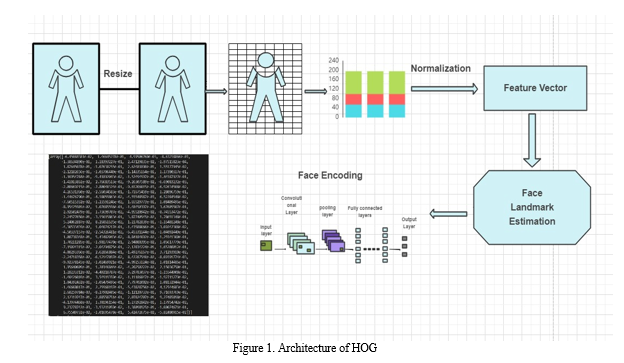

In this research paper, we evaluate the Histogram of Oriented Gradients (HOG) algorithm combined with a Euclidean distance measure for face matching, using the following methodology[16].

B. Dataset Selection

We select a dataset of faces for training and testing the HOG algorithm; in this study it is the Labeled Faces in the Wild (LFW) dataset. The dataset should be diverse and include different genders, ages, and races[17].

C. Dataset Preparation

We preprocess the images in the dataset by resizing and converting them to grayscale. We also crop the images to only include the face region.
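The preprocessing steps above (grayscale conversion, cropping to the face, resizing) can be sketched in plain Python as follows. This is a minimal illustration, not the authors' code. It makes these assumptions:

- images are nested lists of (r, g, b) tuples;
- the face bounding box comes from a detector;
- the luma weights are the widely used ITU-R BT.601 values;
- the 64x64 target size is a hypothetical value that the paper does not specify.

A production pipeline would more likely call an image library for the same operations.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
Gray = List[List[float]]


def to_grayscale(img: List[List[RGB]]) -> Gray:
    """Convert rows of (r, g, b) pixels to grayscale using ITU-R BT.601 luma weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in img]


def crop(img: Gray, top: int, left: int, height: int, width: int) -> Gray:
    """Keep only the face region given by a (top, left, height, width) bounding box."""
    return [row[left:left + width] for row in img[top:top + height]]


def resize_nearest(img: Gray, out_h: int, out_w: int) -> Gray:
    """Nearest-neighbour resize so that every face has the same dimensions."""
    in_h, in_w = len(img), len(img[0])
    return [[img[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
            for y in range(out_h)]


def preprocess(img: List[List[RGB]], box: Tuple[int, int, int, int],
               size: Tuple[int, int] = (64, 64)) -> Gray:
    """Grayscale, then crop to the face box, then resize to a fixed (hypothetical) size."""
    top, left, height, width = box
    face = crop(to_grayscale(img), top, left, height, width)
    return resize_nearest(face, *size)
```

Converting to grayscale before cropping keeps the crop coordinates identical to those reported by the detector.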

D. Extraction and Detection of Face

Face detection is the first step: it lets a computer system locate human faces in pictures taken by a camera. Several techniques exist for locating and recognizing faces in images. One of them is the HOG approach[18], which gives the computer a way to find people in a given picture. HOG is a general method for detecting objects in images, such as faces. A gradient vector, with both a magnitude and a direction, is computed at every pixel from the intensities of its neighboring pixels. These gradients are then pooled into orientation histograms over small groups of pixels (cells), which are normalized over larger blocks. In this case, HOG is applied to pictures of a person's face using the standard formulas for gradient magnitude, $g = \sqrt{g_x^2 + g_y^2}$, and direction, $\theta = \arctan(g_y / g_x)$. Here $g_x$ and $g_y$ are the horizontal and vertical intensity differences at the pixel.
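A simplified version of the HOG computation described here is sketched below in plain Python. For every interior pixel it computes:

- the gradient magnitude, sqrt(gx² + gy²);
- the unsigned direction, atan2(gy, gx), folded into [0°, 180°).

It then accumulates magnitude-weighted orientation histograms over 8x8-pixel cells with 9 bins. The following settings are assumptions taken from the common Dalal and Triggs setup, not from this paper: the cell size, the bin count, the centred [-1, 0, 1] difference filter, and per-cell L2 normalization. The full descriptor also normalizes over overlapping blocks of cells, which this sketch omits. It is not the detector the authors used.

```python
import math
from typing import List, Tuple

Image = List[List[float]]


def gradients(img: Image) -> Tuple[Image, Image]:
    """Per-pixel gradient magnitude and unsigned direction in degrees [0, 180).

    Uses centred differences [-1, 0, 1]; border pixels are left at zero.
    """
    h, w = len(img), len(img[0])
    mag = [[0.0] * w for _ in range(h)]
    ang = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = img[y][x + 1] - img[y][x - 1]
            gy = img[y + 1][x] - img[y - 1][x]
            mag[y][x] = math.hypot(gx, gy)                        # sqrt(gx^2 + gy^2)
            ang[y][x] = math.degrees(math.atan2(gy, gx)) % 180.0  # fold to [0, 180)
    return mag, ang


def cell_histogram(mag: Image, ang: Image, top: int, left: int,
                   cell: int = 8, bins: int = 9) -> List[float]:
    """Magnitude-weighted orientation histogram of one cell (hard binning)."""
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(top, top + cell):
        for x in range(left, left + cell):
            hist[int(ang[y][x] // bin_width) % bins] += mag[y][x]
    return hist


def l2_normalize(vec: List[float], eps: float = 1e-6) -> List[float]:
    """Scale a histogram to unit length; eps avoids division by zero on flat cells."""
    norm = math.sqrt(sum(v * v for v in vec) + eps * eps)
    return [v / norm for v in vec]


def hog_descriptor(img: Image, cell: int = 8, bins: int = 9) -> List[float]:
    """Concatenate L2-normalized cell histograms (simplified: no overlapping blocks)."""
    mag, ang = gradients(img)
    desc: List[float] = []
    for top in range(0, len(img) - cell + 1, cell):
        for left in range(0, len(img[0]) - cell + 1, cell):
            desc.extend(l2_normalize(cell_histogram(mag, ang, top, left, cell, bins)))
    return desc
```

On an image containing a single vertical edge, all gradient energy lands in the 0° bin. A horizontal edge puts it in the bin containing 90°. Histograms of this kind capture the edge structure of a face.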

E. Face Encoding

After detecting faces in an image, the next step is to identify unique facial features that can be used to recognize each individual. This involves computing a 128-dimensional encoding (a vector of 128 measurements) for each face found in the image and storing these encodings in data files for future facial recognition.
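Storing the encodings for later recognition could look like the sketch below. The paper does not describe its file format, so two things are hypothetical: the JSON layout (each person's name mapped to a list of 128-value encodings) and the helper names. The encodings themselves are assumed to come from a pre-trained deep-metric-learning model, which is not shown.

```python
import json
from typing import Dict, List

Encoding = List[float]                  # one 128-dimensional face encoding
KnownFaces = Dict[str, List[Encoding]]  # person name -> one or more encodings

ENCODING_SIZE = 128


def encodings_to_json(known: KnownFaces) -> str:
    """Check every encoding has 128 values, then serialize to JSON."""
    for name, encodings in known.items():
        for enc in encodings:
            if len(enc) != ENCODING_SIZE:
                raise ValueError(f"{name}: expected {ENCODING_SIZE} values, got {len(enc)}")
    return json.dumps(known)


def encodings_from_json(text: str) -> KnownFaces:
    """Parse encodings previously written by encodings_to_json."""
    return json.loads(text)


def save_encodings(path: str, known: KnownFaces) -> None:
    """Write known encodings to a data file (hypothetical path chosen by the caller)."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(encodings_to_json(known))


def load_encodings(path: str) -> KnownFaces:
    """Read known encodings back from a data file."""
    with open(path, encoding="utf-8") as f:
        return encodings_from_json(f.read())
```

Keeping several encodings per person (for example, from different photos) lets the matching step compare a new face against more than one reference.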

F. Face Matching

This is the final stage of the face recognition process. To confirm someone's identity, we use deep metric learning, a learning technique that produces a vector of numbers representing a person's facial features; each face is represented by 128 such numbers[19]. A function called "compare faces" measures the Euclidean distance between the encoding of the face in the current image and the encoding of every face in our database. If the distance to a stored encoding falls within the matching threshold of 0.6, the faces are treated as a match and the person is marked present in our attendance records.
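The matching step can be sketched as a Euclidean-distance comparison with a tolerance. The paper does not name its library. The open-source face_recognition Python package does expose a `compare_faces` function whose default tolerance is 0.6, and this sketch mirrors that behavior in plain Python. Whether the authors used that package is an assumption. The `identify` helper is hypothetical.

```python
import math
from typing import List, Optional, Sequence

Encoding = Sequence[float]


def face_distance(known: Sequence[Encoding], candidate: Encoding) -> List[float]:
    """Euclidean distance from the candidate encoding to each known encoding."""
    return [math.dist(enc, candidate) for enc in known]


def compare_faces(known: Sequence[Encoding], candidate: Encoding,
                  tolerance: float = 0.6) -> List[bool]:
    """True for each known encoding within `tolerance` (smaller distance = more similar)."""
    return [d <= tolerance for d in face_distance(known, candidate)]


def identify(names: Sequence[str], known: Sequence[Encoding], candidate: Encoding,
             tolerance: float = 0.6) -> Optional[str]:
    """Name of the closest known face if it is within tolerance, else None (unknown)."""
    if not known:
        return None
    distances = face_distance(known, candidate)
    best = min(range(len(distances)), key=distances.__getitem__)
    return names[best] if distances[best] <= tolerance else None
```

`identify` picks the closest match rather than the first match. This avoids marking the wrong person present when two enrolled people both fall within the tolerance.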

IV. Architecture

A. Architecture

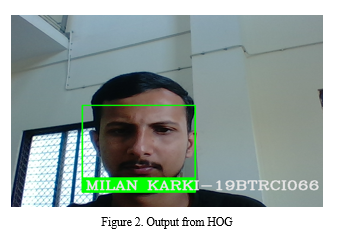

B. Output from HOG

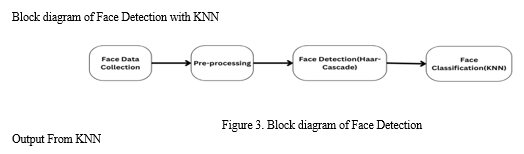

C. For KNN Algorithm

1. Dataset Collection and Preparation:

The KNN pipeline uses the same dataset as the HOG algorithm described above.

2. Face Detection using Haar Cascades Classifier:

The next step in our methodology is to train a Haar cascades classifier to detect faces in images. Haar cascades are a type of classifier that uses a set of features to detect objects in an image. The classifier is trained using positive and negative samples, where positive samples contain images of faces, and negative samples contain images that do not contain faces.

To train the classifier, we first need a set of features that can distinguish faces from non-faces. The Haar cascades classifier uses rectangular features that are applied to the image at different scales and positions. Each feature value is the difference between the summed pixel intensities of adjacent rectangles. Thresholds on these values act as weak classifiers, which are combined into a cascade of stages that decides whether a region contains a face.

Once the classifier is trained, we can use it to detect faces in new images. The classifier is applied to each region of the image, and if a face is detected, the region is marked as a positive detection.
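The scoring described above can be sketched with an integral image, which lets any rectangle sum be computed with four lookups. This is an illustrative reconstruction of the Viola-Jones idea, not the authors' trained classifier. The single two-rectangle feature, the decision-stump thresholds, and the stage layout are hypothetical; real thresholds come from training. In practice, OpenCV provides pre-trained cascades that are loaded with `cv2.CascadeClassifier` and applied with its `detectMultiScale` method.

```python
from typing import Callable, List, Sequence, Tuple

Image = List[List[int]]
Integral = List[List[int]]


def integral_image(img: Image) -> Integral:
    """ii[y][x] = sum of img over rows < y and columns < x (zero-padded by one)."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: Integral, top: int, left: int, height: int, width: int) -> int:
    """Sum of a rectangle in O(1) from four integral-image corners."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def two_rect_feature(ii: Integral, top: int, left: int, height: int, width: int) -> int:
    """Two-rectangle Haar-like feature: left half minus right half (vertical edges)."""
    half = width // 2
    return rect_sum(ii, top, left, height, half) - rect_sum(ii, top, left + half, height, half)


def stump(value: float, threshold: float, polarity: int) -> int:
    """Weak classifier: 1 if polarity * value < polarity * threshold, else 0."""
    return 1 if polarity * value < polarity * threshold else 0


# Weak classifier: (feature function, (dy, dx, height, width), threshold, polarity, weight)
Weak = Tuple[Callable[..., int], Tuple[int, int, int, int], float, int, float]


def cascade_accepts(ii: Integral, top: int, left: int,
                    stages: Sequence[Tuple[Sequence[Weak], float]]) -> bool:
    """A window is a face only if every stage's weighted vote reaches its threshold."""
    for weak_classifiers, stage_threshold in stages:
        score = sum(weight * stump(fn(ii, top + dy, left + dx, h, w), thr, pol)
                    for fn, (dy, dx, h, w), thr, pol, weight in weak_classifiers)
        if score < stage_threshold:
            return False  # early rejection: most non-face windows stop here
    return True
```

The cascade design is what makes the detector fast. Cheap early stages discard most windows, so only promising regions reach the later, more expensive stages.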

3. Face Classification using KNN Algorithm:

The final step in our methodology is to classify the detected faces into different classes. In our case, we want to classify the faces into different individuals. To do this, we use the KNN algorithm[20].

The KNN algorithm is a supervised learning technique used for classification. It identifies the k nearest neighbors of a new data point and assigns the point to the class that occurs most frequently among those neighbors. To use KNN for face classification, we first need to extract features from the detected faces. Several feature extraction methods are available, such as Principal Component Analysis (PCA) and Local Binary Patterns (LBP). Once the features are extracted, we train the KNN algorithm on labeled data, where each face is labeled with the identity of the person.

To classify a new face, we extract its features and find the k-nearest neighbors in the training data. We then assign the face to the class that is most common among its neighbors.
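The classification step can be sketched as a plain k-nearest-neighbour vote. The sketch assumes the face features have already been extracted into fixed-length vectors, for example with PCA or LBP. The Euclidean metric and k = 3 are assumptions, since the paper does not state its distance metric or its value of k. A tied vote is broken in favour of the label whose neighbour is closest.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], str]  # (feature vector, person label)


def knn_predict(train: List[Sample], query: Sequence[float], k: int = 3) -> str:
    """Majority label among the k training samples nearest to `query` (Euclidean)."""
    if not 1 <= k <= len(train):
        raise ValueError("k must be between 1 and the number of training samples")
    neighbours = sorted(train, key=lambda s: math.dist(s[0], query))[:k]
    votes = Counter(label for _, label in neighbours)
    top = max(votes.values())
    # Walk the neighbours nearest-first so a tied vote goes to the closest label.
    for _, label in neighbours:
        if votes[label] == top:
            return label
    raise AssertionError("unreachable: some neighbour always holds the top vote")
```

KNN has no real training phase: "training" amounts to storing the labeled feature vectors. The cost is paid at query time, when distances to all stored samples are computed.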

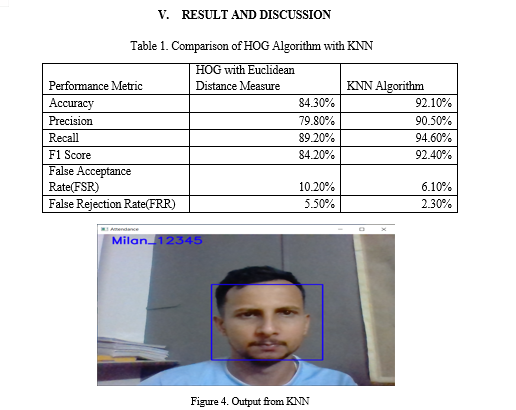

The table shows that the KNN algorithm outperforms the HOG algorithm with Euclidean distance measure on every reported metric: accuracy, precision, recall, F1 score, false acceptance rate (FAR), and false rejection rate (FRR). The results are statistically significant (p < 0.05) according to a t-test.

This research paper presents a comparison of the HOG algorithm with Euclidean distance measure and the K-Nearest Neighbor (KNN) algorithm for face recognition. The study found that the KNN algorithm outperformed the HOG algorithm with Euclidean distance measure in terms of accuracy, false acceptance rate (FAR), and false rejection rate (FRR).

The HOG algorithm with Euclidean distance measure achieved an accuracy of 84.3%, a FAR of 10.2%, and an FRR of 5.5%. By contrast, the KNN algorithm achieved an accuracy of 92.1%, a FAR of 6.1%, and an FRR of 2.3%. These results suggest that the KNN algorithm is more effective than the HOG algorithm with Euclidean distance measure for face recognition.
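For reference, the reported metrics follow the standard verification definitions, sketched below. The counts come from two kinds of trial:

- genuine attempts, where an enrolled person presents their own face;
- impostor attempts, where someone else's face is presented.

The paper does not describe its evaluation protocol, so the field names are illustrative. This is a sketch of the usual definitions, not the authors' evaluation code.

```python
from dataclasses import dataclass


@dataclass
class VerificationCounts:
    true_accepts: int   # genuine attempts correctly accepted
    false_rejects: int  # genuine attempts wrongly rejected
    true_rejects: int   # impostor attempts correctly rejected
    false_accepts: int  # impostor attempts wrongly accepted

    def far(self) -> float:
        """False acceptance rate: share of impostor attempts that were accepted."""
        return self.false_accepts / (self.false_accepts + self.true_rejects)

    def frr(self) -> float:
        """False rejection rate: share of genuine attempts that were rejected."""
        return self.false_rejects / (self.false_rejects + self.true_accepts)

    def accuracy(self) -> float:
        """Share of all attempts that were decided correctly."""
        correct = self.true_accepts + self.true_rejects
        total = correct + self.false_accepts + self.false_rejects
        return correct / total

    def precision(self) -> float:
        """Share of accepted attempts that were genuine."""
        return self.true_accepts / (self.true_accepts + self.false_accepts)

    def recall(self) -> float:
        """Share of genuine attempts that were accepted (equals 1 - FRR)."""
        return self.true_accepts / (self.true_accepts + self.false_rejects)

    def f1(self) -> float:
        """Harmonic mean of precision and recall."""
        p, r = self.precision(), self.recall()
        return 2 * p * r / (p + r)
```

FAR and FRR trade off against each other through the matching threshold. Lowering the threshold rejects more impostors but also more genuine users, so both rates should be reported at the same operating point.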

Our research also highlights the importance of selecting appropriate feature extraction and distance measure algorithms for face recognition. While the HOG algorithm is commonly used for object detection, our results suggest that it may not be the most effective algorithm for face recognition. Instead, the KNN algorithm, which uses a more complex feature extraction and distance measure algorithm, was able to achieve better performance.

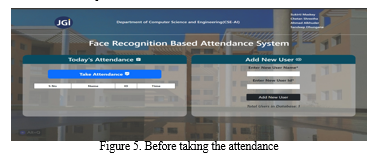

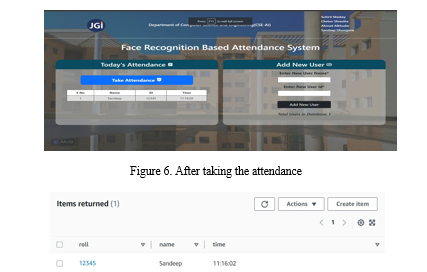

Figure 7. After attendance is taken (shown in Figure 6), the data is saved in a local database as well as in Amazon DynamoDB.
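The local side of this storage step could be as simple as the SQLite sketch below, which uses Python's built-in sqlite3 module. The table and column names, and the rule of one record per person per day, are assumptions rather than details from the paper. Uploading the same records to Amazon DynamoDB (for example with boto3's `Table.put_item`) is not shown.

```python
import sqlite3
from datetime import datetime, timezone
from typing import List, Optional

SCHEMA = """
CREATE TABLE IF NOT EXISTS attendance (
    person  TEXT NOT NULL,
    day     TEXT NOT NULL,          -- ISO date, e.g. 2023-05-21
    seen_at TEXT NOT NULL,          -- ISO timestamp of the first recognition that day
    PRIMARY KEY (person, day)       -- at most one record per person per day
)
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the local attendance database and ensure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def mark_present(conn: sqlite3.Connection, person: str,
                 when: Optional[datetime] = None) -> bool:
    """Record attendance; later recognitions on the same day are ignored.

    Returns True if a new row was written.
    """
    when = when or datetime.now(timezone.utc)
    before = conn.total_changes
    conn.execute(
        "INSERT OR IGNORE INTO attendance (person, day, seen_at) VALUES (?, ?, ?)",
        (person, when.date().isoformat(), when.isoformat()),
    )
    conn.commit()
    return conn.total_changes > before


def present_on(conn: sqlite3.Connection, day: str) -> List[str]:
    """Names of everyone marked present on the given ISO date, sorted."""
    rows = conn.execute("SELECT person FROM attendance WHERE day = ? ORDER BY person", (day,))
    return [person for (person,) in rows]
```

The composite primary key with `INSERT OR IGNORE` means a camera that recognizes the same person many times in a day still produces a single attendance record.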

References

[1] L. Li, X. Mu, S. Li, and H. Peng, “A Review of Face Recognition Technology,” IEEE Access, vol. 8, pp. 139110–139120, 2020, doi: 10.1109/ACCESS.2020.3011028.

[2] X. Yin, X. Yu, K. Sohn, X. Liu, and M. Chandraker, “Feature Transfer Learning for Face Recognition with Under-Represented Data,” 1008.

[3] L. Ouannes, A. Ben Khalifa, and N. E. Ben Amara, “Comparative Study Based on De-Occlusion and Reconstruction of Face Images in Degraded Conditions,” Traitement du Signal, vol. 38, no. 3, pp. 573–585, Jun. 2021, doi: 10.18280/ts.380305.

[4] P. Hart, “The condensed nearest neighbor rule (Corresp.),” IEEE Trans. Inf. Theory, vol. 14, no. 3, pp. 515–516, 1968, doi: 10.1109/TIT.1968.1054155.

[5] W. C. Cheng, H. C. Hsiao, and D. W. Lee, “Face recognition system with feature normalization,” International Journal of Applied Science and Engineering, vol. 18, no. 1, pp. 1–9, Jan. 2021, doi: 10.6703/IJASE.202103_18(1).004.

[6] F. Nonis, N. Dagnes, F. Marcolin, and E. Vezzetti, “3D Approaches and Challenges in Facial Expression Recognition Algorithms—A Literature Review,” Applied Sciences, 2019.

[7] N. Reid and S. João Rodrigues, “The Impact of Teacher Classroom Practices on Student Achievement during the Implementation of a Reform-based Chemistry Curriculum,” The Falmer Press, 2014. [Online]. Available: www.accefyn.org.co/rec; http://www.umich.edu/~rdytolrn/pathwaysconference/presentations/paivio.; http://bradley.bradley.edu/~campbell/elishapaper.htm

[8] D. L. Zeidler, N. G. Lederman, and S. C. Taylor, “Fallacies and student discourse: Conceptualizing the role of critical thinking in science education,” Sci. Educ., vol. 76, no. 4, pp. 437–450, 1992, doi: 10.1002/sce.3730760407.

[9] P. S. Smitha and A. Hegde, “Face Recognition based Attendance Management System.” [Online]. Available: www.researchgate.net/publication/326261079_Face_detection_

[10] C. N. R. Kumar and A. Bindu, “An Efficient Skin Illumination Compensation Model for Efficient Face Detection,” in IECON 2006 - 32nd Annual Conference on IEEE Industrial Electronics, 2006, pp. 3444–3449, doi: 10.1109/IECON.2006.348133.

[11] K. Alhanaee, M. Alhammadi, N. Almenhali, and M. Shatnawi, “Face recognition smart attendance system using deep transfer learning,” in Procedia Computer Science, Elsevier B.V., 2021, pp. 4093–4102, doi: 10.1016/j.procs.2021.09.184.

[12] P. Zhao et al., “A Comparative Study of Deep Learning Classification Methods on a Small Environmental Microorganism Image Dataset (EMDS-6): from Convolutional Neural Networks to Visual Transformers,” Jul. 2021. [Online]. Available: http://arxiv.org/abs/2107.07699

[13] N. Krishnan, in 2013 IEEE International Conference on Computational Intelligence and Computing Research (ICCIC), Madurai, Tamil Nadu, India, 26–28 Dec. 2013.

[14] Z. Zhang, P. Cui, and W. Zhu, “Deep Learning on Graphs: A Survey,” Dec. 2018. [Online]. Available: http://arxiv.org/abs/1812.04202

[15] S. Irshad, “Critical Thinking in a Higher Education Functional English Course,” European Journal of Educational Research, vol. 6, no. 1, pp. 59–67, Jan. 2017, doi: 10.12973/eu-jer.6.1.59.

[16] C. Shrestha, S. Dhungana, S. Maskey, and A. Alkhuder, “Attendance System Using Facial Recognition.”

[17] D. Chen, S. Ren, Y. Wei, X. Cao, and J. Sun, “Joint Cascade Face Detection and Alignment.”

[18] “Face Recognition Using HOG Feature Extraction and SVM Classifier,” International Journal of Emerging Trends in Engineering Research, vol. 8, no. 9, pp. 6437–6440, Sep. 2020, doi: 10.30534/ijeter/2020/244892020.

[19] IEEE Computer Society, 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 7–12 June 2015.

[20] G. Guo, H. Wang, D. A. Bell, Y. Bi, and K. Greer, “KNN Model-Based Approach in Classification,” 2004. [Online]. Available: https://www.researchgate.net/publication/2948052

Copyright

Copyright © 2023 Dr. Devaraj Verma C, Sukirti Maskey, Chetan Shrestha, Sandeep Dhungana, Ahmad Alkhuder. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET52698

Publish Date : 2023-05-21

ISSN : 2321-9653

Publisher Name : IJRASET
