Ijraset Journal For Research in Applied Science and Engineering Technology

Development of Haptic Prosthetic Hand for Realization of Intuitive Operation for Physically Challenged

Authors: Aayeesha Najla J, Ullas J Naik, Akash R, Darshan H M, Dr. Aravinda H L

DOI Link: https://doi.org/10.22214/ijraset.2023.54607

Abstract

Amputees are prone to physical and mental health problems. Having lost a limb, they often rely on others to perform their daily activities. Prostheses were developed to restore independence and improve amputees' quality of life. This project presents a concept for a low-cost, microcontroller-based prosthetic arm. The idea is to move away from remote controls for actuating a manually operated prosthetic arm: buttons and joysticks are replaced with techniques that control the complete prosthetic arm through user gestures, voice commands, and flex sensors. User commands are sensed with the help of a Bluetooth link and flex sensors. A microcontroller in the transmitter section is programmed to map each gesture to the required action. The sensed signals are processed and then transmitted to the arm at the receiver section, and the prosthetic arm performs the required movement. The prosthesis can fully hold or release objects, perform a bi-digital pincer grip, and adopt postures such as thumbs-up and pointing with the index finger. Many studies use voice recognition to control medical devices and hand prostheses in real time, but this approach has limitations. Other studies use inertial sensors to control hand prostheses. After reviewing the advantages and limitations of each control method, our project proposes a synchronized control system that combines voice recognition, inertial measurement, and flex sensors to make the prosthetic hand more practical and easier to use.

Introduction

I. INTRODUCTION

Advancements in the field of prosthetics have revolutionized the lives of physically challenged individuals, offering them the opportunity to regain mobility and independence. One remarkable innovation in this area is the development of haptic prosthetic arms that aim to provide intuitive operation and seamless integration with the user's body. The primary objective of developing a haptic prosthetic arm is to create a limb replacement that not only mimics the appearance of a biological arm but also replicates its sensory feedback and dexterity. This technology enables physically challenged individuals to perform a wide range of daily tasks with relative ease, bridging the gap between their physical limitations and their desired level of independence.

The prosthetic (artificial) hand is the main alternative for replacing a lost hand. Prosthetic hands can be divided into two types: body-powered and electric motor powered. A body-powered prosthetic hand is operated manually, using the movement of certain body parts that are connected to it through cables; for example, movement of the shoulder can drive the hand. An electric motor powered prosthetic hand is driven by an electric motor that is controlled by electrical signals from the remaining part of the user's arm.

Replicating the human hand has become one of the most desired achievements in robotics and prosthetics, and also one of the most difficult goals ever set in engineering. In pursuit of this goal, several research efforts have aimed to reproduce the mechanical behaviour of the hand and to obtain control signals from the remaining muscles or nerves near the amputation, in order to create a hand prosthesis that can restore part or all of the lost functionality to an amputee.

In India, there are about 5 million people with disabilities affecting movement or motor functions. People affected by neuromuscular disorders such as multiple sclerosis (MS) or amyotrophic lateral sclerosis (ALS), brain or spinal cord injury, myasthenia gravis, brainstem stroke, cerebral palsy, and similar conditions need augmentative and alternative communication in order to express themselves. Brain Computer Interface (BCI) systems have been developed to address this need. Recent advancements in BCI have opened new opportunities for developing prosthetic arm interfaces for such people based on thought or brain signals. BCI systems do not depend on the conventional channels of communication, namely muscles and speech; instead, they provide direct communication and control between the human brain and physical devices by translating brain activity into commands in real time. Early prosthetics were simple, immovable devices such as wooden shafts, pegs, and metal hooks.

Later advances enabled movement of the prosthesis, but the devices still looked very different from a human hand. Emphasis was then placed on improving both the function and the appearance of prostheses, and as technology advanced, the hands became more natural. An EEG sensor detects various waves from the brain, such as alpha, beta, gamma, and theta. Using these waves, the Brain sense brainwave sensor measures attention, meditation, and concentration values. These values are assigned to specific tasks to be performed by the 3D-printed prosthetic arm, which is controlled by servos.

The development of human arm prosthetics, which aim to restore the lost functions of amputated limbs, faces critical and challenging problems in carrying out dexterous tasks. To date, many revolutionary designs of human arm prosthetics have been developed, including the Boston Arm (Mann & Reimers, 1970), the Deka Arm (Resnik, 2010), the Otto Bock trans carpal hand (Otto Bock Health Care, Minneapolis, MN), and the Shanghai Kesheng Hands (Shanghai Kesheng Prosthese Corporation Ltd.). Intuitive and precise control of such prostheses remains one of the main interests of scientific studies.

II. METHODOLOGY

Both the prosthetic hand and its speech recognition-based control electronics have been implemented. The control hardware has been developed to recognize basic pick-up and release operations, and it has been programmed and tested to confirm that it functions as intended. The current stage of the study marks a significant milestone in the development of this technology.

Looking ahead, future studies will focus on expanding the capabilities of the prosthetic hand by including a broader range of voice commands for its operation. This advancement will enable users to perform more complex tasks, such as executing a realistic handshake, further enhancing the natural and intuitive functionality of the prosthetic hand. The inclusion of additional voice commands will provide users with greater control and flexibility, empowering them to interact with their environment more effectively.

Additionally, there is an ongoing effort to improve the cosmetics of the prosthetic hand, aiming to make it appear more natural. Enhancements in the hand's aesthetics will contribute to its overall acceptance and integration within society. With a visually appealing and lifelike prosthetic hand, individuals using this technology will feel more confident and comfortable, fostering a sense of normalcy and reducing the stigma associated with prosthetics.

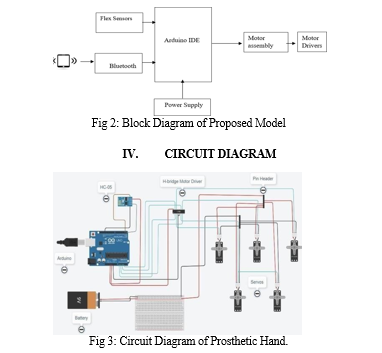

A. Sensors and User Inputs

Various sensors are integrated into the prosthetic hand to gather information about the user's intentions and the surrounding environment. These sensors can include electromyography (EMG) sensors, pressure sensors, force sensors, and position sensors. EMG sensors detect electrical signals generated by the user's residual muscles. These signals are used to interpret the user's intended movements. Other sensors, such as pressure sensors and force sensors, provide information about the pressure and force applied to the prosthetic hand. This data helps in determining the grip strength and the interaction with objects. Additionally, user inputs, such as voice commands or gesture recognition, can also be included as inputs to control the prosthetic hand.
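As a rough illustration of how a single EMG channel might be turned into a usable input, the sketch below rectifies the raw samples, smooths them with a trailing moving average, and flags a contraction when the resulting envelope crosses a threshold. The function names, the window length, and the threshold are illustrative assumptions, not values taken from this project.

```python
def emg_envelope(samples, window=5):
    """Rectify the samples and smooth them with a trailing moving average."""
    rectified = [abs(s) for s in samples]
    envelope = []
    running = 0.0
    for i, value in enumerate(rectified):
        running += value
        if i >= window:
            # Drop the sample that just left the averaging window.
            running -= rectified[i - window]
        envelope.append(running / min(i + 1, window))
    return envelope


def detect_contraction(samples, threshold, window=5):
    """Return one boolean per sample: True while the envelope exceeds threshold."""
    return [level > threshold for level in emg_envelope(samples, window)]
```

In practice the threshold would have to be calibrated per user and per electrode placement, since signal amplitude varies widely between individuals.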

1. Signal Processing

The data collected from the sensors and user inputs are processed by dedicated processors or microcontrollers. These processors analyze and interpret the incoming signals to extract meaningful information about the user's intentions and the required hand movements. Signal processing algorithms, such as pattern recognition or machine learning techniques, may be employed to translate the sensor data into specific hand movements.
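One simple form of pattern recognition that fits on a small controller is a nearest-centroid classifier: each gesture is represented by a stored feature vector, and a new reading is assigned to the closest one. The sketch below assumes two normalized sensor features and three hypothetical gesture labels; neither the labels nor the centroid values come from the paper.

```python
import math

# Hypothetical gesture centroids in a two-feature space (values are illustrative).
CENTROIDS = {
    "open": (0.1, 0.1),
    "close": (0.9, 0.9),
    "pinch": (0.9, 0.1),
}


def classify(features, centroids=CENTROIDS):
    """Return the label whose centroid is closest (Euclidean) to the features."""
    best_label = None
    best_distance = math.inf
    for label, center in centroids.items():
        distance = math.dist(features, center)
        if distance < best_distance:
            best_label, best_distance = label, distance
    return best_label
```

A reading of `(0.8, 0.2)` would be assigned to `"pinch"` with these centroids. More capable classifiers could be substituted without changing the rest of the pipeline.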

2. Motor Control

Once the user's intentions and desired hand movements are determined, the processed data is sent to the motor control system of the prosthetic hand. The motor control system consists of actuators, typically motors or servos, that drive the movement of the hand's fingers, thumb, and other components. The motor control system receives the processed data and generates appropriate control signals to activate the actuators, causing the hand to move and perform the desired grip or manipulation.
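The step from a chosen grip to actuator signals can be sketched as a lookup of per-finger target angles followed by a conversion to servo pulse widths. The sketch assumes a typical hobby servo that maps pulses of roughly 1000 to 2000 microseconds onto 0 to 180 degrees; real limits vary by servo model, and the grip table is hypothetical.

```python
# Hypothetical finger targets in degrees: (thumb, index, middle, ring, little).
GRIPS = {
    "release": (0, 0, 0, 0, 0),
    "hold": (180, 180, 180, 180, 180),
    "point": (180, 0, 180, 180, 180),
}


def angle_to_pulse_us(angle, min_us=1000, max_us=2000):
    """Convert an angle in degrees to a pulse width in microseconds.

    Angles outside 0..180 are clamped so the servo is never driven
    past its assumed mechanical range.
    """
    angle = max(0, min(180, angle))
    return round(min_us + (max_us - min_us) * angle / 180)


def grip_to_pulses(grip):
    """Return one pulse width per finger for the named grip."""
    return [angle_to_pulse_us(angle) for angle in GRIPS[grip]]
```

Keeping the grip table separate from the conversion makes it straightforward to add postures or to recalibrate the pulse limits for a different servo.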

3. Prosthetic Hand Operation

The control signals from the motor control system are then transmitted to the respective actuators in the prosthetic hand. The actuators drive the mechanical components, replicating the desired hand movements based on the user's intentions and sensor inputs. The prosthetic hand mimics gripping patterns, finger movements, and other functions of a natural hand, allowing the user to interact with objects and perform tasks. The continuous flow of data from sensors and user inputs, through the processors, to motor control provides real-time control and feedback for the prosthetic hand. This feedback loop allows the user to interact with the prosthetic hand naturally and intuitively, so that daily tasks and activities can be performed with greater ease.
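The sense, process, and actuate cycle described above can be sketched as a small loop that interprets each reading and drives the actuators only when the interpreted command changes. The function and parameter names are assumptions made for illustration; on real hardware the readings would come from sensors rather than a list.

```python
def run_control_loop(readings, interpret, actuate):
    """Process readings in order and actuate only when the command changes.

    readings  -- iterable of raw sensor values
    interpret -- function mapping a reading to a command, or None if unclear
    actuate   -- function called with each newly issued command
    Returns the list of commands that were issued.
    """
    last_command = None
    issued = []
    for reading in readings:
        command = interpret(reading)
        if command is not None and command != last_command:
            actuate(command)
            issued.append(command)
            last_command = command
    return issued


def interpret_bend(bend):
    """Hypothetical interpreter with a dead band between 0.4 and 0.6."""
    if bend > 0.6:
        return "close"
    if bend < 0.4:
        return "open"
    return None
```

The dead band in `interpret_bend` is one way to keep the hand from chattering between two commands when a reading hovers near a boundary.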

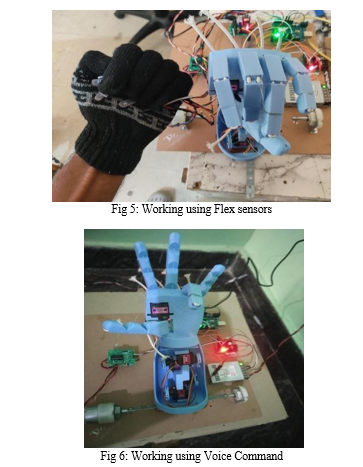

III. IMPLEMENTATION

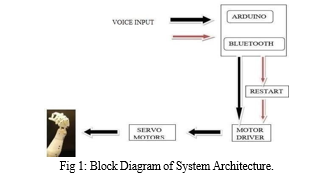

The controller was trained for different commands. Each command was trained from a distinct set of values sent from the flex sensors and the Bluetooth module to the Arduino (palm-connected sensor). The arm, driven by the Arduino, moves according to these commands. The commands were kept clearly distinguishable so that no overlap between them could confuse the microcontroller. Different arm movements, namely palm opening, palm closing, elbow up, and elbow down, were demonstrated. Testing was carried out with different motors, and the response time of each was calculated. The response time associated with a specific motor and a specific weight will help in building a prosthetic hand for a specific application.
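The requirement that commands must not overlap can be checked mechanically before the ranges are loaded onto the controller. The sketch below assumes each command is triggered by a closed range of 10-bit ADC readings (0 to 1023); the specific ranges are hypothetical and are not the values used in this project.

```python
from itertools import combinations

# Hypothetical sensor ranges (inclusive) for the four demonstrated movements.
COMMAND_RANGES = {
    "palm_open": (0, 299),
    "palm_close": (300, 599),
    "elbow_up": (600, 799),
    "elbow_down": (800, 1023),
}


def find_overlaps(ranges):
    """Return every pair of command names whose ranges share at least one value."""
    overlaps = []
    for (name_a, (lo_a, hi_a)), (name_b, (lo_b, hi_b)) in combinations(ranges.items(), 2):
        if lo_a <= hi_b and lo_b <= hi_a:
            overlaps.append((name_a, name_b))
    return overlaps


def command_for(value, ranges=COMMAND_RANGES):
    """Return the command whose range contains the value, or None."""
    for name, (lo, hi) in ranges.items():
        if lo <= value <= hi:
            return name
    return None
```

An empty result from `find_overlaps` means every reading maps to at most one command, which is the property the text asks for.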

The components used are an Arduino, a battery, servo motors, an HC-05 Bluetooth module, a motor driver, and a pin header. The pin header is a connector used to join the pins of the servo motors to the Arduino. All grounds are connected to the common ground of the Arduino. Power is supplied by a 9 V battery; because this alone is not sufficient, the Bluetooth module is powered from the Arduino power pin. The servo motors are powered separately because they draw more power. The signal wires are connected to the outputs of the H-bridge, and the inputs of the H-bridge are connected to the analog pins of the Arduino. The HC-05 Bluetooth module is connected to the Tx and Rx pins and to the power supply.
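Since the HC-05 delivers commands to the controller as bytes over a serial link, the receiving side needs a small parser that turns incoming text into one of the supported movements. The sketch below is a minimal example of such a parser; the keyword vocabulary is hypothetical, and only the four movement names follow the movements demonstrated in this section.

```python
# Hypothetical keywords mapped to the four demonstrated movements.
COMMANDS = {
    "open": "palm_open",
    "close": "palm_close",
    "up": "elbow_up",
    "down": "elbow_down",
}


def parse_command(raw):
    """Decode one received line and return the matching movement, or None.

    Non-ASCII bytes are dropped, and surrounding whitespace and letter
    case are ignored, so b" Open\r\n" and b"open" are treated alike.
    """
    text = raw.decode("ascii", errors="ignore").strip().lower()
    return COMMANDS.get(text)
```

Returning `None` for unknown input lets the caller ignore misrecognized words instead of moving the arm unexpectedly.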

V. RESULTS AND DISCUSSION

The prosthetic arm development project successfully implemented Bluetooth connectivity and voice control as a means of interaction. The Bluetooth module facilitated wireless communication between the prosthetic arm and external devices, enabling seamless control and command transmission. Voice input was integrated as a user-friendly control method, allowing users to provide commands verbally.

Prosthetic arms have undergone significant advancements in terms of their functionality. Modern prosthetic arms are designed to offer a wide range of movements and capabilities, including the ability to grasp objects, perform delicate tasks, and even provide sensory feedback to the user. One of the exciting areas of research is the development of neural interfaces that connect the prosthetic arm directly to the user's nerves and muscles. This technology allows individuals to control their prosthetic arms using their own neural signals, providing more intuitive and natural movement.

Many prosthetic arms use myoelectric control, which involves the use of electrodes placed on the user's residual limb to detect and interpret muscle signals. These signals are then used to control the movements of the prosthetic arm. Studies have shown that myoelectric control can provide precise and coordinated movements, enhancing the functionality of the prosthetic arm.

Prosthetic arms are becoming more customizable and adaptable to meet the specific needs and preferences of individual users. Components can be tailored to fit the user's residual limb, and the prosthetic arm can be adjusted or modified as the user's needs change. Prosthetic arms are benefiting from advancements in materials, robotics, and artificial intelligence. Technologies such as 3D printing allow for customized and lightweight prosthetic components, while robotics and AI contribute to more advanced and responsive arm movements.

The cost of prosthetic arms and their accessibility to all individuals in need continue to be significant factors of discussion. Efforts are being made to develop more affordable solutions, increase insurance coverage, and provide access to prosthetic arms in developing countries.

The integration of Bluetooth and voice control in the prosthetic arm significantly improved the user experience. By allowing users to control the arm wirelessly and effortlessly using their voice, the system eliminated the need for physical buttons or complex control interfaces. This intuitive control method facilitated a more natural and efficient interaction between the user and the prosthetic arm, enhancing overall usability and user satisfaction.

The incorporation of Bluetooth and voice control expanded the capabilities of the prosthetic arm, providing users with increased independence and functionality. By enabling wireless communication, users were able to operate the prosthetic arm from a distance, reducing the need for direct physical contact. Voice control added versatility, allowing users to trigger specific hand movements and gestures through spoken commands, enabling more precise control and a broader range of functionalities.

Research has focused on assessing the user experience and satisfaction with prosthetic arms. Studies have indicated that individuals who use prosthetic arms experience improved quality of life, increased independence, and enhanced psychological well-being. However, challenges such as socket discomfort, weight, and limited dexterity remain areas of concern.

Conclusion

The development of prosthetic arms has made significant progress over the years, revolutionizing the lives of individuals with limb loss or limb difference. Although the field continues to evolve and advance, several key conclusions can be drawn regarding the current state of prosthetic arm development.

Prosthetic arms have become increasingly sophisticated and capable of replicating natural arm movements and functionalities. Advancements in robotics, materials science, and sensor technology have enabled the creation of prosthetics that can perform a wide range of tasks, such as grasping objects with varying degrees of force, manipulating delicate items, and even providing sensory feedback. Through ongoing research, advancements in technology, and a deeper understanding of user needs, prosthetic arms have become increasingly functional, customizable, and integrated into daily life. They have succeeded in restoring functionality, promoting independence, and improving the overall quality of life for users with upper limb loss. The introduction of myoelectric control, neural interfaces, and sensory feedback has revolutionized the field, enabling more intuitive and natural movement. Customization options allow for individualized solutions that cater to specific needs and preferences.

Despite these advancements, challenges such as cost, accessibility, comfort, and limitations in replicating the full range of natural arm movement remain. However, ongoing efforts are being made to address these challenges, with a focus on affordability, improved fit, reduced weight, and advancements in materials and technology.

Looking ahead, the future of prosthetic arm development holds promise. Emerging technologies such as 3D printing, robotics, and artificial intelligence are likely to play significant roles in advancing prosthetic arms further. The integration of assistive technologies and the ongoing collaboration between researchers, clinicians, engineers, and users will continue to drive innovation and refinement in prosthetic arm design and functionality.

Copyright

Copyright © 2023 Aayeesha Najla J, Ullas J Naik , Akash R, Darshan H M, Dr. Aravinda H L. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET54607

Publish Date : 2023-07-04

ISSN : 2321-9653

Publisher Name : IJRASET
