Ijraset Journal For Research in Applied Science and Engineering Technology

Review on Human-Computer Interaction: An Evolution of Usability

Authors: Pooja Tekade, Himanshu Abhyankar, Prof. Rupali Tornekar

DOI Link: https://doi.org/10.22214/ijraset.2022.41414

Abstract

The goal of this paper is to give a broad overview of the field of Human-Computer Interaction. It covers the basic definitions and terminology, a survey of existing technologies and recent advances in the field, common architectures used in the design of HCI systems, including unimodal and multimodal configurations, and HCI applications. A major goal of human-computer interaction research is to make systems more usable and valuable, and to give users experiences tailored to their specific knowledge and goals. In an information-rich environment, the challenge is not just to make information available to individuals at any time, in any place, and in any form, but also to keep it up to date with current trends and demands.

I. INTRODUCTION

When personal computers (PCs) were first introduced in the 1980s, software development followed a "technology-centered" strategy that neglected the needs of ordinary users. As the popularity of the PC grew, so did concerns about user experience. Human-computer interaction (HCI) emerged from efforts driven largely by human factors, psychology, and computer science. HCI is an interdisciplinary field that uses a "human-centered design" approach to create computing solutions that meet users' needs.

Over its history, HCI has grown not only in the quality of interaction but also in the number of branches it covers. Instead of building standard interfaces, research areas have focused on multimodality versus unimodality, intelligent adaptive interfaces versus command/action-based interfaces, and active versus passive interfaces. One of the most significant shifts in design history is the shift from the generic human to the individual user. Through user experience (UX), design has transformed practically every encounter in the modern world, whether it is depositing a check, calling a taxi, or connecting with a friend. It has also helped make technology a more natural part of our lives, accessible to everyone from toddlers to the visually impaired.

This paper gives an overview of the current state of HCI systems and covers the major branches indicated above, the evolution of user experience, and the foreseeable future. The next section covers the literature review conducted to gather the relevant information. The overview section then describes current technologies and recent advances in HCI. After that, the various architectures of HCI designs are discussed. The final sections cover the evolution of UX and the future.

II. LITERATURE REVIEW

A system's actual efficacy is realized when its functionality and usability are properly balanced. This paper focuses primarily on advances in the physical aspects of interaction, with the goal of showing how different methods of interaction can be combined (multimodal interaction) [17] [24] and how the performance of each method can be improved (intelligent interaction) [13] [16] to give the user a better and easier interface. Existing physical technologies for HCI can be classified by the human sense the device is designed for. These devices rely on three human senses: vision, audition, and touch.

We further discuss the efficacy of a system, which is attained when its functionality and usability are properly balanced [1] [3]. The literature describes three levels of user activity: physical [5], cognitive [6], and affective [7]. The physical aspect establishes the mechanics of human-computer interaction, whereas the cognitive aspect addresses how users can understand and engage with the system. The affective aspect aims to keep users using the machine by influencing their attitudes and emotions. We found that the number and variety of an interface's inputs and outputs, which serve as communication channels, are the most important factors. These channels are called modalities. A system is either unimodal or multimodal depending on the number of modalities. We studied applications of unimodal HCI, including visual-based HCI [15], audio-based HCI [12], and sensor-based HCI [8]. We also looked at multimodal HCI applications such as smart video conferencing [27], intelligent homes [25], and assisting people with disabilities [26].

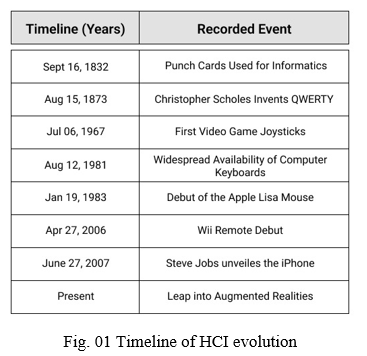

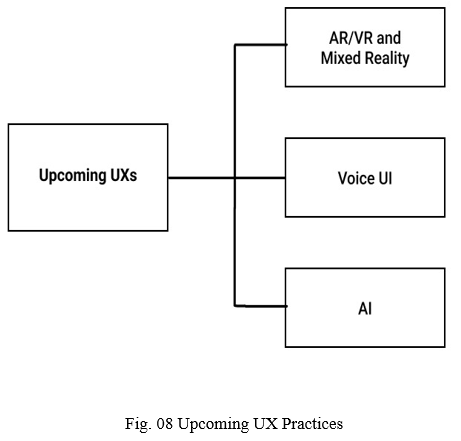

We also discuss the rise of user experience and how it has influenced design systems and the way people interact with technology, along with the accomplishments in this area and the impact of recent events. We also looked at the whole user experience delivery process, from discovery to definition through design and development. The table below shows the timeline of evolution of HCI and the events that shaped it.

III. OVERVIEW

In the beginning, computers were physically separate items from the rest of our environment. To communicate with them, a user told a keypunch operator what they wanted to accomplish, and the keypunch operator punched holes in a card and passed it to a separate computer operator, who fed it to the computer. They'd wait together for the results to be printed.

Early computers were closer to a new kind of life than they were to being a part of ordinary life. Early designers were faced with the task of figuring out how to interact with this new living form. Designers coined the term "human-computer interaction" to describe their fledgling discipline, and the interfaces they created were named "human-computer interfaces."

The concept of user experience did not emerge until the dawn of the digital age. Computers evolved from specialist tools to an integrated element of everyday life as they became more portable, linked, and simple to operate. And the language of design shifted: the emphasis was now on creating for every potential user and experience.

Developments in human-computer interaction (HCI) over the last decade have made it nearly impossible to distinguish between what is fiction and what is, or can be, real. The momentum of research and constant shifts in marketing make new technology available to everyone in no time. However, not all existing technologies are accessible or affordable to the general public. The first part of this section provides an overview of the technology that is more or less available to and used by the general public. The second part outlines the direction in which HCI research is headed.

A. Defining HCI

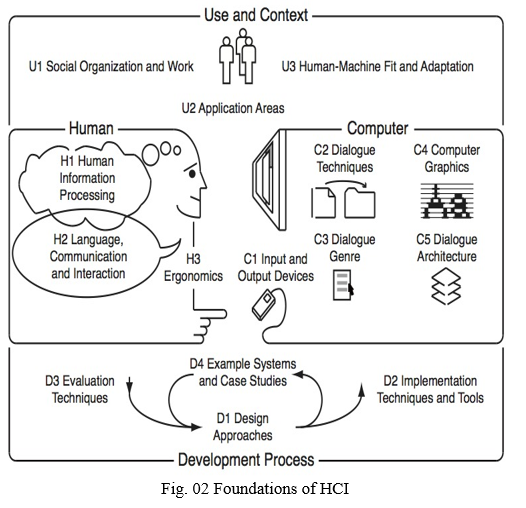

The notion of Human-Computer Interaction/Interfacing (HCI), also known as Man-Machine Interaction or Interfacing, emerged naturally with the development of the computer, or more broadly, the machine itself. The reason is clear: even the most advanced technology is useless unless people can operate it effectively. This basic argument gives the two key terms to consider when designing HCI: functionality and usability.

Why a system is developed is ultimately defined by what it can accomplish, i.e. how its functions help fulfill its purpose. The set of actions or services that a system provides to its users defines its functionality. The value of functionality, however, is apparent only when the user can use it effectively. The usability of a system with a given functionality is the range and degree to which the system can be used efficiently and adequately to accomplish certain goals for certain users. A system's actual efficacy is realized when its functionality and usability are properly balanced.
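One hedged way to make this definition of usability concrete is to measure it from observed task attempts. The sketch below assumes two commonly used measures that the paper does not itself define: effectiveness (the share of attempts that reach the goal) and efficiency (the mean time of the successful attempts). The `TaskAttempt` record, both function names, and the sample data are illustrative only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskAttempt:
    """One observed attempt by a user to reach a goal (hypothetical record)."""
    user: str
    completed: bool
    seconds: float


def effectiveness(attempts):
    """Fraction of attempts in which the user reached the goal."""
    if not attempts:
        raise ValueError("no attempts recorded")
    return sum(a.completed for a in attempts) / len(attempts)


def efficiency(attempts):
    """Mean time in seconds over successful attempts, or None if none succeeded."""
    times = [a.seconds for a in attempts if a.completed]
    return mean(times) if times else None


# Made-up observations for illustration.
attempts = [
    TaskAttempt("u1", True, 30.0),
    TaskAttempt("u2", True, 50.0),
    TaskAttempt("u3", False, 90.0),
    TaskAttempt("u4", True, 40.0),
]
print(effectiveness(attempts))  # 0.75
print(efficiency(attempts))     # 40.0
```

Keeping the two measures separate mirrors the text: a system can offer rich functionality yet score poorly on either measure for a particular group of users.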

With these concepts in mind, and keeping in mind that the terms computer, machine, and system are frequently used interchangeably in this context, HCI is a design that aims to achieve a fit between the user, the machine, and the required services in order to achieve a certain level of quality and optimality in the services.

It's primarily subjective and context-dependent to determine what constitutes an effective HCI design. An aviation component designing tool, for example, should enable great precision in the perspective and design of the parts, but graphics editing software may not. The available technology may also influence how various forms of HCI for the same goal are built.

B. Methodology

HCI design should be practical and take into account a wide range of human behaviors. The degree of human involvement in an interaction with a machine is sometimes hidden by the simplicity of the interaction method itself. The complexity of existing interfaces varies with the degree of functionality or usability as well as the financial and commercial positioning of the machine on the market. An electric kettle, for example, does not require a complicated user interface because its sole purpose is to heat water, and anything more than a thermostatic on/off switch would be impractical. A simple website with limited functionality, on the other hand, must be sophisticated enough in usability to attract and retain customers.

As a result, when designing HCI, the degree of activity that a user has with a machine should be carefully considered. User activity has three levels: physical, cognitive, and affective. The physical aspect establishes the mechanics of human-computer interaction, whereas the cognitive aspect addresses how users can understand and engage with the system. The affective aspect is a more recent concern; it aims to keep the user using the machine by influencing the user's attitudes and emotions. Existing physical technologies for HCI can be classified by the human sense the device is designed for. These devices rely on three human senses: vision, audition, and touch.

The most common type of input device relies on vision and is either switch-based or a pointing device. A switch-based device is any interface that uses buttons and switches, such as a keyboard. Pointing devices include mice, joysticks, touch screen panels, graphics tablets, trackballs, and pen-based input. Joysticks can be used for both switching and pointing. Any type of visual display or printing device can serve as an output device.

Recent HCI approaches and technologies attempt to combine traditional means of interaction with newer technologies such as networking and animation. These new advances fall into three categories: wearable devices, wireless devices, and virtual devices. Technology is progressing so rapidly that the boundaries between these categories are blurring and merging. Examples include GPS navigation systems, military super-soldier enhancing devices (e.g. thermal vision, tracking other soldiers' movements using GPS, and environmental scanning), radio frequency identification (RFID) products, personal digital assistants (PDAs), and virtual tours for real estate businesses.

IV. ADVANCES IN HCI

The sections that follow cover recent research directions and advances in HCI, in particular intelligent and adaptive interfaces and ubiquitous computing. These interactions involve physical, cognitive, and affective activity.

A. Intelligent and Adaptive HCI

Although most devices used by the public are still simple command/action setups with relatively unsophisticated physical hardware, research is increasingly focused on intelligent and adaptive interfaces. The exact theoretical definition of intelligence, or of being smart, is unknown or at least not universally agreed upon. These concepts can, however, be described by the evident growth and improvement in the functionality and usability of new devices on the market.

As previously said, it is economically and technologically critical to create HCI designs that make users' lives easier, more joyful, and rewarding. Every day, the interfaces are becoming more natural to use in order to achieve this aim. An excellent illustration is the evolution of note-taking tool interfaces. There were typewriters, then keyboards, and now touch screen tablet PCs where you may write with your own handwriting and have it recognised and converted to text, as well as tools that automatically transcribe everything you say so you don't have to write at all.

A key distinction in the next generation of interfaces is between using intelligence in the making of the interface (Intelligent HCI) and using it in the way the interface interacts with users (Adaptive HCI). Intelligent HCI designs are user interfaces that incorporate intelligence in how they perceive and/or respond to users. Two examples are speech-enabled interfaces that interact with users in natural language, and devices that visually track the user's movements or gaze and respond accordingly.

Adaptive HCI designs, on the other hand, may not use intelligence in the creation of the interface but do use it in the way they continue to interact with users. An example of adaptive HCI is a website that sells various products through a standard GUI. This website would be adaptive, to a degree, if it could recognize the user and remember their searches and purchases, and could intelligently search for, find, and suggest products on sale that it believes the user might need. Most of these adaptations concern the cognitive and affective levels of user activity.
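The adaptive shop described above can be sketched in a few lines. This is only a toy illustration under stated assumptions: the `AdaptiveShop` class, its catalogue format, and the ranking rule (favour on-sale items from the categories a user has engaged with most) are hypothetical and are not taken from any system the paper cites.

```python
from collections import Counter


class AdaptiveShop:
    """Toy catalogue that adapts its suggestions to each user's history."""

    def __init__(self, catalogue):
        # catalogue maps item name -> (category, on_sale flag); hypothetical data
        self.catalogue = catalogue
        self.history = {}  # user id -> Counter of categories the user engaged with

    def record(self, user, item):
        """Remember that the user searched for or bought an item."""
        category, _ = self.catalogue[item]
        self.history.setdefault(user, Counter())[category] += 1

    def suggest(self, user, limit=3):
        """Suggest on-sale items, favouring categories the user engaged with most."""
        seen = self.history.get(user, Counter())
        on_sale = [
            (item, category)
            for item, (category, sale) in self.catalogue.items()
            if sale
        ]
        # Sort by descending interest count, then by name for a stable order.
        on_sale.sort(key=lambda pair: (-seen[pair[1]], pair[0]))
        return [item for item, _ in on_sale[:limit]]


shop = AdaptiveShop({
    "tent": ("camping", True),
    "stove": ("camping", False),
    "novel": ("books", True),
    "lamp": ("home", True),
})
shop.record("alice", "stove")
print(shop.suggest("alice", limit=2))  # ['tent', 'lamp']
```

The interface itself stays a plain list of products; only the ordering changes as the history grows, which is the sense in which the text calls such a site adaptive rather than intelligent.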

B. Ubiquitous Computing and Ambient Intelligence

The most recent research direction in human-computer interaction is undeniably ubiquitous computing (Ubicomp). The term, which is often used interchangeably with ambient intelligence and pervasive computing, refers to the ultimate form of human-computer interaction: eliminating the desktop and embedding the computer in the environment, so that it becomes invisible to people while surrounding them everywhere, hence the term ambient.

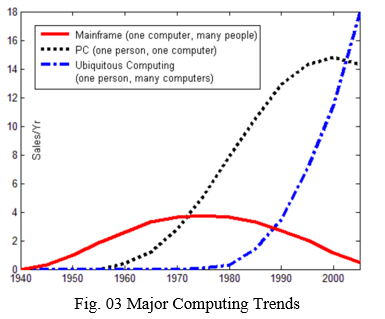

Mark Weiser, then the head technologist of Xerox PARC's Computer Science Lab, first introduced the concept of ubiquitous computing in 1988. His idea was to embed computers in the environment and in everyday objects so that people could interact with many computers at the same time without being aware of their presence, while the computers communicate wirelessly.

Ubicomp has been dubbed the Third Wave of computing. The First Wave was the mainframe era, when many people shared a single computer. The Second Wave was the PC era of one person, one computer. Ubicomp now introduces the era of many computers per person.

V. HCI SYSTEM ARCHITECTURE

Configuration is one of the most important aspects of HCI design. In fact, the number and diversity of inputs and outputs are what define any given interface. The main purpose of an HCI system architecture is to show how these inputs and outputs work together. The following sections describe the different configurations and designs on which interfaces are based.

A. Unimodal HCI Systems

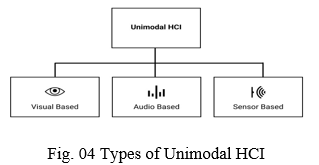

As previously stated, the number and diversity of an interface's inputs and outputs, which are the communication channels through which users interact with a computer, are its most important characteristics. Each independent single channel is called a modality. A system based on only one modality is called unimodal.

Different modalities can be classified into three categories based on their nature:

- Visual Based HCI

- Sensor Based HCI

- Audio Based HCI

The following subsections describe each category with examples and references.
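As a minimal sketch of the definition above, an interface can be labelled by counting its distinct modalities. The `Modality` enum and the `classify_interface` function are illustrative names introduced here, and treating the three categories as the only possible channels is a simplifying assumption.

```python
from enum import Enum


class Modality(Enum):
    """The three modality categories listed above."""
    VISUAL = "visual"
    SENSOR = "sensor"
    AUDIO = "audio"


def classify_interface(modalities):
    """Return 'unimodal' for one distinct modality, 'multimodal' for more."""
    distinct = set(modalities)
    if not distinct:
        raise ValueError("an interface needs at least one modality")
    return "unimodal" if len(distinct) == 1 else "multimodal"


print(classify_interface([Modality.AUDIO]))                   # unimodal
print(classify_interface([Modality.AUDIO, Modality.VISUAL]))  # multimodal
```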

B. Visual Based HCI

Visual-based human-computer interaction is perhaps the most widespread area of HCI research. Given the breadth of applications and the variety of open problems and approaches, researchers have tried to address different aspects of human responses that can be recognized as a visual signal. The main research areas are:

- Facial Expression Analysis

- Body Movement Tracking (Large-scale)

- Gesture Recognition

- Gaze Detection (Eyes Movement Tracking)

While the purpose of each area varies with the application, a general picture of each can be drawn. Facial expression analysis deals with the visual recognition of emotions. Body movement tracking and gesture recognition are usually the main focus of this area; they can serve different purposes but are most commonly used for direct human-computer interaction in a command-and-control scenario. Gaze detection is mostly an indirect form of user-machine interaction, used to better understand the user's attention, intent, or focus in context-sensitive situations. The exception is eye tracking systems for people with disabilities, where eye tracking plays a central role in command and control.

C. Sensor Based HCI

This section combines a number of different topics with a wide range of applications. The common thread running through all of these domains is the use of at least one physical sensor to facilitate interaction between the user and the machine. These sensors, as seen below, might be either simple or extremely complex.

- Pen-Based Interaction

- Mouse & Keyboard

- Joysticks

- Motion Tracking Sensors and Digitizers

- Haptic Sensors

- Pressure Sensors

- Taste/Smell Sensors

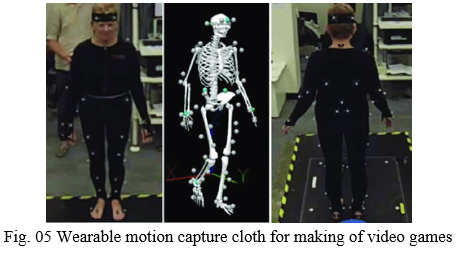

Some of these sensors have been around for a long time, while others are very new. Pen-based sensors are of particular interest for mobile devices and are related to pen gestures and handwriting recognition. Motion tracking sensors and digitizers are cutting-edge technology that has transformed the film, animation, art, and video game industries.

They take the shape of wearable cloth or joint sensors, allowing computers to interact with reality and humans to digitally create their own world. Haptic and pressure sensors are particularly interesting for robotics and virtual reality applications. Hundreds of haptic sensors are built into new humanoid robots, making them touch sensitive and aware. Medical surgery applications also use these types of sensors. Taste and smell sensors are also the subject of some research, but they are not as well-known as other areas.

D. Audio Based HCI

Audio-based interaction between a computer and a human is another key area of HCI systems. This area deals with information acquired from different audio signals. Although audio signals may be less varied than visual signals, the information gathered from them can be more dependable and useful, and in some cases it is unique. Research in this area can be divided into the following categories:

- Speech Recognition

- Speaker Recognition

- Auditory Emotion Analysis

- Human-Made Noise or Sign Detections

- Musical Interaction

Researchers have traditionally focused on speech recognition and speaker recognition. Recent efforts to integrate human emotions into intelligent human-computer interaction prompted the analysis of emotions in audio signals. Besides the tone and pitch of speech, typical human auditory signs such as sighs and gasps have helped emotion analysis in designing more intelligent HCI systems. Music generation and interaction is a relatively new area of HCI with applications in the arts, and it is studied in both audio-based and visual-based HCI systems.

VI. MULTIMODAL HCI SYSTEMS

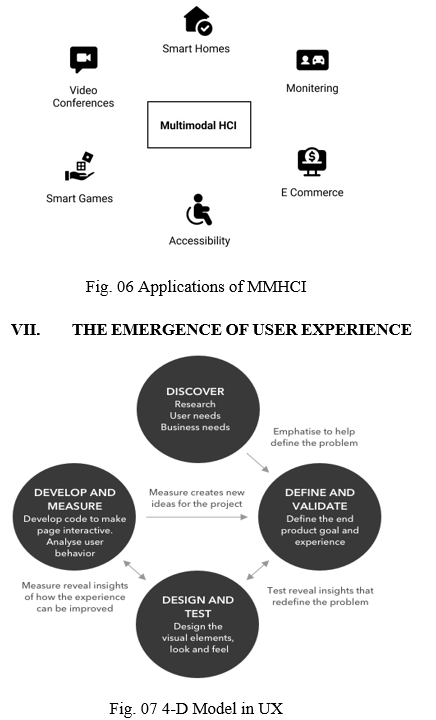

The term "multimodal" refers to the combination of multiple modalities. In multimodal HCI (MMHCI) systems, these modalities generally refer to the ways the system responds to inputs, i.e. communication channels. These channels are defined by the human forms of communication, which are essentially the senses: sight, hearing, touch, smell, and taste. The possibilities for interaction with a machine include, but are not limited to, these types.

A multimodal interface therefore facilitates human-computer interaction by supporting two or more modes of input beyond the traditional keyboard and mouse. The number of supported input modes, their types, and how they work together may differ widely from one multimodal system to the next. Multimodal interfaces incorporate combinations of speech, gesture, gaze, facial expressions, and other non-conventional modes of input. Gesture and speech form one of the most widely used combinations. Although an ideal multimodal HCI system would combine single modalities that interact correlatively, the practical limits and open problems of each modality restrict how different modalities can be fused. Despite all the progress made in MMHCI, most existing multimodal systems still treat the modalities separately and combine the results of the individual modalities only at the end.
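Combining the results of separately processed modalities only at the end is often called late (decision-level) fusion. The sketch below shows one simple variant, a weighted average of per-modality confidence scores; the `late_fusion` function, the weighting scheme, and the sample scores are assumptions made for illustration, not a method described in the paper.

```python
def late_fusion(scores_by_modality, weights=None):
    """Combine per-modality confidence scores into one decision.

    scores_by_modality maps a modality name to {command: confidence}.
    Each modality is assumed to have been recognised independently.
    """
    if not scores_by_modality:
        raise ValueError("no modality results to fuse")
    # Default to equal weights when none are supplied.
    weights = weights or {name: 1.0 for name in scores_by_modality}
    fused = {}
    for name, scores in scores_by_modality.items():
        for command, confidence in scores.items():
            fused[command] = fused.get(command, 0.0) + weights[name] * confidence
    # Normalise by the total weight so fused scores stay on the same scale.
    total_weight = sum(weights[name] for name in scores_by_modality)
    fused = {command: value / total_weight for command, value in fused.items()}
    best = max(fused, key=fused.get)
    return best, fused


speech = {"open": 0.5, "close": 0.5}     # speech recogniser is undecided
gesture = {"open": 0.75, "close": 0.25}  # gesture recogniser favours "open"
best, fused = late_fusion({"speech": speech, "gesture": gesture})
print(best)  # open
```

Here the gesture channel breaks the tie left by the speech channel, a small instance of one modality helping another even though each was recognised on its own.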

The collaboration of several modalities to aid recognition is an intriguing element of multimodality. Lip movement tracking (visual) can aid speech recognition methods (audio), and voice recognition methods (audio) can aid command acquisition in gesture recognition (visual-based). The following section illustrates some of the uses for intelligent multimodal systems.

A. Applications

In comparison to standard interfaces, multimodal interfaces can provide a variety of benefits. They can, for example, provide a more natural and user-friendly experience. Multimodal interfaces provide users with a natural experience by allowing them to pick an object with a pointing gesture and then ask questions about it using speech. Multimodal interfaces' ability to provide redundancy to accommodate diverse persons and situations is another major strength. Here are a few more examples of multimodal system applications:

- Smart Video Conferencing

- Intelligent Homes/Offices

- Driver Monitoring

- Intelligent Games

- E-Commerce

- Helping People with Disabilities

VII. EVOLUTION OF USER EXPERIENCE

A. Personal Computer Revolution

The PC revolution of the 1980s increased the pressure on the computer industry to improve product usability. There was certainly a need for usability before personal computing, which is why the number of UX personnel rose by a factor of 100 during the mainframe period. However, mainframes were corporate computers, which meant that the people who used the computer were not the ones who bought it. As a result, the computer industry had little incentive to create high-quality user interfaces in those early days. With personal computers, the user and the buyer were the same person, so user experience had a direct influence on buying decisions. In addition, a large trade press reviewed all new software packages, generally focusing on their usability.

B. Web Revolution

Companies came under even more pressure to improve the quality of their interface design during the web revolution of the 1990s and 2000s. With typical PC software, payment and user experience occurred in the following order:

- You purchased a shrink-wrapped software box.

- You took the software out of the box, installed it, and then discovered it was difficult to operate.

In other words, in the case of PC software, money came first, followed by user experience. The sequence of these two stages was reversed with websites:

a. You visit the company's website. You navigate the site if it makes sense. If you can find your way, you eventually arrive at the page for a product you might be interested in. If the information on the product pages appears relevant to you, you may move on to the next stage.

b. You add items to your basket, proceed through the checkout procedure, and eventually pay the firm.

On the web, the user experience comes first, followed by money. Making UX the gatekeeper of the money greatly increased executives' willingness to invest in their UX teams.

C. Dot Com Bubble

Usability became the "hot new thing" thanks to extensive press coverage. Strong favorable PR for UX, particularly during the dot-com bubble, led many organizations to conclude "we need some of that."

VIII. THE FUTURE OF HCI

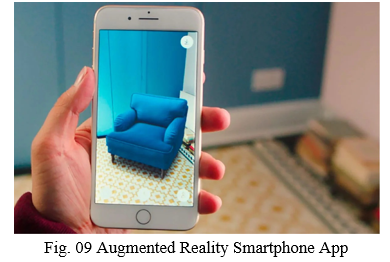

A. Augmented, Virtual and Mixed Reality

Augmented reality (AR) is a virtual layer that overlays the real world, enhancing real-world experiences and reacting quickly to changes in the user's environment. AR apps such as Snapchat and Pokémon Go became hits within days of their debut, which suggests that augmented reality is a significant advance in human interaction. In the future, AR will no longer be limited to smartphones and tablets but will also extend to wearable devices.

Augmented reality (AR) is separate from virtual reality (VR) and mixed reality (MR). Users are immersed in a completely simulated world with created components and objects in virtual reality. Mixed reality, also known as hybrid reality, combines the virtual and actual worlds.

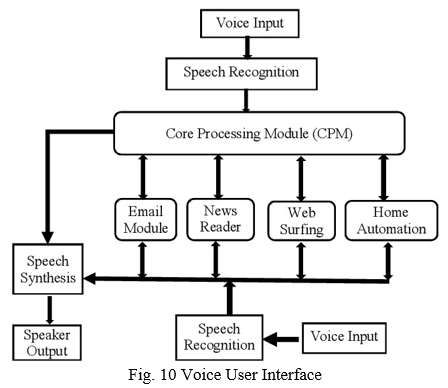

B. Voice User Interfaces

Voice user interfaces (VUIs) allow users to operate apps and devices using their voices, and they are changing the way people engage with products. For several years, consumers have used speech recognition to control devices and assistants such as Google Home, Alexa, Cortana, and Siri. VUIs are becoming increasingly important in helping users with day-to-day tasks, such as setting an alarm or scheduling an appointment.

The future of user interfaces isn't limited to physical screens, as VUIs illustrate. Digital assistants and smart speakers are growing increasingly popular among consumers, according to Adobe's analytics survey, and will continue to do so in the future.

Current voice assistants, however, have some limitations. Because they do not take human emotions into account, users feel they are interacting with a device rather than a real person. However, films have shown remarkable speech-based interactions, such as J.A.R.V.I.S. in Iron Man and Samantha in Her, and it seems likely that voice assistants will become users' companions in the not-too-distant future.

C. Artificial Intelligence

Artificial intelligence (AI) is a branch of computer science that enables the creation of intelligent machines that can eventually mimic human behavior. Artificial intelligence has the ability to think, learn, and complete tasks independently. It learns from human behavior in its environment, just like UX experts learn from watching people execute their activities.

With the advent of chatbots and voice assistants like Siri, Google Assistant, and Alexa, AI is transforming how people interact with digital devices. Beyond improving human-device interaction, AI has helped guide product design by analyzing historical and current data to predict users' future behavior and reduce the effort they need to complete their tasks.

AI is also helping UX designers analyze the large volumes of data obtained from UX research, allowing them to devote more time and effort to design rather than data analysis. AI-powered tools are also helping to increase sales and user engagement.

D. Design For Accessibility

Millions of people throughout the world live with a variety of impairments, including visual, speech, hearing, cognitive, physical, and mental health conditions. When building products, we as UX designers must bridge this gap. Designers should put themselves in the shoes of these users, interview people with impairments, and learn about their pain points when using technology products, as well as which features could help them use digital products more efficiently. In recent years, several firms have become more aware of the need for accessibility and are creating products with everyone in mind. Many features and solutions are now available to assist people with impairments in their daily tasks.

In the future, improved user experiences will make products easier for these users to use.

Conclusion

Human-Computer Interaction (HCI) is a crucial component of system design. The quality of a system depends on how it is presented to and used by its users. As a result, better HCI designs have received a great deal of attention. Current research aims to replace conventional methods of interaction with intelligent, adaptive, multimodal, and natural ones. The Third Wave, also known as ambient intelligence or ubiquitous computing, aims to embed technology into the environment to make it more natural and invisible. Virtual reality is an emerging area of HCI that has the potential to become the common interface of the future. Through a detailed reference list, this paper has tried to provide an overview of these issues as well as a survey of previous research.

References

[1] D. Te’eni, J. Carey and P. Zhang, Human Computer Interaction: Developing Effective Organizational Information Systems, John Wiley & Sons, Hoboken (2007).

[2] B. Shneiderman and C. Plaisant, Designing the User Interface: Strategies for Effective Human-Computer Interaction (4th edition), Pearson/Addison-Wesley, Boston (2004).

[3] J. Nielsen, Usability Engineering, Morgan Kaufmann, San Francisco (1994).

[4] D. Te’eni, “Designs that fit: an overview of fit conceptualization in HCI”, in P. Zhang and D. Galletta (eds), Human-Computer Interaction and Management Information Systems: Foundations, M.E. Sharpe, Armonk (2006).

[5] A. Chapanis, Man Machine Engineering, Wadsworth, Belmont (1965).

[6] D. Norman, “Cognitive Engineering”, in D. Norman and S. Draper (eds), User Centered Design: New Perspective on Human-Computer Interaction, Lawrence Erlbaum, Hillsdale (1986).

[7] R.W. Picard, Affective Computing, MIT Press, Cambridge (1997).

[8] J.S. Greenstein, “Pointing devices”, in M.G. Helander, T.K. Landauer and P. Prabhu (eds), Handbook of Human-Computer Interaction, Elsevier Science, Amsterdam (1997).

[9] B.A. Myers, “A brief history of human-computer interaction technology”, ACM interactions, 5(2), pp 44-54 (1998).

[10] B. Shneiderman, Designing the User Interface: Strategies for Effective Human-Computer Interaction (3rd edition), Addison Wesley Longman, Reading (1998).

[11] A. Murata, “An experimental evaluation of mouse, joystick, joycard, lightpen, trackball and touchscreen for Pointing - Basic Study on Human Interface Design”, Proceedings of the Fourth International Conference on Human-Computer Interaction 1991, pp 123-127 (1991).

[12] L.R. Rabiner, Fundamentals of Speech Recognition, Prentice Hall, Englewood Cliffs (1993).

[13] M.T. Maybury and W. Wahlster, Readings in Intelligent User Interfaces, Morgan Kaufmann Press, San Francisco (1998).

[14] S.L. Oviatt, P. Cohen, L. Wu, J. Vergo, L. Duncan, B. Suhm, J. Bers, T. Holzman, T. Winograd, J. Landay, J. Larson and D. Ferro, “Designing the user interface for multimodal speech and pen-based gesture applications: state-of-the-art systems and future research directions”, Human-Computer Interaction, 15, pp 263-322 (2000).

[15] D.M. Gavrila, “The visual analysis of human movement: a survey”, Computer Vision and Image Understanding, 73(1), pp 82-98 (1999).

[16] L.E. Sibert and R.J.K. Jacob, “Evaluation of eye gaze interaction”, Conference of Human-Factors in Computing Systems, pp 281-288 (2000).

[17] A. Jaimes and N. Sebe, “Multimodal human computer interaction: a survey”, Computer Vision and Image Understanding, 108(1-2), pp 116-134 (2007).

[18] I. Cohen, N. Sebe, A. Garg, L. Chen and T.S. Huang, “Facial expression recognition from video sequences: temporal and static modeling”, Computer Vision and Image Understanding, 91(1-2), pp 160-187 (2003).

[19] M. Pantic and L.J.M. Rothkrantz, “Automatic analysis of facial expressions: the state of the art”, IEEE Transactions on PAMI, 22(12), pp 1424-1445 (2000).

[20] J.K. Aggarwal and Q. Cai, “Human motion analysis: a review”, Computer Vision and Image Understanding, 73(3), pp 428-440 (1999).

[21] T. Kirishima, K. Sato and K. Chihara, “Real-time gesture recognition by learning and selective control of visual interest points”, IEEE Transactions on PAMI, 27(3), pp 351-364 (2005).

[22] P.Y. Oudeyer, “The production and recognition of emotions in speech: features and algorithms”, International Journal of Human-Computer Studies, 59(1-2), pp 157-183 (2003).

[23] L.S. Chen, Joint Processing of Audio-Visual Information for the Recognition of Emotional Expressions in Human-Computer Interaction, PhD thesis, UIUC (2000).

[24] S. Oviatt, “Multimodal interfaces”, in J.A. Jacko and A. Sears (eds), The Human-Computer Interaction Handbook: Fundamentals, Evolving Technologies, and Emerging Application, Lawrence Erlbaum Associates, Mahwah (2003).

[25] S. Meyer and A. Rakotonirainy, “A Survey of research on context-aware homes”, Australasian Information Security Workshop Conference on ACSW Frontiers, pp 159-168 (2003).

[26] Y. Kuno, N. Shimada and Y. Shirai, “Look where you’re going: a robotic wheelchair based on the integration of human and environmental observations”, IEEE Robotics and Automation, 10(1), pp 26-34 (2003).

[27] I. McCowan, D. Gatica-Perez, S. Bengio, G. Lathoud, M. Barnard and D. Zhang, “Automatic analysis of multimodal group actions in meetings”, IEEE Transactions on PAMI, 27(3), pp 305-317 (2005).

Copyright

Copyright © 2022 Pooja Tekade, Himanshu Abhyankar, Prof. Rupali Tornekar. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET41414

Publish Date : 2022-04-12

ISSN : 2321-9653

Publisher Name : IJRASET
