Ijraset Journal For Research in Applied Science and Engineering Technology


Implementation of Virtual Keyboard Using Hand Gestures

Authors: Nuzhat Naaz, G. Praveen Babu

DOI Link: https://doi.org/10.22214/ijraset.2023.55863


Abstract

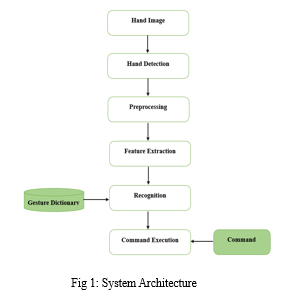

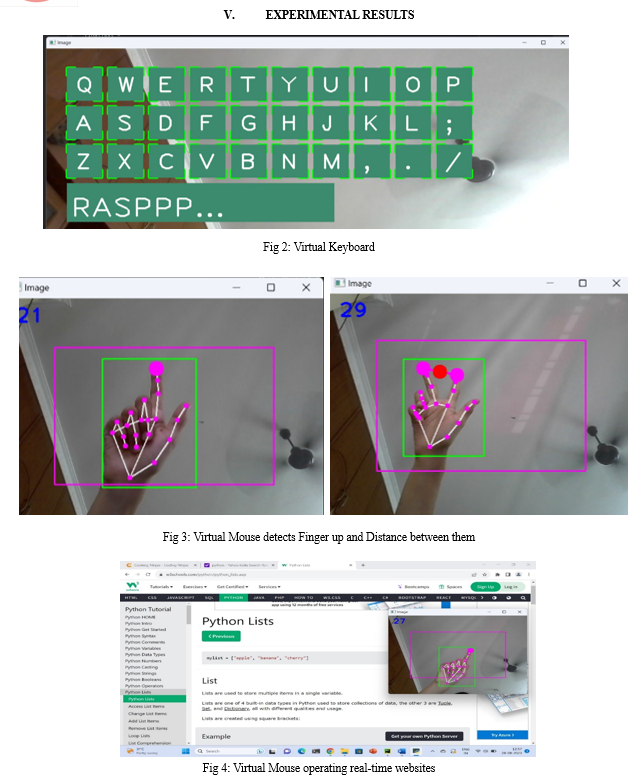

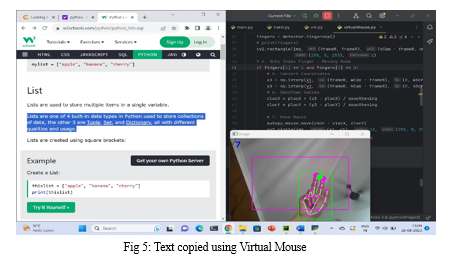

Human interaction with computers is increasing every day with advances in technology, and the ways of interacting with computers have become an important factor in choosing devices. Users are increasingly interested in lightweight, touchless technologies that make their work easier. This paper proposes a virtual keyboard and mouse that act as a touchless input medium for computers and eliminate the need for a physical keyboard and mouse. The virtual keyboard and mouse perform their functions by recognizing hand gestures. The project is built using Python, OpenCV, and MediaPipe, which provide features for hand-gesture control, and it requires only a computer or laptop with a camera. A customized keyboard layout is displayed on the computer screen, and the built-in camera captures the hands and their movements over the virtual keyboard layout. A key is highlighted when the forefinger hovers over it, and when the tips of the forefinger and middle finger meet, the key is clicked; it is then taken as input to the computer and displayed on the screen. The virtual mouse is designed to function like a physical mouse based on hand gestures. It recognizes the fingertip of the forefinger and treats the movement of that finger as cursor movement. When the forefinger points at an element on the screen, the element is highlighted as if the cursor were placed over it, and bringing the tips of the forefinger and middle finger together acts as a click.


I. INTRODUCTION

Computing devices have a long history of changing the world and making lives easier and faster. Devices perform functions in response to user requirements, which are given to them as user input. There are multiple media for providing input to computing devices, such as keyboards, mice, and scanners. A traditional keyboard and mouse require additional hardware and space, which has led to the need for a virtual input medium that requires neither special hardware nor extra space. This paper explains how a virtual keyboard and mouse can be created and how they can benefit users.

Furthermore, natural human communication is inherently intertwined with body language: information is conveyed through language, poses, and gestures. Establishing an uncomplicated, natural, and effective method for human-machine interaction is therefore currently a focal point for researchers. A virtual input mechanism allows users to enter text or control a computer by simulating a physical keyboard and mouse through image processing techniques provided by the OpenCV library. It serves as an alternative input method, particularly useful for touchless interfaces, accessibility applications, and situations where a physical keyboard is unavailable or impractical. The core process involves displaying a graphical representation of keys on the screen, analyzing user interactions such as mouse clicks or touch gestures, and translating these interactions into meaningful characters or actions. Combining visual interface design, image processing, and user interaction yields an adaptable, versatile tool that can serve a range of applications and improve accessibility and usability in diverse computing environments.
The results of the experiments conducted in the specific experimental environment provide insight into the effectiveness of the chosen algorithms, the implementation of the customized keyboard and mouse, and their impact on gesture recognition accuracy and user experience.

II. LITERATURE SURVEY

A. “A Virtual Keyboard Implementation Based on Finger Recognition”

This paper highlights the necessity of keyboards in computing systems and the difficulties of using a physical keyboard. The keyboard is one of the most popular ways to provide input to a system, but physical keyboards require additional hardware and space: users must carry them from place to place and find extra room for them. The authors of this paper therefore worked to develop a virtual keyboard.

First, the keyboard is written or printed on plain paper, and a snapshot of it is embedded in the system. The paper must be fixed on a flat surface, such as a wall. The device's camera is enabled to detect fingers, and it locates fingertips based on human skin tone. The user places a finger on the key to be entered and holds it there for some time; if the finger stays on a letter or digit long enough, that key is taken as input. According to the author, the keyboard is environmentally friendly, and because it requires only a piece of paper, it is economical. Its accuracy is claimed to be 94.62% [2]. Limitations include heavy computational requirements, and because the keyboard is a loose sheet of paper with no fixed place, it can easily be lost.

The model also needs proper lighting and a fixed flat surface to work properly. Because the user must wait longer than usual each time for a key press to register, typing is very time consuming, and a document can take hours to type. Below is a representation of the author's research.
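The dwell-based selection described for this keyboard can be sketched as a small state machine: a key is registered only after the fingertip has stayed on the same key for a minimum time. The class name `DwellSelector` and the 1.0-second threshold are hypothetical choices for illustration, not values reported in [2].

```python
class DwellSelector:
    """Registers a key once the fingertip has rested on it for `dwell_s` seconds.

    Hypothetical helper for illustration; the dwell threshold is an assumption.
    """

    def __init__(self, dwell_s=1.0):
        self.dwell_s = dwell_s
        self.current_key = None  # key the fingertip is currently over
        self.since = None        # time at which the fingertip arrived on that key
        self.fired = False       # whether the current dwell already produced input

    def update(self, key, now):
        """Feed the key under the fingertip (or None) at time `now` in seconds.

        Returns the key to enter, or None if nothing should be typed yet.
        """
        if key != self.current_key:
            # Fingertip moved to another key or left the keyboard: restart the timer.
            self.current_key = key
            self.since = now
            self.fired = False
            return None
        if key is None or self.fired:
            return None
        if now - self.since >= self.dwell_s:
            # Fire once per dwell so holding still does not repeat the key.
            self.fired = True
            return key
        return None
```

In a capture loop, `update` would be called once per frame with the key under the fingertip and a timestamp such as `time.monotonic()`. The long dwell that makes selection reliable is also what makes typing slow, which matches the limitation noted above.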

B. “A New Virtual Keyboard with Finger Gesture Recognition for AR/VR Devices”

This paper takes a different approach, creating a virtual keyboard by identifying finger gestures. It mainly uses two fingers: the thumb and the forefinger. The keyboard model is similar to a mobile phone keypad, where each key carries three to four characters and the position of a letter on its key decides how many times the key must be pressed. The characters are arranged in 3×3 arrays, and three 3×3 arrays are used to accommodate the 26 letters of the alphabet.

The characters can be customized based on their frequency or the user's requirements. The user places the forefinger on the target key, with the thumb held apart from the forefinger. When the thumb touches the forefinger, the target key is highlighted and taken as input. To enter the first character on a key, the thumb and forefinger make contact once; for instance, in the example below, the user's input is the letter 'O'. To enter the second character, the fingers make contact twice, for the third character three times, and so on. The author states that the average speed achieved is 46.16%, which is comparatively low. The model requires only a low-cost camera, but its main disadvantage is limited accuracy. The author also notes that the exact timing of typing is difficult to match, which decreases the speed of the system.
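The multi-tap rule (one contact for the first character, two for the second, and so on) can be sketched as a lookup on a grid of keys. The `GRID` layout below is hypothetical: nine keys with up to three letters each (27 slots for 26 letters); the actual arrangement in [3] may differ, and wrapping around after the last character is an assumption borrowed from phone keypads.

```python
# Hypothetical 3x3 grid of keys, each holding up to three letters
# (9 keys x 3 slots = 27 >= 26 letters). The layout used in [3] may differ.
GRID = [
    ["ABC", "DEF", "GHI"],
    ["JKL", "MNO", "PQR"],
    ["STU", "VWX", "YZ"],
]


def multitap_char(row, col, taps):
    """Return the character chosen by `taps` thumb-forefinger contacts on key (row, col).

    One contact selects the first character, two the second, and so on.
    Wrapping around past the last character is an assumption (as on phone keypads).
    """
    if taps < 1:
        raise ValueError("at least one contact is required")
    letters = GRID[row][col]
    return letters[(taps - 1) % len(letters)]
```

For example, `multitap_char(1, 1, 2)` selects the second letter on the middle key of this hypothetical grid.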

III. METHODOLOGY

This paper proposes a virtual keyboard and mouse that are easy for an ordinary user to understand and are budget friendly.

The keyboard is built in Python, with OpenCV and MediaPipe as supporting libraries. Initially, the device's web camera opens, and the program detects hands, fingertips, and their gestures. Specific finger gestures trigger specific actions. When the forefinger is placed over a key, the key is highlighted so that the user can see which key is about to be pressed. When the index and middle fingers meet on a key, the key is clicked and the input is taken by the device. Text from this keyboard can be entered in its own text field, the current IDE, or Notepad, so users can apply it in a broad range of situations.
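The hover-and-pinch rule above can be sketched as a per-frame function that takes the 21 hand landmarks (already converted to pixel coordinates) and a dictionary of key rectangles. Indices 8 and 12 are MediaPipe's `INDEX_FINGER_TIP` and `MIDDLE_FINGER_TIP` landmarks; MediaPipe reports normalized coordinates, so x and y would first be multiplied by the frame width and height. The 30-pixel click threshold and the names `key_under` and `keyboard_event` are hypothetical, not the authors' code.

```python
import math

INDEX_TIP, MIDDLE_TIP = 8, 12  # MediaPipe hand-landmark indices of the two fingertips
CLICK_DISTANCE_PX = 30         # hypothetical pinch threshold in pixels


def key_under(point, keys):
    """Return the label of the key whose rectangle contains `point`, or None.

    `keys` maps a label to an (x, y, w, h) rectangle in pixels.
    """
    px, py = point
    for label, (x, y, w, h) in keys.items():
        if x <= px < x + w and y <= py < y + h:
            return label
    return None


def keyboard_event(landmarks, keys):
    """Return (hovered_key, clicked) for one frame.

    `landmarks` is a list of 21 (x, y) pixel coordinates for one hand. The key
    under the index fingertip is the one to highlight; it counts as clicked when
    the index and middle fingertips are closer than CLICK_DISTANCE_PX.
    """
    index_tip = landmarks[INDEX_TIP]
    middle_tip = landmarks[MIDDLE_TIP]
    hovered = key_under(index_tip, keys)
    pinch = math.dist(index_tip, middle_tip) < CLICK_DISTANCE_PX
    return hovered, hovered is not None and pinch
```

In practice a click would usually also be debounced (for example, ignoring further clicks for a few hundred milliseconds) so that one pinch does not type the same key on several consecutive frames.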

The mouse is an important device for controlling computer systems. The proposed virtual mouse overcomes problems of existing mice: it does not require hardware or Bluetooth connections to function, and it is built entirely on hand-gesture libraries available for Python. Functions such as left click, scrolling webpages, and moving around a window or the computer screen are programmed using the AutoPy, PyAutoGUI, and Pynput packages. A single raised finger acts as the cursor and can point anywhere on the screen, and combinations of specific fingers trigger mouse actions. The proposed model shows increased speed, with a recorded accuracy of 98%.
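Moving the cursor with a single finger typically requires mapping the fingertip from camera coordinates to screen coordinates and damping jitter. The sketch below assumes a linear mapping with a hypothetical 100-pixel margin and an exponential moving average with a hypothetical smoothing factor of 0.3; the names `to_screen` and `Smoother` are illustrative, not taken from the paper.

```python
def to_screen(finger_xy, frame_size, screen_size, margin=100):
    """Map a fingertip position in the camera frame to screen coordinates.

    A `margin` (hypothetical, in pixels) is trimmed from each frame edge so the
    user can reach the screen corners without moving the hand out of view.
    """
    fx, fy = finger_xy
    fw, fh = frame_size
    sw, sh = screen_size
    # Clamp into the active region, then rescale linearly to valid pixel positions.
    fx = min(max(fx, margin), fw - margin)
    fy = min(max(fy, margin), fh - margin)
    sx = (fx - margin) / (fw - 2 * margin) * (sw - 1)
    sy = (fy - margin) / (fh - 2 * margin) * (sh - 1)
    return sx, sy


class Smoother:
    """Exponential moving average to reduce cursor jitter (alpha is an assumption)."""

    def __init__(self, alpha=0.3):
        self.alpha = alpha
        self.pos = None

    def update(self, xy):
        if self.pos is None:
            self.pos = xy  # first sample: start exactly at the measured position
        else:
            px, py = self.pos
            self.pos = (px + self.alpha * (xy[0] - px), py + self.alpha * (xy[1] - py))
        return self.pos
```

The smoothed position could then be passed to a real cursor API such as `pyautogui.moveTo(x, y)` or `autopy.mouse.move(x, y)`. If the camera frame is not mirrored (for example with `cv2.flip(frame, 1)`), the x coordinate may also need mirroring so that the cursor follows the hand naturally. A smaller `alpha` gives a steadier but laggier cursor.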

A. Benefits

- The model achieves a reported speed of 90% and an accuracy of 98%.

- Touchless interaction helps avoid the spread of viruses and bacteria, since users do not need to touch physical devices.

- It could be used to control robots in robotics and may have significance in nanotechnology.

- It can be used to automate systems.

- It has broader scope in gaming based on augmented reality, virtual reality, and more.

B. Modules

The modules being implemented are:

- Hand Detection Module: Detects human hands. It is implemented with cvzone, whose built-in Hand Tracking Module captures hands from the live feed and marks landmarks on them.

- Keyboard Module: Displays the keyboard layout on the screen, where the user can access it virtually, eliminating the physical keyboard.

- Mouse Module: Lets the user operate a mouse virtually, without any hardware, cables, or Bluetooth.
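The Hand Detection Module must decide which fingers are raised, for example to recognize the single-finger cursor pose. cvzone's `HandDetector` provides a `fingersUp` method for this; the sketch below is an independent, simplified heuristic (not cvzone's implementation) that compares each fingertip with the PIP joint below it, using MediaPipe's landmark indices. The thumb is left out because its test depends on handedness, and the function names are hypothetical.

```python
# MediaPipe hand-landmark indices: (fingertip, PIP joint below it).
FINGERS = {
    "index": (8, 6),
    "middle": (12, 10),
    "ring": (16, 14),
    "pinky": (20, 18),
}


def fingers_up(landmarks):
    """Return which of the four fingers (thumb excluded) are raised.

    Heuristic assumption: with the hand upright, a finger is up when its tip is
    above its PIP joint, i.e. has a smaller y, because image y grows downward.
    """
    return {name: landmarks[tip][1] < landmarks[pip][1] for name, (tip, pip) in FINGERS.items()}


def is_cursor_pose(landmarks):
    """True when only the index finger is raised (the 'single finger up' cursor pose)."""
    up = fingers_up(landmarks)
    return up["index"] and not (up["middle"] or up["ring"] or up["pinky"])
```

This simple comparison assumes the hand is held roughly upright in front of the camera; a tilted or sideways hand would need a distance- or angle-based test instead.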

IV. IMPLEMENTATION

A. FAST Algorithm for Feature Detection:

The FAST (Features from Accelerated Segment Test) algorithm, proposed in 2006 by Edward Rosten and Tom Drummond, detects corners in an image. Choose a pixel p in the image to be tested as an interest point, and let Ip be its intensity. Choose an appropriate threshold value t. Consider a circle of 16 pixels around the pixel under test. If there exists a set of n contiguous pixels on the circle that are all brighter than Ip + t, or all darker than Ip - t, then p is a corner.
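The segment test described above can be sketched in pure Python on a grayscale image stored as a list of rows. The 16 offsets trace the radius-3 Bresenham circle used by FAST in circular order. The default n = 12 is a commonly cited choice (n = 9 is also widely used) and should be treated as a tunable assumption; this sketch omits FAST's high-speed pre-test and non-maximum suppression.

```python
# (dx, dy) offsets of the 16-pixel Bresenham circle of radius 3, in circular order.
CIRCLE_16 = [
    (0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
    (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3),
]


def is_fast_corner(img, x, y, t, n=12):
    """Segment test: is pixel (x, y) a corner for threshold t and arc length n?

    `img` is a list of rows of intensities; (x, y) must be at least 3 pixels
    from every border so the whole circle lies inside the image.
    """
    h, w = len(img), len(img[0])
    if not (3 <= x < w - 3 and 3 <= y < h - 3):
        raise ValueError("pixel too close to the image border")
    ip = img[y][x]
    # Label each circle pixel: +1 brighter than Ip + t, -1 darker than Ip - t, 0 otherwise.
    labels = []
    for dx, dy in CIRCLE_16:
        v = img[y + dy][x + dx]
        labels.append(1 if v > ip + t else -1 if v < ip - t else 0)
    # Scan the labels twice so runs that wrap around the circle are counted.
    run, prev = 0, 0
    for lab in labels + labels:
        if lab != 0 and lab == prev:
            run += 1
        elif lab != 0:
            run = 1
        else:
            run = 0
        prev = lab
        if run >= n:
            return True
    return False
```

With n = 12, a straight edge through p (where only about half the circle differs from Ip) is rejected, while an isolated bright or dark point is accepted. For real images, OpenCV ships an optimized implementation via `cv2.FastFeatureDetector_create(threshold=..., nonmaxSuppression=True)`.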

B. Proposed Algorithm

1. Install and import the libraries cvzone, MediaPipe, and AutoPy, along with Python's time module.

2. Hand tracking has two phases:

a. Palm detection: MediaPipe processes the entire input image and produces a cropped image of the hand.

b. Hand landmark identification: MediaPipe identifies 21 hand landmarks on the cropped hand image.

3. The webcam frames from a laptop or PC serve as the foundation of the proposed AI virtual input system. A video capture object is created with OpenCV, the Python computer vision package, and the web camera begins recording footage. The virtual AI system receives frames from the web camera and processes them.

4. The keyboard buttons are drawn on the screen in a loop. The size and color of the keys can be customized, and the layout, such as a QWERTY keyboard or another standard design, can be chosen freely. Special characters can also be added as required.

5. Only the index finger is used as the mouse cursor as it hovers over the screen.

6. When the tips of the index and middle fingers meet, a mouse click is performed on the screen.
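Step 4, drawing a customizable keyboard in a loop, starts from a table of key positions. The sketch below computes one rectangle per key from a list of rows; the QWERTY rows, the 85-pixel key size, the 15-pixel gap, the origin, and the name `build_layout` are hypothetical defaults chosen for illustration.

```python
QWERTY_ROWS = ["QWERTYUIOP", "ASDFGHJKL;", "ZXCVBNM,./"]


def build_layout(rows=QWERTY_ROWS, key_size=85, gap=15, origin=(50, 50)):
    """Return a dict mapping each key label to its (x, y, w, h) rectangle in pixels.

    Key size, gap and origin are hypothetical defaults; passing different `rows`
    swaps in another layout or adds a row of special characters.
    """
    ox, oy = origin
    layout = {}
    for r, row in enumerate(rows):
        for c, label in enumerate(row):
            x = ox + c * (key_size + gap)
            y = oy + r * (key_size + gap)
            layout[label] = (x, y, key_size, key_size)
    return layout
```

Each rectangle could then be drawn on the frame with OpenCV, for example `cv2.rectangle(img, (x, y), (x + w, y + h), (255, 0, 255), cv2.FILLED)` followed by `cv2.putText` for the label. Keeping the geometry separate from the drawing code means the same table can also be used for hit-testing the fingertip.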

VI. FUTURE WORK

- The model's accuracy can be improved further, with 100% as the target.

- Adding Backspace and special keys such as Shift would significantly improve the project.

- Advanced features can be added to the mouse to support more interactions, such as right click and scroll bar operations.

- With further work, this model could become a real-world application in the near future.

Conclusion

By overcoming various challenges of legacy input systems, virtual interaction between humans and computer systems offers significant improvements. Combining gesture control with live actions on the computer screen is attractive. The design is simple enough that an ordinary user, or even a child, should be able to grasp it quickly. The project is economical and space saving, and it replaces the conventional keyboard and mouse. All the operations of the input devices are contained in a small program that displays the keyboard on screen, captures hand gestures, and lets the user pass input to the system or type text in various environments. The mouse performs its actions by navigating webpages, selecting text, and clicking desired elements or buttons. The innovation has considerable scope for further development.

References

[1] Jaramillo, A.G., Benalcázar, M.E.: Real-time hand gesture recognition with EMG using machine learning. In: 2017 IEEE Second Ecuador Technical Chapters Meeting (ETCM). IEEE (2017)

[2] Zhang, Y.: A virtual keyboard implementation based on finger recognition (2016)

[3] A new virtual keyboard with finger gesture recognition for AR/VR devices. SpringerLink

[4] Adajania, Y., Gosalia, J., Kanade, A., Mehta, H., Shekokar, N.: Virtual keyboard using shadow analysis. In: 2010 3rd International Conference on Emerging Trends in Engineering and Technology, pp. 163–165. IEEE (2010)

[5] Hernanto, S., Suwardi, I.S.: Webcam virtual keyboard. In: Proceedings of the 2011 International Conference on Electrical Engineering and Informatics, pp. 1–5. IEEE (2011)

[6] Saraswati, V.I., Sigit, R., Harsono, T.: Eye gaze system to operate virtual keyboard. In: 2016 International Electronics Symposium (IES), pp. 175–179. IEEE (2016)

[7] Dehankar, A.V., Jain, S., Thakare, V.M.: Performance analysis of RTEPI method for real time hand gesture recognition. In: 2017 International Conference on Intelligent Sustainable Systems (ICISS) (2017)

[8] Dehankar, A.V., Jain, S., Thakare, V.M.: Using AEPI method for hand gesture recognition in varying background and blurred images. In: 2017 International Conference of Electronics, Communication and Aerospace Technology (ICECA) (2017)

[9] Yang, A., Chun, S.M., Kim, J.-G.: Detection and recognition of hand gesture for wearable applications in IoMTW. In: 2017 19th International Conference on Advanced Communication Technology (ICACT) (2017)

[10] Tarvekar, M.P.: Hand gesture recognition system for touch-less car interface using multiclass support vector machine. In: 2018 Second International Conference on Intelligent Computing and Control Systems (ICICCS) (2018)

Copyright

Copyright © 2023 Nuzhat Naaz, G. Praveen Babu. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


Paper Id : IJRASET55863

Publish Date : 2023-09-24

ISSN : 2321-9653

Publisher Name : IJRASET

