Ijraset Journal For Research in Applied Science and Engineering Technology

Implementing a Real-time Emotion Detection System using Convolutional Neural Network

Authors: K Nalini, G. Lakshmi Indira, S. Mahathi Varma, P Vinith

DOI Link: https://doi.org/10.22214/ijraset.2022.44648

Abstract

Humans express their emotions through facial expressions, which are crucial in interpersonal communication. This project presents a real-time emotion recognition system that can register a person's mood as it changes. It uses models built with machine learning and deep learning algorithms. To identify human expressions in real time, an application is created using a few powerful Python libraries, including Keras, OpenCV, and Matplotlib. Once trained on labelled facial expressions, the model can detect various human emotions. This real-time emotion detection can be used across multiple platforms.

Introduction

I. INTRODUCTION

The rapid growth of technology has opened up possibilities that were previously out of reach. A great deal of image processing research and analysis is now under way. Image processing is a form of signal processing in which an image is the input and the output is either an image or a set of parameters associated with that image. Facial recognition is one of its most important applications. Our faces produce a variety of expressions depending on our emotions, and these expressions act as nonverbal signals. Facial expression recognition is used in fields such as human-computer interaction and pattern recognition. Facial emotion detection seeks to identify emotion from human facial expressions. Automatic translation systems and machine-to-human interaction are among the applications connected to facial expression recognition research. In this work, we use a convolutional neural network to identify emotions in faces. TensorFlow is used to design, build, and train the deep learning models. Deep learning with neural networks is commonly employed in face recognition. Face detection requires some pre-processing to prepare the input, and it requires additional software such as OpenCV (Open Source Computer Vision), an open-source computer vision library.

II. EXISTING SYSTEM

The study of the face and its features is not a recent development; it has been an active area of research for many years.

The Fisherface algorithm is a highly accurate face recognition method. It combines principal component analysis (PCA) and linear discriminant analysis (LDA). PCA reduces the dimensionality of the images: the high-dimensional image space is projected onto a low-dimensional space, so the number of features per image is reduced. LDA then computes a set of discriminating features that separates the classes of image data for classification. Although algorithms such as Fisherface achieve high accuracy, they have drawbacks, such as high processing load, poor discrimination, and low data distinction.
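The two stages described above can be illustrated with a short NumPy sketch: PCA first reduces the number of features, and LDA then finds a direction that separates the classes. The function names `pca_fit` and `lda_fit`, the synthetic data, and the chosen dimensions are assumptions made for illustration; this is not the implementation of any particular Fisherface library.

```python
import numpy as np


def pca_fit(X, n_components):
    """Return the data mean and the top principal directions (as rows)."""
    mean = X.mean(axis=0)
    # SVD of the centered data; the rows of Vt are the principal directions.
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:n_components]


def lda_fit(Z, y, n_components):
    """Return LDA directions (as columns) that separate the classes in y."""
    overall_mean = Z.mean(axis=0)
    dim = Z.shape[1]
    Sw = np.zeros((dim, dim))  # within-class scatter
    Sb = np.zeros((dim, dim))  # between-class scatter
    for label in np.unique(y):
        Zc = Z[y == label]
        class_mean = Zc.mean(axis=0)
        centered = Zc - class_mean
        Sw += centered.T @ centered
        diff = (class_mean - overall_mean).reshape(-1, 1)
        Sb += len(Zc) * (diff @ diff.T)
    # Directions that maximize between-class relative to within-class scatter.
    eigvals, eigvecs = np.linalg.eig(np.linalg.pinv(Sw) @ Sb)
    order = np.argsort(eigvals.real)[::-1]
    return eigvecs.real[:, order[:n_components]]


# Synthetic stand-in for flattened face images: two classes, 20 features each.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (30, 20)), rng.normal(5.0, 1.0, (30, 20))])
y = np.array([0] * 30 + [1] * 30)

mean, components = pca_fit(X, n_components=5)  # 20 features -> 5
Z = (X - mean) @ components.T
W = lda_fit(Z, y, n_components=1)              # 5 features -> 1
projected = Z @ W
```

After both projections each sample is described by a single number, and the two synthetic classes fall into well-separated groups along that axis.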

III. PROPOSED METHOD

Human facial expressions can be grouped into seven basic emotions: happy, sad, surprise, fear, anger, disgust, and neutral. Facial expressions are produced by the activation of particular facial muscles. In this project, we design a deep neural network that allows machines to make inferences about our emotional states.

IV. RELATED WORK

The growing success of convolutional neural networks in image classification, as applied to real-time emotion detection, is discussed in "Facial Expression Recognition Based on TensorFlow Platform" (2017, ResearchGate) by Xiao-Ling Xia, Cui Xu, and Bing Nan.

G. Hintin and Greves published a detailed examination of facial emotion recognition in "Emotion Recognition with Deep Recurrent Neural Networks" (IEEE International Conference on Acoustics, Speech and Signal Processing), describing the properties of the dataset and the classifier used in the facial emotion recognition study.

According to "Emotion Detection Using OpenCV and keras" (2020, Start it up) by Karan Sethi, real-time emotion recognition, which classifies human emotions using only a camera and a few lines of code, is a big step towards advanced human-computer interaction. He also describes how defining variables properly saves the time otherwise spent writing the same values manually again and again. A detailed description of developing a convolutional neural network model for real-time emotion detection is given in "Emotion Recognition from Facial Expression using Deep Learning" (2019, International Journal of Engineering and Advanced Technology (IJEAT)) by Nithya Roopa S.

V. METHODOLOGY

A. Algorithm

First, faces in each frame of the webcam feed are detected using a Haar cascade classifier.

The face region of the image is resized to 48 × 48 pixels and fed into a convolutional neural network.

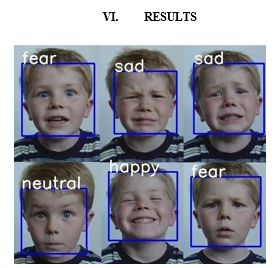

The network outputs a list of scores for seven emotions (happy, angry, sad, disgust, fear, surprise, and neutral). The emotion with the highest score is shown on screen. In this way, emotion detection is performed in real time.
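The last step, turning seven scores into a displayed label, can be sketched in plain Python. The sketch assumes the network returns raw scores that are converted to probabilities with a softmax; the score values and the helper names `softmax` and `top_emotion` are hypothetical and used only for illustration.

```python
import math

# Label order follows the Fer2013 encoding (0 = Angry ... 6 = Neutral).
EMOTIONS = ["Angry", "Disgust", "Fear", "Happy", "Sad", "Surprise", "Neutral"]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]  # shift for stability
    total = sum(exps)
    return [e / total for e in exps]


def top_emotion(scores):
    """Return the label with the highest score and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[best], probs[best]


# Hypothetical scores for one detected face (not real model output).
label, confidence = top_emotion([0.1, -1.2, 0.3, 2.5, 0.0, 0.7, 1.1])
```

Here the fourth score is the largest, so the label shown on screen would be "Happy".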

Steps involved in facial emotion recognition:

1. Data Description

The Fer2013 dataset, which contains 48 × 48 grayscale images of faces, is used.

Each face is labelled with one of seven facial emotion categories:

0 = Angry, 1 = Disgust, 2 = Fear, 3 = Happy, 4 = Sad, 5 = Surprise, 6 = Neutral.

2. Data Preparation

Fer2013.csv has two columns: emotion and pixels. Each entry in the pixels column is a list of pixel values. Because raw pixel values in the range [0, 255] are awkward to compute with, the pixel data is scaled to the range [0, 1]. In this step, the stored faces are reshaped to 48 × 48. The emotion labels and their corresponding pixel values are then stored for training.
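A minimal sketch of this preparation step is shown below. It assumes each entry of the pixels column is a space-separated string of 48 × 48 = 2304 integer values; the helper name `parse_pixels` and the synthetic row are illustrative only and do not come from the dataset.

```python
import numpy as np

IMAGE_SIZE = 48  # Fer2013 faces are 48 x 48 grayscale images


def parse_pixels(pixel_string):
    """Turn one space-separated pixel string into a normalized 48x48 array."""
    values = np.array([int(p) for p in pixel_string.split()], dtype=np.float32)
    expected = IMAGE_SIZE * IMAGE_SIZE
    if values.size != expected:
        raise ValueError(f"expected {expected} pixels, got {values.size}")
    # Scale from [0, 255] to [0, 1] and restore the 2-D image layout.
    return (values / 255.0).reshape(IMAGE_SIZE, IMAGE_SIZE)


# Synthetic row standing in for one line of the CSV file (emotion, pixels).
emotion = 3
pixels = " ".join(str(i % 256) for i in range(IMAGE_SIZE * IMAGE_SIZE))
face = parse_pixels(pixels)
```

Rows whose pixel count is wrong raise an error instead of being silently reshaped, which makes malformed lines easier to spot.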

3. Image Augmentation

Image augmentation is then applied to artificially expand the training dataset. New samples are generated by transforming existing images with operations such as rotation, cropping, zooming, and flipping.

The Keras deep learning library provides utilities for augmenting image data.
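To make the idea concrete, the sketch below applies two of these transformations, a horizontal flip and a small shift, using NumPy only. It is a stand-in written for illustration and does not use the Keras augmentation utilities; the function names, the shift range, and the random test image are assumptions.

```python
import numpy as np


def horizontal_flip(image):
    """Mirror the image left to right."""
    return image[:, ::-1]


def shift(image, dx, dy):
    """Translate by (dx, dy) pixels, filling uncovered pixels with zeros."""
    h, w = image.shape
    out = np.zeros_like(image)
    # Part of the source image that stays inside the frame after the shift.
    src = image[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    top, left = max(0, dy), max(0, dx)
    out[top:top + src.shape[0], left:left + src.shape[1]] = src
    return out


def augment(image, rng, max_shift=4):
    """Return a randomly flipped and shifted copy of a 2-D image."""
    if rng.random() < 0.5:
        image = horizontal_flip(image)
    dx, dy = rng.integers(-max_shift, max_shift + 1, size=2)
    return shift(image, int(dx), int(dy))


rng = np.random.default_rng(0)
face = rng.random((48, 48)).astype(np.float32)  # stand-in for a Fer2013 face
extra_samples = [augment(face, rng) for _ in range(4)]
```

Each call to `augment` yields a new 48 × 48 training sample that keeps the emotion label of the original image.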

4. Feature Extraction and Classification

Face detection is a key step in emotion identification because it removes irrelevant parts of the image. Facial landmarks, a set of key points identified by their (x, y) coordinates, are then located on the face image. These landmarks are used to pinpoint the eyes, brows, and jawline. The features used for emotion detection are extracted from the facial region.

5. Training

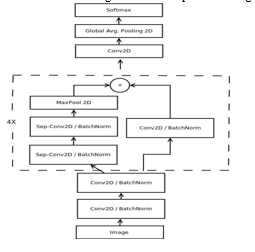

In this stage, a mini-Xception model for emotion recognition is trained using the architecture shown above.

6. Validation

This step uses various OpenCV and Keras functions.

A video capture object is used to grab each video frame, and the cascade classifier detects the facial region of interest.

The frame is converted to grayscale, and the face region is resized and reshaped with NumPy.

The resized image is then fed into the predictor.

The output is the class with the highest score (the argmax).

The predicted label is drawn above the rectangle drawn around each facial region.
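The steps above can be sketched with OpenCV and NumPy as follows. This is a minimal sketch under stated assumptions, not the exact code of this project: `predict_scores` is a hypothetical callable (for example, a wrapper around a trained model) that maps a (1, 48, 48, 1) array to seven scores, and the cascade file is the frontal-face cascade distributed with the `opencv-python` package.

```python
import numpy as np

EMOTIONS = ["Angry", "Disgust", "Fear", "Happy", "Sad", "Surprise", "Neutral"]


def to_model_input(face_gray_48):
    """Scale a 48x48 uint8 face to [0, 1] and add batch and channel axes."""
    face = face_gray_48.astype(np.float32) / 255.0
    return face.reshape(1, 48, 48, 1)


def run_webcam(predict_scores, camera_index=0):
    """Label faces in the webcam feed until the 'q' key is pressed."""
    import cv2  # imported here so the helper above works without OpenCV

    detector = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml")
    capture = cv2.VideoCapture(camera_index)
    try:
        while True:
            ok, frame = capture.read()
            if not ok:
                break
            gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
            faces = detector.detectMultiScale(
                gray, scaleFactor=1.3, minNeighbors=5)
            for (x, y, w, h) in faces:
                roi = cv2.resize(gray[y:y + h, x:x + w], (48, 48))
                scores = np.asarray(predict_scores(to_model_input(roi))).ravel()
                label = EMOTIONS[int(np.argmax(scores))]
                # Draw the box and write the predicted label just above it.
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
                cv2.putText(frame, label, (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.9, (0, 255, 0), 2)
            cv2.imshow("Emotion detection", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        capture.release()
        cv2.destroyAllWindows()
```

With a trained Keras model stored in a variable such as `model`, one way to start the loop would be `run_webcam(model.predict)`.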

B. Technology Description

Python: Python is a high-level, interpreted, interactive, and object-oriented scripting language. It is processed at runtime by an interpreter and is designed to be highly readable.

It is an excellent language for beginners and supports the object-oriented style.

PyCharm: PyCharm is a Python development environment whose Community edition is free and open source.

It provides an interactive Python console and aids in training and testing.

Haar Cascade files: A Haar cascade is a classifier used to detect, in a source image, the object it has been trained for. For training, a positive image is usually superimposed over a set of negative images. Training takes place on a server and in stages.

Using high-quality images at each stage of classifier training helps achieve the best results. Predefined Haar cascades, which are available online, can also be used.

Deep Learning: Deep learning algorithms are designed with the structure and function of the brain in mind.

A deep learning model learns from data in order to perform a specific task. Deep learning with neural networks has numerous applications in image recognition.

Methods for deep learning:

- Learning rate decay: this method is commonly used because it improves performance and reduces training time.

- Transfer learning: an existing model is re-trained with new classes so that it can perform new tasks.
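As an illustration of learning rate decay, the sketch below computes an exponentially decaying schedule. The text does not say which schedule was used in this project, so the exponential form, the function name, and the numeric settings are all assumptions.

```python
def exponential_decay(initial_lr, decay_rate, decay_steps, step):
    """Learning rate after `step` updates under exponential decay."""
    return initial_lr * decay_rate ** (step / decay_steps)


# Hypothetical settings: start at 0.001 and halve the rate every 1000 steps.
schedule = [exponential_decay(0.001, 0.5, 1000, s) for s in (0, 1000, 2000)]
```

Large early steps speed up training, while the smaller later steps help the model settle near a good solution.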

TensorFlow: TensorFlow is an open-source machine learning framework created by Google that is used to build and train deep learning models.

CNN: A Convolutional Neural Network, also known as a CNN, is a class of neural networks that specializes in processing data with a grid-like topology, such as an image. A digital image is a binary representation of visual data. It contains a series of pixels arranged in a grid, and each pixel value denotes how bright the pixel is and what color it should be.
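The core operation of a convolutional layer on such a pixel grid can be sketched in a few lines of NumPy. The toy image and the hand-picked kernel below are assumptions for illustration; in a real CNN the kernel values are learned during training.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum products.

    This is the sliding-window operation used by CNN layers
    (technically a cross-correlation, since the kernel is not flipped).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


# Toy 4x4 "image": dark left half, bright right half.
image = np.array([[0, 0, 1, 1]] * 4, dtype=np.float32)
# Hand-picked kernel that responds to a dark-to-bright vertical edge.
kernel = np.array([[-1, 1], [-1, 1]], dtype=np.float32)
feature_map = conv2d_valid(image, kernel)
```

The resulting feature map is non-zero only in the middle column, where the window straddles the edge between the dark and bright halves.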

Other packages and components such as OpenCV, NumPy, Pandas, Keras, and the Adam optimizer are used.

Conclusion

A. Conclusion

An effective and secure real-time emotion recognition system has been developed to replace a manual and unreliable process. The system helps organisations save and reduce manual work by using effective electronic equipment. It has no special prerequisites for running, because it only requires a PC and a camera.

B. Future Scope

- Provision of personalised services: analyse emotions to display personalised messages in smart environments and provide personalised recommendations.

- Customer behaviour analysis and advertising: analyse customers' emotions while shopping, focusing on either the goods or their arrangement within the shop.

- Healthcare: detect autism or neurodegenerative diseases; predict psychotic disorders or depression to identify users in need of assistance; support suicide prevention; detect depression in elderly people; observe patients' conditions during treatment.

- Crime detection: detect and reduce fraudulent insurance claims, deploy fraud prevention strategies, and spot shoplifters.

- Others: driver fatigue detection, detection of political attitudes, employment, etc.

References

[1] Xiao-Ling Xia, Cui Xu, Bing Nan (2017), “Facial Expression Recognition Based on TensorFlow Platform,” ITM Web of Conferences, ResearchGate.

[2] G. Hintin, Greves (2013), “Emotion Recognition with Deep Recurrent Neural Networks,” IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 6645-6649.

[3] Karan Sethi (2020), “Emotion Detection Using OpenCV and keras,” Start it up.

[4] Nithya Roopa S. (2019), “Emotion Recognition from Facial Expression using Deep Learning,” International Journal of Engineering and Advanced Technology (IJEAT).

[5] Abdat, F. et al. (2011). Human-Computer Interaction Using Emotion Recognition from Facial Expression. In: 2011 UKSim 5th European Symposium on Computer Modeling and Simulation. IEEE. doi: 10.1109/ems.2011.20

[6] Andalibi, Nazanin and Justin Buss (2020). The Human in Emotion Recognition on Social Media: Attitudes, Outcomes, Risks. In: Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems. CHI ’20. Honolulu, HI, USA: Association for Computing Machinery, pp. 1–16. isbn: 9781450367080. doi: 10.1145/3313831.3376680

[7] Barrett, Lisa Feldman et al. (2019). Emotional Expressions Reconsidered: Challenges to Inferring Emotion From Human Facial Movements. In: Psychological Science in the Public Interest 20.1. PMID: 31313636, pp. 1–68. doi: 10.1177/1529100619832930

[8] Cowie, R. et al. (2001). Emotion recognition in human-computer interaction. In: IEEE Signal Processing Magazine 18.1, pp. 32–80. doi: 10.1109/79.911197

[9] Crawford, K. et al. (2019). AI Now 2019 Report. Tech. rep. New York: AI Now Institute.

[10] Daily, Shaundra B. et al. (2017). Affective Computing: Historical Foundations, Current Applications, and Future Trends. In: Emotions and Affect in Human Factors and Human-Computer Interaction. Elsevier, pp. 213–231. doi: 10.1016/b978-0-12-801851-4.00009-4

[11] Du, Shichuan et al. (2014). Compound facial expressions of emotion. In: Proceedings of the National Academy of Sciences 111.15, E1454–E1462. issn: 0027-8424. doi: 10.1073/pnas.1322355111. eprint: https://www.pnas.org/content/111/15/E1454.full.pdf

[12] Ekman, Paul and Wallace V Friesen (2003). Unmasking the face: A guide to recognizing emotions from facial clues. Ishk.

[13] Jacintha, V et al. (2019). A Review on Facial Emotion Recognition Techniques. In: 2019 International Conference on Communication and Signal Processing (ICCSP). IEEE, pp. 0517–0521. doi: 10.1109/ICCSP.2019.8698067

[14] Ko, Byoung Chul (2018). A brief review of facial emotion recognition based on visual information. In: Sensors 18.2, p. 401. doi: 10.3390/s18020401

[15] Lang, Peter J. et al. (1993). Looking at pictures: Affective, facial, visceral, and behavioral reactions. In: Psychophysiology 30.3, pp. 261–273. doi: 10.1111/j.1469-8986.1993.tb03352.x

[16] Rhue, Lauren (2018). Racial Influence on Automated Perceptions of Emotions. In: SSRN Electronic Journal. doi: 10.2139/ssrn.3281765

[17] Russell, James A. (1995). Facial expressions of emotion: What lies beyond minimal universality? In: Psychological Bulletin 118.3, pp. 379–391. doi: 10.1037/0033-2909.118.3.379

[18] Sedenberg, Elaine and John Chuang (2017). Smile for the Camera: Privacy and Policy Implications of Emotion AI. arXiv: 1709.00396 [cs.CY].

Copyright

Copyright © 2022 K Nalini, G. Lakshmi Indira, S. Mahathi Varma, P Vinith. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET44648

Publish Date : 2022-06-21

ISSN : 2321-9653

Publisher Name : IJRASET
