Ijraset Journal For Research in Applied Science and Engineering Technology


Indian Paper Currency Recognition with Audio Output System for Visually Impaired based on Image Processing using Transfer Learning

Authors: Chidanandan V, Aarjeyan Shrestha, Adarsh Kumar Dubey, Amit Chaudhari, Apil Bist

DOI Link: https://doi.org/10.22214/ijraset.2022.45699


Abstract

In this paper, we present an overview of camera-based computer vision technology that can help visually impaired people identify paper currency instantly. For blind or visually impaired users, an effective paper currency detection algorithm should have two qualities: 1) very high accuracy, and 2) robustness across a range of environments and note appearances. Many of the currency identification algorithms in use today are restricted to specific conditions. In this project, we propose a deep learning approach focused on high accuracy. The method is effective at capturing class-specific information and copes better with partial occlusion and viewpoint changes. An evaluation of transfer learning also demonstrates its effectiveness in handling rotation, scale, and illumination changes.


I. INTRODUCTION

Vision helps us perform day-to-day tasks and also influences a person's behaviour. Meanwhile, technology is developing rapidly, and people increasingly use it to solve their problems. Advanced automated systems in the real world require a currency recognition system, which has many practical uses, such as cash registers, cash-counting systems, currency exchange machines, and money-identification aids for the visually impaired.

Visual impairment also affects a person's mental health: compared with a sighted or partially sighted person, a blind person may be more prone to depression owing to a lack of social interaction, and may also suffer from an anxiety disorder. One of the biggest problems for blind people is perceiving the objects around them. Sighted people can see and recognize things, which gives them a sense of security and lets them trust their surroundings; for visually impaired people, one of the biggest problems is identifying banknotes. The most common causes of visual impairment are uncorrected refractive errors, cataract, age-related macular degeneration, glaucoma, diabetic retinopathy, corneal opacity, and trachoma (Ali & Manzoor).

Researchers have used a variety of currency recognition cues, such as the texture, size, and colour of a note. The computing power of modern devices and the ready availability of cameras make it practical to build an effective system that recognizes Indian currency. Indian currency comes in seven denominations that differ in size and colour. The proposed approach recognizes the Indian denominations Rs 10, 20, 50, 100, 200, 500, and 2000 and converts the result into audio output for a blind or visually impaired user.

II. EXISTING SYSTEM

Many technologies have been developed to recognize currency; some of them are discussed below. Xiaodong Yang et al. proposed an approach to paper currency recognition using SURF (Speeded-Up Robust Features), but the technique was complicated. Hassanpour proposed recognizing notes by their size, colour, and texture using image histograms. Currency can also be identified by electromagnetic detection, a technique based on pulsed eddy-current technology. Other researchers examined a recognition process in which the image is converted to grayscale and median filters are applied to remove unwanted noise. The Canny and Sobel edge detectors are then used to detect edges: the Canny detector measures the horizontal and vertical edges around the text region of the grayscale image, and the Sobel detector measures the edges along the full horizontal axis. However, this system is not handheld. A support vector machine can be used to recognize the banknote serial number with the algorithm proposed by Shan Gai et al.
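The grayscale, median-filter, and Sobel steps mentioned above can be sketched in plain Python on small nested-list images. This is a minimal illustration, not the surveyed authors' implementation (which is not given); the BT.601 grayscale weights, the 3x3 window size, and the border handling are assumptions.

```python
from statistics import median
from typing import List, Tuple

Image = List[List[float]]  # 2-D grayscale image, row-major

def to_grayscale(rgb: List[List[Tuple[int, int, int]]]) -> Image:
    """Convert rows of (r, g, b) tuples to grayscale with ITU-R BT.601 luma weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in rgb]

def median_filter3(img: Image) -> Image:
    """3x3 median filter to suppress impulse noise; border pixels are copied unchanged."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            window = [img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            out[y][x] = median(window)
    return out

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]  # responds to vertical edges
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]  # responds to horizontal edges

def sobel_magnitude(img: Image) -> Image:
    """Gradient magnitude sqrt(Gx^2 + Gy^2); border pixels are left at 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = sum(SOBEL_X[j][i] * img[y + j - 1][x + i - 1] for j in range(3) for i in range(3))
            gy = sum(SOBEL_Y[j][i] * img[y + j - 1][x + i - 1] for j in range(3) for i in range(3))
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out
```

On a vertical step edge, the magnitude peaks at the columns adjacent to the step and is zero in flat regions; a Canny detector would add non-maximum suppression and hysteresis thresholding on top of such gradients.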

One system consists of two parts: hardware and software. The hardware comprises three main sections: the input section, the processing section, and the output section. The input section captures an image from the camera; in the processing section, a microcontroller separates banknotes from coins; and the output section contains a voice-recorder IC and a speaker that present the microcontroller's result as speech. One paper presents a fast binarization process for extracting text from licence-plate images captured by a mobile camera. Other researchers presented a colour-based technique for extracting and segmenting text from camera-captured images; its limitations were handling large text under complex lighting changes, and the software was very complex. Jian Yuan et al. published a paper on extracting text from billboards photographed with a mobile camera. The use of a camera can, however, cause problems of its own. In another paper, two counterfeit-detection methods are combined: one examines the note under ultraviolet (UV) light, and the other analyses the light transmitted through the note. The result is most reliable when both tests give good results. Mohammed et al. developed a device that recognizes banknotes by their distinctive colours, but its results are not very precise. Another system was designed to keep the lighting on the banknote stable: the note under test is simply placed on a flat glass plate, and the system uses an infrared camera to determine whether the note is counterfeit and to recognize its denomination. Currency can also be captured and recognized using neural networks.
Words in printed text can be identified using a handheld pointer, such as a pen, with a small camera attached to it. When the user points at the text, a voice synthesizer speaks the result. First, the region indicated by the pointer is analyzed. If there is more text above the pointer, the image is divided into blocks, and each block is classified as 'character' or not; the 'character' blocks are then extracted. A further step analyzes the polarity of the image: if the polarity is reversed (light text on a dark background), the pixels of the resulting image are inverted. A text-reading program built on the Java platform has been designed to help people with visual disabilities by converting text into speech. Text can also be extracted from an image using sparse representation combined with an unsupervised learning method. Another group of researchers proposed an embedded system in which an ARM11 microcontroller extracts information from images and video. Siddharth Mody et al. created a portable text-to-speech converter, in which a speech processor converts text entered from a keyboard into speech.

III. LITERATURE SURVEY

The work in paper [1] focuses on developing a system for wearable camera-based (eyeglass) aids that combines visual detection and recognition functions. Database images of banknotes were captured with a portable camera, and the relevant region of each photograph was located using adaptive blocking and position filters. The goal was a reliable and robust recognition algorithm that identifies an object, or set of visual objects, that is easy to see and varied enough to serve as the basis for consistent recognition.

The system is trained on many features of paper money, and users are given instructions so that they can use it with confidence. A common cause of errors is covering the region of interest with one or more fingers, which can cause detection problems.

Beyond identifying banknotes for counterfeit detection, paper [2] proposes a system that also handles notes that are partially visible, folded, wrinkled, or worn or torn through use. It uses computer vision to recognize currency notes under different perspectives, scales, and environments with a wide dynamic range of lighting. OpenCV was used to speed up processing: noise is reduced, contrast is enhanced with CLAHE (Contrast Limited Adaptive Histogram Equalization), and recognition is performed with the Scale Invariant Feature Transform (SIFT), Speeded-Up Robust Features (SURF), Features from Accelerated Segment Test (FAST), ORB, and Binary Robust Invariant Scalable Keypoints (BRISK). A limitation of the system is that recognition works best on test images that contain most of the note region, because these yield a higher local inlier ratio; detections with a low proportion of inliers are discarded to avoid false results.
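Keypoint matching of the kind described for paper [2] usually compares descriptors, keeps only unambiguous matches, and rejects detections with too few geometric inliers. The sketch below assumes binary descriptors (as produced by ORB or BRISK) stored as Python ints and compared by Hamming distance; the 0.75 ratio and the acceptance thresholds are hypothetical values, and the inlier count itself would come from a geometric check such as RANSAC homography fitting, which is not shown.

```python
from typing import List, Tuple

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")

def ratio_test_matches(query: List[int], train: List[int],
                       ratio: float = 0.75) -> List[Tuple[int, int]]:
    """Lowe-style ratio test: keep a match only if the nearest descriptor is
    clearly closer than the second nearest (ambiguous matches are dropped)."""
    matches = []
    for qi, qd in enumerate(query):
        dists = sorted((hamming(qd, td), ti) for ti, td in enumerate(train))
        if len(dists) < 2:
            continue
        (d1, t1), (d2, _) = dists[0], dists[1]
        if d1 < ratio * d2:
            matches.append((qi, t1))
    return matches

def inlier_ratio(n_inliers: int, n_matches: int) -> float:
    return n_inliers / n_matches if n_matches else 0.0

def accept_detection(n_inliers: int, n_matches: int,
                     min_ratio: float = 0.3, min_inliers: int = 10) -> bool:
    """Reject detections supported by too few geometrically consistent matches
    (hypothetical thresholds)."""
    return n_inliers >= min_inliers and inlier_ratio(n_inliers, n_matches) >= min_ratio
```

A partly covered or folded note yields fewer matches and inliers, which is why such a filter favours images showing most of the note.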

Paper [3] proposed a modular system that extracts features to recognize Indian currency notes. The main features of Indian currency were briefly studied so that the system could help visually impaired people locate a particular feature of a given note. The denomination numerals are recognized with a trained Support Vector Machine (SVM) classifier. The framework detects symbols and emblems on the notes by training a cascade object detector in MATLAB; a HOG (Histogram of Oriented Gradients) descriptor is used to detect the Ashoka Pillar emblem on the note, and the CIE L*a*b* colour space is used for colour analysis of the currency.

The delta-E colour distance between training and test data, used to recognize the currency type, and template matching, used to detect the various identification marks, both achieved 99 percent accuracy. In contrast, colour image histograms and Markov-chain texture analysis showed very low accuracy, because different banknotes can have similar colours and textures, which reduces their effectiveness.
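The simplest delta-E formula, CIE76, is the Euclidean distance between two colours in CIE L*a*b* space: ΔE = sqrt((L1 - L2)^2 + (a1 - a2)^2 + (b1 - b2)^2). Paper [3] does not state which delta-E variant it used, so CIE76 here is an assumption, and the reference colours in the usage below are made-up placeholders rather than measured note colours.

```python
import math
from typing import Dict, Tuple

Lab = Tuple[float, float, float]  # (L*, a*, b*)

def delta_e_cie76(c1: Lab, c2: Lab) -> float:
    """CIE76 colour difference: Euclidean distance in CIE L*a*b* space."""
    return math.dist(c1, c2)

def nearest_class(sample: Lab, references: Dict[str, Lab]) -> Tuple[str, float]:
    """Return the reference class whose mean Lab colour is closest to the sample."""
    label = min(references, key=lambda k: delta_e_cie76(sample, references[k]))
    return label, delta_e_cie76(sample, references[label])

# Hypothetical reference colours, for illustration only.
refs = {"class_a": (50.0, 0.0, 0.0), "class_b": (80.0, 10.0, 10.0)}
print(nearest_class((52.0, 1.0, 1.0), refs))
```

A nearest-colour rule like this is also why colour-only methods struggle when two denominations have similar tones, as the text notes for histogram-based approaches.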

The lifespan of a banknote cannot be predetermined, and notes can suffer physical damage, for example dirt left by the sweaty hands of many people, oil, bacteria, and mud-borne microbes. To identify such soiled notes and keep them from circulating, paper [4] studies a dataset of new and old paper currency. The project stages were image acquisition and preprocessing, feature selection and extraction, and construction of the classification model. A modified SMOTE (Synthetic Minority Over-sampling Technique) algorithm was used to augment the samples of old banknotes, and the currency model was built using a conventional Support Vector Machine (SVM). According to the study, this strategy improves the recognition accuracy rate by around 20% and partly addresses the problem of recycling soiled notes. However, the main issue is that the absolute and relative numbers of old banknote samples are far smaller than those of new banknotes, so the dataset is typically imbalanced. In banking, sorting, counting, and removing soiled notes can be time consuming.
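The following sketch shows standard SMOTE, which balances a dataset by synthesizing new minority-class samples along the line segments between a sample and its nearest minority neighbours. The modified variant used in paper [4] is not described in the text, so this is plain SMOTE only; the feature vectors, `k`, and the seed are illustrative assumptions.

```python
import random
from typing import List, Sequence

Point = List[float]

def _sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))

def smote(minority: Sequence[Point], n_new: int, k: int = 3, seed: int = 0) -> List[Point]:
    """Standard SMOTE: each synthetic sample lies between a randomly chosen
    minority sample and one of its k nearest minority-class neighbours."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        neighbours = sorted((j for j in range(len(minority)) if j != i),
                            key=lambda j: _sq_dist(base, minority[j]))[:k]
        other = minority[rng.choice(neighbours)]
        gap = rng.random()  # position along the segment base -> other, in [0, 1)
        synthetic.append([b + gap * (o - b) for b, o in zip(base, other)])
    return synthetic

# Usage: oversample a tiny, made-up "old note" feature set.
old_notes = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
extra = smote(old_notes, n_new=10)
```

Interpolating rather than duplicating samples adds variety to the minority class, which helps a classifier such as an SVM avoid overfitting to a few old-note examples.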

Paper [5] proposed a solution using the TMS320C6416 DSK as the DSP development platform, the SV253A4 as the image sensor, and the XRD98L23 as the sensor signal processor, enabling fast acquisition of banknote images. Using TI's TMS320 image/video processing library, the banknote image is pre-processed with a median filter and Sobel edge detection to meet the image-quality and acquisition-speed requirements for identifying the note's features. However, this approach is considerably more complicated because it involves many intricate sensors and hardware components. Devices are available on the market, but they do not work for Malaysian currency and are expensive.

The purpose of project [6] is to build a practical device that helps blind people distinguish Malaysian paper currency. The Cash Note Recognizer (CNR) identifies different features of a banknote and reports the result as a sequence of buzzer beeps. An ATmega328P-PU microcontroller recognizes the note from the input of a TCS230 colour sensor and sends the corresponding sound pattern to the buzzer. Because the colour sensor is shielded from ambient light, readings are taken over a very short distance, which keeps the system simple. However, the technique does not work well when applied more broadly, because this type of design has specific limitations.

IV. PROPOSED SYSTEM

A. Image Acquisition

The first stage of any vision system is image acquisition. Once an image has been captured, different processing techniques can be applied to it to perform various tasks. Image acquisition is always the first step in the workflow because, without an image, no processing is possible. An image can be obtained in different ways, for example with a camera or a scanner.

B. Image Pre-processing

The main objective of image filtering, or pre-processing, is to improve image visibility and the quality of the dataset. Pre-processing is one of the most common operations required before the main data analysis and information extraction. Also called image restoration, it includes distortion correction and the reduction of noise introduced during photography. Correcting the image in this way can improve the accuracy of the subsequent recognition step, which uses machine learning and deep learning algorithms to identify the currency type.
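A typical pre-processing step before a pre-trained CNN is to resize every image to the network's fixed input size and scale pixel values to a common range. The sketch below uses nearest-neighbour resizing and [0, 1] scaling on a single channel; the 224 x 224 default (common for ImageNet-trained models) and the scaling scheme are assumptions, since the paper does not name its model or input size. For colour images the same functions would be applied to each channel.

```python
from typing import List

Image = List[List[float]]  # single-channel image, row-major

def resize_nearest(img: Image, out_h: int, out_w: int) -> Image:
    """Nearest-neighbour resize: each output pixel copies the closest source pixel."""
    in_h, in_w = len(img), len(img[0])
    return [[img[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
            for y in range(out_h)]

def normalize(img: Image, max_value: float = 255.0) -> Image:
    """Scale 8-bit pixel values to the range [0, 1]."""
    return [[p / max_value for p in row] for row in img]

def preprocess(img: Image, size: int = 224) -> Image:
    """Resize to size x size, then normalize (hypothetical default input size)."""
    return normalize(resize_nearest(img, size, size))

# Usage: upscale a 2 x 2 checkerboard to 4 x 4.
small = [[0, 255], [255, 0]]
print(preprocess(small, size=4))
```

Nearest-neighbour is the simplest resampling choice; bilinear or area interpolation usually gives smoother results for real photographs.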

C. Background Elimination

Images are captured in a variety of situations with different lighting conditions and backgrounds, and the note in the photograph may itself be damaged. Image segmentation is therefore critical for reducing the amount of data to process and for removing unwanted features (the background) that would otherwise affect decision-making.
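One classic segmentation technique that could remove a uniform background is Otsu thresholding, which picks the grey level that best separates the image histogram into two classes. The paper does not say which segmentation method it uses, so Otsu's method here is a stand-in, and the sketch assumes the note is brighter than the background.

```python
from typing import List

Image = List[List[int]]  # 8-bit grayscale values 0..255

def otsu_threshold(img: Image) -> int:
    """Return the threshold t that maximizes between-class variance
    (pixels <= t form one class, pixels > t the other)."""
    hist = [0] * 256
    for row in img:
        for p in row:
            hist[p] += 1
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    w_bg, sum_bg = 0, 0
    best_t, best_var = 0, -1.0
    for t in range(256):
        w_bg += hist[t]
        if w_bg == 0:
            continue
        w_fg = total - w_bg
        if w_fg == 0:
            break
        sum_bg += t * hist[t]
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        var_between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t

def foreground_mask(img: Image, t: int) -> List[List[int]]:
    """1 where the pixel is brighter than t (assumed to be the note), else 0."""
    return [[1 if p > t else 0 for p in row] for row in img]

def remove_background(img: Image, mask: List[List[int]]) -> Image:
    """Zero out background pixels so later stages see only the note."""
    return [[p if m else 0 for p, m in zip(row, mrow)] for row, mrow in zip(img, mask)]
```

A global threshold like this works only when lighting is fairly even; cluttered or unevenly lit backgrounds usually need adaptive thresholding or a learned segmentation model.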

D. Feature Extraction

Feature extraction is a special form of dimensionality reduction. When the input data are too large to be processed and are largely redundant, they are transformed into a reduced set of values, called features. Transforming the input data into such a feature set is called feature extraction.

If the features are chosen carefully, the feature set is expected to capture the relevant information from the input data, so that the desired task can be performed using this reduced representation instead of the full-size input.
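As a small, hand-crafted illustration of reducing a full image to a compact feature vector, a joint colour histogram maps any W x H x 3 image to a fixed number of values. In the proposed system the features come from the pre-trained CNN layers instead, so this histogram is only a stand-in to show the idea; the 4-bins-per-channel choice is an assumption.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (r, g, b)

def color_histogram(pixels: Sequence[Pixel], bins_per_channel: int = 4) -> List[float]:
    """Joint RGB histogram normalized to sum to 1.
    Any number of pixels is reduced to bins_per_channel ** 3 values."""
    step = 256 // bins_per_channel
    hist = [0.0] * (bins_per_channel ** 3)
    for r, g, b in pixels:
        # Quantize each channel to a bin index, clamping the top bin.
        rb, gb, bb = (min(c // step, bins_per_channel - 1) for c in (r, g, b))
        hist[(rb * bins_per_channel + gb) * bins_per_channel + bb] += 1.0
    n = len(pixels)
    return [h / n for h in hist] if n else hist

# Usage: two pixels (black and white) become a 64-value feature vector.
features = color_histogram([(0, 0, 0), (255, 255, 255)])
```

A 224 x 224 x 3 image (150,528 values) becomes 64 numbers here, at the cost of discarding all spatial layout, which is one reason colour-only features are less discriminative than CNN features.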

E. Matching the Input with Existing Datasets

To determine image similarity, the transfer-learning model passes the input through the appropriate feature-extraction steps and compares it with images of the same context in the dataset. The key idea of transfer learning is to reuse the knowledge gained from previously learned tasks and to build on it in the new task.
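One simple way to compare an extracted feature vector with stored examples is cosine similarity with a nearest-neighbour search. The text does not specify the comparison used (a fine-tuned classifier would normally predict through its softmax layer instead), so this is an illustrative alternative; the class labels, gallery vectors, and the 0.8 rejection threshold are hypothetical.

```python
import math
from typing import Dict, List, Optional, Tuple

Vector = List[float]

def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return dot / (na * nb)

def best_match(query: Vector, gallery: Dict[str, List[Vector]],
               min_similarity: float = 0.8) -> Tuple[Optional[str], float]:
    """Return the label of the most similar stored vector, or None if even the
    best match is below min_similarity (e.g. the image is not a banknote)."""
    best_label, best_score = None, -1.0
    for label, vectors in gallery.items():
        for v in vectors:
            score = cosine_similarity(query, v)
            if score > best_score:
                best_label, best_score = label, score
    if best_score < min_similarity:
        return None, best_score
    return best_label, best_score

# Usage with made-up 3-D feature vectors.
gallery = {"rs_10": [[1.0, 0.0, 0.0]], "rs_20": [[0.0, 1.0, 0.0]]}
print(best_match([0.9, 0.1, 0.0], gallery))
```

The rejection threshold lets an app say "not recognized" rather than announce a wrong denomination, which matters for users who cannot check the result visually.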

F. Audio Output Generation

The recognized results are written to script files. A text-to-speech converter loads these files and produces audio output of their text. Visually impaired users can adjust the speech rate and volume to their preference.

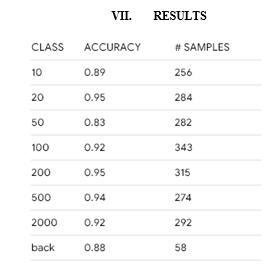

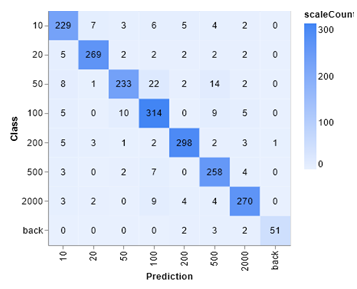

We have divided the Indian notes into 11 classes. This may seem confusing, since one might expect only 7 categories of Indian currency. Since demonetisation, however, the Government of India has introduced new Rs 10, 20, 50, 100, 200, 500, and 2000 notes, while the old Rs 10, 20, 50, and 100 notes are still in use. The old and new Rs 100 notes have completely different colour combinations and pixel values, so combining them into a single Rs 100 class does not give accurate results. We have therefore classified them separately as "new Rs 100" and "old Rs 100" notes, which gives better accuracy.
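Keeping old and new series as separate classes means the model's output must be mapped back to a single spoken face value. The sketch below assumes the 11 classes are the 7 current-series notes plus the 4 older-series notes listed above; the label names are hypothetical, and the returned string is what would be passed to a text-to-speech engine (not shown).

```python
from typing import List

# Hypothetical label set: 7 current-series notes + 4 older-series notes = 11 classes.
CLASS_NAMES: List[str] = [
    "new_10", "new_20", "new_50", "new_100", "new_200", "new_500", "new_2000",
    "old_10", "old_20", "old_50", "old_100",
]

def denomination(label: str) -> int:
    """Old and new series are separate classes for the model but share a face value."""
    return int(label.split("_")[1])

def announcement(class_index: int) -> str:
    """Text that would be handed to a text-to-speech engine."""
    return f"{denomination(CLASS_NAMES[class_index])} rupees"

def announce_prediction(probs: List[float]) -> str:
    """Pick the most probable class (argmax of the model output) and build its announcement."""
    if len(probs) != len(CLASS_NAMES):
        raise ValueError("expected one probability per class")
    best = max(range(len(probs)), key=lambda i: probs[i])
    return announcement(best)

# Usage: a model output favouring the old Rs 100 note.
probs = [0.0] * 11
probs[10] = 0.9
print(announce_prediction(probs))
```

Separating the visual classes while merging the spoken output keeps the classifier's job easier without burdening the user with a distinction between note series.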

V. APPLICATION DESIGN DETAILS

A. Flutter

Flutter is an open-source, cross-platform UI SDK (Software Development Kit) for building iOS and Android applications that allows code reuse across operating systems. It provides ready-to-use widgets, libraries, and tools, and delivers high-performance, natively compiled apps from a single codebase.

B. Dart

Dart is a client-optimized programming language used to write Flutter apps. It prioritizes both fast development and high-quality production builds, combining just-in-time (JIT) compilation during development with ahead-of-time (AOT) compilation for release. Because layouts are written in Dart itself, no separate declarative layout language such as XML is required. We chose Dart for its portability and accessibility.

C. Flutter Application

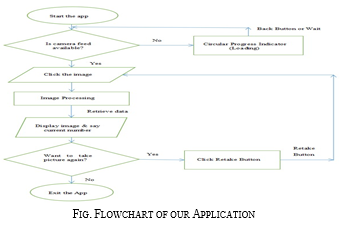

When the app starts, it looks for the camera feed. If the camera is not available, it asks for permission to turn on the camera. Once camera access is granted, the user can capture an image, after which image processing starts. The app runs the trained model on the image, displays the image, and reads out the recognized denomination. To take the picture again, the user taps the Retake button.

VI. SYSTEM IMPLEMENTATION

A. Convolutional Neural Networks

A Convolutional Neural Network (CNN) is a multilayer neural network inspired by the visual cortex of animals, and its design is particularly useful for image processing. The first CNN was developed by Yann LeCun; at the time, the architecture focused on handwriting recognition, such as reading postal codes. As a deep network, its early layers detect low-level features (such as edges), and later layers recombine these into higher-level features of the input. The LeNet CNN architecture consists of several layers that perform feature extraction and classification. The image is divided into receptive fields that feed into a convolutional layer, which extracts features from the input image. The next step is pooling, which reduces the size of the extracted features while keeping the most important information. The pooled features are then fed to a fully connected multilayer perceptron, and the final layer contains the output nodes that identify the image class. The network is trained by backpropagation. CNNs are used not only in image processing but also in video recognition and in natural language processing applications.
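The convolution, pooling, and fully connected stages described above can be illustrated with a toy single-channel forward pass in plain Python. This is not LeNet or the model used in this project; the kernel, image, and dense weights are made-up values chosen so the arithmetic is easy to follow, and, as in most CNN libraries, "convolution" is implemented as cross-correlation.

```python
from typing import List

Matrix = List[List[float]]

def conv2d_valid(img: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2-D convolution (cross-correlation), stride 1, no padding."""
    kh, kw = len(kernel), len(kernel[0])
    oh, ow = len(img) - kh + 1, len(img[0]) - kw + 1
    return [[sum(kernel[i][j] * img[y + i][x + j] for i in range(kh) for j in range(kw))
             for x in range(ow)] for y in range(oh)]

def relu(m: Matrix) -> Matrix:
    """Element-wise max(0, v) non-linearity."""
    return [[max(0.0, v) for v in row] for row in m]

def max_pool2(m: Matrix) -> Matrix:
    """2x2 max pooling with stride 2; an odd trailing row or column is dropped."""
    return [[max(m[y][x], m[y][x + 1], m[y + 1][x], m[y + 1][x + 1])
             for x in range(0, len(m[0]) - 1, 2)]
            for y in range(0, len(m) - 1, 2)]

def dense(features: List[float], weights: Matrix, bias: List[float]) -> List[float]:
    """Fully connected layer: one output per weight row."""
    return [sum(w * f for w, f in zip(row, features)) + b for row, b in zip(weights, bias)]

# Usage: a 5x5 image with a vertical edge, a horizontal-difference kernel,
# pooling, flattening, and one output neuron.
img = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
fmap = relu(conv2d_valid(img, [[-1.0, 1.0]]))   # 5x4 feature map, edge at column 1
pooled = max_pool2(fmap)                         # 2x2 after pooling
flat = [v for row in pooled for v in row]
print(dense(flat, [[1.0, 1.0, 1.0, 1.0]], [0.0]))
```

In a trained CNN the kernels and dense weights are learned by backpropagation rather than set by hand, and many channels are stacked at every layer.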

B. Transfer Learning

In machine learning, transfer learning means applying the knowledge that a pre-trained model gained on a previous problem to a new problem. The model uses what it learned on the earlier task to improve its predictions on the new one. This reduces training time, improves the performance of the neural network, and compensates for the lack of a large amount of training data.

The starting point is a deep learning model that has already been trained on a large source dataset. Our transfer-learning approach reuses the layers pre-trained on this source task to solve the target task. We download the pre-trained model and remove its top portion (the fully connected layers), which leaves only the convolutional and pooling layers. Using these pre-trained layers, we extract various visual features from our dataset. We freeze the pre-trained layers so that their weights are not updated by backpropagation, and we first train the model without fine-tuning. We then load the best trained weights and recompile the model, allowing backpropagation to update the last two pre-trained layers. Our fully connected layer is again initialized, together with its weights, for training.
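The feature-extraction phase (frozen pre-trained layers, trainable new head) can be sketched without a deep learning framework. Here a fixed weight matrix stands in for the pre-trained convolutional base and a softmax layer trained with stochastic gradient descent stands in for the new fully connected layer. All numbers are toy values, not currency data, and this is an illustration of the technique rather than the project's actual Keras/TensorFlow-style code, which is not given.

```python
import math
import random
from typing import List, Sequence, Tuple

Vector = List[float]

def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]

def softmax(z: Vector) -> Vector:
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

class FrozenBase:
    """Stand-in for the pre-trained conv/pool layers; weights are never updated."""
    def __init__(self, weights: Sequence[Sequence[float]]):
        self.weights = [list(row) for row in weights]

    def features(self, x: Vector) -> Vector:
        return relu([sum(w * xi for w, xi in zip(row, x)) for row in self.weights])

class SoftmaxHead:
    """New fully connected layer for the target classes; the only trainable part."""
    def __init__(self, n_in: int, n_classes: int, seed: int = 0):
        rng = random.Random(seed)
        self.w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_classes)]
        self.b = [0.0] * n_classes

    def predict_proba(self, f: Vector) -> Vector:
        return softmax([sum(w * fi for w, fi in zip(row, f)) + b
                        for row, b in zip(self.w, self.b)])

    def sgd_step(self, f: Vector, label: int, lr: float) -> None:
        p = self.predict_proba(f)
        for k, row in enumerate(self.w):
            # Cross-entropy gradient w.r.t. logit k is p_k - [k == label].
            g = p[k] - (1.0 if k == label else 0.0)
            for j in range(len(row)):
                row[j] -= lr * g * f[j]
            self.b[k] -= lr * g

def mean_loss(base: FrozenBase, head: SoftmaxHead, data: List[Tuple[Vector, int]]) -> float:
    return sum(-math.log(head.predict_proba(base.features(x))[y]) for x, y in data) / len(data)

def train_head(base: FrozenBase, head: SoftmaxHead, data: List[Tuple[Vector, int]],
               epochs: int = 200, lr: float = 0.1) -> None:
    """Feature-extraction phase: gradients update only the head."""
    for _ in range(epochs):
        for x, y in data:
            head.sgd_step(base.features(x), y, lr)

# Toy usage with made-up inputs and three classes.
base = FrozenBase([[1, 0, 0], [0, 1, 0], [0, 0, 1], [0.5, 0.5, 0]])
head = SoftmaxHead(n_in=4, n_classes=3)
data = [([1.0, 0.0, 0.0], 0), ([0.0, 1.0, 0.0], 1), ([0.0, 0.0, 1.0], 2)]
frozen_before = [row[:] for row in base.weights]
loss_before = mean_loss(base, head, data)
train_head(base, head, data)
loss_after = mean_loss(base, head, data)
print(f"loss {loss_before:.3f} -> {loss_after:.3f}")
```

Because the base never changes, its features could even be computed once and cached, which is what makes this phase fast on a small dataset.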

Using transfer-learning strategies such as fine-tuning, we obtain a model that can outperform a custom-written CNN. We addressed paper currency recognition by applying a pre-trained transfer-learning model to a custom dataset.

C. Fine-Tuning

Fine-tuning is the process of taking the weights of a trained neural network and using them as the initialization for a new model that is trained on new image or video data. It speeds up training and helps overcome a small dataset size. In our model, we load the pre-trained layers, pass the image data through them, and fine-tune the trainable layers nearest the fully connected layer.
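The two training phases (head only, then the last two pre-trained layers as well) amount to changing which layers are trainable between runs. The sketch below models that bookkeeping; the layer names are hypothetical, and the reduced learning rate in the second phase (base rate times 0.1) is a common practice assumed here, not a value stated in the text.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Layer:
    name: str
    pretrained: bool        # True for layers loaded from the pre-trained model
    trainable: bool = True

def feature_extraction_phase(layers: List[Layer]) -> List[str]:
    """Phase 1: freeze every pre-trained layer; only the new layers are trained."""
    for layer in layers:
        layer.trainable = not layer.pretrained
    return [layer.name for layer in layers if layer.trainable]

def fine_tuning_phase(layers: List[Layer], n_unfreeze: int = 2, base_lr: float = 1e-3,
                      lr_factor: float = 0.1) -> Tuple[List[str], float]:
    """Phase 2: also unfreeze the last n_unfreeze pre-trained layers (those nearest
    the fully connected head) and train with a smaller learning rate."""
    pretrained = [layer for layer in layers if layer.pretrained]
    for layer in pretrained:
        layer.trainable = False
    if n_unfreeze > 0:  # guard: pretrained[-0:] would select every layer
        for layer in pretrained[-n_unfreeze:]:
            layer.trainable = True
    for layer in layers:
        if not layer.pretrained:
            layer.trainable = True
    return [layer.name for layer in layers if layer.trainable], base_lr * lr_factor

# Usage with hypothetical layer names.
model = [Layer("conv1", True), Layer("conv2", True), Layer("conv3", True),
         Layer("conv4", True), Layer("fc_new", False)]
print(feature_extraction_phase(model))
print(fine_tuning_phase(model))
```

A smaller learning rate in the second phase helps keep the unfrozen pre-trained weights close to their original values, so the useful general features are refined rather than overwritten.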

Conclusion

We began with a brief introduction to our system and outlined the scope and objectives of this project. During our research, we learned about the problems that visually impaired people face in their current environment. We reviewed numerous sources found through web searches, narrowed them down, and chose a few papers as the basis of our research. We also concluded that existing systems based on sparse representation, edge detection, and similar techniques are very complex and not handheld, and that systems based only on colour parameters are less effective and give only partially accurate results. Focusing on handheld solutions, we could not find an existing system that enables visually impaired people to recognize banknotes. In this study, we presented a new approach to recognizing Indian currency notes, in which features are extracted from images using deep transfer-learning techniques. The project consists of two main steps: first, we train our model starting from an already pre-trained architecture; second, the app uses the camera to capture an image of the note, from which the trained model extracts features and recognizes the denomination. As a result, the system recognizes paper currency with very high accuracy. In future work, the proposed system can be extended to recognize more complex Indian currency notes. The overall aim is to support visually impaired people by building effective and reliable systems. This project aims to create a user-friendly, real-time, accessible application and proposes an approach for extracting the characteristics of Indian paper currency. We expect it to be useful in assisting visually impaired people.
In the future, the mobile app could be extended to recognize foreign currency notes such as dollars, taka, and euros, and its UI could be further improved for ease of use. The project could also be extended to detect counterfeit currency and to convert between currency denominations.

References

[1] Rubeena Mirza, Vinti Nanda, "Design and Implementation of Indian Paper Currency Authentication System Based on Feature Extraction by Edge Based Segmentation Using Sobel Operator," International Journal of Engineering Research and Development, e-ISSN: 2278-067X, p-ISSN: 2278-800X, Vol. 3, Issue 2, August 2012.

[2] Kalyan Kumar Debnath, Sultan Uddin Ahmed, Md. Shahjahan, "A Paper Currency Recognition System Using Negatively Correlated Neural Network Ensemble," Journal of Multimedia, Vol. 5, No. 6, December 2010.

[3] Megha Thakur, Amrit Kaur, "Various Counterfeit Currency Detection and Classification Techniques," International Journal for Technological Research in Engineering, Vol. 1, Issue 11, pp. 1309-1313, July 2014.

[4] Vivek Sharan, Amandeep Kaur, "Detection of Counterfeit Indian Currency Note Using Image Processing," International Journal of Engineering and Advanced Technology (IJEAT), Vol. 9, Issue 1, ISSN: 2249-8958, October 2019.

[5] Aakash S. Patel, "Indian Paper Currency Detection," International Journal for Scientific Research & Development (IJSRD), Vol. 7, Issue 6, ISSN: 2321-0613, June 2019.

[6] Ingulkar Ashwini Suresh, "Indian Currency Recognition and Verification Using Image Processing," International Research Journal of Engineering and Technology (IRJET), Vol. 3, Issue 6, 2016.

[7] Mohd Bilal Ganai, "Implementation of Text to Speech Conversion Technique," International Journal of Innovative Research in Computer and Communication Engineering (An ISO 3297:2007 Certified Organization), Vol. 3, Issue 9, September 2015.

[8] Mriganka Gogoi, et al., "Automatic Indian Currency Denomination Recognition System Based on Artificial Neural Network," IEEE, 2015, 978-1-4799-5991-4/15.

[9] Kuldeep Verma, et al., "Indian Currency Recognition Based on Texture Analysis," Institute of Technology, Nirma University, Ahmedabad 382 481, 08-10 December 2011.

Copyright

Copyright © 2022 Chidanandan V, Aarjeyan Shrestha, Adarsh Kumar Dubey, Amit Chaudhari, Apil Bist. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


Paper Id : IJRASET45699

Publish Date : 2022-07-17

ISSN : 2321-9653

Publisher Name : IJRASET
