Ijraset Journal For Research in Applied Science and Engineering Technology

Lung Cancer Detection using Fusion, CNN and YOLO in MATLAB

Authors: Parth Vats, Tejas, Ritu Vats, Geeta Dahiya

DOI Link: https://doi.org/10.22214/ijraset.2023.49125

Abstract

Lung cancer is one of the major causes of death in India. Various data analytics and classification approaches have been used to diagnose and locate lung cancer. Because the underlying cause of the disease is not yet known, prevention is difficult, so a cure depends largely on early tumour diagnosis. In this work, a lung cancer detection method based on image processing and deep learning is applied to classify the presence of a lung tumour in CT and PET images. We first obtain the CT scan and the PET image of the same patient and then combine the two into a single image using a fusion technique. Classification is carried out using image feature extraction, so that the fused CT and PET image of the patient is classified as normal or abnormal. The detection stage then focuses on the tumour region of the abnormal images. By combining a CNN with YOLO, the approach aims to identify lung cancer and its stages effectively and to produce more precise results.

I. INTRODUCTION

A. Lung Cancer

Lung cancer is a leading cause of cancer deaths worldwide. The disease has four stages: if it is detected at stage I, the survival rate is about 67%, whereas detection at stage IV leaves a survival chance of less than 1%. Early detection and treatment at stage I therefore give a high survival rate. Unfortunately, lung cancer is usually detected late because it produces few symptoms in its early stages. This is why lung screening programs have been investigated to detect pulmonary nodules, which are small lesions that may or may not be calcified and may be almost spherical or have irregular borders. Early diagnosis has important prognostic value and a large impact on treatment planning. Because nodules are the most common sign of lung cancer, nodule detection in CT scan images is a central diagnostic problem.

Conventional projection radiography is a simple, cheap, and widely used clinical test. Unfortunately, its ability to detect lung cancer in its early stages is limited by several factors, both technical and observer-dependent: lesions are relatively small and usually contrast poorly with the surrounding anatomical structures. A wide range of imaging techniques is now available for medical diagnosis, such as radiography, computed tomography (CT), and magnetic resonance imaging (MRI). In recent times, CT has been the most effectively used diagnostic imaging examination for chest diseases such as lung cancer, tuberculosis, pneumonia, and pulmonary emphysema. The volume and size of medical images are increasing steadily, so it has become necessary to use computers to facilitate the processing [1] and analysis of those images.

II. LITERATURE SURVEY

1. W. Wang and S. Wu

The paper reports that (1) when image processing techniques are applied to lung tissue information recognition, the key and hardest task is automatically detecting the tiny nodules that may indicate early lung cancer; and (2) a newly developed ridge detection algorithm aims to diagnose indeterminate nodules correctly, allowing curative resection of early-stage malignant nodules and avoiding the morbidity and mortality of surgery for benign nodules. The algorithm was compared with several traditional image segmentation algorithms, and all the results were satisfactory for diagnosis.

Summary: A newly developed ridge detection algorithm aims to diagnose indeterminate nodules correctly, allowing curative resection of early-stage malignant nodules.

2. A. Sheila and T. Ried

This perspective on Varella-Garcia et al. (beginning on p. XX in this issue of the journal) examines the role of interphase fluorescence in-situ hybridization (FISH) for the early detection of lung cancer. This work involving interphase FISH is an important step towards identifying and validating a molecular marker in sputum samples for lung-cancer early detection and highlights the value of establishing cohort studies with biorepositories of samples collected from participants followed over time for disease development.

Summary: Interphase FISH on sputum samples is examined as a molecular marker for the early detection of lung cancer.

3. D. Kim, C. Chung and K. Barnard

In recent years, research has been devoted to relevance feedback as an effective way to improve the performance of image similarity search. However, few relevance feedback methods are currently available for performing relatively complex queries on large image databases. In complex image queries, images with relevant concepts are often scattered across several visual regions of the feature space. A query must therefore be represented by multiple regions in the feature space, which makes it necessary to handle disjunctive queries. In this paper, we propose a new adaptive classification and cluster-merging method to find the multiple regions, and their arbitrary shapes, of a complex image query. Our method achieves the same high retrieval quality regardless of the shapes of the query regions, since the measures used in our method are invariant under linear transformations. Extensive experiments show that the result of our method converges quickly to the user's true information need, and that its retrieval quality is about 22% better in recall and 20% better in precision than the query expansion approach, and about 35% better in recall and about 31% better in precision than the query point movement approach, in MARS.

Summary: The method achieves the same high retrieval quality regardless of the shapes of the query regions, since the measures it uses are invariant under linear transformations.

4. S. Saleh, N. Kalyankar, and S. Khamitkar

In this paper, we present methods for edge-based segmentation of satellite images. Seven techniques are used: the Sobel operator, Prewitt, Kirsch, Laplacian, Canny, Roberts, and Edge Maximization Technique (EMT). They are compared with one another in order to choose the best technique for edge-detection-based image segmentation. The techniques are applied to one satellite image to choose base guesses for segmentation or edge detection.

Summary: A comparative study of seven edge detection techniques was carried out; the experiments indicate that the Kirsch, EMT, and Prewitt techniques, respectively, are the best for edge detection.

5. L. Lucchese and S. K. Mitra

Segmentation is the low-level operation concerned with partitioning images by determining disjoint and homogeneous regions or, equivalently, by finding edges or boundaries. The homogeneous regions, or the edges, are supposed to correspond to actual objects, or parts of them, within the images. Thus, in a large number of applications in image processing and computer vision, segmentation plays a fundamental role as the first step before applying higher-level operations such as recognition, semantic interpretation, and representation to images. Until very recently, attention was focused on the segmentation of gray-level images, since these were the only kind of visual information that acquisition devices could capture and computer resources could handle. Nowadays, color imagery has definitely supplanted monochromatic information, and computation power is no longer a limitation in processing large volumes of data. Attention has accordingly shifted in recent years to algorithms for the segmentation of color images, and various techniques, often borrowed from gray-level image segmentation, have been proposed. This paper provides a review of methods advanced in the past few years for the segmentation of color images.

Summary: A review of recently proposed methods for the segmentation of color images, many of them adapted from gray-level image segmentation.

6. F. Taher and R. Sammouda

The analysis of sputum color images can be used to detect the lung cancer in its early stages. However, the analysis of sputum is time consuming and requires highly trained personnel to avoid high errors. Image processing techniques provide a good tool for improving the manual screening of sputum samples. In this paper two basic techniques have been applied: a region detection technique and a feature extraction technique with the aim to achieve a high specificity rate and reduce the time consumed to analyze such sputum samples. These techniques are based on determining the shape of the nuclei inside the sputum cells. After that we extract some features from the nuclei shape to build our diagnostic rule. The final results will be used for a computer aided diagnosis (CAD) system for early detection of lung cancer.

Summary: Region detection and feature extraction based on the shape of the nuclei in sputum cells are applied, with the results intended for a computer-aided diagnosis (CAD) system for early detection of lung cancer.

III. PROPOSED METHOD

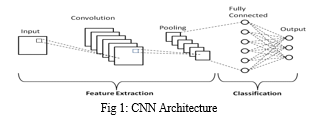

A. CNN

A convolutional neural network (CNN or ConvNet) is a network architecture for deep learning that learns directly from data, eliminating the need for manual feature extraction. CNNs are particularly useful for finding patterns in images to recognize objects, faces, and scenes. They can also be quite effective for classifying non-image data such as audio, time series, and signal data. Applications that call for object recognition and computer vision, such as self-driving vehicles and face-recognition applications, rely heavily on CNNs.

The term "convolution" in CNN denotes the mathematical operation of convolution, a special kind of linear operation in which two functions are combined to produce a third function that expresses how the shape of one is modified by the other. In simple terms, the image and a small filter (kernel) are both represented as matrices; the kernel is slid across the image, and at each position the element-wise products are summed to give an output that is used to extract features from the image.
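The sliding-window operation described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the network used in this work; the function name `conv2d_valid`, the toy image, and the kernel values are all assumptions chosen for the example. As in most deep learning frameworks, the kernel is applied without flipping (strictly a cross-correlation).

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (lists of lists), stride 1, no padding."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Sum of element-wise products between the kernel and the
            # image patch whose top-left corner is at (r, c).
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4x4 "image" with a vertical edge between columns 1 and 2.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
# Illustrative horizontal-difference kernel (not taken from the paper).
kernel = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d_valid(image, kernel)
print(feature_map)  # the response is non-zero only where the edge lies
```

The feature map responds only in the column where the intensity changes, which is the sense in which a convolution kernel "extracts a feature". In a CNN the kernel values are learned during training rather than fixed by hand.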

B. YOLO V2

In YOLOv2, each convolution block applies batch normalization followed by a Leaky ReLU activation, except for the last convolution block. YOLO divides the input image into an S×S grid. Each grid cell predicts only one object. For example, the yellow grid cell below tries to predict the "person" object whose center (the blue dot) falls inside the grid cell. Each grid cell predicts a fixed number of boundary boxes. In this example, the yellow grid cell makes two boundary box predictions (blue boxes) to locate where the person is. However, the one-object rule limits how close detected objects can be. For each grid cell:

- It predicts B boundary boxes and each box has one box confidence score,

- It detects one object only regardless of the number of boxes B,

- It predicts C conditional class probabilities (one per class for the likeliness of the object class).

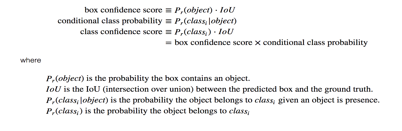
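The grid description above can be made concrete with a small sketch. It assumes object centres are given in normalized image coordinates in [0, 1), and that each box carries five values (x, y, w, h and a confidence score), as in the original YOLO formulation. The function names and the example values S = 7, B = 2, C = 20 are illustrative assumptions, not settings reported in this paper.

```python
def responsible_cell(cx, cy, grid_size):
    """Return (row, col) of the grid cell that contains the centre (cx, cy).

    cx and cy are normalized to [0, 1); the min() guards the edge case 1.0.
    """
    col = min(int(cx * grid_size), grid_size - 1)
    row = min(int(cy * grid_size), grid_size - 1)
    return row, col


def outputs_per_image(grid_size, boxes_per_cell, num_classes):
    """Number of predicted values for one image.

    Each cell predicts B boxes with 5 values each (x, y, w, h, confidence)
    plus C conditional class probabilities.
    """
    return grid_size * grid_size * (boxes_per_cell * 5 + num_classes)


# Hypothetical object centred slightly right of the middle, in the upper third.
cell = responsible_cell(0.52, 0.31, grid_size=7)
total = outputs_per_image(grid_size=7, boxes_per_cell=2, num_classes=20)
print(cell, total)
```

Only the cell returned by `responsible_cell` is asked to predict the object, which is why two objects whose centres fall into the same cell cannot both be detected under the one-object rule.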

Each boundary box has a box confidence score. The confidence score reflects how likely it is that the box contains an object (objectness) and how accurate the boundary box is. We normalize the bounding box width w and height h by the image width and height; x and y are offsets relative to the corresponding cell. Hence, x, y, w and h all lie between 0 and 1. Each cell also has 20 conditional class probabilities (one per category, for a 20-class dataset such as PASCAL VOC). The conditional class probability is the probability that the detected object belongs to a particular class. The class confidence score for each prediction box is computed as:

Class confidence score = box confidence score * conditional class probability

It measures the confidence in both the classification and the localization (where an object is located). These scoring and probability terms are easy to confuse.
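For reference, the three quantities discussed above can be written as follows. This restates the definitions from the original YOLO formulation in LaTeX; IoU denotes the intersection over union between the predicted box and the ground truth.

```latex
\begin{align*}
\text{box confidence score} &= \Pr(\text{object}) \cdot \text{IoU} \\
\text{conditional class probability} &= \Pr(\text{class}_i \mid \text{object}) \\
\text{class confidence score} &= \Pr(\text{class}_i) \cdot \text{IoU} \\
&= \text{box confidence score} \times \text{conditional class probability}
\end{align*}
```

The last line follows because $\Pr(\text{class}_i \mid \text{object}) \cdot \Pr(\text{object}) = \Pr(\text{class}_i)$ when a class can only be present if an object is present.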

YOLO predicts multiple bounding boxes per grid cell. To compute the loss for the true positive, we only want one of them to be responsible for the object. For this purpose, we select the one with the highest IoU (intersection over union) with the ground truth. This strategy leads to specialization among the bounding box predictions. Each prediction gets better at predicting certain sizes and aspect ratios.
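The selection rule described above can be sketched as follows. This is a minimal illustration that assumes boxes are given as corner coordinates `(x_min, y_min, x_max, y_max)`; the function names and the example boxes are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    # Clamp at zero so that non-overlapping boxes give no intersection.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def responsible_box(predicted_boxes, ground_truth):
    """Index and IoU of the prediction that best overlaps the ground truth."""
    scores = [iou(box, ground_truth) for box in predicted_boxes]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores[best]


# Hypothetical ground truth and two predictions from the same grid cell.
ground_truth = (2.0, 2.0, 6.0, 6.0)
predictions = [(1.0, 1.0, 5.0, 5.0), (2.0, 3.0, 6.0, 7.0)]
best_index, best_iou = responsible_box(predictions, ground_truth)
print(best_index, round(best_iou, 3))
```

Only the box at `best_index` contributes to the localization loss for this object; the other box from the same cell is treated as not responsible.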

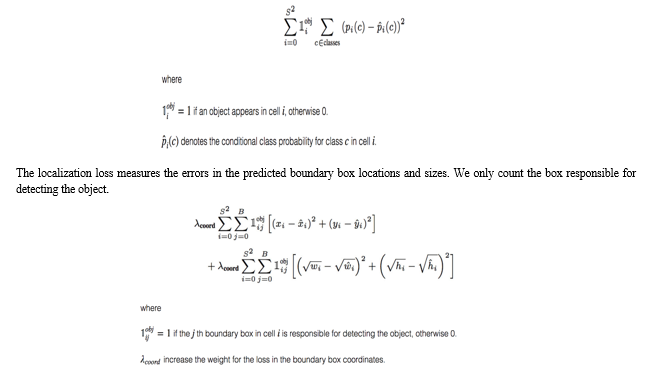

YOLO uses the sum-squared error between the predictions and the ground truth to calculate the loss. The loss function is composed of:

- the classification loss.

- the localization loss (errors between the predicted boundary box and the ground truth).

- the confidence loss (the objectness of the box).

C. Classification Loss

If an object is detected, the classification loss at each cell is the squared error of the class conditional probabilities for each class:
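Written out, the classification loss takes the following form in the original YOLO formulation. The notation is an assumption carried over from that formulation rather than from this paper: $\mathbb{1}_i^{\text{obj}}$ is 1 if an object appears in cell $i$ and 0 otherwise, $p_i(c)$ is the ground-truth conditional probability of class $c$ in cell $i$, and $\hat{p}_i(c)$ is the predicted one.

```latex
\[
\sum_{i=0}^{S^2} \mathbb{1}_i^{\text{obj}}
\sum_{c \,\in\, \text{classes}} \bigl(p_i(c) - \hat{p}_i(c)\bigr)^2
\]
```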

For the localization loss, we do not want to weight absolute errors in large boxes and small boxes equally: a 2-pixel error matters much more in a small box than in a large one. To partially address this, YOLO predicts the square root of the bounding box width and height instead of the width and height themselves. In addition, to put more emphasis on boundary box accuracy, the localization loss is multiplied by λcoord.
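The effect of the square root can be checked numerically. The sketch below computes the localization term for a single responsible box, assuming boxes are given as normalized `(x, y, w, h)` tuples. The value λcoord = 5 is the one used in the original YOLO paper and is an assumption here, as are the function name and the example boxes.

```python
import math

LAMBDA_COORD = 5.0  # weight from the original YOLO paper; an assumption here


def localization_loss(pred, truth, lambda_coord=LAMBDA_COORD):
    """Localization loss for one responsible box; boxes are (x, y, w, h)."""
    px, py, pw, ph = pred
    tx, ty, tw, th = truth
    centre_term = (px - tx) ** 2 + (py - ty) ** 2
    # Square roots shrink the penalty for a given absolute error
    # as the box gets larger.
    size_term = (math.sqrt(pw) - math.sqrt(tw)) ** 2 \
        + (math.sqrt(ph) - math.sqrt(th)) ** 2
    return lambda_coord * (centre_term + size_term)


# The same absolute width error (0.02) applied to a large and a small box.
large = localization_loss((0.5, 0.5, 0.82, 0.80), (0.5, 0.5, 0.80, 0.80))
small = localization_loss((0.5, 0.5, 0.12, 0.10), (0.5, 0.5, 0.10, 0.10))
print(large < small)
```

With identical absolute errors, the small box incurs the larger loss, which is the behaviour the square-root parameterization is meant to produce.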

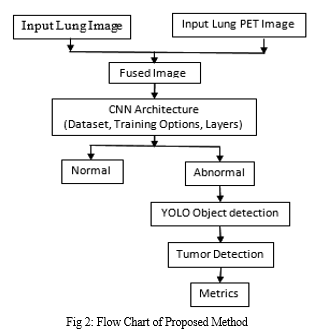

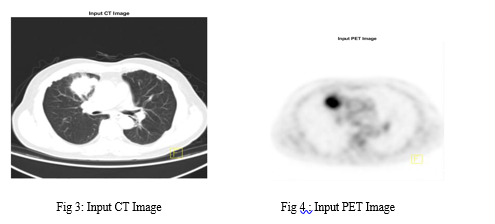

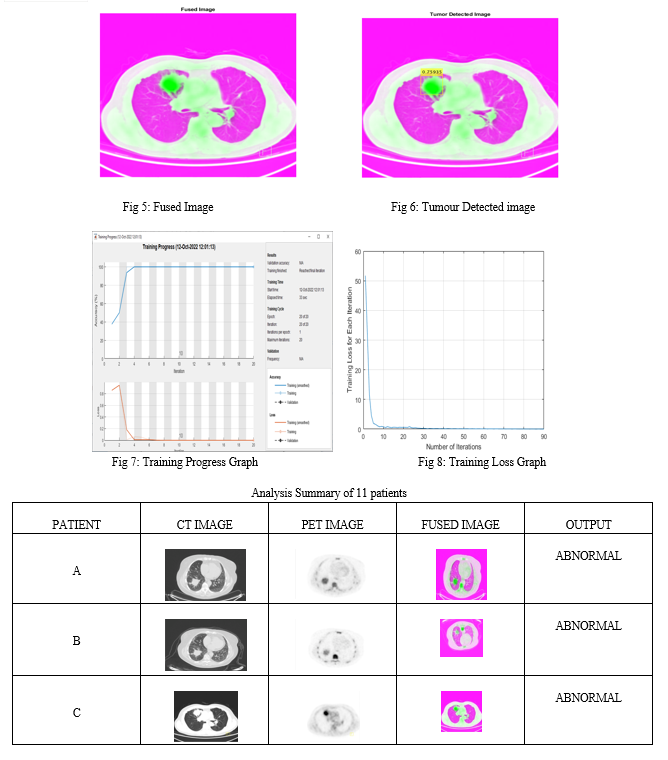

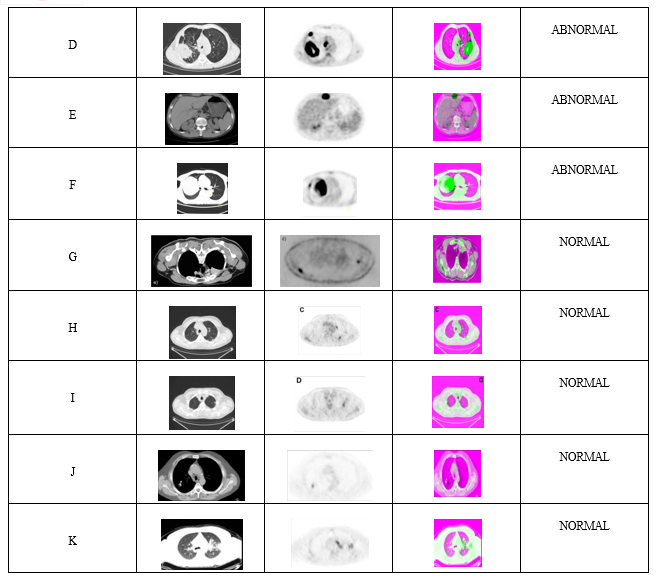

D. Methodology

- First, we collected both lung CT and lung PET images from Kaggle as our dataset and used them to train our deep learning model.

- Then, using fusion techniques, the CT and PET images of the same patient are combined into a single image.

- The fused images are then used to train a CNN, which classifies each fused image as normal or abnormal.

- If an input image is classified as abnormal, YOLO V2 is used to detect the tumour and mark it with a boundary box in the classified output image. In this way we investigate a better and more accurate method for lung cancer/tumour detection using deep learning techniques.
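The control flow of the steps above can be sketched as follows. This is only an illustration of the fuse, classify, then detect sequence, not the MATLAB implementation used in this work. The paper does not state which fusion rule is used, so a pixel-wise weighted average is assumed here; `toy_classifier` and `toy_detector` are hypothetical threshold-based stand-ins for the trained CNN and the YOLO V2 detector.

```python
def fuse_images(ct, pet, ct_weight=0.5):
    """Pixel-wise weighted average of two equally sized grayscale images.

    Assumed fusion rule for illustration; images are lists of lists.
    """
    if len(ct) != len(pet) or any(len(a) != len(b) for a, b in zip(ct, pet)):
        raise ValueError("CT and PET images must have the same dimensions")
    pet_weight = 1.0 - ct_weight
    return [
        [ct_weight * c + pet_weight * p for c, p in zip(ct_row, pet_row)]
        for ct_row, pet_row in zip(ct, pet)
    ]


def run_pipeline(ct, pet, classify, detect):
    """Fuse, classify, and run detection only on abnormal images."""
    fused = fuse_images(ct, pet)
    label = classify(fused)
    boxes = detect(fused) if label == "abnormal" else []
    return label, boxes


def toy_classifier(image, threshold=100):
    # Hypothetical stand-in for the CNN: flags any very bright pixel.
    peak = max(max(row) for row in image)
    return "abnormal" if peak > threshold else "normal"


def toy_detector(image, threshold=100):
    # Hypothetical stand-in for YOLO V2: one box around the bright pixels,
    # returned as (row_min, col_min, row_max, col_max).
    hits = [(r, c) for r, row in enumerate(image)
            for c, value in enumerate(row) if value > threshold]
    if not hits:
        return []
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return [(min(rows), min(cols), max(rows), max(cols))]


ct = [[10, 10, 10], [10, 200, 10], [10, 10, 10]]
pet = [[0, 0, 0], [0, 240, 0], [0, 0, 0]]
label, boxes = run_pipeline(ct, pet, toy_classifier, toy_detector)
print(label, boxes)
```

Passing the classifier and detector in as functions keeps the sketch independent of any particular model; in practice they would be replaced by the trained CNN and the trained YOLO V2 network.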

IV. ADVANTAGES AND APPLICATIONS OF PROPOSED METHOD

A. Advantages

- Early diagnosis improves cancer outcomes by providing care at the earliest possible stage.

- In addition to increased accuracy in predictions and a better Intersection over Union in bounding boxes (compared to other real-time object detectors), YOLO has the inherent advantage of speed.

- YOLO is a much faster algorithm than its counterparts, running at up to 45 FPS.

B. Applications

- Early Lung Cancer Diagnosis

- In medical fields

V. RESULTS AND CONCLUSIONS

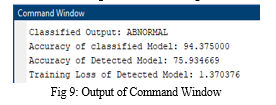

We tested our project on 11 patients, and it successfully detected the tumour in 9 of the 11 (about 82%).

VI. ACKNOWLEDGMENT

We would like to express our sincere thanks and gratitude to Mr. Pankaj Dahiya, Medical Oncologist, Critical Care Centre Dayanand Hospital, and Mr. Tarun Yadav, MS Civil Hospital, Sonipat, for their valuable guidance, for their help in the research work, and for recommending this project. We would also like to thank our guide teachers and our principal, Mr. V. K. Mittal, for their valuable support and cooperation, which helped us complete this project.

Conclusion

In this paper, we have investigated a novel technique that uses a CNN and a pretrained YOLO V2 network for lung cancer/tumour detection in lung images. The most effective strategy for reducing lung cancer mortality is early detection. First, both the CT and PET lung images of the patient are taken and fused into a single image using fusion techniques. The fused image is then classified as normal or abnormal by a deep learning network, i.e., a CNN. If the fused image is abnormal, i.e., contains a tumour, YOLO V2 is used to detect the lung cancer/tumour.

References

[1] W. Wang and S. Wu, "A Study on Lung Cancer Detection by Image Processing," Proceedings of the IEEE Conference on Communications, Circuits and Systems, pp. 371-374, 2006.

[2] A. Sheila and T. Ried, "Interphase Cytogenetics of Sputum Cells for the Early Detection of Lung Carcinogenesis," Journal of Cancer Prevention Research, vol. 3, no. 4, pp. 416-419, March 2010.

[3] D. Kim, C. Chung and K. Barnard, "Relevance Feedback using Adaptive Clustering for Image Similarity Retrieval," Journal of Systems and Software, vol. 78, pp. 9-23, Oct. 2005.

[4] S. Saleh, N. Kalyankar, and S. Khamitkar, "Image Segmentation by using Edge Detection," International Journal on Computer Science and Engineering (IJCSE), vol. 2, no. 3, pp. 804-807, 2010.

[5] L. Lucchese and S. K. Mitra, "Color Image Segmentation: A State of the Art Survey," Proceedings of the Indian National Science Academy (INSA-A), New Delhi, India, vol. 67, no. 2, pp. 207-221, 2001.

[6] F. Taher and R. Sammouda, "Identification of Lung Cancer based on shape and Color," Proceedings of the 4th International Conference on Innovation in Information Technology, pp. 481-485, Dubai, UAE, Nov. 2007.

Copyright

Copyright © 2023 Parth Vats, Tejas, Ritu Vats, Geeta Dahiya. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET49125

Publish Date : 2023-02-15

ISSN : 2321-9653

Publisher Name : IJRASET
