Ijraset Journal For Research in Applied Science and Engineering Technology

- Home / Ijraset

- On This Page

- Abstract

- Introduction

- Conclusion

- References

- Copyright

Review Made on the Content-Based Image Recovery

Authors: V. Sivakumar, AbdurRehman Khadim, Rohan B Patil, Arun Balouria, R. Swathi

DOI Link: https://doi.org/10.22214/ijraset.2022.45973

Certificate: View Certificate

Abstract

Images recovery mean that recovering the authentic images from which the images recovered from the feature database, as you will see inhere we talked in the paper all about the latest methods and technique in the field based on image recovery and image processing. Content Based Image Recovery (CBIR) is fastest and fastest developing research in field and in the place of the Graphic Processing. Translated methods facilitate faster image recovery, queries for image recovery system, image based on image processing (CBIR) in combination with multimedia encoded features, and outside many image recovery techniques out there. Some futuristic researches guide us to the present here to evaluate the research in this area and infield of image recovery.

Introduction

I. INTRODUCTION

Content-based image recovery method (CBIR) show that it has excellent performance in calculating low level features of the pixels representation still the output volume doesn’t match with user's expectations. System does not work well in extracting semantic elements such as objects, object meanings, actions and emotions. In this situation, is called as semantic gap, included present research of (CBIR) programs on recovering image by the type and object or visual field. Still analyzing also interpret big image value and various images site, such as in the CBIR method is clearly difficult because of there is no need prior knowledge of the size or scale of each of the buildings where images can be inspected. Content Based Image Recovery (CBIR), automatically finds digital images on a large website. This process uses the visual content of the image to make a query. Contrary to previous methods of retrieving images involving footnote of handwritten images, CBIR system detects image by auto extraction of features. With technological advances, which include the growing fame of optic and digital-cameras and the good chances to manage and withhold large amounts of information, CBIR appears to be more effective and power saving. It frees the user coming from previous hard work, straightforward and prone mistake and errors and as a result greatly improves system usability.

II. THE USE OF IMAGES

Historical record says that the first man called for paintings on the walls of the cave. In Roman Empire times the images were mostly saw as a system of architecture and road development with geospatial information maps and gaining a high hand in the face of war. The demand and use of images increased over the years, especially with arrival of photography in sixteenth century. And in the 20th century, the introduction of various forms of computers and development in science and technology gives birth to inexpensive digital storage devices and a global web, leading to the development of growing assets such as digital knowledge in the form of photography. In this age of computer work in human life, including trade, surveillance, academics, hospitals, government, engineering, architecture, crime prevention, journalism, fashion and photography, and historians desperately need photography to make effective and efficient resources.

A large collection of images and their grayscale values ??is called a photographic website. An image website is a structure in which image values such as grayscale values are given individually. Image values or data includes raw images and data extracted from grayscale values ??with automatic or computer aided image analysis techniques. Police keep record of photos of the crime scenes, murderers, and evidence of corruption, criminals, and stolen items. On X-rays of medical practice, MRI and ultra-sonic scanned image of the site is stored for monitoring, diagnosis, and research purposes.

In the field of architecture and engineering design the details of the images are of the design designs, and the analysis of the mechanical components. In advertising and issuing of journals create a photographic portfolio of many events and venture as buildings, people, sports, national and international events, and outcome advertisements. In historical researches the photographic images are made up in areaof historical recordswhich includes the art,medicine and social sciences.

III. THE PROBLEM INIMAGE RECOVERY

If you have a small collections of image, simple navigation can identify an image in your mobile gallery or any other image archive, but it’s not a best solution for the big and varied type of image collection, where user will encounter image recovering problems. The problem of image recovery is that the problem of searching and recovering of images which is closer to a user's request retrieved from the database. An example of a recovery problem is ingenuity of engineer who requires finding his organization's database for help in similar design of his projects.

Let us say a police looking for validation of a criminal suspect face among the faces are in famous criminal-data. In business section before the sign is finally approved for use, it’s necessary to finding out if there are similar ones or not because this can lead to complaints and penalties on copyright. In hospitals, some tablets and ailments need attention of doctor and reviews like X-ray, ultrasound, MRI, or scanned image of the patient before any suggestion of the solution.

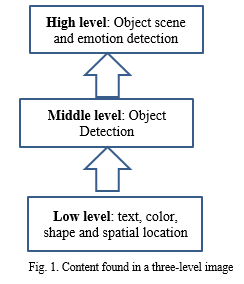

IV. VISUAL CONTENT LEVELS

Images are inherited by attributes, dataand contents such that these can help resolve the image recovery problem. The data or content-value that can be obtained from the image such areas sorted in major three levels. See Figure 1 for information.

- Low Level: Includes visual characteristics such as shape, color, texture,motion, spatial-data, and other valuable information.

- Intermediate Level: It include the attendance or arrangement of specific types of object, content in the image or scenes and roles.

- High Level: Includes emotions, feeling or impression, expression of feeling and meanings related with mixture of perceptual attributes. For Example including scenes or objects of emotions or expression of the religious value.

Image content quality determines the degree of output of the required feature. At the lowest level, which is also considered to be the basic or basic level the elements extracted such as texture, color, shape, location data and movement of data are called as classic features because they can only be gained with standard pixel-elements data, and pixel representation of photography. Medium-level features can be extracted with the knowledge of the combination pixels which make the whole image, while the existing high level features can go out of just a set of pixels. Finding imagery, descriptions and feelings connected with a combination of pixels that forms a complete object.

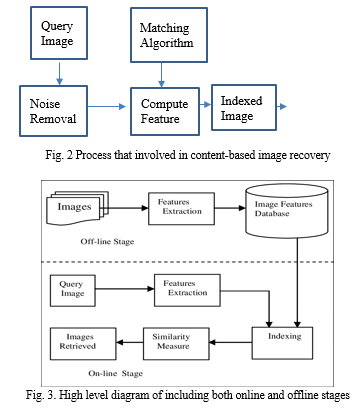

V. PRINCIPLE OF CBIR

The standard CBIR structure as shown in Figure.2 automatically removes the visual features (color, texture, shape and location data) of every images on a website based on the pixel value ??presented in different types of system page is called as feature forum. The characteristic feature of each attribute of the image is relatively small in volume compared to the image data.

So the feature website has the volume of abstraction or combined image to shape on the image website; every image represents a combined rendering of the content that the image contains such as texture, color, shape and location-data and represented in form of fixed length or vector or real value or many component and signature. Many customers usually provide query image input name or image itself as input and insert in the system. Then system automate and removes the visual information, objects and contents of a query image (online process) in same way as it do for each of images you have saved (offline), and identifies the images on web whose vectors contain same feature values. Those are available as query image, also sort out items in familiar way also in terms as similarity of a feature website. During this application it has the vectors of a compact feature less than the size of the image data which proves that CBIR its method is cheaper and faster and more efficient and more profitable than text based document image recovering. CBIR can be used in 2-ways. First one, direct image comparing or matching is what two image matching, one image example such as, an existing image on a website. Second, a limited image comparison, which is to find the images closest to the image in question.

In offline process from the document the grayscale value of comparing imagesstored in image feature database and in online process from the given query image we remove the noise in the image and extract the feature and then convert it into the gray scale value after that we compare the grayscale by pixel by pixel if we found the similar value in comparison then we retrieve the images from the document those who have the similar values.

VI. PROPOSED SYSTEM

A. Proposed Methodology

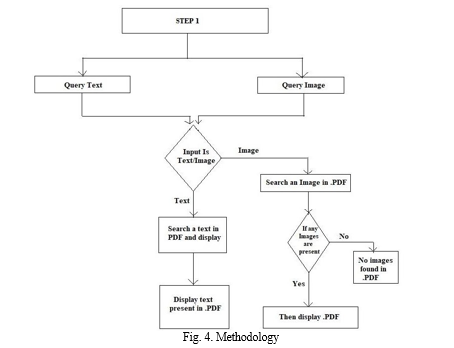

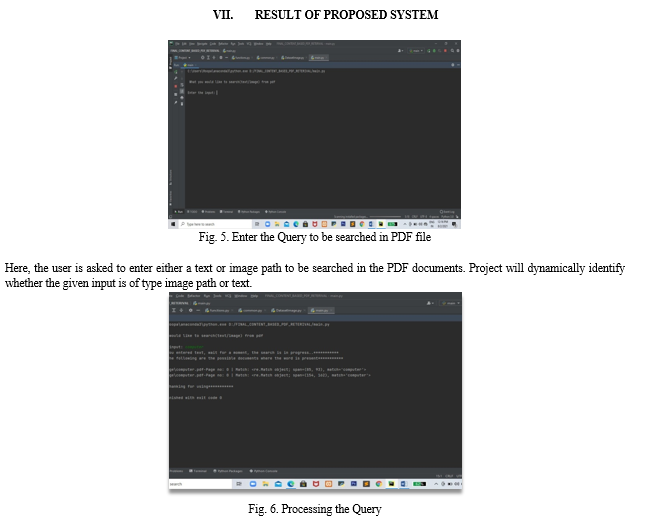

We have proposed a system to search for given input query image/text from PDF. The proposed system extracts local binary pattern (LBP) and RGB features from input query image as well as the images present in PDF document.

The extracted features are used to construct histogram and matched further to find exact matching images present in PDF documents.

The proposed system also uses general text matching technique finds identify whether the input substring is present in PDF document or not. In both the cases if the search is successful.

The corresponding PDF document is retrieved and opened in web browser to show the input query image/text is present in document. Otherwise it will display appropriate message to indicate unsuccessful search. The proposed system contains following modules.

The following are the Project modules

- Input module.

- Data-preprocessing module.

- Feature extraction.

- Image similarity detection module

- Text search module

B. Implementation Details

In this section we describe the implementation of the core modules of the proposed system and the pieces of code.

- Input Module

The input module will accept image path/substring as a input and initiate a system for searching. The following python code fragment shows the implementation details of input module.

def get_query_image_path_from_user():

dataset_path = raw_input("\nEnter the input: ")

return dataset_path

2. Data-preprocessing Module

In this step the input query image and text information is preprocessed in order to remove the noise present in an image. In this step we also convert RGB image into grayscale image. The text input content is tokenized to break long statements into individual words. The following fragment of code shows image as well as text preprocessing operations.

3. Feature Extraction.

In order to find the correct matching, all images which are present in PDF documents are extracted and then their local binary pattern and color information features are extracted and corresponding histograms constructed, normalized to find similarity between extracted image features which are present in PDF Document to the extracted image features of the query image path to find a perfect matching image

4. Similarity Matching Module

This method is used to find similarities between the image elements of the query image method in the extracted elements of PDF Documents and to obtain the corresponding PDF document.

5. Text Search Module

This module accepts the query text and then list out all the PDF documents available in the specified path and later text information present in each PDF document is extracted to find whether the input query text present in the PDF document or not. This process will be repeated for all PDF documents present in specified path. If appropriate matching text is present in PDF documents then it will display the PDF documents.

Conclusion

The important aim of this project is to give an efficient, effective and easier way to access and recover text-based images. We start by doing text-based image retrieval to obtain a set of immature image with a higher memory level compared to a higher level of accuracy. Then image content or feature-based processing is used to produce the most relevant, accurate and accurate output. Combining a text-based meta search method with a content-based retrieval method: first we use a text-based method; then apply image retrieval method based on visual content; or he can use two methods at same period of time. By using a high memory level and low accuracy, as well as a less costly original text based application, we can easily accumulate more pertinent images online at a much lower and affordable price. Then a more cost-effective, accurate and visual-based or content-based methods are used to improve precision-related approach in very small given image sets. The test results show as, even atomic image features and system incorporated algorithms to achieves promising and accurate results.

References

[1] Hiroki Tanioka, “A Fast Content-Based Image Retrieval Method Using Deep Visual Features: A survey,” Pattern Recognition, 17(1), 2019. [2] Eksan Firkat, Abdusalan Dawat, Palidan Tuerxan, Askar Handulla, “Bilingual Printed Document Image Retrieval Based On SIFT Feature: where do we go from here”, IEEE Transactions On Knowledge and Data Engineering, 4(5), Oct. 2019. [3] Nabin Sharma, Ranju Mandal, Rabi Sharma, Umapada Pal, Michael Blumenstein,“Signature and Logo Detection using Deep CNN for Document Image Retrieval,” in Storage and Retrieval for Image and Video Databases, San Jose, CA, 2018. [4] Amarnath Gupta and Ramesh Jain, “A Novel Technique For Content Based Image Retrieval Using Color, Texture & Edge Feature , 40(5), 2016. [5] A. Pentland, R. W. Picard, and S. Sclaroff, “HPCIR- Histogram Position Centroid For Image Retrieval,” in Proceedings of the SPIE Conference on Storage and Retrieval of Image and Video Databases II, pages 34--47, 2016. [6] Remco C. Veltkamp, MirelaTanase, “Content-Based Image Retrieval Systems: A Survey,” Technical Report UUCS-2000-34, October 2016. [7] J. R. Smith and S.-F. Chang, “CBDIR: Fast and Effective Content Based Document Information Retrieval System, 4(3): 12-20, 2016. [8] I. Kompatsiaris, E. Triantafylluo, and M. G. Strintzis, “A World Wide Web RegionBased Image Search Engine,” International Conference on Image Analysis and Processing, 2015. [9] Michael J. Swain, Charles Frankel, Vassilis Athitsos, “WebSeer: An Image Search Engine for the World Wide Web,” Technical Report TR96 -14, University of Chicago, July 2015. [10] Sclaroff S., Taycher L. and Cascia M. L, “ImageRover: a content-based image browser for the World Wide Web.,” in Proc. of IEEE Workshop on Content-based Access of Image and Video Libraries

Copyright

Copyright © 2022 V. Sivakumar, AbdurRehman Khadim, Rohan B Patil, Arun Balouria, R. Swathi. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Download Paper

Paper Id : IJRASET45973

Publish Date : 2022-07-25

ISSN : 2321-9653

Publisher Name : IJRASET

DOI Link : Click Here

Submit Paper Online

Submit Paper Online