Ijraset Journal For Research in Applied Science and Engineering Technology


Night Guardian: Empowering Women’s Safety with Hand Sign – Based Communication and AI

Authors: Padmini D, Mohanapriya S, Mr. A. G. Ignatius

DOI Link: https://doi.org/10.22214/ijraset.2024.58340


Abstract

In an era where technology continually shapes the way we interact with the world, there is a pressing need for innovative solutions that enhance women's safety. Ensuring women's safety, especially at night, is crucial because of the particular challenges and risks women may face during nighttime hours. Our project addresses critical issues related to women's safety, such as harassment, assault, and emergencies, particularly in public spaces. We leverage technologies such as MediaPipe and machine learning algorithms to create an effective and responsive safety solution. The project trains models with several machine learning algorithms: random forest, support vector machine, k-nearest neighbor, and a hybrid model. The models are tested and the best one is selected using key performance metrics such as F1 score, precision, and recall. By integrating technology and machine learning into the project, we strive to provide women with tools and resources that enhance their safety, create awareness, and contribute to the development of safer environments. MediaPipe is used for hand tracking and key point detection, which improves the accuracy of sign language recognition. Using OpenCV, the system captures video through a webcam, allowing real-time comparison with the trained model. Predictions are made based on the recognized sign language gestures. To enhance the system's applicability, a database matching mechanism triggers an alarm and sends an email with a screenshot when a recognized sign matches a predefined gesture. This feature ensures timely notifications, allowing authorities to take the necessary action in response to identified incidents. The real-time nature of the system and its ability to recognize and respond to specific gestures make it a valuable tool in addressing safety concerns for women.


I. INTRODUCTION

In many countries around the world, there is growing concern regarding women's safety. The rate of crimes against women is rising alarmingly. Women face challenges in various situations, and their safety has become a major issue. Harassment of women is the fourth most common crime in India. Most attacks on women occur while they are travelling alone or are in a remote location where they cannot seek aid. Lack of awareness about self-defense and the inefficiency of currently available self-protection weapons are the main reasons why women cannot protect themselves at critical times. Women still face incidents such as molestation, rape, and acid attacks. Many applications and devices are already on the market, but they are ineffective because they must be operated manually, and a woman in danger is often under too much stress to operate them. Gesture interaction, by contrast, is a non-contact method that is more secure, friendly, and easy for people to accept.

Gesture is one of the most widely used means of communication. Through long-term social practice, gestures have been endowed with a variety of specific meanings. At present, gesture has become one of the most powerful tools for expressing human sentiment, intention, or attitude. Sign language mainly uses the hands to convey information. The main contribution of this work is a human-robot interaction (HRI) system that integrates deaf and speech-impaired people and enables social robots to communicate with them efficiently and in a friendly way. Most importantly, the proposed system can be applied in other interaction scenarios, such as between robots and autistic children.

Sign language recognition is the process of recognizing and interpreting the hand gestures, movements, and poses used in sign languages, which deaf and hard-of-hearing individuals use to communicate with each other and with the hearing community.

The increased visibility of women is also accompanied by an increase in the level of crime against women. At the same time, there is the curious case of the declining labour force participation of women in India. Among the factors responsible for this, an important one may be the growing lack of safety in public spaces and the rise in crimes against women. There has never been a greater need to discuss the issue of women's safety in public places.

II. LITERATURE REVIEW

The literature review in the mentioned paper critically examines the prevalent issues of abuse and harassment faced by women and girls in India, emphasizing the distinct challenges encountered on various social media platforms. The authors highlight the limited safety measures and protection mechanisms available for women in online spaces compared to real-life scenarios. The focus is primarily on platforms such as Twitter, Facebook, and Instagram, where abusive content, including videos, images, and written text, poses a threat to women's safety. The review underlines the need for enhanced safety measures and stricter actions against those who misuse these platforms to harass women. The paper also acknowledges the significance of social media as a medium for women to express their views, share experiences, and seek support, shedding light on the complex dynamics of women's safety in the digital realm, particularly in different regions of India.

The literature surrounding women's safety devices highlights the urgent need for innovative solutions to address the pervasive issue of sexual harassment and abuse faced by women globally. Instances of such incidents occurring in public spaces, irrespective of urban or rural settings, significantly curtail women's freedom, hindering their ability to engage in education, employment, and public activities. The existing literature emphasizes the multifaceted impact of these safety concerns, including restricted access to essential services and diminished enjoyment of cultural and recreational pursuits. Against this backdrop, the paper introduces a novel contribution in the form of a Wearable Safety Device utilizing the ESP32 MCU.

This device is designed to empower women by enabling them to transmit their location details to trusted contacts in emergency situations. Additionally, the device goes beyond safety functionalities, incorporating health monitoring features that transform it into a versatile fitness band. The literature review underscores the ongoing discourse on women's safety concerns and positions the proposed wearable device as a promising technological intervention to enhance women's security and well-being.

The paper "Brain Tumor Detection using Deep Learning and Image Processing" by A. S. Methil, presented at the 2021 ICAIS conference in Coimbatore, India, addresses the challenging task of brain tumor detection in medical image processing.

The author highlights the difficulty of this task due to the diverse shapes and textures of brain tumors, arising from different types of cells with implications for the nature, severity, and rarity of the tumor. The paper emphasizes the complexities introduced by issues commonly found in digital images, such as illumination problems and overlapping image intensities between tumor and non-tumor images.

To address these challenges, the author proposes a novel method involving various image preprocessing techniques, including histogram equalization and opening, followed by the implementation of a Convolutional Neural Network (CNN) for classification.

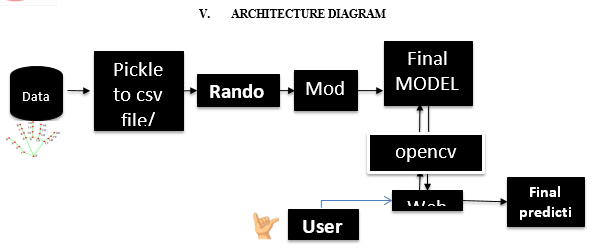

III. PROPOSED METHODOLOGY

- Use hand key points as input features for a machine learning model.

- Train the machine learning model using different algorithms such as random forest, support vector machine, hybrid stacking model, and k-nearest neighbor.

- Evaluate the performance of the trained models using metrics like F1 score, precision, and recall.

- Select the best-performing model based on the evaluation metrics.

- Use the selected model to predict the sign language gestures in real-time by comparing the hand key points detected by MediaPipe with the trained model.

- Use OpenCV to capture video input from a webcam and predict the corresponding sign, so that authorities can respond to the alert email they receive.
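The alert step at the end of this pipeline can be sketched as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the gesture names in `ALERT_SIGNS`, the helper names `should_alert` and `build_alert_email`, and the example addresses are all hypothetical, and the message is only built, not sent (sending would need an SMTP server, for example through `smtplib`).

```python
from email.message import EmailMessage

# Hypothetical set of predefined gestures that should raise an alert.
ALERT_SIGNS = {"help", "danger"}


def should_alert(predicted_sign, alert_signs=ALERT_SIGNS):
    """Return True when the predicted sign matches a predefined gesture."""
    return predicted_sign.lower() in alert_signs


def build_alert_email(predicted_sign, screenshot_png, sender, recipient):
    """Build (but do not send) an alert email with the screenshot attached."""
    msg = EmailMessage()
    msg["Subject"] = f"Safety alert: '{predicted_sign}' gesture detected"
    msg["From"] = sender
    msg["To"] = recipient
    msg.set_content(
        "A predefined distress gesture was recognized. "
        "See the attached screenshot."
    )
    # Attach the PNG-encoded frame captured at the moment of detection.
    msg.add_attachment(
        screenshot_png, maintype="image", subtype="png",
        filename="screenshot.png",
    )
    return msg


# Placeholder bytes stand in for a real PNG-encoded webcam frame.
fake_png = b"\x89PNG\r\n\x1a\n"
if should_alert("Help"):
    alert = build_alert_email(
        "Help", fake_png, "camera@example.org", "authority@example.org"
    )
```

Keeping the matching rule and the message construction in separate functions makes it possible to test the alert logic without a camera or a mail server.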

A. Random Forest

The algorithm constructs a collection of decision trees and combines their predictions to make a final prediction. Random Forest is a popular choice for sign language recognition due to its ability to handle complex, non-linear relationships between features and outputs. One advantage of Random Forest for sign language recognition is its ability to handle high-dimensional data, such as sign language data that includes multiple modalities.

Hyperparameters to Increase the Predictive Power

n_estimators: Number of trees the algorithm builds before averaging the predictions.

max_features: Maximum number of features random forest considers when splitting a node.

min_samples_leaf: Determines the minimum number of samples required to be at a leaf node.

criterion: How to split the node in each tree? (Entropy/Gini impurity/Log Loss)

max_leaf_nodes: Maximum leaf nodes in each tree

Hyperparameters to Increase the Speed

n_jobs: Tells the engine how many processors it is allowed to use. If the value is 1, it can use only one processor; if the value is -1, it uses all available processors.

random_state: Controls the randomness of the sampling. The model always produces the same results if it is given a fixed random_state value along with the same hyperparameters and training data.

oob_score: OOB stands for out-of-bag. It is a validation method built into random forest. Because each tree is trained on a bootstrap sample, roughly one-third of the training samples are left out of that tree; these out-of-bag samples are used to evaluate its performance instead of training it.

Important Features of Random Forest

- Diversity: Not all attributes/variables/features are considered while making an individual tree; each tree is different.

- Less affected by the curse of dimensionality: Since each tree does not consider all the features, the feature space is reduced.

- Parallelization: Each tree is created independently out of different data and attributes. This means we can fully use the CPU to build random forests.

- Train-Test Split: In a random forest, the out-of-bag samples act as a built-in validation set, since roughly one-third of the data is never seen by any given decision tree. A separate held-out test set is still useful when comparing the forest with other models.

- Stability: Stability arises because the result is based on majority voting/ averaging.
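The out-of-bag share mentioned above can be checked numerically. The sketch below assumes the usual bootstrap scheme, in which each tree draws as many samples as the training set contains, with replacement; the function names are illustrative only. Under that assumption, the chance that a given sample is never drawn is (1 - 1/n)^n, which approaches 1/e (about 0.368) as n grows.

```python
import math
import random


def expected_oob_fraction(n_samples):
    """Probability that one sample is never picked in n draws with replacement."""
    return (1 - 1 / n_samples) ** n_samples


def simulated_oob_fraction(n_samples, seed=0):
    """Draw one bootstrap sample and measure the share of indices left out."""
    rng = random.Random(seed)
    drawn = {rng.randrange(n_samples) for _ in range(n_samples)}
    return 1 - len(drawn) / n_samples


print(round(expected_oob_fraction(1000), 3))  # 0.368
print(round(1 / math.e, 3))                   # 0.368
print(simulated_oob_fraction(10000))          # close to 0.368; varies with the seed
```

The result is close to the one-third figure commonly quoted for out-of-bag samples.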

IV. MODULE DESCRIPTION

A. Module-1

Data Collection

- Hand gesture images.

- Sign language recognition module: A module that recognizes the sign language gestures performed by the user and converts them into numerical values.
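One way to turn detected hand key points into numerical values is sketched below. It relies on the fact that MediaPipe Hands reports 21 landmarks per hand with the wrist at index 0. The normalization itself (wrist-relative coordinates scaled to the range [-1, 1]) and the function name `landmarks_to_features` are assumptions made for illustration; the exact encoding used in the project is not specified.

```python
def landmarks_to_features(landmarks):
    """Flatten 21 (x, y) hand landmarks into a 42-value feature vector.

    The landmarks are translated so that the wrist (index 0) becomes the
    origin, then scaled so that the largest absolute coordinate equals 1.
    """
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")

    wrist_x, wrist_y = landmarks[0]
    # Translate: make every point relative to the wrist.
    relative = [(x - wrist_x, y - wrist_y) for x, y in landmarks]
    # Scale: divide by the largest absolute offset (fall back to 1.0 if all zero).
    scale = max(max(abs(dx), abs(dy)) for dx, dy in relative) or 1.0
    return [value / scale for point in relative for value in point]


# Synthetic landmarks standing in for one detected hand.
example_hand = [(0.5 + 0.01 * i, 0.5 - 0.02 * i) for i in range(21)]
features = landmarks_to_features(example_hand)
print(len(features))  # 42
```

Making the features relative to the wrist means the same gesture yields similar values wherever the hand appears in the frame and however close it is to the camera.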

B. Module-2

Preprocessing

- Checking the units of data.

- Assign dependent variable and independent variable.

- Splitting the data into training data and testing data.

- Checking for missing values

C. Module-3

1. Model Training

Four algorithms are used: random forest, support vector machine, k-nearest neighbour, and a hybrid stacking model.

2. Random forest

Random Forest is known for its ability to handle noisy data and its ability to estimate feature importance, making it a popular algorithm for many real-world applications. Random Forest is an ensemble machine learning algorithm that operates by constructing a multitude of decision trees and aggregating their predictions. It is used for both regression and classification problems.

The code creates a Random Forest classifier using the scikit-learn library. It sets the number of trees in the forest to 30 using the "n_estimators" parameter.

The ".fit" method trains the classifier on the training data "X_train" and the corresponding target values "y_train". The ".predict" method is then used to generate predictions for the test data "X_test".

Finally, the code prints the accuracy of the predictions using the "metrics.accuracy_score" function from scikit-learn, which compares the actual target values "y_test" to the predicted values "y_pred". The accuracy score is the proportion of correct predictions in the test data.
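A minimal sketch consistent with this description is given below. It uses synthetic data from `make_classification` in place of the hand key point dataset, which is an assumption; the 42 features (21 landmarks with two coordinates each), the four classes, and the 80/20 split are likewise illustrative choices rather than values taken from the project.

```python
from sklearn import metrics
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the key point dataset (assumption, not the real data).
X, y = make_classification(
    n_samples=600, n_features=42, n_informative=10, n_classes=4,
    random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0, stratify=y
)

# 30 trees, as stated in the text; random_state fixed for repeatable results.
clf = RandomForestClassifier(n_estimators=30, random_state=0)
clf.fit(X_train, y_train)          # train on the training split
y_pred = clf.predict(X_test)       # predict labels for the test split

# Proportion of test samples whose predicted label matches the true label.
accuracy = metrics.accuracy_score(y_test, y_pred)
print("Accuracy:", accuracy)
```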

3. Support Vector Machine

Support Vector Machine (SVM) is a type of machine learning algorithm that can be used for sign language recognition. SVM works by finding a boundary between classes in the feature space that maximizes the margin, which is the distance between the boundary and the closest data points from each class. The data points closest to the boundary are known as support vectors and have the greatest impact on the boundary. SVM can handle both linear and non-linear decision boundaries, making it a versatile algorithm for sign language recognition.

Additionally, SVM can handle high-dimensional data, which is often the case in sign language recognition where multiple features such as hand shape, orientation, and motion need to be considered.
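The notions of margin and support vectors can be illustrated on a small linear example. The sketch below uses a hypothetical two-dimensional toy dataset and a separating boundary chosen by hand; no SVM is trained here. For a boundary w·x + b = 0 scaled so that the closest points satisfy |w·x + b| = 1, the margin width is 2/‖w‖.

```python
import math


def signed_distance(w, b, x):
    """Signed distance from point x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm


# Hypothetical linearly separable toy data with labels +1 and -1.
points = [((2.0, 2.0), 1), ((3.0, 3.0), 1), ((0.0, 0.0), -1), ((-1.0, 0.0), -1)]

# Hand-picked boundary x1 + x2 = 2, scaled so the closest points give |w.x + b| = 1.
w, b = (0.5, 0.5), -1.0

# Distance of each point from the boundary, positive when correctly classified.
margins = [label * signed_distance(w, b, x) for x, label in points]
smallest = min(margins)

# Support vectors are the points that lie closest to the boundary.
support_vectors = [
    x for (x, _), m in zip(points, margins) if math.isclose(m, smallest)
]
margin_width = 2 * smallest  # equals 2 / ||w|| for this scaling

print(support_vectors)
print(margin_width)
```

Only the two closest points determine the boundary here; moving the other two points farther away would leave the margin unchanged.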

4. K-Nearest Neighbour

K-Nearest Neighbors (KNN) is another machine learning algorithm that can be used for sign language recognition. KNN operates by classifying a data point based on the majority class of its K nearest neighbors in the feature space. The value of K is a hyperparameter that can be tuned for optimal performance.

In sign language recognition, KNN can be used to identify the sign language gesture that is closest to a given test sample based on its features such as hand shape, orientation, and motion.

KNN is a simple and intuitive algorithm that is easy to implement, but it can be computationally expensive for large datasets and may not perform well with high-dimensional data.
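The majority-vote rule can be written in a few lines. This is a minimal sketch using Euclidean distance and a hypothetical two-feature toy dataset; the labels "help" and "ok" and the function name `knn_predict` are illustrative only.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Pair each training point's Euclidean distance to the query with its label.
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    # Keep the labels of the k closest points and return the most common one.
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical toy data: two clusters of feature vectors, one per gesture.
train_points = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1),
                (1.0, 1.0), (0.9, 1.1), (1.1, 0.9)]
train_labels = ["help", "help", "help", "ok", "ok", "ok"]

print(knn_predict(train_points, train_labels, (0.15, 0.15)))  # help
print(knn_predict(train_points, train_labels, (0.95, 1.0)))   # ok
```

Every prediction computes the distance to all training points, which is why the cost grows with the size of the dataset.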

5. Hybrid Model

In stacking, multiple diverse models (base models) are trained independently on the same dataset. Their predictions become features for a higher-level model called the meta-model.

The meta-model is then trained to make the final prediction. Stacking leverages the strengths of different models, resulting in improved overall performance and robustness compared to individual models. It's an ensemble learning technique that combines the predictions of diverse models through a meta-model, enhancing predictive accuracy.
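A stacking ensemble of this kind can be sketched with scikit-learn's `StackingClassifier`. The choice of base models (random forest, SVM, KNN), the logistic regression meta-model, and the synthetic data are all assumptions made for illustration; the composition of the project's hybrid model is not specified.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC

# Synthetic stand-in for the key point dataset (assumption, not the real data).
X, y = make_classification(
    n_samples=400, n_features=42, n_informative=10, n_classes=3,
    random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Base models: each is trained on the same training data.
base_models = [
    ("rf", RandomForestClassifier(n_estimators=30, random_state=0)),
    ("svm", SVC(random_state=0)),
    ("knn", KNeighborsClassifier(n_neighbors=5)),
]

# Meta-model: learns from the base models' cross-validated predictions.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(max_iter=1000),
    cv=5,
)
stack.fit(X_train, y_train)
stack_accuracy = stack.score(X_test, y_test)
print("Stacking accuracy:", stack_accuracy)
```

scikit-learn trains the meta-model on cross-validated predictions of the base models rather than on predictions for data they have already seen, which reduces the risk of the meta-model overfitting.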

6. Model Testing

Model testing is an essential step in evaluating the performance of machine learning models. In the context of sign language recognition using random forest, k-nearest neighbors (KNN), and support vector machine (SVM), the following steps can be taken for model testing:

Model Evaluation: Evaluate the performance of the models using the testing set. Common performance metrics for classification tasks include accuracy, precision, recall, and F1 score. It is also essential to examine the confusion matrix to identify which classes are often confused by the models.
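The metrics named above follow directly from counts of true positives (TP), false positives (FP), and false negatives (FN) for a given class: precision = TP / (TP + FP), recall = TP / (TP + FN), and F1 is the harmonic mean of the two. The sketch below computes them for one class; the labels and predictions are hypothetical and are not results from the project.

```python
def precision_recall_f1(y_true, y_pred, positive):
    """Precision, recall and F1 for one class treated as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Hypothetical labels for six test samples.
y_true = ["help", "help", "help", "ok", "ok", "stop"]
y_pred = ["help", "help", "ok", "ok", "stop", "stop"]

for sign in ("help", "ok", "stop"):
    print(sign, precision_recall_f1(y_true, y_pred, sign))
```

For the "help" class in this example there are two true positives, no false positives, and one false negative, so precision is 1.0 and recall is 2/3. Averaging the per-class scores gives the macro-averaged metric for a multi-class problem.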

Conclusion

This project represents a significant step forward in leveraging advanced technologies and machine learning to address critical issues related to women's safety, especially during nighttime hours in public spaces. By integrating MediaPipe for hand tracking and key point detection, and by using machine learning algorithms such as random forest, support vector machine, k-nearest neighbor, and a hybrid model, we have created an effective and responsive safety solution. The real-time nature of the system, coupled with its ability to recognize and respond to specific gestures, makes it a valuable tool in addressing safety concerns for women. The incorporation of sign language recognition further enhances the system's applicability, providing an additional layer of safety for women facing communication challenges. Testing and evaluation with key performance metrics such as F1 score, precision, and recall are used to check that the model's performance meets the desired standards. The system's database matching mechanism, which triggers alarms and sends timely email notifications when predefined gestures are recognized, adds an extra layer of security and allows prompt action to be taken.


Copyright

Copyright © 2024 Padmini D, Mohanapriya S, Mr. A. G. Ignatius. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


Paper Id : IJRASET58340

Publish Date : 2024-02-07

ISSN : 2321-9653

Publisher Name : IJRASET
