Ijraset Journal For Research in Applied Science and Engineering Technology

Dementia Classification using Multiple Transfer Learning Models

Authors: Lakshmi R Suresh

DOI Link: https://doi.org/10.22214/ijraset.2022.40056

Abstract

Dementia is an irreversible, progressive neurodegenerative disorder. Mild cognitive impairment (MCI) is the prodromal state of dementia and is further classified into a progressive state (pMCI) and a stable state (sMCI). With the development of deep learning, convolutional neural networks (CNNs) have made great progress in image recognition, using magnetic resonance imaging (MRI) and positron emission tomography (PET) for diagnosis. Rather than training an entire model from scratch, the transfer learning approach fine-tunes pre-trained CNN models to classify MR images into dementia, mild cognitive impairment (MCI), and normal control (NC). The performance of this method was evaluated on the Dementia dataset while varying the learning rate of the model. To demonstrate the transfer learning approach, we use several pre-trained deep learning models, namely GoogLeNet, VGG-16, AlexNet, and ResNet-18, and compare how well they classify dementia. GoogLeNet achieved overall classification accuracies of 99.84% on training and 98.25% on testing, higher than the training and testing accuracies of the other models.

Introduction

I. INTRODUCTION

Dementia is a syndrome – usually of a chronic or progressive nature – in which there is deterioration in cognitive function (i.e. the ability to process thought) beyond what might be expected from normal ageing. It affects memory, thinking, orientation, comprehension, calculation, learning capacity, language, and judgement. Consciousness is not affected. The impairment in cognitive function is commonly accompanied, and occasionally preceded, by deterioration in emotional control, social behaviour, or motivation. Dementia results from a variety of diseases and injuries that primarily or secondarily affect the brain, such as Alzheimer's disease or stroke. Dementia is one of the major causes of disability and dependency among older people worldwide. It can be overwhelming, not only for the people who have it, but also for their carers and families. There is often a lack of awareness and understanding of dementia, resulting in stigmatization and barriers to diagnosis and care. The impact of dementia on carers, family and society at large can be physical, psychological, social and economic.

While no cure exists for the disease yet, there is consensus on the need for, and benefit of, early diagnosis of dementia. Many neurologists and medical researchers devote considerable time to methods for early detection of dementia, and promising results continue to be achieved [4]. Many studies have addressed the diagnosis of dementia from Magnetic Resonance Imaging (MRI) data, which plays a significant role in classifying dementia. Various computer-assisted techniques have been proposed that classify features extracted from the input images. These features are usually extracted from regions of interest (ROI) and volumes of interest (VoI) [5], or are combinations of different extracted features [6]. While most existing work has focused on binary classification, which only separates dementia from NC, proper treatment requires distinguishing dementia, MCI, and NC. MCI is a stage prior to dementia in which patients show mild symptoms of dementia and bear the risk of progressing to dementia [7].

Recently, machine learning techniques, particularly deep learning, have shown great potential in aiding the diagnosis of dementia using MRI scans. Deep learning methods such as CNNs have been shown to outperform existing machine learning methods [8-9]. They have driven massive progress in image processing, mainly due to the availability of large labeled datasets such as ImageNet, which allow models to be learned more accurately. ImageNet offers around 1.2 million natural images in more than 1000 distinct classes. CNNs trained on such images reach high accuracy and also improve medical image categorization. However, training a CNN from scratch has limitations, one of which is the need for a large dataset. An alternative approach called transfer learning overcomes this problem, since it requires far less data and less training time [10, 11]. Transfer learning is a machine learning method in which a pre-trained network is reused as the starting point for a model on a second task.

In this paper, we employ four pre-trained base models to illustrate how transfer learning can effectively classify dementia. The main objective is to show that, instead of training a completely new model from scratch, we can apply transfer learning without any preprocessing of the MR images and still achieve high accuracy. We also compare the performance of different deep learning models, namely GoogLeNet, VGG-16, AlexNet, and ResNet-18, under transfer learning.

II. MATERIALS USED

The primary goal of DEMENTIA has been to test whether serial magnetic resonance imaging (MRI), positron emission tomography (PET), other biological markers, and clinical and neuropsychological assessment can be combined to measure the progression of mild cognitive impairment (MCI) and early dementia. DEMENTIA is the result of the efforts of many investigators, and subjects have been recruited from over 50 sites across the U.S. and Canada.

All subjects were required to be 60 years of age or older. The entire image set was classified subjectively by a neurologist, a radiologist, and a psychiatrist into the categories DEMENTIA, MCI, and NC.

III. CLASSIFICATION USING TRANSFER LEARNING

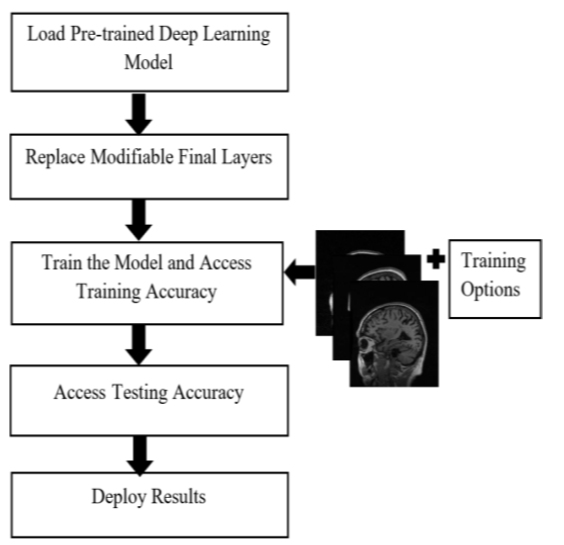

The proposed method exploits transfer learning for 3-way classification of DEMENTIA. The transfer learning architecture is shown in Fig. 1.

The transfer learning approach is helpful when only a small training dataset is available for parameter learning [12]. We take a trained network, e.g., GoogLeNet, as the starting point for learning a new task. GoogLeNet pre-trained on ImageNet is taken as the base model and trained on brain MR images from the ADNI dataset. To apply transfer learning, the fully-connected layers are removed, since their outputs correspond to 1000 categories, and are replaced by a new fully-connected layer followed by a softmax layer and an output layer for classifying 3 classes. We then train the network on the training set of MR images, in addition to specifying the training options. Next, we test the model and obtain its testing accuracy. Finally, we report the results using a confusion matrix.
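The head replacement described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the MATLAB workflow used in the paper: the class `NewHead`, the four-element feature vector, and the weight initialisation are all assumptions made for the example, and the pre-trained layers are represented only by the fixed feature vector they would produce.

```python
import math
import random

def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

class NewHead:
    """Hypothetical replacement head: one fully-connected layer plus softmax."""

    def __init__(self, n_features, n_classes, seed=0):
        rng = random.Random(seed)
        # Small random weights; only this layer would be trained from scratch.
        self.weights = [[rng.gauss(0.0, 0.01) for _ in range(n_features)]
                        for _ in range(n_classes)]
        self.biases = [0.0] * n_classes

    def forward(self, features):
        logits = [sum(w * x for w, x in zip(row, features)) + b
                  for row, b in zip(self.weights, self.biases)]
        return softmax(logits)

CLASSES = ["DEMENTIA", "MCI", "NC"]

# Stand-in for the output of the pre-trained layers (assumed values).
features = [0.5, -1.2, 0.3, 0.8]

head = NewHead(n_features=len(features), n_classes=len(CLASSES))
probs = head.forward(features)
predicted = CLASSES[probs.index(max(probs))]
print(predicted, [round(p, 3) for p in probs])
```

The point of the sketch is the shape change: whatever the pre-trained layers emit, the new layer maps it to exactly three scores, one per class, instead of the original 1000.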

IV. PRE-TRAINED CNN ARCHITECTURE

A. Overview

Deep learning is a subfield of machine learning and a collection of algorithms that are inspired by the structure of the human brain and try to imitate its function. A CNN is one such deep learning algorithm, in which the transformations are performed using the convolution operation. A typical CNN comprises three basic layers: a convolutional layer, a pooling layer, and a fully-connected layer. Activation, normalization, and dropout layers also play a significant role in the deep architecture of a CNN model. The convolutional layer is the core building block of a CNN and is responsible for most of the computation. It extracts the features from the input image that is to be classified [13]. Its parameters consist of a set of kernels, or learnable filters. It performs the convolution (filtering) operation over the input and forwards the response to the next layer as a feature map [14]. The pooling layer reduces the spatial size of the representation and the amount of computation [15]. It performs the pooling operation on each of the sliced inputs, reducing the computational cost for the next convolutional layer. Together, the convolutional and pooling layers extract features from the input images and reduce their size. The fully-connected layer takes the output feature maps of the final convolutional or pooling layer and uses them to classify the image into a label.
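As a small worked illustration of the convolution and pooling operations just described, the following plain-Python sketch applies one hand-picked 2×2 kernel to a 5×5 image and then max-pools the result. The image, the kernel values, and the function names are assumptions chosen for the example; real CNN layers learn their kernels and operate on many channels at once.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 sliding-window filter as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def relu(fmap):
    """Rectified linear unit applied element-wise."""
    return [[max(0, v) for v in row] for row in fmap]

def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    return [[max(fmap[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]

# Toy 5x5 image with a bright vertical stripe in columns 2 and 3.
image = [
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
]
kernel = [[-1, 1], [-1, 1]]  # responds to a dark-to-bright vertical edge

feature_map = relu(conv2d(image, kernel))  # 4x4 feature map
pooled = max_pool2d(feature_map)           # 2x2 after pooling

print(feature_map[0])  # [0, 2, 0, 0]
print(pooled)          # [[2, 0], [2, 0]]
```

The feature map is non-zero only where the kernel's pattern (a left edge of the stripe) occurs, and pooling halves each spatial dimension while keeping that response.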

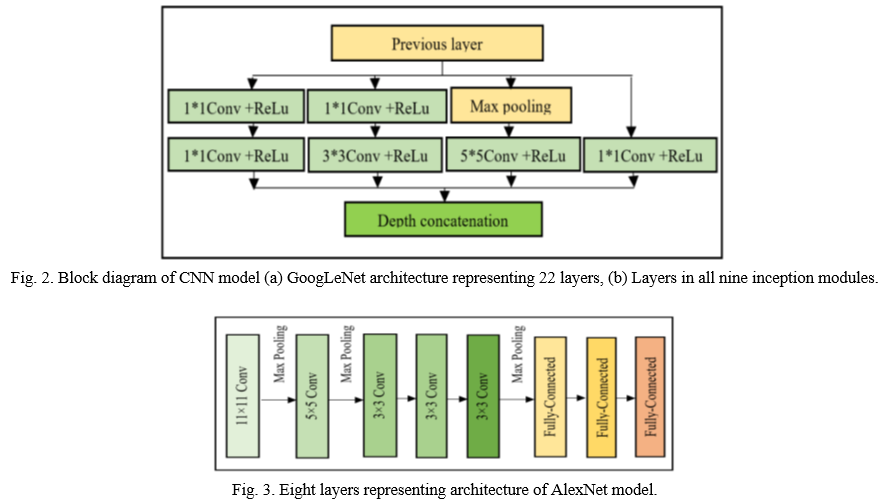

1. GoogLeNet: GoogLeNet has been trained on over a million images and can classify images into 1000 object categories. It was introduced by a Google team and was the winner of ILSVRC-2014. The network is designed with computational efficiency and practicality in mind. It is 22 layers deep, as shown in Fig. 2(a). All the convolutions, including those inside the inception modules, use the rectified linear unit (ReLU) as the activation function. The size of the receptive field of the network is 224×224 in the RGB color space with zero mean. Hence all the images were cropped and converted to 224×224×3, which is a valid input size for the model. Its distinguishing feature is the 9 Inception modules used in the GoogLeNet model [16]. Fig. 2(b) shows the detailed structure of an Inception layer.

2. AlexNet: The original AlexNet architecture was trained on the ImageNet dataset [17], comprising images belonging to 1000 object classes. It was designed by Alex Krizhevsky and was the winner of ILSVRC-2012 [18]. The architecture of AlexNet is depicted in Fig. 3. It contains 8 layers: the first 5 are convolutional, followed by 3 fully-connected layers. AlexNet accepts input images of size 227×227 in the RGB color space. Therefore all the images were resized and converted to fit the network's input requirements for transfer learning.

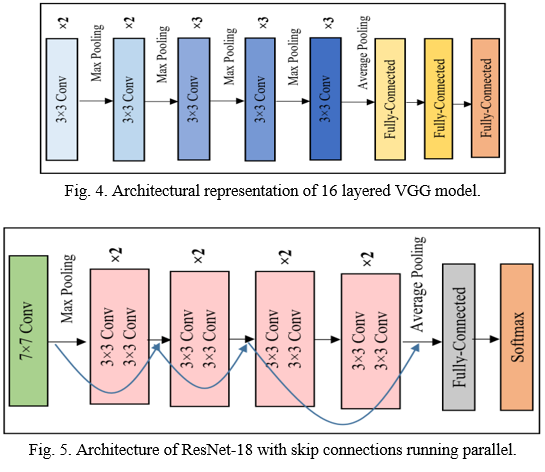

3. VGG-16: VGG-16 is a CNN model proposed by K. Simonyan and A. Zisserman in 2014 [19]. As the name indicates, the VGG-16 model contains 16 weight layers in total. Since VGG-16 was trained on RGB, i.e., 3-channel images, it accepts an input only if it has exactly 3 channels. Thus, the input to the first convolutional layer is a fixed-size 224×224 RGB image, obtained by cropping and converting the image.

4. ResNet-18: Deep residual networks, ResNet for short, are built on the core idea of shortcut connections, also known as skip connections. These connections provide an alternate pathway for data and gradients to flow, which makes training deep networks possible. The simplest model is ResNet-18, which has 18 layers. The input image size of this model is 224×224×3. It was developed by Kaiming He et al. [20] and was the winner of ILSVRC-2015. The detailed architecture of ResNet-18, including how the skip connections run in parallel, is shown in Fig. 5.
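The skip connection mentioned for ResNet-18 can be illustrated with a deliberately simplified sketch. Real residual blocks use convolutions and batch normalization; here plain matrix multiplications stand in for them, and the function names and weight values are assumptions made for the example. What it shows is only the defining step: the block's input is added back to the output of its layers.

```python
def relu(vec):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in vec]

def linear(vec, weights):
    """Multiply a vector by a square weight matrix given as a list of rows."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weights]

def residual_block(x, w1, w2):
    """y = ReLU(F(x) + x), where F is two linear maps with a ReLU in between."""
    fx = linear(relu(linear(x, w1)), w2)
    # Adding x back is the skip (shortcut) connection.
    return relu([f + s for f, s in zip(fx, x)])

x = [1.0, 2.0]
zeros = [[0.0, 0.0], [0.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

# With all-zero weights the layers contribute nothing, yet the input still
# passes through unchanged via the skip connection.
print(residual_block(x, zeros, zeros))        # [1.0, 2.0]
print(residual_block(x, identity, identity))  # [2.0, 4.0]
```

The first call shows why the shortcut helps training: even when the stacked layers output zero, the signal (and, in backpropagation, the gradient) has a direct path through the block.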

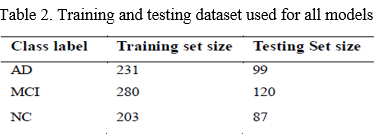

B. Creating Training and Testing Datasets

The DEMENTIA dataset, 1020 images in total, is shuffled and split into training and test sets in the ratio 70:30. The training and testing sets used for 3-way classification (DEMENTIA vs. MCI vs. NC) are the same for all four networks and are shown in Table 2.
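A shuffle followed by a 70:30 split can be sketched as below. The image identifiers, the fixed seed, and the function name `shuffle_split` are placeholders for illustration; the text does not state whether the split was stratified by class, so this sketch performs a plain random split.

```python
import random

def shuffle_split(items, train_fraction=0.7, seed=42):
    """Shuffle a copy of the items and cut it into train and test parts."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# Placeholder identifiers standing in for the 1020 MR images.
image_ids = [f"img_{i:04d}" for i in range(1020)]
train_ids, test_ids = shuffle_split(image_ids)

print(len(train_ids), len(test_ids))  # 714 306
```

Using the same seed for every network is one simple way to guarantee that all models see identical training and testing sets.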

We evaluated the performance while varying the learning rate, which controls how much the model weights change in response to the estimated error each time they are updated. Choosing the learning rate is challenging: a value that is too small may result in a long training process, whereas a value that is too large may result in learning a sub-optimal set of weights too fast or in an unstable training process. The learning rate also affects the accuracy of the model.
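The trade-off described above can be seen on a toy problem. The sketch below minimizes f(w) = w² with plain gradient descent at three learning rates; the function, the rates, and the step count are assumptions chosen to make the effect visible and are unrelated to the values used for the CNNs in this paper.

```python
def gradient_descent(learning_rate, steps=20, start=1.0):
    """Minimize f(w) = w**2, whose gradient is 2*w, and return the final w."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2 * w
    return w

small = gradient_descent(0.001)   # too small: barely moves from the start
moderate = gradient_descent(0.1)  # reasonable: approaches the minimum at 0
large = gradient_descent(1.1)     # too large: overshoots and diverges

print(f"small={small:.4f} moderate={moderate:.4f} large={large:.4f}")
```

After the same number of steps, the small rate is still close to the starting point, the moderate rate is near the minimum, and the large rate has moved further away than where it began.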

V. RESULTS AND DISCUSSION

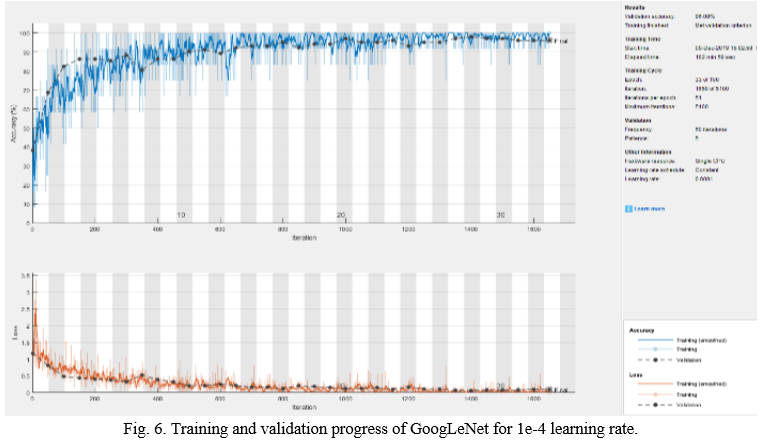

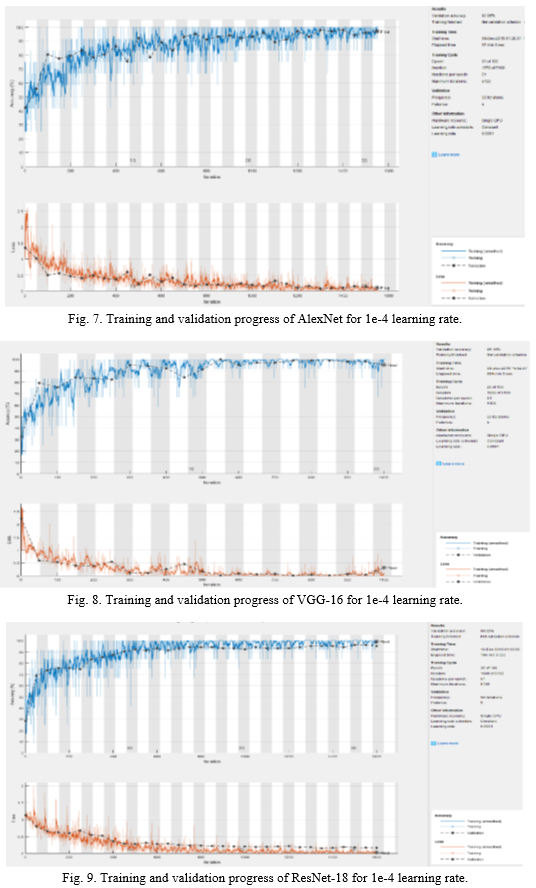

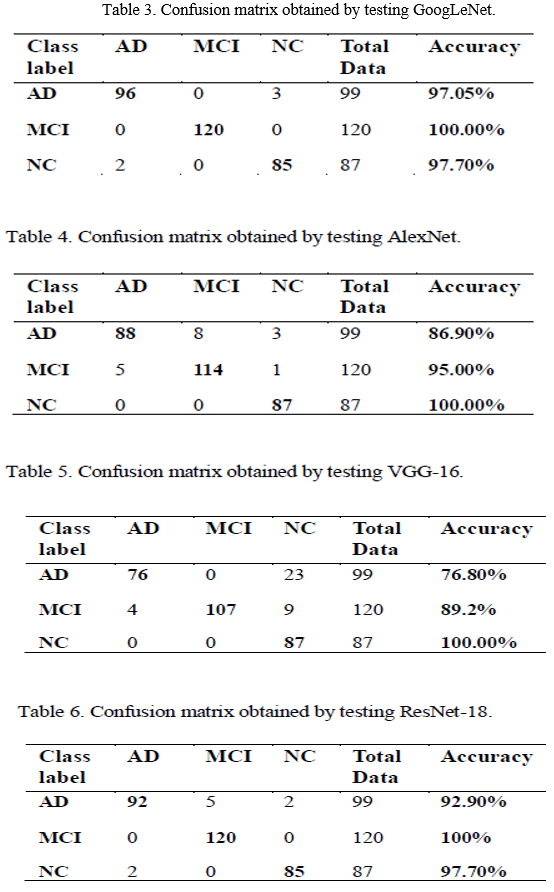

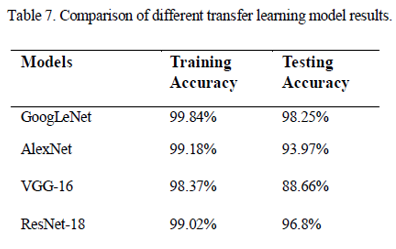

The classification model was built using MATLAB 2018, which provides built-in support for transfer learning. The training options were: a maximum of 100 epochs with a validation patience of 5 as the early stopping parameter, a learning rate of 0.0001, stochastic gradient descent with momentum (SGDM) as the optimizer to minimize the loss function and adjust the weights and biases, and a mini-batch size of 12. These options were used to train on 621 images. One epoch is complete when the entire dataset has been passed forward and backward through the neural network; in our case, one epoch took 51 iterations. Validation patience stops training when the model shows no further improvement on the validation set for the given number of checks, which helps prevent over-fitting. Since accuracy is the primary evaluation metric, we analyzed training and testing accuracy while varying the learning rate from 1e-2 to 1e-5 to see how the learning rate affects the accuracy and loss of the model. The model produced its best results at a learning rate of 1e-4, so we evaluated all models with the learning rate fixed at 1e-4. The accuracy was obtained from a confusion matrix, which describes the performance of a classification model. The confusion matrices of GoogLeNet, AlexNet, VGG-16, and ResNet-18 are shown in Tables 3 to 6. The training and validation progress of all the models used in this paper is shown in Figs. 6-9.
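Two of the quantities above lend themselves to a short sketch: the number of iterations per epoch and the early stopping rule. The code below is plain Python rather than MATLAB. It assumes that an incomplete final mini-batch is dropped, which is consistent with the reported 51 iterations for 621 images and a batch size of 12, and it assumes validation is checked once per epoch. The validation losses are invented for illustration.

```python
def iterations_per_epoch(n_images, batch_size):
    """Number of full mini-batches per epoch (an incomplete last batch is dropped)."""
    return n_images // batch_size

def stopping_epoch(val_losses, max_epochs=100, patience=5):
    """Return the 1-based epoch at which training stops.

    Training stops early once the validation loss has failed to improve on
    its best value for `patience` consecutive checks.
    """
    best = float("inf")
    stale = 0
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return min(len(val_losses), max_epochs)

print(iterations_per_epoch(621, 12))  # 51

# Hypothetical validation losses: the best value is reached at epoch 3.
losses = [0.9, 0.7, 0.6, 0.61, 0.62, 0.6, 0.63, 0.64, 0.5]
print(stopping_epoch(losses))  # 8
```

In the example, epochs 4 to 8 bring no improvement over the loss at epoch 3, so training halts at epoch 8 and never reaches the lower loss that would have followed. This is the usual price of a finite patience value.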

Figs. 6-9 show how each model progresses during training and how its accuracy and loss change. Although every model was configured for 100 epochs, each trained for fewer epochs because early stopping halted training to prevent overfitting. In Fig. 6, the training loss of GoogLeNet steadily declined and almost reached zero while the accuracy of the model rose. A possible reason is the inception modules used in GoogLeNet, which increase the depth of the model and learn more features from the image. The other models also reduced the loss while increasing accuracy. ResNet-18 in Fig. 9 also performed well, reducing the loss to almost zero. It was more efficient than AlexNet and VGG-16, owing to the skip connections used in the network.

In Table 3, GoogLeNet classified 97.05% of DEMENTIA, 100% of MCI, and 97.70% of NC images correctly, giving an overall testing accuracy of 98.25%. The other models, AlexNet, VGG-16, and ResNet-18, also achieved good accuracies, as shown in Tables 4, 5, and 6 respectively. Among these three, ResNet-18 outperformed the other two, with 92.90% of DEMENTIA, 100% of MCI, and 97.70% of NC correctly classified. However, GoogLeNet surpassed all other models with the highest testing accuracy, making it the best model for classifying DEMENTIA.
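Per-class percentages and an overall accuracy of the kind quoted above are both read off a confusion matrix. The sketch below shows the arithmetic on a small matrix whose counts are entirely hypothetical; they are not the values from Tables 3 to 6, and the function names are chosen for the example.

```python
def per_class_recall(matrix):
    """Fraction of each true class (row) that was predicted correctly."""
    return [row[i] / sum(row) for i, row in enumerate(matrix)]

def overall_accuracy(matrix):
    """Correct predictions (the diagonal) divided by all predictions."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total

CLASSES = ["DEMENTIA", "MCI", "NC"]

# Hypothetical counts: rows are true classes, columns are predicted classes.
confusion = [
    [18, 1, 1],   # true DEMENTIA
    [0, 20, 0],   # true MCI
    [1, 0, 19],   # true NC
]

for name, recall in zip(CLASSES, per_class_recall(confusion)):
    print(f"{name}: {recall:.2%} correctly classified")
print(f"overall accuracy: {overall_accuracy(confusion):.2%}")
```

Note that the overall accuracy is a count-weighted figure, so it equals the plain average of the per-class percentages only when every class has the same number of test images, as in this toy matrix.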

From Table 7, we can conclude that GoogLeNet is a powerful deep learning model for medical images, specifically for MR image classification. GoogLeNet produced the highest training and testing accuracies among the models. The second-highest accuracies were obtained by ResNet-18, which outperformed AlexNet and VGG-16.

Conclusion

The detection of dementia remains a difficult problem, yet it is important for early diagnosis and proper treatment. Currently, there is no treatment for DEMENTIA; hence, early detection and classification is a very important task in treating the patient. While many classification algorithms are in use today, classification using deep learning has captivated researchers due to its flexibility and capacity to produce optimal results. However, in the medical field, acquiring enough data (images) is quite difficult, and training a model from scratch is time consuming. To overcome these problems, transfer learning is used, which requires only minimal data and takes only a few hours to classify MR images. In this paper, we applied transfer learning with different deep learning models, namely GoogLeNet, AlexNet, VGG-16, and ResNet-18, as the base model for accurately classifying MR images into three classes: DEMENTIA, MCI, and NC. We analyzed these models while varying the learning rate, and the performance obtained at 1e-4 exceeded that of the other settings. The models were well trained on our datasets, and all of them were efficient in classification. Among all the models, GoogLeNet produced the highest training and testing accuracies, 99.84% and 98.25% respectively. Therefore, transfer learning using GoogLeNet is a successful approach for classifying MR images into DEMENTIA, MCI, and NC.

Acknowledgement

This research was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF), funded by the Ministry of Education (2018R1D1A1A09083734).

References

[1] F. Giannini and G. Leuzzi, Nonlinear Microwave Circuit Design. New York, NY: John Wiley & Sons Inc., 2004.

[2] V.L. Villemagne, S. Burnham, P. Bourgeat and B. Brown, “Amyloid β deposition, neurodegeneration, and cognitive decline in sporadic Alzheimer's disease: a prospective cohort study,” The Lancet Neurology, vol. 12, no. 4, pp. 357-367, Apr. 2013.

[3] S. Belleville, C. Fouquet, S. Duchesne and D.L. Collins, “Detecting early preclinical Dementia via cognition, neuropsychiatry, and neuroimaging: qualitative review and recommendations for testing,” Journal of Alzheimer’s Disease, vol. 42, no. 4, pp. 375–382, Jan. 2014.

[4] J. Gaugler, B. James, T. Johnson, A. Marin and J. Weuve, “2019 Alzheimer's disease facts and figures,” Alzheimer's & Dementia, vol. 15, no. 3, pp. 321-87, Mar. 2019.

[5] M. Tabaton, P. Odetti, S. Cammarata and R. Borghi, “Artificial neural networks identify the predictive values of risk factors on the conversion of amnestic mild cognitive impairment,” Journal of Alzheimer’s Disease, vol. 19, no. 3, pp. 1035–1040, Jan. 2010.

[6] J. B. Toledo, M. Bjerke, K. W. Chen and M. Rozycki, “Memory, executive, and multidomain subtle cognitive impairment: Clinical and biomarker findings,” Neurology, vol. 85, no. 2, pp. 144–153, Jul. 2015.

[7] N. Madusanka, H. K. Choi, J. H. So and B. K. Choi, “Alzheimer's Disease Classification Based on Multi-feature Fusion,” Current Medical Imaging, vol. 15, no. 2, pp. 161-9, Feb. 2019.

[8] S. Gauthier, B. Reisberg, M. Zaudig, R. C. Petersen, K. Ritchie, K. Broich, et al., “Mild cognitive impairment,” The Lancet, vol. 367, no. 9518, pp. 1262-70, Apr. 2006.

[9] Y. LeCun, Y. Bengio and G. Hinton, “Deep learning,” Nature, vol. 521, no. 7553, pp. 436-444, May 2015.

[10] A. Ortiz, J. Munilla, J. M. Gorriz, and J. Ramirez, “Ensembles of deep learning architectures for the early diagnosis of the Alzheimer’s disease,” International Journal of Neural Systems, vol. 26, no. 07, pp. 1650025, Nov. 2016.

[11] H. C. Shin, H. R. Roth, M. Gao, “Deep Convolutional Neural Networks for Computer-Aided Detection: CNN Architectures, Dataset Characteristics and Transfer Learning,” IEEE Transactions on Medical Imaging, vol. 35, no. 5, pp. 1285-1298, Feb. 2016.

[12] K.H. Jin, M.T. McCann, E. Froustey, “Deep Convolutional Neural Network for Inverse Problems in Imaging,” IEEE Transactions on Image Processing, vol. 26, no. 9, pp. 4509-4522, Jun. 2017.

[13] S. J. Pan and Q. Yang, “A survey on transfer learning,” IEEE Transactions on Knowledge and Data Engineering, vol. 22, no. 10, pp. 1345-1359, Oct. 2009.

[14] M. Xin and Y. Wang, “Research on image classification model based on deep convolution neural network,” EURASIP Journal on Image and Video Processing, no. 1, pp. 40, Dec. 2019.

[15] J. Fang, Y. Zhou, Y. Yu, and S. Du, “Fine-grained vehicle model recognition using a coarse-to-fine convolutional neural network architecture,” IEEE Transactions on Intelligent Transportation Systems, vol. 18, no. 7, pp. 1782-1792, Nov. 2016.

[16] J. Bouvrie, “Notes on convolutional neural networks,” In Practice, 2006.

[17] C. Szegedy, L. Wei, J. Yangqing, S. Pierre, “Going deeper with convolutions,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1-9, 2015.

[18] J. Deng, W. Dong, R. Socher, L. J. Li, K. Li, and F. F. Li, “ImageNet: A large-scale hierarchical image database,” in 2009 IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255, Jun. 2009.

[19] A. Krizhevsky, I. Sutskever and G. E. Hinton, “Imagenet classification with deep convolutional neural networks,” in Advances in Neural Information Processing Systems, pp. 1097-1105, 2012.

[20] K. Simonyan and A. Zisserman, “Very deep convolutional networks for large-scale image recognition,” arXiv preprint arXiv:1409.1556, Sep. 2014.

[21] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, pp. 770-778, 2016.

Copyright

Copyright © 2022 Lakshmi R Suresh. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET40056

Publish Date : 2022-01-23

ISSN : 2321-9653

Publisher Name : IJRASET
