Ijraset Journal For Research in Applied Science and Engineering Technology

Prediction of Alzheimer's Disease Using CNN

Authors: Kavya M K, Geetha M

DOI Link: https://doi.org/10.22214/ijraset.2022.45357

Abstract

Alzheimer’s disease is an incurable, progressive neurological brain disorder. Early detection of Alzheimer’s disease can help with proper treatment and prevent brain tissue damage. Researchers have applied several statistical and machine learning models to Alzheimer’s disease diagnosis. Analysing magnetic resonance imaging (MRI) is a common practice for Alzheimer’s disease diagnosis in clinical research. Detection of Alzheimer’s disease is challenging because MRI data from Alzheimer’s patients closely resembles MRI data from healthy older people. Recently, advanced deep learning techniques have demonstrated human-level performance in numerous fields, including medical image analysis. We propose a deep convolutional neural network for Alzheimer’s disease diagnosis using brain MRI data analysis. While most existing approaches perform binary classification, our model can identify different stages of Alzheimer’s disease and obtains superior performance for early-stage diagnosis.

I. INTRODUCTION

Alzheimer’s disease (AD) is the most prevalent type of dementia. In developed countries, the prevalence of Alzheimer’s disease is estimated to be around 5% after 65 years of age and a staggering 30% after 85 years of age. It is estimated that by 2050, around 0.64 billion people will be diagnosed with Alzheimer’s disease. Alzheimer’s disease destroys brain cells, causing people to lose their memory, mental functions and ability to continue daily activities. Initially, Alzheimer’s disease affects the part of the brain that controls language and memory. As a result, Alzheimer’s disease patients suffer from memory loss, confusion and difficulty in speaking, reading or writing. They often forget about their life and may not recognize their family members. They struggle to perform daily activities such as combing their hair or brushing their teeth. All of this can make Alzheimer’s disease patients anxious or aggressive, or cause them to wander away from home. Ultimately, Alzheimer’s disease destroys the part of the brain that controls breathing and heart function, which leads to death.

There are three major stages in Alzheimer’s disease: very mild, mild and moderate. Detection of Alzheimer’s disease (AD) is still not accurate until a patient reaches the moderate stage. Proper medical assessment of Alzheimer’s disease requires several things, such as physical and neurobiological examinations, the Mini-Mental State Examination (MMSE) and the patient’s detailed history.

Recently, physicians have begun using brain MRI for Alzheimer’s disease diagnosis. Alzheimer’s disease shrinks the hippocampus and cerebral cortex of the brain and enlarges the ventricles. The hippocampus is the part of the brain responsible for episodic and spatial memory. It also works as a relay structure between the body and the brain. The reduction in the hippocampus causes cell loss and damage, specifically to synapses and neuron ends, so neurons can no longer communicate via synapses. As a result, brain regions related to remembering (short-term memory), thinking, planning and judgment are affected.

For accurate disease diagnosis, researchers have developed several computer-aided diagnostic systems.

Rule-based expert systems were developed from the 1970s to the 1990s, and supervised models from the 1990s onward. Supervised systems are trained on feature vectors extracted from medical image data. Extracting those features requires human experts and often takes a lot of time, money and effort. With the advancement of deep learning models, features can now be extracted directly from the images without the involvement of human experts.

Researchers are therefore focusing on developing deep learning models for accurate disease diagnosis. Deep learning technologies have achieved major success in medical image analysis tasks across modalities such as MRI, microscopy, CT, ultrasound, X-ray and mammography. Deep models have shown prominent results for organ and substructure segmentation and for the detection and classification of several diseases in areas including pathology, brain, lung, abdomen, cardiac, breast, bone and retina.

Machine learning studies that use neuroimaging data to develop diagnostic tools have greatly helped automated brain MRI segmentation and classification. Most of them rely on handcrafted feature generation and extraction from the MRI data. These handcrafted features are then fed into machine learning models for further analysis.

A. Problem Statement

Human experts play a crucial role in these complex multi-step architectures. Moreover, neuroimaging studies often have datasets with limited samples. While image classification datasets used for object detection and classification have millions of images, neuroimaging datasets usually contain only a few hundred. A large dataset is vital for developing robust neural networks. Because large image databases are scarce, it is important to develop models that can learn useful features from a small dataset.

B. Objectives

The aim of this work is to develop a deep convolutional neural network that learns features directly from the input MRI and eliminates the need for handcrafted feature generation. The objectives of the proposed system are therefore as follows:

- To develop a deep convolutional neural network that can identify Alzheimer’s disease and classify the current disease stage.

- To develop a network that learns from a small dataset and still demonstrates superior performance for Alzheimer’s disease diagnosis.

- To develop an efficient approach to training a deep learning model with an imbalanced dataset.

- To carry out a performance analysis of the proposed system for validation.

C. Proposed System

The proposed approach extracts texture and shape features of the hippocampus region from the MRI scans, and a neural network is used as a multi-class classifier to detect the various stages of Alzheimer’s disease. The proposed approach is under implementation and is expected to give better accuracy than conventional approaches.

II. LITERATURE SURVEY

In 2016, Yang Han and Xing-Ming Zhao proposed a feature selection based approach known as Hybrid Forward Sequential Selection (HFS) for detection of Alzheimer’s disease. This approach combines the filter and wrapper approaches to detect informative features from MRI data obtained from the Alzheimer’s Disease Neuroimaging Initiative (ADNI) database. In this approach the features were ranked and the top-k features were selected. The Support Vector Machine (SVM) was used as the classifier. The authors claim that the proposed approach outperforms other feature selection methods, improves accuracy of diagnosis and reduces computational cost.

In 2016, Devvi Sarwinda and Alhadi Bustamam proposed an Advanced Local Binary Pattern (ALBP) method. The ALBP method was introduced as a 2D and 3D feature extraction descriptor. As ALBP produces a large number of features, feature selection was done using Principal Component Analysis (PCA) and factor analysis. The Support Vector Machine was used for multiclass classification. The authors claim that their proposed work gives better performance and accuracy compared to the previous Local Binary Pattern (LBP) method. The average accuracy achieved for whole brain and hippocampus data was between 80% and 100%. It is also claimed that the uniform rotation invariant ALBP sign magnitude outperforms other approaches, with an average accuracy of 96.28% for multiclass classification of whole brain images. It is concluded that the extracted feature vector has high dimensionality and requires heavy computation, and that feature extraction from large brain MRI datasets can be improved using parallel computing.

In 2013, S. Saraswathi et al. proposed an Alzheimer’s disease detection method using a combination of three machine learning algorithms. The Genetic Algorithm (GA) is used for feature selection. Voxel-based Morphometry is used for feature extraction. The classification is done using the Extreme Learning Machine (ELM), and the Advanced Particle Swarm Optimization (PSO) algorithm optimizes the classification results. It is claimed that the GA-ELM-PSO classifier gives 94.57% average training accuracy and 87.23% testing accuracy.

In 2013, Rigel Mahmood and Bishad Ghimire developed an automated system based on mathematical and image processing techniques for Alzheimer’s disease classification. The high dimensional vector space was reduced to 150 dimensions using Principal Component Analysis, and the PCA-reduced features were classified using a multi-class neural network. The classifier was trained and tested using the OASIS database. The authors claim that their proposed approach gives an accuracy of nearly 90%.

In 2014, M. Evanchalin Sweety and G. Wiselin Jiji proposed a method for Alzheimer’s disease detection based on Particle Swarm Optimization (PSO) and a Decision Tree Classifier. In the proposed approach, the processed images are normalized and the Markov random filter is used for noise reduction. Features are extracted from the normalized images using moments and Principal Component Analysis. Particle Swarm Optimization is used to reduce the extracted features, and the classification is done using the Decision Tree Classifier. The authors claim that, for similar work, their proposed approach gives an accuracy of 92.07% on SPECT images and 86.71% on PET images.

In 2013, Devvi Sarwinda and Aniati M. Arymurthy proposed a computer aided system based on a feature selection approach using 3D MR images for texture analysis. The proposed approach combines feature selection with feature extraction from a 3D descriptor: feature selection is done using Kernel PCA, and feature extraction is done using the magnitude from three orthogonal planes as well as the Complete Local Binary Pattern of Sign. The classification is done using the Support Vector Machine. The authors claim that their proposed approach gives an accuracy of 100% for classification of Alzheimer’s disease versus normal brain. It is also claimed that the proposed system gives an accuracy of 84% for Alzheimer’s versus Mild Cognitive Impairment (MCI) classification.

In 2015, Lauge Sørensen et al. tested the hypothesis that hippocampal texture is associated with early cognitive loss. They used three independent datasets, from the Australian Imaging Biomarkers and Lifestyle flagship study of ageing (AIBL), ADNI and Metropolit 1953, for training the classifier. The study found that, for the ADNI dataset, hippocampal texture is a better biomarker than reduction in hippocampal volume for predicting MCI-to-AD conversion. Hippocampal texture was found to differentiate between stable MCI and MCI-to-AD conversion better than volumetric changes in the hippocampus region. The findings supported the hypothesis that textural information of the hippocampus region is more sensitive than volume and can be used to detect Alzheimer’s disease at an early stage.

In 2015, Chetan Patil et al. proposed an approach to assess the possibility of early detection of Alzheimer’s by evaluating the utility of image processing on MRI images. The authors demonstrated the application of image processing techniques such as k-means clustering, wavelet transform, the watershed algorithm and a customized algorithm, implemented on the open source platforms OpenCV and Qt. In the proposed approach, T1-weighted MRIs were used for image processing to evaluate hippocampal atrophy. A boundary detection algorithm was used to extract the region of interest (ROI) and k-means clustering was used for segmentation. Estimation of the brain and hippocampal volume is implemented. The authors claim that the overall brain volume is lower and the difference between the grey and white matter is higher in the case of Alzheimer’s. It is also claimed that their proposed work is useful to the technical as well as the medical community.

In 2011, Mayank Agarwal and Javed Mostafa described an application of content-based image retrieval, namely ViewFinder Medicine (vfM), to the domain of Alzheimer’s disease and medical imaging. To find similar scans and to perform classification, vfM combines textual information with low level features. Experiments were conducted using T1-weighted contrast enhanced MP-RAGE MR images to evaluate the system’s performance. Two sets of experiments were carried out: the first set estimated the efficiency of using the classification module in the system, and the second set measured the accuracy of classification. 10-fold cross-validation was used to establish the classifier accuracy. The classification accuracy was compared using the Discrete Cosine Transform (DCT), Discrete Wavelet Transform (DWT), LBP and other possible combinations. The classification of these models is contrasted with the fusion model.

The authors claim that the LBP method gives higher performance than DCT and DWT. The authors also claim that the fusion model gives a maximum accuracy of 86.7% and that the classification accuracy is higher with the skull information removed than with whole-brain images.

III. SYSTEM DESIGN

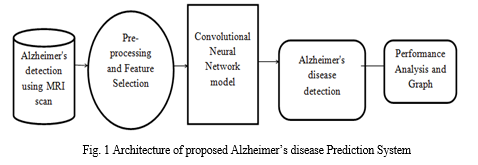

A. System Architecture

The system has been built using the CNN algorithm, a widely used technique in deep learning. Deep learning refers to neural networks with many layers (usually more than five) that extract a hierarchy of features from raw input images. Deep models extract complex, high-level features from the images and can be trained on large amounts of data, resulting in greater accuracy. Owing to significantly increased GPU processing power, deep learning methods allow us to train on vast amounts of imaging data and increase accuracy despite variations in images.

A CNN consists of convolution layers, pooling layers, activation functions and fully connected layers, each performing a specific function. The convolutional layer convolves the input images with kernels to produce feature maps. The pooling layer downsamples the results of the preceding convolutional layer, passing the maximum or average of each specified neighborhood on to the next layer. The most popular activation functions are the rectified linear unit (ReLU) and the leaky ReLU, which is a modification of ReLU. The ReLU transforms data nonlinearly by clipping negative input values to zero and passing positive input values through unchanged.
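The layer operations described above can be illustrated on a tiny example. The following is a minimal, dependency-free sketch rather than the authors' implementation: the function names, the 5 x 5 toy image and the 2 x 2 kernel are all assumptions made for illustration, and the "convolution" is the unflipped sliding-window product that deep learning libraries typically compute.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Clip negative values to zero and pass positive values through."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def leaky_relu(feature_map, alpha=0.01):
    """Like ReLU, but negative values are scaled by a small slope instead of zeroed."""
    return [[v if v > 0 else alpha * v for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Keep the maximum of each non-overlapping size x size neighbourhood."""
    out_h, out_w = len(feature_map) // size, len(feature_map[0]) // size
    return [
        [
            max(feature_map[i * size + a][j * size + b] for a in range(size) for b in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Toy input (hypothetical values): a 5 x 5 "image" and a 2 x 2 kernel.
image = [
    [1, 2, 0, 1, 3],
    [0, 1, 2, 1, 0],
    [2, 1, 0, 0, 1],
    [1, 0, 1, 2, 2],
    [0, 1, 3, 1, 0],
]
kernel = [[1, -1], [-1, 1]]

feature_map = conv2d_valid(image, kernel)  # 4 x 4 feature map
activated = relu(feature_map)              # negatives clipped to zero
pooled = max_pool(activated, size=2)       # 2 x 2 after downsampling
print(pooled)
```

A 5 x 5 input with a 2 x 2 kernel yields a 4 x 4 feature map, and 2 x 2 max pooling then halves each side to give a 2 x 2 output.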

The results of the last CNN layer provide a prediction for the input data and are coupled to a loss function. The network parameters are then obtained by minimizing the loss between the predictions and the ground truth labels, along with regularization constraints. The weights of the network are updated at each iteration using back-propagation until convergence.
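To make the training loop concrete, the sketch below applies it to a single softmax output layer on one toy sample. It is an illustration under stated assumptions, not the paper's training code: the cross-entropy loss, the L2 penalty as the regularization constraint, the learning rate and all names and values are chosen here for the example.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def loss_and_grad(weights, x, label, l2=1e-3):
    """Cross-entropy loss plus an L2 penalty for one sample, with its gradient."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    probs = softmax(logits)
    penalty = 0.5 * l2 * sum(w * w for row in weights for w in row)
    loss = -math.log(probs[label]) + penalty
    # Gradient of the loss w.r.t. each weight: (p_k - y_k) * x_i + l2 * w_ki
    grad = [
        [(p - (1.0 if k == label else 0.0)) * xi + l2 * w for xi, w in zip(x, row)]
        for k, (p, row) in enumerate(zip(probs, weights))
    ]
    return loss, grad


def gradient_step(weights, grad, lr=0.1):
    """Move every weight a small step against its gradient."""
    return [[w - lr * g for w, g in zip(w_row, g_row)] for w_row, g_row in zip(weights, grad)]


# Toy sample (hypothetical): 3 input features, 2 classes, true class is 1.
weights = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
x, label = [1.0, 2.0, -1.0], 1

losses = []
for _ in range(20):
    loss, grad = loss_and_grad(weights, x, label)
    losses.append(loss)
    weights = gradient_step(weights, grad)

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

In a full CNN, back-propagation computes the same kind of gradient for every layer, not only the last one, and the update is repeated over batches until the loss stops improving.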

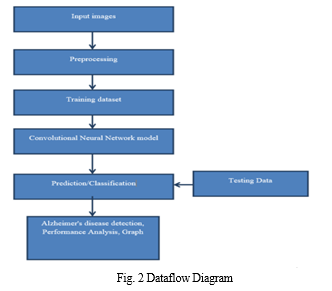

B. Dataflow Diagram

Figure 2 depicts the data flow diagram (DFD), also called a bubble chart. It is a simple graphical formalism that represents a system in terms of the input data to the system, the various processing carried out on this data, and the output data generated by the system.

Training phase: A set of labelled MRI scans is pre-processed for ROI extraction and segmentation. The training phase can be summarized as follows:

- Extract features such as texture, shape and area from the pre-processed MRI scans.

- Train a CNN classifier using this feature set.

The output of the training phase is a trained classifier capable of predicting the classification label based on the features of an MRI scan.

The performance of the trained classifier can be evaluated using measures like accuracy, sensitivity and specificity.
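The three measures named above can be computed directly from true and predicted labels. The sketch below treats one class as "positive" and all others as "negative" (a one-vs-rest view, which is an assumption for multi-class use); the function name and the label strings are hypothetical.

```python
def one_vs_rest_metrics(y_true, y_pred, positive):
    """Accuracy, sensitivity and specificity with `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    def ratio(numerator, denominator):
        # Return NaN rather than raising when a class never occurs.
        return numerator / denominator if denominator else float("nan")

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "sensitivity": ratio(tp, tp + fn),  # true positive rate
        "specificity": ratio(tn, tn + fp),  # true negative rate
    }


# Hypothetical labels: "AD" for Alzheimer's disease, "CN" for cognitively normal.
y_true = ["AD", "AD", "AD", "CN", "CN", "CN", "CN", "AD"]
y_pred = ["AD", "AD", "CN", "CN", "CN", "AD", "CN", "AD"]
metrics = one_vs_rest_metrics(y_true, y_pred, positive="AD")
print(metrics)
```

Sensitivity answers how many true AD scans were caught, while specificity answers how many healthy scans were correctly left alone, so reporting both is more informative than accuracy alone.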

Classification: This phase can be summarized as follows:

a. Take the MRI scan of a patient as input.

b. Pre-process the MRI scan.

c. Extract the required features from the patient’s MRI scan.

d. Use the trained classifier to predict the classification label for the patient’s MRI scan.

The output of this phase is a classification label suggesting the probable AD stage for the subject.

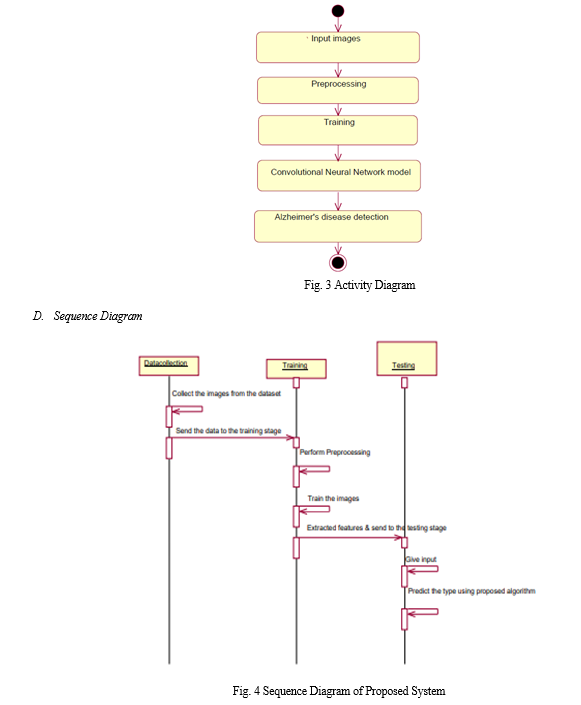

C. Activity Diagram

Figure 3 shows the activity diagram. Activity diagrams are graphical representations of workflows of stepwise activities and actions, with support for choice, iteration and concurrency. In the Unified Modelling Language, activity diagrams can be used to describe the business and operational step-by-step workflows of components in a system. An activity diagram shows the overall flow of control.

IV. IMPLEMENTATION

1. Dataset: In the first module, we set up the system to obtain the input dataset for training and testing.

Data set link: https://www.kaggle.com/tourist55/alzheimers-dataset-4-class-of-images The dataset consists of 6123 MRI scan images for Alzheimer’s detection.

2. Importing the Necessary Libraries: We use the Python language for this. First we import the necessary libraries: keras for building the main model, sklearn for splitting the data into training and test sets, PIL for converting the images into arrays of numbers, and other libraries such as pandas, numpy, matplotlib and tensorflow.

3. Retrieving the Images: We retrieve the images and their labels, then resize the images to (224,224), as all images must have the same size for recognition. The images are then converted into numpy arrays.

4. Splitting the Dataset: We split the dataset into training and test sets: 80% training data and 20% test data.
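The 80/20 split described above is done with sklearn in the paper. The dependency-free sketch below only illustrates what such a split does; the function name, the fixed seed and the per-class (stratified) splitting are assumptions added here, not details given by the authors.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices), splitting each class separately."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Hypothetical, deliberately imbalanced label list.
labels = ["a"] * 50 + ["b"] * 10
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

With sklearn, a comparable call is `train_test_split(X, y, test_size=0.2, stratify=y)`. Splitting per class keeps rare classes represented in both sets, which matters when some disease stages have far fewer scans than others.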

Convolutional Neural Networks

The objectives behind the first module of the course 4 are:

a. To understand the convolution operation

b. To understand the pooling operation

c. To remember the vocabulary used in convolutional neural networks (padding, stride, filter, etc.)

d. Building a convolutional neural network for multi-class classification in images

5. Building the Model: To build the model we use the Sequential model from the keras library and add layers to form a convolutional neural network. The first 2 Conv2D layers use 32 filters with a kernel size of (5,5). The MaxPool2D layer uses a pool size of (2,2), which means it selects the maximum value of every 2 x 2 area of the feature map; this reduces each spatial dimension by a factor of 2. The dropout layer uses a dropout rate of 0.25, which means 25% of the neurons are dropped at random during training. We apply these 3 layers again with some changes in parameters. We then apply a flatten layer to convert the multi-dimensional feature maps into a 1-D vector. This layer is followed by a dense layer, a dropout layer and another dense layer. The last dense layer outputs 2 nodes, one for each class (disease present or not). It uses the softmax activation function, which gives a probability for each of the 2 options, and the option with the highest probability is taken as the prediction.
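The layer stack described above might look roughly like the following Keras sketch. Only the first block (two 5 x 5 convolutions with 32 filters, 2 x 2 pooling, dropout of 0.25), the 224 x 224 input size and the 2-node softmax output come from the text. The second block's 64 filters and 3 x 3 kernels, the 256-unit dense layer, the 0.5 dropout, the ReLU activations and the 3-channel input are assumptions, and `trace_spatial_size` is a hypothetical helper that follows the feature-map side length by hand.

```python
def trace_spatial_size(size=224):
    """Follow the side length of a square input through the conv/pool stack."""
    steps = [
        ("conv", 5), ("conv", 5), ("pool", 2),  # first block, as described in the text
        ("conv", 3), ("conv", 3), ("pool", 2),  # second block: 3 x 3 kernels are assumed
    ]
    sizes = [size]
    for kind, k in steps:
        # An unpadded k x k convolution trims k - 1 pixels; pooling divides by k.
        size = size - k + 1 if kind == "conv" else size // k
        sizes.append(size)
    return sizes


def build_model(input_shape=(224, 224, 3), num_classes=2):
    """Assemble the CNN; TensorFlow is imported lazily so the helper above runs without it."""
    from tensorflow import keras

    layers = keras.layers
    return keras.Sequential([
        keras.Input(shape=input_shape),
        layers.Conv2D(32, (5, 5), activation="relu"),
        layers.Conv2D(32, (5, 5), activation="relu"),
        layers.MaxPool2D(pool_size=(2, 2)),
        layers.Dropout(0.25),
        layers.Conv2D(64, (3, 3), activation="relu"),  # assumed parameters
        layers.Conv2D(64, (3, 3), activation="relu"),  # assumed parameters
        layers.MaxPool2D(pool_size=(2, 2)),
        layers.Dropout(0.25),
        layers.Flatten(),
        layers.Dense(256, activation="relu"),          # assumed width
        layers.Dropout(0.5),                           # assumed rate
        layers.Dense(num_classes, activation="softmax"),
    ])


print(trace_spatial_size(224))
```

Under these assumptions the side length shrinks from 224 to 52 before flattening, so the flatten layer would hand 52 x 52 x 64 values to the first dense layer.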

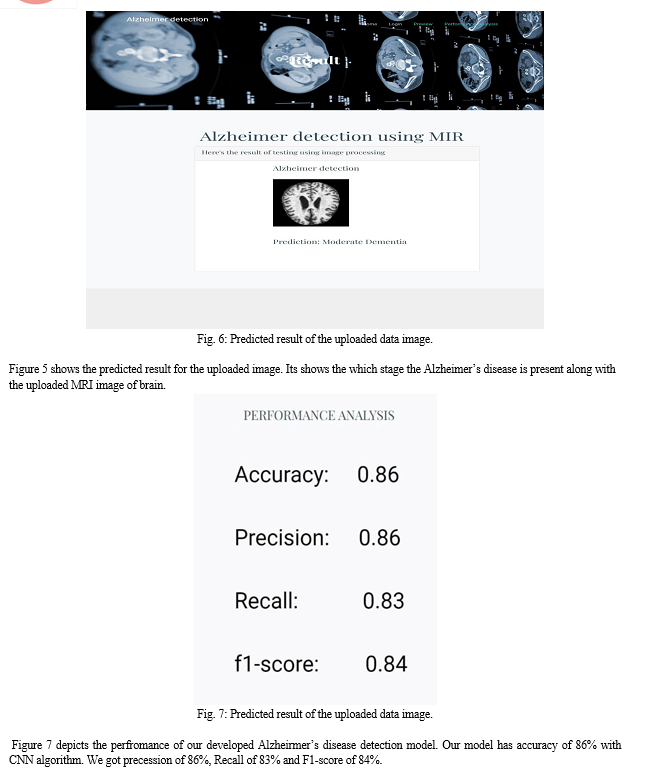

6. Apply the Model and plot the graphs for accuracy and loss: We compile the model and train it using the fit function with a batch size of 2. We then plot the graphs for accuracy and loss. We obtained a training accuracy of 86.34% and a validation accuracy of 86.45% on the test data.

7. Accuracy on test set: We obtained an accuracy of 86.45% on the test set.
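The compile, fit and plotting steps above could be wired together as in the sketch below. It takes an already built Keras model as an argument; the batch size of 2 comes from the text, while the Adam optimizer, the categorical cross-entropy loss (which expects one-hot labels), the epoch count, the output file name and all function names are assumptions for illustration.

```python
def final_metrics(history):
    """Return the last-epoch (training accuracy, validation accuracy) pair."""
    return history["accuracy"][-1], history["val_accuracy"][-1]


def train(model, x_train, y_train, x_val, y_val, epochs=10):
    """Compile and fit a Keras model, returning its per-epoch metric history."""
    model.compile(
        loss="categorical_crossentropy",  # assumed loss; labels must be one-hot
        optimizer="adam",                 # assumed optimizer
        metrics=["accuracy"],
    )
    result = model.fit(
        x_train, y_train,
        batch_size=2,                     # batch size stated in the text
        epochs=epochs,                    # assumed epoch count
        validation_data=(x_val, y_val),
    )
    return result.history


def plot_history(history, path="training_curves.png"):
    """Save accuracy and loss curves for the training and validation sets."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file without needing a display
    import matplotlib.pyplot as plt

    fig, (ax_acc, ax_loss) = plt.subplots(1, 2, figsize=(10, 4))
    for axis, key in ((ax_acc, "accuracy"), (ax_loss, "loss")):
        axis.plot(history[key], label="training")
        axis.plot(history["val_" + key], label="validation")
        axis.set_xlabel("epoch")
        axis.set_ylabel(key)
        axis.legend()
    fig.savefig(path)
    plt.close(fig)


# Hypothetical history with the same keys that Keras records.
example_history = {
    "accuracy": [0.50, 0.80], "val_accuracy": [0.40, 0.70],
    "loss": [0.90, 0.50], "val_loss": [1.00, 0.60],
}
print(final_metrics(example_history))
```

Comparing the two curves is the usual way to spot overfitting: training accuracy that keeps rising while validation accuracy stalls suggests the model is memorizing the training scans.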

8. Saving the Trained Model: Once you are confident enough to take the trained and tested model into a production-ready environment, the first step is to save it to a .h5 file (using the model’s save function in keras) or to a .pkl file using a library like pickle.
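As a small illustration of the saving step, the sketch below round-trips a plain Python object through a .pkl file with pickle. A Keras model itself is more commonly written with `model.save("model.h5")` and read back with `keras.models.load_model("model.h5")`; pickle is shown here for companion data such as a class-name mapping. The helper names, the file name and the mapping are hypothetical.

```python
import os
import pickle
import tempfile


def save_pickle(obj, path):
    """Serialize `obj` to `path` in binary pickle format."""
    with open(path, "wb") as handle:
        pickle.dump(obj, handle)


def load_pickle(path):
    """Load an object previously written by save_pickle."""
    with open(path, "rb") as handle:
        return pickle.load(handle)


# Hypothetical mapping from output node index to class name.
class_names = {0: "NonDemented", 1: "Demented"}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "class_names.pkl")
    save_pickle(class_names, path)
    restored = load_pickle(path)

print(restored)
```

Pickle files should only be loaded from trusted sources, since unpickling can execute arbitrary code.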

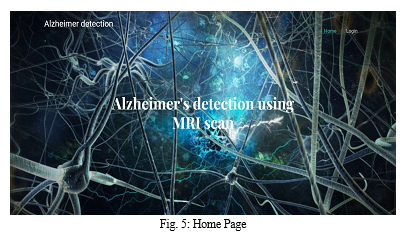

V. RESULTS AND SCREENSHOTS

Figure 5 depicts the output screen of the proposed system. It is the home page, which contains the login button. We use the Django framework for the front end. Once we start the Django server, this page is served on a local host port.

Conclusion

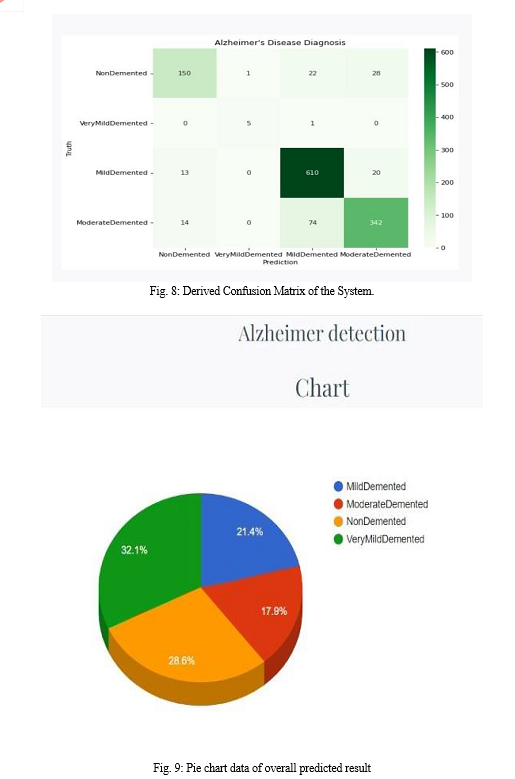

In this work, a basic Convolutional Neural Network (CNN) architecture has been used to classify Alzheimer’s disease from magnetic resonance imaging (MRI) scans. The CNN architecture is used to avoid expensive training from scratch and to achieve higher efficiency with a limited amount of data. The proposed work gave good accuracy, with a training accuracy of 86.34% and a validation accuracy of 86.45% on the test data, and very few misclassifications between the normal and very mild demented classes. Future work includes using data from other modalities such as PET and fMRI to improve performance.

References

[1] Yang Han, Xing-Ming Zhao, “A hybrid sequential feature selection approach for the diagnosis of Alzheimer’s Disease”, 2016 International Joint Conference on Neural Networks (IJCNN).

[2] Devvi Sarwinda, Alhadi Bustamam, “Detection of Alzheimer’s Disease Using Advanced Local Binary Pattern from Hippocampus and Whole Brain of MR Images”, 2016 International Joint Conference on Neural Networks (IJCNN).

[3] S. Saraswathi et al., “Detection of onset of Alzheimer’s Disease from MRI images Using a GA-ELM-PSO Classifier”, 2013 Fourth International Workshop on Computational Intelligence in Medical Imaging (CIMI).

[4] Rigel Mahmood, Bishad Ghimire, “Automatic Detection and Classification of Alzheimer’s Disease from MRI Scans Using Principal Component Analysis and Artificial Neural Networks”, 2013 20th International Conference on Systems, Signal and Image Processing (IWSSIP).

[5] M. Evanchalin et al., “Detection of Alzheimer Disease in Brain Images Using PSO and Decision Tree Approach”, 2014 IEEE International Conference on Advanced Communication Control and Computing Technologies (ICACCC).

[6] Devvi Sarwinda, Aniati M. Arymurthy, “Feature Selection Using Kernel PCA for Alzheimer’s Disease Detection with 3D MR Images of Brain”, 2013 International Conference on Advanced Computer Science and Information Systems (ICACSIS).

[7] Lauge Sørensen et al., “Early detection of Alzheimer’s disease using MRI hippocampal texture”, US National Library of Medicine National Institute of Health, DOI:10.1002/hbm.23091, Epub 2015 Dec 21.

[8] Chetan Patil et al., “Early Detection of Alzheimer’s Disease”, 2015 IEEE International Conference on Signal Processing, Informatics, Communication and Energy Systems (SPICES).

[9] Mayank Agarwal, Javed Mostafa, “Content-based Image Retrieval for Alzheimer’s Disease Detection”, 2011 9th International Workshop on Content-Based Multimedia Indexing (CBMI).

Copyright

Copyright © 2022 Kavya M K, Geetha M. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET45357

Publish Date : 2022-07-05

ISSN : 2321-9653

Publisher Name : IJRASET
