Ijraset Journal For Research in Applied Science and Engineering Technology

- Home / Ijraset

- On This Page

- Abstract

- Introduction

- Conclusion

- References

- Copyright

Prediction of Gross Calorific Value of Coal using Machine Learning Algorithm

Authors: Snehal B. Kale, Dr. Veena A. Shinde, Dr. Vijay S. Koshti

DOI Link: https://doi.org/10.22214/ijraset.2022.46093

Certificate: View Certificate

Abstract

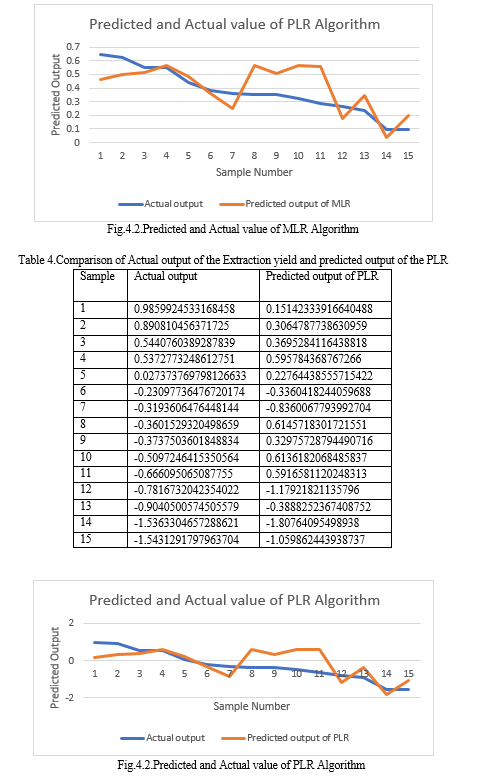

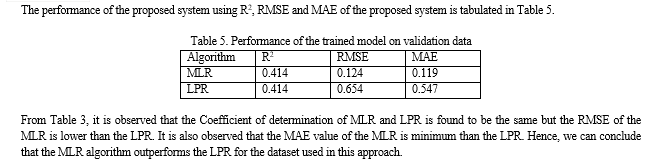

The rising number of occurrences of coal grade slippage among coal suppliers and users is causing worry in the Indian coal industry.One of the most important metrics for determining coal quality is the Gross Calorific Value (GCV). As a result, good GCV prediction is one of the essential techniques to boost heating value and coal output. This system aims to estimate the GCV of the coal samples from proximate and ultimate parameters of coal using machine learning regression algorithm: the Multiple Linear Regression (MLR) and Local Polynomial Regression (LPR). The performance of this system is evaluated using Coefficient of determination (R2), Root Mean Squared Error (RMSE), and Mean Absolute Error (MAE) parameters. The results of the proposed system in terms of RMSE, MAE, and R2 of the MLR and LPR are observed as 0.124, 0.119, 0.414, and 0.654, 0.547, and 0.414, respectively.

Introduction

I. INTRODUCTION

Coal is the most abundant and commonly used fossil fuel on the planet. It is a global industry that contributes significantly to global economic growth. Coal is mined commercially in more than 50 nations and used in more than 70. Annual global coal usage is estimated to be at 5,800 million tonnes, roughly 75% of that utilized to generate electricity. To meet the challenge of sustainable development and rising energy demand, this consumption is expected to double by 2030 nearly.

Coal is an explosive, lightweight organic origin rock that is black or dark brown and consists primarily of carbonized plant debris. It is found primarily in underground deposits with ash-forming minerals [1]. With each passing year, the world's energy consumption grows. At this moment, fossil-based fuels such as fuel oil, natural gas, and coal are used to meet most of the world's energy needs [2].Coal is one of the most widely used fossil fuels among various energy-supplier materials due to its high carbon content. It is the most abundant and has the most extended life cycle, making it the most vital energy source in the long run. As a result, coal's most prominent application is in generating electricity in thermal power plants. On the other hand, coal can be used in a variety of industries for a variety of purposes, including cement manufacture, coke production for metallurgical furnaces, and household heating, depending on its rank (coal grade). Coal analysis can estimate the utility of coal in various sectors.

"HyperCoal" (HPC) refers to ashless coal obtained using thermal solvent extraction. As inert chemical molecules and mineral debris are eliminated, HPC exhibits excellent fluidity [3]. HPC can be used in various applications, such as a fuel for low-temperature catalytic gasification and as a replacement for caking coals [4,5]. Coal characteristics can be determined using processes outlined in internationally accepted test standards. Two test sets are used to determine the quality of coal: proximal and ultimate analysis.Moisture, ash, volatile matter, fixed carbon, and calorific values are measured in proximate analysis. Carbon, hydrogen, nitrogen, sulphur, and oxygen contents, on the other hand, are assessed in final analyses [4, 9]. However, the calorific value of coal is the most essential of them all.Estimating the coal's Gross CalorificValue (GCV) is crucial. Hence, the use of linear regression analysis to predict calorific value was examined. Finally, the MLRmodel was used to predict calorific value.

II. LITERATURE SURVEY

This section presents the different methodologies for the Prediction of Gross Calorific Value of coal.

A. What Is Mathematical Modeling

Models describe our beliefs about how the world functions. In mathematical modeling, we translate those beliefs into the language of mathematics. This has many advantages

- Mathematics is an exact language. It helps us to formulate ideas and identify underlying assumptions.

- Mathematics is a concise language with well-defined rules for manipulations.

- The results that mathematicians have proved over hundreds of years are at our disposal.

- Computers can be used to perform numerical calculations.

There is a significant element of compromise in mathematical modeling. The majority of interacting systems in the real world are far too complicated to model in their entirety. Hence the first level of compromise is identifying the essential parts of the system. These will be included in the model; the rest will be excluded. The second level of compromise concerns the amount of mathematical manipulation which is worthwhile. Although mathematics has the potential to prove general results, these results depend critically on the form of equations used. Small changes in the structure of equations may require enormous changes in mathematical methods. Using computers to handle the model equations may never lead to elegant results, but it is more robust against alterations.

B. What Is The Necessity Of Modeling

Mathematical modeling can be used for several different reasons. How well any particular objective is achieved depends on the state of knowledge about a system and how well the modeling is done. Examples of the range of objectives are:

- Developing scientific understanding throughthe quantitative expression of current knowledge of a system (as well as displaying what we know, this may also show up what we do not know);

- test the effect of changes in a system;

- aid decision making, including

a. tactical decisions by managers;

b. strategic decisions by planners.

C. What Is Phenomenological Modeling

A phenomenological model is a scientific model that describes the empirical relationship of phenomena to each other in a consistent way with fundamental theory but is not directly derived from theory. In other words, a phenomenological model is not derived from the first principles. A phenomenological model forgoes any attempt to explain why the variables interact the way they do and simply attempts to describe the relationship, assuming that the relationship extends past the measured values.

- Regression analysis is sometimes used to create statistical models that serve as phenomenological models.

- Phenomenological models have been characterized as being completely independent of theories, though many phenomenological models while failing to be derivable from a theory, incorporate principles and laws associated with theories.

- The liquid drops model of the atomic nucleus, for instance, portrays the nucleus as a liquid drop and describes it as having several properties (surface tension and charge, among others) originating in different theories (hydrodynamics and electrodynamics, respectively). Certain aspects of these theories—though usually not the complete theory- are then used to determine the nucleus's static and dynamical properties.

D. What Is Empirical Modeling

Empirical modeling refers to any kind of (computer) modeling based on empirical observations rather than on mathematically describable relationships of the system modeled. Empiricalmodeling is a generic term for activities that create models by observation and experiment. Empirical Modelling (EM) refers to a specific variety of empirical Modelling constructed models following particular principles. Though the extent to which these principles can be applied to model-building without computers is an exciting issue, there are at least two good reasons to consider Empirical Modelling in the first instance as computer-based. Undoubtedly, computer technologies have had a transformative impact where the full exploitation of Empirical Modelling principles is concerned.

What is more, the conception of Empirical Modelling has been closely associated with thinking about the role of the computer in model-building.

An empirical model operates on a simple semantic principle: the maker observes a close correspondence between the model's behavior and that of its referent.

The crafting of this correspondence can be 'empirical' in a wide variety of senses: it may entail a trial-and-error process, may be based on a computational approximation to analytic formulae, it may be derived as a black-box relation. That affords no insight into 'why it works.' Empirical Modelling is rooted in the vital principle of William James's radical empiricism, which postulates that all knowing is rooted in given-in-experience connections.

E. Artificial Neural Network

Traditional approaches to solving chemical engineering problems frequently have their limitations, such as in the Modelling of highly complex and nonlinear systems. Artificial neural networks (ANN) have proved to solve complex tasks in some practical applications that should be of interest to you as a chemical engineer. This paper is not a review of the extensive literature that has been published in the last decade on artificial neural networks, nor is it a general review of artificial neural networks.

Instead, it focuses solely on certain kinds of ANN that have proven fruitful in solving real problems and gives four detailed examples of applications:

- fault detection

- prediction of polymer quality

- data rectification

- modeling and control for those who want more information,

F. Genetic Programming

Modifications for constraints" genetic algorithms" refer to the biological evolution of a genotype that has inspired their particular way of incorporating random influences into the optimization process. That way consists in:

- randomly exchanging coordinates between two particular points in the input space of the objective function (recombination, crossover),

- randomly modifying coordinates of a particular point in the input space of the objective function (mutation),

- Selecting the points for crossover and mutation according to a probability distribution is either uniform or skewed towards points where the objective function takes high values (the latter being a probabilistic expression of the survival-of-the-fittest principle).

In the search for optimal catalysts in chemical engineering, it is helpful to differentiate between quantitative mutation, which modifies merely the proportionsof substances already present in the catalytic material, and qualitative mutation, which enters new substances or removes present ones (Figure 1). Genetic algorithms have been initially introduced for unconstrained optimization. However, there have been repeated attempts to modify them for constraints.

4. To ignore offspring's infeasible concerning constraints and not include them in the new population. Because in constrained optimization, the global optimum frequently lies on a boundary determined by the constraints. Ignoring infeasible offspring may discharge information on offspring very close to that optimum. Moreover, for some genetic algorithms, this approach can lead to the deadlock of not being able to find a whole population of viable offspring.

5. To modify the objective function by a superposition of a penalty for infeasibility. This works well if theoretical considerations allow an appropriate choice of the penalty function. On the other hand, suppose some heuristic penalty function is used. In that case, its values typically turn out to be either too small, allowing the optimization paths to stay forever outside the feasibility area, or too large, thus suppressing the information on the value of the objective function for infeasible offspring.

6. To repair the infeasible offspring by modifying it so that all constraints get fulfilled. This again can discard information on some offspring close to that optimum. Therefore, various modifications of the repair approach have been proposed that give up full feasibility, but preserve some information on the original offspring, e.g., repairing only randomly selected offspring (with a prescribed probability) or using the original offspring together with the value of the objective function of its repaired version.

7. To add the feasibility/infeasibility as another objective function, thus transforming the constrained optimization task into a task of multiobjective optimization.

8. To modify the recombination and mutation operator to get closed concerning the set of feasible solutions. Hence, a mutation of a point that fulfills all the constraints or a recombination of two such points has to fulfill them.

G. Fuzzy Logic Controller

The fuzzy Logic 'Fuzzy' word is equivalent to inaccurate, approximate, and imprecise meaning. Fuzzy logic is a form of approximate reasoning rather than fixed or exact. Traditional binary sets have a truth value as either 0 or 1,which is true or false, while fuzzy logic has the truth value ranging between 0 and 1.

Its truth value can take any magnitude range between 0 and 1 or true or false.Fuzzy logic emulates human reasoning and logical thinking systematically and mathematically.

It provides an intuitive or perspective way to implement decision-making, diagnosis, and control system implementation.

Using traditional binary sets, it is not possible to precisely classify the color of the apples as completely dark i.e., 0 or false and completely lighter than the other i.e. 1 or true. Membership functions are user defined values for the linguistic variables. Thus, the human logic reasoning capabilities can be implemented for controlling complex real-world systems.

Fuzzy Logic controller structure Fuzzy logic controller is based on expert knowledge which provides means to convert the strategy for linguistic variable control into strategy for automatic control.

The fuzzy logic controller encompasses four main components viz. Fuzzification unit, Fuzzy Knowledge Base, Decision making unit, and Defuzzification unit. These are explained briefly below.

- Fuzzification: Fuzzification unit measures the real scalar values of variables inputted into the system. It then performs a scale mapping of the range of scalar values of input variables and then converts it to corresponding fuzzy value. Thus, fuzzification simply means converting the crisp input data into fuzzy value with help of suitable linguistic values and defined membership functions. Fuzzification process can be represented mathematically as given below: x = fuzzifier (x 0) Where x is the fuzzified value of the crisp input value.

- Fuzzy Knowledge Base: It consists of a database comprising of the necessary definitions used to describe linguistic variables and fuzzy data manipulation also it consists of a rule base comprising the control goals and control policy defined by the experts by means of linguistic control rules.

- Decision Making Unit: The Decision-making unit is the heart or kernel of a fuzzy logic controller which has the capability of inferring fuzzy control actions employing rules of inference in fuzzy logic. A set of IF-THEN rules are used for inferring a control action based on expert knowledge or designing. IF (antecedents are satisfied) THEN (consequents are inferred) IF-THEN rules are accompanied with linguistic variables and are frequently called as fuzzy conditional statements, or fuzzy controlled rules. IF-THEN rules can be also be used with logic operators such as Boolean operators for applying multiple antecedents or multiple consequents.

- Defuzzification: The last unit of the fuzzy logic controller is the defuzzification unit which converts a inferred fuzzy control value from the decision making unit to a non-fuzzy or a crisp control value, which is fed to the process for the controlling action. In defuzzification a scale mapping is done to convert a fuzzy value to a non-fuzzy value. Defuzzification can be done by various methods. The most used method is the Centre of Gravity method to get the most desired control value.

H. Support Vector Regression

Kernel-based techniques (such as support vector machines, Bayes point machines, kernel principal component analysis, and Gaussian processes) represent a major development in machine learning algorithms.

Support vector machines (SVM) are a group of supervised learning methods that can be applied to classification or regression. In a short period of time, SVM found numerous applications in chemistry, such as in drug design, quantitative structure-activity relationships, chemometrics, sensors, chemical engineering, and text mining. Support vector machines represent an extension to nonlinear models of the generalized portrait algorithm developed by Vapnik and Lerner.

A support vector machine (SVM) is machine learning algorithm that analyses data for classification and regression analysis. SVM is a supervised learning method that looks at data and sorts it into one of two categories. An SVM outputs a map of the sorted data with the margins between the two as far apart as possible. The basic idea of regression estimation is to approximate the functional relationship between a set of independent variables and a dependent variable by minimising a "risk" functional which is a measure of prediction errors.

I. State Of Art Approach For Prediction Of Gross Calorific Value Of Coal Using Machine Learning Algorithm

Mustafa Acikkar et al. [10] show how to use support vector machines (SVMs) and a feature selection approach to create new GCV, prediction models. To identify the relevance of each GCV predictor, the feature selector RReliefF is used to a dataset containing proximate and ultimate analytic variables. Seven separate hybrid input sets (data models) were created in this manner. The square of multiple correlation coefficient (R2), root mean square error (RMSE) and mean absolute percentage error (MAPE) was used to calculate the prediction performance of models.The predictor variables moisture (M) and ash (A) from the proximate analysis and carbon (C), hydrogen (H), and sulphur (S) from the ultimate analysis were found to be the most relevant variables in predicting coal GCV, while the predictor variables volatile matter (VM) from the proximate analysis and nitrogen (N) from the ultimate analysis had no positive effect on prediction accuracy. With 0.998, 0.22 MJ/kg, and 0.66 %, respectively, the SVM-based model utilizing the predictor variables M, A, C, H, and S produced the best R - squared value and the lowest RMSE and MAPE. GCV was also predicted using multilayer perceptron and radial basis function networks as a comparison.

Bui et al. [11] developed the particle swarm optimization (PSO)-support vector regression (SVR) model as a unique evolutionary-based prediction system for forecasting GCV with good accuracy. The PSO-SVR models were built using three different kernel functions: radial basis function, linear, and polynomial functions. In addition, three benchmark machine learning models were developed to estimate GCV, including Classification and Regression Tree (CART), MLR, and Principal Component Analysis (PCA), and then compared to the proposed PSO-SVR model; 2583 coal samples were used to analyze the proximate components and GCV for this study.They were then utilized to create the aforementioned models and test their performance in experimental findings. The GCV prediction models were evaluated using RMSE, R2, ranking, and intensity color criteria. The suggested PSO-SVR model with radial basis function exhibited superior accuracy than the other models, according to the findings. With excellent efficiency, the PSO method was improved in the SVR model. To assess the heating value of coal seams in difficult geological settings, they should be employed as a supporting tool in practical engineering.

J. Fu [12] describes the use of statistical models to quickly and accurately quantify GCV utilizing coal components with mensuration in real-time online in China to fulfill practical demands. Researchers have developed linear regression (LM), nonlinear regression equation (NLM), and artificial neural networks (ANN) for estimating GCV. The GCV of China coal is predicted using 1400 data points in this paper. The support vector machine (SVM) is used to determine the progress of the estimating process, and the estimating robustness is assessed. The SVM model outperformed the three existing models in terms of accuracy and robustness, according to the comparative research. Meanwhile, the sampling process has been enhanced, and the number of input variables has been decreased to the absolute minimum.

The prediction of calorific value was explored using linear regression analysis, including simple linear regression analysis and MLRanalysis, and prediction models were built, according to S. Yerel and T. Ersen [13]. Following that, statistical tests were used to verify the constructed models. A MLRmodel was shown to be reliable for estimating calorific values. For mining planning, the approach provides both practical and economic benefits. Regression analysis, such as simple linear regression analysis and MLRanalysis, was used to analyzethe calorific value, ash content, and moisture content in this study. The multiple regression model was shown to be the best model in regression analysis. The multiple regression model's determination R2 is 89.2 percent. This is an excellent value that indicates the correct model. This finding demonstrates the utility of a MLRmodel for predicting calorific value. To manage the coal deposit, these models assess factors such as calorific value, ash concentration, and moisture content.

M. Sozer et al. [14] propose an MLR algorithm for coal GCV prediction. The predictive parameters' importance was investigated using R2, adj. R2, standard error, F-values, and p-values. MLR offered an acceptable correlation between HHV and any of the single parameters, although connections between HHV and any of the single parameters were almost irregular. In forecasting the HHV, it was also discovered that ultimate analysis parameters (C, H, and N) were more important than proximal analysis factors (fixed carbon (FC), volatile matter (VM), and ash). When elemental C content was present in the regression equation, FC content was considered as an inefficient parameter. The elemental C content became the most dominating parameter after proximal analysis parameters were removed from the equation, resulting in extremely low p-values. For hardcoals, adj. R2 of the equation with three parameters (HHV = 87.801(C) + 132.207(H) − 77.929(S)) was slightly higher than that of HHV = 11.421(Ash) + 22.135(VM) + 19.154(FC) + 70.764(C) + 7.552(H) − 53.782(S).

Yilmaz et al. [15], currently available The determination of coal's GCVis critical for characterizing coal and organic shales; yet, it is a complex, expensive, time-consuming, and damaging process. The application of various artificial neural network learning techniques such as MLP, RBF (exact), RBF (k-means), and RBF (SOM) for the prediction of GCV was reported in this research. As a consequence of this study, all models performed well in predicting GCV. Although the four different ANN algorithms have nearly identical prediction capabilities, MLP has greater accuracy than the other models. Soft computing techniques will be used to develop new ideas and procedures for predicting certain factors in fuel research.

The input layer, the hidden layer, and the output layer make up an RBFNN, which is a feed-forward neural network with three layers. In terms of node properties and learning algorithms, RBFNN is akin to a particular example of multilayer feed-forward neural networks [16]. The outputs of Gaussian functions in the hidden layer neurons are inversely proportional to the distance from the neuron's center. RBFNNs and GRNNs are extremely comparable. The primary distinction is that in GRNN, one neuron is assigned to each point in the training file, but in RBFNN, the number of neurons is frequently significantly smaller than the number of training points [17].

The RBFNN model's training phase determines four distinct parameters. The numbers of neurons in the hidden layer, the center coordinates of each RBF function in the hidden layer, the radius (spread) of each RBF function in each dimension, and the weights applied to the RBF function output as they are transmitted to the output layer are the parameters [17].

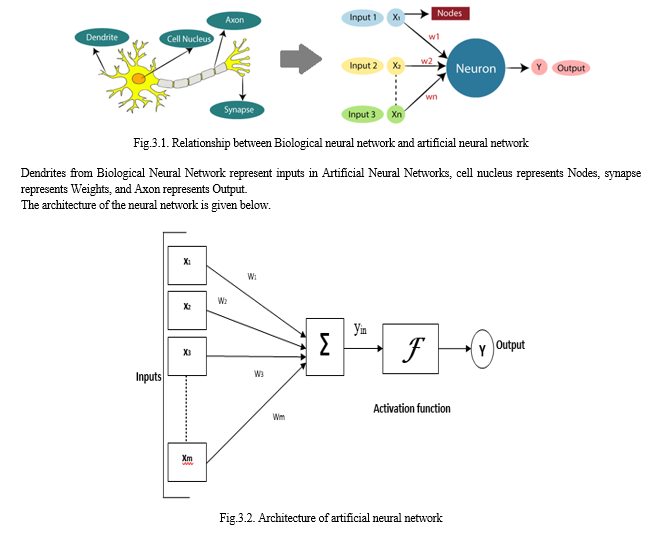

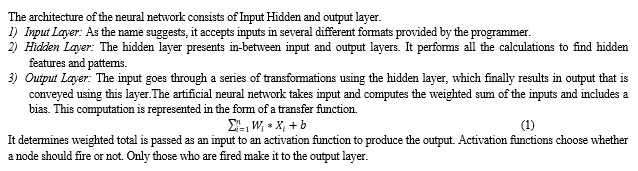

III. ARTIFICIAL NEURAL NETWORK

The term "Artificial Neural Network" is derived from Biological neural networks that develop the structure of a human brain. Similar to the human brain that has neurons interconnected to one another, artificial neural networks also have neurons that are interconnected to one another in various layers of the networks. These neurons are known as nodes.

Conclusion

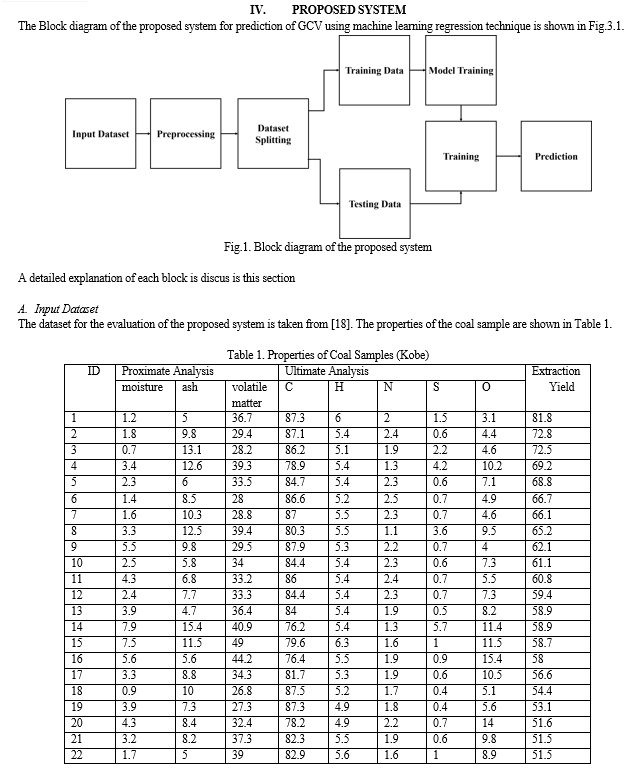

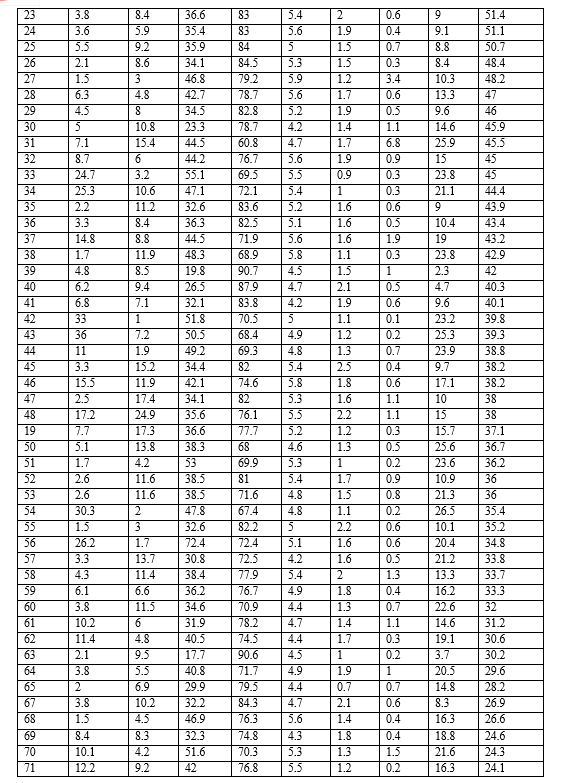

Coal is one of the world\'s most essential fossil fuels. In determining coal quality and deposit planning, a quick and accurate forecast of GCVs is critical. The regression analysis is aided by an accurate forecast of GCVs. In this paper, GCVasthe dependent variable and proximate analysis parameters (moisture, ash, volatile materials), and ultimate analysis parameters (Carbon, Hydrogen, Nitrogen, Sulphur, and Oxygen) as an independent variable are considered for the model training. The data is pre-processed using the normalization technique. The multiple regression and LPR algorithms are used to train the model over input data. The performance of the system is evaluated on the validation data using RMSE, MAE, and R2 evaluation metrics. From the qualitative analysis of the proposed system, it is observed that the RMSE, MAE, and R2of the MLR and LPR are observed as 0.124, 0.119, 0.414, and 0.654, 0.547, and 0.414 respectively.In the Future, a large dataset needs to be collected which will help to generalize the system. This system can be further improved by training the data using deep learning models.

References

[1] Sivrikaya O, \"Cleaning study of a low-rank lignite with DMS, Reichert spiral and flotation,\" Fuel 2014; 119: 252-258. [2] Akkaya AV. Proximate analysis based multiple regression models for higher heating value estimation of low rank coals. Fuel Process Technol 2009; 90: 165-170. [3] N. Okuyama, N. Komatsu, T. Shigehisa, T. Kaneko and S. Tsuruya, Fuel Process. Technol., 85, 947 (2004) [4] Atul Sharma, Toshimasa Takanohashi, Ikuo Saito, \"Effect of catalyst addition on gasification reactivity of HyperCoal and coal with steam at 775–700°C,\" Fuel,Volume 87, Issue 12,2008, pp.2686-2690 [5] Takanohashi, T., T. Shishido, and Is. Saito, 2008b. Effects of «HyperCoal» addition on coke strength and thermoplasticity of coal blends. Energy Fuels, 22: 1779-1783. [6] Masashi Iino, Toshimasa Takanohashi, Hironori Ohsuga, Kiminori Toda, \"Extraction of coals with CS2-N-methyl-2-pyrrolidinone mixed solvent at room temperature: Effect of coal rank and synergism of the mixed solvent,\" Fuel, Vol. 67, Issue 12, 1988, pp. 1639-1647. [7] Larson, J. W., Baskar, A. J., Hydrogen bonds from a subbituminous coal to sorbed solvents, An infrared study,Energy Fuels, 1987, 1: 230. [8] Painter, P. C., Sobkowiak, M., Youlcheff, J., FT-i.r. study of hydrogen bonding in coal,Fuel, 1987, 66: 973. [9] Miyake, M.; Stock, L. M.,\" Coal Solubilization. Factors Governing Successful Solubilization through C-Alkylation\" Energy Fuels 1988, vol.2, 815-818. [10] Acikkar, Mustafa. (2020). Prediction Of Gross Calorific Value Of Coal From Proximate And Ultimate Analysis Variables Using Support Vector Machines With Feature Selection. Ömer Halisdemir Üniversitesi Mühendislik Bilimleri Dergisi. 9. 1129-1141. 10.28948/ngumuh.585596. [11] Bui, H.-B.; Nguyen, H.; Choi, Y.; Bui, X.-N.; Nguyen-Thoi, T.; Zandi, Y. A Novel Artificial Intelligence Technique to Estimate the Gross Calorific Value of Coal Based on Meta-Heuristic and Support Vector Regression Algorithms. Appl. Sci. 2019, 9, 4868. https://doi.org/10.3390/app9224868 [12] J. Fu, \"Application of SVM in the estimation of GCV of coal and a comparison study of the accuracy and robustness of SVM,\" 2016 International Conference on Management Science and Engineering (ICMSE), 2016, pp. 553-560, doi: 10.1109/ICMSE.2016.8365486. [13] Yerel Kandemir, Suheyla & Ersen, T. (2013). Prediction of the Calorific Value of Coal Deposit Using Linear Regression Analysis. Energy Sources. 35. 10.1080/15567036.2010.514595. [14] Sozer, M., Haykiri-Acma, H., and Yaman, S. (May 13, 2021). \"Prediction of Calorific Value of Coal by Multilinear Regression and Analysis of Variance.\" ASME. J. Energy Resour. Technol. January 2022; 144(1): 012103. https://doi.org/10.1115/1.4050880 [15] Yilmaz, Iik & Erik, Nazan & Kaynar, Ouz. (2010). Different types of learning algorithms of artificial neural network (ANN) models for prediction of gross calorific value (GCV) of coals. Scientific Research and Essays. 5. 2242-2249. [16] Arliansyah J, Hartono Y. Trip attraction model using radial basis function neural networks. Procedia Eng 2015; 125: 445-451. [17] Sherrod PH. DTREG predictive modeling software user manual. 2014. [18] Koji Koyano, Toshimasa Takanohashi, and Ikuo Saito, \"Estimation of the Extraction Yield of Coals by a Simple Analysis,\" Energy & Fuel, 2011, Vol. 26, Issue 6, pp. 2565-2571.

Copyright

Copyright © 2022 Snehal B. Kale, Dr. Veena A. Shinde, Dr. Vijay S. Koshti. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Download Paper

Paper Id : IJRASET46093

Publish Date : 2022-07-31

ISSN : 2321-9653

Publisher Name : IJRASET

DOI Link : Click Here

Submit Paper Online

Submit Paper Online