Ijraset Journal For Research in Applied Science and Engineering Technology

Real-Time Sign Language Detection using TensorFlow, OpenCV and Python

Authors: Prashant Verma, Khushboo Badli

DOI Link: https://doi.org/10.22214/ijraset.2022.43439

Abstract

Deaf and hard-of-hearing people, as well as others who cannot communicate verbally, use sign language to communicate within their communities and with others. Sign languages are established languages that convey information through a visual-manual modality. This paper addresses the problem of real-time finger-spelling recognition in Indian Sign Language (ISL). We gathered a dataset for identifying 36 distinct gestures (alphabets and numerals) and a dataset of typical ISL hand gestures, both created from scratch using webcam images. The system accepts a hand gesture as input and displays the identified character on the monitor screen in real time. The project falls under human-computer interaction (HCI) and tries to recognise the alphabets (a-z), the digits (0-9) and several typical ISL hand gestures. To apply transfer learning to the problem, we used a pre-trained SSD MobileNet V2 architecture and trained it on our own dataset. The resulting model classifies sign language robustly in the vast majority of situations. Many past studies in this area have employed sensors (such as glove sensors) and other image processing techniques (such as edge detection and the Hough transform), but these technologies are quite costly, and many people cannot afford them. During the study, various human-computer interaction approaches for posture recognition were investigated and evaluated. The best solution was found to combine a set of image processing approaches with human movement classification. Without a controlled background and in low light, the system can detect the chosen sign language signs with an accuracy of 70-80%. Aside from a small group of people, not everyone is familiar with sign language, so an interpreter may be needed, which can be cumbersome and costly. We are therefore creating this software to assist sign language users, because it is free and simple to use.
This research intends to bridge the communication gap by building algorithms that can predict alphanumeric hand gestures in sign language in real time. The main goal is to create a computer-based intelligent system that allows deaf people to interact effectively with others using hand gestures.

Introduction

I. INTRODUCTION

Sign languages were developed primarily for people who are deaf or unable to speak. To express precise information, they combine hand motions, hand shapes, and orientation simultaneously and in specific ways.

This project falls within the HCI (Human-Computer Interaction) field and seeks to recognise the alphabets (a-z), the digits (0-9) and several typical ISL hand gestures such as "Thank you" and "Hello". Hand-gesture recognition is a difficult problem, and ISL recognition is particularly difficult because it uses both hands. Many past studies have employed sensors (such as glove sensors) and various image processing techniques (such as edge detection and the Hough transform), but these are quite costly, and many people cannot afford them.

Many people in India are deaf or hard of hearing and communicate with others using hand gestures. However, aside from a small group of people, not everyone is familiar with sign language, so an interpreter may be needed, which can be complex and costly. The goal of this research is to build software that can predict ISL alphanumeric hand gestures in real time, bridging the communication gap.[1]

II. PROBLEM STATEMENT

The most frequent sensory deficiency in people today is hearing loss. According to WHO estimates, roughly 63 million people in India suffer from significant auditory impairment, putting the prevalence at 6.3 percent of the population. According to the NSSO study, there are 291 people with severe to profound hearing loss for every 100,000 people (NSSO, 2001)[2]. A substantial number of them are children aged 0 to 14. With such a large population of hearing-impaired young Indians, there is a significant loss of physical and economic output. The main problem is that people who are deaf or unable to speak find it difficult to interact with hearing people, because most hearing people do not learn sign language.

The solution is to develop a translator that captures the sign made by the user and feeds it into a neural network trained with transfer learning. The network detects the sign, and its meaning is displayed on the screen as text so that a person who does not know sign language can understand it.
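As a rough illustration of the last step in that pipeline (turning the network's raw output into on-screen text), the sketch below picks the highest-scoring detection and maps it to a character. The dictionary keys follow the output naming of the TensorFlow Object Detection API (`detection_classes`, `detection_scores`); the label order, the 0.5 threshold, the 1-based class ids, and the function name `best_sign` are assumptions made for illustration, not values taken from the paper.

```python
import string

# Hypothetical label order: ids 1-26 are a-z, ids 27-36 are 0-9.
LABELS = list(string.ascii_lowercase) + list(string.digits)


def best_sign(detections, min_score=0.5):
    """Return the label of the highest-scoring detection, or None.

    `detections` is assumed to be a dict with parallel lists under
    'detection_classes' (1-based class ids) and 'detection_scores'.
    """
    classes = detections["detection_classes"]
    scores = detections["detection_scores"]
    if not scores:
        return None
    # Index of the most confident detection in this frame.
    top = max(range(len(scores)), key=lambda i: scores[i])
    if scores[top] < min_score:
        return None  # nothing confident enough to display
    return LABELS[int(classes[top]) - 1]


# Made-up output for a single frame.
frame_output = {
    "detection_classes": [3, 27, 1],
    "detection_scores": [0.41, 0.12, 0.88],
}
print(best_sign(frame_output))
```

Returning `None` for low-confidence frames lets the display keep its previous text instead of flickering between guesses; whether the authors' system does this is not stated.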

Communication is much easier now thanks to speech-to-text tools and translators. But what about people who are unable to speak or hear? The main goal of this project is to create an application that can assist them. The language barrier is also a significant issue: people who are unable to speak use hand signals and gestures, and most other people have trouble understanding this language. A system that identifies these signs and gestures and relays the information to people who do not know sign language is therefore required. It connects people who have speech or hearing impairments with those who do not. Using computer vision and neural networks, we can recognise the signs and provide the appropriate text output. The aims are to let the user communicate independently and to make the system available to people on a budget: it is completely free, and anyone may use it.

Many firms are creating solutions for deaf and hard-of-hearing people, but not everyone can afford them. Some are too expensive for ordinary middle-class individuals to buy.

III. LITERATURE SURVEY

The purpose of the literature survey is to give a brief overview of the reference papers and to set out the technical details related to the main project in a concise and unambiguous manner. Different researchers have used different approaches to recognise various hand gestures, and these have been implemented in different fields. The approaches can be divided into three broad categories:

- Hand segmentation approaches

- Feature extraction approaches

- Gesture recognition approaches.

The ways in which computers and humans communicate have changed in tandem with the advancement of information technology. A lot of work has been done in this field to help deaf and hearing individuals communicate more successfully. Because sign language consists of a series of gestures and postures, any attempt to recognise it falls within the category of human-computer interaction.

Sign language detection approaches fall into two categories.

The first is the data glove technique, in which the user wears a glove fitted with electromechanical devices that digitise hand and finger movements into processable data. The downside of this approach is that the user must wear the extra equipment at all times, and the results are less precise.

Computer-vision-based techniques, on the other hand, use only a camera and allow natural interaction between humans and computers without any extra devices.[5]

Alongside significant advances in American Sign Language (ASL) recognition, researchers in India began to work on ISL. One approach detects image key points using SIFT and then compares the key points of a new image with those of standard images for each alphabet in a database, assigning the new image the label of the closest match. Similarly, various efforts have gone into recognising edges efficiently; one idea was to combine colour data with bilateral filtering of the depth images to rectify edges.
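The closest-match idea can be sketched in a few lines. This is only an illustration under stated assumptions: toy 3-dimensional vectors stand in for real 128-dimensional SIFT descriptors, and the matching cost (sum of nearest-descriptor distances) and the names `match_cost` and `classify` are choices made here, not details from the surveyed work.

```python
import math


def distance(a, b):
    """Euclidean distance between two equal-length descriptor vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def match_cost(query, reference):
    """Sum, over query descriptors, of the distance to the closest reference descriptor."""
    return sum(min(distance(q, r) for r in reference) for q in query)


def classify(query, database):
    """Return the label whose reference descriptors are closest to the query."""
    return min(database, key=lambda label: match_cost(query, database[label]))


# Hypothetical reference descriptors, one small set per alphabet sign.
database = {
    "A": [[0.0, 0.0, 1.0], [0.1, 0.0, 0.9]],
    "B": [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0]],
}
# Descriptors extracted from a new image (made-up values).
query = [[0.95, 0.05, 0.0], [1.0, 0.1, 0.1]]
print(classify(query, database))
```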

Ways to communicate with someone who is hard of hearing:

a. Speak clearly, not loudly.

b. Talk at a reasonable speed.

c. Communicate face to face.

d. Create a quiet space.

e. Seek better options.

f. Make it easy to lip-read.

g. Choose a mask that allows for lip-reading.[4]

These suggestions help people with a mild hearing impairment; a person who is completely deaf, however, will not be able to understand speech at all. For them, sign language is the best and often the only option. People who are deaf or unable to speak rely on sign language as their primary means of communication. Because sign language is a formal language that uses a system of hand gestures, it lets people who cannot speak or hear convey their thoughts and emotions.

In this paper, a unique sign language identification technique for detecting alphabets and gestures in sign language is proposed. Deaf individuals use a style of communication based on visual gestures and signs. Sign languages use the visual-manual modality to transmit meaning and are mostly used by deaf or hard-of-hearing individuals.[6]

Sign language is also used by children who are neither deaf nor hard of hearing. Hearing children who are nonverbal owing to conditions such as Down syndrome, autism, cerebral palsy, trauma, brain diseases, or speech impairments make up another large group of sign language users.

The ISL (Indian Sign Language) alphabet is used for fingerspelling. There is a sign for each letter of the alphabet. These letter signs, formed on the hands, can be used to spell out words (most commonly names and places) and phrases.[11]

IV. TECHNOLOGY UTILIZED

- Interface: Jupyter Notebook, which lets us import Python libraries and write Python code in a notebook format, so that the dataset and the model can be handled and evaluated in a single notebook.

- Operating System Environment: Windows 10

- Software: Python (3.7.4), Anaconda (2019-0.7), IDE (Jupyter), NumPy (version 1.16.5), cv2 (OpenCV) (version 3.4.2), TensorFlow (version 2.0.0), GitHub, Visual Studio (2022), CUDA (10.1) and cuDNN (7.6) (for faster model training on an NVIDIA GPU), Protoc

- Hardware Environment: RAM 16 GB, graphics card 6 GB, ROM 1060TB

V. TOOLS USED

- TensorFlow: It is an open-source artificial intelligence package that builds models using data flow graphs. It enables developers to build large-scale neural networks with many layers. TensorFlow is mostly used for classification, perception, comprehension, discovery, prediction, and creation.[7]

- Object Detection API: It is an open-source TensorFlow API for locating objects in an image and identifying them.[7]

- OpenCV: OpenCV is an open-source, highly optimized computer vision library with Python bindings. It is primarily focused on real-time applications and provides the computational efficiency needed to handle large volumes of data. It processes images and videos to recognize objects, people, and even human handwriting.[13]

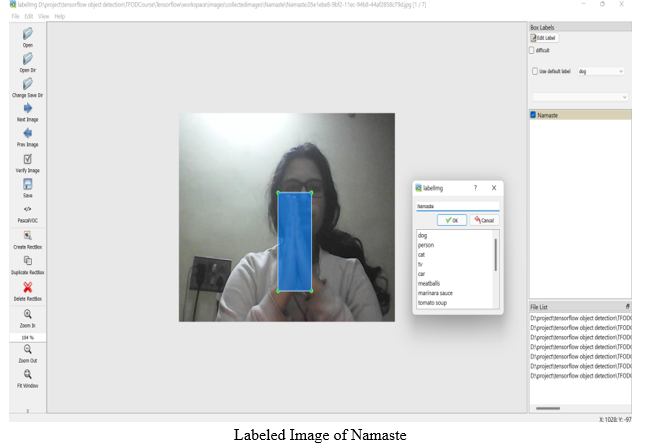

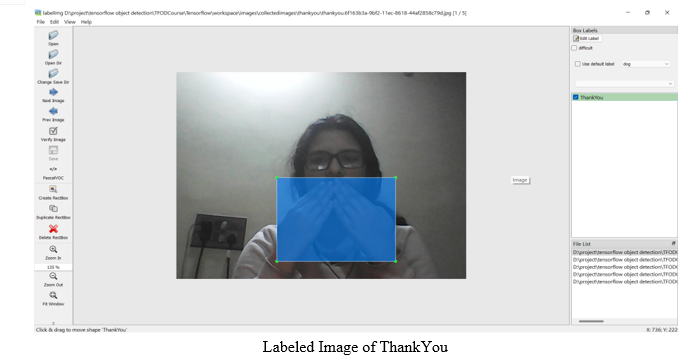

- LabelImg: LabelImg is a graphical image annotation tool that labels the bounding boxes of objects in pictures.[9]
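LabelImg can save its annotations as Pascal VOC XML files, one per image. The following minimal sketch reads the labelled boxes from such a file using only the Python standard library. The sample annotation (file name, label `hello`, coordinates) is invented for illustration, and `read_boxes` is a hypothetical helper, not part of LabelImg.

```python
import xml.etree.ElementTree as ET

# Hypothetical annotation for one webcam image, in Pascal VOC layout.
SAMPLE_XML = """
<annotation>
  <filename>hello_01.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>hello</name>
    <bndbox><xmin>120</xmin><ymin>80</ymin><xmax>360</xmax><ymax>400</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return (label, xmin, ymin, xmax, ymax) for each annotated object."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.iter("object"):
        box = obj.find("bndbox")
        # Corner coordinates are stored as pixel values in child elements.
        coords = [int(box.find(tag).text) for tag in ("xmin", "ymin", "xmax", "ymax")]
        boxes.append((obj.find("name").text, *coords))
    return boxes


print(read_boxes(SAMPLE_XML))
```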

VI. MODEL ANALYSIS AND RESULT:

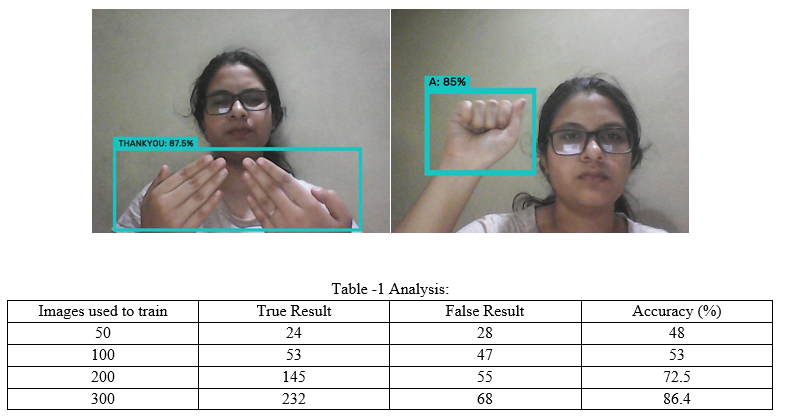

The model was trained using transfer learning, starting from a pre-trained SSD MobileNet V2 model.
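Training with the TensorFlow Object Detection API requires a label map that assigns each class name an integer id starting at 1. The sketch below generates such a label map for the 36 finger-spelling classes (a-z and 0-9). The class order and the helper name `build_label_map` are assumptions; the paper does not list its label map, and its additional gesture classes are omitted here.

```python
import string


def build_label_map(names):
    """Render class names as label-map text, numbering ids from 1."""
    items = []
    for class_id, name in enumerate(names, start=1):
        items.append(f"item {{\n  name: '{name}'\n  id: {class_id}\n}}\n")
    return "\n".join(items)


# 26 letters followed by 10 digits gives the 36 finger-spelling classes.
names = list(string.ascii_lowercase) + list(string.digits)
label_map = build_label_map(names)
print(label_map[:40])
```

The resulting text would typically be written to a `.pbtxt` file that the training configuration points to.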

A. Transfer Learning

Transfer learning is a machine learning technique where a model trained on one task is re-purposed on a second related task. Transfer learning is an optimization that allows rapid progress or improved performance when modeling the second task. Transfer learning is related to problems such as multi-task learning and concept drift and is not exclusively an area of study for deep learning.

In practice, transfer learning means taking a model that has already been trained on one dataset and using it as the starting point for training and prediction on a new dataset.[16]
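The core idea can be shown with a deliberately tiny, dependency-free sketch: a "pre-trained" feature extractor is kept frozen, and only a small new output layer is trained on the new data. Everything here is a toy stand-in chosen for illustration (the fixed weights, the four training points, the learning rate); it is not the authors' training procedure, which fine-tunes SSD MobileNet V2.

```python
import math

# Stand-in for a pre-trained backbone: these weights are never updated.
BASE_WEIGHTS = [[1.0, -1.0], [-1.0, 1.0]]


def base_features(x):
    """Frozen feature extractor: ReLU(W x) with fixed W."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in BASE_WEIGHTS]


def predict(head, x):
    """Probability of class 1 from the trainable head on top of frozen features."""
    weights, bias = head
    z = sum(w * f for w, f in zip(weights, base_features(x))) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, epochs=200, lr=0.5):
    """Fit only the new head with per-sample gradient descent on the log loss."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in samples:
            error = predict((weights, bias), x) - y
            feats = base_features(x)
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias


# New task: label 1 when the first input is larger than the second.
samples = [([2.0, 0.0], 1), ([3.0, 1.0], 1), ([0.0, 2.0], 0), ([1.0, 3.0], 0)]
head = train_head(samples)
print(predict(head, [5.0, 1.0]) > 0.5, predict(head, [0.0, 4.0]) > 0.5)
```

Because the backbone is reused as-is, only three numbers (two head weights and a bias) have to be learned, which is why transfer learning can work with a small dataset.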

B. SSD MobileNet V2

The MobileNet SSD model is a single-shot multibox detection (SSD) network that performs object detection by predicting bounding box coordinates and class probabilities in a single pass over the image. In contrast to standard residual models, the MobileNet V2 architecture is built on an inverted residual structure, in which the residual block's input and output are narrow bottleneck layers. In addition, nonlinearities in the narrow layers are removed, and lightweight depthwise convolutions are applied in the intermediate expansion layer. The TensorFlow Object Detection API includes this model.
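To see why depthwise convolution counts as lightweight, it helps to count weights. The sketch below compares a standard 3x3 convolution with a depthwise 3x3 convolution on the same number of channels, and totals the weights of one inverted residual block (1x1 expansion, depthwise 3x3, 1x1 projection). The channel sizes and the expansion factor of 6 are illustrative assumptions, and biases and batch-norm parameters are ignored.

```python
def standard_conv_weights(kernel, channels_in, channels_out):
    """Weights in a standard convolution: every output channel sees every input channel."""
    return kernel * kernel * channels_in * channels_out


def depthwise_conv_weights(kernel, channels):
    """Weights in a depthwise convolution: one kernel per channel, no channel mixing."""
    return kernel * kernel * channels


def inverted_residual_weights(channels, expansion, kernel=3):
    """Weights in one inverted residual block with equal input and output width."""
    wide = channels * expansion
    expand = standard_conv_weights(1, channels, wide)    # 1x1 expansion
    depthwise = depthwise_conv_weights(kernel, wide)     # depthwise on the wide layer
    project = standard_conv_weights(1, wide, channels)   # 1x1 linear projection
    return expand + depthwise + project


# Illustrative sizes: a 32-channel bottleneck expanded 6x to 192 channels.
print(standard_conv_weights(3, 192, 192))   # 331776
print(depthwise_conv_weights(3, 192))       # 1728
print(inverted_residual_weights(32, 6))     # 14016
```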

C. Pretrained Model Name

SSD_MOBILENET_V2_FPNLITE_320x320_COCO17_TPU-8

Speed (ms): 22

COCO mAP (mean average precision): 22.5

Output: Boxes

In general, accuracy and speed trade off against each other: faster models tend to be less accurate.

Precision = True Positives / (True Positives + False Positives)

A higher precision value means that more of the model's positive detections are correct.
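As a small worked example, precision for one class is the number of true positives divided by the sum of true positives and false positives. The sketch below computes it from made-up predicted and actual labels. It is a simplified per-class calculation on labels only; the COCO mAP figure additionally involves box-overlap thresholds and averaging, which this sketch does not attempt.

```python
def precision(predicted, actual, positive):
    """Precision for one class: TP / (TP + FP)."""
    pairs = list(zip(predicted, actual))
    true_pos = sum(1 for p, a in pairs if p == positive and a == positive)
    false_pos = sum(1 for p, a in pairs if p == positive and a != positive)
    if true_pos + false_pos == 0:
        return 0.0  # the class was never predicted
    return true_pos / (true_pos + false_pos)


# Made-up labels for five test images.
predicted = ["a", "a", "b", "a", "b"]
actual = ["a", "b", "b", "a", "a"]
print(precision(predicted, actual, "a"))
print(precision(predicted, actual, "b"))
```

For class "a" there are two correct predictions out of three, and for class "b" one out of two.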

VII. RESULT

VIII. APPLICATION AND FUTURE WORKS

A. Application

- The dataset can easily be extended and customized according to the needs of the user, which can be an important step towards reducing the communication gap for people who are deaf or unable to speak.

- Using the sign detection model, meetings held at a global level can become easier for people with disabilities to follow, and their contributions can be recognised.

- The model can be used by any person with basic technical knowledge and is thus available to everyone.

- The model can be introduced at elementary school level so that children can learn about sign language at a very young age.

B. Future Scope

- Implementing the model for other sign languages, such as Indian Sign Language or American Sign Language.

- Further training with a larger dataset to recognise symbols more efficiently.

- Improving the model's ability to identify expressions.

Conclusion

The fundamental goal of a sign language detection system is to provide a practical mechanism for hearing and deaf individuals to communicate through hand gestures. The proposed system is used with a webcam or any other built-in camera that captures and processes signs for recognition. From the model's results, we can conclude that the proposed system produces reliable results under controlled light and intensity. Furthermore, new gestures can easily be incorporated, and more images captured from various angles and frames will give the model greater accuracy. By expanding the dataset, the model can therefore be scaled up to a much larger size. The model has some limitations: environmental conditions such as low light intensity and an uncontrolled background reduce detection accuracy. We will attempt to address these problems and expand the dataset for more accurate results.

References

[1] Jamie Berke, James Lacy, March 01, 2021, "Hearing loss/deafness | Sign Language", https://www.verywellhealth.com/sign-language-nonverbal-users-1046848

[2] "National Health Mission - report of deaf people in India", nhm.gov.in, 21-12-2021.

[3] "Computer Vision", https://www.ibm.com/in-en/topics/computer-vision

[4] Stephanie Thurrott, November 22, 2021, "The Best Ways to Communicate with Someone Who Doesn't Hear Well"

[5] https://www.forbes.com/sites/bernardmarr/2019/04/08/7-amazing-examples-of-computer-and-machine-vision-in-practice/?sh=60a27b1b1018

[6] Juhi Ekbote, M. Joshi, "Indian sign language recognition using ANN and SVM classifiers", 2017 International Conference on Innovations in Information, Embedded and Communication Systems (ICIIECS), published 1 March 2017, DOI: 10.1109/ICIIECS.2017.8276111, Corpus ID: 24740741

[7] Great Learning Team, "Real-Time Object Detection Using TensorFlow", December 25, 2021, https://www.mygreatlearning.com/blog/object-detection-using-tensorflow/

[8] Jeffrey Dean, minute 0:47 / 2:17 from YouTube clip "TensorFlow: Open source machine learning", Google, 2015. Archived from the original on November 11, 2021. "It is machine learning software being used for various kinds of perceptual and language understanding tasks"

[9] Joseph Nelson, "LabelImg for Labeling Object Detection Data", March 16, 2020, https://blog.roboflow.com/labelimg

[10] Tzutalin, "LabelImg", January 5, https://github.com/tzutalin/labelImg

[11] Utpreksha Chipkar, Dreamstime.com, ID 168705246, Hand Gestures Image, https://www.dreamstime.com/vector-illustrations-hand-gestures-their-meanings-vector-hand-gestures-meanings-image168705246

[12] Shirin Tikoo, "Develop Sign Language Translator with Python", Computer Vision, Skyfi Labs, published 2019-11-26, last updated 2022-05-14, https://www.skyfilabs.com/project-ideas/sign-language-translator-using-python

[13] Ramswarup Kulhary, "OpenCV - Python", updated 05 August 2021, https://www.geeksforgeeks.org/opencv-overview/

[14] Saurabh Pal, March 25, 2019, "16 OpenCV Functions to Start your Computer Vision journey"

[15] "About Python", Python Software Foundation. Archived from the original on 20 April 2012. Retrieved 24 April 2012. Rossum, Guido Van (20 January 2009).

[16] "The History of Python: A Brief Timeline of Python", The History of Python. Archived from the original on 5 June 2020.

[17] Jason Brownlee, "A Gentle Introduction to Transfer Learning for Deep Learning", December 20, 2017, in Deep Learning for Computer Vision, updated September 16, 2019, machinelearningmastery.com

[18] "transfer learning", tensorflow.org, https://www.tensorflow.org/tutorials/images/transfer_learning

Copyright

Copyright © 2022 Prashant Verma, Khushboo Badli. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET43439

Publish Date : 2022-05-27

ISSN : 2321-9653

Publisher Name : IJRASET
