Ijraset Journal For Research in Applied Science and Engineering Technology


Revolutionizing Alzheimer’s Disease Diagnosis using Deep Learning Approach

Authors: Arsah A, Karolin Kiruba R, Kishan I, Neekitha C, Padmapriya M

DOI Link: https://doi.org/10.22214/ijraset.2023.51715


Abstract

Brain disorders are among the most difficult diseases to cure because of the brain's fragility, the difficulty of performing procedures, and the high costs involved. Moreover, surgery is not guaranteed to be effective, because its outcomes are uncertain. Adults with Alzheimer's disease, one of the most common brain illnesses, may have varying degrees of memory problems and forgetfulness, depending on each patient's situation. For these reasons, it is crucial to characterize memory loss and determine the patient's level of decline; brain MRI scans are used to identify Alzheimer's disease. In this project, we discuss methods and approaches for diagnosing Alzheimer's disease using deep learning. The suggested approach is intended to enhance patient care, lower expenses, and enable quick and accurate analysis in large-scale studies. Alzheimer's disease is a progressive neurodegenerative disorder that affects millions of people worldwide. Early detection of the disease can improve patient outcomes, and brain MRI scans have shown promise as a tool for detecting Alzheimer's disease in its early stages. In recent years, deep learning algorithms such as convolutional neural networks (CNNs) have been increasingly used to analyze brain MRI scans for Alzheimer's disease. This paper proposes a CNN-based system for Alzheimer's disease analysis from brain MRI scans. The proposed system involves several steps, including data preprocessing, feature extraction, training the CNN model, and evaluating its performance on a test set. The results demonstrate the effectiveness of the proposed CNN-based system in accurately detecting Alzheimer's disease from brain MRI scans. The proposed system has the potential to improve early detection and monitoring of Alzheimer's disease, leading to improved patient outcomes.


I. INTRODUCTION

Alzheimer’s disease (AD) is the most common neurodegenerative disease among older adults and affects around 46 million people worldwide. An early symptom of the disease is forgetting recent events or conversations. As the disease progresses, it causes severe memory impairment and loss of the ability to carry out everyday tasks. The damage begins in the region of the brain responsible for controlling memory, but the process starts years before the first symptom appears. Neuron loss then spreads to other regions, and eventually the brain shrinks significantly.

The disease has three stages: mild, moderate, and severe. The mild stage is the early stage; it includes problems finding the right word or name and losing or misplacing valuable objects. The moderate stage is the middle and longest stage; it includes forgetting details of one’s own personal history and confusion about where one is and what day it is. The severe stage is the late stage; it brings changes in physical abilities and difficulty communicating.

More than 4 million people in India suffer from Alzheimer's disease and other forms of dementia, the third-highest caseload in the world after China and the United States. India’s dementia and Alzheimer’s burden is forecast to reach almost 7.5 million by the end of 2030. Predicting the disease early can help reduce the death rate, and various methods are used to classify the disease.

The early symptoms of Alzheimer’s disease are memory loss, mood changes, poor judgement, social withdrawal, and changes in vision. This happens because Alzheimer's disease affects the hippocampus, which plays an important role in memory. Somewhere in the world, a new person develops dementia every 3 seconds. At first, the disease typically destroys neurons and their connections in parts of the brain involved in memory, including the entorhinal cortex and hippocampus.

Hence, the changes in the brain need to be studied and explored. In this context, MRI images are used as input, and a deep learning approach is used to study the variations shown by various factors in order to track the transition from Mild Cognitive Impairment (MCI) to AD. When changes in those factors become evident, they can be recognized and the necessary medication can be given.

II. EXISTING SYSTEM

Alzheimer's disease is a chronic neurodegenerative disease that affects millions of people worldwide. It is a progressive disease that impairs memory, cognitive abilities, and daily functioning, and eventually leads to death.

Due to the growing prevalence of Alzheimer's disease, there is a need for effective early detection and prediction systems to aid in early diagnosis and treatment. There are various existing systems for Alzheimer's disease prediction, each with its own advantages and disadvantages. One such system is the Alzheimer's Disease Neuroimaging Initiative (ADNI), a large-scale research effort that uses neuroimaging, genetics, and other biomarkers to identify early signs of Alzheimer's disease. The data collected by ADNI is used to develop predictive models for Alzheimer's disease. The most widely used algorithms are support vector machines (SVM) and random forests, applied to features extracted by an image preprocessing pipeline. Another approach is a residual U-Net model with a hybrid attention mechanism, which performs feature extraction at different scales of the input images and incorporates a hybrid attention module into the skip connections of the U-Net. A convolutional autoencoder has also been applied to PET images to perform multi-modal data fusion for disease prediction.

III. PROPOSED SYSTEM

The project uses a deep learning based method to extract features from the segmented region in order to detect and classify normal and abnormal brain MRI images from a large database. The proposed approach extracts texture and shape features of the brain region from the MRI scans, and a neural network is used as a multi-class classifier to detect the various stages of Alzheimer’s disease. The proposed approach is under implementation and is expected to give better accuracy than conventional approaches.
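The paragraph above does not say which texture or shape features are used. As an illustrative assumption only, the sketch below computes one simple shape feature (the area of a binary segmentation mask) and one classic texture feature (gray-level co-occurrence matrix, or GLCM, contrast for a horizontal pixel offset) with NumPy. The function names and the choice of these two features are hypothetical, not the authors' method.

```python
import numpy as np


def region_area(mask: np.ndarray) -> int:
    """Shape feature: number of pixels inside a binary segmentation mask."""
    return int(np.count_nonzero(mask))


def glcm_contrast(image: np.ndarray, levels: int) -> float:
    """Texture feature: GLCM contrast for the offset (0, 1), i.e. each pixel
    paired with its right-hand neighbour. `image` must hold integer gray
    levels in the range [0, levels)."""
    glcm = np.zeros((levels, levels), dtype=np.float64)
    left = image[:, :-1].ravel()
    right = image[:, 1:].ravel()
    # Count how often gray level i sits immediately left of gray level j.
    np.add.at(glcm, (left, right), 1)
    glcm /= glcm.sum()  # normalise counts to joint probabilities p(i, j)
    i, j = np.indices((levels, levels))
    return float(np.sum((i - j) ** 2 * glcm))


# Toy 4-level "scan" and a mask marking a hypothetical segmented region.
img = np.array([[0, 0, 1, 1],
                [0, 0, 1, 1],
                [0, 2, 2, 2],
                [2, 2, 3, 3]])
mask = img > 0
print(region_area(mask), round(glcm_contrast(img, levels=4), 4))  # 11 0.5833
```

In a real pipeline these values would be computed on the segmented brain region of each scan and concatenated into the feature vector passed to the classifier.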

The step-by-step process of the project is as follows:

- To collect and pre-process relevant data on demographic, lifestyle, and health-related factors from a variety of sources.

- To select appropriate machine learning algorithms for developing the Alzheimer's disease prediction model.

- To train and test the model using the collected data to evaluate its performance and accuracy.

- To optimize the model by fine-tuning the hyper-parameters and feature selection to improve its predictive capabilities.

- To deploy the model for practical use in clinical settings to aid in the early detection and prevention of Alzheimer's disease.

Overall, the project aims to leverage the power of machine learning to create a predictive model that can help healthcare professionals identify individuals at risk of developing Alzheimer's disease, leading to earlier intervention and improved health outcomes.
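The "train and test" step can be made concrete with a stratified split, which keeps the proportion of each class the same in the training and test sets; this matters when some disease stages have far fewer scans than others. Below is a minimal pure-Python sketch, assuming labels are given as a list; `stratified_split`, the 80/20 ratio, and the toy labels are illustrative choices, not taken from the paper.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) so that each class keeps roughly the
    same share in both sets. Indices refer to positions in `labels`."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)  # fixed seed -> reproducible split
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


labels = ["normal"] * 10 + ["mild"] * 5  # hypothetical, imbalanced labels
train, test = stratified_split(labels)
print(len(train), len(test))  # 12 3
```

Each class contributes 20% of its own samples to the test set (2 "normal", 1 "mild"), so the minority class is never left out of evaluation by chance.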

A. Modules Description

1. Image Acquisition - An MRI scan can be used to examine the state of the brain and to help predict abnormalities and cerebral activity. In this work, an Automatic Diagnostic Tool (ADT) was created to analyze MRI signal patterns and classify them as normal or Alzheimer's. MRI image data is entered in this module. The Kaggle dataset used here is a collection of MRI scans taken from young patients with untreatable Alzheimer's disease. After anti-Alzheimer medication was discontinued, subjects were observed for up to several days to characterize their data and determine whether they were candidates for surgical intervention.

2. Preprocessing - Data preprocessing, an integral step in the data mining process, is the transformation or removal of data before use in order to ensure or improve performance. "Garbage in, garbage out" is especially true for projects that rely on data and machine learning. Data collection techniques are often loosely controlled, which results in out-of-range values, impossible data combinations, missing information, and similar problems. Failing to check adequately for these problems can produce misleading results, so every analysis must be preceded by a review of the data's representation and quality. Data preparation often becomes the most critical part of a machine learning project, especially in computational biology. In the preprocessing stage, serial EEG recordings are first split by a rolling time window without overlap, and a wavelet transform is then applied to the EEG data to produce a set of signals.
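The windowing and wavelet steps described above can be sketched with NumPy. This assumes a 1-D signal, a user-chosen window length, and a single-level Haar wavelet; the paper does not name the wavelet family or the number of decomposition levels, so those are placeholder choices. A basic check for missing values (NaN) is included, in the spirit of the data-quality review mentioned above.

```python
import numpy as np


def split_windows(signal: np.ndarray, window: int) -> np.ndarray:
    """Split a 1-D signal into non-overlapping windows of `window` samples.
    Trailing samples that do not fill a whole window are dropped."""
    if np.isnan(signal).any():
        raise ValueError("signal contains missing values (NaN)")
    n_windows = len(signal) // window
    return signal[: n_windows * window].reshape(n_windows, window)


def haar_dwt(x: np.ndarray):
    """Single-level Haar wavelet transform along the last axis (even length).
    Returns (approximation, detail) coefficients."""
    even, odd = x[..., 0::2], x[..., 1::2]
    approx = (even + odd) / np.sqrt(2)  # low-pass: scaled pairwise sums
    detail = (even - odd) / np.sqrt(2)  # high-pass: scaled pairwise differences
    return approx, detail


signal = np.arange(10, dtype=float)        # toy recording: 0, 1, ..., 9
windows = split_windows(signal, window=4)  # samples 8 and 9 are dropped
approx, detail = haar_dwt(windows)
print(windows.shape, approx.shape)  # (2, 4) (2, 2)
```

Because the Haar transform is orthonormal, the total energy of the approximation and detail coefficients equals the energy of each window, so no information is lost at this step.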

3. Feature Extraction - In this module, temporal or frequency-domain features are extracted from the preprocessed data, including mean, variance, kurtosis, and skewness, for later use in classification. Automated feature extraction uses specialized algorithms or deep networks to extract features from signals or images without the need for human intervention. Feature extraction is part of the dimensionality reduction process, in which an initial set of raw data is divided and reduced to more manageable groups. It helps obtain the most informative features from large data sets by selecting and combining variables into features, effectively reducing the amount of data.
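The statistics listed above (mean, variance, skewness, kurtosis) can be computed per window as follows. This NumPy sketch uses population moment estimates and *excess* kurtosis (a normal distribution scores 0); other conventions, such as sample-corrected estimates or plain kurtosis, give slightly different numbers, and the paper does not say which one it uses.

```python
import numpy as np


def statistical_features(x: np.ndarray) -> dict:
    """Mean, variance, skewness and excess kurtosis of a 1-D array, using
    population (biased) moment estimates. Assumes non-constant input."""
    mean = x.mean()
    centered = x - mean
    var = np.mean(centered ** 2)
    if var == 0:
        raise ValueError("skewness/kurtosis undefined for constant input")
    std = np.sqrt(var)
    skewness = np.mean(centered ** 3) / std ** 3
    kurtosis = np.mean(centered ** 4) / std ** 4 - 3.0  # excess kurtosis
    return {"mean": mean, "variance": var,
            "skewness": skewness, "kurtosis": kurtosis}


window = np.array([1.0, 2.0, 3.0, 4.0, 10.0])  # toy window with one outlier
feats = statistical_features(window)
print({k: round(float(v), 3) for k, v in feats.items()})
```

The outlier (10.0) produces a clearly positive skewness, which is the kind of shape information these features add beyond the mean and variance.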

4. Model Training - To diagnose Alzheimer's disease from MRI scans, a series of operations is applied to the outputs of a trained deep CNN to obtain a prediction for each test MRI image. A CNN consists of an input layer, an output layer, and multiple hidden layers, which typically include convolution, pooling, and fully connected layers. The output of each convolutional layer is passed on as the input of the next layer. Convolution mimics the response of a single neuron to visual stimuli.
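To make the convolution step concrete, here is a minimal NumPy sketch of a single-channel "valid" convolution (no padding, stride 1). It is illustrative only: real frameworks use vectorised, multi-channel implementations, and the edge-detecting kernel below is a hand-picked example rather than a learned filter.

```python
import numpy as np


def conv2d_valid(image: np.ndarray, kernel: np.ndarray, bias: float = 0.0) -> np.ndarray:
    """'Valid' 2-D convolution as used in CNN layers (technically
    cross-correlation: the kernel is not flipped). The same kernel weights
    are reused at every position, which is CNN parameter sharing."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            # Weighted sum of the receptive field currently under the kernel.
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel) + bias
    return out


image = np.array([[1, 2, 3, 0],
                  [4, 5, 6, 1],
                  [7, 8, 9, 2],
                  [1, 0, 1, 3]], dtype=float)
edge = np.array([[1, 0, -1]] * 3, dtype=float)  # vertical-edge detector
print(conv2d_valid(image, edge))  # [[-6. 12.] [-4.  7.]] as a 2x2 array
```

A 3 × 3 kernel has only 9 weights plus one bias no matter how large the image is, which is why convolutional layers need far fewer parameters than fully connected ones.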

In convolutional networks, local or global pooling layers combine the outputs of a cluster of neurons at one layer into a single neuron in the next layer. In mean pooling, the average value of each cluster of neurons in the previous layer is used. In fully connected layers, every neuron in one layer is connected to every neuron in the next layer; such a layer is conceptually the same as a classic multi-layer feed-forward neural network. For high-dimensional data such as images, CNNs generally outperform traditional classifiers. Convolutional layers use a parameter-sharing strategy to control and reduce the total number of parameters. Pooling layers progressively reduce the spatial size of the representation, and with it the number of parameters and the amount of computation in the network, which also helps control overfitting.

Setting up the CNN Model:

```text
function INITCNNMODEL(…, […–5])
    layerType1 = [max-pooling, fully-connected, fully-connected, convolution]
    layerActivation1 = [tanh(), max(), tanh(), softmax()]
    model = new Model()
    for i = 1 to 4 do
        layer = new Layer()
        layer.type = layerType1[i]
        layer.activation = layerActivation1[i]
        layer.inputSize = …
        layer.neurons = new Neuron[… + 1]
        layer.params = …
        model.addLayer(layer)
    end for
    return model
end function
```
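The pseudocode lists a max() step and the text above describes mean pooling. Both can be sketched in NumPy as non-overlapping 2 × 2 pooling with stride 2 (the same configuration VGG16 uses); `pool2d` is a hypothetical helper for illustration, not part of any library.

```python
import numpy as np


def pool2d(x: np.ndarray, size: int = 2, mode: str = "max") -> np.ndarray:
    """Non-overlapping pooling with a size x size window and stride = size.
    Rows/columns that do not fill a whole window are dropped."""
    h, w = x.shape[0] // size, x.shape[1] // size
    # Reshape so that each pooling window becomes its own (size, size) block.
    blocks = x[: h * size, : w * size].reshape(h, size, w, size)
    if mode == "max":
        return blocks.max(axis=(1, 3))
    if mode == "mean":
        return blocks.mean(axis=(1, 3))
    raise ValueError(f"unknown pooling mode: {mode}")


fmap = np.array([[1, 3, 2, 0],
                 [5, 7, 1, 1],
                 [0, 2, 4, 6],
                 [2, 0, 8, 2]], dtype=float)
print(pool2d(fmap, mode="max"))   # max of each 2x2 block: 7, 2, 2, 8
print(pool2d(fmap, mode="mean"))  # mean of each 2x2 block: 4, 1, 1, 5
```

Either way, a 4 × 4 feature map becomes 2 × 2, which is the dimensionality reduction the pooling layer is responsible for.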

5. Classification - In the testing phase, the user inputs a brain MRI image; its features are extracted and matched against the trained model file to identify the disease, and diagnosis details are then provided based on the predicted disease.

6. Algorithm Details - The ImageNet Large-Scale Visual Recognition Challenge (ILSVRC) was an annual competition for evaluating computer vision models. In the 2014 challenge, Karen Simonyan and Andrew Zisserman of the Visual Geometry Group, Department of Engineering Science, University of Oxford, presented their model in the paper titled “Very Deep Convolutional Networks for Large-Scale Image Recognition”; their team secured first place in the localization track and second place in the classification track.

A convolutional neural network, also known as a ConvNet, is a kind of artificial neural network with an input layer, an output layer, and various hidden layers. VGG16 is a CNN (Convolutional Neural Network) architecture that is widely regarded as one of the best-known computer vision models. Its creators evaluated networks of increasing depth built from very small (3 × 3) convolution filters and showed a significant improvement over prior-art configurations. They pushed the depth to 16–19 weight layers; VGG16 has approximately 138 million trainable parameters.

VGG16 is an image classification model that assigns images to 1,000 categories and reaches 92.7% top-5 accuracy on ImageNet. It is a popular choice for image classification and is easy to use with transfer learning.

The 16 in VGG16 refers to the 16 layers that have weights. VGG16 has thirteen convolutional layers, five max-pooling layers, and three dense layers, 21 layers in total, but only sixteen of them are weight layers, i.e., layers with learnable parameters.

VGG16 takes an input tensor of size 224 × 224 with 3 RGB channels. Its most distinctive feature is that, instead of relying on a large number of hyper-parameters, it consistently uses 3 × 3 convolution filters with stride 1 and same padding, and 2 × 2 max-pooling layers with stride 2.

The convolution and max-pooling layers are arranged consistently throughout the architecture: the Conv-1 block has 64 filters, Conv-2 has 128, Conv-3 has 256, and Conv-4 and Conv-5 have 512 each.

Three Fully-Connected (FC) layers follow a stack of convolutional layers: the first two have 4096 channels each, the third performs 1000-way ILSVRC classification and thus contains 1000 channels (one for each class). The final layer is the soft-max layer.
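The parameter count quoted for VGG16 can be checked with a short calculation. The sketch below walks the standard VGG16 layer configuration (13 convolutional layers in five blocks, five max-pooling layers, three fully connected layers), tracks the spatial size as each 2 × 2 pooling halves it, and adds up weights and biases. It reproduces the commonly quoted total of 138,357,544 parameters for a 224 × 224 × 3 input and 1,000 classes.

```python
# VGG16 layer configuration: number of 3x3 filters per conv layer, "M" = 2x2 max-pool.
VGG16_CFG = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
             512, 512, 512, "M", 512, 512, 512, "M"]


def vgg16_param_count(in_channels=3, image_size=224, num_classes=1000):
    """Count trainable weights + biases in VGG16 (13 conv + 3 FC layers)."""
    total, channels, size = 0, in_channels, image_size
    for layer in VGG16_CFG:
        if layer == "M":
            size //= 2  # 2x2 max-pool, stride 2: halves height and width
        else:
            total += 3 * 3 * channels * layer + layer  # 3x3 kernels + biases
            channels = layer  # 'same' padding keeps the spatial size
    # After five pools a 224x224 input is 7x7x512 = 25,088 values.
    fc_sizes = [channels * size * size, 4096, 4096, num_classes]
    for n_in, n_out in zip(fc_sizes, fc_sizes[1:]):
        total += n_in * n_out + n_out  # dense weights + biases
    return total


print(vgg16_param_count())  # 138357544
```

About 89% of these parameters sit in the three fully connected layers (the first alone holds 25,088 × 4,096 weights), so replacing that head with a smaller classifier, as is common when fine-tuning VGG16 on a new dataset with fewer classes, removes most of the model's parameters.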

The VGG16 architecture has become a popular choice for many computer vision applications because of its strong performance on the ImageNet dataset and its relative simplicity compared with other CNN architectures such as ResNet and Inception. Although it was originally developed for image classification, it has since been adapted for other tasks such as object detection and semantic segmentation. For example, the fully connected layers can be removed and replaced with convolutional layers to create a fully convolutional network for semantic segmentation.

Constructing the VGG16 framework involves defining the architecture of the neural network using appropriate libraries and tools. Here are the general steps for constructing the VGG16 framework:

Import the necessary libraries such as TensorFlow, Keras, or PyTorch, depending on preference.

Define the input layer of the network, which should have the same dimensions as the input images. For example, for the ImageNet dataset, the input layer should be 224x224 pixels with 3 color channels (RGB).

Define the convolutional layers of the network using multiple 3x3 filters with a stride of 1 and padding to maintain the spatial dimensions of the feature maps. Use the appropriate activation function, such as ReLU.

Train the model on a large dataset such as ImageNet, using techniques such as data augmentation and early stopping to improve generalization and prevent overfitting. Evaluate the model on a separate test dataset to assess its performance and compare it to other models. It's important to note that the exact implementation details of the VGG16 architecture may vary depending on the specific library or tool you are using. However, the general principles of defining the layers, specifying the activation functions and regularization techniques, and compiling and training the model should be consistent across implementations.
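Early stopping, mentioned above as a guard against overfitting, amounts to monitoring the validation loss and halting once it stops improving. Keras ships an `EarlyStopping` callback for this; the framework-independent Python sketch below only illustrates the logic, and its class name, default `patience`, and the loss values are illustrative assumptions.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved by at least
    `min_delta` for `patience` consecutive epochs."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch: int, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True to stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch  # in practice, checkpoint the weights here
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation losses; the best value occurs at epoch 3 (0-based).
losses = [0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.57, 0.54]
stopper = EarlyStopping(patience=3)
for epoch, loss in enumerate(losses):
    if stopper.step(epoch, loss):
        break
print(epoch, stopper.best_epoch)  # 6 3
```

Training stops at epoch 6 after three epochs without improvement, even though a later epoch would have been slightly better; a larger `patience` trades extra training time for tolerance of such noisy plateaus.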

Overall, training a CNN for Alzheimer's disease prediction is a complex process that requires careful attention to data preprocessing, model architecture, and hyper-parameter tuning. However, with sufficient data and expertise, CNNs can be powerful tools for improving disease management. A CNN has multiple layers that process the image and extract its important features, and it works in four main steps.

A convolutional neural network is a multilayer perceptron specially designed to recognize two-dimensional image information.

- It always has several types of layers: an input layer, convolution layers, sampling (pooling) layers, and an output layer.

- In a deep network architecture, there can be multiple convolution and sampling layers.

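Step 1: Convolution Operation

Small learnable filters slide over the input image, and each filter produces a feature map that responds to a particular local pattern.

Step 1(b): ReLU Layer

The rectified linear unit, ReLU(x) = max(0, x), is applied to each feature map, replacing negative values with zero to introduce non-linearity.

Step 2: Pooling

Pooling is a down-sampling operation that reduces dimensions and computation. It also reduces overfitting because there are fewer parameters, and it makes the model more tolerant of variation and distortion.

Step 3: Flattening

Flattening converts the pooled feature maps into a one-dimensional vector before further processing.

Step 4: Fully Connected Layer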

A fully connected layer is formed when the flattened output is fed into a neural network, which further classifies the image. By implementing a multiclass classifier in this way, the disease class of a brain MRI image can be predicted.
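Steps 3 and 4 can be sketched in NumPy: the pooled (ReLU-activated) feature maps are flattened, passed through one fully connected layer, and turned into class probabilities with softmax for multiclass prediction. The random weights, the 8 × 7 × 7 feature-map shape, and the choice of four classes are placeholders for illustration; in a trained network the weights are learned, and the paper does not specify these sizes.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    """Numerically stable softmax: subtracting the max does not change the result."""
    e = np.exp(z - z.max())
    return e / e.sum()


def classify(feature_maps: np.ndarray, weights: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """Flatten the pooled feature maps into a 1-D vector (Step 3), apply one
    fully connected layer (Step 4), and convert the scores to probabilities."""
    x = feature_maps.reshape(-1)   # flattening
    scores = weights @ x + bias    # fully connected layer
    return softmax(scores)


rng = np.random.default_rng(0)
pooled = np.maximum(rng.standard_normal((8, 7, 7)), 0)  # ReLU-ed maps, 8 channels
n_classes = 4  # hypothetical: e.g. normal plus three disease stages
W = rng.standard_normal((n_classes, pooled.size)) * 0.01
b = np.zeros(n_classes)
probs = classify(pooled, W, b)
print(probs.round(3), "predicted class:", int(probs.argmax()))
```

The predicted class is simply the index with the highest probability; mapping that index back to a stage name is what the classification module reports to the user.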

IV. IMPLEMENTATION

For this process, the dataset must first be loaded into the system. The convolutional neural network algorithm is then used to develop the disease prediction model. The model is trained and tested on the collected data to measure its accuracy and performance. The output of this process indicates whether the uploaded image is affected by Alzheimer’s disease and also classifies the type of Alzheimer’s disease.
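Measuring accuracy and performance on the test set usually means computing overall accuracy together with a confusion matrix, which shows which classes are confused with each other. Below is a small pure-Python sketch with made-up labels; in practice, scikit-learn's `accuracy_score` and `confusion_matrix` serve the same purpose.

```python
from collections import Counter


def evaluate(y_true, y_pred):
    """Return overall accuracy and a confusion matrix stored as a Counter
    keyed by (true_label, predicted_label)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    confusion = Counter(zip(y_true, y_pred))
    correct = sum(n for (t, p), n in confusion.items() if t == p)
    return correct / len(y_true), confusion


# Hypothetical test labels and model predictions.
y_true = ["normal", "normal", "mild", "moderate", "mild", "normal"]
y_pred = ["normal", "mild", "mild", "moderate", "normal", "normal"]
acc, cm = evaluate(y_true, y_pred)
print(f"accuracy = {acc:.3f}")   # accuracy = 0.667
print(cm[("normal", "mild")])    # 1: one normal scan predicted as mild
```

Accuracy alone hides which errors occur; the confusion matrix shows, for example, whether mild cases are being missed as normal, which matters more clinically than the reverse.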

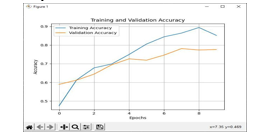

V. EXPERIMENTAL RESULTS

After training has run for the chosen number of epochs, the output is obtained and attached. Once training is complete, the system can be tested.

Conclusion

In conclusion, brain MRI analysis using deep learning techniques has shown great potential for the early detection and prediction of Alzheimer's disease. With the increasing prevalence of the disease worldwide, there is a growing need for more effective and efficient screening methods. Machine learning-based approaches can not only improve the accuracy and speed of diagnosis but also reduce the burden on healthcare systems and improve patient outcomes. The proposed CNN-based system for classifying brain MRI scans by stage of Alzheimer's disease can help healthcare providers make more informed decisions and plan personalized treatment. The combination of deep learning algorithms and brain imaging has the potential to transform the way Alzheimer's disease is diagnosed and managed, leading to better patient outcomes and a reduction in the overall healthcare burden. With deep learning algorithms, medical professionals can process complex MRI scans more efficiently, which can result in faster and more accurate diagnoses. Moreover, these approaches can help overcome the challenges associated with subjective interpretation of medical images: human error and inter-observer variability can lead to inconsistent interpretations, which can significantly affect patient outcomes. By leveraging machine learning algorithms, more objective and standardized results can be obtained, helping to improve the quality of care.


Copyright

Copyright © 2023 Arsah A, Karolin Kiruba R, Kishan I, Neekitha C, Padmapriya M. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


Paper Id : IJRASET51715

Publish Date : 2023-05-06

ISSN : 2321-9653

Publisher Name : IJRASET
