Ijraset Journal For Research in Applied Science and Engineering Technology

Smart System for Visually Impaired People

Authors: Xenus Gonsalves, Royce Rumao, Kallis Fernandes, Greatson Lobo, Unik Lokhande

DOI Link: https://doi.org/10.22214/ijraset.2023.53574

Abstract

The main objective of the Smart System is to enable easier and less hindered movement for visually impaired people. People who are blind lead full lives with many responsibilities, but blindness makes it difficult for them to carry out their duties. Many blind people move around and carry out their tasks using a conventional stick. Traditional sticks, however, cannot detect or identify obstacles, which limits their usefulness: the user does not know what kind of object is in front of them, how large it is, or how far away it is. The proposed system is designed to make navigation easier for people who are blind or have low vision, so that they can complete their everyday tasks comfortably. The software approach suggested in this paper employs a camera device, which may be a phone, and an application to help blind and visually impaired persons recognise things in their environment, such as TVs, tables, cars, and people, which may help them avoid these objects. The system also determines the object's distance (measured in metres) and its position within the captured video feed (left, right, or centre). The device then alerts the user via sound from the smartphone.

I. INTRODUCTION

Visually impaired people lead normal lives and have their own methods of working and carrying out tasks, but poor infrastructure and societal issues create real difficulties for them. For someone who is visually impaired, especially someone who has lost their eyesight completely, navigating their surroundings is the most difficult task. Visually impaired people can walk freely in familiar places such as their home without help because they know where everything is. People who live with them or visit them must therefore take care not to move things without the visually impaired individual's permission or knowledge. Assistive technology for people with disabilities is seriously lacking, and we wanted to create something that benefits those who are visually impaired. We therefore created a mechanism that helps visually impaired people walk around with ease. An estimated 285 million people worldwide are visually impaired, and India is reported to account for almost 20% of them. They face navigational difficulties, especially when there is no one to support them, and they largely rely on someone else for even their most basic daily requirements and tasks. A technological solution is therefore badly needed. As one such attempt, we developed a machine learning system that enables visually impaired individuals to identify common everyday objects in real time using a video camera, generates voice alerts, and calculates the distance and position of each object. An obstacle detection mechanism can be implemented using the same technology.

II. LITERATURE SURVEY

The authors of [1] propose affordable smart eyewear for individuals who have lost their eyesight. With this technology, a visually impaired person can easily sense an obstacle in front of them, which can prevent accidents. The device is very inexpensive and was built from waste materials. It helps people who are visually challenged to become more independent. A SONAR sensor is used to detect obstacles: the obstacle's distance is passed to the Arduino, and the user is informed.

In [2], the authors propose a portable, lightweight transmission system to help blind people read signs. The goal is a straightforward, inexpensive device that is accessible to everyone and simple to use, and the system is designed to be simpler than existing ones.

The platform used in this work consists of a set of smart glasses that can read warning signs and issue cautionary messages to users.

In [3], smart glasses are used to help people with vision impairments overcome their travel challenges. The device uses an ultrasonic sensor and a microcontroller to detect obstacles and estimate their distance, and the information from the sensor is delivered to the visually impaired user through a headphone. The study introduces a single smart gadget that enables visually impaired persons to move around at any time while avoiding various hazards, both indoors and outdoors. The suggested gadget was reported to be more affordable and gentler to use.

[4] presents a system that uses the camera on a smartphone to help visually challenged people recall significant places. It allows visually impaired users to associate arbitrary voice memos with arbitrary spots. No special device is needed, and the system is user friendly.

[5] describes a wearable indoor navigation system that combines recognition of visual markers with ultrasonic obstacle perception to provide auditory assistance for people with visual impairments. The system uses visual markers to identify points of interest around the person, and the situational information is enriched with data collected in real time from sensors. A map with these points plotted on it is used to create a virtual trail that shows the distance and direction between nearby points. The visually impaired user wears glasses equipped with sensors such as an RGB camera, an ultrasonic sensor, a magnetometer, a gyroscope, and an accelerometer.

[6] describes an original application called Heat Watch that predicts heatstroke in real time and helps prevent it by making sure that users drink water and take breaks. The application estimates a person's core temperature using the sensors built into smartwatches and a thermal model. By applying existing activity recognition techniques to the acceleration sensors in a smartwatch, the authors also enabled the application to track users' water intake. The results showed that precision and recall increased significantly when some warning timing error was tolerated.

According to [7], heatstroke can seriously affect a person's body if they exercise in a hot environment. However, because they disregard crucial physiological indicators, runners are frequently unaware that a heatstroke is occurring. To address this issue, the paper assesses a runner's risk of heatstroke injury using a wearable heat stroke detection device (WHDD). The WHDD also employs filtering techniques to adjust the physiological parameters. To test the WHDD's efficacy and learn more about these physiological markers, several people were chosen to wear it throughout an exercise experiment. The experimental findings show that the WHDD can successfully identify high-risk heatstroke situations from runners' feedback about feeling uncomfortable.

[8] describes an intelligent assistive glasses system for the visually impaired, consisting of a wireless communication module, a high-definition camera, an infrared sensor, and an eyeglass frame on which the cartridge is mounted. The device is lightweight, portable, easy to use, and inexpensive.

[9] presents a device that uses KRVision glasses to alert users to people directly in front of them. The glasses incorporate a depth camera, a colour camera, and bone-conducting headphones that provide acoustic feedback. The colour camera feeds a real-time semantic deep learning system. The results also showed a low cognitive load, and the system works well in both indoor and outdoor environments.

In [10], smart glasses are designed to help visually impaired persons read and translate typed English text. The work seeks to encourage visually impaired students to finish their education despite their obstacles by developing a new way of reading text and facilitating their communication.

[11] describes glasses connected to Google Vision that inform visually impaired people about what is in front of them. The glasses use headphones with a built-in Bluetooth connection, and Google Cloud Vision is used to identify the object in front of the user.

In [12], wearable smart technology allows visually impaired people (VIPs) to travel autonomously through streets and public settings and to call for assistance. The main components of the system are a microcontroller board, a number of sensors, cellular connectivity and GPS modules, and a solar panel. The results also indicated that adding further sensors would enhance the system's functionality by enabling it to detect things like fire, water, holes, stairs, and items at eye level.

In [13], a prototype device makes it easier for a visually impaired person to move around by alerting them to surrounding obstacles and assisting them with daily chores. The assistance is given as voice instructions delivered via the headset and is based on the current situation in both indoor and outdoor settings. The final system is a stick, which makes it a bulky product to handle.

[14] discusses the creation of a tool that aids the visually impaired and provides an efficient remedy. Visually impaired persons have difficulty moving around independently and finding their way. Using pre-recorded voice commands, the proposed model guides the visually impaired person and helps avoid accidental collisions with obstacles while also offering active feedback. The delay until the echo signal arrived was measured, and the distance to the obstacle was computed from it.

The goal of the project in [15] is to create a system that helps visually impaired individuals detect and recognise obstacles. It also calculates the distance to them. YOLOv2 is used to train the system. The output is a description of the image and the object's distance from the user. The results also showed that detection is not affected by smoke, light, breeze, etc. in the transmission medium.

In [16], the integrated framework consists of the "Smart-alert Walker" footwear, which has two ultrasonic sensors built in, and the "Smart-fold Cane", an intelligent folding cane. The user of the Smart-fold Cane can easily determine the distance to hazards and bodies of water along the path. During the experiments, the R-CNN and YOLO Tiny models produced intermediate results with mean accuracies of 92.98% and 91.35%, respectively.

In [17], when the capture button is pressed, the system takes a picture of the document in front of a camera connected to the Raspberry Pi via USB. When the process button is pressed, OCR is applied to the picture of the document. OCR converts any clear image of text and symbols into text and data that a computer programme can understand or modify.

In [18], a convolutional neural network is used to construct a high-resolution depth map from a single RGB image using transfer learning. Following a conventional encoder-decoder architecture, the authors initialise the encoder with features extracted by high-performing pre-trained networks, together with augmentation and training strategies that produce more accurate results. They show that even with a relatively simple decoder, this method can deliver detailed, high-resolution depth maps. On two datasets, the network outperforms the state of the art with fewer parameters and training iterations, and it produces qualitatively better results that capture object boundaries more accurately.

[19] presents a visual system for blind individuals based on recognising objects in video scenes and images. The system employs deep learning to recognise various objects under various conditions. Object detection is the process of identifying items in an image or video, and an object detection model can be created or used quickly with the TensorFlow Object Detection API. People who are visually impaired have very limited knowledge of their own velocity, nearby objects, and direction, all of which are crucial for mobility.

The IoT-enabled automated system in [20] recognises various common items in real-world indoor and outdoor settings and can help persons who are blind navigate safely. The approach might also be used to recognise current Bangladeshi banknotes. Objects are detected using laser sensors in the front, left, right, and ground directions. The system uses a Single Shot Detector to recognise objects and sends an audio signal over Bluetooth to inform the user, who wears headphones. The pretrained model is set up on a host machine using two manually created bespoke datasets. Overall, the system can recognise items of various shapes and sizes, although it can only distinguish between five types of objects. The reported accuracy of the system is 99.31% for object detection and 98.43% for recognition. The system can also alert others if a true free fall has taken place.

III. GAP IDENTIFIED

Better sensors could be used for more accurate results, and devices could be made more comfortable and more affordable. Hardware could be changed to reduce cost and improve user friendliness. Sensor-based systems could be developed to recognise obstacles and warn users about objects around them, and such systems could be made wireless and given a longer range. A traveller who is visually impaired needs several kinds of information:

- The presence, location, and ideally the type of barriers directly in front of the traveller. This relates to support for avoiding obstacles.

- Information about the surface or path the traveller is walking on, such as roughness, gradient, and upcoming stairs.

- The ability to "draw up a mental map, vision, or schema for the chosen route to be followed" from this information. This relates to what is usually referred to as "cognitive mapping" in blind people.

- The location and type of objects alongside the travel path, such as entrances, fences, and hedges.

- Information that aids users in "maintaining a straight course, particularly the presence of some type of aiming point in the distance", such as the sound of distant traffic.

- The location and identification of landmarks, including those that have already been observed.

Blind people who use smart blind sticks cannot detect concealed hazards like holes and staircases, which are very dangerous to them.

Many blind users would find vibration as output feedback extremely annoying. Arduino does not natively support a number of well-known languages, including Java, Python, and JavaScript. Python is not supported by the Arduino IDE, but open-source libraries such as pySerial can be used to communicate with the board from Python. A system can be built by combining multiple approaches so that it identifies the object in view and also calculates its distance and position relative to the user.

IV. PROBLEM STATEMENT

Visually impaired people face many challenges in life, and the biggest is navigating by themselves in areas that are unfamiliar to them. In fact, physically moving around is the greatest challenge for these people. The main difficulty for visually impaired individuals is travelling in crowded areas, where it is hard for them to decide whether to go left or right. To address these problems, we are creating a smart system that recognises nearby objects and calculates their distance and position relative to the user, making it easier for individuals with visual impairments to get around. The goal of this system is to enhance visually impaired people's capacity to navigate.

V. PROPOSED METHODOLOGY

Blind people face many problems in their day-to-day lives. The biggest challenge for a visually impaired person, especially one with complete loss of vision, is navigating around places. It is difficult for visually impaired people to identify the objects near them, and they have no way of knowing how far away those objects are.

VI. IMPLEMENTATION

A visually impaired person can use a camera or their phone to identify the object in front of them. The camera sends the video feed to the system, which identifies objects in it using TensorFlow. Models trained on the COCO dataset are used to identify objects; we used the 'SSD resnet inception' algorithm. The bounding boxes in the TensorFlow output are then used to calculate the distance and position of each object. This information is conveyed to the person through an audio device (e.g. earphones).

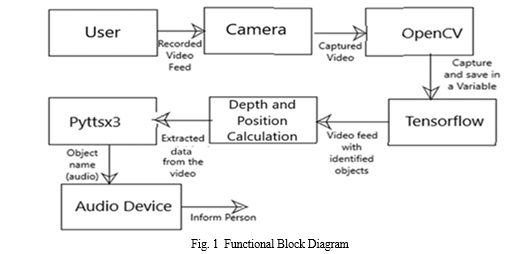

A. Functional Block Diagram

In Figure 2, the technologies stated above are used in the system as follows. After receiving input from the camera, OpenCV collects the video feed and stores it in a variable named 'cap'. OpenCV was developed to provide a common framework for computer vision applications and to accelerate the inclusion of machine perception in products. The 'cap' variable is used throughout the program for any operation on the video. The video feed is then passed to TensorFlow, an open-source framework for numerical computation that integrates with Python, accelerates the development of neural networks, and facilitates the development of machine learning algorithms. It plays a major role in the system.
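The capture step described above can be sketched as follows. This is a minimal illustration rather than the authors' code: the helper names `open_camera` and `frames` are hypothetical, and only `cv2.VideoCapture` and its `read()` method come from OpenCV. The generator accepts any object with a `read()` method that returns an `(ok, frame)` pair, so it can be exercised without a camera.

```python
from typing import Any, Iterator, Optional, Protocol, Tuple


class FrameSource(Protocol):
    """Anything shaped like cv2.VideoCapture: read() returns (ok, frame)."""

    def read(self) -> Tuple[bool, Any]: ...


def open_camera(index: int = 0):
    """Open a camera with OpenCV (hypothetical helper).

    The import is local so the rest of this sketch runs without cv2 installed.
    """
    import cv2  # provided by the opencv-python package

    return cv2.VideoCapture(index)


def frames(cap: FrameSource, max_frames: Optional[int] = None) -> Iterator[Any]:
    """Yield frames from `cap` until a read fails or `max_frames` is reached."""
    count = 0
    while max_frames is None or count < max_frames:
        ok, frame = cap.read()
        if not ok:  # camera disconnected or stream ended
            break
        yield frame
        count += 1
```

In use, `cap = open_camera()` would play the role of the 'cap' variable, each frame from `frames(cap)` would be passed to the detector, and `cap.release()` would be called when the loop ends.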

TensorFlow is the main component for object identification. It scans the received video to see whether any known objects are present. When an object is detected, TensorFlow draws a bounding box around it in the video feed. The bounding box carries two labels: the object name and the confidence ("surety"). For example, "Car" can be the object name and 95% the confidence, meaning the algorithm is 95% sure that the displayed object is a car. These bounding boxes are then used to estimate the object's depth and position using the techniques described in Section E. Finally, this data is passed to pyttsx3 to generate speech. To obtain a reference to a pyttsx3 engine instance, an application calls the factory function pyttsx3.init(). The default pyttsx3 voice speaks a message built from the data received. It tells the user the object name, its distance from the camera, and its position through any connected audio device, for example "Car detected at 2 meters in the center".
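A small sketch of how the spoken alert might be assembled is given below. It is illustrative only: the function names are hypothetical, and splitting the frame into equal thirds to decide left, center, or right is an assumption, since the paper does not state its thresholds. The `speak` helper uses only the documented pyttsx3 calls `init()`, `say()`, and `runAndWait()`.

```python
def horizontal_position(xmin: float, xmax: float) -> str:
    """Classify a box as left/center/right from its normalized centre x (0..1).

    Assumption: the frame is split into three equal vertical bands.
    """
    mid_x = (xmin + xmax) / 2.0
    if mid_x < 1.0 / 3.0:
        return "left"
    if mid_x > 2.0 / 3.0:
        return "right"
    return "center"


def alert_message(label: str, distance_m: float, position: str) -> str:
    """Build the sentence to be spoken for one detected object."""
    where = "in the center" if position == "center" else f"on the {position}"
    return f"{label.capitalize()} detected at {distance_m:g} meters {where}"


def speak(text: str) -> None:
    """Speak `text` with the default pyttsx3 voice (needs pyttsx3 installed)."""
    import pyttsx3

    engine = pyttsx3.init()
    engine.say(text)
    engine.runAndWait()
```

For a car whose box spans the middle of the frame at an estimated 2 m, `alert_message("car", 2, horizontal_position(0.4, 0.6))` produces the example sentence quoted in the text, which can then be handed to `speak`.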

B. SSD Algorithm

We used the SSD Inception algorithm for object detection. We first tried the SSD MobileNet algorithm because it is much faster at detection, but it sacrificed accuracy for that speed. We therefore switched to SSD Inception to balance the speed and accuracy required for our purpose.

SSD is made up of an SSD head and a backbone model.

- The backbone model is a pre-trained image classification network used as a feature extractor. This is typically a network such as ResNet trained on ImageNet, with the final fully connected classification layer removed.[13]

- The SSD head is one or more convolutional layers added to the backbone. Its outputs are interpreted as the classes and bounding boxes of objects at the spatial locations of the final layer's activations.[13]

- What remains is a deep neural network that can extract the semantic content of the input image while preserving its spatial structure, albeit at a lower resolution.[13]

Bounding Boxes:

The bounding box regression equations express each predicted bounding box relative to an anchor (default) box: the centre is predicted as an offset from the anchor centre, and the width and height are predicted on a logarithmic scale so that objects of different sizes are handled uniformly. These predicted coordinates are then used to calculate the localization loss during training, which helps the SSD algorithm learn to localize objects accurately in images.
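As an illustration of this parameterisation, the sketch below encodes a ground-truth box relative to an anchor box and decodes it again, following the centre-offset and log-scale form used in the original SSD formulation. It is a simplified sketch under stated assumptions: boxes are `(cx, cy, w, h)` tuples, the function names are hypothetical, and the variance scaling factors that many implementations apply are omitted. The smooth L1 function is the per-coordinate term used in the localization loss.

```python
import math
from typing import Tuple

Box = Tuple[float, float, float, float]  # (cx, cy, w, h)


def encode(gt: Box, anchor: Box) -> Box:
    """Express a ground-truth box as regression targets relative to an anchor."""
    gcx, gcy, gw, gh = gt
    acx, acy, aw, ah = anchor
    return (
        (gcx - acx) / aw,   # centre offsets, scaled by anchor size
        (gcy - acy) / ah,
        math.log(gw / aw),  # log-scale width and height
        math.log(gh / ah),
    )


def decode(offsets: Box, anchor: Box) -> Box:
    """Invert `encode`: turn predicted offsets back into a (cx, cy, w, h) box."""
    tx, ty, tw, th = offsets
    acx, acy, aw, ah = anchor
    return (acx + tx * aw, acy + ty * ah, aw * math.exp(tw), ah * math.exp(th))


def smooth_l1(x: float) -> float:
    """Smooth L1 penalty applied to each coordinate difference in the loss."""
    ax = abs(x)
    return 0.5 * x * x if ax < 1.0 else ax - 0.5
```

During training, the localization loss sums `smooth_l1` over the differences between the predicted offsets and the `encode` targets for each matched anchor. At inference, `decode` recovers the box that is drawn on the video feed.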

C. Tensorflow Object Detection

TensorFlow is a widely used technology in artificial intelligence, machine learning, and deep learning. It makes complex techniques such as object detection easier to implement, and it supports algorithms such as YOLO and R-CNN for object detection [12].
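The sketch below shows one way the detector output might be post-processed before distance and position are computed. It assumes the layout returned by TensorFlow Object Detection API models, where boxes are normalized `[ymin, xmin, ymax, xmax]` values accompanied by class ids and scores. The `Detection` type, the `filter_detections` helper, the 0.5 threshold, and the two-entry label map are hypothetical choices made for illustration.

```python
from typing import Dict, List, NamedTuple, Sequence, Tuple


class Detection(NamedTuple):
    label: str
    score: float
    box: Tuple[float, ...]  # (ymin, xmin, ymax, xmax), normalized to 0..1


def filter_detections(
    boxes: Sequence[Sequence[float]],
    class_ids: Sequence[int],
    scores: Sequence[float],
    id_to_label: Dict[int, str],
    min_score: float = 0.5,  # assumed threshold, tune per application
) -> List[Detection]:
    """Keep detections whose confidence is at least `min_score`."""
    kept = []
    for box, class_id, score in zip(boxes, class_ids, scores):
        if score < min_score:
            continue
        label = id_to_label.get(int(class_id), "unknown")
        kept.append(Detection(label, float(score), tuple(box)))
    return kept


# Tiny excerpt of a COCO-style label map, for illustration only.
LABELS = {1: "person", 3: "car"}
```

A call such as `filter_detections(boxes, class_ids, scores, LABELS)` returns only the confident detections, each carrying the object name and confidence described in the text.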

D. COCO Datasets

The MS COCO (Microsoft Common Objects in Context) dataset is a large-scale object detection, segmentation, and captioning dataset. It contains more than 328K images.

Splits: The MS COCO dataset was first released in 2014. It contained about 164,000 images, split into roughly 83,000 training, 41,000 validation, and 41,000 test images. In 2015 an additional test set of 81,000 images was released, comprising all of the previous test images and 40,000 new images [11].

E. Object Distance Calculation

Estimating object distance from a 2-D image is difficult because the image is only a grid of coloured pixels and carries no direct depth information. Software techniques are therefore used to approximate depth.

In our case, objects are detected first. When an object is detected, a bounding box is drawn around it. These boxes are four-sided and touch the edges of the detected object. The farther an object is from the camera, the smaller it appears, and its bounding box shrinks with it. This is how we estimate the depth of the object. For example, if a mouse takes up more than half of the screen, its bounding box will be huge.

The amount of horizontal space (along the X-axis) taken up by an object or its bounding box indicates the distance. So if the mouse appears very large, it is safe to say that it is very close to the camera.
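The paper does not give the exact formula used, so the sketch below substitutes a standard pinhole-camera approximation to show how bounding box width can be turned into a distance. It assumes that the real-world width of each object class is roughly known and that the camera's focal length in pixels has been calibrated once from a reference image. The function names and the example numbers are hypothetical.

```python
def focal_length_from_reference(
    ref_box_width_px: float, ref_distance_m: float, known_width_m: float
) -> float:
    """Calibrate focal length (pixels) from one object at a known distance."""
    return ref_box_width_px * ref_distance_m / known_width_m


def estimate_distance_m(
    box_width_px: float, known_width_m: float, focal_length_px: float
) -> float:
    """Pinhole approximation: distance shrinks as the box gets wider."""
    if box_width_px <= 0:
        raise ValueError("bounding box width must be positive")
    return known_width_m * focal_length_px / box_width_px


# Illustrative calibration: a 1.8 m wide car spans 400 px when it is 2 m away.
FOCAL_PX = focal_length_from_reference(400.0, 2.0, 1.8)
```

With this calibration, a car whose box is 200 px wide is estimated to be about 4 m away, which matches the intuition in the text that a box half as wide corresponds to an object roughly twice as far. The estimate degrades when the object is partly occluded or viewed at an angle, because the box width then no longer reflects the assumed real width.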

Conclusion

The goal of this project is to create an application that helps blind and visually impaired people: a guiding application that can assist them in navigating a complex indoor environment safely and effectively. The devices employed in this system are commonly available, enabling widespread adoption in the consumer market. The application was developed to make it easier for visually impaired people to complete their daily tasks. It offers real-time, practical navigational information on a mobile device, allowing a visually impaired person to decide on the best and most efficient course of action in both indoor and outdoor environments. The suggested approach is simple to use and helps the blind person identify objects in front of them. If there is an obstruction, the system notifies the user by playing a sound on the user's smartphone.

References

[1] R. Agarwal et al., "Low cost ultrasonic smart glasses for visually impaired," 2017 8th IEEE Annual Information Technology, Electronics and Mobile Communication Conference (IEMCON), Vancouver, BC, Canada, 2017, pp. 210-213, doi: 10.1109/IEMCON.2017.8117194.

[2] Martinez, M.; Yang, K.; Constantinescu, A.; Stiefelhagen, R. Helping the Visually impaired to Get through COVID-19: Social Distancing Assistant Using Real-Time Semantic Segmentation on RGBD Video. Sensors 2020, 20, 5202.

[3] Takizawa, H.; Orita, K.; Aoyagi, M.; Ezaki, N.; Mizuno, S. A Spot Reminder System for the Visually Impaired Based on a Smartphone Camera. Sensors 2017, 17, 291.

[4] Tailong Shi, Bruce, Ting-Chia Huang, "Design, Demonstration and characterization of Ultra-Thin Low Warpage Glass BGA Packages for smart mobile Application processor", Electronics Components and Technology Conference (ECTC), 2016 IEEE 66th, 2016.

[5] W.C.S.S. Simoes, V.F. de Lucena, "Visually impaired user wearable audio assistance for indoor navigation based on visual markers and ultrasonic obstacle detection", Consumer Electronics (ICCE), 2016 IEEE International Conference, 2016.

[6] Wang, T., Wu, D. J., Coates, A., & Ng, A. Y. (2012, November). End-to-end text recognition with convolutional neural networks. In Pattern Recognition (ICPR), 2012 21st International Conference on (pp. 3304-3308). IEEE.

[7] Koo, H. I., & Kim, D. H. (2013). Scene text detection via connected component clustering and non text filtering. IEEE Transactions on Image Processing, 22(6), 2296-2305.

[8] Bourne RRA, Flaxman SR, Braithwaite T, Cicinelli MV, Das A, Jonas JB, et al.; Vision Loss Expert Group. Magnitude, temporal trends, and projections of the global prevalence of blindness and distance and near vision impairment: a systematic review and meta-analysis.

[9] T. P. Hughes, J. T. Kerry, S. K. Wilson, "Global warming and recurrent mass bleaching of corals", Nature, Vol. 543, pp. 373–377, 2017.

[10] M. S. Nashwan, S. Shahid, "Spatial distribution of unidirectional trends in climate and weather extremes in Nile river basin", Theoretical and Applied Climatology, Vol. 137, pp. 1181–1199, 2019.

[11] https://paperswithcode.com/dataset/coco

[12] S A Sanchez et al 2020 IOP Conf. Ser.: Mater. Sci. Eng. 844 012024

[13] https://developers.arcgis.com/python/guide/how-ssd-works/

[14] Guard A and Gallagher S S 2015 Heat related deaths to young children in parked cars: an analysis of 171 fatalities in the United States.

[15] S. Zafar, G. Miraj, R. Baloch, D. Murtaza, K. Arshad, "An IoT based real-time environmental monitoring system using Arduino and cloud service", Engineering, Technology Applied Science Research, Vol. 8, No. 4, pp. 3238–3242, 2018.

[16] P. O. Antonio, C. M. Rocio, R. Vicente, B. Carolina, B. Boris, "Heat stroke detection system based in IoT", IEEE Second Ecuador Technical Chapters Meeting, Salinas, Ecuador, October 16-20, 2018.

[17] Apiched Audomphon, Anya Apavatjrut, "Smart Glasses for Sign Reading as Mobility Aids for the Visually impaired Using a Light Communication System", 2020 17th International Conference on Electrical Engineering/Electronics, Computer, Telecommunications and Information Technology (ECTI-CON), 2020.

[18] Md. Razu Miah, Md. Sanwar Hussain, "A Unique Smart Eye Glass for Visually Impaired People", 2018 International Conference on Advancement in Electrical and Electronic Engineering (ICAEEE), 2018.

[19] Alhashim, I. (2018, December 31). High Quality Monocular Depth Estimation via Transfer Learning. arXiv.org. https://arxiv.org/abs/1812.11941

[20] Maid, P., Thorat, O., & Deshpande, S. (n.d.). Object Detection for Visually impaired User's. Retrieved June 6, 2020, from https://www.irjet.net/archives/V7/i6/IRJET-V7I6577.pdf

[21] Rahman, Md. Atikur & Sadi, Muhammad. (2021). IoT Enabled Automated Object Recognition for the Visually Impaired. Computer Methods and Programs in Biomedicine Update. 1. 100015. 10.1016/j.cmpbup.2021.10

Copyright

Copyright © 2023 Xenus Gonsalves, Royce Rumao, Kallis Fernandes, Greatson Lobo, Unik Lokhande. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET53574

Publish Date : 2023-06-01

ISSN : 2321-9653

Publisher Name : IJRASET
