Ijraset Journal For Research in Applied Science and Engineering Technology

Social Distance Detection with the Help of Deep Learning

Authors: Abhijeet Pitlewad, Pravin Payghan, Omkar Uttekar, Rutuja Pandit

DOI Link: https://doi.org/10.22214/ijraset.2022.42712

Abstract

This paper presents a method for social distancing detection that uses deep learning to estimate the distance between people, with the aim of mitigating the impact of the coronavirus pandemic. The detection tool was developed to alert people to keep a safe distance from one another by analyzing a video feed. A video frame from the camera is used as input, and an open-source pre-trained object detection model based on the YOLOv3 algorithm is employed for pedestrian detection. The video frame is then transformed into a top-down view so that distances can be measured on a 2D plane. The distance between people is estimated, and any noncompliant pair of individuals in the frame is marked with a red bounding box and a connecting line. The proposed method was validated on a pre-recorded video of pedestrians walking on a street. The results show that the method can determine whether social distancing is being maintained between multiple people in the video. The technique could be developed further into a real-time detection tool.

Introduction

I. INTRODUCTION

Social distancing is a measure recommended by the WHO to limit the spread of COVID-19.

Governments have also introduced rules requiring social distancing in public places to limit the spread of COVID-19.

In this project we build a generic deep neural network-based model for automated people detection, tracking, and distance estimation in crowds, using CCTV cameras.

We also identify red zones with the highest likelihood of virus spread and infection.

The fundamental difference between image classification and object detection is that image classification identifies an object in an image, whereas object detection identifies the object as well as its location in the image. Object tracking and object detection are similar in terms of functionality: both involve identifying an object and its location. The only difference between them is the type of data they operate on.

Object detection deals with images, whereas object tracking deals with videos.

Object detection applied to every frame of a video becomes an object tracking problem. Because a video is a sequence of frames shown in rapid succession, object tracking identifies an object and its location in every frame of the video.

Social distancing is a measure recommended by the World Health Organization (WHO) to minimize the spread of COVID-19 in public places. The majority of governments and national health authorities have set 2-meter physical distancing as a mandatory safety measure in shopping centers, schools, and other covered areas.

In this research, we develop a generic deep neural network-based model for automated people detection, tracking, and inter-person distance estimation in crowds, using common CCTV security cameras.

We identify high-risk zones with the highest likelihood of virus spread and infection. This may help authorities redesign the layout of a public place or take precautionary actions to mitigate high-risk zones.

- Maintaining social distancing in real time is a challenging task.

- It can be monitored in two ways: manually or automatically.

- Manual observation is arduous, because people cannot keep watch continuously, 24 hours a day, 7 days a week.

- An automated surveillance system replaces human eyes with CCTV cameras, whose footage is inspected automatically.

- After inspection, the system raises an alert when an event occurs so that relevant action can be taken.

- The aim of the project is to limit the impact of COVID-19.

II. RELATED WORK

Many researchers have worked in the medical and pharmaceutical fields aiming at treatment of COVID-19 infectious disease; however, no definite solution has yet been found. On the other hand, controlling the spread of such an unknown respiratory infectious disease is another issue.

Convolutional neural networks (CNNs) have played a vital role in feature extraction and complex object classification. With the development of faster CPUs and GPUs and larger memory capacities, CNNs allow researchers to build detectors that are more precise and faster than conventional models. However, long training times, detection speed, and achieving better accuracy remain open challenges.

This section highlights some of the related work on human detection using deep learning. Much of the recent work on object classification and detection involves deep learning. This review focuses mainly on current research on object detection using machine learning. Human detection can be considered an object detection task in computer vision: the classification and localization of human shapes in video imagery. Deep learning has become a research trend in multi-class object recognition and detection in artificial intelligence and has achieved outstanding performance on challenging datasets. Nguyen et al. presented a comprehensive analysis of the state of the art in human detection, covering recent developments and challenges [9]. The survey focuses mainly on human descriptors, machine learning algorithms, occlusion, and real-time detection. For visual recognition, techniques using deep convolutional neural networks (CNNs) have been shown to achieve superior performance on many image recognition benchmarks. Adapting the idea from prior work, we present a computer vision technique for detecting people via a camera installed at the roadside or in a workspace. The camera's field of view covers people walking in a specified space. The number of people in an image or video is detected, with bounding boxes, using these existing deep CNN methods; the YOLO method is applied to the video stream captured by the camera. By measuring the Euclidean distance between people, the application highlights whether there is sufficient social distance between the people in the video.

III. METHODOLOGY

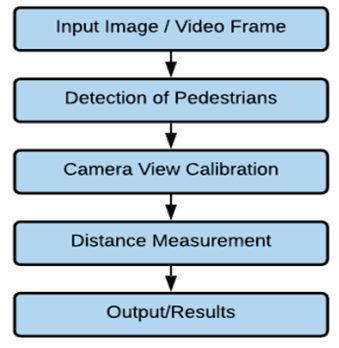

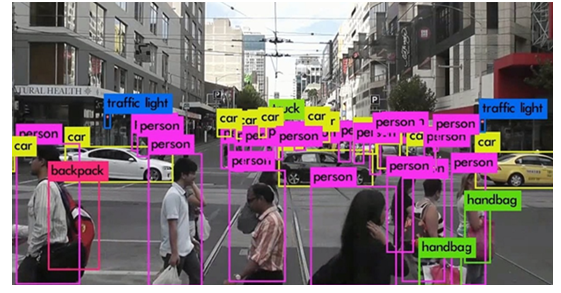

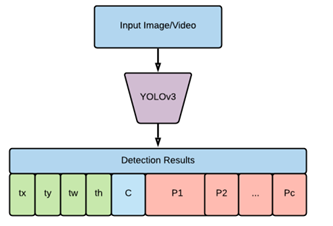

This social distancing detection tool was developed to monitor the safe distance between people in public spaces. Deep CNN methods and computer vision techniques are employed in this work. First, an open-source object detection network based on the YOLOv3 algorithm is used to detect pedestrians in the video frame. From the detection results, only the pedestrian class is used; other object classes are ignored in this application. The best-fitting bounding box for each detected pedestrian can then be drawn on the image, and the data for the detected pedestrians are used for distance measurement.
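The class-filtering step can be illustrated with a minimal Python sketch. It assumes the detector's output has already been decoded into (label, confidence, box) records; the `Detection` type, the `"person"` label string, and the 0.5 confidence cutoff are illustrative assumptions, not details taken from the paper.

```python
from typing import List, NamedTuple, Tuple


class Detection(NamedTuple):
    """One decoded detector output (hypothetical record layout)."""
    label: str                               # class name, e.g. "person"
    confidence: float                        # detector score in [0, 1]
    box: Tuple[float, float, float, float]   # (x, y, w, h) in pixels


def keep_pedestrians(detections: List[Detection],
                     min_confidence: float = 0.5) -> List[Detection]:
    """Keep only pedestrian detections above an assumed confidence cutoff."""
    return [
        d for d in detections
        if d.label == "person" and d.confidence >= min_confidence
    ]


if __name__ == "__main__":
    frame_detections = [
        Detection("person", 0.91, (120, 80, 40, 110)),
        Detection("car", 0.88, (300, 150, 160, 90)),
        Detection("person", 0.32, (500, 60, 35, 100)),
    ]
    for det in keep_pedestrians(frame_detections):
        print(det)
```

Only the boxes returned by `keep_pedestrians` would be passed on to the distance measurement step; every other class is discarded.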

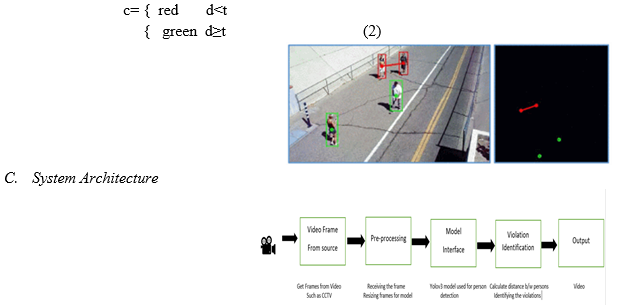

For the camera setup, video is captured from a fixed angle. Each video frame is treated as a perspective view and transformed into a two-dimensional top-down view for more accurate distance estimation. This methodology assumes that the pedestrians in the video frame are walking on the same flat plane. Four points on the filmed plane are selected from the frame and transformed into the top-down view. The location of each pedestrian is then estimated in the top-down view, and the distance between pedestrians can be measured and scaled. Given a preset minimum distance, any pair of individuals closer than the acceptable distance is marked with a red line that serves as a precautionary warning. The work was implemented in the Python programming language. The pipeline of the methodology for the social distancing detection tool is shown in Figure 1.
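The four-point transformation to a top-down view can be sketched as a planar homography. The example below is a minimal pure-Python illustration, not the authors' implementation: it solves the standard eight-equation linear system for the 3x3 matrix (with the last entry fixed to 1) and applies it to a point. The function names and the sample coordinates are assumptions made for illustration; in practice a library routine such as OpenCV's `cv2.getPerspectiveTransform` would typically be used.

```python
def solve_linear(a, b):
    """Solve a square system a*x = b by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def homography(src, dst):
    """Estimate the 3x3 matrix mapping four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def to_top_down(h, point):
    """Map one perspective-view point into the top-down view."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return u, v


if __name__ == "__main__":
    # Assumed example: a ground-plane trapezoid in the camera frame is mapped
    # to a 200 x 400 rectangle in the top-down view.
    frame_points = [(150, 200), (250, 200), (400, 400), (0, 400)]
    top_down_points = [(0, 0), (200, 0), (200, 400), (0, 400)]
    h = homography(frame_points, top_down_points)
    print(to_top_down(h, (200, 300)))
```

Because the transformation relies on the flat-plane assumption stated above, only points that lie on the ground (such as the bottom of a bounding box) map to meaningful top-down positions.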

A. Pedestrian Detection

The objective is to develop a model that detects humans under a variety of challenging conditions, such as variations in clothing and posture, far and close distances, with or without occlusion, and different lighting conditions. To achieve this, we draw on the strengths of cutting-edge research; however, we develop our own human classifier and train our model on a set of comprehensive and multifaceted datasets. Before diving into further technical details, we overview the most advanced object detection techniques and then introduce our human detection model. Modern DNN-based detectors consist of two sections: a backbone for extracting features and a head for predicting the classes and locations of objects. The feature extractor encodes the model inputs by representing specific features that help it learn and discover the patterns relevant to the query object(s).

There are usually two types of head sections: one-stage and two-stage. Two-stage detectors generate region proposals before applying classification. First, the detector extracts a set of object proposals (candidate bounding boxes) by selective search. Then it resizes them to a fixed size before feeding them to the CNN model. The R-CNN family of detectors works this way. Despite the accuracy of two-stage detectors, such methods are not suitable for systems with restricted computational resources.

On the other hand, one-stage detectors perform detection in a single pass; the best-known examples are the "You Only Look Once" (YOLO) detectors. Such detectors use regression to predict the dimensions of bounding boxes and their class probabilities. The image is divided into a grid, and the detector checks the probability that an object is present in each grid cell. This approach offers substantial improvements in speed and efficiency.
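As a rough illustration of the grid idea, the following Python sketch computes which grid cell contains the center of a bounding box, which in YOLO-style detectors is the cell associated with that object. The function name, the 416 x 416 input size, and the 13 x 13 grid are illustrative assumptions, not values reported in this paper.

```python
def grid_cell(cx, cy, image_w, image_h, grid_size):
    """Return the (row, col) of the grid cell containing the point (cx, cy)."""
    # Scale the pixel coordinates to grid units and truncate to a cell index.
    # min() keeps points on the right or bottom border inside the last cell.
    col = min(int(cx / image_w * grid_size), grid_size - 1)
    row = min(int(cy / image_h * grid_size), grid_size - 1)
    return row, col


if __name__ == "__main__":
    # Assumed example: a 416 x 416 input divided into a 13 x 13 grid.
    print(grid_cell(208, 104, 416, 416, 13))
```

Each cell then predicts box dimensions and class probabilities for objects whose centers fall inside it, which is what allows the whole image to be processed in one pass.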

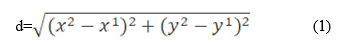

B. Distance Measurement

At this step of the pipeline, the position of each bounding box (x, y, w, h) in the perspective view is detected and converted to the top-down view. For each pedestrian, the position in the top-down view is estimated from the bottom-center point of the bounding box. The distance between every pair of pedestrians is computed in the top-down view and scaled by a factor estimated from the camera view. Given the positions of two pedestrians in the image, (x1, y1) and (x2, y2), the distance between them, d, can be calculated as:

$$d = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$$

Pairs of pedestrians whose distance is less than the minimum acceptable distance, t, are marked in red, and all others are marked in green. A red line is also drawn between two individuals whose distance is below the predefined threshold. The color function of the bounding box, c, can be defined as:

$$c = \begin{cases} \text{red}, & d < t \\ \text{green}, & \text{otherwise} \end{cases}$$
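The distance and color rules described in this section can be sketched in Python as follows. This is a minimal illustration under stated assumptions: (x, y) is taken to be the top-left corner of the bounding box, the points passed to `box_colors` are assumed to be already in the top-down view, and the function names and sample values are hypothetical.

```python
import math
from itertools import combinations


def ground_point(box):
    """Bottom-center point of a bounding box given as (x, y, w, h).

    Assumes (x, y) is the top-left corner of the box.
    """
    x, y, w, h = box
    return (x + w / 2, y + h)


def distance(p1, p2):
    """Euclidean distance d between two points (x1, y1) and (x2, y2)."""
    return math.hypot(p2[0] - p1[0], p2[1] - p1[1])


def box_colors(points, threshold):
    """Color each pedestrian red if any other pedestrian is closer than t.

    Returns the list of colors and the index pairs that violate the threshold,
    which are the pairs that would be joined by a red line.
    """
    colors = ["green"] * len(points)
    violations = []
    for i, j in combinations(range(len(points)), 2):
        if distance(points[i], points[j]) < threshold:
            colors[i] = colors[j] = "red"
            violations.append((i, j))
    return colors, violations


if __name__ == "__main__":
    # Assumed top-down positions and threshold, in the same arbitrary units.
    positions = [(0, 0), (3, 4), (100, 100)]
    print(box_colors(positions, threshold=6))
```

Checking every pair costs a number of comparisons that grows quadratically with the number of pedestrians, which is usually acceptable for the crowd sizes visible in a single camera frame.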

Conclusion

In this work, a deep learning-based social distance monitoring framework is presented using an overhead perspective. The pre-trained YOLOv3 model is used for human detection. Because a person's appearance, visibility, scale, size, shape, and pose vary significantly from an overhead view, transfer learning is adopted to improve the pre-trained model's performance. The model is trained on an overhead dataset, and the newly trained layer is appended to the existing model. To the best of our knowledge, this work is the first attempt to use transfer learning for a deep learning-based detection model applied to overhead-perspective social distance monitoring. The detection model outputs bounding box information, including centroid coordinates. The pairwise distances between the centroids of detected bounding boxes are measured using the Euclidean distance. To check for social distance violations between people, an approximation of physical distance in pixels is used and a violation threshold is defined; a pair whose distance falls below this threshold violates the minimum social distance. Furthermore, a centroid tracking algorithm is used to track people in the scene. Experimental results indicate that the framework efficiently identifies people who walk too close together and violate social distancing, and that transfer learning increases the detection model's overall efficiency and accuracy. The model achieves a detection accuracy of 92% without transfer learning and 95% with transfer learning. The tracking accuracy of the model is 95%. The work may be extended in the future to different indoor and outdoor environments. Different detection and tracking algorithms might be used to help track the person or people who breach the social distancing threshold.

This social distancing detector did not use a proper camera calibration, meaning that distances in pixels could not (easily) be mapped to actual measurable units (e.g., meters or feet). Therefore, the first step in improving the detector is to use a proper camera calibration. Doing so would yield better results and make it possible to compute distances in actual measurable units rather than pixels.

References

[1] Tanveer Ahmad, Yinglong Ma, Muhammad Yahya, Belal Ahmad, "Object Detection through Modified YOLO Neural Network", 2020.
[2] Nashwan Adnan, Mehmet Salur, Mehmet Karakose, "An Embedded Real-Time Object Detection and Measurement of its Size", 2018.
[3] J. Redmon, S. Divvala, R. Girshick, A. Farhadi, "You Only Look Once: Unified, Real-Time Object Detection", 2016.
[4] Suresh K, Bhuvan S, Palangappa M B, "Social Distance Identification Using Optimized Faster Network", 2021.
[5] Marina Ivašić-Kos, Mate Krišto, Miran Pobar, "Human Detection in Thermal Imaging Using YOLO", 2019.
[6] Implementation of Mitigation Strategies for Communities with Local COVID-19, [online] Available: https://www.who.int/emergencies/diseases/novel-coronavirus-2019.
[7] Implementation of Mitigation Strategies for Communities with Local COVID-19 Transmission, [online] Available: https://www.cdc.gov/coronavirus/2019-ncov/downloads/community-mitigation-strategy.pdf.
[8] Ministry of Health Malaysia (MOHM) Official Portal. COVID-19 (Guidelines), [online] Available: https://www.moh.gov.my/index.php/pages/view/2019-ncov-wuhan-guidelines.
[9] D.T. Nguyen, W. Li and P.O. Ogunbona, "Human detection from images and videos: A survey", Pattern Recognition, vol. 51, pp. 148-175, 2016.
[10] Tanveer Ahmad, Yinglong Ma, Muhammad Yahya, Belal Ahmad, "Object Detection through Modified YOLO Neural Network", 2020.

Copyright

Copyright © 2022 Abhijeet Pitlewad, Pravin Payghan, Omkar Uttekar, Rutuja Pandit. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET42712

Publish Date : 2022-05-14

ISSN : 2321-9653

Publisher Name : IJRASET
