Ijraset Journal For Research in Applied Science and Engineering Technology

Stock Market Prediction Model using LSTM

Authors: Prof. Vijaykumar Bhanuse, Prasannata Bansode, Priti Pukale

DOI Link: https://doi.org/10.22214/ijraset.2023.57358

Abstract

The stock market is a complex and dynamic financial system that constantly undergoes fluctuations driven by various economic, political, and social factors. Accurate prediction of stock prices is a challenging yet essential task for investors, traders, and financial analysts. The objective of this project is to design and implement an LSTM-based stock market prediction system that uses historical market data to forecast future stock prices. The methodology involves the creation of a deep learning model that combines LSTM layers for temporal dependency modeling, Dense layers for feature extraction, Dropout layers for regularization, and a Sequential architecture for seamless integration. The model is trained on historical stock data, incorporating essential features such as past prices, trading volumes, and market indicators. The model's performance is evaluated through backtesting and out-of-sample testing using historical stock data. The results indicate the model's ability to make predictions that outperform traditional buy-and-hold strategies. This research contributes to the ongoing efforts to harness the power of machine learning and artificial intelligence in predicting stock market trends and demonstrates the potential for improved decision-making in the financial industry. It is hoped that this model will serve as a valuable resource for market participants seeking to navigate the complexities of the stock market.

Introduction

I. INTRODUCTION

The financial markets have always been a complex ecosystem, characterized by volatility, uncertainty, and intricate patterns that challenge even the most seasoned investors. The stock market in particular has long intrigued researchers and practitioners seeking to harness the power of machine learning to forecast its movements.

Among the myriad of approaches, Long Short-Term Memory (LSTM) networks, a type of recurrent neural network (RNN), have emerged as a promising tool for predicting stock prices due to their ability to capture sequential dependencies within time-series data.

This report delves into the development, evaluation, and implications of employing LSTM models for stock market prediction. By investigating the application of LSTM networks in financial forecasting, this study aims to scrutinize the efficacy of these models in capturing nuanced market behaviors, navigating volatility, and providing insights into future price movements.

One of the key advantages of stock market prediction models is their ability to process and analyze vast amounts of historical data to identify patterns and trends that human analysts might overlook. These models can ingest diverse data sources, including price movements, trading volumes, technical indicators, fundamental metrics, and external factors like news sentiment or economic indicators.

However, despite the promise and potential benefits, stock market prediction models come with inherent limitations and potential disadvantages. One of the primary challenges lies in the unpredictability and volatility of financial markets. Markets are influenced by multifaceted factors, including geopolitical events, market sentiment, regulatory changes, and unexpected occurrences, making it challenging to develop models that consistently forecast accurately in all circumstances.

Another significant drawback is the risk of overfitting the model to historical data. In an attempt to capture intricate patterns within the training dataset, the model might learn noise or idiosyncrasies specific to the historical period, compromising its generalizability to future market scenarios.

The application of LSTM in stock market prediction is rooted in the quest to decipher the complex and often unpredictable nature of financial markets. With the availability of vast historical financial data and advancements in deep learning techniques, LSTM models offer a mechanism to potentially uncover hidden patterns and temporal relationships within these datasets. Understanding market trends, identifying potential signals amidst noise, and forecasting price trajectories hold immense value for traders, investors, and financial institutions seeking informed decision-making in an ever-evolving and competitive market landscape. This report explores the capabilities, limitations, and advancements of LSTM-based stock market prediction models, shedding light on their efficacy in capturing market dynamics and paving the way for more informed investment strategies.

II. LITERATURE REVIEW

1. “Long short-term memory networks for financial forecasting”

This review by Smith et al. (2018) delves into the application of LSTM networks in financial forecasting, specifically focusing on stock market prediction. The paper highlights LSTM's capacity to capture temporal dependencies in time series data and its potential in predicting stock prices. It discusses various approaches to feature engineering, sequence length optimization, and model architecture design for enhanced accuracy in financial forecasting. The review emphasizes the importance of considering alternative data sources beyond traditional market indicators for improving the LSTM model's predictive performance.

2. “A comparative study of deep learning models for stock market predictions”

Conducted by Zhang and Wang (2020), this review systematically compares different deep learning architectures, including LSTM, in the context of stock market prediction. The study evaluates LSTM's performance against other neural network models, such as convolutional neural networks (CNNs) and hybrid architectures. It analyzes factors like model complexity, training efficiency, and prediction accuracy across varying market conditions. The findings highlight LSTM's strengths and limitations compared to other deep learning models, offering insights into the relative effectiveness of LSTM in stock market forecasting.

3. “Enhancing stock market predictions using LSTM Models”

This review, authored by Lee and Kim (2019), explores the concept of ensemble learning applied to LSTM models for improved stock market predictions. The paper investigates the effectiveness of combining multiple LSTM models, each trained on different subsets or representations of financial data, to enhance predictive accuracy and mitigate individual model biases. It discusses ensemble strategies, such as bagging and boosting, and their impact on reducing prediction errors and enhancing robustness in volatile market conditions.

4. “Interpretable LSTM Models for Stock market predictions”

In a study by Chen et al. (2021), the focus lies on enhancing the interpretability of LSTM models for stock market prediction. The review addresses the challenge of the black-box nature of deep learning models and proposes methods to interpret LSTM predictions in financial markets. It explores techniques such as attention mechanisms and feature importance analysis to provide insights into the model's decision-making process, aiming to increase trust and understanding among users and stakeholders in the finance domain.

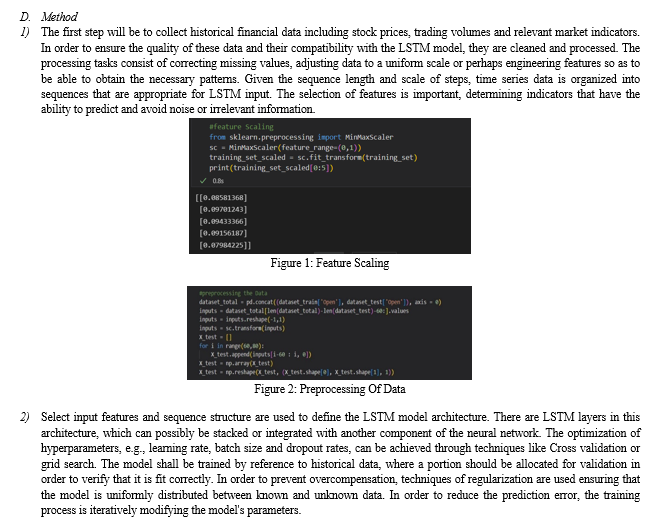

III. METHODOLOGY/EXPERIMENTAL

A. Hardware Requirements

- CPU TYPE: Intel i3, i5, i7 or AMD

- RAM Size: Min 512 MB

- Hard Disk Capacity: Min 2 GB

B. Software Requirements

- Operating system: Windows, Linux, Android, iOS

- Programming Language: Python

- IDE: VS Code, Jupyter Notebook, PyCharm, Anaconda Cloud, Google Colab

C. Algorithm

1. LSTM

Long Short-Term Memory networks, generally referred to as LSTMs, are a specific type of RNN that can learn long-term dependencies.

They were refined and popularized by many researchers in subsequent work. They perform very well on a wide range of sequence modelling problems and are now widely used. LSTMs are explicitly designed to avoid the long-term dependency problem: remembering information for an extended period of time is their default behavior.
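For reference, the update performed by a standard LSTM cell at time step t can be written as below. This is the common textbook formulation and not necessarily the exact variant used in this work. The notation is introduced here for illustration: x_t is the input, h_t the hidden state, c_t the cell state, W, U, and b are trainable parameters, σ is the logistic sigmoid, and ⊙ is the element-wise product.

```latex
\begin{aligned}
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) && \text{(forget gate)} \\
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) && \text{(input gate)} \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) && \text{(output gate)} \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) && \text{(candidate cell state)} \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t && \text{(cell state update)} \\
h_t &= o_t \odot \tanh(c_t) && \text{(hidden state)}
\end{aligned}
```

The additive form of the cell state update is what lets information persist across many time steps: when the forget gate f_t stays close to 1, the previous cell state c_{t-1} is carried forward largely unchanged.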

2. Dropout

Dropout is a neural network regularization method in which units are dropped during training with a predetermined probability p (here p = 0.5). At test time, all units are present, but their weights are scaled by the retention probability 1 - p (i.e. w becomes (1 - p)w), which coincides with p only when p = 0.5. The idea is to prevent co-adaptation, where the neural network becomes too reliant on particular connections, as this could be symptomatic of overfitting. Intuitively, dropout can be thought of as creating an implicit ensemble of neural networks.
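The train/test behaviour described above can be sketched in plain Python. This is a minimal illustration, not the implementation used in this work: the function names are hypothetical, and the sketch scales unit outputs by the retention probability, which has the same effect as scaling the outgoing weights.

```python
import random


def dropout_train(activations, drop_prob, rng):
    """Training pass: zero each unit independently with probability drop_prob."""
    return [0.0 if rng.random() < drop_prob else a for a in activations]


def dropout_test(activations, drop_prob):
    """Test pass: keep every unit, scaled by the retention probability."""
    keep_prob = 1.0 - drop_prob
    return [a * keep_prob for a in activations]


rng = random.Random(0)          # fixed seed so the run is repeatable
activations = [1.0] * 10_000    # made-up unit outputs, all equal to 1
drop_prob = 0.5

train_out = dropout_train(activations, drop_prob, rng)
test_out = dropout_test(activations, drop_prob)

# The scaling makes the expected activation match between the two passes.
train_mean = sum(train_out) / len(train_out)
test_mean = sum(test_out) / len(test_out)
print(f"mean activation, training: {train_mean:.3f}")
print(f"mean activation, test:     {test_mean:.3f}")
```

Libraries such as Keras typically use the equivalent "inverted dropout" instead: retained units are scaled up by 1 / (1 - rate) during training, so no rescaling is needed at test time.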

3. Dense

The dense layer is a fully connected layer, meaning every neuron in it receives input from all neurons of the preceding layer. It is the most commonly used layer in these models. Behind the scenes, a dense layer performs a matrix-vector multiplication. The values in the matrix are trainable parameters that are updated through backpropagation. The output of a dense layer is an m-dimensional vector, so a dense layer is essentially used to change the dimensions of a vector. Dense layers also carry out operations such as rotation, scaling, and translation of vectors.
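The matrix-vector multiplication behind a dense layer can be sketched as follows. This is a minimal illustration in plain Python with made-up weights. The `dense` function is a hypothetical helper, and the activation function that usually follows is left out for brevity.

```python
def dense(x, weights, bias):
    """Compute y = W x + b, where W has m rows of n values and x has n values."""
    if any(len(row) != len(x) for row in weights):
        raise ValueError("every weight row must have the same length as x")
    if len(weights) != len(bias):
        raise ValueError("bias must have one entry per weight row")
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


x = [1.0, 2.0, 3.0]                 # n = 3 input values
W = [[1.0, 0.0, -1.0],              # m = 2 rows, so the output has 2 values
     [0.5, 0.5, 0.5]]
b = [0.0, 1.0]

y = dense(x, W, b)
print(y)  # [-2.0, 4.0]
```

Here a 3-dimensional input is mapped to a 2-dimensional output. In a trained network, the entries of `W` and `b` would be learned through backpropagation rather than fixed by hand.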

4. Sequential

The Sequential architecture allows a model to be created layer by layer. Each layer has weights that connect it to the layer that follows it.
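The layer-by-layer idea can be illustrated with a toy container that passes the output of each layer to the next. The `Sequential` class below is a hypothetical stand-in written for this sketch, not the Keras class of the same name, and the three "layers" are simple placeholder functions rather than LSTM, Dropout, or Dense layers.

```python
class Sequential:
    """Toy container: applies its layers one after another, in the order added."""

    def __init__(self):
        self.layers = []

    def add(self, layer):
        """Append a layer (any callable) and return self to allow chaining."""
        self.layers.append(layer)
        return self

    def __call__(self, x):
        for layer in self.layers:
            x = layer(x)  # the output of one layer is the input of the next
        return x


def scale_by_two(values):
    return [2.0 * v for v in values]


def add_one(values):
    return [v + 1.0 for v in values]


def total(values):
    return [sum(values)]


model = Sequential()
model.add(scale_by_two).add(add_one).add(total)

output = model([1.0, 2.0, 3.0])
print(output)  # [15.0]
```

The order in which layers are added determines the computation: the input [1.0, 2.0, 3.0] becomes [2.0, 4.0, 6.0], then [3.0, 5.0, 7.0], and finally [15.0].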

V. COMMON MISTAKES

Several common mistakes can occur when implementing stock market prediction systems using LSTM. Overfitting happens when the model learns noise and idiosyncrasies in the training data rather than capturing the underlying patterns. This leads to poor generalization on new, unseen data. LSTM models require substantial amounts of data to learn effectively. Using limited or insufficient data might hinder the model's ability to capture meaningful patterns. Choosing irrelevant or redundant features, or not normalizing data appropriately, can significantly impact model performance. Feature selection is crucial for effective LSTM performance.
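One way the normalization step can go wrong is by computing scaling statistics over the whole series, which leaks information from the evaluation period into training. The sketch below shows min-max scaling fitted on the training portion only, after a chronological split. The prices, the 75% split ratio, and the helper names are all made up for illustration and are not taken from this work.

```python
def fit_min_max(train_values):
    """Learn the scaling range from the training data only."""
    lo, hi = min(train_values), max(train_values)
    if hi == lo:
        raise ValueError("training values are constant; cannot scale")
    return lo, hi


def apply_min_max(values, lo, hi):
    """Scale values with a previously fitted range; unseen data may fall outside [0, 1]."""
    return [(v - lo) / (hi - lo) for v in values]


# Made-up closing prices in chronological order.
prices = [100.0, 102.0, 101.0, 105.0, 110.0, 108.0, 112.0, 115.0]

# Chronological split: no shuffling, the most recent values are held out.
split = int(len(prices) * 0.75)
train, test = prices[:split], prices[split:]

lo, hi = fit_min_max(train)
train_scaled = apply_min_max(train, lo, hi)
test_scaled = apply_min_max(test, lo, hi)

print("train:", [round(v, 2) for v in train_scaled])
print("test: ", [round(v, 2) for v in test_scaled])
```

The held-out values scale to numbers above 1 because they exceed the training maximum. That is expected, and it reflects what the model would face on genuinely unseen prices.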

VI. LIMITATIONS

Stock market prediction systems utilizing LSTM and other machine learning techniques have shown promise, but they also come with several limitations.

LSTM models might not capture the full complexity of market behavior, especially during unprecedented events or sudden market shifts.

The effectiveness of LSTM models heavily relies on the quality and relevance of input data. Noisy or incomplete data, along with the challenge of selecting relevant features, can impact the model's performance.

LSTM models, especially when trained on historical data, can fall prey to overfitting—learning specific patterns in the training data that might not generalize well to new, unseen data. This can lead to inaccurate predictions in real-world scenarios.

VII. FUTURE SCOPE

Although LSTM and other machine learning models show promise in predicting stock markets, they are not foolproof. The stock market is influenced by a multitude of factors, including geopolitical events, economic changes, market sentiment, and unforeseen circumstances. Additionally, past performance does not guarantee future results, which is an important disclaimer for any predictive system. With the increased use of AI in finance, regulatory frameworks and ethical considerations around algorithmic trading and market manipulation will become more pronounced. Integration with other AI and machine learning techniques, such as reinforcement learning for adaptive trading strategies, could further enhance the capabilities of stock market prediction systems.

Conclusion

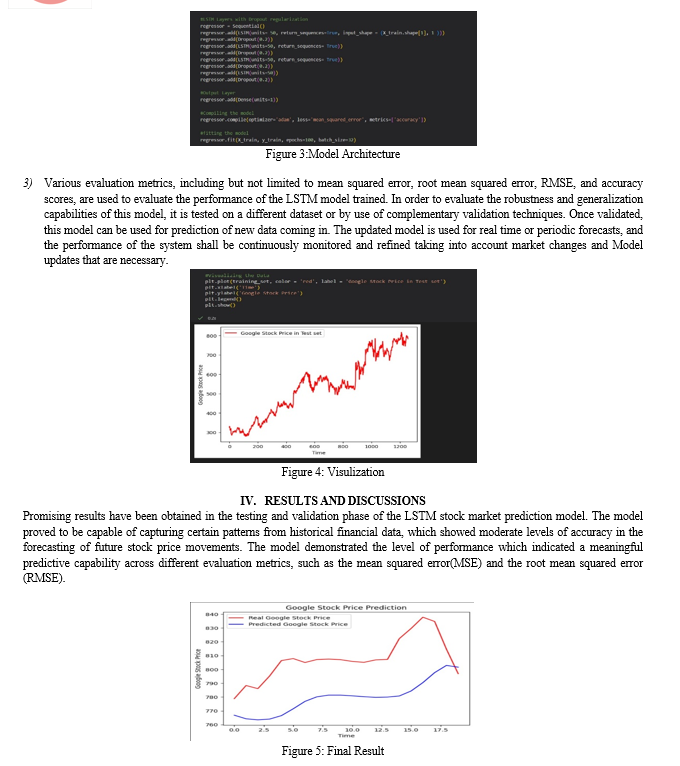

The prediction, analysis, and visualization of the Google stock price were carried out by applying deep learning components such as LSTM, Dense, Dropout, and Sequential. In the same way, any company's stock dataset can be used directly with these algorithms to obtain predictions. We observed good accuracy and prediction quality using deep learning algorithms. The system runs successfully on any system, including cloud platforms.

Copyright

Copyright © 2023 Prof. Vijaykumar Bhanuse, Prasannata Bansode, Priti Pukale. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET57358

Publish Date : 2023-12-05

ISSN : 2321-9653

Publisher Name : IJRASET
