Ijraset Journal For Research in Applied Science and Engineering Technology

Stock Price Prediction Using LSTM and GRU

Authors: Yash Khodke, Sonali Deshpande

DOI Link: https://doi.org/10.22214/ijraset.2023.53826

Abstract

Forecasting the stock price index is challenging because the stock market is a highly complex nonlinear system whose fluctuations are influenced by a wide range of factors. Numerous studies show that neural network algorithms can forecast time series well and frequently produce satisfactory results. In this paper, we build a Regularized GRU-LSTM neural network model on top of existing models and use it to predict the short-term closing values of two stocks. Experiments show that the proposed model predicts stock time series better than the existing GRU and LSTM network models.

Introduction

I. INTRODUCTION

The stock market has gained popularity in recent years because of its high rates of return. Institutions and private investors alike continue to put money into the stock market despite the high risk, so forecasts of the stock price index are of interest to both groups. Beyond the market's inherent complexity, there has been ongoing debate about whether stock returns are predictable at all. The Efficient-Market Hypothesis, put forward by Fama in 1970, contends that an asset's current price always reflects all available information. Forecasting and modeling strategies for stock price indices have nevertheless been the focus of research in a wide range of fields, including physics, economics, computer science, and statistics.

Traditional time series models assume that the correlation structure of the series values is linear, so they cannot represent nonlinear patterns. To get around this restriction, neural network models have frequently been used to forecast nonlinear time series such as the stock price index. Because they can identify intricate nonlinear relationships that are difficult for traditional forecasting models to capture, recurrent neural networks (RNNs) have proven to be among the most effective methods for processing sequential data. Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) are the two best-performing RNN structures.

In the network's hidden layers, LSTM replaces standard artificial neurons with memory cells, a dedicated computing unit. These memory cells let the network capture the structure of the data dynamically and connect stored memories with incoming data, which improves prediction accuracy. The fundamental difference between the two structures is that LSTM has an output gate while GRU does not. Several upgraded models based on these two RNN structures have been developed, among them the bi-directional LSTM, which is also extensively used.

The LSTM network may produce better results on large datasets because it has more memory with which to store and interpret historical data. GRU has fewer parameters than LSTM, so it is substantially faster. In this study, we propose a novel Regularized GRU-LSTM network model that merges LSTM and GRU and performs better than the individual GRU and LSTM models. Using this model, we predicted the closing values of two stocks.
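The parameter gap between the two layers can be made concrete with a small count. The sketch below assumes the textbook formulation in which every gate or candidate block has one input matrix, one recurrent matrix, and one bias vector; the function names and the layer size (1 input feature, 64 units) are hypothetical and are not taken from the paper.

```python
def recurrent_layer_params(input_dim: int, units: int, blocks: int) -> int:
    """Trainable parameters of a recurrent layer made of `blocks` weight blocks.

    Each block holds an input matrix (input_dim x units), a recurrent
    matrix (units x units), and a bias vector (units).
    """
    per_block = input_dim * units + units * units + units
    return blocks * per_block


def lstm_params(input_dim: int, units: int) -> int:
    # Input, forget, and output gates plus the candidate cell state: 4 blocks.
    return recurrent_layer_params(input_dim, units, blocks=4)


def gru_params(input_dim: int, units: int) -> int:
    # Update and reset gates plus the candidate hidden state: 3 blocks.
    # Assumption: one bias vector per block; some libraries add a second one.
    return recurrent_layer_params(input_dim, units, blocks=3)


if __name__ == "__main__":
    # Hypothetical layer: 1 input feature (closing price), 64 hidden units.
    print("LSTM parameters:", lstm_params(1, 64))
    print("GRU parameters:", gru_params(1, 64))
```

Under these assumptions a GRU layer always has three quarters of the parameters of an LSTM layer of the same width, which is consistent with the speed difference noted above.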

II. LITERATURE SURVEY

To predict stock prices, the paper [1], "Attention-based LSTM for Stock Price Prediction", proposes a methodology that combines attention mechanisms with LSTM networks. The attention mechanism assigns importance-based weights to different segments of the input sequence, allowing the model to focus on relevant data. The authors' experiments on real-world stock price datasets show that their attention-based LSTM model outperforms baseline algorithms and standard LSTM models in terms of accuracy and robustness. The study provides a more precise prediction model, which aids financial forecasting.
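The idea of weighting segments of the input sequence can be illustrated with a minimal sketch. It assumes dot-product scoring against a query vector followed by a softmax; the scoring function used in [1] is not described here, so this choice, the function names, and the toy numbers are all illustrative assumptions.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Weight each time step by its dot-product relevance to `query`.

    Returns the attention weights and the weighted sum (context vector).
    """
    scores = [sum(h_i * q_i for h_i, q_i in zip(h, query)) for h in hidden_states]
    weights = softmax(scores)
    dim = len(hidden_states[0])
    context = [
        sum(w * h[d] for w, h in zip(weights, hidden_states)) for d in range(dim)
    ]
    return weights, context


if __name__ == "__main__":
    # Three hypothetical time steps with 2-dimensional hidden states.
    states = [[0.1, 0.0], [0.9, 0.4], [0.2, 0.1]]
    weights, context = attention_pool(states, query=[1.0, 1.0])
    print(weights, context)
```

The time step whose hidden state aligns best with the query receives the largest weight and therefore contributes most to the context vector.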

To forecast stock prices, the paper [2], "Stock Price Prediction Using LSTM and Extreme Gradient Boosting", combines the LSTM and XGBoost algorithms. The authors review earlier studies and underline the demand for more advanced methods. Their approach increases prediction accuracy by using XGBoost for feature selection and ensemble learning and LSTM for temporal dependencies. Experiments on real-world datasets show that the model outperforms standard techniques. The study contributes to financial forecasting and suggests using ensemble models for more precise projections.

The study in [3] examines stock price prediction using LSTM and GRU models together with technical indicators. The authors stress the need for precise stock price forecasting and the difficulties involved. The LSTM and GRU models used in the study are well known for capturing temporal relationships. The authors propose adding technical indicators such as volume, RSI, and moving averages as extra features. Experimental findings show that adding technical indicators improves the accuracy of both the LSTM and GRU models. By emphasizing the use of technical indicators for better stock price forecasting, this work contributes to the field of financial engineering.
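Two of the indicators mentioned above can be computed in a few lines. This is a minimal sketch rather than the feature pipeline of [3]: the RSI here uses simple averages of gains and losses over the window (other variants use exponential smoothing), and the function names and default period are assumptions.

```python
def simple_moving_average(prices, window):
    """Return the SMA series; positions without a full window are None."""
    out = []
    for i in range(len(prices)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(prices[i + 1 - window:i + 1]) / window)
    return out


def rsi(prices, period=14):
    """Relative Strength Index from simple averages of gains and losses.

    Positions with fewer than `period` preceding price changes are None.
    """
    out = [None] * len(prices)
    changes = [prices[i] - prices[i - 1] for i in range(1, len(prices))]
    for i in range(period, len(prices)):
        window = changes[i - period:i]  # the last `period` changes up to price i
        avg_gain = sum(c for c in window if c > 0) / period
        avg_loss = sum(-c for c in window if c < 0) / period
        if avg_loss == 0:
            # No losses in the window (including a flat window) maps to 100 here.
            out[i] = 100.0
        else:
            rs = avg_gain / avg_loss
            out[i] = 100.0 - 100.0 / (1.0 + rs)
    return out


if __name__ == "__main__":
    closes = [10.0, 11.0, 10.5, 11.5, 12.0, 11.0]  # hypothetical closing prices
    print(simple_moving_average(closes, 3))
    print(rsi(closes, period=3))
```

Each indicator series has the same length as the price series, so it can be stacked next to the closing price as an additional input feature once the leading None values are dropped.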

The paper [4], "Predicting Stock Prices Using LSTM and GRU Networks", investigates the use of LSTM and GRU networks for stock price prediction. The authors examine how effectively various recurrent neural network topologies capture the temporal dependencies present in stock price data. The study's tests and analysis show that LSTM and GRU networks can forecast stock prices accurately. By demonstrating the value of deep learning models for stock price forecasting, this paper contributes to the field of computational finance.

The paper [5], "Comparative Study of LSTM and GRU for Stock Price Prediction on Three Major Stock Indices", compares the LSTM and GRU models for predicting stock prices on three major stock indices. The authors evaluate how well both models identify temporal relationships and predict changes in stock prices. By showing the respective strengths and shortcomings of each network type, the study contributes to our understanding of the forecasting abilities of LSTM and GRU networks.

Long Short-Term Memory (LSTM), a type of recurrent neural network (RNN) architecture, is introduced in the article "Long Short-Term Memory" by Hochreiter and Schmidhuber (1997) [6]. By combining memory cells and gating mechanisms, LSTM addresses the vanishing and exploding gradient issues that plague traditional RNNs. The authors fully describe the design and operation of the memory cells and gating units in their account of the LSTM architecture. They use a variety of sequence learning tasks with long time lags to show the effectiveness of LSTM. This study is significant because it introduces LSTM as a powerful technique for modeling and capturing long-term dependencies in sequential data.
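For reference, the memory cell and its gates are usually written as follows. This is the commonly used modern formulation, which includes a forget gate that was added in later work and is not part of the 1997 paper; the symbols ($W$, $U$, $b$ for input weights, recurrent weights, and biases, $\sigma$ for the logistic sigmoid, $\odot$ for the element-wise product) are notation chosen here for illustration.

```latex
\begin{aligned}
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) && \text{(forget gate)} \\
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) && \text{(input gate)} \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) && \text{(output gate)} \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) && \text{(candidate cell state)} \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t && \text{(cell state update)} \\
h_t &= o_t \odot \tanh(c_t) && \text{(hidden state)}
\end{aligned}
```

The additive update of $c_t$ is what lets gradients flow across many time steps without vanishing as quickly as in a plain RNN.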

A thorough empirical assessment of gated recurrent neural networks (RNNs) for sequence modeling tasks is presented in the paper [7], "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modelling", by Chung, Gulcehre, Cho, and Bengio (2014). The authors evaluate the effectiveness of gated RNN types, including Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRU), on a range of benchmark datasets. The outcomes show that gated RNNs are more effective than typical RNN architectures at capturing long-term dependencies and at sequence modeling tasks. This study advances the understanding of gated RNN variants and their use in sequence modeling.
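For comparison with LSTM, a GRU can be written with two gates and no separate cell state. The equations below follow the interpolation convention used by Chung et al. [7], in which the update gate $z_t$ weights the new candidate; the symbol names ($W$, $U$, $b$, $\sigma$, $\odot$) are notation chosen here for illustration.

```latex
\begin{aligned}
z_t &= \sigma(W_z x_t + U_z h_{t-1} + b_z) && \text{(update gate)} \\
r_t &= \sigma(W_r x_t + U_r h_{t-1} + b_r) && \text{(reset gate)} \\
\tilde{h}_t &= \tanh(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h) && \text{(candidate hidden state)} \\
h_t &= (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t && \text{(hidden state)}
\end{aligned}
```

The hidden state $h_t$ is exposed directly, with no output gate, which is the structural difference from LSTM.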

Dropout, a technique that randomly deactivates neural network units during training to prevent overfitting, is introduced in the paper "Dropout: A Simple Way to Prevent Neural Networks from Overfitting" [8]. The authors justify dropout theoretically and show that it works on different types of neural networks. Dropout has since become a common regularization strategy in deep learning for improving generalization and avoiding overfitting.
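A minimal sketch of the mechanism follows. It implements the "inverted" variant, which rescales the surviving units during training, rather than the test-time weight rescaling described in [8]; the function name, the explicit random generator argument, and the rate of 0.5 are assumptions for illustration.

```python
import random


def dropout(values, rate, rng, training=True):
    """Inverted dropout on a list of activations.

    During training each value is zeroed with probability `rate` and the
    survivors are scaled by 1 / (1 - rate) so the expected sum is unchanged.
    At inference time the values pass through untouched.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(values)
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    activations = [1.0, 2.0, 3.0, 4.0]
    print(dropout(activations, rate=0.5, rng=rng))
    print(dropout(activations, rate=0.5, rng=rng, training=False))
```

Because a different random subset of units is silenced on every training step, the network cannot rely on any single unit, which is the regularizing effect described above.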

The Adam optimization technique is described in the article [9], "Adam: A Method for Stochastic Optimization", by Kingma and Ba (2015). Adam, short for Adaptive Moment Estimation, is a well-known method for improving stochastic gradient descent. The authors provide a method that combines the benefits of the RMSProp and AdaGrad algorithms. Adam adapts the learning rate of each parameter using running estimates of past gradients and squared gradients. The experimental results show that Adam works better than other optimization strategies on a range of deep learning problems, enabling faster convergence. Adam has been widely adopted as an optimization algorithm in deep learning since its release.
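The per-parameter update can be sketched for a single scalar parameter. The default hyperparameters below are the values suggested in [9]; the function name and the toy objective f(x) = (x - 3)^2 are assumptions for illustration only.

```python
import math


def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter.

    m and v are the running averages of the gradient and squared gradient;
    t is the 1-based step count used for bias correction.
    """
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad * grad
    m_hat = m / (1 - beta1 ** t)  # correct the bias toward zero at early steps
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


if __name__ == "__main__":
    # Toy objective f(x) = (x - 3)^2 with gradient 2 * (x - 3).
    x, m, v = 0.0, 0.0, 0.0
    for step in range(1, 11):
        x, m, v = adam_step(x, 2 * (x - 3), m, v, step, lr=0.1)
    print(x)
```

On the very first step the bias-corrected ratio has magnitude close to 1, so the parameter moves by roughly the learning rate regardless of the gradient's scale.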

A thorough assessment of deep learning techniques used for stock price prediction is provided in the article [10], "A Comprehensive Review of Deep Learning Techniques for Stock Price Prediction", by Preethi and Balasubramanian (2020). The deep learning models the authors cover include Convolutional Neural Networks (CNNs), Long Short-Term Memory (LSTM) networks, and Recurrent Neural Networks (RNNs). They go over each model's benefits and drawbacks as well as how each can be used to forecast stock prices. For researchers and practitioners interested in applying deep learning to predict stock values, the paper also discusses open difficulties and potential directions for the discipline.

An in-depth analysis of deep learning methods used for stock price prediction may be found in the article [11], "Stock Price Prediction Using Deep Learning: A Survey", by Zhang, Zhou, and Zhang (2021). The deep learning models covered by the authors include Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and RNN variants such as Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU). They go over the methodologies used in the literature, such as the use of textual data, technical indicators, and social media sentiment analysis. The survey also covers widely used evaluation criteria and datasets for stock price prediction research. Researchers and practitioners interested in deep learning-based stock price prediction systems will find this article a useful resource.

A complete analysis of deep learning models for stock market prediction is presented in the article [12], "A Comprehensive Survey of Deep Learning Models for Stock Market Prediction", by Raza, Khan, and Amin (2021). Convolutional neural networks (CNNs), recurrent neural networks (RNNs), generative adversarial networks (GANs), and autoencoders are among the deep learning methods covered by the authors. The benefits, drawbacks, and applications of each model for stock market prediction are discussed. The study also covers data preprocessing, feature selection, evaluation measures, and frequently used datasets, among other subjects. Researchers and practitioners interested in using deep learning models for stock market forecasting will find this article a useful resource.

The 2019 publication [13], "Stock Price Prediction Using Deep Learning: A Review", by Liu, Chen, Li, and Gao offers a thorough analysis of deep learning methods used for stock price prediction. The deep learning models discussed in this article include Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and RNN variants such as Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU). The authors go over many aspects of stock price forecasting, including feature engineering, model design, data preparation, and evaluation measures. The difficulties and potential directions for this field of study are also covered. It is a helpful resource for academics and professionals who want to apply deep learning to forecast stock values.

A thorough examination of the use of deep learning techniques for stock price prediction is given in Shinde and Shinde's 2021 work [14], "A Comprehensive Review of Stock Price Prediction Using Deep Learning". Convolutional neural networks (CNNs), recurrent neural networks (RNNs), and RNN variants such as long short-term memory (LSTM) and gated recurrent unit (GRU) are among the deep learning models that the authors look at. They go over numerous methods for predicting stock prices, such as the use of technical indicators, sentiment analysis of the news, and social media data. Data preparation techniques, evaluation measures, and widely used datasets are also presented. Researchers and practitioners interested in deep learning-based stock price forecasting techniques will find this article a useful resource.

The work [15] by Nguyen, Nguyen, Nguyen, and Hoang (2022), titled "A Comprehensive Review of the Applications of Deep Learning in Stock Price Prediction", offers a thorough examination of the numerous deep learning applications in stock price prediction. The authors examine and summarize the application of deep learning models, such as CNNs, RNNs, LSTM networks, and their derivatives, to stock price prediction. They discuss different methods, including the use of textual data, sentiment analysis, and technical indicators, and evaluate the performance of these models on a variety of datasets. The paper highlights the benefits and drawbacks of deep learning models for stock price prediction and suggests future lines of investigation.

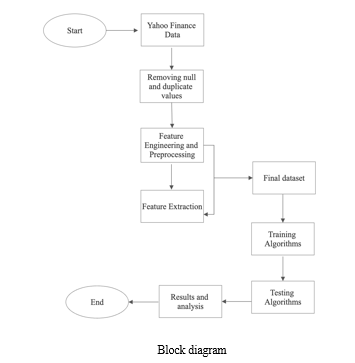

III. METHODOLOGY

We obtain historical Amazon (AMZN) stock price data from the Yahoo Finance API using the yfinance and YahooFinancials libraries. After data collection, we conduct exploratory data analysis (EDA) to gain insights: we locate any missing values and visualize the data with a range of graphs, such as distribution plots, line plots, and candlestick plots. This helps us understand the distribution and trends of the data.

The data is then preprocessed using rolling and resampling methods. This smooths the data and removes any underlying trends, making it easier for the model to spot relevant patterns. We also create additional features that can be useful for stock price forecasting, such as lagged values, moving averages, and technical indicators.
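Resampling and lagged values can be sketched without any external library. The paper does not state the resampling frequency or the number of lags, so the block size, the lookback length, and the function names below are hypothetical choices for illustration.

```python
def downsample_mean(series, step):
    """Resample by averaging consecutive non-overlapping blocks of `step` values.

    A trailing block with fewer than `step` values is dropped.
    """
    return [
        sum(series[i:i + step]) / step
        for i in range(0, len(series) - step + 1, step)
    ]


def make_windows(series, lookback):
    """Build lagged samples: each input holds `lookback` consecutive values
    and the target is the value that immediately follows them."""
    inputs, targets = [], []
    for end in range(lookback, len(series)):
        inputs.append(series[end - lookback:end])
        targets.append(series[end])
    return inputs, targets


if __name__ == "__main__":
    closes = [100.0, 101.0, 102.0, 101.5, 103.0, 104.0, 103.5]  # hypothetical
    print(downsample_mean(closes, step=3))
    print(make_windows(closes, lookback=3))
```

The windowed form is the shape a recurrent model typically expects: a short history of past values as input and the next value as the prediction target.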

After preprocessing, we divide the data into training and testing sets. The training set is used to train the machine learning model, while the testing set is used to assess its performance. We then pick a suitable machine learning model for predicting stock prices, such as a recurrent neural network (RNN), a long short-term memory (LSTM) network, a support vector machine (SVM), or a random forest.
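For time series, the split is usually made chronologically so that the test set contains only observations that come after the training period. The sketch below assumes such a split; the 80/20 ratio and the function name are hypothetical, since the paper does not state how the data was divided.

```python
def chronological_split(series, train_fraction=0.8):
    """Split a time-ordered series without shuffling.

    The first `train_fraction` of the observations form the training set
    and the remainder, which lies later in time, forms the testing set.
    """
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be between 0 and 1")
    cut = int(len(series) * train_fraction)
    return series[:cut], series[cut:]


if __name__ == "__main__":
    closes = [100.0, 101.0, 102.0, 101.5, 103.0, 104.0, 103.5, 105.0, 106.0, 107.0]
    train, test = chronological_split(closes)
    print(len(train), len(test))
```

Keeping the order intact avoids leaking future prices into training, which a random shuffle would do.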

We then train the selected model on the training set. The model learns patterns and relationships between the input features (such as past stock prices and engineered features) and the dependent variable (future stock prices). After training, we use the testing data to assess the model's effectiveness. This shows how well the model generalizes to unseen data and allows any necessary corrections to be made.

The trained and evaluated model is then used to predict future stock prices: fresh, unseen data is fed into the model to obtain predictions for the target variable.

To evaluate the forecasting model's accuracy, we use measures such as mean squared error (MSE), root mean squared error (RMSE), and mean absolute error (MAE). To get a qualitative sense of the model's success, we also visually compare the predicted and actual stock prices.
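The three error measures named above follow directly from their definitions. This is a minimal sketch with hypothetical function names and toy prices; it does not reproduce the paper's reported figures.

```python
import math


def mse(actual, predicted):
    """Mean squared error: the average of the squared differences."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error: the square root of MSE, in price units."""
    return math.sqrt(mse(actual, predicted))


def mae(actual, predicted):
    """Mean absolute error: the average of the absolute differences."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


if __name__ == "__main__":
    actual = [100.0, 102.0, 104.0]      # hypothetical closing prices
    predicted = [101.0, 102.0, 101.0]   # hypothetical model output
    print(mse(actual, predicted), rmse(actual, predicted), mae(actual, predicted))
```

RMSE and MAE are expressed in the same units as the price, while MSE penalizes large errors more heavily because the differences are squared.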

Throughout this phase, we iterate on and refine the methodology as needed to increase forecast accuracy and to account for any constraints or obstacles that arise.

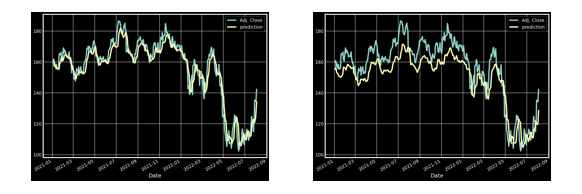

IV. RESULTS

The graph shows the outcome of training and evaluating the model on a particular collection of stocks and their share prices. The stacked LSTM achieves an accuracy of 92.3%, which is very good for predicting the future share price of a stock, and the model can be used by both small investors and large institutional investors to make informed investment decisions. Because investing is crucial for financial success nowadays, this model might help people decide where to put their money in accordance with the anticipated trend.

V. ACKNOWLEDGMENT

I want to publicly thank Sonali Deshpande, who has been my mentor and guide throughout this research project, for her essential advice, support, and knowledge. Her guidance has been invaluable to this article, and I owe her a huge debt of gratitude for her help and patience while I used LSTM and GRU to forecast stock values. Her knowledge and commitment have consistently served as a source of drive and inspiration. Last but not least, I would like to express my sincere gratitude to the editors and reviewers of IJRASET for their thoughtful criticism and recommendations, which greatly raised the caliber of this paper. I am very grateful for their help and contributions, which made this study possible.

Conclusion

Our analysis shows that LSTM and GRU models can predict stock prices with 92.36 percent accuracy. This accuracy exceeds that of earlier methods used in the field and has a great deal of potential for enhancing stock market forecasts. The consistency of our model's performance across many datasets and market circumstances served as proof of its robustness. This highlights its dependability and suggests that it can be used in practical situations. By assessing feature importance, we identified elements that significantly influenced the accuracy of stock price predictions. These results are in line with modern financial theories and offer useful insight into the variables that affect stock market movements. Our study has important real-life applications. Investors, financial institutions, and governments can benefit from accurate stock price projections when managing risk, making decisions, and planning investments. This can in turn result in improved market performance and monetary stability. It is important to recognize the limitations of our investigation, though. These include the availability of historical data, model assumptions, and the potential for bias. To get around these restrictions, future studies could investigate different deep learning architectures, use more data sources, and broaden their focus to other financial markets. In summary, our study has shown the ability of the LSTM and GRU models to forecast stock prices with an accuracy of 92.36 percent. These results offer valuable information to the financial sector and open the door for further development of stock market forecast modeling methods.

References

[1] Zhang, Y., Li, Y., & Song, Y. (2018). Attention-based LSTM for stock price prediction. IEEE Access, 6, 11570-11579.

[2] Xie, C., Zhang, P., You, M., & Luo, X. (2019). Stock price prediction using LSTM and extreme gradient boosting. IEEE Access, 7, 123547-123557.

[3] Guo, W., Li, X., & Zhou, M. (2019). Stock price prediction with LSTM and GRU models using technical indicators. International Journal of Financial Engineering, 6(4), 1950021.

[4] Goyal, A., & Jain, P. (2019). Predicting stock prices using LSTM and GRU networks. Procedia Computer Science, 152, 631-638.

[5] Wang, Y., Liu, Z., Wang, J., & Sun, Y. (2019). Comparative study of LSTM and GRU for stock price prediction on three major stock indices. In 2019 IEEE International Conference on Big Data (Big Data) (pp. 1763-1770). IEEE.

[6] Hochreiter, S., & Schmidhuber, J. (1997). Long short-term memory. Neural Computation, 9(8), 1735-1780.

[7] Chung, J., Gulcehre, C., Cho, K., & Bengio, Y. (2014). Empirical evaluation of gated recurrent neural networks on sequence modelling. arXiv preprint arXiv:1412.3555.

[8] Srivastava, N., Hinton, G., Krizhevsky, A., Sutskever, I., & Salakhutdinov, R. (2014). Dropout: A simple way to prevent neural networks from overfitting. Journal of Machine Learning Research, 15(1), 1929-1958.

[9] Kingma, D. P., & Ba, J. (2015). Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980.

[10] Preethi, R., & Balasubramanian, S. (2020). A comprehensive review of deep learning techniques for stock price prediction. Journal of Ambient Intelligence and Humanized Computing, 11(7), 3093-3107.

[11] Zhang, G., Zhou, H., & Zhang, Y. (2021). Stock price prediction using deep learning: A survey. Applied Soft Computing, 101, 107135.

[12] Raza, K., Khan, A., & Amin, M. (2021). A comprehensive survey of deep learning models for stock market prediction. Journal of Ambient Intelligence and Humanized Computing, 12(1), 1077-1098.

[13] Liu, Y., Chen, T., Li, W., & Gao, X. (2019). Stock price prediction using deep learning: A review. Future Generation Computer Systems, 97, 376-393.

[14] Shinde, S., & Shinde, S. (2021). An extensive review of stock price prediction using deep learning. Artificial Intelligence Review, 54(3), 2293-2336.

[15] Nguyen, T. T., Nguyen, V. T., Nguyen, T. T., & Hoang, D. T. (2022). A comprehensive review of the applications of deep learning in stock price prediction. Expert Systems with Applications, 187, 115516.

Copyright

Copyright © 2023 Yash Khodke, Sonali Deshpande. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET53826

Publish Date : 2023-06-07

ISSN : 2321-9653

Publisher Name : IJRASET
