Ijraset Journal For Research in Applied Science and Engineering Technology

Design and Analysis of Unlocking Student Emotions: Enhancing E-Learning with Facial Emotion Detection

Authors: Mr. Soham Jagtap, Mr. Aditya Marne, Mr. Adnan Sheikh, Mr. Vivek Potdar, Prof. Parinita Chate

DOI Link: https://doi.org/10.22214/ijraset.2023.57812

Abstract

In all forms of learning, students are expected to make the most of the time allotted to them during instruction. When they pay close attention to the material being taught, their performance rises. To provide a framework for future work, we attempt to ascertain the feelings and behaviors of students participating in an online course and to examine their distracted behavior. This study of students' tendency toward distraction can help teachers monitor these students and assist those who want to improve their performance. Investigating the distracted behavior of students during virtual classes requires knowledge of image processing, machine learning, and deep learning techniques. The COVID-19 pandemic had a drastic effect, forcing the shutdown of traditional classrooms and a shift in teaching strategies to an online format. It is crucial to ensure that students are appropriately engaged in online learning sessions in order to create an environment that is as engaging as a traditional offline classroom. This study suggests using facial emotion to gauge online learners' interest in real time. This is accomplished by classifying the students' moods during the online learning session from their facial expressions.

I. INTRODUCTION

E-learning has become a revolutionary force in the ever-changing field of education, providing students of all ages and backgrounds with a level of flexibility and accessibility never before possible. With the widespread availability of online courses, virtual classrooms, and digital resources, students increasingly take part in educational experiences that go beyond traditional classroom walls. In this digital age, however, the emotional health and experiences of students have become even more important than technological skills and content delivery.

A. Current Scenario in Online Education

Because of the pandemic currently affecting several nations, students are advised to attend classes virtually using any available device. When teaching online, teachers need to keep an eye on their students' attentiveness. Our observation indicates that teachers fall into several types:

1. Teachers who, in addition to instructing, carefully watch how their students behave and call on them repeatedly to ensure that they are learning and staying alert.

2. Teachers who start a lesson, point out the students who are becoming side-tracked, give them a warning, and then resume.

3. Instructors who do not check whether their students are distracted; they simply keep teaching the lesson.

4. Professors who pique students' interest by consistently inspiring enthusiasm, encouraging curiosity, and letting students take part in activities.

B. Our Study of Student Distraction

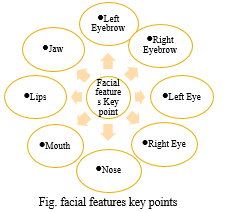

Our study of student distraction led us to investigate facial landmark identification and the computation of changes in position and rotation of face landmarks in a video. Active research is being conducted in the related areas of emotion detection, facial landmark recognition, driver fatigue detection, and facial recognition.

We found in our study that there are a number of reasons why online learners become distracted. Looking left or right, turning away from the class, or turning away from the devices can all be considered forms of distraction. When a student looks away from the device and moves their neck and eyes left or right for an extended period of time, it indicates that they are not engaged in the material and are not paying attention.
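The "extended period of time" cue described above can be sketched as a simple rule over per-frame head pose. This is a minimal illustration, not the authors' implementation: the `HeadPose` type, the 30-degree yaw limit, and the 3-second minimum are all hypothetical values chosen for the example, and the yaw angles are assumed to come from some upstream landmark-based pose estimator.

```python
from dataclasses import dataclass


@dataclass
class HeadPose:
    """Per-frame head orientation in degrees; 0 means facing the screen."""
    yaw: float  # left/right rotation (hypothetical input from a pose estimator)


def distracted_spans(poses, fps, yaw_limit_deg=30.0, min_seconds=3.0):
    """Return (start_frame, end_frame) spans where |yaw| stays above the
    limit for at least `min_seconds`. Thresholds are illustrative only."""
    min_frames = int(min_seconds * fps)
    spans = []
    start = None
    for i, pose in enumerate(poses):
        looking_away = abs(pose.yaw) > yaw_limit_deg
        if looking_away and start is None:
            start = i  # a candidate distraction span begins
        elif not looking_away and start is not None:
            if i - start >= min_frames:
                spans.append((start, i - 1))
            start = None
    # Close a span that runs to the end of the recording.
    if start is not None and len(poses) - start >= min_frames:
        spans.append((start, len(poses) - 1))
    return spans
```

Short glances away from the screen fall below `min_frames` and are ignored, so only sustained head turns are reported.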

C. Problem Statement

In online education, understanding how students feel is important. This project aims to use technology to recognize students’ emotions through their facial expressions, making e-learning more responsive and engaging.

D. Goal

To enhance the quality and effectiveness of E-Learning experiences by systematically detecting, understanding, and addressing the emotional states of students, thereby promoting engagement, satisfaction, and improved learning outcomes.

II. LITERATURE SURVEY

- This study covered the efforts of various researchers. It addressed several concerns regarding the identification of facial expressions through face detection, feature extraction, analysis, and classification techniques, and it provides in-depth details on current methods used in every phase of facial expression recognition. This information supports future research directions and strengthens the understanding of current trends. The study also covered the advantages and disadvantages of several technological approaches that enhance the accuracy of facial expression recognition in image processing.

- This study aims to facilitate learners' calm and productive learning experiences by enabling real-time emotion identification. According to the experimental findings, the CNN-BiLSTM algorithm reported here has an accuracy of 98.75%, which is at least 3.15% higher than other similar algorithms, and a recall rate that is at least 7.13% higher. Furthermore, good recognition results can be obtained with a recognition accuracy of at least 90%.

- In "Machine Learning-Based Student Emotion Recognition for Business English Class," Yuxin Cui, Sheng Wang, and Ran Zhao developed a student emotion detection model, suggested a feature extraction technique for facial expression data, and gathered the emotional states of business English students. The study reached the following conclusions: 1. There are three types of facial expression extraction techniques: the global information approach, the geometric feature method, and the mixed feature method; there are also three types of dynamic techniques: differential imaging, optical flow, and feature point tracking. 2. Tracking the feature points of changes in facial expressions is one technique for dynamic feature extraction; background data unrelated to expressions can be disregarded in the process. 3. The emotion recognition method tracks students' emotional states in real time while they are studying English. When it senses that students are getting bored or dissatisfied, the system promptly moves to topics that interest them or are simpler to understand, in order to keep them engaged in their studies.

- Eye Blink Based Fatigue Detection for Computer Vision Syndrome Prevention by Divjak, M., and Bischof, H. In: MVA (2009), pp. 351-353. Divjak and Bischof analyzed and evaluated three characteristics (eye tracking, head movement, and eye closure time) in order to generate a warning when the user was found to be experiencing "computer vision syndrome." They used OpenCV to localize the head and eyes and established threshold values for the movements. If a movement exceeds its threshold value, an alert is raised to warn the user.

- Turabzadeh et al. conducted a study on real-time facial expression recognition using the Local Binary Pattern (LBP) algorithm. The study involved extracting LBP features from the recorded video and using those features as input for a K Nearest Neighbour (K-NN) regression using dimensional labels. Using MATLAB Simulink, the system's accuracy was 51.28%, whereas in the Xilinx simulation, it was 47.44%.

- Bidwell and Fuchs [6] measured student engagement with an automated gaze system. They used classroom video recordings to create a classifier for student interest, employing face tracking technology to capture the students' focus. In order to train a Hidden Markov Model (HMM), the automatically generated gaze pattern was compared to the pattern produced by the observations of a panel of experts. Nevertheless, the HMM produced a subpar classification; they intended to create eight distinct behavior categories, but could only determine whether a student is "engaged" or "not engaged."
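To make the LBP feature step in the Turabzadeh et al. entry more concrete, the following is a minimal sketch of the basic 3x3 Local Binary Pattern operator in plain Python. The clockwise bit ordering and the `>=` comparison are one common convention and are assumptions here; the survey does not state which LBP variant that study used.

```python
def lbp_code(patch):
    """Compute the 8-bit Local Binary Pattern code for a 3x3 grayscale patch.

    Each neighbour contributes a 1 bit when it is at least as bright as the
    centre pixel. Bit order (clockwise from top-left) is an assumed convention.
    """
    center = patch[1][1]
    offsets = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    code = 0
    for bit, (r, c) in enumerate(offsets):
        if patch[r][c] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(image):
    """256-bin histogram of LBP codes over the interior pixels of a 2D list."""
    hist = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            patch = [row[c - 1:c + 2] for row in image[r - 1:r + 2]]
            hist[lbp_code(patch)] += 1
    return hist
```

A histogram like this, computed per face region, is the kind of feature vector that could then be passed to a nearest-neighbour model.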

III. ANALYSIS OF PROPOSED MODEL

A. Feasibility

- Technological Developments: It is now possible to create algorithms and systems for emotion detection thanks to developments in computer vision, natural language processing, and machine learning.

- Microphones and Webcams: The majority of students have access to microphones and Webcams, which makes data collection for emotion detection possible.

- Data Availability: It is possible to create precise algorithms since a substantial amount of data is accessible for training emotion detection models.

B. Scope

- Student Engagement: Teachers can adjust content to keep students interested by using emotion detection to measure motivation and engagement levels among their students.

- Tailored Learning: By modifying content according to a student's emotional state, emotion detection can result in tailored learning pathways.

- Early Intervention: By recognizing pupils who are having difficulties or are under stress, early intervention and assistance can be provided.

- Feedback and Improvement: Emotion data can give teachers insight into the success of their lesson plans and curriculum, enabling them to make necessary adjustments.

- Accessibility: By detecting students with varying emotional needs and offering suitable support, emotion detection can be utilized to make online learning more accessible.

C. Challenges

While implementing an online education system with emotion detection can offer various advantages, it also comes with several challenges. Addressing these challenges is crucial to ensure the ethical use of technology and the creation of a supportive learning environment. Here are some challenges associated with this model:

- Privacy: Gathering information about feelings gives rise to privacy issues. Anonymization of data, appropriate consent, and data security protocols are crucial.

- Ethical Concerns: It is important to use emotion detection in an ethical and responsible manner, avoiding any acts that could harm students or jeopardize their privacy.

- Accuracy: Complex emotions may not always be correctly interpreted by emotion detection programs. False negative and false positive results can cause problems.

- Cultural Differences: It can be difficult to develop universal models of emotions since various cultures express emotions in different ways.

- Resource-intensive: A substantial amount of infrastructure, technology, and experience may be needed to implement emotion detection systems.

- User Acceptance: Problems with user acceptance may arise from students' discomfort with the concept of emotion detection.
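The accuracy challenge listed above is usually quantified from counts of correct and incorrect detections. The sketch below shows the standard definitions for a binary "disengaged" detector; the function name and dictionary keys are illustrative and are not taken from the paper.

```python
def detection_metrics(tp, fp, fn, tn):
    """Standard binary-classification metrics from confusion-matrix counts.

    tp/fp/fn/tn = true positives, false positives, false negatives,
    true negatives. Ratios with an empty denominator are reported as 0.0.
    """
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }
```

A high false positive rate means attentive students are flagged as disengaged, while low recall means disengaged students go unnoticed; both matter when alerts are sent to teachers.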

D. Implications

The integration of emotion detection in online education systems has various applications that can enhance the learning experience, improve student engagement, and provide valuable insights to educators. Here are some applications:

- Educational Design: The research's conclusions can be used to improve student motivation, engagement, and overall satisfaction when designing online courses.

- Early Intervention: Teachers can help students who could be experiencing emotional difficulties with the online learning process in a timely manner by using real-time emotion detection.

- Individualized Learning: Based on unique emotional states and preferences, emotion data can help create individualized learning pathways and content recommendations.

- Ethical Considerations: The project will give ethical data use first priority, protecting participant privacy and consent. We shall completely adhere to ethical norms for emotion recognition and data utilization in educational contexts.

- Enhanced Learning Experiences: More interesting, inspiring, and successful e-learning tactics and content can be created by taking into account and addressing students' emotional states.

- Enhanced Retention and Success: By identifying students who may be having difficulties or are disengaged, instructors can provide early intervention and support to enhance the learning outcomes of these students.

- Personalized Education: By using the data gathered on students' emotions, learning pathways may be created that are specifically tailored to each student's requirements and preferences.

- Inclusive Education: Emotion detection promotes inclusivity in online learning by identifying emotional barriers experienced by students with particular learning needs.

- Research and Innovation: This field's ongoing studies may produce cutting-edge methods and resources for emotion-aware e-learning, which would raise the standard of distance learning.

IV. SOFTWARE REQUIREMENTS SPECIFICATION

A. Functional Requirements

- User Registration and Authentication: Enable administrators, teachers, and students to securely register and log in. To increase security, enable multi-factor authentication.

- User Profile Management: Permit users to add and edit academic and personal details to their profiles. Provide users the ability to adjust their privacy settings and other preferences.

- Real-Time Communication: Incorporate live chat, discussion boards, and video conferencing capabilities to facilitate real-time communication between educators and learners. Encourage both individual and group conversations.

- Emotion Identification and Analysis: Use natural language processing, computer vision, and audio analysis methods to identify emotions. During online sessions and in response to course materials, give students access to real-time emotional analysis.

- Adaptive Learning: Adaptive learning modifies the pace and difficulty of course material by utilizing recognized emotions. On the basis of emotional states, suggest additional readings.

- Feedback and Alerts: Give students immediate feedback on their emotional states and degree of involvement through alerts. Notify teachers when students show signs of confusion, irritation, or disengagement.

- Management of Consent and Data Privacy: Put in place secure data storage and privacy safeguards to safeguard users' sensitive information. Make sure users give their informed consent before any emotional data is collected or used.

- Analytics and Reports: Produce reports on engagement levels, emotional trends, and student success. Provide analytics so that educators may make informed judgments based on facts.
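The Adaptive Learning requirement above could be prototyped as a small rule that nudges course difficulty according to the detected emotion. The mapping below is entirely hypothetical and exists only to illustrate the idea; a real system would need to derive such rules from evidence.

```python
# Hypothetical mapping from a detected emotion to a difficulty adjustment.
# These values are illustrative assumptions, not results from the paper.
ADJUSTMENT = {
    "Happy": +1,
    "Surprised": 0,
    "Neutral": 0,
    "Sad": -1,
    "Angry": -1,
    "Fear": -1,
}


def next_difficulty(current, emotion, lowest=1, highest=5):
    """Return the next difficulty level, clamped to [lowest, highest].

    Unknown emotion labels leave the level unchanged.
    """
    step = ADJUSTMENT.get(emotion, 0)
    return max(lowest, min(highest, current + step))
```

Clamping keeps the level inside the course's defined range, so repeated negative readings cannot push the difficulty below the easiest material.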

B. Non-functional Requirements

1. Performance Requirements

a. Real-time Emotion Recognition: To ensure the least amount of delay, the system must be able to handle audio and visual input for emotion recognition in real-time.

b. Scalability: The platform must be able to accommodate an increase in users and courses without seeing a noticeable drop in performance. Peak loads during registration periods or live events should be supported.

c. Response Time: The system should provide quick response times for user interactions, such as loading course materials, turning in assignments, or using real-time communication capabilities.

d. Content Delivery: Even for users with weaker internet connections, the platform should provide high-quality video streaming and content delivery with minimal lag or delay.

2. Safety Requirements

a. Data Privacy: To ensure that student emotional data is gathered and stored safely, the system must adhere to data privacy rules. Data that is personally identifiable (PII) needs to be safeguarded.

b. User Authentication: To stop unwanted access to the system, robust user authentication and authorization procedures must be in place.

c. Secure Communication: To avoid data breaches and eavesdropping, all data transported within the platform should be encrypted.
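One way to approach the Data Privacy item above is to store emotion records under a keyed pseudonym instead of the student's real identifier. The sketch below uses HMAC-SHA256 from the Python standard library; the function name and the idea of a single server-side secret key are assumptions made for illustration.

```python
import hashlib
import hmac


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Return a stable pseudonym for a student identifier.

    The same (id, key) pair always yields the same token, so records can be
    linked over time, but the token cannot be recomputed or reversed without
    the secret key.
    """
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()
```

Pseudonymization of this kind reduces exposure if the emotion store leaks, but it is not full anonymization: whoever holds the key can still recompute the mapping.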

3. Security Requirements

a. Vulnerability Assessment: To find and fix system vulnerabilities, regular penetration tests and security assessments should be carried out.

b. User Data Protection: Put in place defences against typical security risks like SQL injection and cross-site scripting (XSS) to prevent data breaches.

c. Security Incident Response: Create an incident response strategy to deal with security lapses in a timely and efficient manner.

d. Access Control: Make sure users have the right amount of access according to their jobs by implementing access control to stop unauthorized modifications to settings or content.

e. User Account Security: To prevent unwanted access to user accounts, put in place password policies, account lockout procedures, and secure password storage.

f. Monitoring and Logging: To identify and address security incidents, employ thorough logging and ongoing monitoring of system activity.
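For the secure password storage mentioned under User Account Security, a minimal sketch using only the Python standard library might look as follows. PBKDF2 is one of several acceptable choices, and the iteration count here is an assumed value that should be tuned for the deployment hardware.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # assumed work factor; tune for the target hardware


def hash_password(password, salt=None):
    """Derive a PBKDF2-HMAC-SHA256 digest; returns (salt, digest)."""
    if salt is None:
        salt = os.urandom(16)  # fresh random salt per account
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password, salt, expected):
    """Recompute the digest and compare it in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)
```

Only the salt and digest are stored; the plain-text password never needs to be persisted.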

C. System Requirements

1. Software Requirements

a. Emotion Detection Algorithms: Put into practice or make use of already-developed emotion detection algorithms. These algorithms may be based on natural language processing, computer vision, or both.

b. Machine Learning Libraries: To train and implement emotion detection models, use machine learning frameworks such as scikit-learn, PyTorch, or TensorFlow.

c. Data Collection and Storage: Software that ensures data security and privacy while gathering, storing, and managing emotional data from students.

d. Web Development Tools: HTML, CSS, JavaScript, and pertinent web frameworks may be needed for building a web-based e-learning platform.

e. Database management software, such as MySQL, PostgreSQL, or NoSQL databases, is used to manage student and emotional data.

f. Real-time Processing: To handle data as it is generated in live sessions, real-time data processing solutions such as Apache Kafka or Apache Flink are utilized.

g. User Interfaces: Create user interfaces that let educators and students see emotional responses and insights.

h. Tools for Privacy and Security: To protect data privacy and security, use encryption, access controls, and compliance tools.

i. Emotion Labelling Tools: Create or utilize tools to label emotional data so that models can be trained and validated.

j. Video and Audio Processing: Applications that handle audio and video data from students' microphones and webcams; these applications may use computer vision libraries such as OpenCV.
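The data storage items above (c and e) could be prototyped with a small relational table. The sketch below uses SQLite from the Python standard library purely for illustration; the table name, columns, and helper functions are hypothetical, and a production system would more likely use one of the database servers listed. Parameter placeholders are used throughout, which is also the usual defence against SQL injection.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a store with a hypothetical emotion_log table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS emotion_log ("
        "student TEXT NOT NULL, ts REAL NOT NULL, "
        "emotion TEXT NOT NULL, probability REAL NOT NULL)"
    )
    return conn


def record_emotion(conn, student, ts, emotion, probability):
    """Insert one observation; '?' placeholders keep values out of the SQL text."""
    conn.execute(
        "INSERT INTO emotion_log VALUES (?, ?, ?, ?)",
        (student, ts, emotion, probability),
    )
    conn.commit()


def emotion_counts(conn, student):
    """Return {emotion: count} for one student."""
    rows = conn.execute(
        "SELECT emotion, COUNT(*) FROM emotion_log WHERE student = ? GROUP BY emotion",
        (student,),
    )
    return dict(rows.fetchall())
```

Because values are bound as parameters, a malicious identifier such as `x'; DROP TABLE emotion_log; --` is stored as ordinary text rather than executed.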

2. Hardware Requirements

a. A well-made megapixel camera module.

b. Cable for Power Supply

c. Class 10 Micro SD Card, 16GB

D. External Interface Requirements

1. User Interfaces

a. Student Interface: Students should be able to access courses, see content, communicate with instructors, and get immediate emotional state feedback through an easy-to-use and intuitive user interface.

b. Instructor Interface: For the purpose of creating, managing, and tracking students' emotional states in real time, instructors ought to have their own interface. This interface ought to offer guidance and intervention resources.

c. Administrator Interface: Tools for controlling the complete platform, including user accounts, content, and system configurations, are necessary for administrators and support personnel to have access to.

d. Parent/Guardian Portal (K–12): An independent portal or interface for K–12 online learning that allows parents and guardians to keep an eye on their children's academic performance, attendance, and emotional health.

2. Hardware Interfaces:

a. Camera and Microphone: To collect audio and video data for emotion recognition, the system should communicate with the user's camera and microphone.

b. Mobile Devices: Verify that the user interfaces work well and are appropriate for a range of mobile devices, such as tablets and smartphones.

3. Software Interfaces

a. Learning Management System (LMS) Integration: To facilitate easy sharing of content and administration of courses, the platform ought to interface with widely used LMS systems.

b. Video Conferencing Integration: Real-time collaboration and online learning via integration with video conferencing platforms such as Zoom or Microsoft Teams.

c. Third-Party EdTech Tools: Facilitate content distribution and assessment through integration with third-party educational technology tools and APIs.

d. Data Analytics and Reporting Tools: Utilize these resources to obtain information and insights regarding emotional trends and student involvement.

4. Communication Interfaces:

a. Real-Time Chat and Messaging: Implement real-time chat and messaging for student-instructor interaction, group discussions, and student support.

b. Discussion Forums: Provide discussion forums for asynchronous communication and collaboration among students and instructors.

c. Email Notifications: Send email notifications to users for important updates, reminders, and system alerts.

d. APIs for Data Exchange: Develop APIs to allow data exchange between the platform and external systems, such as learning analytics or assessment tools.

V. SOFTWARE QUALITY ATTRIBUTES

- Reliability: The system ought to possess exceptional dependability, guaranteeing minimal unavailability and minimal disturbances to virtual classes.

- Usability: Both students and instructors should find the platform easy to use, with an intuitive UI and simple navigation.

- Performance Efficiency: Enhance system performance to guarantee optimal resource utilization, reduce load times, and sustain system responsiveness during periods of high demand.

- Maintainability: Easy maintenance and upgrades, such as frequent software updates, bug patches, and feature enhancements, should be a priority for the platform's architecture.

- Scalability: Make sure the system can grow either vertically or horizontally to handle higher user loads and content without experiencing appreciable performance drops.

- Interoperability: Facilitate integration with external tools, learning management systems, and other educational technology to improve the learning process.

- Accessibility: Prioritize accessibility features to guarantee that the platform complies with accessibility standards (e.g., WCAG) and can be utilized by those with impairments.

- Compliance: Adhere to pertinent rules, industry best practices, and educational standards. This includes adhering to policies regarding data protection and the caliber of instructional content.

- Error Handling: Put in place strong error-handling procedures to give administrators and users interpretable error messages that aid in the efficient diagnosis and resolution of problems.

VI. METHODOLOGY OF PROPOSED MODEL

A. Automatic Frame Selection

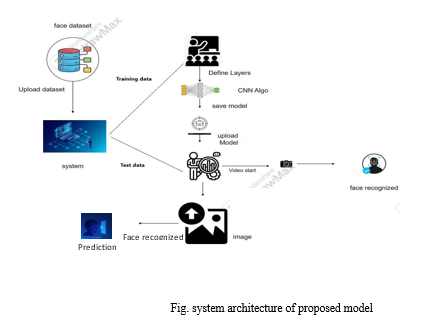

In face recognition for e-learning, automatic frame selection refers to selecting the most appropriate face image from a video to symbolize a user's identity. This guarantees that the right individual is taking online courses or taking tests, and it also helps to increase the accuracy of authentication.

The webcam's video feed serves as our input for the suggested method, which is designed to help learners learn through internet videos.

Frame-based processing is used to extract discriminative features from the video stream. However, not every frame is useful for face detection, so frame selection is performed to obtain the best frames for that purpose. The suggested method pulls images from the video stream every twenty seconds, or after another predetermined interval.
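The fixed-interval part of this frame selection can be sketched as follows. The function covers only the timing (which frame indices to pull); choosing the best face image among candidates is not shown, and the function name and parameters are illustrative assumptions.

```python
def sample_frame_indices(total_frames, fps, interval_seconds=20.0):
    """Return the indices of frames to pull, one every `interval_seconds`.

    The 20-second default follows the interval stated in the text; `fps` is
    the frame rate of the webcam stream.
    """
    step = max(1, round(fps * interval_seconds))  # frames between samples
    return list(range(0, total_frames, step))
```

For a 60-second clip at 30 frames per second, this yields one frame at the start and one at each 20-second mark that falls inside the clip.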

B. Automatic Emotion Recognition using Deep Learning Models

- Inception-V3: E-learning face recognition models use Inception-V3 to extract characteristics from face photos. It contributes to the identification of distinctive face features, improving the model's capacity to identify and authenticate users and guaranteeing safe access to e-learning materials.

- VGG19: VGG19 is used to examine and extract significant facial features in e-learning face recognition models. It facilitates precise user identification and authentication by analysing facial photographs, guaranteeing safe and effective access to e-learning resources and tests.

- ResNet-50: ResNet-50's ability to capture minute facial characteristics makes it an important component of e-learning face recognition models. Accurate user identification is made possible by its deep design, which also improves the security and dependability of e-learning resource and assessment access.

C. Engagement Detection System

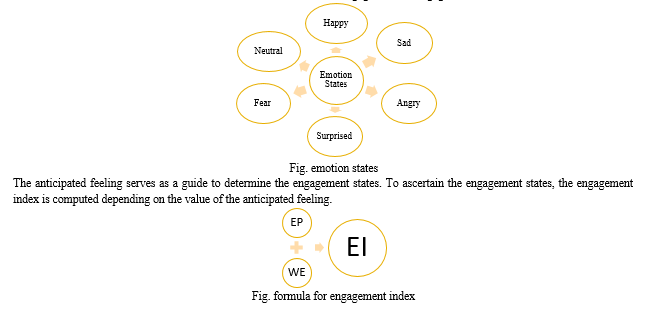

An engagement detection system keeps track of a student's attentiveness in online classes. It is frequently combined with face recognition technology in e-learning. It assesses students' degree of participation by examining their facial expressions and eye movements, which enables teachers to modify their lesson plans and enhance the learning process as a whole. In this instance, facial emotions are classified into six classes, which are further divided into engaged and disengaged classes.

In the engagement computation, WE denotes the weight of the related emotion and EP the emotion probability, where the emotion is one of Neutral, Angry, Sad, Happy, Surprised, and Fear. A deep CNN classifier generates both the corresponding emotion weight and the EP (emotion probability) score. The emotion weight characterizes the emotional state that represents a learner's engagement at that particular moment in time. The emotions are used to compute the engagement percentage.
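As a rough illustration of how WE and EP might combine, the sketch below assumes the engagement score is the product of the dominant emotion's probability and its weight, expressed as a percentage. That product form, the fixed weight table, and the 50% engaged/disengaged cut-off are all assumptions made for this example; the text does not give the authors' exact formula or weight values.

```python
EMOTIONS = ("Neutral", "Angry", "Sad", "Happy", "Surprised", "Fear")

# Hypothetical emotion weights (WE) in [0, 1]; illustrative values only.
EMOTION_WEIGHT = {
    "Neutral": 0.9,
    "Happy": 0.6,
    "Surprised": 0.6,
    "Sad": 0.3,
    "Angry": 0.25,
    "Fear": 0.3,
}


def engagement_percentage(probabilities):
    """Return (dominant_emotion, engagement percentage).

    `probabilities` maps each emotion to its classifier probability (EP).
    Assumed formula: percentage = 100 * WE * EP for the dominant emotion.
    """
    emotion = max(probabilities, key=probabilities.get)
    index = EMOTION_WEIGHT[emotion] * probabilities[emotion]
    return emotion, 100.0 * index


def is_engaged(percentage, threshold=50.0):
    """Binary engaged/disengaged decision; the threshold is an assumption."""
    return percentage >= threshold
```

Under these assumed weights, a confident Neutral prediction scores as engaged, while a confident Angry prediction falls below the threshold.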

VII. SYSTEM ARCHITECTURE

A. User Interface Layer

- Student Interface: This part gives students access to content, courses, and immediate emotional-state feedback.

- Instructor Interface: This interface lets teachers design and oversee classes, keep tabs on pupils' feelings, and offer help in real time.

- Administrator Interface: Users' accounts, content, and system configurations are all managed by administrators.

B. Application Layer

- User Maintenance: Take care of profile maintenance, authentication, and user registration.

- Real-time Communication: To facilitate real-time contact, offer live chat, discussion boards, and video conferencing.

- Emotion Detection and Analysis: Use natural language processing, computer vision, and audio analysis to implement emotion detection.

- Adaptive Learning Algorithms: Create algorithms that change information according to emotions that are identified.

- Alerts and Feedback: Provide students with immediate feedback and allow for instructor intervention.

- Privacy and Consent Management: Make sure that emotional data is collected and stored securely and with user consent.

C. Business Logic Layer

- Adaptive Learning Engine: Uses emotional data analysis to modify information and recommend more sources.

- Data Analytics and Reporting: Produce reports on emotional patterns and student participation.

- User Notifications: Set up email alerts and notifications for significant changes.

- Security and Access Control: Implement data security protocols and access control guidelines.
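The Security and Access Control item above maps naturally onto role-based checks for the three user groups named in the User Interface Layer. The role names follow the text; the specific permission strings are hypothetical placeholders.

```python
from enum import Enum


class Role(Enum):
    STUDENT = "student"
    INSTRUCTOR = "instructor"
    ADMINISTRATOR = "administrator"


# Hypothetical permission sets; a real deployment would define its own.
PERMISSIONS = {
    Role.STUDENT: {"view_course", "view_own_emotions"},
    Role.INSTRUCTOR: {"view_course", "edit_course", "view_class_emotions"},
    Role.ADMINISTRATOR: {"view_course", "edit_course", "manage_users", "edit_settings"},
}


def is_allowed(role, action):
    """Return True only when the role's permission set contains the action."""
    return action in PERMISSIONS.get(role, set())
```

Denying by default (an unknown role or action returns False) keeps unauthorized changes to settings or content from slipping through when new actions are added.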

VIII. FUTURE SCOPE

Looking ahead, there is a lot of promise for improving E-learning and comprehending students' emotions in the future. As technology develops further, we should anticipate the release of more advanced instruments that are intended to track and react to students' emotional states more accurately, opening the door to a more customized and compassionate learning environment. Furthermore, as online and remote learning continue to expand in popularity, there will likely be an even greater need for emotional support and involvement in virtual classrooms. This changing environment offers exciting opportunities for the creation of cutting-edge teaching strategies that skilfully incorporate emotional intelligence into the classroom, fostering a holistic learning environment where students feel truly appreciated, understood, and inspired in addition to learning new material. This might therefore lead to a major improvement in their general well-being and academic performance, ushering in a new era of education that is both intellectually and emotionally stimulating.

Conclusion

Unlocking students' emotions and improving the state of e-learning is a journey that goes beyond technology and has the power to completely change the nature of education. With the advent of digital learning, we now know that although material can be displayed on screens, the real link that connects students to their online classes is emotional involvement. As we embark on this path, it's critical to keep in mind that learning about students' experiences involves more than just data collection. It all comes down to empathy and comprehension. It entails identifying when a student is having a difficult period, acknowledging their accomplishments, and fostering an environment that is emotionally supportive. This is a personalized approach for every student, understanding that their emotions are as unique as their personalities; it's not a one-size-fits-all solution. By taking on this task, we're opening the door for an education system that is more understanding, inclusive, and productive. We're giving teachers the tools they need to adapt and satisfy the emotional needs of their students. We're making sure that in an increasingly digital world, students are acknowledged, heard, and valued. In summary, unlocking students' emotions and enhancing e-learning is not merely a means to an end but rather the beginning of a deeper educational journey in which emotions play a critical role in the process of learning. It's a commitment to developing our students' hearts and souls in addition to their minds. Through this endeavour, we're transforming lives in addition to education.

References

[1] Vijayanand, G., Karthick, S., Hari, B., Jaikrishnan, V.: Emotion Detection using Machine Learning. CSE, Muthyammal Engineering College, Rasipuram, Tamil Nadu.

[2] Xiaofeng Lu: Deep Learning Based Emotion Recognition and Visualization of Figural Representation (CNN-BiLSTM algorithm).

[3] Yuxin Cui, Sheng Wang, and Ran Zhao: Machine Learning-Based Student Emotion Recognition for Business English Class.

[4] Divjak, M., Bischof, H.: Eye Blink Based Fatigue Detection for Computer Vision Syndrome Prevention. In: MVA (2009), pp. 351-353.

[5] Turabzadeh, S., Meng, H., Swash, R.M., Pleva, M., Juhar, J.: Real-time embedded systems using facial expression emotion recognition. Technologies (2018).

[6] Bidwell, J., Fuchs, H.: Automated gaze tracking in the classroom for measuring student involvement. Behav Res Methods 49, 113 (2011).

[7] Kritika, L.B., GG, L.P.: Learner concentration metric-based student emotion recognition system (SERS) for e-learning enhancement. Procedia Computer Science, 85 (2016).

[8] Kamath, A., Biswas, A., Balasubramanian, V.: Recognizing student participation in e-learning environments by crowdsourced methods. In: IEEE Winter Conference on Applications of Computer Vision (WACV), pp. 1–9. IEEE (2016).

[9] Sharma, P., Esengönül, M., Khanal, S.R., Khanal, T.T., Filipe, V., Reis, M.J.C.S.: Facial emotion analysis as a means of assessing student concentration in an online learning environment. In: International Conference on Innovation and Technology in Education, pages 529–538, 2005. Springer, Teaching and Education (2018).

[10] Anil Audumbar Pise, Mejdal A. Alqahtani, Priti Verma, Purushothama K, Dimitrios A. Karras, Prathibha S, and Awal Halifa: Methods for Facial Expression Recognition with Applications in Challenging Situations.

[11] Oya Celiktutan, Sezer Ulukaya, and Bulent Sankur: "A comparative study of face landmarking techniques," Article number: 13 (2013).

[12] Zouheir Trabelsi, Fady Alnajjar, Medha Mohan Ambali Parambil, Munkhjargal Gochoo, and Luqman Ali: Real-Time Attention Monitoring System for Classroom: A Deep Learning Approach for Student's Behavior Recognition.

[13] Martin, F., Bolliger, D.U.: Engagement matters: Student opinions of the significance of engagement techniques in the online learning environment (2018).

[14] Mehmet Ali Cicekci and Fatma Sadik: "Teachers' and Students' Opinions About Students' Attention Problems During the Lesson," Journal of Education and Learning, Vol. 8, No. 6, 2019, ISSN 1927-5250, E-ISSN 1927-5269. Published by Canadian Center of Science and Education.

Copyright

Copyright © 2024 Mr. Soham Jagtap, Mr. Aditya Marne, Mr. Adnan Sheikh, Mr. Vivek Potdar, Prof. Parinita Chate. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET57812

Publish Date : 2023-12-29

ISSN : 2321-9653

Publisher Name : IJRASET
