Ijraset Journal For Research in Applied Science and Engineering Technology

Using Face Expression Detection, Provide Music Recommendations

Authors: Vivek Krishna Gandolkar, Mrs. Bhavana G

DOI Link: https://doi.org/10.22214/ijraset.2022.45499

Abstract

Human emotion plays an important role in modern life. Emotions stem from human sentiments, which may or may not be outwardly expressed, and they shape a person's individual behaviour in many different ways. Extracting emotion therefore reveals a person's current behavioural state. The goal of this research is to extract facial features, identify emotions, and play music in accordance with those emotions. Alternative algorithms are typically slow and less accurate, and some even require extra hardware such as EEG or physiological sensors. Moreover, many current music recommendation methods rely on historical data to make their recommendations. In the proposed system, facial expressions are captured using a local recording device or a built-in camera. An algorithm then identifies features in the captured image. The proposed system then plays music selected according to the facial expression that was captured.

Introduction

I. INTRODUCTION

Numerous studies over the last few years confirm that music affects how people feel and behave, as well as how their brains function. In one study of the reasons why individuals listen to music, researchers found that regulating arousal and mood was one of the most important functions of music. Two of its most important uses are improving listeners' mood and increasing their level of self-awareness. It has been shown that personality characteristics and emotions are closely connected to musical choices [1].

The brain areas that control emotions and mood also handle the metre, timbre, beat, and intonation of music [2]. Interpersonal interaction is one of the most important aspects of daily life. It conveys a great deal of nuanced information about people, whether through body language, conversation, facial expression, or feelings [3].

Currently, emotion detection is regarded as one of the most important techniques in a wide range of applications, including card reader implementations, monitoring, iris image inquiry, crime investigation, multimedia cataloguing, civil applications, security, and various aspects of human interfaces in multimedia environments.

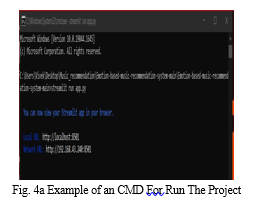

Automated emotion recognition for interactive media such as music or movies is expanding quickly thanks to advances in digital systems technology and efficient feature extraction algorithms. Such a system can play a significant role in many applications, such as interpersonal communication systems and music entertainment. We present an emotion-based recommendation system that uses facial expressions to identify the user's mood and provide a selection of suitable music [13–24].

The proposed system can detect a person's emotions. If the person is feeling down, it plays a playlist of upbeat songs suited to lifting the mood. If the emotion is positive, it offers a playlist of various musical genres that will amplify the pleasant feeling [4]. We used the Kaggle Facial Emotion Recognition dataset for emotion identification [5]. The music player's data set was built from Bollywood and Hindi songs. Facial emotion recognition is implemented using convolutional neural networks, which provide an accuracy of about 95.14 percent [2].
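The playlist rule described above can be sketched in a few lines of Python. This is only an illustration: the emotion groupings, playlist names, and track names are assumptions made for the example and are not taken from the paper's data set.

```python
from typing import List

# Hypothetical playlists; the track names are placeholders.
PLAYLISTS = {
    "uplifting": ["upbeat_track_1", "upbeat_track_2"],
    "amplifying": ["feel_good_track_1", "feel_good_track_2"],
}

# Assumed grouping of emotion labels into negative and positive moods.
NEGATIVE = {"sad", "angry", "fear", "disgust"}
POSITIVE = {"happy", "surprise"}


def choose_playlist(emotion: str) -> List[str]:
    """Return an upbeat playlist for a negative mood, else one that amplifies it."""
    label = emotion.lower()
    if label in NEGATIVE:
        return PLAYLISTS["uplifting"]
    if label in POSITIVE:
        return PLAYLISTS["amplifying"]
    # Assumption: neutral or unknown labels fall back to the amplifying playlist.
    return PLAYLISTS["amplifying"]
```

For example, `choose_playlist("Sad")` returns the uplifting playlist, while `choose_playlist("happy")` returns the amplifying one.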

II. LITERATURE SURVEY

Existing techniques are reviewed to learn about their shortcomings and how to address them. A literature review, or literature survey, is the part of a scholarly paper that covers the most recent research findings as well as the important theories related to a given subject. Many students, scientists, engineers, and other professionals from all over the world have been interested in the latent abilities of individuals that may supply inputs to a system in a variety of ways.

Facial expressions can reveal a person's present mental condition. In interpersonal communication, we frequently convey our sentiments through hand gestures, facial expressions, and tone of voice. Preema et al. [6] claimed that making and managing a large playlist takes a lot of time and effort. According to that paper, the music player itself chooses a tune based on the user's present mood. To create playlists according to mood, the programme detects and categorises sound clips according to their audio attributes. The programme uses the Viola-Jones method for face detection and facial emotion extraction.

Yusuf Yaslan et al. proposed an emotion-based music recommender system that analyses the user's emotion from signals received by wearable computing devices coupled with galvanic skin response (GSR) and photoplethysmography (PPG) physiological sensors [3]. Emotion is a fundamental aspect of human nature and is essential to life in general. In that study, arousal and valence prediction from multi-channel physiological data is treated as a solution to the challenge of emotion identification. Ayush Guidel et al. claimed in [7] that it is simple to determine a person's mental state and present emotional mood from their facial expressions. Their approach was designed around the fundamental emotions, and face identification was accomplished using a CNN model.

Ramya Ramanathan et al. [1] described an intelligent music player based on emotion recognition. The user's local music collection is first grouped according to the mood each album evokes, which is frequently determined by taking the lyrics into account. The paper focuses in particular on the methods available for identifying human emotions when building emotion-based media players, on how a music player detects human emotions, and on the best way to use the proposed system for emotion detection.

It also provides a brief overview of playlist creation, emotion classification, and the operation of the system. CH. Sadhvika et al. [8] described manual playlist segmentation and song annotation based on the user's current emotional state as a time-consuming and labour-intensive process.

However, the methods currently in use are inefficient, require more hardware than necessary, and offer significantly lower accuracy. The technique described in this study aims to cut down both the system's total cost and its computation time. It also aims to improve the accuracy of the system design. The system's facial expression recognition module is validated by comparing it against both user-dependent and user-independent data sets.

III. METHODOLOGY

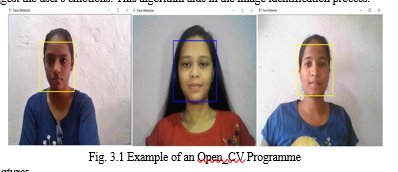

A. Face Recognition

Face recognition is one of the best methods for determining someone's mood. We use it specifically because it maximises the separation between classes during training. The image analysis system first reduces the dimensionality of the face space using principal component analysis (PCA) and then applies Fisher's linear discriminant (FLD), also known as the LDA method, to obtain the characteristic features of the image. A face-matching step based on the minimum Euclidean distance then identifies the expression that indicates the user's emotion. This algorithm aids the image identification process.
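The PCA, FLD, and minimum-Euclidean-distance steps described above can be sketched with NumPy as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names `fit_fisherfaces` and `predict` are hypothetical, faces are assumed to arrive as flattened pixel vectors with integer emotion labels, and the number of PCA components `n_pca` is a free parameter chosen by the caller.

```python
import numpy as np


def fit_fisherfaces(X, y, n_pca):
    """X: (n_samples, n_pixels) flattened faces; y: integer emotion labels."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    mean = X.mean(axis=0)
    Xc = X - mean

    # PCA: the principal axes are the right singular vectors of the centred data.
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    W_pca = vt[:n_pca].T                     # (n_pixels, n_pca)
    Z = Xc @ W_pca                           # samples in the reduced face space

    # FLD: build within-class (Sw) and between-class (Sb) scatter matrices.
    classes = np.unique(y)
    overall = Z.mean(axis=0)
    Sw = np.zeros((n_pca, n_pca))
    Sb = np.zeros((n_pca, n_pca))
    for c in classes:
        Zc = Z[y == c]
        mu = Zc.mean(axis=0)
        Sw += (Zc - mu).T @ (Zc - mu)
        d = (mu - overall)[:, None]
        Sb += len(Zc) * (d @ d.T)

    # Keep the directions with the largest between/within scatter ratio.
    evals, evecs = np.linalg.eig(np.linalg.pinv(Sw) @ Sb)
    order = np.argsort(-evals.real)[: len(classes) - 1]
    W = W_pca @ evecs[:, order].real         # combined PCA + FLD projection
    return mean, W, Xc @ W, y


def predict(model, face):
    """Label a face with the class of its nearest training sample (Euclidean)."""
    mean, W, train_proj, labels = model
    q = (np.asarray(face, dtype=float) - mean) @ W
    dists = np.linalg.norm(train_proj - q, axis=1)
    return labels[np.argmin(dists)]
```

The model returned by `fit_fisherfaces` is passed to `predict` together with a new flattened face; the label of the closest projected training sample is returned as the recognised expression.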

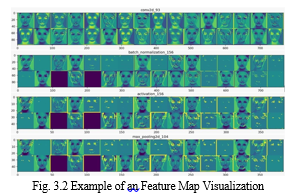

B. Discovering Features

Feature maps, the outputs of the model's layers, act as intermediate representations for every stage after the first. The image whose extracted features are to be analysed is supplied as input, which makes it possible to discover which attributes were most important for classifying the image. Feature maps are produced by applying the filters of the feature detector to the source image or to the feature maps returned by the preceding blocks. Feature map visualisation makes it possible to see how each convolutional layer in the system internally represents a particular input.
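To make the idea of a feature map concrete, the sketch below slides one filter over a small image and applies a ReLU, which is the basic operation a convolutional layer performs. It is an illustrative example only: the function name `feature_map` is hypothetical, the image and filter are plain nested lists, and, as in most deep learning frameworks, the filter is applied without flipping (cross-correlation).

```python
def feature_map(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and apply ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    fmap = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            # Weighted sum of the image patch under the filter.
            for u in range(kh):
                for v in range(kw):
                    acc += image[i + u][j + v] * kernel[u][v]
            row.append(max(acc, 0.0))  # ReLU keeps only positive responses
        fmap.append(row)
    return fmap


# A 4x4 image with a vertical edge and a filter that responds to such edges.
image = [[0, 0, 1, 1]] * 4
vertical_edge = [[-1, 1], [-1, 1]]
edges = feature_map(image, vertical_edge)
```

Here the resulting map is non-zero only in the middle column, where the filter sits across the edge, which is the kind of pattern that feature map visualisation exposes.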

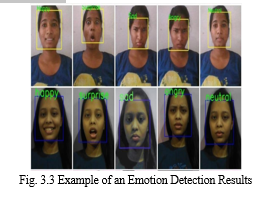

C. Detecting Emotions

Image classification may be binary or multi-class, for example to differentiate between various clothing styles or to identify digits. The learnt attributes of a CNN architecture are difficult to interpret, since CNN models are analogous to a "black box": when an input picture is provided to the CNN model, it essentially just returns the outputs [10]. A CNN model was trained, that is, its weights were learned, to distinguish emotions. A real-time image captured by the user is fed to the trained CNN model. The model labels the image and predicts the emotion.
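The final prediction step can be illustrated as follows: the trained network produces one raw score per emotion class, a softmax turns the scores into probabilities, and the most probable class is reported. This is a sketch under assumptions: the seven labels and their order follow the common convention for the Kaggle facial expression challenge data and are not stated in the paper, and `predict_emotion` is a hypothetical helper that takes the scores as a plain list rather than calling a real model.

```python
import math

# Assumed label order (common convention for the Kaggle challenge data).
EMOTIONS = ["angry", "disgust", "fear", "happy", "sad", "surprise", "neutral"]


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict_emotion(scores):
    """Return the most probable emotion label and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[best], probs[best]
```

Calling `predict_emotion([0.1, 0.0, 0.0, 3.0, 0.2, 0.0, 0.5])` selects `"happy"`, because the fourth score is the largest.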

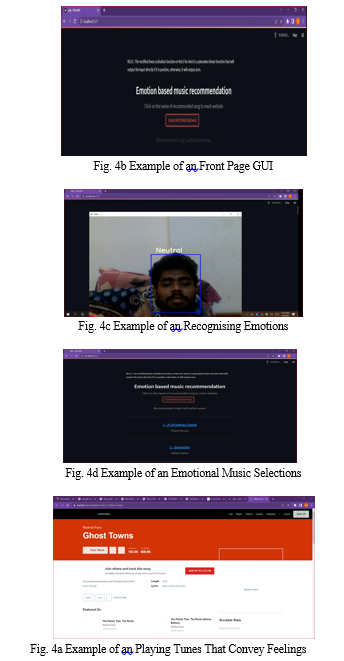

IV. RESULTS AND DISCUSSION

As with other typical music applications, the user can create a playlist. The final option is random mode, which lets the user play music at random rather than in a particular order.

This is one of the ways the system helps to raise the user's mood. When a song is played based on the detected emotion, four different emoticons depicting the user's emotions are displayed at the same time. The songs and the emoticons are each assigned a number for each detected emotion.
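One way to read the numbering scheme and the random mode described above is sketched below. The four emotion names, the text emoticons, and the song identifiers are all assumptions made for illustration; the paper does not list them.

```python
import random

# Assumed set of four emotions, each assigned a number.
EMOTION_IDS = {"happy": 0, "sad": 1, "angry": 2, "neutral": 3}

# Emoticons and songs are looked up by the emotion's number.
EMOTICONS = {0: ":-)", 1: ":-(", 2: ">:-(", 3: ":-|"}
SONGS = {
    0: ["happy_song_a", "happy_song_b"],
    1: ["uplifting_song_a", "uplifting_song_b"],
    2: ["calming_song_a", "calming_song_b"],
    3: ["neutral_song_a", "neutral_song_b"],
}


def now_playing(emotion, rng=random):
    """Return the emoticon to display and a randomly chosen song (random mode)."""
    emotion_id = EMOTION_IDS[emotion]
    return EMOTICONS[emotion_id], rng.choice(SONGS[emotion_id])
```

Passing a seeded generator, for example `now_playing("sad", rng=random.Random(0))`, makes the random selection reproducible while still drawing from the songs assigned to that emotion.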

V. FUTURE SCOPE

Even though this system is fully functional, there is still room for future development. The application can be modified in a number of ways to improve the overall user experience and produce better results. This includes support for the automated playing of music. Future plans for the system include designing a mechanism that could aid, through music therapy, in the treatment of individuals experiencing psychological anguish, stress, severe depression, or trauma. Because the current system performs poorly at low camera resolution and in extremely low light, there is also scope to add capabilities that address these limitations.

Conclusion

A detailed analysis of the literature reveals that there are various ways to put a music recommender system into practice. Approaches put forward by earlier researchers and developers were reviewed, and the goals of our system were fixed based on the results. Since the power and benefits of AI-powered applications are on the rise, our system is a timely application of a popular technology. In this paper, we give a general explanation of how songs can affect a user's mood, as well as advice on how to pick the best music tracks to lift a user's spirits. The implemented system is capable of identifying the user's emotions.

References

[1] Ramya Ramanathan, Radha Kumaran, Ram Rohan R, Rajat Gupta, and Vishalakshi Prabhu, An intelligent music player based on emotion recognition, 2nd IEEE International Conference on Computational Systems and Information Technology for Sustainable Solutions, 2017. https://doi.org/10.1109/CSITSS.2017.8447743

[2] Shlok Gilda, Husain Zafar, Chintan Soni, Kshitija Waghurdekar, Smart music player integrating facial emotion recognition and music mood recommendation, Department of Computer Engineering, Pune Institute of Computer Technology, Pune, India, (IEEE), 2017. https://doi.org/10.1109/WiSPNET.2017.8299738

[3] Deger Ayata, Yusuf Yaslan, and Mustafa E. Kamasak, Emotion-based music recommendation system using wearable physiological sensors, IEEE Transactions on Consumer Electronics, vol. 14, no. 8, May 2018. https://doi.org/10.1109/TCE.2018.2844736

[4] Ahlam Alrihail, Alaa Alsaedi, Kholood Albalawi, Liyakathunisa Syed, Music recommender system for users based on emotion detection through facial features, Department of Computer Science, Taibah University, (DeSE), 2019. https://doi.org/10.1109/DeSE.2019.00188

[5] Research Prediction Competition, Challenges in representation learning: facial expression recognition challenges, Learn facial expression from an image, (KAGGLE).

[6] Preema J.S, Rajashree, Sahana M, Savitri H, Review on facial expression-based music player, International Journal of Engineering Research & Technology (IJERT), ISSN-2278-0181, Volume 6, Issue 15, 2018.

[7] Ayush Guidel, Birat Sapkota, Krishna Sapkota, Music recommendation by facial analysis, February 17, 2020.

[8] CH. Sadhvika, Gutta Abigna, P. Srinivas Reddy, Emotion-based music recommendation system, Sreenidhi Institute of Science and Technology, Yamnampet, Hyderabad; International Journal of Emerging Technologies and Innovative Research (JETIR), Volume 7, Issue 4, April 2020.

[9] Vincent Tabora, Face detection using OpenCV with Haar Cascade Classifiers, Becominghuman.ai, 2019.

[10] Zhuwei Qin, Fuxun Yu, Chenchen Liu, Xiang Chen, How convolutional neural networks see the world - A survey of convolutional neural network visualization methods, Mathematical Foundations of Computing, May 2018.

[11] Ahmed Hamdy AlDeeb, Emotion-Based Music Player Emotion Detection from Live Camera, ResearchGate, June 2019.

[12] Frans Norden and Filip von Reis Marlevi, A Comparative Analysis of Machine Learning Algorithms in Binary Facial Expression Recognition, TRITA-EECS-EX-2019:143.

[13] P. Singhal, P. Singh and A. Vidyarthi (2020) Interpretation and localization of Thorax diseases using DCNN in Chest X-Ray. Journal of Informatics Electrical and Electronics Engineering, 1(1), 1, 1-7.

[14] M. Vinny, P. Singh (2020) Review on the Artificial Brain Technology: BlueBrain. Journal of Informatics Electrical and Electronics Engineering, 1(1), 3, 111.

[15] A. Singh and P. Singh (2021) Object Detection. Journal of Management and Service Science, 1(2), 3, pp. 1-20.

[16] A. Singh, P. Singh (2020) Image Classification: A Survey. Journal of Informatics Electrical and Electronics Engineering, 1(2), 2, 1-9.

[17] A. Singh and P. Singh (2021) License Plate Recognition. Journal of Management and Service Science, 1(2), 1, pp. 1-14.

Copyright

Copyright © 2022 Vivek Krishna Gandolkar, Mrs. Bhavana G. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET45499

Publish Date : 2022-07-10

ISSN : 2321-9653

Publisher Name : IJRASET
