Ijraset Journal For Research in Applied Science and Engineering Technology

Virtual Eye for Blind People Using Deep Learning

Authors: Mamatha C, Kumuda H R, Nethra P D, Rajashekhar S A

DOI Link: https://doi.org/10.22214/ijraset.2022.45931

Abstract

Vision is the most crucial sense for any living being. Yet some people are born blind, while others lose their sight as they age or through misfortune. People with severe visual impairment cannot move about freely or perform basic tasks as sighted people do. This work proposes a simple electronic guidance device with integrated vision that is practical and configurable, and that eases the mobility of blind and visually impaired people both indoors and outdoors. The proposed device aims to provide a wearable visual aid for blind people that accepts verbal instructions from the user. Its capabilities include the identification of objects and people. This will help a blind person manage daily activities and move around their environment. The device enables people with disabilities to be fairly self-reliant and offers an affordable, reliable solution. In short, the "VIRTUAL EYE FOR BLIND USING DEEP LEARNING" project is intended to help people who have lost their sight and to support them with basic mobility using an effective, economical system.

Introduction

I. INTRODUCTION

Millions of people throughout the world live with blindness. In today's hectic world, the average person rarely has time to consider people who are differently abled. As a result, people who are blind or visually impaired constantly need assistance with their daily tasks, especially when they are travelling. Some people see them as a burden, while others simply ignore them and leave them to fend for themselves, which makes them lonely. In other cases, the thought of depending on someone leads to demotivation and a loss of confidence. Among the biggest obstacles are moving from one place to another, identifying people, and detecting obstructions in their path. A few technologies that are readily available on the market help them overcome some of these difficulties, and numerous studies are under way with the sole purpose of creating devices to help people who are blind. A system or device that can assist the visually impaired in all of their activities is therefore required. As noted above, people who are blind or visually impaired must travel with extra care, and in such cases a Global Positioning System (GPS) device equipped with obstacle detection may be useful. Recognizing a person is also difficult for the blind.

Blind people typically recognize others solely by their voice. This is not always effective, because it can be hard to recognize a person whose voice they have not heard in a while. A device that helps identify known people is therefore required. Face detection methods can be used to address this problem, and face recognition is one of the most useful applications of image analysis. The real challenge is to create a device that combines all of these functions and serves as an eye for people who are blind. The goal of this work is therefore to give the visually impaired an aid for everyday tasks such as travelling from one place to another and identifying people.

II. RELATED WORK

Virtual Eye for the Blind using IoT: this work lets blind and visually impaired people benefit from a smart-stick assistive navigation system. The smart stick carries a camera and a Raspberry Pi, which make it easier to identify objects that may be obstacles for a blind person. This information is then analyzed and communicated to the user through earphones. To help avoid puddles, the sensors, together with a voice alert, are placed near the bottom of the stick. YOLO and the Darkflow framework are used to achieve this.

The goal of another study is to design and implement a portable device that helps visually impaired people accurately estimate the distances to the objects and people around them. The proposed device uses a single camera mounted on a Raspberry Pi board and a real-time, CNN-based object detection method called YOLO (You Only Look Once). It is also intended to allow communication between a blind person and a deaf person by recognizing American Sign Language (ASL). The detected object, person, or sign is then communicated to the user via audio. When the user, such as a child, needs assistance or is in danger, a text message can be sent to the parent.

In addition to classifying and identifying items in an image, object detection also localizes those objects and draws bounding boxes around them. The main focus of this work is on identifying dangerous and potentially dangerous objects. The TensorFlow Object Detection API is used to train the model, and the Faster R-CNN algorithm is used for the implementation, to make the detection of dangerous objects easier. The TensorFlow Object Detection API is a powerful tool that makes it quicker and easier for anyone to build and deploy effective image recognition software. The work also gives an understanding of how the TensorFlow framework operates.

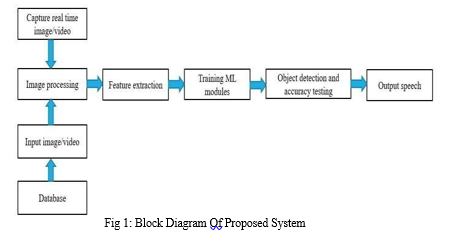

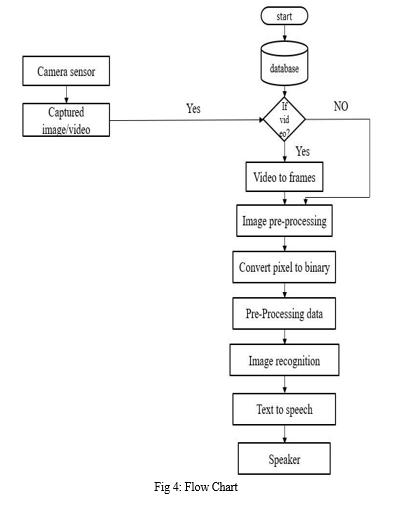

III. PROPOSED SYSTEM

The primary idea behind this work is the development of an object identifier integrated with a speech engine, so that blind people can receive a description, if not an identification, of an object or obstacle from a photograph of it. In the present work we use a software-only implementation to test the integration of an image recognition block and a speech engine block, implemented using OpenCV and TensorFlow. Hence the problem statement may be defined as: “The development of an object identification system integrated with a speech engine to allow the visually impaired to identify objects from captured images”.
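The hand-off between the two blocks can be sketched as follows. This is only an illustration, not the authors' implementation: it assumes the recognition block returns a list of class labels, and the names `describe_detections` and `announce` are hypothetical. The speech engine is passed in as a plain callable so that the sketch does not depend on any particular text-to-speech library.

```python
from collections import Counter
from typing import Callable, Iterable


def describe_detections(labels: Iterable[str]) -> str:
    """Turn the detector's class labels into one sentence to be spoken."""
    counts = Counter(labels)
    if not counts:
        return "No objects detected."
    parts = []
    for label, n in sorted(counts.items()):
        # Naive English plural; good enough for a sketch.
        parts.append(f"{n} {label}s" if n > 1 else f"1 {label}")
    return "Detected " + ", ".join(parts) + "."


def announce(labels: Iterable[str], speak: Callable[[str], None]) -> str:
    """Build the description and pass it to the speech engine (assumed callable)."""
    sentence = describe_detections(labels)
    speak(sentence)
    return sentence
```

For a quick trial, `announce(["chair", "person", "chair"], print)` prints the sentence; a real system would pass a text-to-speech function instead of `print`.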

A. OpenCV

OpenCV is a large open-source library for computer vision, machine learning, and image processing. It plays a significant role in real-time operation, which is essential in modern systems. It can be used to process images and videos in order to identify objects, faces, or even a person's handwriting. When it is combined with libraries such as NumPy, Python can process the OpenCV array structure for analysis. To identify a visual pattern and its various features, we use vector spaces and perform mathematical operations on those features.

B. TensorFlow

TensorFlow is an end-to-end open-source platform for machine learning. It is a rich system for managing all aspects of a machine learning system, but this work focuses on using a particular TensorFlow API to develop and train machine learning models. The TensorFlow APIs are arranged hierarchically, with the high-level APIs built on top of the low-level APIs. Machine learning researchers use the low-level APIs to create and explore new machine learning algorithms. In this work, the high-level API tf.keras is used to define and train machine learning models and to make predictions. tf.keras is the TensorFlow variant of the open-source Keras API.

C. Seaborn

Seaborn is an open-source Python library built on top of Matplotlib. It is used for data analysis and data visualization. Seaborn works seamlessly with the Pandas library and its data frames, and the graphs it creates can easily be customized. Data visualization has several advantages; for example, in any project involving machine learning or forecasting, graphs help us identify useful trends in the data.

D. Flask

Flask is a micro web framework written in Python. It is classified as a microframework because it does not require particular tools or libraries. It has no database abstraction layer, form validation, or any other components for which existing third-party libraries already provide common functionality. However, Flask supports extensions that can add application features as if they were implemented in Flask itself. Extensions exist for object-relational mappers, form validation, upload handling, various open authentication technologies, and several common framework-related tools.

IV. IMPLEMENTATION AND ALGORITHM

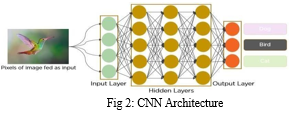

CNNs are a class of deep neural networks that can recognize and classify particular features in images and are widely used for analyzing visual data. Their applications range from image and video recognition, image classification, and medical image analysis to computer vision and natural language processing.

There are two main parts to a CNN architecture:

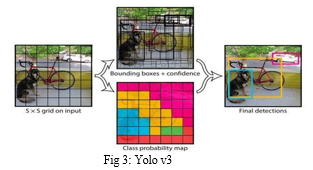

- A convolution tool that separates and identifies the various features of the image for analysis, in a process called feature extraction.

- A fully connected layer that uses the output of the convolution process and predicts the class of the image based on the features extracted in the previous stages.
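The two parts can be illustrated with a deliberately tiny, pure-Python sketch. It is not the network used in this work: the single hand-written kernel, the weights, and all function names are hypothetical, and a real CNN learns many kernels during training. As in most deep learning frameworks, the "convolution" below does not flip the kernel.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Feature extraction: slide the kernel over the image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Keep positive responses only."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def dense(inputs: List[float], weights: Matrix, biases: List[float]) -> List[float]:
    """Fully connected layer: one weighted sum per output class."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def predict_class(image: Matrix, kernel: Matrix,
                  weights: Matrix, biases: List[float]) -> int:
    """Run feature extraction, flatten the result, and pick the best-scoring class."""
    feature_map = relu(conv2d_valid(image, kernel))
    features = [v for row in feature_map for v in row]
    scores = dense(features, weights, biases)
    return max(range(len(scores)), key=scores.__getitem__)
```

With a vertical-edge kernel such as `[[-1, 1], [-1, 1]]`, the feature map responds strongly where a dark column meets a bright one, and the fully connected layer turns those responses into class scores.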

YOLO (You Only Look Once)

You Only Look Once (YOLO) is an object detector that takes parts of an image and processes them. Conventional object detectors run a classifier at every step of a small window that slides across the image, to get a prediction of what is in the current window. This approach is very slow because the classifier has to run many times to get the most certain result.
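A rough count shows why the sliding-window approach is slow. The sketch below simply counts window positions for a square image; the image size, window size, and stride in the usage note are hypothetical values chosen for illustration and do not come from this work.

```python
def sliding_window_positions(image_size: int, window_size: int, stride: int) -> int:
    """Number of classifier runs needed to cover a square image at one window scale."""
    if window_size > image_size:
        return 0
    per_axis = (image_size - window_size) // stride + 1
    return per_axis * per_axis
```

For example, a 416-pixel image scanned with a 64-pixel window and a stride of 16 gives `sliding_window_positions(416, 64, 16)`, which is 23 x 23 = 529 classifier runs at a single scale, compared with one network pass for a single-shot detector.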

YOLO, by contrast, divides the image into a grid of 13x13 cells. It looks at the image only once, which makes it faster. Each grid cell predicts bounding boxes and a confidence for each box. The confidence indicates how certain the model is that the box contains an object; if there is no object, the confidence should be zero. An intersection over union (IOU) is also computed between the predicted box and the ground-truth box. Each bounding box consists of five predictions: x, y, w, h, and confidence. The (x, y) coordinates give the center of the box relative to the bounds of the grid cell, while the width and height are predicted relative to the whole image. Finally, the confidence prediction represents the IOU between the predicted box and any ground-truth box.
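The box parameterization and the IOU measure described above can be sketched as follows. The function names are hypothetical, and the 416-pixel input size is an assumption made only so that each of the 13 cells is a whole number of pixels wide; the text does not state the input resolution.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def decode_box(col: int, row: int, x: float, y: float, w: float, h: float,
               grid_size: int = 13, image_size: int = 416) -> Box:
    """Convert one prediction to pixel corners.

    x, y: box center as a fraction of the grid cell at (col, row).
    w, h: box width and height as fractions of the whole image.
    image_size is an assumed value, not taken from the paper.
    """
    cell = image_size / grid_size
    cx, cy = (col + x) * cell, (row + y) * cell
    bw, bh = w * image_size, h * image_size
    return (cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0
```

An IOU of 1.0 means the predicted box matches the ground truth exactly, and 0.0 means the two boxes do not overlap at all.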

For each grid cell, the classifier predicts what each of its five bounding boxes contains. The confidence score that YOLO outputs shows how certain it is of its prediction. The predicted bounding box encloses the object that has been classified.

The higher the confidence score, the thicker the box is drawn. Each bounding box is associated with a class label. Since each grid cell predicts five bounding boxes and there are 13 x 13 = 169 grid cells altogether, there are 845 bounding boxes in total. Most of these boxes have very low confidence scores, so only the boxes with a final score of at least 55% are kept. This threshold can be raised or lowered according to the requirements. The YOLO architecture is a typical convolutional neural network: the early convolutional layers extract features from the image, while the fully connected layers predict the output probabilities and coordinates. YOLO uses 24 convolutional layers followed by two fully connected layers.
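The box count and the score threshold can be checked with a few lines of code. This is a minimal sketch: the tuple layout `(label, score)` and the name `keep_confident` are hypothetical, and only the grid size, the number of boxes per cell, and the 55% threshold come from the text.

```python
from typing import List, Tuple

GRID_SIZE = 13
BOXES_PER_CELL = 5
TOTAL_BOXES = GRID_SIZE * GRID_SIZE * BOXES_PER_CELL  # 169 cells x 5 boxes = 845

Prediction = Tuple[str, float]  # (class label, final score in [0, 1])


def keep_confident(predictions: List[Prediction],
                   threshold: float = 0.55) -> List[Prediction]:
    """Drop low-scoring boxes and return the rest, highest score first."""
    kept = [p for p in predictions if p[1] >= threshold]
    return sorted(kept, key=lambda p: p[1], reverse=True)
```

Raising `threshold` yields fewer but more reliable detections, while lowering it reports more objects at the cost of more false alarms.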

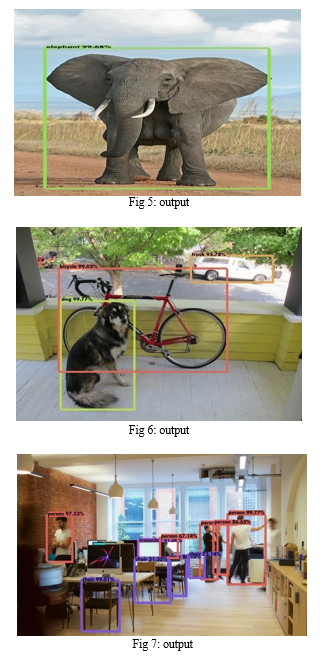

V. RESULT

The proposed system specializes in object detection. The device is designed to be wearable and portable. By integrating the operation of its various components, the system offers visually impaired users a multifunctional tool.

Because the output is provided as voice instructions, its clarity is high. The device does not need Internet access to function, because all of the data is loaded into the system before it is used. This is especially useful where Internet connectivity is not consistently available throughout a city. The device is also straightforward and easy to use, because it does not rely on Android or any other touchscreen-based technology.

Conclusion

In the modern world, living with a disability of any kind, including blindness, can be challenging. A new AI-based prototype has been created to support a blind person's ability to perceive objects. The CNN algorithm used by this AI-based system is flexible and effective. The goal is to examine and resolve the majority of the problems with existing systems and to develop a prototype artificial eye that gives blind individuals independence and safety. Because medical approaches have not solved this problem, a simulation model is proposed as an interim means of detecting and recognizing objects. The proposed system's straightforward architecture and user-friendliness enable the user to be independent in their own home. The technology also seeks to assist the blind in navigating their environment by spotting obstacles, finding their essentials, and reading signs and texts. Initial tests have produced encouraging results, allowing the user to move about safely and freely according to their needs.

Copyright

Copyright © 2022 Mamatha C, Kumuda H R, Nethra P D, Rajashekhar S A. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET45931

Publish Date : 2022-07-23

ISSN : 2321-9653

Publisher Name : IJRASET
