IJRASET Journal for Research in Applied Science and Engineering Technology

Adaptive Weed Detection and Removal Using Cutter and Pesticide Spraying AGBOT

Authors: Manasa S, Tanushree G, Suhas S, Sumanth C. C, Sukanya M

DOI Link: https://doi.org/10.22214/ijraset.2023.50354

Abstract

Agriculture is one of the world's oldest sources of human nourishment. Weeds are a problem because they compete with desirable crops for water, nutrients, and space. A successful weed removal system depends on correctly identifying the unwanted vegetation. The robot is built around an Arduino Uno, and every motion is controlled by the program loaded onto the Arduino. The main purpose of the robot is to identify weeds, remove them with a cutter, and use a pesticide sprayer to spray only where weeds were present.

I. INTRODUCTION

Agricultural Development is the primary concern in the modern world with increasing demand for agricultural products and the need for upgrading the plantation system. Thereby, farmers and researchers have made several efforts to remove unwanted weeds for decades. Weed hinders the growth of crops by competing with the plants for water, nutrition, and sunlight, which results in caustic effects on crop production. Therefore, pesticides and other agrochemicals are popularly used in agriculture farms to kill weeds but may have some acute health effects on living organisms. Thus, one of the significant challenges toward the goal of sustainable development is to decrease the number of pesticides used for controlling the unwanted weeds in the field. This system gives a clear idea and

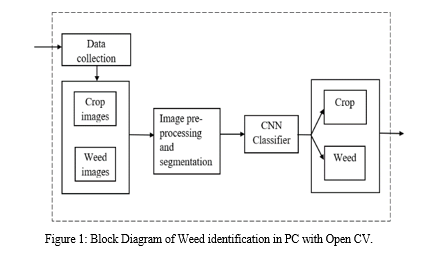

helps to make proper decisions by the use of the information provided by machine learning techniques, image processing as well as neural networks (classifiers). The system provides the report on observations on weed detection by sensing the Image data which is provided by the image processing techniques. The first phase works on training data set, the second phase works on processing on test data where input image size is changed as well as colour and texture features like energy, contrast, homogeneity and correlation is obtained. Then the classifier will classify the test images automatically to decide weed characteristics. For such techniques neural network is used based on learning on training the data. The simulated result shows that network classifier provide the minimum error and better accuracy in classifying the images.
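
The paper names energy, contrast, homogeneity, and correlation as texture features but does not say how they are computed. These four are conventionally derived from a gray-level co-occurrence matrix (GLCM), so the following minimal NumPy sketch assumes a GLCM built from horizontally adjacent pixel pairs of an image already quantized to a few gray levels. The function names `glcm` and `texture_features` are illustrative, not from the paper.

```python
import numpy as np

def glcm(gray: np.ndarray, levels: int, dx: int = 1, dy: int = 0) -> np.ndarray:
    """Symmetric, normalized gray-level co-occurrence matrix for offset (dy, dx).

    `gray` holds integers in [0, levels); dx and dy must be non-negative.
    """
    h, w = gray.shape
    ref = gray[0:h - dy, 0:w - dx]              # reference pixels
    nbr = gray[dy:h, dx:w]                      # neighbours at the chosen offset
    m = np.zeros((levels, levels), dtype=np.float64)
    np.add.at(m, (ref.ravel(), nbr.ravel()), 1) # count each (i, j) gray-level pair
    m = m + m.T                                 # count both directions
    return m / m.sum()                          # counts -> joint probabilities

def texture_features(p: np.ndarray) -> dict:
    """Energy, contrast, homogeneity and correlation of a normalized GLCM `p`."""
    i, j = np.indices(p.shape)
    mu_i, mu_j = (i * p).sum(), (j * p).sum()
    sd_i = np.sqrt(((i - mu_i) ** 2 * p).sum())
    sd_j = np.sqrt(((j - mu_j) ** 2 * p).sum())
    if sd_i == 0 or sd_j == 0:
        corr = 1.0                              # constant image: defined as 1
    else:
        corr = ((i - mu_i) * (j - mu_j) * p).sum() / (sd_i * sd_j)
    return {
        "energy": float(np.sqrt((p ** 2).sum())),
        "contrast": float(((i - j) ** 2 * p).sum()),
        "homogeneity": float((p / (1.0 + (i - j) ** 2)).sum()),
        "correlation": float(corr),
    }
```

For example, `texture_features(glcm(gray, levels=8))` returns the four values for an image quantized to 8 gray levels. scikit-image offers equivalent `graycomatrix` and `graycoprops` functions.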

II. METHODOLOGY

A. Data Collection

Data collection is the process of gathering relevant data and arranging it to create data sets for machine learning. The type of data (video sequences, frames, photos, patterns, etc.) depends on the problem that the AI model aims to solve. In computer vision, robotics, and video analytics, AI models are trained on image datasets with the goal of making predictions related to image classification, object detection, image segmentation, and more.

Most computer vision models are trained on datasets of hundreds or even thousands of images. A good dataset is essential for the model to classify images and predict outcomes with high accuracy.

B. Collection Of Weed And Crop Images

A dataset of weed and crop images is required to train the convolutional neural network (CNN). The images are grouped into two classes: crop images and weed images are stored in separate folders and used to train the CNN.
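
The folder-per-class layout described above can be turned into labeled samples as in this sketch. It assumes a root directory containing one subfolder per class (for example, hypothetical `crop/` and `weed/` folders) and uses each folder name as the label; the set of accepted file extensions is an assumption.

```python
from pathlib import Path

# Assumed image file types; anything else in a class folder is ignored.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}

def list_labeled_images(root):
    """Return sorted (path, label) pairs, using each subfolder name as the label."""
    root = Path(root)
    samples = []
    for class_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        for img in sorted(class_dir.iterdir()):
            if img.suffix.lower() in IMAGE_SUFFIXES:
                samples.append((img, class_dir.name))
    return samples
```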

C. Image Preprocessing and Segmentation

Image preprocessing comprises the steps taken to format images before they are used for model training and inference. This includes, but is not limited to, resizing, orienting, and color correction. Image preprocessing may also decrease model training time and increase model inference speed.
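
As a minimal sketch of the resizing step, assuming images are loaded as NumPy arrays of 8-bit pixels, the following resizes each image to a fixed network input size with nearest-neighbour sampling and scales pixel values to [0, 1]. The 128 x 128 size is a hypothetical choice; the paper does not state its input size.

```python
import numpy as np

def resize_nearest(img: np.ndarray, out_h: int, out_w: int) -> np.ndarray:
    """Nearest-neighbour resize of an H x W (or H x W x C) image."""
    h, w = img.shape[:2]
    rows = np.arange(out_h) * h // out_h    # source row for each output row
    cols = np.arange(out_w) * w // out_w    # source column for each output column
    return img[rows[:, None], cols]         # channels (if any) are kept as-is

def preprocess(img: np.ndarray, size=(128, 128)) -> np.ndarray:
    """Resize to a fixed input size and scale 8-bit pixels to [0, 1]."""
    resized = resize_nearest(img, *size)
    return resized.astype(np.float32) / 255.0
```

In an OpenCV pipeline, `cv2.resize` would normally take the place of `resize_nearest`.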

Image segmentation is a method of dividing a digital image into subgroups called image segments, reducing the complexity of the image and enabling further processing or analysis of each image segment. Technically, segmentation is the assignment of labels to pixels to identify objects, people, or other important elements in the image.

A common use of image segmentation is in object detection. Instead of processing the entire image, a common practice is to first use an image segmentation algorithm to find objects of interest in the image. Then, the object detector can operate on a bounding box already defined by the segmentation algorithm. This prevents the detector from processing the entire image, improving accuracy and reducing inference time.
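
The paper does not specify its segmentation algorithm. One common choice for separating plants from soil is to threshold the Excess Green index, ExG = 2g - r - b, computed on chromatic coordinates. The sketch below assumes that technique and a hypothetical threshold of 0.1, and returns a single bounding box around all vegetation pixels.

```python
import numpy as np

def excess_green_mask(rgb: np.ndarray, threshold: float = 0.1) -> np.ndarray:
    """Vegetation mask from the Excess Green index ExG = 2g - r - b.

    r, g, b are chromatic coordinates (each channel divided by R + G + B),
    which makes the index less sensitive to overall brightness.
    """
    x = rgb.astype(np.float64)
    total = x.sum(axis=2)
    total[total == 0] = 1.0                 # avoid division by zero on black pixels
    r, g, b = (x[..., k] / total for k in range(3))
    return (2 * g - r - b) > threshold

def bounding_box(mask: np.ndarray):
    """Smallest (top, left, bottom, right) box containing all True pixels, or None."""
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    if rows.size == 0:
        return None
    return int(rows[0]), int(cols[0]), int(rows[-1]), int(cols[-1])
```

When several plants are in view, each connected region of the mask needs its own box, which OpenCV's `cv2.connectedComponentsWithStats` can provide.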

D. Training Convolutional Neural Network

1. Convolutional Neural Network

A convolutional neural network (CNN) is a deep learning architecture, used here to detect objects. A CNN learns to identify objects from the training data without manually designed features. It can have many layers, each of which learns to detect different features of an image. Filters are applied to each training image at different resolutions, and the output of each convolution is used as the input to the next layer. The early layers detect very simple features, such as brightness and edges, while deeper layers detect more complex features that uniquely define the object. The segmented image is processed by the CNN using ReLU and pooling layers.

2. Prepare Training and Test Image Sets

The images are split into training and validation sets: 70% of the images in each class (crop and weed) are used for training and the remaining 30% for validation. The CNN is then trained on the training set, with the validation set used to check its performance.
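
The per-class 70/30 split can be sketched as follows, assuming samples are `(item, label)` pairs such as those listed from the crop and weed folders. Splitting each class separately keeps the crop-to-weed ratio the same in both sets; the fixed random seed is an assumption added so that the split is reproducible.

```python
import random
from collections import defaultdict

def stratified_split(samples, train_frac=0.7, seed=42):
    """Split (item, label) pairs so each class keeps the same train/validation ratio."""
    by_class = defaultdict(list)
    for item, label in samples:
        by_class[label].append((item, label))
    rng = random.Random(seed)                  # fixed seed -> reproducible split
    train, val = [], []
    for label in sorted(by_class):
        group = by_class[label][:]
        rng.shuffle(group)
        cut = round(len(group) * train_frac)   # e.g. 70% of this class for training
        train.extend(group[:cut])
        val.extend(group[cut:])
    return train, val
```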

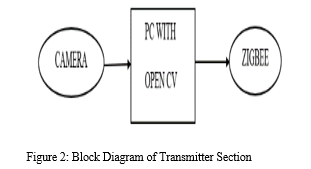

Many image processing methods can be used to detect weeds; the technique used here is shown in the figure. The weed database, consisting of healthy and unhealthy leaves, is built from images captured with a digital camera (PC camera). Fig. 3.1 shows how detection takes place through the image processing technique. The implementation uses OpenCV, a large open-source library for computer vision, machine learning, and image processing that plays a major role in real-time applications. With it, images and videos can be processed to identify objects, faces, or even human handwriting. After detection, a command is sent to the weed robot through the ZigBee module, and the robot cuts the weed and sprays the pesticide.

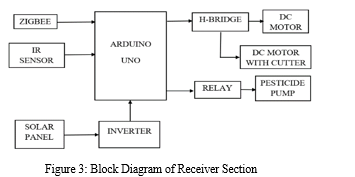

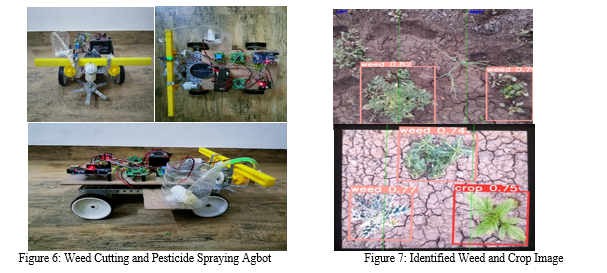

The robot's movement and functions can be controlled either manually or automatically. The robot is built around an Arduino Uno, and every motion is controlled by the program loaded onto the Arduino; an H-bridge controls the speed and direction of the DC motors. Manual control is achieved by sending the required functions as commands to the robot, either through a cable connected to the Arduino or through a wireless link such as ZigBee. Automatic control is achieved by programming the robot to perform all of its functions one after the other, with a delay between them. Once weeds are detected, a command is sent to the AgBot, which cuts the weeds with the cutter and then sprays pesticide where the weeds were present. The robot runs on a battery charged by solar power.
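
The on-robot program runs on the Arduino, but the H-bridge logic it implements can be modeled in a few lines. This sketch assumes a common dual H-bridge driver with direction inputs `IN1` to `IN4` and PWM enable inputs `ENA`/`ENB` (as on L298N-style boards), and hypothetical single-character commands of the kind that might arrive over the ZigBee link; the paper names neither the driver chip nor the command format. Speed is expressed as a PWM duty on the 0 to 255 scale used by Arduino's `analogWrite()`.

```python
# Pin levels (IN1, IN2) for one motor channel of an H-bridge:
# (1, 0) drives the motor one way, (0, 1) the other way, (0, 0) stops it.
FWD, REV, STOP = (1, 0), (0, 1), (0, 0)

# Hypothetical one-character commands, e.g. as received over a ZigBee serial link.
# Each maps to (left motor direction, right motor direction).
COMMANDS = {
    "F": (FWD, FWD),    # both wheels forward
    "B": (REV, REV),    # both wheels backward
    "L": (REV, FWD),    # spin left in place
    "R": (FWD, REV),    # spin right in place
    "S": (STOP, STOP),  # stop
}

def hbridge_outputs(command: str, speed_percent: int = 100) -> dict:
    """Return direction pin levels and PWM duty (0-255) for a drive command."""
    if command not in COMMANDS:
        raise ValueError(f"unknown command {command!r}")
    if not 0 <= speed_percent <= 100:
        raise ValueError("speed_percent must be between 0 and 100")
    (in1, in2), (in3, in4) = COMMANDS[command]
    duty = 0 if command == "S" else round(255 * speed_percent / 100)
    return {"IN1": in1, "IN2": in2, "IN3": in3, "IN4": in4,
            "ENA": duty, "ENB": duty}
```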

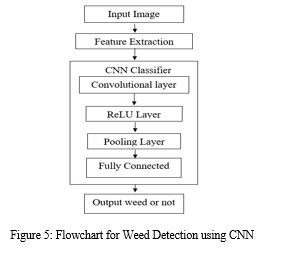

In the proposed system, the input image is first captured by the camera and then processed by the CNN to classify weeds and crops. When a weed is detected, the AgBot moves towards it and cuts it with the cutter. Once the weed is removed, the sprayer is activated and pesticide is sprayed where the weed was present.
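
The capture, classify, move, cut, and spray sequence can be expressed as one control cycle. In this sketch the classifier and the actuator actions are passed in as functions (hypothetical stand-ins for the CNN and the robot hardware), and the fixed delay between steps mirrors the automatic mode described earlier; the default `delay_s` is an assumed value.

```python
import time
from typing import Callable

def process_frame(
    frame,
    classify: Callable[[object], str],      # returns "weed" or "crop"
    move_to_target: Callable[[], None],
    cut: Callable[[], None],
    spray: Callable[[], None],
    delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Run one classify -> move -> cut -> spray cycle on a captured frame.

    Returns True if a weed was found and treated, False otherwise.
    """
    if classify(frame) != "weed":
        return False                        # crop: leave it alone
    move_to_target()
    sleep(delay_s)                          # fixed delay between steps
    cut()
    sleep(delay_s)
    spray()                                 # spray only where the weed was
    return True
```

Injecting `sleep` lets the sequence be tested without real delays.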

A. Convolutional Neural Network

A convolutional neural network is a special type of feed-forward artificial neural network whose connectivity pattern between layers is inspired by the visual cortex. CNNs are a class of deep neural networks applied to analyzing visual imagery, with applications in image and video recognition, image classification, natural language processing, and other areas. Convolution is the first layer to extract features from an input image. It preserves the spatial relationship between pixels by learning image features from small squares of input data. It is a mathematical operation that takes two inputs: an image matrix and a filter (kernel). Each input image is passed through a series of convolution layers with filters (kernels) to produce output feature maps. A CNN typically has four kinds of layers: convolutional layers, ReLU layers, pooling layers, and fully connected layers.

B. Convolutional Layer

After the computer reads an image as a matrix of pixels, the convolutional layer compares it against small patches called features or filters. By matching these rough features at roughly the same positions in two images, the convolutional layer is much better at recognizing similarities than whole-image matching. The filters are compared with new input images, and where they match, the image can be classified correctly. To compute a match, the feature is lined up with a patch of the image, each image pixel is multiplied by the corresponding feature pixel, the products are added up, and the sum is divided by the total number of pixels in the feature. The result is written into a map at the position corresponding to that patch. The feature is then moved to every other position in the image and the same computation is repeated, so the output is a matrix (a feature map) recording how well the feature matches each area.
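
The matching procedure described above is a sliding-window sum of products. This NumPy sketch computes it without padding and with stride 1; the optional division by the number of kernel pixels follows the description in the text, whereas CNN libraries normally omit that step.

```python
import numpy as np

def convolve_valid(image: np.ndarray, kernel: np.ndarray, normalize: bool = False) -> np.ndarray:
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Like most CNN libraries this computes cross-correlation (the kernel is not
    flipped). With normalize=True each sum is divided by the number of kernel
    pixels, matching the "divide by the total number of pixels" step in the text.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float64)
    for y in range(out_h):
        for x in range(out_w):
            patch = image[y:y + kh, x:x + kw]
            out[y, x] = np.sum(patch * kernel)   # multiply pixel by pixel, then add up
    return out / kernel.size if normalize else out
```

With `normalize=True`, a patch identical to a feature of +1/-1 values scores exactly 1.0, the best possible match.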

C. ReLU Layer

The ReLU (rectified linear unit) layer replaces every negative value in the filtered images with zero and leaves positive values unchanged. This introduces nonlinearity into the network and prevents negative values from cancelling positive ones when the values are summed in later layers. The function activates a node only if its input is above zero; for inputs below zero the output is zero, so all negative values are removed from the matrix.
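
In code, ReLU is a single element-wise maximum; this NumPy sketch applies it to a whole feature map at once.

```python
import numpy as np

def relu(x: np.ndarray) -> np.ndarray:
    """Rectified linear unit: negative values become 0, others pass unchanged."""
    return np.maximum(x, 0)
```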

D. Pooling Layer

The pooling layer reduces the size of the image. First, a window size and a stride are chosen; the window is then moved across the filtered images, and the maximum value in each window is kept. This shrinks both the image and its matrix. The reduced matrix is passed as input to the fully connected layer.
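
A minimal NumPy sketch of max pooling with a configurable window and stride follows. The default 2 x 2 window with stride 2, which halves each dimension, is a common choice rather than one stated in the paper.

```python
import numpy as np

def max_pool(fmap: np.ndarray, window: int = 2, stride: int = 2) -> np.ndarray:
    """Max pooling: keep the largest value in each window as it slides by `stride`."""
    out_h = (fmap.shape[0] - window) // stride + 1
    out_w = (fmap.shape[1] - window) // stride + 1
    out = np.empty((out_h, out_w), dtype=fmap.dtype)
    for y in range(out_h):
        for x in range(out_w):
            r, c = y * stride, x * stride
            out[y, x] = fmap[r:r + window, c:c + window].max()
    return out
```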

E. Fully Connected Layer

The convolutional, ReLU, and pooling layers are stacked, and this stack can be repeated as needed until the feature maps are reduced to a small size, such as a 2x2 matrix. At the end, the fully connected layer performs the actual classification of the input image.
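
The final step can be sketched as flattening the pooled feature maps into one vector, applying a dense layer, and converting the scores to probabilities with softmax. The weights and bias here are placeholders that training would learn, and the two-class output (for example, crop and weed) is an assumption about how the classifier is arranged.

```python
import numpy as np

def fully_connected(feature_maps, weights: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """Flatten the pooled feature maps, apply a dense layer, then softmax."""
    x = np.concatenate([f.ravel() for f in feature_maps])   # stack into one vector
    logits = weights @ x + bias                              # one score per class
    exp = np.exp(logits - logits.max())                      # subtract max for stability
    return exp / exp.sum()                                   # class probabilities
```

The predicted class is the index of the largest probability, e.g. `np.argmax(fully_connected(maps, w, b))`.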

F. Image Acquisition Process

First, images of healthy and diseased leaves are processed. The dataset made up of these healthy and diseased leaf images is the training dataset. Once the training dataset has been processed, the input leaf image supplied to the system is the test weed image. The image enhancement process is then applied so that further image analysis can be carried out on a more suitable representation.

G. Image Enhancement

The image enhancement process improves the input image for further processing, so that image analysis can be carried out on a more suitable representation.
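
The paper does not name its enhancement method. Histogram equalization is one standard technique for improving contrast before analysis; the sketch below assumes an 8-bit grayscale input and implements it with NumPy. OpenCV's `cv2.equalizeHist` performs the same operation.

```python
import numpy as np

def equalize_histogram(gray: np.ndarray) -> np.ndarray:
    """Histogram equalization for an 8-bit (uint8) grayscale image."""
    hist = np.bincount(gray.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]               # CDF value of the darkest level present
    if cdf[-1] == cdf_min:                  # single gray level: nothing to stretch
        return gray.copy()
    # Map each level so the cumulative distribution becomes roughly uniform.
    lut = np.round((cdf - cdf_min) / (cdf[-1] - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[gray]
```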

H. Feature Extraction

Feature extraction is a process of dimensionality reduction by which an initial set of raw data is reduced to more manageable groups for processing.
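
Principal component analysis (PCA) is one standard dimensionality-reduction technique; the paper does not say which method it uses, and in a CNN the convolution and pooling layers already perform much of the feature extraction. This sketch assumes one feature vector per row (for example, the texture features of each image).

```python
import numpy as np

def pca_reduce(features: np.ndarray, n_components: int) -> np.ndarray:
    """Project row-wise feature vectors onto their top principal components."""
    centered = features - features.mean(axis=0)          # PCA needs zero-mean data
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T
```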

These processes produce the weed classification. A command is then sent to the Arduino on the robot, and the AgBot performs its operation: it cuts the weed and then sprays pesticide where weeds are present.

III. RESULTS AND DISCUSSION

This section evaluates the accuracy and efficiency of the weed detection and pesticide spraying AgBot. The relevant quantitative measures are the percentage of weeds detected and sprayed correctly, the time taken to detect and spray the weeds, and the amount of pesticide used.
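
The quantities listed above can be computed from hand counts and timings of a field trial, as in this sketch. The parameter names are hypothetical, and the pesticide saving assumes that pesticide use is proportional to the sprayed area; no measured values from the paper are implied.

```python
def evaluate_run(weeds_present: int, weeds_detected: int, false_detections: int,
                 area_sprayed: float, field_area: float, total_time_s: float) -> dict:
    """Summary metrics for one field trial (inputs counted or measured by hand)."""
    if weeds_present <= 0 or field_area <= 0:
        raise ValueError("weeds_present and field_area must be positive")
    predicted = weeds_detected + false_detections
    return {
        # share of real weeds that were found (recall)
        "detection_rate": weeds_detected / weeds_present,
        # share of detections that were real weeds (precision)
        "precision": weeds_detected / predicted if predicted else 0.0,
        # saving versus spraying the whole field, assuming use scales with area
        "pesticide_saving": 1.0 - area_sprayed / field_area,
        # average time spent per detected weed
        "time_per_weed_s": total_time_s / weeds_detected if weeds_detected else float("inf"),
    }
```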

Conclusion

This work presents automatic weed detection and removal based on image processing with a convolutional neural network (CNN). The entire weed management system is mounted on a four-wheeled robot. Classifying weeds and crops allows the robot to detect weeds and remove them with an automated cutter, improving crop productivity. The AgBot sprays pesticide only where weeds are present, so pesticide use can be reduced substantially compared with spraying the entire field, allowing more natural plant growth.

Copyright

Copyright © 2023 Manasa S, Tanushree G, Suhas S, Sumanth C. C, Sukanya M. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET50354

Publish Date : 2023-04-12

ISSN : 2321-9653

Publisher Name : IJRASET
