Ijraset Journal For Research in Applied Science and Engineering Technology

Human Follower Robot

Authors: Priyanka P. Vetal, Dr. Mukta Dhopeshwarkar, Pratik S Jaiswal

DOI Link: https://doi.org/10.22214/ijraset.2022.42049

Abstract

In this digital and automated age, robotics and IoT have a real impact on human life. One can't rely solely on traditional modes of work in this era. One has to adopt robotics and keep exploring it, as it is the near future for humans. There are many ways to bring automation into day-to-day life. One of them is to study a robot that follows humans, that is, one that can detect human movement and react to it. The literature shows that many researchers, scientists, and engineers have worked, and are still working, to improve human movement detection in robotics. This paper studies some of the previous work and gives a comparative analysis of it.

I. INTRODUCTION

Robot technology has grown tremendously in recent years. Such innovations were only a dream for some people a few years ago. But in this fast-moving world, there is now a need for robots such as “A Human Follower Robot” that can interact and coexist with people (1). To accomplish this, a robot needs the ability to see the person and to act accordingly (2) (3). The robot must be intelligent enough to follow a person in crowded areas, in visually busy environments, and indoors or outdoors (4). Image processing, which extracts information about the surroundings visually, is therefore very important. The following points should be considered carefully in practice. Lighting conditions should be stable and should not fluctuate. The ranges should be set properly for the intended environment when tracking. The target should not be too far from the visual sensor, as distance matters a great deal. Colors that match the target should be avoided around the robot; otherwise, the robot would be confused. Typically, human following robots are equipped with several different combinations of sensors, such as light detection and ranging sensors and various other sensing modules. All sensors and modules work together to detect and track the target.

The robot's ability to track and trace a moving object can be used for a number of purposes.

- Helping people.

- Making everyday tasks easier for people.

- Serving defense purposes.

In this paper, we present a human following robot system based on tag identification and detection using a camera. Intelligent target tracking is done using a variety of sensors and modules (5), namely an ultrasonic sensor, a magnetometer, an infrared sensor, and a camera. The robot's control unit makes an intelligent decision based on the information obtained from these sensors and modules, which is how it finds and tracks the target while avoiding obstacles and without colliding with the target.

II. RELATED WORK

Other research has been done in this area. Depth imaging was used by Calisi, and the target was followed using a specially designed algorithm (1). Ess and Leibe did similar work, with a strong focus on object tracking and detection. The great advantage of their system is that their algorithm also worked in complex environments (2) (3). Stereo vision was also used by Y. Salih for detection (4). This system enabled him to follow the desired target effectively. A combination of different sensors was used by R. Muñoz to get information about the target to be tracked. In addition to using various sensors, he also used stereo vision to obtain accurate information (5). Laser data combined with the information from the camera proved to be very useful in performing the task (6). Different algorithms have been developed by researchers for detection purposes. A laser was used in one study to determine the style of moving legs (7) (8) (9), and a camera was used to detect an object or person (10) (11). A very simple technique has also been used in research. In this method, distance sensors are placed on the robot and on the person. The sensors on the robot emit radio waves, which are detected by the sensors on the person to be tracked. In this way the robot followed the target (12).

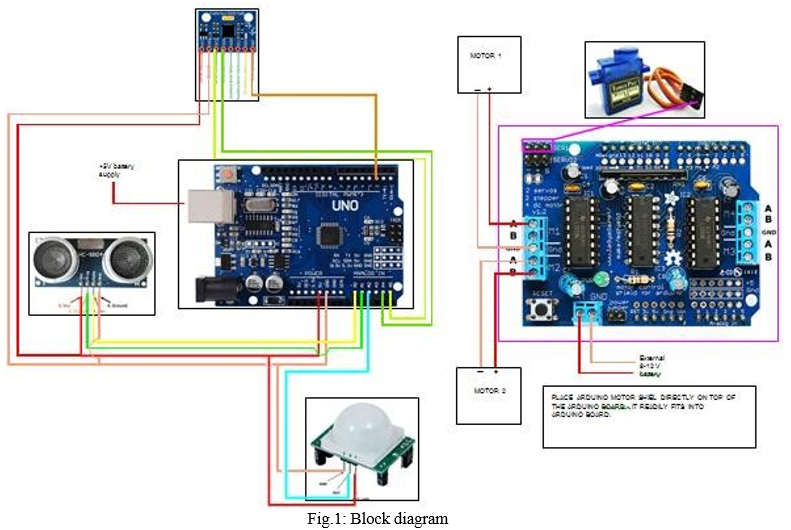

III. BLOCK DIAGRAM

IV. PROPOSED SYSTEM

An Arduino-based human tracking robot is used to track a person by leg movement: wherever the person moves, whether forward, left, or right, the robot detects the movement and follows it accordingly. The robot uses an ultrasonic sensor to locate the person; the sensor measures the distance between the person and the robot, and the robot analyzes it. In our project the ultrasonic sensor is mounted on a servo motor, which is an actuator that allows precise control of angular or linear position.

The servo motor has a shaft that rotates through up to 180 degrees. The ultrasonic sensor is mounted on the servo motor, which turns it to find the correct position and direction of the moving person.
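Hobby servos of this kind are typically positioned by the width of a control pulse. The following is a minimal sketch of a linear angle-to-pulse mapping. The function name `servo_pulse_us` and the 1000 to 2000 microsecond end points are illustrative assumptions, not values from this project; real servos differ and should be calibrated.

```python
def servo_pulse_us(angle_deg, min_us=1000.0, max_us=2000.0, max_angle=180.0):
    """Map a servo angle (degrees) to a control pulse width (microseconds).

    The end points min_us and max_us are assumed nominal values;
    they are not taken from the paper and vary between servo models.
    """
    if not 0.0 <= angle_deg <= max_angle:
        raise ValueError("angle outside the servo's travel")
    # Linear interpolation between the two pulse-width end points.
    return min_us + (max_us - min_us) * angle_deg / max_angle
```

With these assumed end points, the mid position of 90 degrees corresponds to a 1500 microsecond pulse, for example `servo_pulse_us(90)`.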

We have also used a gyro sensor, also known as an angular rate sensor or angular velocity sensor. The gyro sensor is used to detect the angles of human movement, sensing moving-body angles of 30, 45, 60, 90, and 180 degrees. By analyzing these readings, the robot senses whether the person is moving slowly left, right, or forward and follows that person in the direction he or she is moving. The main function of the gyro sensor is angle sensing and control.
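Because a gyro reports angular rate rather than angle, a rotation angle is usually obtained by accumulating rate samples over time. The sketch below shows this with simple rectangular integration. The function names, the fixed sample period, and the 2 degree tolerance are assumptions made for illustration; the paper does not describe how its angles are computed.

```python
def integrate_heading(rates_dps, dt_s, start_deg=0.0):
    """Accumulate angular-rate samples (deg/s) into a rotation angle (deg).

    Assumes a fixed sample period dt_s; sensor bias and drift are ignored.
    """
    angle = start_deg
    for rate in rates_dps:
        angle += rate * dt_s  # rectangular (Euler) integration step
    return angle


def turn_complete(angle_deg, target_deg, tolerance_deg=2.0):
    """Return True once the accumulated angle is within tolerance of the target."""
    return abs(target_deg - angle_deg) <= tolerance_deg
```

For instance, four samples of 30 deg/s taken 0.5 s apart accumulate to a 60 degree turn, which is the kind of angle the robot would compare against a target before stopping its rotation.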

Many earlier follower robot designs did not use a PIR sensor. We have used a PIR sensor in our project to distinguish between living and non-living things. The PIR is a passive infrared sensor used to detect when a person has entered or exited the sensor's range.

A. Components Used

- Arduino Uno: It is the brain of our design. It issues all the instructions to the components under its control in response to human behavior. It also receives feedback from the other components and from people, so it serves as the means of communication between humans and the robot and vice versa. It has an 8-bit CPU, a 16 MHz clock speed, 2 KB SRAM, 32 KB flash memory, and 1 KB EEPROM.

- DC Gear Motors: A DC motor is a device that converts electrical energy into mechanical energy and so produces motion. In building a robot, the motor plays an important role in providing the robot's movement. Four DC motors are used to drive the robot.

- Motor Shield: The motor shield is a motor driver module that allows the Arduino to control the speed and direction of the motors. The motor shield may be powered by the Arduino directly or by a 6 V to 15 V external supply through the terminal input. The motor driver board is designed around the L293D IC.

- Ultrasonic Detector: The ultrasonic detector is a device that measures the distance to an object using ultrasonic waves. The operating principle of this module is simple: it transmits a 40 kHz ultrasonic pulse through the air, and if there is an obstacle or object, the pulse bounces back to the detector. From the travel time and the speed of sound, the distance can be calculated.

- Servo Motor: The servo motor operates on PWM (pulse width modulation), which means that its rotation angle is controlled by the length of the pulse applied to its control pin. Basically, a servo motor is made of a DC motor controlled by a variable resistor (potentiometer) and some gears.

- PIR Sensor: PIR detectors allow you to sense motion and are almost always used to determine whether a person has entered or exited the sensor's range. They are small, affordable, low power, easy to use, and do not wear out. For that reason they are often found in appliances and gadgets used in homes or businesses.

- Gyroscope Sensor: A gyroscope detector is a device that can measure and maintain the orientation and angular velocity of an object. Gyroscopes are more advanced than accelerometers: they can measure the tilt and lateral orientation of an object, while an accelerometer can only measure linear motion. Gyroscope detectors are also called angular rate detectors or angular velocity detectors. These detectors are used when the orientation of an object is difficult for humans to sense. Measured in degrees per second, angular velocity is the change in the rotation angle of an object per unit time.

When the section at the top of the gyro rotates 90 degrees to the side, it retains its tendency to move to the left. The same is true for the section at the bottom: it rotates 90 degrees to the side and retains its tendency to move to the right. These forces turn the wheel in the direction of precession.
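The time-of-flight principle described for the ultrasonic detector can be illustrated with a short calculation. This is a minimal sketch: the speed of sound is assumed to be about 343 m/s (air at roughly 20 °C), and the function names are hypothetical. The echo time is halved because the pulse travels to the object and back.

```python
SPEED_OF_SOUND_MM_PER_US = 0.343  # assumed: about 343 m/s in air near 20 °C


def echo_to_distance_mm(echo_us):
    """Convert a round-trip echo time (microseconds) to a one-way distance (mm)."""
    if echo_us < 0:
        raise ValueError("echo time cannot be negative")
    # Divide by two: the pulse covers the sensor-to-object distance twice.
    return echo_us * SPEED_OF_SOUND_MM_PER_US / 2.0


def distance_to_echo_us(distance_mm):
    """Inverse relation: expected round-trip echo time for a given distance."""
    return 2.0 * distance_mm / SPEED_OF_SOUND_MM_PER_US
```

Under this assumption, an echo time of 2000 microseconds corresponds to an object about 343 mm away.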

B. Algorithm

- Step 1: Initially turn the ultrasonic sensor to 30 degrees using the servo motor. (90 degrees is taken as the sensor facing forward, 0 degrees as perpendicular to it.)

- Step 2: Check for the presence of an object within 600 mm.

- Step 3: If the object is between 250 mm and 600 mm away, turn the robot toward the object. (In this case by (90 - 30) = 60 degrees, using the sensor to measure the angle.)

- Step 4: Rotate the ultrasonic sensor by 60 degrees to 90 degrees, the straight-ahead angle pointing at the object.

- Step 5: Move the robot forward toward the object until the robot is 250-300 mm away or a timeout occurs.

- Step 6: If the object in Step 2 is 200-250 mm away, stop the robot.

- Step 7: If the object in Step 2 is less than 200 mm away, move the robot back to a distance of 250 mm.

- Step 8: When no object is found in Step 2, increase the servo angle by 1 degree. (In the second iteration the servo angle is 31 degrees.)

- Step 9: Repeat Steps 2 to 8 until the servo angle = 150 degrees. (Total sweep angle 150 - 30 = 120 degrees.)

- Step 10: Reverse the servo direction to sweep from 150 back to 30 degrees, keeping Steps 2 to 8 in the loop. (On the return sweep, decrease the servo angle by 1 degree instead of increasing it.)
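The steps above can be sketched as a small decision function. This is an illustrative reading of the algorithm, not the authors' firmware: it assumes the servo sweep advances only when no object is detected within 600 mm, that a positive turn angle means turning toward the side the sensor is facing, and that all function and constant names are hypothetical.

```python
FORWARD_DEG = 90                       # servo angle that faces straight ahead
SWEEP_MIN_DEG, SWEEP_MAX_DEG = 30, 150
DETECT_MM, STOP_MAX_MM, STOP_MIN_MM = 600, 250, 200


def classify(distance_mm):
    """Map a distance reading (mm, or None for no echo) to an action name."""
    if distance_mm is None or distance_mm > DETECT_MM:
        return "search"      # nothing within 600 mm: keep sweeping
    if distance_mm > STOP_MAX_MM:
        return "approach"    # 250-600 mm: turn toward the object and advance
    if distance_mm >= STOP_MIN_MM:
        return "stop"        # 200-250 mm: hold position
    return "reverse"         # under 200 mm: back away to about 250 mm


def next_sweep(servo_deg, step):
    """Advance the sweep by one step, reversing direction at either limit."""
    nxt = servo_deg + step
    if nxt > SWEEP_MAX_DEG or nxt < SWEEP_MIN_DEG:
        step = -step
        nxt = servo_deg + step
    return nxt, step


def follow_step(servo_deg, step, distance_mm):
    """One loop iteration: return (action, turn_deg, new_servo_deg, new_step)."""
    action = classify(distance_mm)
    if action == "search":
        servo_deg, step = next_sweep(servo_deg, step)
        return action, 0, servo_deg, step
    if action == "approach":
        # Turn the body by the offset from forward, then re-centre the sensor.
        return action, FORWARD_DEG - servo_deg, FORWARD_DEG, step
    return action, 0, servo_deg, step
```

For example, an object seen at 400 mm while the servo is at 30 degrees gives `("approach", 60, 90, 1)`, matching the (90 - 30) = 60 degree turn in Step 3 and the re-centring of the sensor in Step 4.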

C. Working

Our system consists of a four-wheeled robot car equipped with a separate microprocessor and control unit as well as various sensors and modules, namely ultrasonic sensors and infrared sensors, that help it sense the people and objects around it. These sensors work in tandem and assist the robot in its operation and in moving along its path while avoiding obstacles and keeping a certain distance from the object. We have used an ultrasonic sensor to avoid collisions and to maintain a certain distance from the object. The ultrasonic sensor works accurately within a range of 4 meters.
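One way to picture how movement commands reach a four-wheeled base is a table that maps each command to signed speeds for the left and right wheel pairs. The sketch below assumes skid steering, with the two wheels on each side driven together; the paper does not state how the robot steers, and the function name and command names are hypothetical.

```python
def wheel_commands(action, speed=1.0):
    """Return (left_side, right_side) signed speeds for an assumed skid-steer base.

    Positive values drive a side forward, negative values drive it backward.
    """
    directions = {
        "forward": (1, 1),
        "reverse": (-1, -1),
        "left": (-1, 1),    # left side backward, right side forward
        "right": (1, -1),   # left side forward, right side backward
        "stop": (0, 0),
    }
    if action not in directions:
        raise ValueError("unknown action: " + str(action))
    left, right = directions[action]
    return left * speed, right * speed
```

A motor driver would then translate each signed value into a direction and a PWM duty cycle for that side's motors.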

With the angle set to 0°, the detection area between the two receivers is so small that it is difficult for the robot to decide whether it has found a human leg. With the angle set to 10°, the larger detection area between the receivers is suitable for detecting a human leg within the area.

With the angle set to 20°, the detection area is slightly larger than at 10°. This may make the robot unsuitable for tracking legs as the distance between the legs and the robot increases, so this setting can be ruled out. The detection area at 30° is so complex that it can confuse the decision-making.

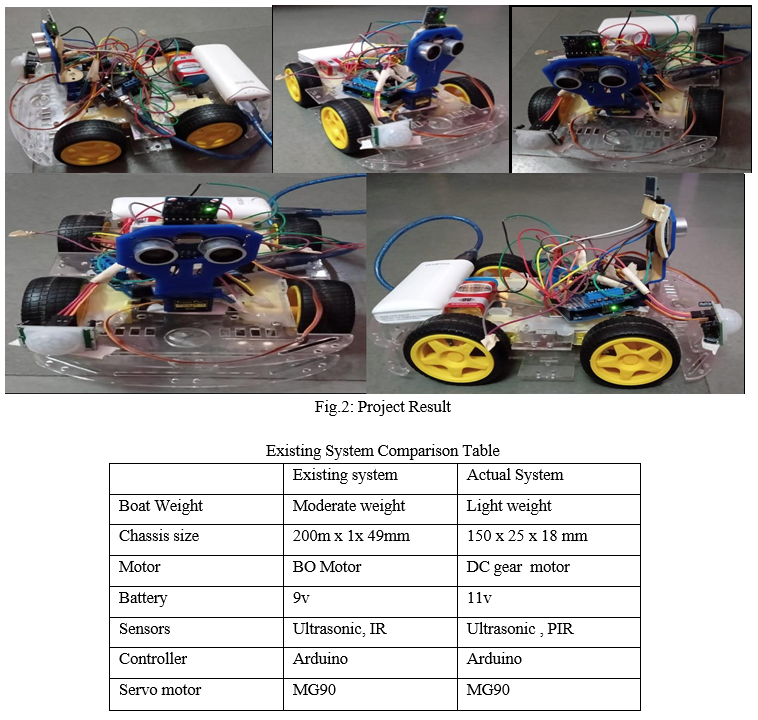

V. RESULT

Various tests were performed to evaluate the performance of the human following robot. Testing was performed on the ultrasonic and infrared sensors. It was noted that the sensor worked accurately within a range of 4 meters. We also tested whether the robot kept a certain distance from the target object, and we tested the communication between the Arduino, the motor shield, and the various motors. Based on the results of these tests, we made the necessary changes to the processing and control algorithm. After this was completed, we observed that the results were truly satisfactory: the robot followed the person wherever he or she went.

Conclusion

A successful implementation of a prototype human follower robot is illustrated in this paper. This robot has not only the detection capability but also the following ability. While making this prototype, it was also kept in mind that the robot should function as efficiently as possible. Tests were performed under different conditions to pinpoint mistakes in the algorithm and correct them. The different sensors integrated with the robot provided an additional advantage. The human following robot is an automated system that can recognize obstacles, move, and change its position toward the subject in the best way to remain on its track. This project uses an Arduino, motors, and different types of sensors to achieve its goal. This project challenged the group to cooperate, communicate, and expand its understanding of electronics, mechanical systems, and their integration with programming.

A. Application

- In the military, in households, in travel, and in shopping, a human follower robot can be used as an autonomous cart or automated trolley that follows the person.

- In industrial applications, a robot can help carry heavy items over long distances.

B. Future Scope

- A camera can be attached to the robot to analyze and record the entire scene wherever the person goes. There are numerous interesting applications of this research in different fields, whether service or medical.

- Wireless communication functionality can be added to the robot to make it more versatile and to control it from a large distance. This capability could also be used for military purposes. By mounting a real-time video recorder on top of the camera, we can monitor the surroundings while sitting in our rooms. We can also modify the algorithm and the structure to fit the robot for other purposes. It can also help the public in shopping malls, where it can act as a luggage carrier so that there is no need to carry or pull heavy loads. Also, an ample number of modifications could be made to this prototype for wide-ranging applications.

References

[1] K. Morioka, J.-H. Lee, and H. Hashimoto, “Human-following mobile robot in a distributed intelligent sensor network,” IEEE Trans. Ind. Electron., vol. 51, no. 1, pp. 229–237, Feb. 2004.

[2] Y. Matsumoto and A. Zelinsky, “Real-time face tracking system for human-robot interaction,” in 1999 IEEE International Conference on Systems, Man, and Cybernetics (IEEE SMC ’99), 1999, vol. 2, pp. 830–835.

[3] T. Yoshimi, M. Nishiyama, T. Sonoura, H. Nakamoto, S. Tokura, H. Sato, F. Ozaki, N. Matsuhira, and H. Mizoguchi, “Development of a Person Following Robot with Vision Based Target Detection,” in 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2006, pp. 5286–5291.

[4] H. Takemura, N. Zentaro, and H. Mizoguchi, “Development of vision based person following module for mobile robots in/out door environment,” in 2009 IEEE International Conference on Robotics and Biomimetics (ROBIO), 2009, pp.

[5] Muhammad Sarmad Hassan, Mafaz Wali Khan, and Ali Fahim Khan, “Design and Development of Human Following Robot,” Student Research Paper Conference, vol. 2, no. 15, 2015.

[6] N. Bellotto and H. Hu, “Multisensor integration for human-robot interaction,” IEEE J. Intell. Cybern. Syst., vol. 1, no. 1, p. 1, 2005.

[7] K. Morioka, J.-H. Lee, and H. Hashimoto, “Human-following mobile robot in a distributed intelligent sensor network,” IEEE Trans. Ind. Electron., vol. 51, no. 1, pp. 229–237, Feb. 2004.

[8] Y. Matsumoto and A. Zelinsky, “Real-time face tracking system for human-robot interaction,” in 1999 IEEE International Conference on Systems, Man, and Cybernetics (IEEE SMC ’99), 1999, vol. 2, pp. 830–835.

[9] T. Yoshimi, M. Nishiyama, T. Sonoura, H. Nakamoto, S. Tokura, H. Sato, F. Ozaki, N. Matsuhira, and H. Mizoguchi, “Development of a Person Following Robot with Vision Based Target Detection,” in 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2006, pp. 5286–5291.

[10] H. Takemura, N. Zentaro, and H. Mizoguchi, “Development of vision based person following module for mobile robots in/out door environment,” in 2009 IEEE International Conference on Robotics and Biomimetics (ROBIO), 2009, pp. 1675–1680.

[11] N. Bellotto and H. Hu, “Multisensor integration for human-robot interaction,” IEEE J. Intell. Cybern. Syst., vol. 1, no. 1, p. 1, 2005.

[12] W. Burgard, A. B. Cremers, D. Fox, D. Hähnel, G. Lakemeyer, D. Schulz, W. Steiner, and S. Thrun, “The interactive museum tour-guide robot,” in AAAI/IAAI, 1998, pp. 11–18.

[13] M. Scheutz, J. McRaven, and G. Cserey, “Fast, reliable, adaptive, bimodal people tracking for indoor environments,” in Proceedings of the 2004 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2004), 2004, vol. 2, pp. 1347–1352.

[14] N. Bellotto and H. Hu, “People tracking and identification with a mobile robot,” in International Conference on Mechatronics and Automation (ICMA 2007), 2007, pp. 3565–3570.

[15] S. Jia, L. Zhao, and X. Li, “Robustness improvement of human detecting and tracking for mobile robot,” in 2012 International Conference on Mechatronics and Automation (ICMA), 2012, pp. 1904–1909.

[16] M. Kristou, A. Ohya, and S. Yuta, “Target person identification and following based on omnidirectional camera and LRF data fusion,” in 2011 IEEE RO-MAN, 2011, pp. 419–424.

[17] T. Wilhelm, H. J. Böhme, and H. M. Gross, “Sensor fusion for vision and sonar based people tracking on a mobile service robot,” in Proceedings of the International Workshop on Dynamic Perception, 2002, pp. 315–320.

[18] D. Calisi, L. Iocchi, and R. Leone, “Person following through appearance models and stereo vision using a mobile robot,” in VISAPP (Workshop on Robot Vision), 2007, pp. 46–56.

[19] A. Ess, B. Leibe, and L. Van Gool, “Depth and appearance for mobile scene analysis,” in IEEE 11th International Conference on Computer Vision (ICCV 2007), 2007, pp. 1–8.

[20] A. Ess, B. Leibe, K. Schindler, and L. Van Gool, “A mobile vision system for robust multi-person tracking,” in IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2008), 2008, pp. 1–8.

[21] Y. Salih and A. S. Malik, “Comparison of stochastic filtering methods for 3D tracking,” Pattern Recognit., vol. 44, no. 10, pp. 2711–2737, 2011.

[22] R. Muñoz-Salinas, E. Aguirre, and M. García-Silvente, “People detection and tracking using stereo vision and color,” Image Vis. Comput., vol. 25, no. 6, pp. 995–1007, 2007.

[23] J. Satake and J. Miura, “Robust stereo-based person detection and tracking for a person following robot,” in ICRA Workshop on People Detection and Tracking, 2009.

[24] J. H. Lee, T. Tsubouchi, K. Yamamoto, and S. Egawa, “People Tracking Using a Robot in Motion with Laser Range Finder,” in 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2006, pp. 2936–2942.

[25] M. Lindstrom and J. O. Eklundh, “Detecting and tracking moving objects from a mobile platform using a laser range scanner,” in Proceedings of the 2001 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2001, vol. 3, pp. 1364–1369.

[26] M. Kristou, A. Ohya, and S. Yuta, “Panoramic vision and LRF sensor fusion based human identification and tracking for autonomous luggage cart,” in The 18th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN 2009), 2009, pp. 711–716.

[27] C. Schlegel, J. Illmann, H. Jaberg, M. Schuster, and R. Wörz, “Vision Based Person Tracking with a Mobile Robot,” in BMVC, 1998, pp. 1–10.

[28] N.-Y. Ko, D.-J. Seo, and Y.-S. Moon, “A Method for Real Time Target Following of a Mobile Robot Using Heading and Distance Information,” J. Korean Inst. Intell. Syst., vol. 18, no. 5, pp. 624–631, Oct. 2008.

[29] K. S. Nair, A. B. Joseph, and J. I. Kuruvilla, “Design of a low cost human following porter robot at airports.”

[30] V. Y. Skvortzov, H.-K. Lee, S. Bang, and Y. Lee, “Application of Electronic Compass for Mobile Robot in an Indoor Environment,” in 2007 IEEE International Conference on Robotics and Automation, 2007, pp. 2963–2970.

Copyright

Copyright © 2022 Priyanka P. Vetal, Dr. Mukta Dhopeshwarkar, Pratik S Jaiswal. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET42049

Publish Date : 2022-04-30

ISSN : 2321-9653

Publisher Name : IJRASET
