Ijraset Journal For Research in Applied Science and Engineering Technology

Virtual Health Care Robot Using Kinect Sensor

Authors: Vedashree R, Deepika S R, Leelavathi K, Raghavendra H, Prof. Nithya K

DOI Link: https://doi.org/10.22214/ijraset.2022.44863

Abstract

Kinect cameras and other sensors are combined in a framework to obtain real-time tracking of a person's skeleton. We present a gesture and human activity recognition system for the development of a human-robot interaction (HRI) interface. For the various gestures, performed by different persons, quaternions of joint angles are first used as robust and significant features. Next, classifiers are used to recognize the different gestures. This work deals with several challenging tasks, such as the real-time implementation of a gesture recognition system and the temporal resolution of gestures. The HRI interface developed in this work includes three Kinect sensors and robots that can be remotely controlled by one operator standing in front of one of the Kinect sensors. Moreover, the system is provided with a nation action for control of the robot.

I. INTRODUCTION

In today's world, robots are part of everyday life. The robotics industry has been developing many new trends to increase efficiency, and it has grown significantly because of military and industrial applications. Robots are used for jobs that would be harmful to humans and for repetitive jobs that are tedious or hectic. Apart from controlling a robotic system through physical devices, recent techniques for controlling robots through gestures and speech have become very popular. Because of its gesture-following capability, such a robot can serve as a solution to several problems or as an assistant for humans in various situations. One application would be to fight wars in place of humans, tracking and following an enemy into unknown places to reduce human casualties. It can also be used to help people with physical disabilities carry their objects. To achieve this, a sensor is required that measures variables within an environment, where these variables are processed and analyzed to detect a person. This project gives a brief overview of the latest research on robotics using Kinect. Until now, machines have been either automated or remote-controlled. They are remotely controlled by RF, IR, and IoT (using Bluetooth and Wi-Fi modules). In this project, the machines are controlled virtually using a natural user interface (NUI) console, the Kinect. We control the robot's actions using NRF wireless communication.

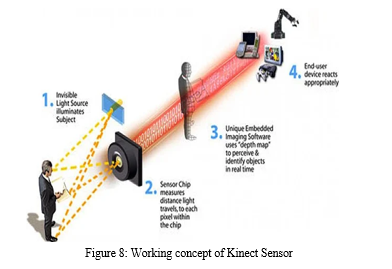

The Kinect sensor obtains the skeleton data from the subject and, using mathematical calculations, computes the angle deviation between the different parts of the arm based on its movement. The receiver receives the data and converts it to its equivalent PWM signal. The PWM signal is used to control the motors in the robot. The combination of the different servo motors results in robotic movement. The image processing carried out to obtain visual information about the surroundings is an important step. The following point should be carefully noted while doing the processing.
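The angle calculation and the angle-to-PWM conversion described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, and the 1000 to 2000 microsecond pulse range is an assumed value typical of hobby servos that should be checked against the actual motor.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at joint b formed by the 3D points a-b-c.

    For an elbow, a would be the shoulder, b the elbow, and c the wrist.
    """
    v1 = tuple(p - q for p, q in zip(a, b))
    v2 = tuple(p - q for p, q in zip(c, b))
    dot = sum(x * y for x, y in zip(v1, v2))
    n1 = math.sqrt(sum(x * x for x in v1))
    n2 = math.sqrt(sum(x * x for x in v2))
    if n1 == 0 or n2 == 0:
        raise ValueError("two joints coincide, angle is undefined")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / (n1 * n2)))
    return math.degrees(math.acos(cos_theta))


def angle_to_pulse_us(angle_deg, min_us=1000, max_us=2000):
    """Map an angle in [0, 180] degrees to a servo pulse width in microseconds.

    min_us and max_us are assumed defaults, not values from the paper.
    """
    angle = max(0.0, min(180.0, angle_deg))
    return round(min_us + (max_us - min_us) * angle / 180.0)


if __name__ == "__main__":
    shoulder, elbow, wrist = (0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)
    angle = joint_angle(shoulder, elbow, wrist)
    print(angle, angle_to_pulse_us(angle))
```

Clamping the angle before the conversion keeps a noisy skeleton frame from commanding a pulse width outside the range the servo accepts.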

II. MOTIVATION

To achieve this, a sensor is required that measures variables within an environment, where these variables can be processed and analyzed to detect a person. This project gives a brief overview of the latest research on robotics using Kinect. Until now, machines have been either automated or remote-controlled. They are remotely controlled by RF, IR, and IoT (using Bluetooth and Wi-Fi modules). In this project, the machines are controlled virtually using a natural user interface (NUI) console, the Kinect. We control the actions of a four-wheel robot using NRF wireless communication.

III. LITERATURE SURVEY

In “Gesture control of a mobile robot using Kinect sensor” [1], Katerina Cekova, Natasa Koceska, and Saso Kocesk (International Conference on Applied Internet and Information Technologies) proposed a methodology for gesture control of a custom-developed mobile robot using body gestures and the Microsoft Kinect sensor. The Kinect sensor's ability to track joint positions was used to develop a software application for gesture recognition and for mapping gestures into control commands. The proposed methodology was evaluated experimentally. The results presented in the paper showed that the methodology is accurate and reliable and could be used for mobile robot control in practical applications.

In “Kinect sensor-based gesture control robot for firefighting” [2], Mohammed A. Hussein and Ahmed S (Research Journal of Applied Sciences, Engineering and Technology) implemented a remote robot control system that uses Kinect-based gesture recognition as the human-robot interface. The movement of the human arm in 3D space is captured, processed, and replicated by the robotic arm. The joint angles are transmitted to an Arduino microcontroller, which receives them and controls the robot arm. In the investigation, the accuracy of control by the human's hand motion was tested.

In “Kinect based moving human tracking system with obstacle avoidance” [3], Abdel Mehsen Ahmad and Zouhair Bazzaz (Advances in Science, Technology and Engineering Systems Journal) presented an extension of work originally published at the IEEE International Multidisciplinary Conference on Engineering Technology (IMCET). The work presents the design and implementation of a moving human tracking system with obstacle avoidance. The system scans the environment using Kinect, a 3D sensor, and tracks the center of mass of a specific user using Processing, an open-source programming language. An Arduino microcontroller drives the motors, enabling the robot to move towards the tracked user and avoid obstacles in its trajectory. The implemented system was tested under different lighting conditions, and its performance was analyzed using several generated depth images.

In “Gesture based robot control with Kinect sensor” [4], Bhatt Meet and Joshi Hari (International Journal of Innovative Technology and Exploring Engineering, IJITEE) presented an implementation of a human gesture-based robot control system. It uses a Kinect sensor, which consists of a depth sensor and an RGB camera. The Kinect sensor obtains the skeletal data from the subject and, using mathematical calculation, computes the angle deviation between the different parts of the arm based on its movement. The angle deviation data measured by the Kinect sensor is transmitted using a Wi-Fi module, which increases mobility. On the receiver, a microcontroller converts the received angle deviation values into equivalent PWM signals, which are used to control the servo motors. The combination of the different servo motors results in the robotic movement.

In “Motion control of robot by using Kinect sensor” [5], Ashutosh Zagade and Vishakha Jamkhedkar (International Journal of Scientific Development and Research) suggest that Gesture Controlled User Interfaces (GCUI) now provide realistic and affordable opportunities, which may be appropriate for older and disabled people. They developed a GCUI prototype application, called Open Gesture, to help users carry out everyday activities such as making phone calls, controlling their television, and performing mathematical calculations. Open Gesture uses simple hand gestures to perform a diverse range of tasks via a television interface.

In “Kinect sensor based gesture control robot for firefighting” [6], Nipun D. Borole and Dr. Gayatri M. (International Journal for Scientific Research & Development) set out to build a prototype that would facilitate the testing of different algorithms for robot motion. The prototype uses a Kinect camera, and the software used is Processing and Microsoft Visual Studio. In the gesture control mode, the Kinect camera captures a stream of images that are forwarded to Processing, which runs the motion algorithm. Based on the hand movements, the robot receives commands according to the motion.

IV. METHODOLOGY

Until now, machines and robotics applications have been either automated or remote-controlled. They are remotely controlled by RF, IR, and IoT (using Bluetooth and Wi-Fi modules). In this project, the application is controlled using the Kinect sensor. With a remote-control system, the operator has to carry the remote, and the functions of a remote are always limited: functionality cannot be increased without replacing the remote and other hardware.

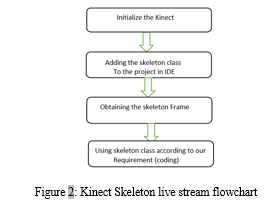

The central camera is a color (RGB) camera that can identify a user's ID or facial features and can also be used in augmented reality games and video calls. Two sensors make up the depth component of the Kinect: an infrared projector and a monochrome CMOS sensor. These are used for gesture recognition and skeleton tracking. The microphone array not only lets the Kinect capture sound but also locates the direction of audio waves, and it recognizes the voice irrespective of the noise and echo present in the environment. The tilt motor is used to adjust the Kinect position according to the view. The Kinect deployment for the present project and its specifications are shown below.

V. JOINT ORIENTATION

Joint orientation is a hierarchical rotation, expressed in local axes, based on the relationship defined by a bone in the skeleton joint structure. The Kinect recognizes 20 joints, which are accessed through Visual Studio. These joint positions are reported in Cartesian coordinates by the Kinect sensor, and this helps in framing the code for the project.
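To make the idea of a hierarchical rotation concrete, the sketch below computes the quaternion that rotates a parent bone's direction onto its child bone's direction, using only Cartesian joint positions. It is an illustration under stated assumptions, not the Kinect SDK's own computation: the SDK reports bone orientations itself, and all function names here are hypothetical.

```python
import math


def cross(u, v):
    """Cross product of two 3D vectors."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    if n == 0:
        raise ValueError("zero-length vector")
    return tuple(x / n for x in v)


def bone_direction(parent_joint, child_joint):
    """Unit vector along the bone from parent_joint to child_joint."""
    return normalize(tuple(c - p for p, c in zip(parent_joint, child_joint)))


def rotation_between(u, v):
    """Unit quaternion (w, x, y, z) for the shortest rotation taking unit u onto unit v."""
    dot = sum(a * b for a, b in zip(u, v))
    if dot < -0.999999:
        # Opposite directions: rotate 180 degrees about any axis perpendicular to u.
        helper = (1.0, 0.0, 0.0) if abs(u[0]) < 0.9 else (0.0, 1.0, 0.0)
        axis = normalize(cross(u, helper))
        return (0.0, axis[0], axis[1], axis[2])
    cx, cy, cz = cross(u, v)
    q = (1.0 + dot, cx, cy, cz)
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


def rotate(q, v):
    """Rotate vector v by unit quaternion q."""
    w, r = q[0], q[1:]
    t = tuple(a + w * b for a, b in zip(cross(r, v), v))  # r x v + w v
    c = cross(r, t)
    return tuple(a + 2.0 * b for a, b in zip(v, c))


if __name__ == "__main__":
    # Upper arm pointing up, forearm pointing sideways (made-up coordinates).
    upper = bone_direction((0.0, 0.0, 0.0), (0.0, 1.0, 0.0))
    fore = bone_direction((0.0, 1.0, 0.0), (1.0, 1.0, 0.0))
    print(rotation_between(upper, fore))
```

Expressing each bone relative to its parent means a rotation at the shoulder does not change the stored rotation at the elbow, which is what makes the representation hierarchical.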

VI. WORKING

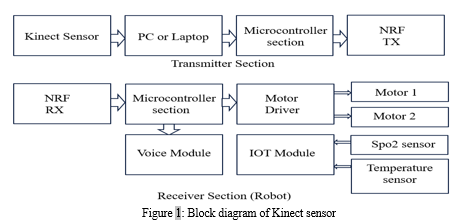

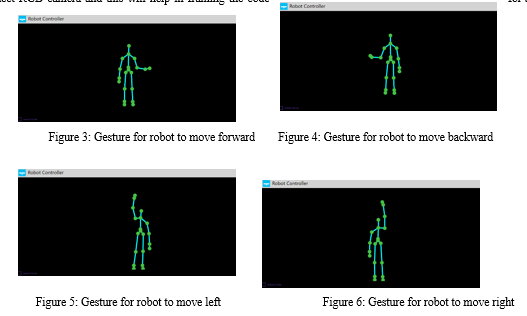

This project has two sections. The first section identifies the position of the body, that is, where the legs and hands are. The other section is the robot. The system first has to identify the position of the body, interpret that signal, and send it wirelessly to the robot, which identifies whether to move right, left, backward, or forward.
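The step from body position to a movement command can be sketched with a simple rule-based classifier. The paper does not state which gesture triggers which direction, so the mapping below (hands above the head, one foot lifted), the joint names, and the 10 cm margin are all hypothetical choices made only for illustration.

```python
def classify_pose(joints, margin=0.10):
    """Map five joint positions to a movement command.

    joints maps a joint name to an (x, y, z) position in metres with y pointing up.
    The gesture-to-command mapping and the margin are assumptions, not from the paper.
    """
    head_y = joints["head"][1]
    right_up = joints["hand_right"][1] > head_y + margin
    left_up = joints["hand_left"][1] > head_y + margin
    foot_lifted = abs(joints["foot_left"][1] - joints["foot_right"][1]) > margin

    if right_up and left_up:
        return "FORWARD"
    if right_up:
        return "RIGHT"
    if left_up:
        return "LEFT"
    if foot_lifted:
        return "BACKWARD"
    return "STOP"


if __name__ == "__main__":
    # Made-up skeleton frame: right hand raised above the head.
    frame = {
        "head": (0.0, 1.60, 2.0),
        "hand_left": (-0.3, 0.90, 2.0),
        "hand_right": (0.3, 1.85, 2.0),
        "foot_left": (-0.1, 0.05, 2.0),
        "foot_right": (0.1, 0.05, 2.0),
    }
    print(classify_pose(frame))
```

Falling back to a stop command when no rule matches is a deliberate choice here, so that a resting posture never moves the robot.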

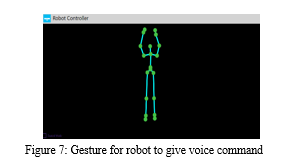

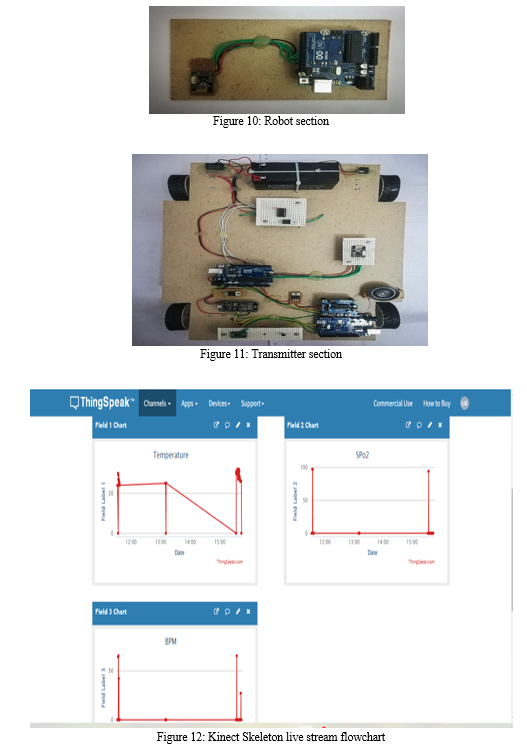

In the transmitter section, the sensor used is the Kinect sensor for virtual reality. It is used to identify the human position or human joints. With the Microsoft Kinect sensor and its software development kit, the human-machine interface of the personal computer has reached a new level where users interact directly through body movement. This new form of human-machine interface has quickly spread to various fields, including education, medical care, entertainment, and sports. The joints are easily identified using Visual Studio: we write a program in it and read the sensor data. Once the sensor detects that, for example, the right hand is up, this is sent wirelessly through the microcontroller to the NRF module. The NRF module is a transceiver: it transmits the data wirelessly, and the data is received by the NRF receiver. The PC sends serial data over a USB cable to the microcontroller section indicating that the right hand is up, and the microcontroller passes the signal to the NRF module for wireless transmission.
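One way to picture the data that travels from the PC to the microcontroller and on through the NRF link is a small fixed-length frame. The paper does not describe its message format, so the start byte, the command codes, and the XOR check byte below are hypothetical; writing the frame to an actual serial port is left out.

```python
START_BYTE = 0xAA  # hypothetical frame marker, not from the paper

# Hypothetical one-byte codes for the movement commands.
COMMAND_CODES = {"STOP": 0, "FORWARD": 1, "BACKWARD": 2, "LEFT": 3, "RIGHT": 4}
CODE_TO_COMMAND = {code: name for name, code in COMMAND_CODES.items()}


def encode_command(name):
    """Build a 3-byte frame: start byte, command code, XOR check byte."""
    code = COMMAND_CODES[name]
    return bytes([START_BYTE, code, START_BYTE ^ code])


def decode_command(frame):
    """Validate a 3-byte frame and return the command name."""
    if len(frame) != 3 or frame[0] != START_BYTE:
        raise ValueError("malformed frame")
    code, check = frame[1], frame[2]
    if check != (START_BYTE ^ code) or code not in CODE_TO_COMMAND:
        raise ValueError("corrupted frame")
    return CODE_TO_COMMAND[code]


if __name__ == "__main__":
    frame = encode_command("RIGHT")
    print(frame.hex(), decode_command(frame))
```

A check byte at this level is a cheap guard on the USB serial hop; the radio link may already apply its own error detection, in which case the receiver-side check is simply redundant.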

The microcontroller sends the interpreted signal to the motor driver. The motors receive the signal from the motor driver and move the robot right, left, forward, or backward according to the signal received.
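The logic between a received command and the motor driver can be sketched as a lookup table for a two-motor differential drive. This is a Python model of logic that would run on the microcontroller; the four direction inputs (two per motor) follow a common H-bridge layout, which is an assumption, since the paper does not name the motor driver or its pins.

```python
# Direction of the (left, right) motor for each command: 1 forward, -1 reverse, 0 stop.
WHEEL_DIRECTIONS = {
    "FORWARD": (1, 1),
    "BACKWARD": (-1, -1),
    "LEFT": (-1, 1),    # spin in place to the left
    "RIGHT": (1, -1),   # spin in place to the right
    "STOP": (0, 0),
}


def hbridge_inputs(command):
    """Return (in1, in2, in3, in4) logic levels for an assumed dual H-bridge driver.

    in1/in2 set the left motor direction and in3/in4 the right motor direction.
    Unknown commands stop both motors.
    """
    left, right = WHEEL_DIRECTIONS.get(command, (0, 0))

    def pair(direction):
        # (forward input, reverse input); both low means the motor is stopped.
        return (direction > 0, direction < 0)

    return pair(left) + pair(right)


if __name__ == "__main__":
    for cmd in ("FORWARD", "LEFT", "STOP"):
        print(cmd, hbridge_inputs(cmd))
```

Treating any unrecognized command as a stop keeps a corrupted or unexpected message from driving the motors.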

An SpO2 sensor and a temperature sensor are attached to the robot and check the blood pressure, temperature, and oxygen level of the patient.

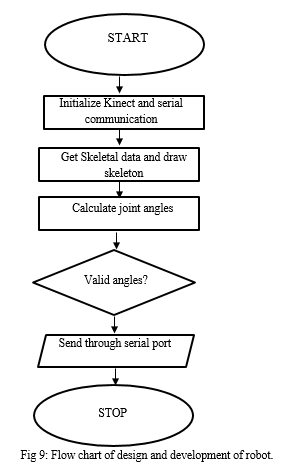

VII. DESIGN AND DEVELOPMENT OF ROBOT

In this project, we propose virtual control of a robot using the skeleton tracking information provided by the Kinect. The experimental results are shown through human hand skeletal positions.

- This provides virtual interaction directly with machines without any intermediate devices. We are designing a four-wheel robot for movement and connecting medical sensors to it. The Arduino receives the joint angles and controls the movement of the four-wheel robot.

- The sensors are mounted on the robot for medical applications. The user is able to measure the SpO2 value and heartbeat using this robot.

- Once the robot reaches a particular location, it plays voice instructions.

VIII. APPLICATION

- Medical application

- Agriculture

- Industrial automation

IX. RESULTS

The Arduino controller is fitted inside the robot to receive the control signals from the PC through NRF communication and to control two motors. By detecting the movements of the human skeleton joints, the operator can remotely control the robot to go forward or backward and to turn left or right. The Arduino controller interfaces with the NRF module, with the transmitting and receiving pins of the Arduino connected to the SPI pins of the module. Communication runs over the NRF USB wire to the NRF module, which provides the data to the Arduino board.

X. FUTURE IMPROVEMENTS

It is hoped that people will be able to use this gesture-based virtual health care robot to attend to a patient remotely. In addition, a camera could be added to monitor the patient from a long distance. Numerous features can be added to this implementation. The Kinect sensor can identify twenty joints of the human skeleton with the help of Visual Studio. As of now, we use five joints to control the movement of the robot, and the remaining joints could be used to add more gestures or actions. The system could also be applied in other fields; for example, it could be used as a delivery robot by adding a camera and GPS navigation.

Conclusion

In this work, we presented a novel algorithm based on the Extended Distance Transform to fit the parameters of a stick skeleton model to the upper human body. We presented a running time analysis of the algorithm and showed that it runs at about 10 fps. The algorithm achieved fair accuracy in estimating non-occluded poses. We intend to extend this work by measuring concrete gesture accuracy using a labelled skeleton dataset with depth images. Template-matching-based tracking can be used to track faces and upper bodies through the sequence to reduce computation time. Furthermore, foreshortening can be added to the algorithm by adjusting arm lengths on the basis of the projected distance. In this project, we propose virtual control of a robot using the skeleton tracking information provided by the Kinect. The experimental results are shown through human hand skeletal positions. This provides virtual interaction directly with machines without any intermediate devices, and different applications can be controlled with this implementation.

References

[1] Angelo chistmis, F. Austria, Ma. Lorence, M. Modolid, Sarah May D. Mejia, Racci Valenzuela, Engr. Rosello E. Tolentino, “Human Tracking using size modification and vector position for the person following robot using Microsoft Kinect Xbox 360”, 2021 7th International Conference on Advanced Computing & Communication System (ICACCS).

[2] Bhatt Meet, Joshi Hari, Vaghasiya Denil, Rohit R Parmar, Pradeep M Shah, “Gesture-Based Robot Control with Kinect Sensor”, International Journal of Innovative Technology and Exploring Engineering (IJITEE), Blue Eyes Intelligence Engineering & Sciences Publication, ISSN: 2278-3075, Volume-9, Issue-7S, May 2020.

[3] Nikunj Agarwal, Priya Bajaj, Jayesh Pal, “Human Arm Simulation Using Kinect”, Computer Science & Engineering Department, IMS Engineering College, Ghaziabad, Uttar Pradesh, India, 2018.

[4] Mahanthesha U, Pooja, Rakshitha R, Sherly Vincent, Smitha S Potdar, “Depth based Tracking of Moving Objects using Kinect”, Department of Electronics & Communication Engineering, GSSS Institute of Engineering & Technology For Ladies, Mysuru, 2018.

[5] Katerina Cekova, Natasa Koceska, Saso Kocesk, “Gesture control of mobile robot using kinect sensor”, International Conference on Applied Internet and Information Technologies, 2016.

[6] Nipun D. Borole, Dr. Gayatri M. Phade, Mayuri S. Mahajan, Aniket K. Patil, “KINECT based gesture control robot for fire fighting”, International Journal for Scientific Research & Development (IJSRD), vol. 4, Issue 02, 2016.

[7] Abdel Mehsen Ahmad, Zouhair Bazzal, “Kinect based moving human tracking system with obstacle avoidance”, Advances in Science, Technology and Engineering Systems Journal, vol. 2, 2017.

[8] Ashutosh Zagade, Vishakha Jamkhedkar, Shradda Dhakane, Vrushali Patankar, “A study on gesture control Arduiono Robot”, International Journal of Scientific Development and Research, 2019.

[9] Farzin Foroughi, Peng Zong, “Controlling servo Motor Angle by exploiting kinect SDK”, International Journal of Computer Applications (0975 – 8887), April.

Copyright

Copyright © 2022 Vedashree R, Deepika S R, Leelavathi K, Raghavendra H, Prof. Nithya K. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Paper Id : IJRASET44863

Publish Date : 2022-06-25

ISSN : 2321-9653

Publisher Name : IJRASET
